GenNBV: Generalizable Next-Best-View Policy for Active 3D Reconstruction

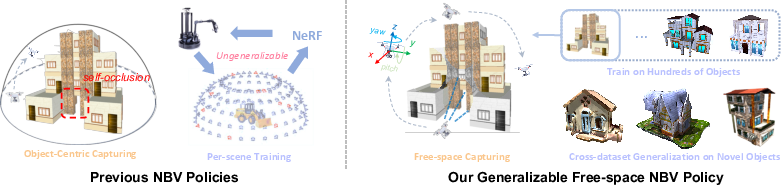

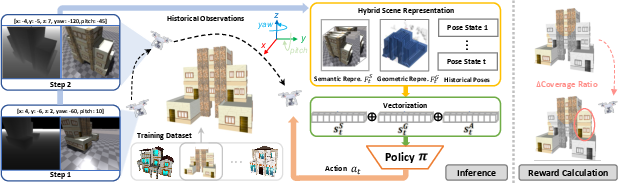

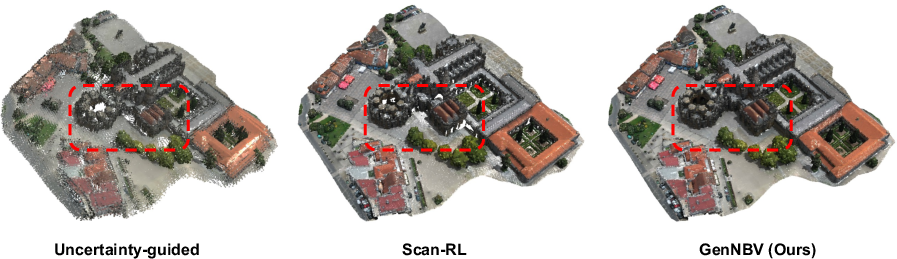

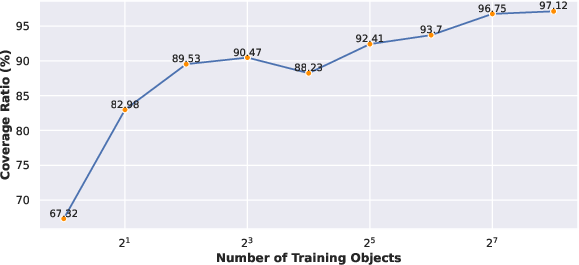

Abstract: While recent advances in neural radiance fields enable realistic digitization of large-scale scenes, the image-capturing process remains time-consuming and labor-intensive. Previous works attempt to automate this process using Next-Best-View (NBV) policies for active 3D reconstruction. However, existing NBV policies rely heavily on hand-crafted criteria, limited action spaces, or per-scene optimized representations. These constraints limit their cross-dataset generalizability. To overcome them, we propose GenNBV, an end-to-end generalizable NBV policy. Our policy adopts a reinforcement learning (RL)-based framework and extends the typical limited action space to a 5D free space. This empowers our agent drone to scan from any viewpoint and even to interact with unseen geometries during training. To boost cross-dataset generalizability, we also propose a novel multi-source state embedding that includes geometric, semantic, and action representations. We establish a benchmark using the Isaac Gym simulator with the Houses3K and OmniObject3D datasets to evaluate this NBV policy. Experiments demonstrate that our policy achieves coverage ratios of 98.26% and 97.12% on unseen building-scale objects from these two datasets, respectively, outperforming prior solutions.
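The abstract reports results as a coverage ratio but does not define the metric here. The following is a minimal sketch, assuming a common point-based definition: the fraction of ground-truth surface points that lie within a distance threshold of at least one observed point. The function name `coverage_ratio`, the `threshold` value, and the definition itself are illustrative assumptions, not the authors' evaluation code.

```python
import numpy as np


def coverage_ratio(gt_points, observed_points, threshold=0.05):
    """Fraction of ground-truth points within `threshold` of any observed point.

    Assumed definition for illustration only; the paper's exact metric
    and threshold may differ.
    """
    gt = np.asarray(gt_points, dtype=float).reshape(-1, 3)
    obs = np.asarray(observed_points, dtype=float).reshape(-1, 3)
    if gt.shape[0] == 0:
        raise ValueError("gt_points must contain at least one point")
    if obs.shape[0] == 0:
        return 0.0  # nothing observed yet, so nothing is covered

    # Pairwise Euclidean distances, shape (num_gt, num_obs), via broadcasting.
    dists = np.linalg.norm(gt[:, None, :] - obs[None, :, :], axis=-1)

    # A ground-truth point counts as covered if its nearest observation
    # is within the threshold.
    covered = dists.min(axis=1) <= threshold
    return float(covered.mean())


# Toy example: four ground-truth points, two of which have a nearby observation.
gt = [[0, 0, 0], [1, 0, 0], [0, 1, 0], [1, 1, 0]]
obs = [[0, 0, 0.01], [1, 0, 0.02]]
print(coverage_ratio(gt, obs))  # 0.5
```

The brute-force distance matrix keeps the sketch short. For dense point clouds a spatial index such as a k-d tree would be the usual choice.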
