UniDex: A Robot Foundation Suite for Universal Dexterous Hand Control from Egocentric Human Videos

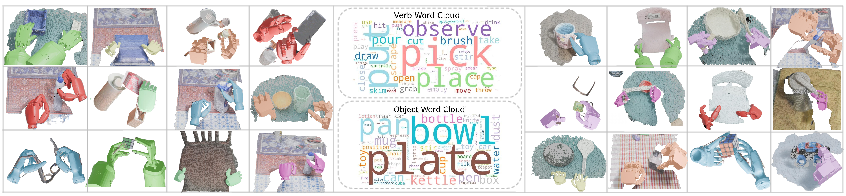

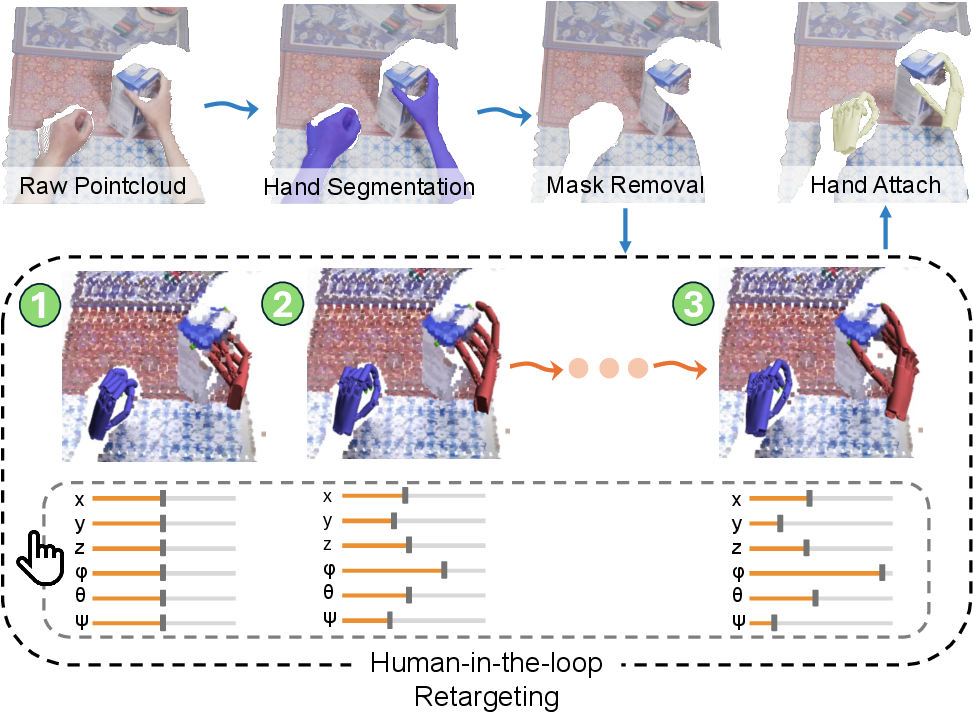

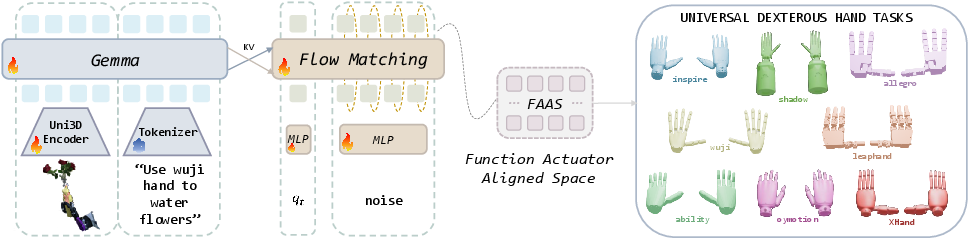

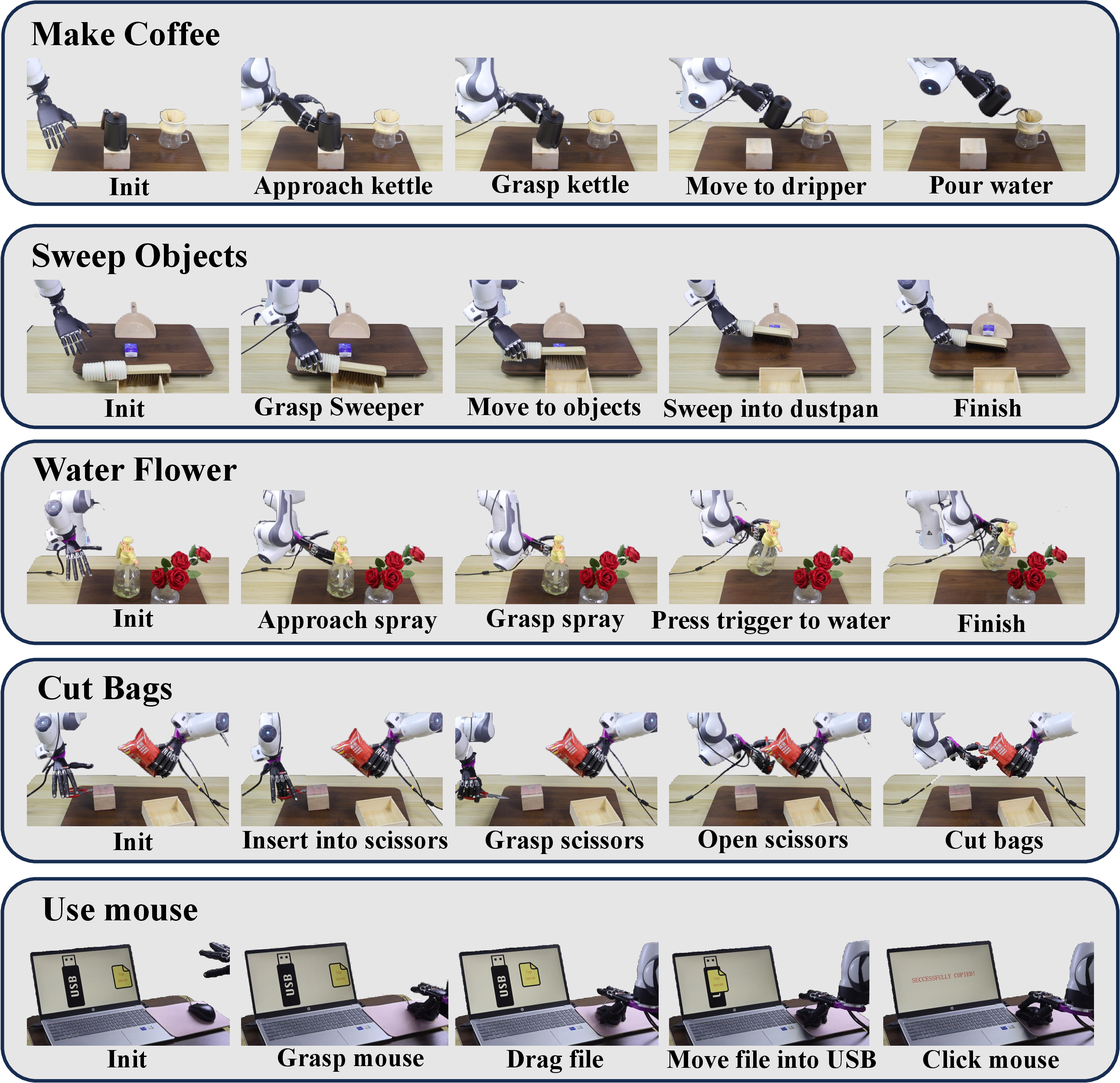

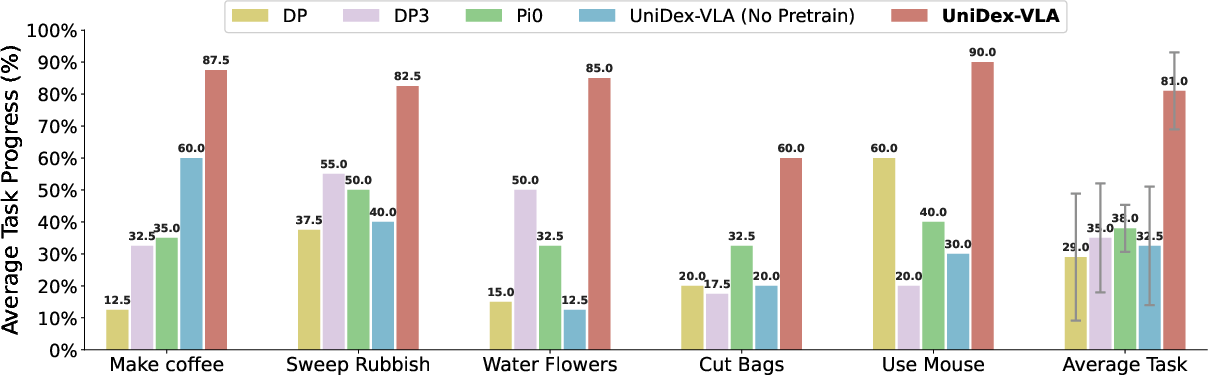

Abstract: Dexterous manipulation remains challenging due to the cost of collecting real-robot teleoperation data, the heterogeneity of hand embodiments, and the high dimensionality of control. We present UniDex, a robot foundation suite that couples a large-scale robot-centric dataset with a unified vision-language-action (VLA) policy and a practical human-data capture setup for universal dexterous hand control. First, we construct UniDex-Dataset, a robot-centric dataset of over 50K trajectories across eight dexterous hands (6–24 DoFs), derived from egocentric human video datasets. To transform human data into robot-executable trajectories, we employ a human-in-the-loop retargeting procedure to align fingertip trajectories while preserving plausible hand-object contacts, and we operate on explicit 3D pointclouds with human hands masked to narrow kinematic and visual gaps. Second, we introduce the Function-Actuator-Aligned Space (FAAS), a unified action space that maps functionally similar actuators to shared coordinates, enabling cross-hand transfer. Leveraging FAAS as the action parameterization, we train UniDex-VLA, a 3D VLA policy pretrained on UniDex-Dataset and finetuned with task demonstrations. In addition, we build UniDex-Cap, a simple portable capture setup that records synchronized RGB-D streams and human hand poses and converts them into robot-executable trajectories to enable human-robot data co-training that reduces reliance on costly robot demonstrations. On challenging tool-use tasks across two different hands, UniDex-VLA achieves 81% average task progress and outperforms prior VLA baselines by a large margin, while exhibiting strong spatial, object, and zero-shot cross-hand generalization. Together, UniDex-Dataset, UniDex-VLA, and UniDex-Cap provide a scalable foundation suite for universal dexterous manipulation.

Explain it Like I'm 14

What is this paper about?

This paper is about teaching robot hands to use tools in the real world—things like pouring water from a kettle, spraying a bottle, or using scissors. The authors build a “foundation suite” called UniDex that gives robots three things:

- a huge training dataset made from first‑person human videos,

- a single, shared “action language” that works across many different robot hands,

- and a simple capture kit to collect new human data and turn it into robot training data.

Together, these make it easier and cheaper to train robots to do dexterous (finger‑heavy) tasks that ordinary simple grippers can’t handle.

What questions are the researchers trying to answer?

In everyday words, they want to know:

- How can we use the huge number of human videos online to train robot hands without needing tons of expensive robot demonstrations?

- Can we design one “control vocabulary” so a single model can work across very different robot hands?

- Can a robot learn general tool skills (not just simple grasps) and then adapt to new objects, new positions, and even new robot hands?

How did they approach the problem?

The authors built three key pieces. Think of them like the ingredients for a good recipe:

1) UniDex‑Dataset: Turning human videos into robot training data

- What they start with: First‑person videos of people using their hands (egocentric videos) from public datasets.

- How they convert it:

  - They extract 3D “pointclouds,” which are like a 3D picture made of many dots.

  - They “mask” (remove) the human hands from the image and insert a 3D model of a robot hand in their place. This reduces the visual mismatch between seeing a human hand during training and a robot hand during testing.

  - They use a “human‑in‑the‑loop retargeting” process to copy the human’s fingertip paths to a robot hand. Imagine copying a dance: you align the performer’s fingertips with a puppet’s fingertips and make small adjustments so the puppet’s fingers touch the object in a believable way. This keeps contact realistic while fitting the robot’s joints and shape.

- What they built: Over 9 million frames and 50,000+ robot‑ready trajectories across 8 different robot hands (from 6 to 24 movable joints), covering lots of everyday actions.
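The pointcloud step above can be sketched in a few lines. This is a minimal illustration, assuming a standard pinhole camera model (the glossary mentions one) and a precomputed boolean hand mask; the function name `depth_to_pointcloud` and the intrinsics values are made up for the example and are not taken from the paper's code.

```python
import numpy as np

def depth_to_pointcloud(depth, fx, fy, cx, cy, hand_mask=None):
    """Back-project a depth image (meters) into an (N, 3) pointcloud.

    Pixels with non-positive depth, or covered by hand_mask, are dropped.
    """
    h, w = depth.shape
    u, v = np.meshgrid(np.arange(w), np.arange(h))  # pixel column / row indices
    valid = depth > 0
    if hand_mask is not None:
        valid &= ~hand_mask.astype(bool)  # remove pixels that show the human hand
    z = depth[valid]
    x = (u[valid] - cx) * z / fx  # pinhole model: x = (u - cx) * z / fx
    y = (v[valid] - cy) * z / fy
    return np.stack([x, y, z], axis=1)

# Toy 4x4 depth image, everything 2 m away, top-left 2x2 block is "hand".
depth = np.full((4, 4), 2.0)
hand_mask = np.zeros((4, 4), dtype=bool)
hand_mask[:2, :2] = True
points = depth_to_pointcloud(depth, fx=2.0, fy=2.0, cx=1.5, cy=1.5, hand_mask=hand_mask)
print(points.shape)  # (12, 3)
```

In the pipeline described above, the points of a rendered robot hand would then be added where the human hand was removed; that step is omitted here.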

2) FAAS: A shared “action language” for many robot hands

- Problem: Different robot hands are like different game controllers—buttons and sticks are in different places and do different things.

- Solution: FAAS (Function–Actuator–Aligned Space) is like a universal controller layout. Instead of saying “move joint #7,” the model says “do the thumb‑index pinch” or “curl fingers around a handle,” mapped to the right joints for each hand.

- Why this helps: A single model can transfer skills between different hands because it speaks “function,” not “hardware.” This makes “cross‑hand” transfer (using a skill on a new hand) much more reliable.
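To make the "universal controller layout" idea concrete, here is a toy sketch of a shared action vector. The slot names, the two hands, and their joint orderings are all hypothetical, chosen only for illustration; the actual FAAS layout is defined in the paper and is likely richer (for example, it would also need to handle differing joint ranges).

```python
import numpy as np

# Hypothetical functional slots (not the paper's actual FAAS layout).
SLOTS = ["thumb_flex", "thumb_abd", "index_flex", "middle_flex", "ring_flex", "pinky_flex"]

# Hypothetical per-hand maps: functional slot -> native joint index.
HAND_MAPS = {
    "hand_a": {"thumb_flex": 0, "thumb_abd": 1, "index_flex": 2,
               "middle_flex": 3, "ring_flex": 4, "pinky_flex": 5},
    # A simpler toy hand with no thumb abduction and no pinky.
    "hand_b": {"index_flex": 0, "middle_flex": 1, "ring_flex": 2, "thumb_flex": 3},
}

def to_shared(hand, joints):
    """Scatter native joint values into the shared vector; return (vector, mask)."""
    vec = np.zeros(len(SLOTS))
    mask = np.zeros(len(SLOTS), dtype=bool)
    for slot, j in HAND_MAPS[hand].items():
        i = SLOTS.index(slot)
        vec[i] = joints[j]
        mask[i] = True  # this slot is actually driven by the hand
    return vec, mask

def from_shared(hand, vec):
    """Gather a native joint command for `hand` from the shared vector."""
    mapping = HAND_MAPS[hand]
    joints = np.zeros(len(mapping))
    for slot, j in mapping.items():
        joints[j] = vec[SLOTS.index(slot)]
    return joints

shared, mask = to_shared("hand_a", np.array([0.1, 0.2, 0.3, 0.4, 0.5, 0.6]))
cmd_b = from_shared("hand_b", shared)
print(cmd_b)  # [0.3 0.4 0.5 0.1]
```

Because the policy only ever sees the shared vector, the same predicted action can be decoded for either hand; slots a hand lacks are simply ignored on the way out and masked on the way in.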

3) UniDex‑VLA: The model that sees, reads, and acts

- Inputs: A 3D pointcloud view of the scene, a short language instruction (like “pour water into the dripper”), and the robot’s own joint positions.

- Outputs: A short sequence of actions in FAAS that move the wrist and fingers.

- How it learns: It’s first pretrained on the UniDex‑Dataset (from human videos turned into robot motions), then fine‑tuned on a small number of real robot demos. Training uses conditional flow matching, a technique that starts from random noise and learns to refine it step by step into accurate action commands.
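The glossary names the training objective as conditional flow matching and mentions forward–Euler integration at inference time. The sketch below shows that mechanism in isolation, assuming a straight-line path from noise (t = 0) to the target action (t = 1); the time convention, the function names, and the "oracle" velocity that stands in for a trained network are all assumptions for illustration, not the paper's implementation.

```python
import numpy as np

def cfm_pair(noise, action, t):
    """Training pair for flow matching on a straight-line path.

    Returns the interpolated point x_t and the velocity the network should predict.
    """
    x_t = (1.0 - t) * noise + t * action
    target_velocity = action - noise
    return x_t, target_velocity

def euler_sample(velocity_fn, noise, num_steps=10):
    """Integrate dx/dt = velocity_fn(x, t) from t=0 to t=1 with forward Euler."""
    x = noise.copy()
    dt = 1.0 / num_steps
    for k in range(num_steps):
        x = x + dt * velocity_fn(x, k * dt)
    return x

rng = np.random.default_rng(0)
action = np.array([0.2, -0.5, 0.8])  # stand-in for one action vector
noise = rng.standard_normal(3)

# Stand-in for a trained network: the exact velocity field for a single target.
oracle = lambda x, t: (action - x) / (1.0 - t)
sample = euler_sample(oracle, noise)
print(np.allclose(sample, action))  # True
```

In a real policy the velocity function would be a neural network conditioned on the pointcloud, the instruction, and the robot state, and the output would be a whole chunk of future actions rather than one vector.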

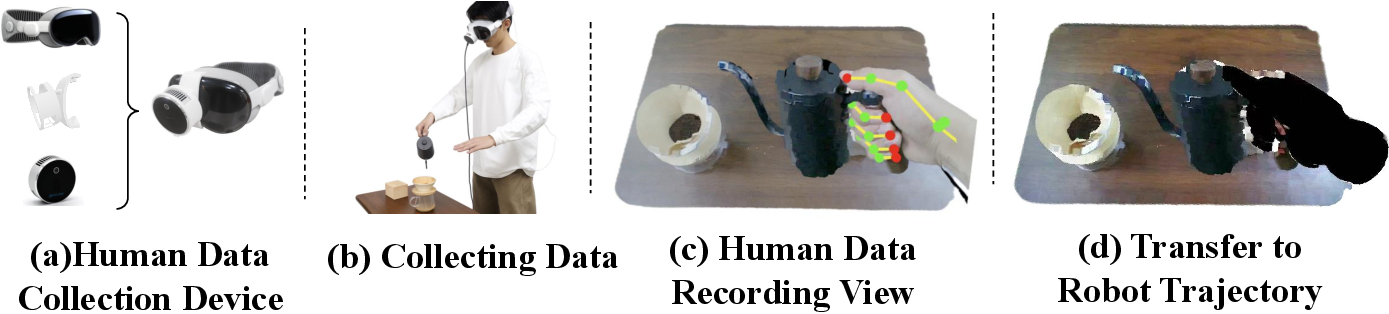

4) UniDex‑Cap: A portable human capture kit

- This is a simple setup (e.g., Apple Vision Pro for hand pose + a depth camera) that records human hand motion and 3D video together.

- It then uses the same pipeline as above to turn those human motions into robot‑executable trajectories.

- Why it matters: It lets teams cheaply collect new training data and mix (“co-train”) it with a few real robot demos, reducing expensive robot time.
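Recording hand poses and RGB‑D frames "together" means pairing samples from two devices that tick at different rates. This summary does not say how UniDex‑Cap does that, so the following is only one plausible approach: nearest‑timestamp matching with a tolerance. The function name, the 20 ms tolerance, and the timestamps are illustrative assumptions.

```python
import numpy as np

def match_nearest(frame_ts, pose_ts, max_gap=0.02):
    """For each frame timestamp, return the index of the nearest pose timestamp,
    or -1 if the nearest pose is more than max_gap seconds away.

    pose_ts must be sorted ascending and contain at least two entries.
    """
    frame_ts = np.asarray(frame_ts, dtype=float)
    pose_ts = np.asarray(pose_ts, dtype=float)
    right = np.searchsorted(pose_ts, frame_ts)       # first pose at or after each frame
    right = np.clip(right, 1, len(pose_ts) - 1)      # keep both neighbours in range
    left = right - 1
    pick_left = (frame_ts - pose_ts[left]) <= (pose_ts[right] - frame_ts)
    idx = np.where(pick_left, left, right)
    gap = np.abs(pose_ts[idx] - frame_ts)
    return np.where(gap <= max_gap, idx, -1)

frame_ts = [0.000, 0.033, 0.066, 0.500]          # RGB-D frame times (s)
pose_ts = [0.001, 0.012, 0.030, 0.045, 0.070]    # hand-pose times (s)
print(match_nearest(frame_ts, pose_ts).tolist())  # [0, 2, 4, -1]
```

Frames that come back as -1 have no pose close enough in time and would be dropped before retargeting.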

What did they find, and why does it matter?

Here are the main results, with a short explanation of why each is important:

- Strong tool use with few robot demos:

  - Across five difficult real‑world tasks (make coffee with a kettle, sweep with a brush, water flowers with a spray bottle, cut with scissors, use a computer mouse), the UniDex‑VLA model achieved about 81% average task progress, well ahead of the strongest baseline at around 38%.

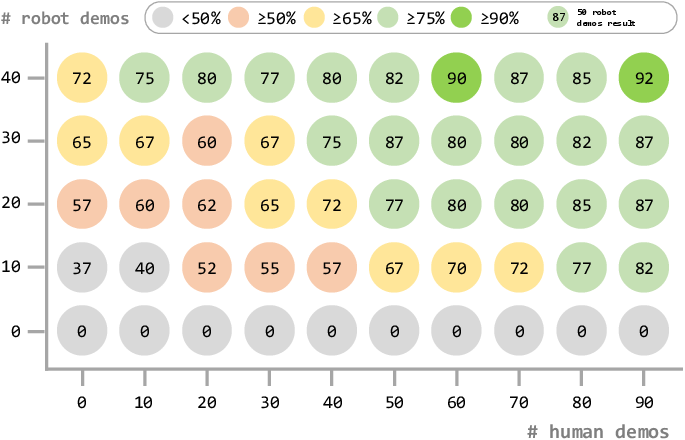

  - Why it matters: Tools need precise finger coordination. Doing well with only ~50 robot demos per task means the approach is data‑efficient and practical.

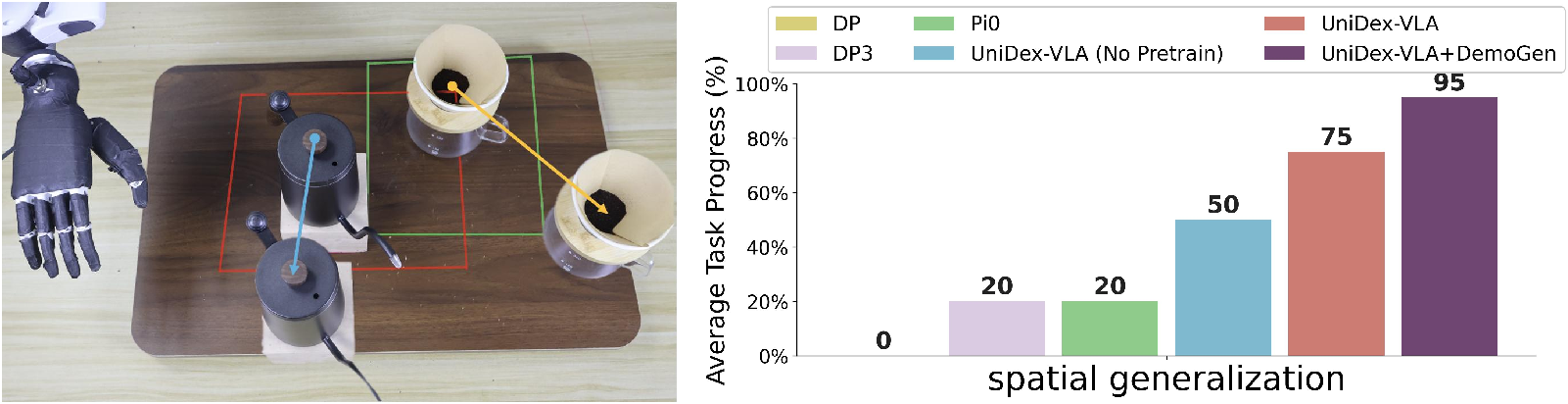

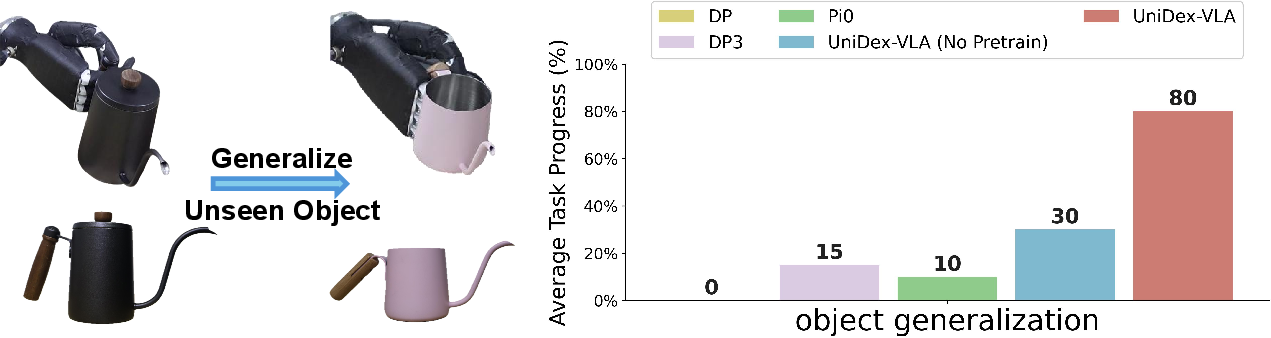

- Spatial and object generalization:

  - The model handled objects moved to new places (out‑of‑distribution) and worked on a new kettle with different color and size.

  - Why it matters: Real homes and workplaces aren’t fixed; objects move and vary. Generalization is key for real use.

- Zero‑shot cross‑hand transfer:

  - A policy trained on one hand (Inspire) worked without retraining on other hands (Wuji and Oymotion), reaching up to 60% task progress.

  - Why it matters: You don’t need a separate model for every hand. This is a big step toward “universal” dexterous control.

- Human–robot co‑training reduces cost:

  - Mixing retargeted human demos with a smaller number of robot demos still achieves high performance.

  - Roughly, two human demos can replace one robot demo, and human demos were about 5.2× faster to collect in their tests.

  - Why it matters: It lowers the time and money needed to train robots, making real deployment more feasible.
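The two co‑training numbers above (about 2 human demos per robot demo, and about 5.2× faster collection) can be combined into a rough time budget. The arithmetic below assumes the exchange rate holds linearly and ignores retargeting and cleanup time, neither of which this summary confirms; the 25/25 split is a made‑up scenario built on the ~50 robot demos per task mentioned earlier.

```python
HUMAN_PER_ROBOT = 2.0  # human demos standing in for one robot demo (reported, approximate)
HUMAN_SPEEDUP = 5.2    # human demos are collected about this many times faster (reported)

def collection_time(num_robot, num_human, robot_demo_time=1.0):
    """Total collection time, in units of the time for one robot demo."""
    return num_robot * robot_demo_time + num_human * robot_demo_time / HUMAN_SPEEDUP

def human_demos_needed(total_robot_equiv, num_robot):
    """Human demos needed to top up num_robot demos to total_robot_equiv."""
    return (total_robot_equiv - num_robot) * HUMAN_PER_ROBOT

baseline = collection_time(num_robot=50, num_human=0)
num_human = human_demos_needed(total_robot_equiv=50, num_robot=25)
mixed = collection_time(num_robot=25, num_human=num_human)
print(num_human, round(mixed, 2), round(mixed / baseline, 2))  # 50.0 34.62 0.69
```

Under these assumptions, replacing half of the robot demos with human demos would cut collection time by roughly 30%; each replaced robot demo costs 2 / 5.2 ≈ 0.38 robot‑demo units of human capture time.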

What’s the big picture?

- Using human videos to train robot hands unlocks a giant source of cheap, diverse data.

- A shared action space (FAAS) makes it possible for one model to control many different hands and pass skills between them.

- The combination (dataset + shared action space + 3D vision model + simple capture kit) is a practical path toward robots that can handle everyday tools with finger‑level dexterity.

- Limitations and next steps: The current work focuses on human videos that include explicit hand motion data; future versions could scale even more by using large, weakly labeled or action‑free egocentric videos.

In short, UniDex shows a promising way to teach robots human‑like hand skills at scale—by learning from people, speaking a universal “action language,” and cutting down the need for expensive robot teaching.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper.

- Dataset coverage and bias

- Quantify scene/object/cultural biases introduced by relying on four egocentric datasets (H2O, HOI4D, HOT3D, TACO); assess how these biases affect downstream generalization.

- Evaluate category diversity and long-tail coverage for tool-use (e.g., fasteners, flexible objects, deformables, precision tasks like threading or tying).

- Provide standardized statistics on trajectory quality (e.g., occlusion rates, depth noise, segmentation artifacts) and consistency across source datasets.

- Human-to-robot retargeting quality and scalability

- Report quantitative metrics for retargeting accuracy (e.g., fingertip positional error, contact plausibility, collision violations) and inter-annotator consistency.

- Assess how often manual intervention is required, time per clip, and how human-in-the-loop adjustments scale to much larger datasets.

- Extend beyond fingertip alignment to model palm/surface contacts and contact forces/torques; evaluate how missing contact/force supervision impacts learning.

- Analyze failure modes from masking (WiLoR + SAM2) and 3D insertion of robot hands (e.g., occlusion errors, geometry misalignment), and their effect on policy performance.

- Automate or learn the base-offset calibration across scenes/hands to reduce human effort.

- Unified action space (FAAS) design and extensibility

- Provide rigorous ablations isolating FAAS benefits vs. joint-space or learned latent actions; test sensitivity to FAAS dimensionality and grouping choices.

- Develop semi-automatic procedures for mapping new hands into FAAS (e.g., by learning functional correspondences), and evaluate mapping robustness for non-anthropomorphic/soft hands.

- Characterize how FAAS handles missing DoFs (degraded capabilities) and mechanically coupled/tendon-driven hands; evaluate performance degradation and mitigation strategies.

- Investigate whether functional roles are consistent across tasks and hands, or require task-specific remappings.

- Perception and sensing limitations

- Study the trade-offs of single-view pointclouds (occlusions, depth noise) vs. multi-view sensing; quantify how occlusion affects success and whether multi-view improves robustness.

- Integrate and evaluate tactile/force sensing for contact-rich manipulation; quantify gains over vision-only inputs.

- Analyze sensitivity to camera type, extrinsics/intrinsics errors, lighting, and depth artifacts, and test robustness across different RGB-D sensors.

- Policy training and architecture

- Isolate the contributions of 3D encoders (Uni3D) vs. 2D encoders through controlled ablations; measure the impact on spatial/object generalization.

- Compare conditional flow matching to diffusion or autoregressive policies in this setting; report training stability, sample efficiency, and inference latency differences.

- Evaluate long-horizon control beyond short H-step chunks (e.g., hierarchical planning, recovery behaviors), including failure recovery and subgoal sequencing.

- Evaluation scope and metrics

- Expand real-world evaluation beyond five tasks and two hands to a broader set of tools, materials (e.g., cloth, cables), and environments (clutter, tight spaces, moving targets).

- Assess bimanual capabilities (FAAS includes two hands), including coordinated two-hand tasks; provide hardware experiments that match the bimanual representation.

- Standardize and report richer metrics (completion time, energy, safety margins, smoothness, contact quality) in addition to average task progress.

- Provide detailed failure analysis (taxonomy and frequencies) to guide targeted improvements.

- Cross-embodiment and cross-domain generalization

- Test systematic cross-hand transfer across many source–target hand pairs and varying morphology gaps; quantify when zero-shot transfer breaks and why.

- Evaluate transfer to hands with substantially different kinematics (e.g., underactuated, non-anthropomorphic, soft/compliant hands).

- Investigate transfer across robot arms and wrist kinematics (not just end-effector), including mobile manipulators and whole-body systems.

- Human–robot co-training (UniDex-Cap)

- Validate the “2 human : 1 robot” demonstration exchange rate across more tasks, hands, and users; analyze variance across subjects and task complexity.

- Account for the full pipeline cost including retargeting/cleanup when comparing human vs. robot demo efficiency; present end-to-end time/cost curves.

- Quantify pose accuracy from Apple Vision Pro in cluttered settings and its effect on retargeting and downstream policy performance.

- Explore methods to reduce or eliminate manual retargeting when using UniDex-Cap (e.g., learned IK initialization, contact-aware optimization).

- Dynamics, control, and safety

- Incorporate dynamics and actuation limits (torques, compliance) into training/retargeting to handle force-critical tasks (e.g., pushing, turning screws, cutting under load).

- Evaluate controllers beyond position control (impedance/force control) and study interaction stability and safety during tool use.

- Analyze safety risks and failure modes in sharp-tool tasks (e.g., scissors), including safe-guard policies, fail-safes, and human-in-the-loop monitoring.

- Scaling and unlabeled data

- As acknowledged, investigate leveraging large-scale action-free/weakly labeled egocentric video (e.g., Ego4D) for pretraining, and design alignment objectives that avoid brittle post-processing.

- Study curriculum or self-supervised objectives that exploit contact cues, affordances, and temporal structure to improve dexterous priors without explicit actions.

- Reproducibility and ecosystem

- Provide detailed protocols for community contributions of new hands/datasets, including FAAS mapping templates, QA checks, and automated validation tools.

- Establish benchmarks and leaderboards for universal dexterous manipulation with standardized tasks, sensors, and metrics to drive comparable progress.
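As an illustration of one metric suggested in the list above, fingertip positional error for a retargeted clip could be computed along the following lines. The array layout (frames × fingertips × xyz, in meters) and the function name are assumptions made for this sketch; the paper's own evaluation code is not described in this summary.

```python
import numpy as np

def fingertip_error(human_tips, robot_tips):
    """Mean and max Euclidean fingertip error.

    Both inputs have shape (T, F, 3): T frames, F fingertips, xyz in meters.
    """
    diff = np.asarray(human_tips) - np.asarray(robot_tips)
    dist = np.linalg.norm(diff, axis=-1)  # (T, F) per-fingertip distances
    return dist.mean(), dist.max()

# Toy clip: 2 frames, 5 fingertips, perfect match except one 5 mm offset.
human = np.zeros((2, 5, 3))
robot = human.copy()
robot[0, 1] = [0.003, 0.004, 0.0]
mean_err, max_err = fingertip_error(human, robot)
print(round(mean_err * 1000, 3), round(max_err * 1000, 3))  # 0.5 5.0 (millimeters)
```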

Practical Applications

Immediate Applications

Below are concrete, deployable use cases that can be piloted today using the UniDex suite (UniDex-Dataset, UniDex-VLA, UniDex-Cap) and the paper’s methods (FAAS, human-in-the-loop retargeting, 3D VLA with point clouds).

- Sector: Manufacturing (electronics, light assembly, rework)

- Application: Flexible tool-use cells that cut zip ties, trim packaging, squeeze triggers (adhesives, cleaners), manipulate small fixtures, and operate consumer-grade tools that require human-like finger coordination.

- Tools/products/workflows:

- Deploy UniDex-VLA as a ROS node controlling a dexterous end-effector (e.g., Allegro, Shadow, Inspire, Wuji).

- Use UniDex-Cap to capture SMEs performing SOPs via egocentric RGB-D + hand pose; transform with the retargeting GUI; fine-tune with ~50 robot demos plus co-training human demos (≈2 human demos ≈ 1 robot demo).

- Apply DemoGen-like spatial augmentation to expand placement coverage without extra data collection.

- Assumptions/dependencies:

- Availability of a dexterous hand and a 7-DoF arm; single-view RGB-D of sufficient quality; FAAS map for the target hand; safety-rated enclosure for tools; modest manual calibration for retargeting.

- Sector: Warehousing and logistics (returns processing, kitting)

- Application: Non-destructive opening of packages (peeling tape ends, trigger-operated box sealers), removing tags/caps, re-bagging small items with fingered grasp-and-press actions that grippers struggle with.

- Tools/products/workflows:

- Pretrain with UniDex-Dataset; task-specific fine-tuning on site with 30–50 demos; leverage co-training to reduce robot demo hours.

- Build a skills library for “press,” “pinch,” “squeeze,” and “thumb-index tool actuation,” aligned with FAAS.

- Assumptions/dependencies:

- Tool and packaging variability managed by point-cloud perception and object generalization; safety constraints for any sharp tools (may require custom end-effector guards).

- Sector: Facilities, retail, and hospitality operations

- Application: Trigger-based spraying for surface disinfection, sweeping debris into dustpans, operating pump bottles, using handheld wipers/squeegees.

- Tools/products/workflows:

- Minimal teleop data collection with OpenTeleVision + Apple Vision Pro; human-in-the-loop retargeting to ensure plausible hand–object contacts; UniDex-VLA fine-tuning for target site.

- Assumptions/dependencies:

- Moderate precision tasks; single-view camera coverage; smooth floor/table surfaces; periodic skill health checks.

- Sector: Agriculture (greenhouse, indoor farming)

- Application: Targeted spraying and simple horticultural tasks requiring thumb-index coordination (e.g., squeeze-to-mist plant care).

- Tools/products/workflows:

- Port a trained policy zero-shot across different dexterous hands using FAAS; use point-cloud-based spatial augmentation to adapt to row-by-row layout differences.

- Assumptions/dependencies:

- Stable lighting; tool standardization (common spray bottles); safe chemical handling procedures.

- Sector: Lab operations (non-sterile)

- Application: Operating trigger-driven dispensers, simple vial cap opening/closing, pipette-like squeeze motions (beginner level).

- Tools/products/workflows:

- UniDex-Cap to record experts; transform to robot-executable; fine-tune UniDex-VLA with 3D perception; deploy in benchtop cells.

- Assumptions/dependencies:

- Tasks that do not require tight compliance or force-controlled insertion; no sterile constraints; reliable camera vantage.

- Sector: Consumer and service robotics (pilot deployments, labs, testbeds)

- Application: Teach-and-repeat household tool-use tasks such as watering plants (spray bottle), sweeping small objects, basic device manipulation (e.g., mouse use).

- Tools/products/workflows:

- DIY data capture with UniDex-Cap; single-view RGB-D; an affordable dexterous hand (e.g., Inspire/Oymotion); on-device fine-tuning or cloud training service that returns a FAAS-compatible policy.

- Assumptions/dependencies:

- Early-adopter environments; user tolerance for occasional re-teaching; safety around people; hardware cost acceptance.

- Sector: Robotics OEMs and integrators (software/products)

- Application: FAAS-based SDKs and adapters that unify control across different dexterous hands; drop-in policy heads for cross-hand deployment.

- Tools/products/workflows:

- Ship FAAS mappings and the retargeting GUI; provide ROS packages for UniDex-VLA; offer conversion tools from URDF to FAAS; model zoo with off-the-shelf tool-use skills.

- Assumptions/dependencies:

- Accurate FAAS mapping per hand; calibration utilities; licensing compatibility with UniDex-Dataset.

- Sector: Academia and education

- Application: Course/lab modules on dexterous manipulation with unified action spaces; reproducible benchmarks for cross-hand transfer and 3D VLA training.

- Tools/products/workflows:

- Use UniDex-Dataset for pretraining experiments; release new FAAS-mapped hands; assign student projects to capture, retarget, and fine-tune new tool-use tasks; create standardized evaluation suites with spatial/object shifts.

- Assumptions/dependencies:

- Access to an RGB-D sensor, a dexterous hand, and minimal compute for fine-tuning; adherence to dataset contribution protocols.

- Sector: Policy and governance (near-term guidance)

- Application: Data privacy and consent playbooks for egocentric capture; procurement language for “FAAS-compatible” dexterous hands to reduce vendor lock-in.

- Tools/products/workflows:

- Draft organizational SOPs that cover storage/processing of egocentric video; update robotics RFPs to include unified action-space support and safety mechanisms for tool-use.

- Assumptions/dependencies:

- Institutional review processes for human video data; legal counsel for data sharing and IP of demonstrations.

Long-Term Applications

These opportunities are plausible given the results and innovations, but will require more research, scale, safety engineering, or ecosystem development.

- Sector: General-purpose tool-use in open environments

- Application: Household or workplace robots that robustly handle a wide variety of tools (scissors, blades, drivers, staplers) and materials, with reliable zero-shot transfer across hands and settings.

- Tools/products/workflows:

- Large-scale pretraining on action-free egocentric video; FAAS standard adoption across vendors; integration with tactile/force sensing for safe contact-rich manipulation.

- Assumptions/dependencies:

- Improved safety certification for tool-use near humans; richer multimodal pretraining; real-time inference on edge compute.

- Sector: Bimanual dexterous manipulation

- Application: Two-handed tasks (e.g., opening jars, threading, cable management, garment handling, cooking prep) that need coordinated in-hand reconfiguration.

- Tools/products/workflows:

- Extend FAAS to multi-hand coordination; couple UniDex-VLA with task-and-motion planning and learned contact planners; synchronized dual-RGB-D or multi-view setups.

- Assumptions/dependencies:

- Stable hand–eye calibration across two arms; latency-bounded inference; robust 3D scene reconstruction beyond single view.

- Sector: Healthcare and assistive robotics

- Application: Safe, dexterous bedside assistance (button pressing, cable routing, dressing aids), and, further out, task support in clinical labs or therapy contexts.

- Tools/products/workflows:

- Safety-first policies with uncertainty-aware execution; verified motion primitives; FAAS-aligned prosthetic/orthotic devices for shared autonomy.

- Assumptions/dependencies:

- Regulatory approval; comprehensive risk analyses for tool use; fail-safe hardware and tactile feedback; stringent hygiene/sterility protocols.

- Sector: Prosthetics and human augmentation

- Application: Mapping EMG/nerve interfaces to FAAS for intuitive multi-finger control and cross-device transfer (train on one device, deploy to another).

- Tools/products/workflows:

- Learn a user-specific EMG→FAAS decoder; leverage UniDex-Dataset priors for typical grasp synergies; add tactile sensing for stable manipulation.

- Assumptions/dependencies:

- High-quality biosignal decoding; personalized calibration; medical-grade hardware and validation.

- Sector: Precision lab automation and micro-assembly

- Application: Pipetting with force/angle sensitivity, vial capping at precise torque, tiny connector mating, and tasks demanding force/vision fusion.

- Tools/products/workflows:

- Integrate high-rate force/torque/tactile sensing into UniDex-VLA; contact-rich flow matching with compliant control; cleanroom-ready end effectors.

- Assumptions/dependencies:

- Sub-mm positioning accuracy; closed-loop force control; multi-sensor synchronization.

- Sector: Construction and field service

- Application: Caulking, wiring, cutting and trimming flexible materials, operating panel knobs/valves with dexterity in unstructured environments.

- Tools/products/workflows:

- Ruggedized dexterous hands; outdoor-capable 3D perception; domain randomization and large-scale human-video pretraining.

- Assumptions/dependencies:

- Environmental robustness (dust, lighting, weather); mobile manipulation platforms; safety certification for tool operation in public spaces.

- Sector: Energy and process industries

- Application: Remote inspection/manipulation—turning valves, flipping breakers, operating safety latches—performed by dexterous manipulators in hazardous zones.

- Tools/products/workflows:

- Tele-supervised UniDex-VLA with FAAS; egocentric data capture from human operators on replica panels; co-training to achieve low-robot-demo deployment.

- Assumptions/dependencies:

- Domain-specific safety standards; reliable communications; explosion-proof hardware variants.

- Sector: Policy and standards (ecosystem building)

- Application: Establish FAAS-like open standards for dexterous hand control and dataset-sharing consortia for egocentric manipulation data.

- Tools/products/workflows:

- Industry/academic working groups to define FAAS mappings, evaluation protocols, and safety benchmarks for tool use.

- Assumptions/dependencies:

- Multi-vendor participation; IP frameworks for sharing egocentric data and derived robot trajectories; alignment with regulatory bodies.

- Sector: Software infrastructure and cloud services

- Application: “Data-to-policy” cloud pipelines that ingest egocentric human videos, perform retargeting at scale, auto-augment with scene edits, and return FAAS-compatible policies for diverse dexterous hands.

- Tools/products/workflows:

- Managed training with 3D encoders and flow matching; automated FAAS mapping validators; model versioning and rollback; cost-optimized human–robot co-training (using ~2:1 human:robot demo exchange rate as planning prior).

- Assumptions/dependencies:

- Scalable compute; privacy-preserving data handling; robust QA for retargeting quality.

- Sector: Education and workforce upskilling

- Application: Hands-on curricula where technicians author robot skills by demonstration (egocentric capture), building a shared library of dexterous tool-use procedures.

- Tools/products/workflows:

- Low-cost kits bundling an RGB-D sensor, a lightweight dexterous hand, UniDex-Cap, and a library of starter FAAS skills.

- Assumptions/dependencies:

- Affordable hardware; institutional support for open data contributions; instructor training.

Notes on general feasibility across applications:

- The strongest near-term performance is for single-view, moderate-precision tool-use tasks that involve pressing/squeezing/pinching and do not require strict force-controlled insertions.

- Cross-hand deployment is enabled by FAAS but may require brief calibration and verifying joint limit interpretations per device.

- Safety, especially with sharp tools or human proximity, is the main gating factor for broad public deployments; safety-rated cells and conservative policies are recommended.

- Human-to-robot co-training materially lowers data costs; budgeting can assume an initial 2:1 human:robot demo exchange rate, with validation on local tasks.

Glossary

- Ab-/adduction: Biomechanical finger motions moving toward (adduction) or away from (abduction) the hand’s midline, used for stabilizing grips. "lateral ab-/adduction for stabilization."

- Action chunk: A short horizon sequence of consecutive actions predicted jointly by a policy. "and predicts an H-step action chunk expressed in the unified action space FAAS."

- Affordance: The actionable possibilities offered by objects, such as where stable contacts can be made. "reasoning about fine 3D geometry and contact affordances"

- Conditional flow matching: A training objective that learns to map noise to target actions conditioned on observations by matching flows. "optimized with a conditional flow-matching objective."

- Cross-hand transfer: The ability to apply skills learned on one dexterous hand to different hand embodiments. "enabling cross-hand transfer."

- Degrees of Freedom (DoF): Independent controllable movements in a mechanism or robot hand. "covering active DoF from 6 to 24."

- Dexterous manipulation: Complex, fine-grained hand control for grasping and using tools or objects. "Dexterous manipulation remains challenging"

- Domain gap: Systematic differences between data sources (e.g., human vs. robot) that hinder transfer. "introduces visual and kinematic domain gaps."

- Dummy base: A virtual 6-DoF frame inserted before the real robot base to adjust global alignment during IK. "a dummy base inserted before the real robot base."

- Egocentric: First-person viewpoint aligned with the actor’s perspective. "derived from egocentric human video datasets."

- Embodiment heterogeneity: Variation in robot hardware morphology and kinematics across platforms. "to handle embodiment heterogeneity"

- Forward kinematics: Computing end-effector positions from joint configurations. "The forward kinematics of fingertip is"

- Forward–Euler integration: A simple numerical method for stepping differential equations forward in time. "via forward–Euler integration"

- Function–Actuator–Aligned Space (FAAS): A unified action representation that aligns actuators by functional roles across different hands. "we introduce the Function–Actuator–Aligned Space (FAAS)"

- Homogeneous transform: A matrix representation of rotation and translation in a single 4×4 transform. "is the homogeneous transform from the robot base to fingertip "

- Human-in-the-loop: A process where humans provide interactive adjustments during computational procedures. "we employ a human-in-the-loop retargeting procedure"

- Inverse kinematics (IK): Computing joint configurations that achieve target end-effector positions. "fingertip-based inverse kinematics"

- Joint limits: Mechanical or safety bounds restricting joint angles or motions. "while satisfying joint limits and damping."

- Kinematic Retargeting: Mapping human hand motions to robot hand joint configurations while preserving contact constraints. "Kinematic Retargeting"

- Latent action space: A learned low-dimensional action representation used by policies instead of direct joint commands. "and other methods introduce latent action spaces"

- Left-aligned action representation: An action formatting scheme that aligns controls to the left to standardize variable-length outputs. "adopts a left-aligned action representation"

- Mimic joint: A dependent joint whose motion is linearly linked to a master joint. "For robot hands containing mimic joint structures (e.g., Inspire, Oymotion, Agility), we handle dependent joints through an iterative correction process."

- Multi-end-effector IK: Inverse kinematics solving for multiple targets (e.g., several fingertips) simultaneously. "multi-end-effector IK solver"

- Parallel-jaw gripper: A simple end-effector with two opposing jaws that move in parallel for grasping. "parallel-jaw grippers"

- Pinhole camera model: A geometric projection model mapping 3D points to 2D image coordinates. "via a pinhole camera model"

- Pointcloud: A set of 3D points representing scene geometry, often colored from RGB-D. "We compute pointclouds from RGB-D frames."

- Proprioception: Internal robot sensing of its own states such as joint angles and poses. "and proprioception "

- Rigid transform: A transformation consisting of rotation and translation without deformation. "be the rigid transform from the dummy base to the real base"

- RGB-D: Color (RGB) images paired with depth (D) measurements. "records synchronized RGB-D streams"

- Six-dimensional (6D) continuous rotation representation: A 6D parameterization of rotation using two 3D axes to avoid singularities. "a 6d continuous rotation representation (two 3d vectors for the local - and -axes)"

- Task and Motion Planning (TAMP): Integrating symbolic task planning with geometric motion planning for robotics. "using Task and Motion Planning (TAMP)"

- Teleoperation: Human remote control of a robot to collect demonstrations or perform tasks. "real-robot teleoperation data"

- Unified Robot Description Format (URDF): A standardized XML format describing robot kinematics and dynamics. "derived from the robot URDF, including mimic joints when present."

- Vision Transformer (ViT): A transformer architecture applied to vision by treating images (or 3D tokens) as sequences. "adopts a vanilla ViT (Dosovitskiy et al., 2020) design"

- Vision–language–action (VLA): Models that jointly process visual input and language to produce actions. "a unified vision–language–action (VLA) policy"

- Zero-shot: Deploying on new tasks or embodiments without any additional fine-tuning. "in a zero-shot manner."
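For the 6D continuous rotation representation defined in the glossary above, a common construction rebuilds a full rotation matrix from two 3D vectors by Gram–Schmidt orthogonalization. The sketch below assumes the two vectors are the first two columns of the rotation matrix (the local x‑ and y‑axes); the axis names are missing from the quoted text, so that choice is an assumption, as are the function names.

```python
import numpy as np

def rotation_from_6d(d6):
    """Build a 3x3 rotation matrix from a 6D vector via Gram-Schmidt."""
    a1 = np.asarray(d6[:3], dtype=float)
    a2 = np.asarray(d6[3:], dtype=float)
    b1 = a1 / np.linalg.norm(a1)                 # first axis: normalize
    a2_orth = a2 - np.dot(b1, a2) * b1           # remove the component along b1
    b2 = a2_orth / np.linalg.norm(a2_orth)       # second axis
    b3 = np.cross(b1, b2)                        # third axis completes a right-handed frame
    return np.stack([b1, b2, b3], axis=1)        # columns are the local axes

def rotation_to_6d(R):
    """Keep the first two columns of a rotation matrix."""
    return np.concatenate([R[:, 0], R[:, 1]])

# The inputs need not be unit length or orthogonal.
R = rotation_from_6d([2.0, 0.0, 0.0, 1.0, 3.0, 0.0])
print(np.allclose(R, np.eye(3)))  # True
```

The appeal of this parameterization is that any pair of non‑parallel, non‑zero vectors maps to a valid rotation, so a network can output six unconstrained numbers.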
