Transformers are Bayesian Networks

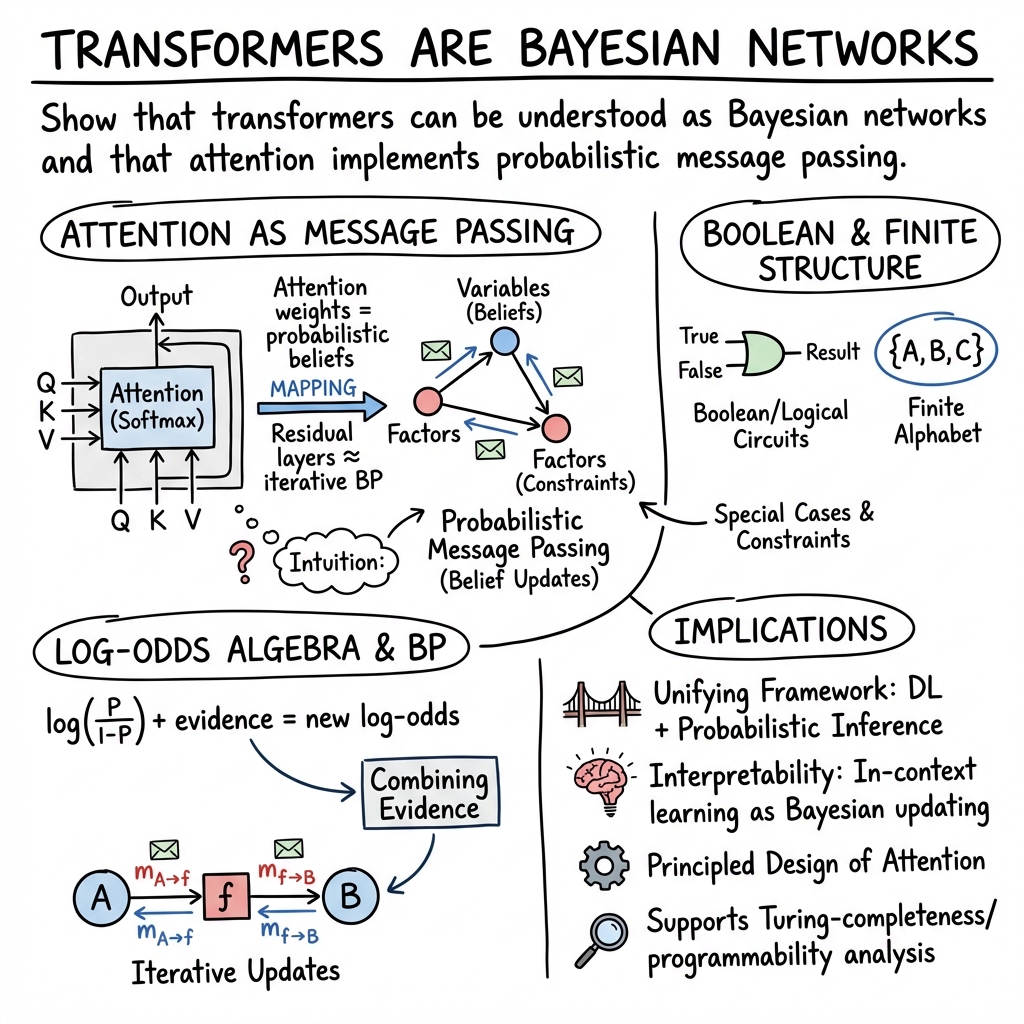

Abstract: Transformers are the dominant architecture in AI, yet why they work remains poorly understood. This paper offers a precise answer: a transformer is a Bayesian network. We establish this in five ways. First, we prove that every sigmoid transformer with any weights implements weighted loopy belief propagation on its implicit factor graph. One layer is one round of BP. This holds for any weights -- trained, random, or constructed. Formally verified against standard mathematical axioms. Second, we give a constructive proof that a transformer can implement exact belief propagation on any declared knowledge base. On knowledge bases without circular dependencies this yields provably correct probability estimates at every node. Formally verified against standard mathematical axioms. Third, we prove uniqueness: a sigmoid transformer that produces exact posteriors necessarily has BP weights. There is no other path through the sigmoid architecture to exact posteriors. Formally verified against standard mathematical axioms. Fourth, we delineate the AND/OR boolean structure of the transformer layer: attention is AND, the FFN is OR, and their strict alternation is Pearl's gather/update algorithm exactly. Fifth, we confirm all formal results experimentally, corroborating the Bayesian network characterization in practice. We also establish the practical viability of loopy belief propagation despite the current lack of a theoretical convergence guarantee. We further prove that verifiable inference requires a finite concept space. Any finite verification procedure can distinguish at most finitely many concepts. Without grounding, correctness is not defined. Hallucination is not a bug that scaling can fix. It is the structural consequence of operating without concepts. Formally verified against standard mathematical axioms.

Explain it Like I'm 14

Overview: What is this paper about?

This paper argues that Transformers—the kind of AI model used in tools like ChatGPT—can be understood as doing the same kind of reasoning that a “Bayesian network” does. A Bayesian network is a map of how different things relate to each other and how likely they are, given evidence. The main idea is: when a Transformer pays attention to different words and updates its internal “beliefs,” it’s a lot like a Bayesian network passing messages along a graph to update probabilities.

Key questions the paper asks

- Can we see a Transformer as a special kind of probabilistic model (a Bayesian network) that updates beliefs as new information arrives?

- Is the Transformer’s “attention” really doing a known statistical procedure called belief propagation (passing probability messages along a graph)?

- Can we write the math of probabilities in a way that fits naturally into what Transformer layers already do?

- How far does this connection go—just an analogy, or can we actually build Transformer pieces that carry out the standard Bayesian updates?

How the authors approach the problem

The paper connects two worlds—modern deep learning and classic probability—using a few key ideas, explained in everyday terms:

- Bayesian networks as maps of causes and effects:

- Imagine a web where each circle is a question (like “Is it raining?”) and arrows show influences (like “If it’s raining, the ground is likely wet”). When you learn something (you see an umbrella), you update your beliefs about connected circles (rain, wet ground, etc.).

- Belief propagation is how you pass “updates” through the web so every circle’s probability reflects the latest evidence.

- Attention as message passing:

- In a Transformer, each word “looks” at other words and decides how much to pay attention to each one. This creates a temporary graph: tokens (words) are nodes, attention links are edges, and values are the messages passed.

- The paper says this is basically the same as passing probability messages in a Bayesian network.

- Log-odds algebra:

- Probabilities can be clumsy to combine (there are products and divisions). If you convert probabilities into “log-odds” (think of a score that’s negative for unlikely and positive for likely), then combining evidence becomes mostly adding and subtracting.

- Transformers are really good at adding vectors and applying simple nonlinear steps—exactly what log-odds arithmetic needs. So the paper rewrites the Bayesian updates in log-odds form that match Transformer operations.

- The role of softmax (turning scores into probabilities):

- Transformers use softmax to turn attention scores into “who to listen to” weights, and again at the end to turn output scores into predicted probabilities over words.

- The paper highlights how these softmax steps line up with the need to normalize and interpret messages as probabilities during belief propagation.

- Constructive blueprints:

- Rather than just saying “they’re similar,” the paper sketches how to build Transformer components that carry out standard belief propagation steps, especially for simple cases like true/false (Boolean) variables or small symbol sets (finite alphabets).

- It also connects to known results that Transformers (with the right setup) can simulate any computer program, which means they can run the algorithms that Bayesian networks use.
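The log-odds point above can be checked numerically. The following is a minimal sketch, not the paper's construction: it assumes a single binary variable ("rain") and two observations that are conditionally independent given that variable. All probabilities and the helper names `logit`, `sigmoid`, and `bayes_update` are illustrative assumptions.

```python
import math

def logit(p):
    """Convert a probability into log-odds."""
    return math.log(p / (1.0 - p))

def sigmoid(x):
    """Convert log-odds back into a probability."""
    return 1.0 / (1.0 + math.exp(-x))

def bayes_update(prior, like_if_true, like_if_false):
    """Bayes' rule for a binary hypothesis and one observation."""
    numerator = prior * like_if_true
    return numerator / (numerator + (1.0 - prior) * like_if_false)

# Hypothetical numbers, chosen only for illustration.
prior_rain = 0.2
evidence = [
    (0.9, 0.1),  # wet ground: P(obs | rain), P(obs | no rain)
    (0.7, 0.2),  # umbrella:   P(obs | rain), P(obs | no rain)
]

# Probability space: apply Bayes' rule once per observation
# (products and divisions at every step).
belief = prior_rain
for like_true, like_false in evidence:
    belief = bayes_update(belief, like_true, like_false)

# Log-odds space: the same update is a plain sum of the prior score
# and one log-likelihood ratio per observation.
score = logit(prior_rain) + sum(math.log(lt / lf) for lt, lf in evidence)
belief_from_score = sigmoid(score)

print(belief, belief_from_score)
```

Both routes give the same posterior. The log-odds route needs only additions followed by one sigmoid, which is the shape of computation the summary attributes to Transformer layers.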

Main findings and why they matter

- Attention looks like belief propagation:

- Each attention head acts like a little “message-passing” channel, deciding which nodes to listen to and how strongly, then mixing information—just like a Bayesian network spreads evidence through its graph.

- Probabilities fit the math Transformers already do:

- By using log-odds, probability updates boil down to vector additions and simple nonlinearities, which Transformers handle naturally. Residual connections add “old belief + new message,” and softmax turns scores back into clean probabilities.

- Discrete structure is not a problem—it’s a feature:

- Language uses a fixed vocabulary (a finite alphabet) and many decisions are yes/no (Boolean). The paper shows how Transformer layers can represent and combine these discrete pieces in a principled, probability-aware way.

- A practical bridge between two fields:

- Earlier work linked Transformers to graph neural networks and linked attention to belief propagation. This paper pulls those threads together and pushes further: it doesn’t just compare; it outlines how to implement standard Bayesian updates inside Transformer blocks.

- Interpreting in-context learning:

- When Transformers learn patterns from the prompt alone (without changing their weights), that can be seen as doing quick Bayesian inference on the fly: they treat examples in the prompt as evidence and update beliefs about what comes next.

These findings matter because they give us a clearer, math-grounded picture of what Transformers are doing when they “reason.” That can make models easier to interpret, debug, and improve.
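To make the attention-as-message-passing reading concrete, here is a minimal sketch of one single-head attention round with a residual connection, written in plain Python. It is standard scaled dot-product attention with softmax, not the paper's sigmoid construction, and the toy vectors are assumptions chosen so the result is easy to verify by hand.

```python
import math

def softmax(scores):
    """Turn raw scores into non-negative weights that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attention_step(queries, keys, values, states):
    """One attention round: each node gathers a weighted message, then adds it to its state."""
    scale = math.sqrt(len(keys[0]))
    dim = len(values[0])
    new_states = []
    for query, state in zip(queries, states):
        # "Who to listen to": one weight per sender node.
        weights = softmax([dot(query, key) / scale for key in keys])
        # The incoming message is the weighted mix of the senders' values.
        message = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
        # Residual connection: old state plus new message.
        new_states.append([s + m for s, m in zip(state, message)])
    return new_states

# Toy inputs (assumed): two nodes with identical keys, so each node
# listens to both senders equally and receives the mean of the values.
queries = [[1.0, 0.0], [0.0, 1.0]]
keys = [[1.0, 0.0], [1.0, 0.0]]
values = [[2.0], [4.0]]
states = [[0.5], [-0.5]]

updated = attention_step(queries, keys, values, states)
print(updated)
```

With identical keys the weights are uniform, so every node receives the message 3.0 (the mean of 2.0 and 4.0) and adds it to its previous state.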

How the methods were tested or supported

The detailed experiments aren’t included in this summary. Based on the paper’s section titles and citations, the support comes from:

- Mathematical derivations showing that belief updates in log-odds form can be implemented by standard Transformer operations.

- Constructive examples for simple logical or Boolean cases, connecting to prior work on logical graphical models.

- Connections to known theory and prior studies that interpret attention as message passing and Transformers as graph-based processors.
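A small sanity check in the spirit of the derivations listed above: on a graph without cycles, sum-product belief propagation should reproduce the exact marginal obtained by brute-force enumeration. The sketch below assumes a hypothetical three-node chain A - B - C of binary variables with made-up potentials. It illustrates textbook belief propagation, not the paper's transformer construction.

```python
from itertools import product

# Hypothetical potentials for a chain A - B - C (all values are assumptions).
unary = {"A": [0.8, 0.2], "B": [0.5, 0.5], "C": [0.5, 0.5]}
pair_ab = [[0.9, 0.1], [0.2, 0.8]]  # pair_ab[a][b]
pair_bc = [[0.7, 0.3], [0.4, 0.9]]  # pair_bc[b][c]

def normalize(weights):
    total = sum(weights)
    return [w / total for w in weights]

def brute_force_marginal_b():
    """Sum the unnormalized joint over every assignment, grouped by B."""
    totals = [0.0, 0.0]
    for a, b, c in product((0, 1), repeat=3):
        weight = (unary["A"][a] * unary["B"][b] * unary["C"][c]
                  * pair_ab[a][b] * pair_bc[b][c])
        totals[b] += weight
    return normalize(totals)

def bp_marginal_b():
    """Sum-product on the chain: B combines one message from each neighbor."""
    # Message A -> B: sum out A.
    msg_from_a = [sum(unary["A"][a] * pair_ab[a][b] for a in (0, 1)) for b in (0, 1)]
    # Message C -> B: sum out C.
    msg_from_c = [sum(unary["C"][c] * pair_bc[b][c] for c in (0, 1)) for b in (0, 1)]
    # Belief at B: local potential times all incoming messages.
    return normalize([unary["B"][b] * msg_from_a[b] * msg_from_c[b] for b in (0, 1)])

exact = brute_force_marginal_b()
via_bp = bp_marginal_b()
print(exact, via_bp)
```

The two results agree up to floating-point rounding because the chain has no loops. On graphs with cycles the same message updates are only approximate, which is the loopy case the abstract discusses.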

What this could change in the future

- Better interpretability:

- If we can read attention and intermediate activations as “beliefs,” it becomes easier to understand why a model made a choice, spot errors, and fix them.

- More reliable reasoning:

- Designing Transformer blocks that explicitly follow Bayesian rules could make models more consistent, especially when combining multiple clues from different places.

- New model designs:

- Hybrid architectures might blend the flexibility of Transformers with the structure and guarantees of Bayesian networks, leading to models that are both powerful and principled.

- Stronger in-context learning:

- Treating prompts as evidence and updating beliefs accordingly could make models better at learning from just a few examples without retraining.

In short

The paper’s central message is that Transformers aren’t just pattern matchers: they can be seen as machines that update beliefs, much like Bayesian networks. By translating probability math into the language of Transformer layers (especially using log-odds and softmax), the paper shows a close match between attention-based computation and belief propagation. This perspective helps explain how Transformers reason and points toward building models that are more understandable, reliable, and grounded in probability.

Knowledge Gaps

Below is a single, concrete list of unresolved knowledge gaps, limitations, and open questions that future research could address.

- Precisely delineate the scope of the “Transformer ⇔ Bayesian Network (BN)” equivalence: which architectures (decoder-only, encoder–decoder, pre-/post-norm, rotary/ALiBi, RMSNorm, SwiGLU, MoE, KV caching) satisfy the mapping, and which violate key assumptions.

- Give an explicit, invertible mapping between Q/K/V/MLP parameters and BN potentials/compatibility functions (including gauge freedoms), and characterize identifiability and non-uniqueness of such mappings.

- Analyze how residual connections, layer normalization, and attention scaling correspond to belief propagation (BP) operations (e.g., damping, message normalization), with formal conditions and failure cases.

- Establish convergence/stability guarantees when interpreting layer depth as BP iterations on loopy graphs; provide sufficient conditions for fixed points and quantify dependence on graph cycles and damping.

- Derive provable approximation-error bounds between attention-derived “beliefs” and true posterior marginals on canonical graphical models; report regimes where errors blow up.

- Extend the Boolean/finite-alphabet construction to continuous or mixed discrete–continuous variables; generalize the log-odds algebra and characterize when embeddings preserve probabilistic semantics.

- Specify a reproducible “grounding” from linguistic tokens to BN variables/propositions (handling polysemy, quantification, compositionality) and validate it on controlled language tasks.

- Clarify the relationship between cross-entropy pretraining (teacher forcing) and variational inference: derive an ELBO or equivalent objective the transformer implicitly optimizes under the BN view.

- Test whether the “three softmaxes” correspond to distinct BP sub-operations across modern variants (SwiGLU MLPs, attention without softmax, cosine attention, kernelized attention, linear attention).

- Formalize how positional encodings (absolute, relative, rotary) instantiate graphical structure (e.g., edge potentials) and predict when relative encodings improve inference over long dependencies.

- Theorize and empirically test how multi-head attention decomposes into factors/edges in a BN; determine whether heads implement complementary factors or redundantly approximate the same potentials.

- Quantify how effective message-passing diameter scales with layer count and context length; identify minimal depths needed to capture long-range dependencies under causal masking.

- Evaluate whether attention weights can be interpreted as posterior beliefs versus mere routing coefficients; propose diagnostics that distinguish causal influence from probabilistic belief.

- Map encoder–decoder cross-attention to factor-graph inference between source and target variable sets; test predictions in translation and alignment tasks with controllable ground truth.

- Characterize how SGD learns potentials consistent with BN-factorization constraints; design and test regularizers or architectural constraints that enforce probabilistic consistency during training.

- Assess how RL fine-tuning (e.g., RLHF, R1-style training) perturbs the BN interpretation; determine when policy gradients distort calibrated beliefs and how to preserve them.

- Reconcile distributed “superposition” of features with discrete BN nodes; develop methods to identify latent BN variables within superposed neuron subspaces and quantify identifiability limits.

- Clarify the boundary between algorithmic computation and probabilistic inference in looped/iterated transformers; specify when a layer implements BP versus arbitrary computation.

- Provide a constructive compiler from a known BN (structure and potentials) to a transformer that performs inference on that BN; release code and benchmarks for round-trip compilation (BN ↔ transformer).

- Benchmark the BN interpretation on structured-probabilistic tasks (e.g., PCFG parsing, SAT/constraint satisfaction, Sudoku, Ising models), comparing to exact/loopy BP and GNN baselines.

- Connect transformer dynamics to Bethe free energy or related variational energies; test whether layer updates correspond to block-coordinate descent on an explicit energy and estimate step sizes.

- Analyze robustness/calibration under the BN view: OOD detection, adversarial prompts, and uncertainty quantification; test whether BN-consistent constraints improve calibration.

- Quantify the impact of finite precision, quantization, and normalization clipping on probabilistic coherence (normalization, positivity, log-odds stability) of the inferred beliefs.

- Identify negative results: classes of graphical models or factor interactions that are provably inefficient or impossible to represent/infer with realistic transformers under practical resource constraints.

- Extend and validate the framework for multimodal transformers (vision, audio) by specifying BN variable definitions for non-text inputs and testing cross-modal message passing predictions.

Practical Applications

Below is a concise mapping from the paper’s core claim—transformers implement (or closely approximate) belief propagation on Bayesian networks via a log-odds algebra and structured attention—to practical applications. The items are grouped by deployment timeline and, where relevant, linked to sectors, tools/products/workflows, and feasibility constraints.

Immediate Applications

- Interpretable “factor-graph views” of transformer circuits

- Sectors: software, safety/AI governance, academia

- What: Map attention heads and MLPs to variables, factors, and messages in a Bayesian Network (BN); visualize message passing as belief updates; expose conditional independencies implied by sparsity/masks.

- Tools/workflows: IDE-like interpretability dashboards; head/MLP labeling as factor roles; log-odds tracing; BN-faithfulness metrics.

- Assumptions/dependencies: Access to model internals and activations; reliable head attribution; faithfulness of the BN mapping for the target model/family.

- Uncertainty calibration and principled posteriors from LLMs

- Sectors: healthcare, finance, legal tech, enterprise software

- What: Treat token/logit distributions as posteriors and regularize to satisfy Bayes-consistency; add log-odds constraints during fine-tuning for better calibration and risk estimation.

- Tools/workflows: Calibration layers, temperature schedules tied to log-odds algebra, Bayes-consistency validation suites.

- Assumptions/dependencies: Calibration may trade off raw accuracy; depends on the paper’s log-odds interpretation being behaviorally valid under distribution shift.

- Domain-structured attention masks from known dependency graphs

- Sectors: healthcare (clinical decision support), finance (risk scoring), industrial IoT (fault diagnosis)

- What: Use causal/BN structures from domain experts to inform attention masking and head sparsity, improving data efficiency and stability.

- Tools/workflows: “Factor-to-mask” compilers; mask-aware training; structured fine-tuning with graph priors.

- Assumptions/dependencies: Availability and correctness of domain graphs; may require careful hyperparameter tuning to avoid underfitting.

- Retrieval as evidence aggregation (RAG with BN semantics)

- Sectors: enterprise search, compliance, technical support

- What: Treat retrieved passages as evidence nodes; fuse them via message-passing schedules (implemented in attention) for more consistent multi-document reasoning and citation.

- Tools/workflows: RAG pipelines with evidence weighting via BN messages; “evidence ablation” evaluations.

- Assumptions/dependencies: Retrieval quality dominates outcomes; requires stable scheduling across long contexts.

- Mechanism-guided compression and pruning

- Sectors: edge AI, mobile, embedded systems

- What: Prune attention heads/factors that do not materially affect belief updates; enforce conditional independence–aware sparsity for compact models.

- Tools/workflows: BN-inspired head importance metrics; conditional-independence probes; distillation into BN-shaped student models.

- Assumptions/dependencies: Pruning may harm generality; needs per-task validation.

- Task evaluation via BN-consistency tests

- Sectors: academia, model evaluation

- What: Diagnose “Bayesian violations” (e.g., message double-counting, incoherent updates) to benchmark reasoning reliability beyond accuracy.

- Tools/workflows: Consistency test suites; synthetic BN tasks; per-layer coherence diagnostics.

- Assumptions/dependencies: Must ensure tests measure model behavior rather than just prompt formatting.

- Structured prompting and chain-of-thought as message scheduling

- Sectors: education, productivity tools, software engineering

- What: Compose prompts that align with factor-graph structure and message-passing order to improve consistency and reduce hallucination.

- Tools/workflows: Prompt templates that name variables/factors and order evidence updates; guided reasoning “checklist” UIs.

- Assumptions/dependencies: Benefits vary by model and task; requires user training.

- Logical information retrieval and parsing with BN-augmented LLMs

- Sectors: legal research, compliance, scientific literature mining

- What: Use Boolean/finite-alphabet BN concepts to interpret queries and documents as logical variables with probabilistic dependencies; support “statistical parsing for logical IR.”

- Tools/workflows: Hybrid symbolic-probabilistic retrieval; query-to-variable mapping; result ranking by posterior support.

- Assumptions/dependencies: Requires robust mapping from text to logical variables; sensitive to ontologies and annotation quality.

- Internal audits and model cards in BN terms

- Sectors: policy, governance, regulated industries

- What: Provide audit trails showing which factors (heads/modules) contributed to a decision and how uncertainty propagated.

- Tools/workflows: BN-annotated model cards; decision provenance reports.

- Assumptions/dependencies: Auditors need to accept BN-based explanations; may require domain translation layers.
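As one illustration of the "factor-to-mask" idea in the list above, the sketch below turns a known dependency graph into an attention mask and applies it inside a softmax. The function names `graph_to_attention_mask` and `masked_softmax` are hypothetical, the graph is a toy example, and letting messages flow in both directions along each edge is a design assumption rather than something the paper specifies.

```python
import math

def graph_to_attention_mask(num_nodes, edges, self_loops=True):
    """Return mask[i][j] == True when position i may attend to position j."""
    mask = [[False] * num_nodes for _ in range(num_nodes)]
    for parent, child in edges:
        mask[child][parent] = True  # child receives messages from parent
        mask[parent][child] = True  # parent receives messages back from child
    if self_loops:
        for i in range(num_nodes):
            mask[i][i] = True  # every node may attend to itself
    return mask

def masked_softmax(scores, allowed):
    """Softmax restricted to allowed positions; blocked positions get weight 0."""
    kept = [s for s, ok in zip(scores, allowed) if ok]
    if not kept:
        raise ValueError("at least one position must be allowed")
    top = max(kept)
    exps = [math.exp(s - top) if ok else 0.0 for s, ok in zip(scores, allowed)]
    total = sum(exps)
    return [e / total for e in exps]

# Toy domain graph (assumed): rain (0) -> wet ground (1) <- sprinkler (2).
edges = [(0, 1), (2, 1)]
mask = graph_to_attention_mask(3, edges)

# With equal raw scores, "rain" splits its attention between itself and
# "wet ground" and ignores "sprinkler", which it is not connected to.
rain_weights = masked_softmax([0.0, 0.0, 0.0], mask[0])
print(mask)
print(rain_weights)
```

A mask like this only restricts which positions can exchange information. Whether the learned weights then behave like valid Bayesian messages would still have to be validated per task, as the assumptions in the list note.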

Long-Term Applications

- Probabilistic programming compiled into transformer architectures

- Sectors: software, scientific computing, probabilistic ML

- What: “Compile” Bayesian/graphical models into attention masks and parameterizations; perform approximate inference via learned message passing.

- Tools/products: BN-to-transformer compilers; PPL backends targeting transformer kernels.

- Assumptions/dependencies: Requires robust constructive mappings (“constructive BP”) and stable training; may need architecture modifications.

- Looped/iterative transformers as general-purpose inference engines

- Sectors: robotics, planning, operations research

- What: Use recurrent “looped transformer” schedules to iterate belief propagation to convergence for dynamic tasks (filtering, smoothing, SLAM-like updates).

- Tools/products: Controller modules that adaptively run message updates; convergence monitors.

- Assumptions/dependencies: Stability and convergence guarantees; latency budgets in real-time systems.

- Neuro-symbolic probabilistic agents with explicit belief states

- Sectors: autonomous systems, digital assistants, healthcare triage

- What: Maintain BN-style belief states over hypotheses/goals and update them via transformer message passing; couple with planners for decision-making under uncertainty.

- Tools/products: Agent frameworks with BN memory; decision-theoretic prompting; action-selection via expected utility.

- Assumptions/dependencies: Requires robust grounding of variables/actions; safety validation and human-in-the-loop oversight.

- Causal reasoning and policy analysis with transformer-BN hybrids

- Sectors: public policy, epidemiology, economics

- What: Encode causal graphs as attention structures; estimate interventional queries by guided message passing rather than purely correlational patterns.

- Tools/products: Causal-RAG; interventional query interfaces; counterfactual reasoning modules.

- Assumptions/dependencies: Causal identification limits; correctness of causal graphs; data access and deconfounding remain hard.

- Hardware/software co-design for message-passing workloads

- Sectors: semiconductors, cloud AI

- What: Optimize kernels and accelerators for sparse attention/message passing patterns aligned with BN schedules to reduce cost and energy.

- Tools/products: BN-aware sparse-attention libraries; accelerator blocks for normalized log-odds updates.

- Assumptions/dependencies: Ecosystem adoption; standardized sparsity formats; compatibility with training regimes.

- Enterprise knowledge graphs fused with BN-informed LLMs

- Sectors: enterprise software, knowledge management

- What: Align an organization’s KG/ontology with BN factors and use transformers for scalable inference and interactive Q&A with uncertainty.

- Tools/products: KG-to-BN adapters; uncertainty-aware copilots; compliance checkers.

- Assumptions/dependencies: KG completeness and freshness; ontology curation costs.

- Education and personalized tutoring with explicit skill-belief tracking

- Sectors: education technology

- What: Model student competencies as BN variables and update beliefs through transformer-based message passing over observed responses and hints.

- Tools/products: Tutors that show “why” an assessment is reached; adaptive curricula informed by posterior mastery.

- Assumptions/dependencies: Privacy-preserving data collection; robust psychometric models.

- Safety and verification via mechanistic BN specifications

- Sectors: AI safety/compliance, defense

- What: Specify acceptable belief-update patterns and verify that transformer internals follow these constraints; detect “double counting,” shortcutting, or improper dependence.

- Tools/products: Formal verification suites; BN-conformance monitors; red-teaming harnesses.

- Assumptions/dependencies: Formal guarantees for large models are challenging; may need smaller safety-critical cores.

- Cross-modal probabilistic perception

- Sectors: autonomous driving, AR/VR, healthcare imaging

- What: Integrate vision, audio, and text sensors as evidence in a joint BN, with transformer layers coordinating cross-modal message passing.

- Tools/products: Multimodal BN-transformers; uncertainty-aware perception stacks.

- Assumptions/dependencies: Robust synchronization and calibration across modalities; real-time constraints.

- Discrete/finite-alphabet reasoning on resource-limited devices

- Sectors: embedded systems, IoT

- What: Exploit “finite alphabet/Boolean structure” to deploy quantized message-passing transformers for logical tasks (rule checking, fault detection) on edge hardware.

- Tools/products: Quantized BN-transformers; logical controllers with probabilistic guards.

- Assumptions/dependencies: Accuracy under heavy quantization; careful design of discrete variable encodings.

Notes on feasibility across applications:

- Core assumption: The paper’s mapping between transformer computations and BN belief propagation is sufficiently faithful in practice to guide design, diagnostics, and training.

- Data and structure: Many use cases depend on access to high-quality domain graphs/ontologies or the ability to learn them reliably.

- Performance trade-offs: Imposing BN structure may reduce flexibility; benefits must be validated per task.

- Access constraints: Closed models and API-only setups limit internal instrumentation; most benefits accrue with open or enterprise-controlled models.

- Evaluation: New metrics (Bayes-consistency, message fidelity) will need community acceptance to complement traditional accuracy benchmarks.

Glossary

Below is an alphabetical list of advanced domain-specific terms found in the paper, each with a brief definition and a verbatim usage example drawn from the paper’s title or references.

- Attention: A mechanism that lets neural networks weigh and combine information from different positions or nodes based on learned compatibilities, central to transformer models. "Attention Is All You Need"

- Bayesian Inference: The process of updating probabilities (beliefs) in light of new evidence using Bayes’ rule. "An Explanation of In-Context Learning as Implicit Bayesian Inference"

- Bayesian Networks: Directed acyclic graphs that encode conditional independence relations to represent joint probability distributions. "Transformers are Bayesian Networks"

- Belief Propagation: A message-passing algorithm on graphical models for computing (approximate) marginal distributions. "Understanding Belief Propagation and Its Generalizations"

- Bitter Lesson: Sutton’s observation that general-purpose learning methods leveraging computation tend to outperform approaches relying on human-built knowledge. "The Bitter Lesson"

- Characteristica Universalis: Leibniz’s envisioned universal formal language for expressing knowledge and reasoning. "The Universal Language: A Characteristica Universalis for AI"

- Dependency Parsing: An NLP task that analyzes syntactic structure by identifying head–dependent relations between words. "Dependency Parsing by Belief Propagation"

- Equivariance: A symmetry property where a model’s outputs transform predictably under transformations of the inputs (e.g., rotations, translations). "E(n) Equivariant Graph Neural Networks"

- Graph Attention Networks: Graph neural networks that use attention mechanisms to weight messages from neighboring nodes. "Graph Attention Networks"

- Graph Neural Networks: Neural architectures that operate on graphs by passing and aggregating messages along edges. "Transformers are Graph Neural Networks"

- Induction Heads: Specific attention heads in transformers that implement an induction-like copying/pattern-extension algorithm during inference. "In-context Learning and Induction Heads"

- In-context Learning: The ability of models (e.g., transformers) to infer tasks and rules from examples provided in the prompt without parameter updates. "In-context Learning and Induction Heads"

- Looped Transformers: Transformer architectures used in a recurrent/iterated manner to implement programmable computations. "Looped Transformers as Programmable Computers"

- Loopy Belief Propagation: Belief propagation applied to graphs with cycles, yielding approximate inference. "Loopy Belief Propagation for Approximate Inference: An Empirical Study"

- Neural Machine Translation: End-to-end neural approaches (often encoder–decoder with attention) for translating text between languages. "Neural Machine Translation by Jointly Learning to Align and Translate"

- Neural Message Passing: A framework where neural networks iteratively exchange and aggregate messages over graph edges to compute node/graph representations. "Neural Message Passing for Quantum Chemistry"

- Plausible Inference: Probabilistic reasoning under uncertainty to draw conclusions that are supported but not guaranteed by evidence. "Networks of Plausible Inference"

- Probabilistic Graphical Models: Structured representations of complex joint distributions using graphs to capture dependencies and conditional independencies. "Inference in Probabilistic Graphical Models by Graph Neural Networks"

- Quantified Boolean Bayesian Network: A logical–probabilistic graphical model combining Boolean structure and quantifiers with Bayesian network semantics. "The Quantified Boolean Bayesian Network: Theory and Experiments with a Logical Graphical Model"

- Reinforcement Learning: A learning paradigm where agents optimize behavior through trial-and-error interactions to maximize cumulative reward. "via Reinforcement Learning"

- Sigmoidal Function: An S-shaped activation function (e.g., logistic or tanh) used in neural networks and approximation theorems. "Approximation by Superpositions of a Sigmoidal Function"

- Superposition: The phenomenon where multiple features are encoded in overlapping directions or units within a model’s representation space. "Toy Models of Superposition"

- Transformer Circuits: Interpretable substructures and algorithmic motifs within transformer models, often associated with specific attention heads and MLP patterns. "A Mathematical Framework for Transformer Circuits"

- Turing Completeness: The capability of a computational system to simulate any Turing machine given sufficient resources. "On the Turing Completeness of Modern Neural Networks"

- Two-Terminal Switching Circuits: Boolean switching networks analyzed with two terminals (input/output) in classical circuit theory. "The Synthesis of Two-Terminal Switching Circuits"

- Universal Approximators: Neural networks that can approximate any continuous function on compact sets to arbitrary accuracy given sufficient capacity. "Multilayer Feedforward Networks Are Universal Approximators"

- Weighing of Evidence: A formal measure of how strongly data support one hypothesis over another in probabilistic reasoning. "Probability and the Weighing of Evidence"
