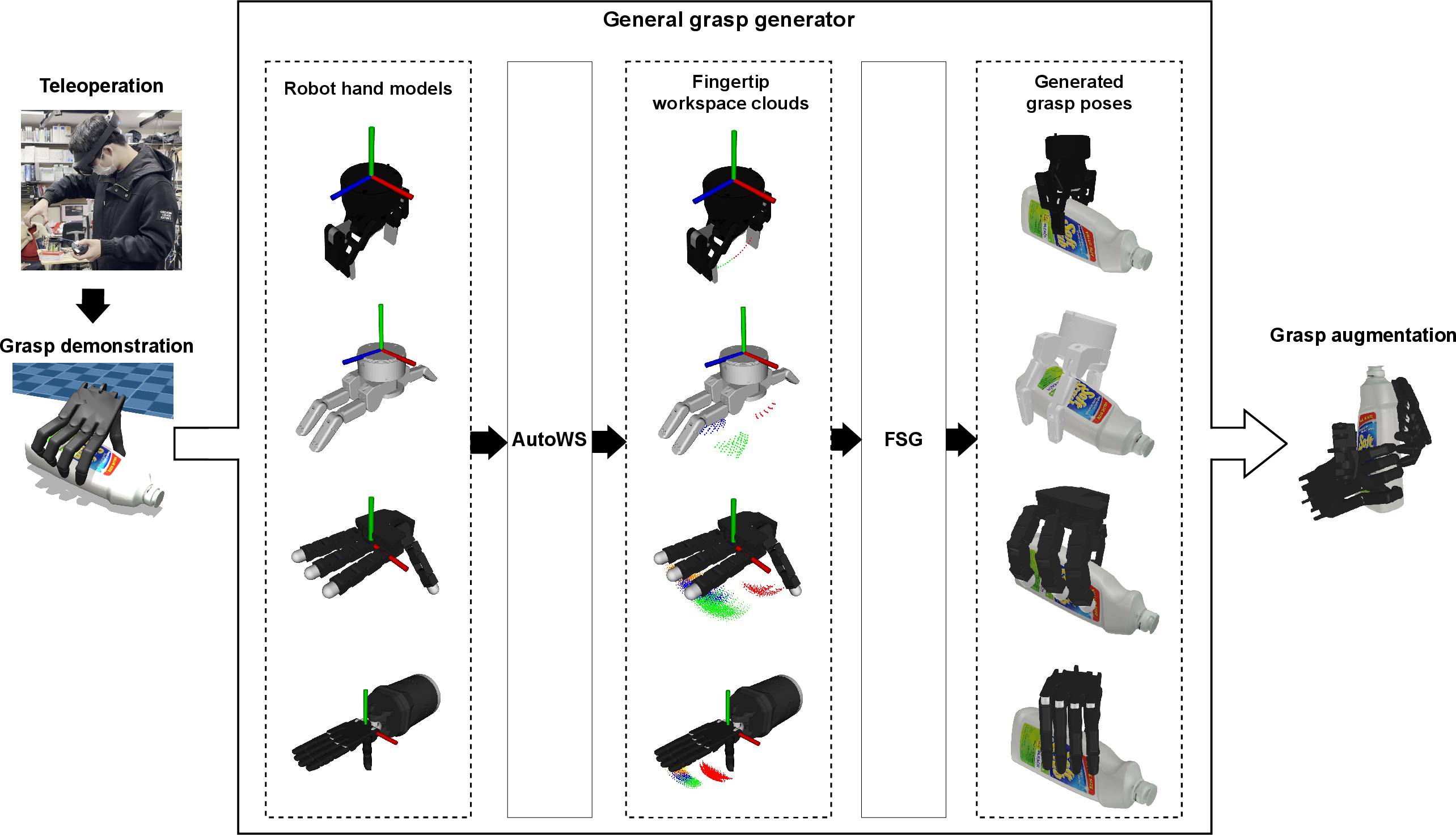

- The paper introduces a grasp data augmentation pipeline that uses AutoWS to generate fingertip workspace clouds and FSG for contact-aware grasp synthesis.

- The method bypasses inverse kinematics, yielding faster generation (up to an order of magnitude on some YCB objects) and higher valid pose rates across various robotic hand models.

- Experimental results using YCB benchmarks and diverse hand architectures demonstrate its effectiveness for real-time, data-driven grasp learning.

Overview

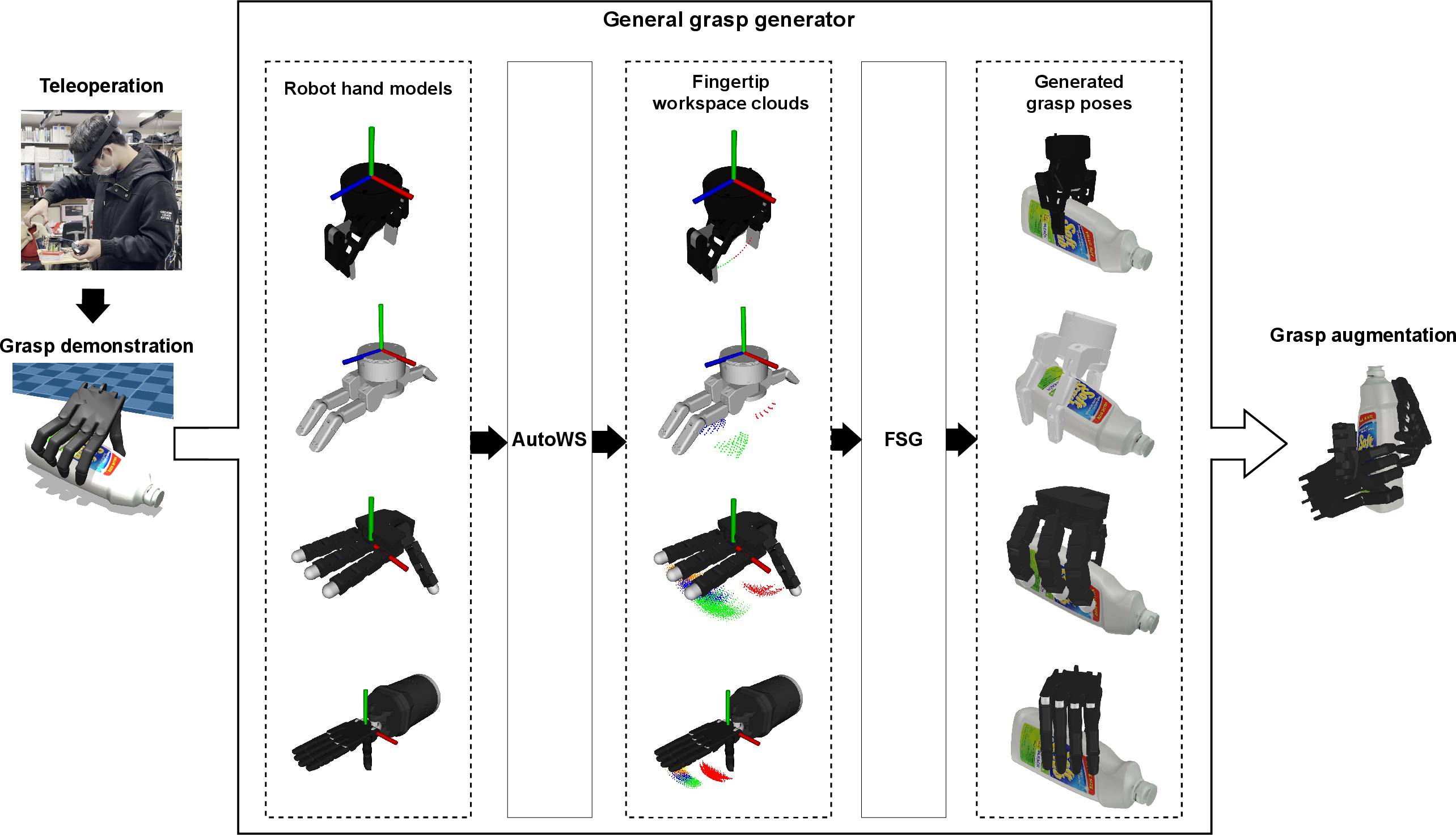

The paper "Dexterous grasp data augmentation based on grasp synthesis with fingertip workspace cloud and contact-aware sampling" (2603.16609) introduces a pipeline for efficiently generating and augmenting grasp pose data for multi-fingered dexterous robotic hands. The method has two principal modules: AutoWS, which automatically generates fingertip workspace clouds that incorporate the structural information of a robotic hand, and FSG, a fingertip-contact-aware sampling-based grasp generator that rapidly creates valid, human-like grasp poses. The approach is validated across diverse hand structures and improves both generation speed and valid pose rate over existing methods, which supports real-time, data-driven grasp learning.

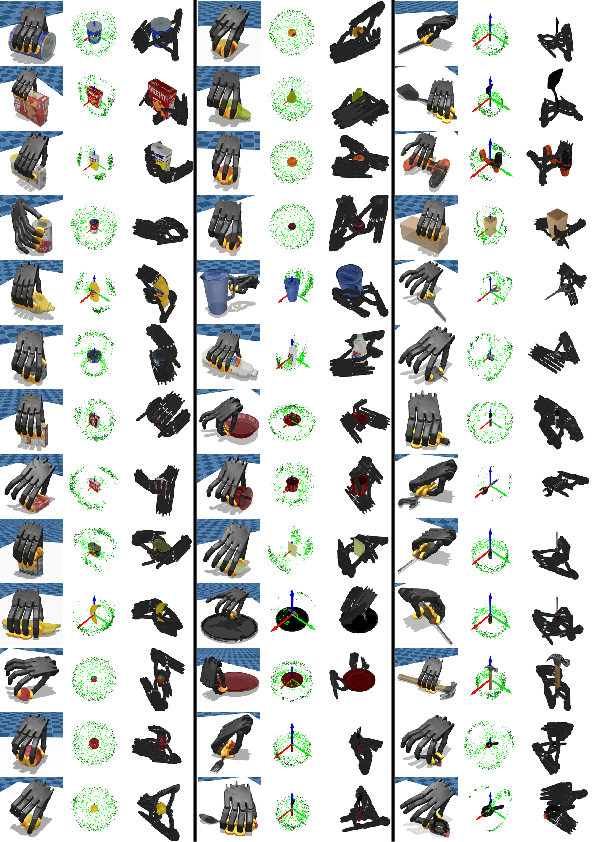

Figure 1: System architecture for dexterous grasp data augmentation showing AutoWS and FSG modules for workspace cloud generation and contact-aware sampling.

Robotic grasp synthesis encompasses model-based and data-driven methods. Model-based approaches, including sampling- and optimization-based algorithms, face limitations when moving from simple parallel grippers to dexterous hands with more degrees of freedom (DOF). Contact point sampling techniques (e.g., antipodal sampling) are efficient for parallel jaws but expensive to extend to multi-fingered hands, because the number of candidate contact combinations grows combinatorially with the number of fingers. Approach-direction-based sampling improves efficiency by abstracting away finger count but demands complex parameter tuning. Optimization-based schemes, such as EigenGrasp and gradient-based planners, offer dimensionality reduction but remain slow and may yield suboptimal grasp quality.

Data-driven approaches have become mainstream thanks to advances in deep learning, but they depend critically on large-scale, diverse datasets. Manual data collection, even with AR/VR teleoperation, is resource-intensive. The paper positions its method as a way to make dataset generation more efficient and more general: it uses the workspace cloud abstraction to bypass inverse kinematics and augments demonstrations to obtain diverse, human-like poses.

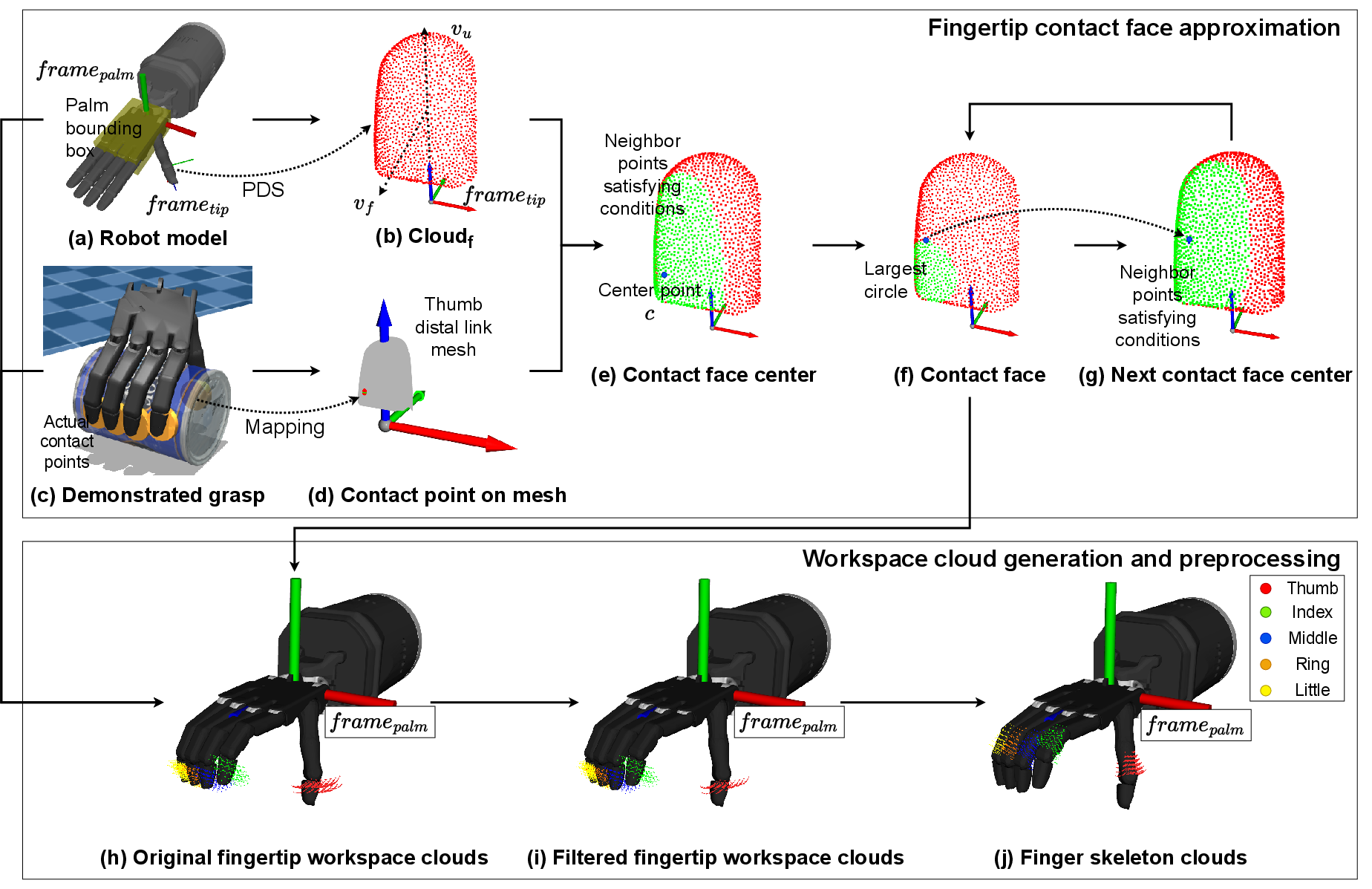

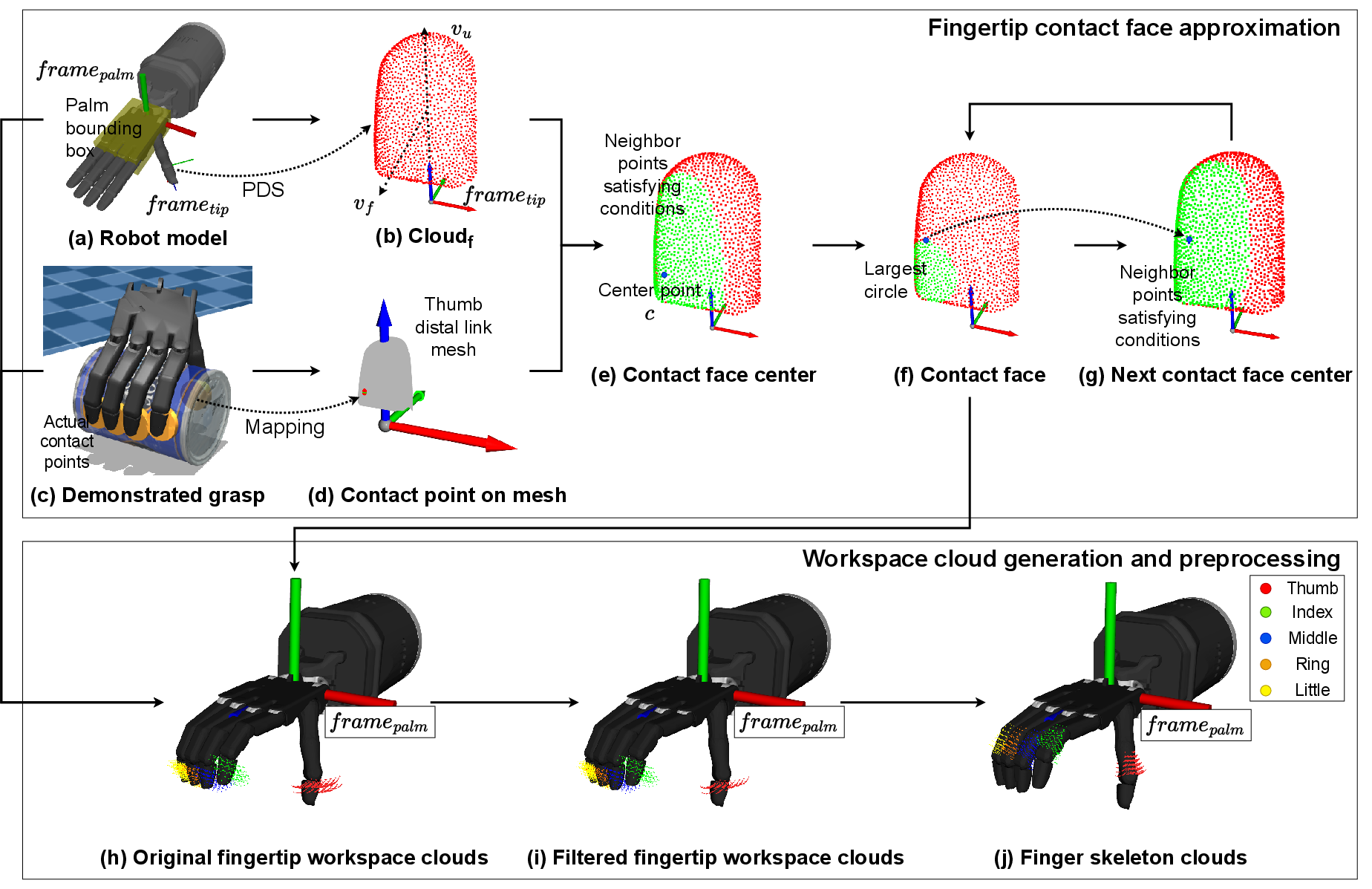

AutoWS: Automatic Fingertip Workspace Cloud Generation

AutoWS generates fingertip workspace clouds as point clouds in the palm frame, embedding joint angle information and fingertip contact surface properties directly into each point's features. This allows fingertip contact poses to be sampled directly, without explicit inverse kinematics (IK) computation. For each grasp demonstration, the system approximates contact faces by selecting candidate points on the fingertip meshes using Poisson disk sampling. Filtering based on surface normals and face convex hulls yields robust contact regions. The workspace cloud is then constructed by sampling the joint space (centered on the demonstration joint angles when available), evaluating forward kinematics for each sample, and storing the position, normal, joint configuration, and contact face parameters in each cloud point.
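The joint-space sampling step can be illustrated with a minimal sketch. It assumes a hypothetical planar two-link finger rather than a real hand model; the link lengths, the uniform sampling window `spread`, the pad-normal convention, and the names `CloudPoint`, `forward_kinematics`, and `build_workspace_cloud` are all illustrative choices, not taken from the paper. Contact face parameters are omitted for brevity.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class CloudPoint:
    position: tuple  # fingertip position in the palm frame (x, y)
    normal: tuple    # unit normal of the fingertip contact pad
    joints: tuple    # joint angles (q1, q2) that produced this point


def forward_kinematics(q1, q2, l1=0.05, l2=0.03):
    """Planar two-link finger: return fingertip position and pad normal."""
    a = q1 + q2
    x = l1 * math.cos(q1) + l2 * math.cos(a)
    y = l1 * math.sin(q1) + l2 * math.sin(a)
    # Assumed convention: pad normal is the distal link direction rotated by -90 degrees.
    normal = (math.sin(a), -math.cos(a))
    return (x, y), normal


def build_workspace_cloud(demo_joints, spread, limits, n_samples, seed=0):
    """Sample joint angles around a demonstration and store FK results per point."""
    rng = random.Random(seed)
    cloud = []
    for _ in range(n_samples):
        joints = []
        for q_demo, (lo, hi) in zip(demo_joints, limits):
            q = rng.uniform(q_demo - spread, q_demo + spread)
            joints.append(min(max(q, lo), hi))  # clamp to joint limits
        position, normal = forward_kinematics(*joints)
        cloud.append(CloudPoint(position, normal, tuple(joints)))
    return cloud


cloud = build_workspace_cloud(
    demo_joints=(0.6, 0.8), spread=0.3,
    limits=((0.0, 1.5), (0.0, 1.6)), n_samples=200)
```

Because every point carries the joint angles that produced it, selecting a cloud point as a contact immediately gives a joint configuration, which is why no IK solve is needed at sampling time.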

Figure 2: Flowchart of AutoWS detailing mesh sampling, contact face approximation, workspace cloud generation, and filtering based on palm orientation.

The cloud is then filtered to keep only points whose contact surface faces toward the palm, which reduces invalid poses and narrows the subsequent contact sampling. Link skeleton clouds are also generated for collision checking, so that finger-object penetration can be detected in later stages.
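One plausible way to express the palm-orientation filter is an angular threshold on each point's contact normal. The sketch below is an assumption about how such a filter could look, not the paper's criterion: `facing_dir` (the palm-frame direction that pads should face) and the 60 degree limit are hypothetical parameters.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def filter_palm_facing(points, facing_dir, max_angle_deg=60.0):
    """Keep (position, normal) pairs whose unit normal is within max_angle_deg of facing_dir."""
    cos_limit = math.cos(math.radians(max_angle_deg))
    return [(p, n) for p, n in points if dot(n, facing_dir) >= cos_limit]


# Four sample points with normals at 0, 180, 30, and 80 degrees from facing_dir.
facing_dir = (0.0, 0.0, -1.0)
samples = [
    ((0.00, 0.0, 0.10), (0.0, 0.0, -1.0)),
    ((0.01, 0.0, 0.10), (0.0, 0.0, 1.0)),
    ((0.02, 0.0, 0.10), (math.sin(math.radians(30)), 0.0, -math.cos(math.radians(30)))),
    ((0.03, 0.0, 0.10), (math.sin(math.radians(80)), 0.0, -math.cos(math.radians(80)))),
]
kept = filter_palm_facing(samples, facing_dir)
```

With these inputs, the points at 0 and 30 degrees are kept and the other two are discarded.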

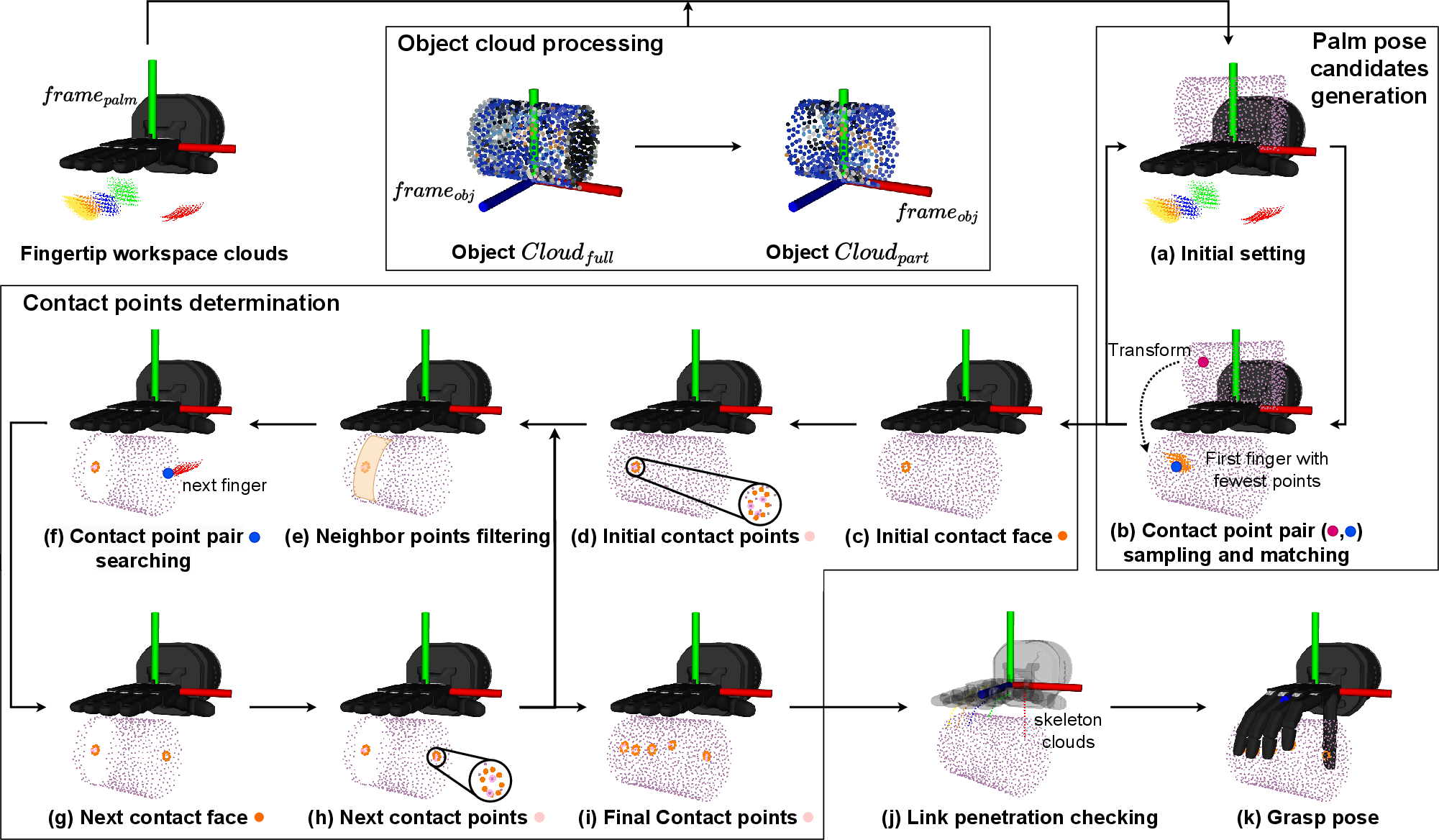

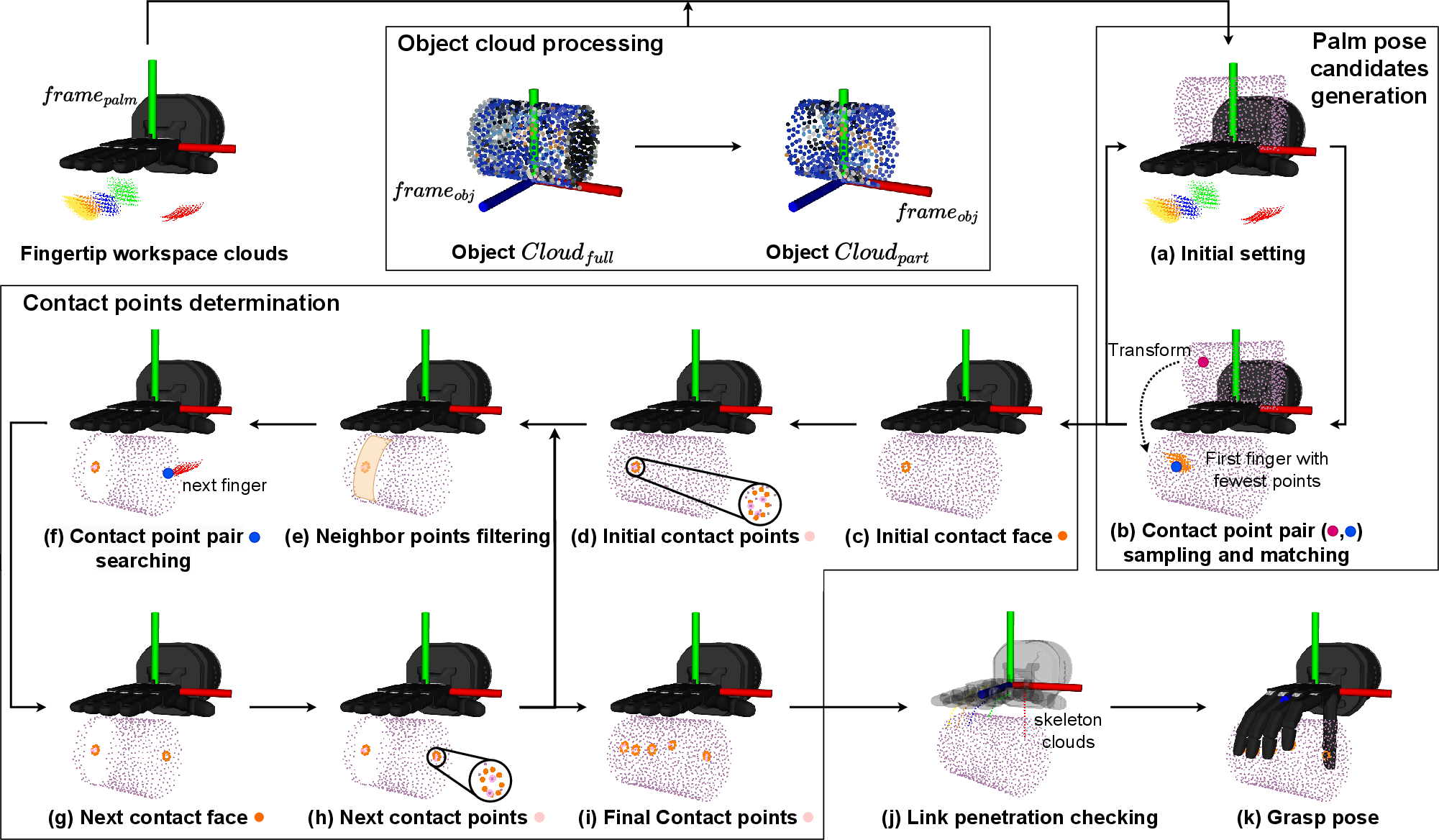

FSG: Fingertip-Contact-Aware Sampling-Based Grasp Generation

The FSG module uses the workspace clouds together with an object point cloud to synthesize precision grasp poses. Candidate palm poses are generated by pairing points from filtered object regions with workspace cloud points, then refined iteratively through rotation and collision checking. Contact points are determined using k-d tree queries based on spatial and normal proximity, and contact faces are reconstructed for each finger. The search iterates over fingers, so the set of active fingers can be specified freely, with the thumb prioritized for dexterous hands. Link penetration checks using the skeleton clouds further narrow the set of valid poses.
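Matching on both spatial and normal proximity can be sketched as a nearest-neighbor search over six-dimensional features (position plus weighted normal). The example below uses a brute-force scan in place of a k-d tree to stay dependency-free; a k-d tree built on the same features would return the same match faster. The function name `match_contact`, the `normal_weight` value, and the convention that a fingertip pad normal should be anti-parallel to the object's outward normal are assumptions for illustration.

```python
def match_contact(tip_pos, tip_normal, object_points, normal_weight=0.05):
    """Return the index of the object point closest to a fingertip in position and normal.

    object_points is a list of (position, outward_unit_normal) tuples.
    The cost equals the squared Euclidean distance between the features
    (position, normal_weight * normal), which is what a 6D k-d tree would index.
    """
    # A pad pressing on the surface should point against the outward normal.
    target_normal = tuple(-c for c in tip_normal)
    best_index, best_cost = -1, float("inf")
    for i, (pos, normal) in enumerate(object_points):
        d_pos = sum((a - b) ** 2 for a, b in zip(pos, tip_pos))
        d_nrm = sum((a - b) ** 2 for a, b in zip(normal, target_normal))
        cost = d_pos + normal_weight ** 2 * d_nrm
        if cost < best_cost:
            best_index, best_cost = i, cost
    return best_index


# Two nearby surface points with opposite normals, e.g. on either side of a thin wall.
object_points = [
    ((0.000, 0.0, 0.0), (0.0, 0.0, 1.0)),
    ((0.010, 0.0, 0.0), (0.0, 0.0, -1.0)),
]
tip_pos, tip_normal = (0.008, 0.0, 0.0), (0.0, 0.0, -1.0)
with_normals = match_contact(tip_pos, tip_normal, object_points)
position_only = match_contact(tip_pos, tip_normal, object_points, normal_weight=0.0)
```

In this example the position-only query picks the spatially nearer point on the wrong side of the wall, while the query that includes normals picks the point the fingertip can actually press against.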

Grasp quality is evaluated using the grasp wrench space (GWS) epsilon metric: unit-force friction cones are applied at each contact point, and force closure and resistance to disturbance are assessed through convex hull analysis of the resulting wrenches.
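The first half of this evaluation, turning a contact into a set of wrenches, can be sketched as follows. The friction cone is approximated by `n_edges` edge forces, each with unit normal component, and each force is paired with its torque about the object origin. The friction coefficient, edge count, and normalization are assumed values, not the paper's settings. Epsilon is conventionally the radius of the largest origin-centered ball inside the convex hull of all contact wrenches; computing that 6D hull needs a hull routine (for example `scipy.spatial.ConvexHull`) and is left out here.

```python
import math


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def friction_cone_wrenches(point, inward_normal, mu=0.5, n_edges=8):
    """Discretize the friction cone at a contact and return 6D wrenches (force, torque)."""
    n = normalize(inward_normal)
    # Build two tangent directions orthogonal to the contact normal.
    helper = (1.0, 0.0, 0.0) if abs(n[0]) < 0.9 else (0.0, 1.0, 0.0)
    t1 = normalize(cross(n, helper))
    t2 = cross(n, t1)
    wrenches = []
    for k in range(n_edges):
        theta = 2.0 * math.pi * k / n_edges
        # Edge force: unit normal component plus a tangential component of size mu.
        force = tuple(
            n[i] + mu * (math.cos(theta) * t1[i] + math.sin(theta) * t2[i])
            for i in range(3))
        torque = cross(point, force)  # torque about the object origin
        wrenches.append(force + torque)
    return wrenches


wrenches = friction_cone_wrenches((1.0, 0.0, 0.0), (0.0, 0.0, 1.0), mu=0.5, n_edges=4)
```

Stacking the wrenches from all contacts gives the point set whose convex hull defines the grasp wrench space; the grasp is in force closure when the origin lies strictly inside that hull.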

Figure 3: Flowchart of FSG, illustrating random sampling, pose candidate generation, contact face reconstruction, iterative finger search, and quality evaluation.

Experimental Evaluation

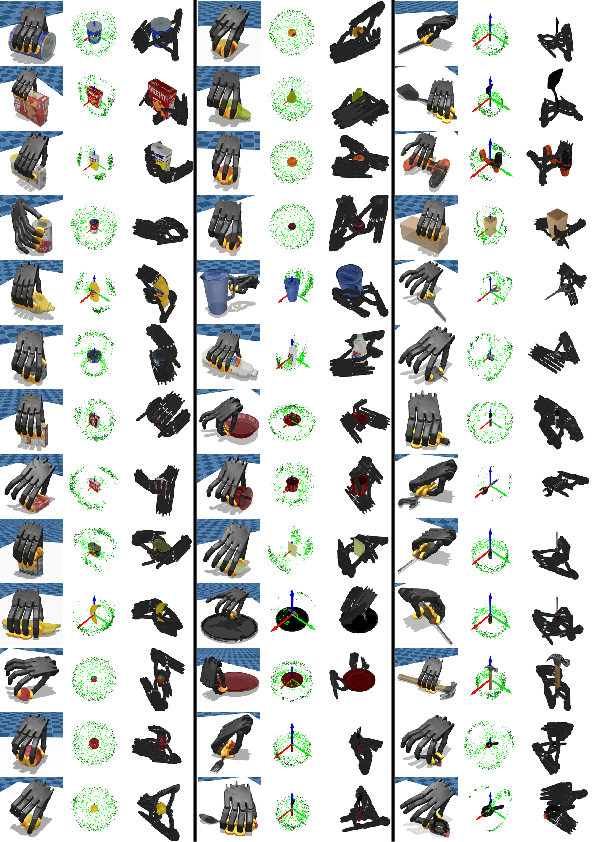

Experiments use YCB objects as benchmarks and several hand models, including the Shadow Hand. Teleoperation is performed in MuJoCo with a Meta Quest headset, collecting demonstration data in real time. The pipeline then augments these demonstrations to generate large datasets. Compared with baselines such as EigenGrasp, DexGraspNet, and QD-Grasp-6DoF, the method generates poses faster and produces a higher percentage of poses with valid epsilon for typical objects.

For objects such as 004-sugar-box and 005-tomato-soup-can, the proposed FSG+Aff variant achieves up to an order-of-magnitude speedup and higher valid pose rates, with comparable or superior GWS epsilon and volume metrics. For challenging objects (024-bowl, 062-dice), workspace clouds derived from compatible demonstration data enable valid pose generation where prior methods fail.

Distributed workspace clouds allow the active fingers to be specified directly; poses generated for the YCB object 021-bleach-cleanser with various hand models highlight the method's versatility across hand structures.

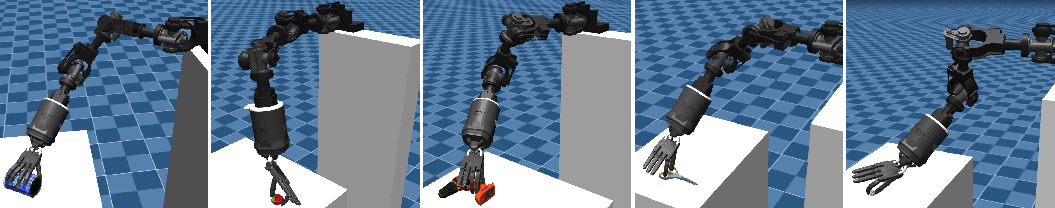

Figure 4: Poses generated for the Shadow Hand with varying finger count, demonstrating adaptive finger selection.

Augmentation using demonstration poses yields dense datasets with diverse palm positions and example grasp poses, maintaining contact points and joint angle consistency.

Figure 5: Collected grasp pose demonstrations and augmented datasets (Part 1), showing contact points, palm position distribution, and example grasp diversity.

Figure 6: Collected grasp pose demonstrations and augmented datasets (Part 2) with detailed augmentation outcomes.

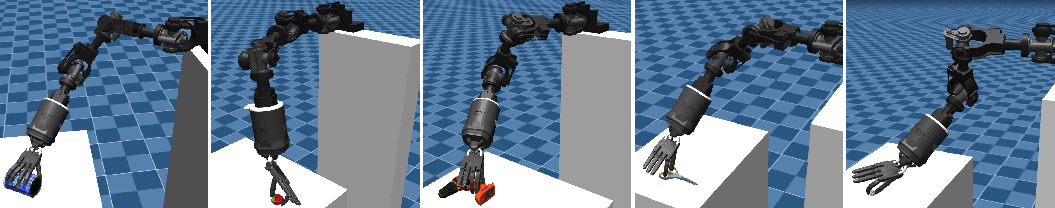

Additional figures demonstrate the system's effectiveness in practical hand-arm integration and in addressing penetration and contact point challenges.

Figure 7: Example grasp poses generated by the hand-arm system for objects on a table.

Implications and Future Directions

This pipeline enables rapid, scalable grasp dataset generation for data-driven training of dexterous manipulation, supporting real-time learning and adaptation. Because structural information is embedded in the workspace point clouds, the approach applies across robot hand architectures and accelerates both synthetic and human-like pose generation.

Current limitations include reliance on VR for demonstration collection and a restriction to precision grasps. Extensions could focus on image- or video-based pose retargeting and contact estimation, robust power grasp augmentation, and improved soft contact modeling. Further, real-world dense point cloud reconstruction and environment-adaptive grasp generation would expand the approach’s applicability in practical manipulation tasks.

Conclusion

The proposed method establishes an efficient workflow for both collecting and augmenting dexterous grasp pose data through workspace cloud embedding and contact-aware sampling. Experimental benchmarks substantiate its gains in computational efficiency and valid pose discovery across varied hand structures and target objects. The flexible architecture supports customization of active fingers, real-time grasp synthesis, and robust augmentation for deep learning pipelines. Future enhancements targeting simpler data acquisition, greater grasp diversity, and real-environment adaptation could further strengthen its value for robotic grasp research and deployment.