- The paper introduces DM4CT, a benchmark for evaluating diffusion-based CT reconstruction methods against traditional and supervised models.

- It demonstrates that diffusion approaches recover sharper details under noise and sparsity, though with risks of hallucinated structures.

- It highlights the critical balance between data consistency and prior enforcement, offering insights into practical deployment and computational trade-offs.

DM4CT: A Systematic Benchmark for Diffusion Models in CT Reconstruction

Overview and Motivation

Recent advances in diffusion models have established them as state-of-the-art priors for solving a variety of inverse problems. However, their application to computed tomography (CT) reconstruction presents significant practical challenges due to the complex, ill-posed nature of the problem and the prevalence of domain-specific distortions, such as correlated noise, ring artifacts, and system-related value range misalignments. DM4CT provides a comprehensive benchmark framework to systematically evaluate ten representative diffusion-based reconstruction algorithms alongside established classical, model-based, unsupervised, and supervised methods across medical, industrial, and real-world synchrotron CT datasets. The benchmark is designed to interrogate both the numerical performance of these models and their robustness with respect to noise, sparsity, and artifacts.

Figure 1: Overview of the DM4CT benchmark including reconstruction pipeline, datasets, simulation configurations, and evaluation metrics.

Benchmark Datasets, Protocols, and Model Taxonomy

The DM4CT suite incorporates two simulated datasets targeting medical and industrial use-cases and a high-resolution, real-world synchrotron scan, especially relevant for bridging the gap between synthetic and physically acquired data. For the simulated datasets, a five-fold protocol varies the number of projections, noise severity, and presence of artifacts. The real-world dataset leverages parallel-beam geometry to focus on structurally realistic, high-resolution regimes, minimizing geometric confounders and maximizing cross-method reproducibility.
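The kind of degradation such a protocol varies can be illustrated with a minimal sketch. It assumes a sinogram of line integrals, uniform angular subsampling for sparsity, and Poisson photon statistics under the Beer-Lambert law for noise. The function name `degrade_sinogram` and the parameters `keep_every` and `photon_count` are hypothetical and are not taken from the DM4CT code; the benchmark's actual simulation settings may differ.

```python
import numpy as np

def degrade_sinogram(sinogram, keep_every, photon_count, rng):
    """Simulate a sparse-view, noisy acquisition from clean line integrals.

    sinogram: array of shape (n_angles, n_detectors) holding line integrals.
    keep_every: keep one projection angle out of every `keep_every` (assumed scheme).
    photon_count: incident photons per detector pixel (lower means noisier).
    """
    sparse = sinogram[::keep_every]                   # uniform angular subsampling
    expected = photon_count * np.exp(-sparse)         # Beer-Lambert expected counts
    counts = np.maximum(rng.poisson(expected), 1)     # Poisson noise; avoid log(0)
    return -np.log(counts / photon_count)             # back to line integrals

rng = np.random.default_rng(0)
clean = rng.uniform(0.0, 2.0, size=(180, 64))         # synthetic values, not a real scan
noisy_sparse = degrade_sinogram(clean, keep_every=4, photon_count=1e4, rng=rng)
```

Lowering `photon_count` or raising `keep_every` moves the toy acquisition toward the high-noise and sparse-view regimes that the benchmark probes.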

All diffusion-based reconstruction methods share common pixel-space or latent-space UNet/VQ-VAE backbones, which isolates the effect of the reconstruction strategy from that of the prior/encoder architecture. A unified taxonomy organizes the methods by how they enforce data consistency (gradient steering, optimization steps, plug-and-play, pseudoinverse, variational Bayesian), supporting rigorous ablation across approaches.

Empirical Evaluation and Key Findings

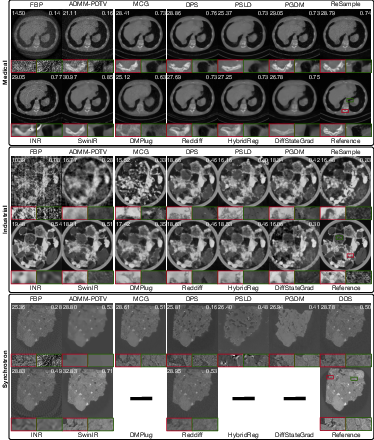

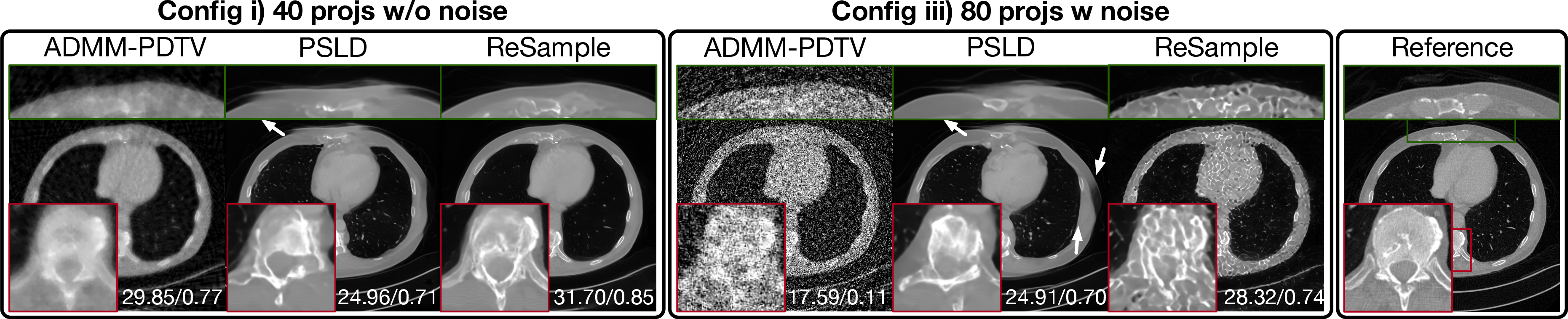

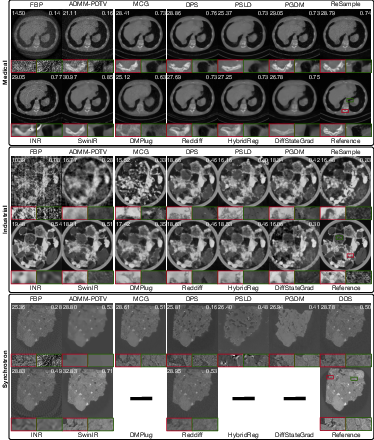

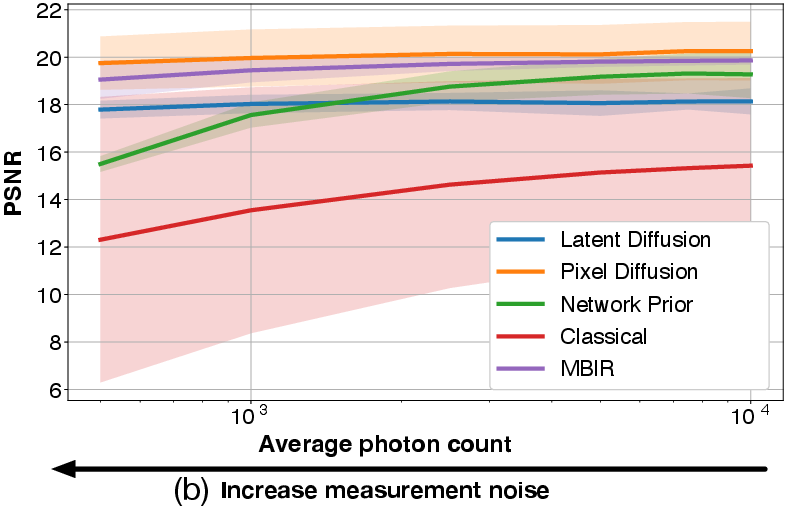

Numerical and Visual Comparison

Across a wide range of noise and sparsity levels, diffusion-based methods consistently outperform traditional filtered back-projection (FBP) and model-based iterative reconstruction (MBIR) baselines in PSNR/SSIM. They are competitive with, but do not dominate, strong supervised models such as SwinIR. The best-performing supervised methods produce smoother reconstructions that score higher on image quality metrics, whereas diffusion techniques tend to recover sharper features that supervised regression typically loses, at the cost of occasional hallucinated structures. Notably, no single diffusion subclass (pixel, latent, plug-and-play, etc.) dominates across all domains and contexts.
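For reference, PSNR can be computed as in the sketch below. The choice of `data_range` (here defaulting to the value span of the reference image) is an assumption; the convention used in the benchmark is not stated in this summary, and a different choice shifts every PSNR value by a constant.

```python
import numpy as np

def psnr(reference, estimate, data_range=None):
    """Peak signal-to-noise ratio in dB between a reference and an estimate."""
    reference = np.asarray(reference, dtype=np.float64)
    estimate = np.asarray(estimate, dtype=np.float64)
    if data_range is None:
        # Assumed convention: dynamic range of the reference image.
        data_range = reference.max() - reference.min()
    mse = np.mean((reference - estimate) ** 2)
    if mse == 0:
        return float("inf")                           # identical images
    return 10.0 * np.log10(data_range ** 2 / mse)

reference = np.array([[0.0, 1.0], [0.0, 1.0]])
estimate = reference + 0.1                            # uniform offset of 0.1
value = psnr(reference, estimate)                     # MSE = 0.01, range = 1
```

Because the score depends on `data_range`, value-range conventions need to be fixed before PSNR values from different systems or pipelines are compared.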

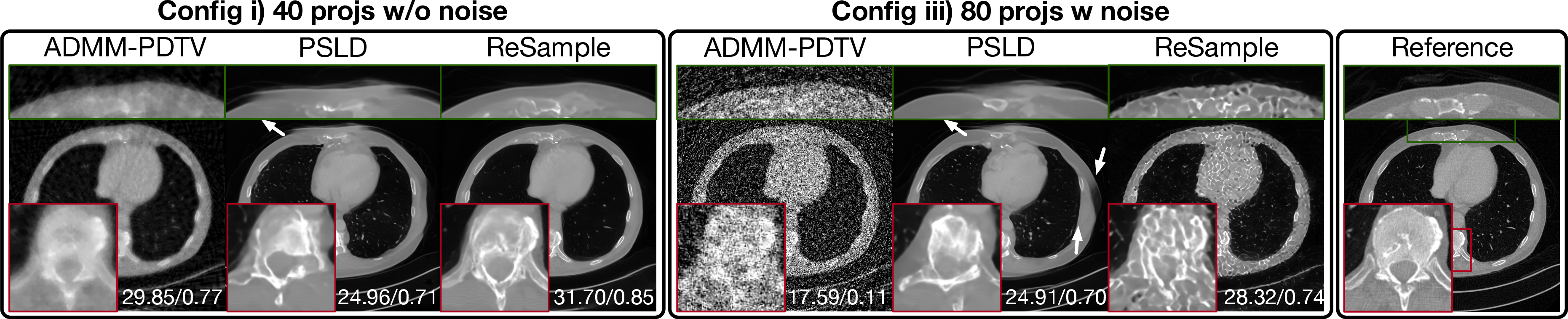

Figure 2: Visual comparison of diffusion-based and established methods across medical, industrial, and synchrotron datasets, illustrating PSNR/SSIM and local detail recovery.

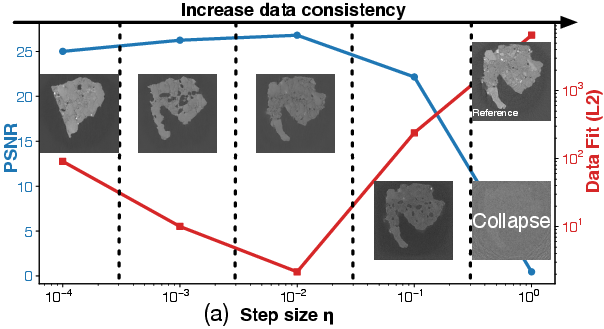

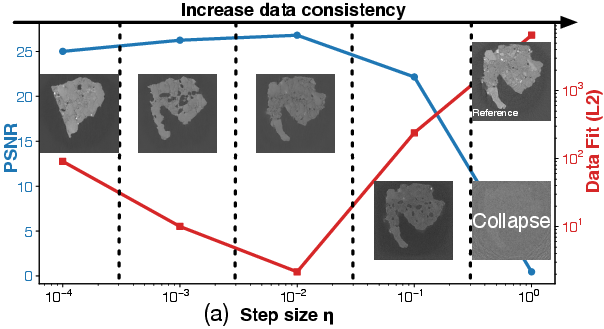

Data Consistency, Prior Contribution, and Null Space Analysis

The balance between prior enforcement and measurement consistency proves critical and is governed by explicit parameters (e.g., the data consistency gradient step size η). Excessive enforcement (large η) causes convergence to degenerate, noise-dominated solutions, while insufficient enforcement results in strong hallucination due to over-reliance on the prior.
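The role of η can be made concrete with a simplified sketch of a single data consistency step. It assumes a linear forward operator `A` (a random matrix standing in for a sparse-view projector) and a squared-error data fit, and it applies the gradient directly to the current estimate. Methods such as DPS differentiate through the denoiser as well, so this is an illustration of the step-size mechanism rather than any benchmarked algorithm.

```python
import numpy as np

def data_consistency_step(x, A, y, eta):
    """One gradient step on 0.5 * ||A x - y||^2 with step size eta."""
    residual = A @ x - y
    return x - eta * (A.T @ residual)

rng = np.random.default_rng(0)
A = rng.standard_normal((20, 50))        # hypothetical underdetermined operator
x_true = rng.standard_normal(50)
y = A @ x_true                           # noise-free measurements
x_prior = np.zeros(50)                   # stand-in for the prior's current estimate

# Step size 1/L, with L the squared spectral norm of A, guarantees descent.
eta = 1.0 / np.linalg.norm(A, 2) ** 2
x_new = data_consistency_step(x_prior, A, y, eta)

res_before = np.linalg.norm(A @ x_prior - y)
res_after = np.linalg.norm(A @ x_new - y)
```

In a diffusion sampler this step would be interleaved with denoising updates, and η sets how far each iterate is pulled toward the measurements relative to the prior.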

Figure 3: Variation in PSNR and data-fit as a function of the consistency gradient step size η, revealing stability boundaries for prior-vs-data control.

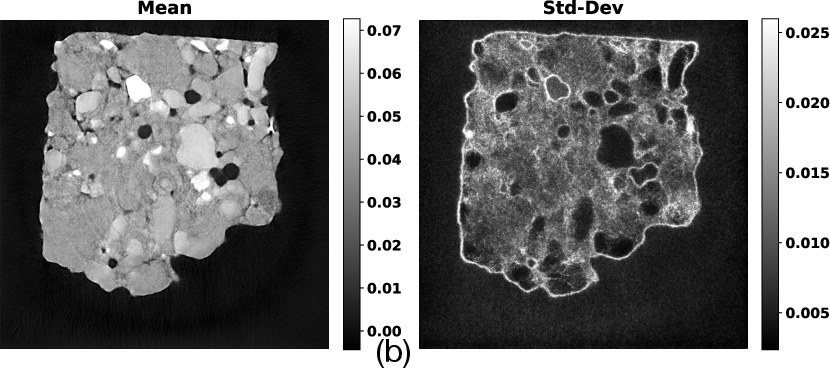

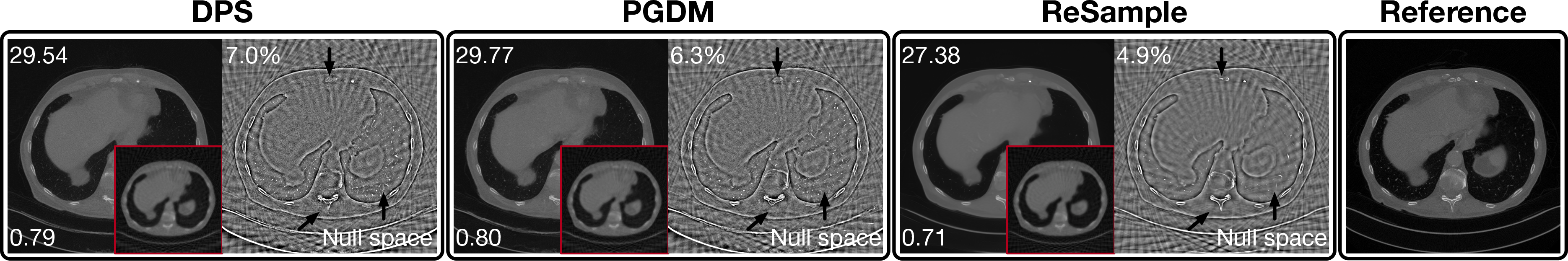

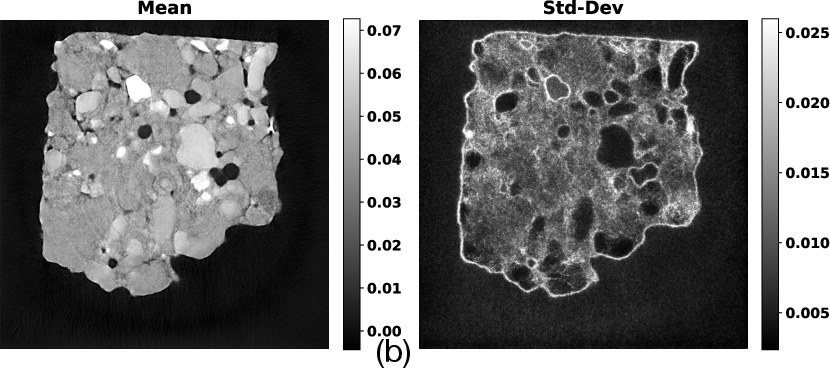

A range/null-space decomposition analysis clarifies that soft data consistency (gradient-based, e.g., DPS) leaves a substantial prior-driven null-space component, whereas explicit optimization steps (e.g., ReSample) tightly constrain reconstructions, reducing the prior's influence but introducing susceptibility to noise overfitting.
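For a linear operator, such a decomposition can be sketched with the pseudoinverse: the range component is the part of the image determined by the measurements, and the null-space component is invisible to them and therefore supplied entirely by the prior. The sketch assumes a small dense matrix `A` so that `np.linalg.pinv` is practical; a real CT projector would need an iterative solver instead.

```python
import numpy as np

def range_null_split(x, A):
    """Split x into A^+ A x (seen by A) and (I - A^+ A) x (invisible to A)."""
    x_range = np.linalg.pinv(A) @ (A @ x)
    x_null = x - x_range
    return x_range, x_null

rng = np.random.default_rng(0)
A = rng.standard_normal((20, 50))        # hypothetical underdetermined operator
x = rng.standard_normal(50)              # stand-in for a reconstruction

x_range, x_null = range_null_split(x, A)
leak = np.linalg.norm(A @ x_null)        # null-space part produces no measurements
```

Comparing `x_null` between a reconstruction and the ground truth indicates how much of the output was invented by the prior rather than supported by data.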

Figure 4: Visualization of reconstructions decomposed into range and null space components for each data consistency strategy, highlighting prior-induced hallucination.

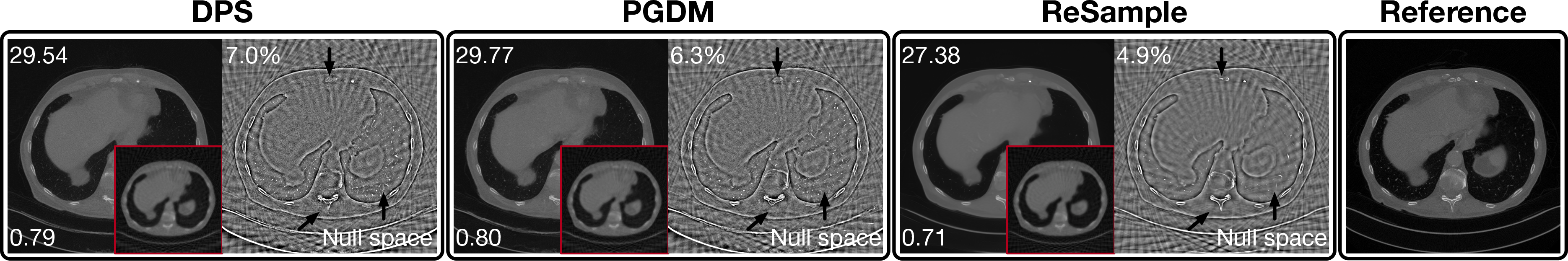

Latent vs. Pixel Diffusion Behavior

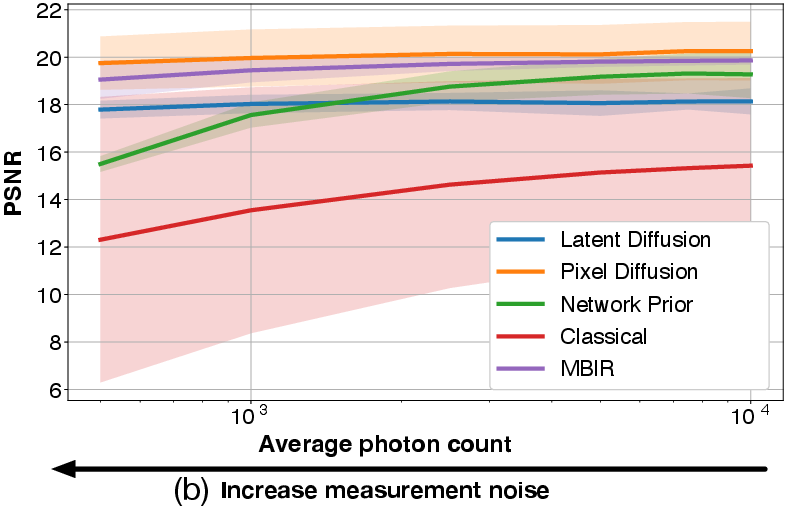

Enforcing data consistency in latent diffusion pipelines is especially challenging. Gradient-based approaches (e.g., PSLD) tend to introduce artifacts and structural discontinuities, particularly in noise-free regimes. Explicit optimization corrections (e.g., ReSample) can eliminate these artifacts in clean settings, but can become harmful under noisy or aliased measurement regimes due to overfitting.
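The overfitting mechanism can be seen in a pixel-space linear toy problem, which is not ReSample itself: a hard consistency correction that matches the measurements exactly also reproduces whatever noise they contain. The operator `A`, the noise level, and the function name `hard_consistency` are illustrative assumptions.

```python
import numpy as np

def hard_consistency(x0, A, y):
    """Smallest change to x0 that makes A x equal y (A with full row rank)."""
    return x0 + np.linalg.pinv(A) @ (y - A @ x0)

rng = np.random.default_rng(0)
A = rng.standard_normal((20, 50))        # hypothetical underdetermined operator
x_true = rng.standard_normal(50)
y_clean = A @ x_true
noise = 0.5 * rng.standard_normal(20)    # assumed additive Gaussian noise
y_noisy = y_clean + noise

x_hard = hard_consistency(np.zeros(50), A, y_noisy)

fit_to_measured = np.linalg.norm(A @ x_hard - y_noisy)   # essentially zero
fit_to_clean = np.linalg.norm(A @ x_hard - y_clean)      # equals the noise norm
```

The corrected estimate fits the noisy data perfectly while its distance to the clean measurements equals the full noise norm, which is the behavior that makes exact enforcement harmful when measurements are noisy.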

Figure 5: Latent diffusion reconstructions with and without explicit data consistency optimization, demonstrating artifact correction and noise amplification.

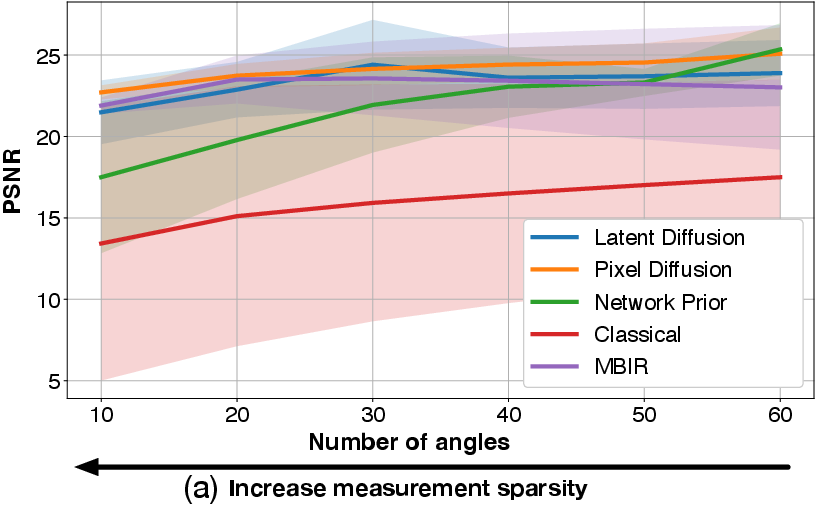

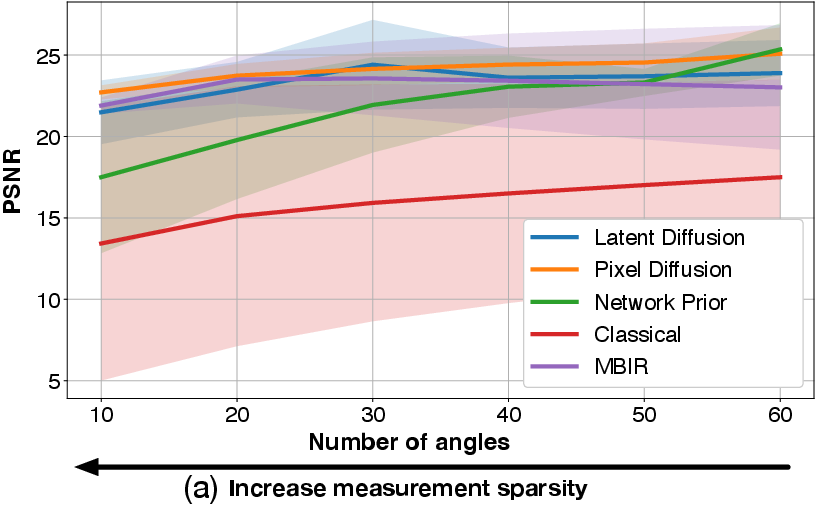

Sensitivity to Measurement Sparsity, Noise, and Computational Constraints

Pixel-space diffusion models remain robust under high noise and extreme measurement sparsity, where they notably outperform classical iterative methods. However, their advantage diminishes as the data approach ideal conditions. Latent models, while more efficient, face optimization and expressiveness bottlenecks and require careful training and tuning.

Figure 6: Method category comparison (PSNR) with respect to projection sparsity and noise, highlighting the relative strengths of pixel-, latent-, end-to-end, and unsupervised priors.

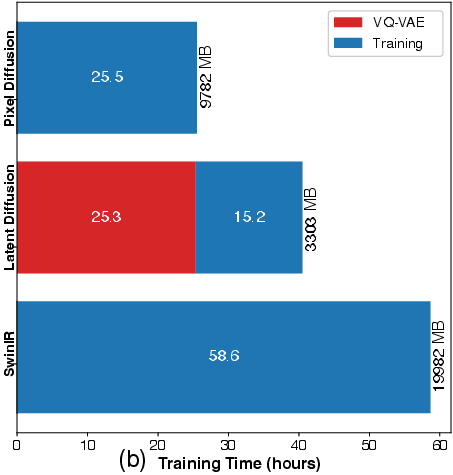

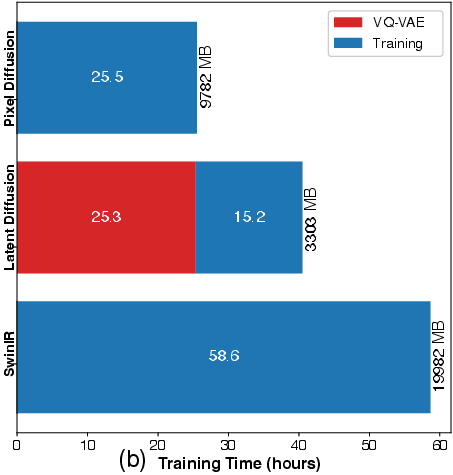

The computational profile reveals significant resource demands, with pixel diffusion more inference-efficient than latent diffusion, though overall training time trends favor supervised baselines. These trade-offs imply important hardware and deployment considerations.

Figure 7: Reconstruction and training time, and corresponding GPU memory demand across representative methods.

Practical Deployment Considerations

Three deployment obstacles are emphasized: (1) small, non-standardized CT datasets relative to computer vision corpora; (2) system- and protocol-dependent value range misalignment compromising direct generalization; and (3) prohibitive computational requirements for 3D and complex system geometries, motivating the use of 2D slice-wise, parallel-beam data for benchmarking.

Theoretical and Practical Implications

DM4CT shows that diffusion models are effective, but not universally superior or robust to the nuances of real-world CT. The need for precise balancing between prior and measurement consistency is acute and remains an open challenge: too much prior induces hallucination; too much enforcement encourages noise overfitting. Robustness against data- and noise-model mismatch (e.g., Gaussian vs. Poisson, range misalignment) is also a limiting factor, especially for methods that hard-code a specific data likelihood. Furthermore, no examined diffusion model outperforms a specialized supervised regressor (SwinIR) across the board, and classical unsupervised approaches achieve similar or better results in artifact-free, high-quality regimes.

The null-space analysis provides diagnostic tools for quantifying, and potentially controlling, the hallucination capacity of generative priors, which is critical for clinical safety and interpretability.

The resources (public datasets, pretrained models, and open-source code) provided by DM4CT lower the barrier for rigorous cross-method comparison in diffusion-based CT and related inverse imaging applications, and facilitate reproducibility for future research.

Future Directions

DM4CT highlights several important research avenues:

- Enhancing prior-data consistency integration, possibly via adaptive or learned balancing schemes optimized for target domains.

- Exploring alternative generative priors (e.g., flow-based models, INR-fusion, or plug-and-play strategies) for greater expressiveness and generalizability.

- Addressing practical limitations through improved calibration-insensitive architectures, standardized intensity normalization, and geometric invariance.

- Systematic evaluation on clinically meaningful downstream tasks (e.g., anatomical segmentation, detection, and outcome prediction) with domain-specific metrics.

- Extending benchmarks to cover cross-institutional, protocol-diverse, and multi-modality datasets to rigorously test generalization, especially in potential translation to clinical deployment.

Conclusion

DM4CT establishes a rigorous, open-source foundation for evaluating diffusion models in CT reconstruction, exposes current strengths and limitations, and sets a high bar for future research to meet both the theoretical and practical demands of real-world inverse imaging. The nuanced interplay between learned priors, data fidelity, and computational constraints uncovered here provides the necessary context for developing the next generation of robust, interpretable, and deployable generative approaches for tomographic reconstruction.

(2602.18589)