Rethinking the Trust Region in LLM Reinforcement Learning

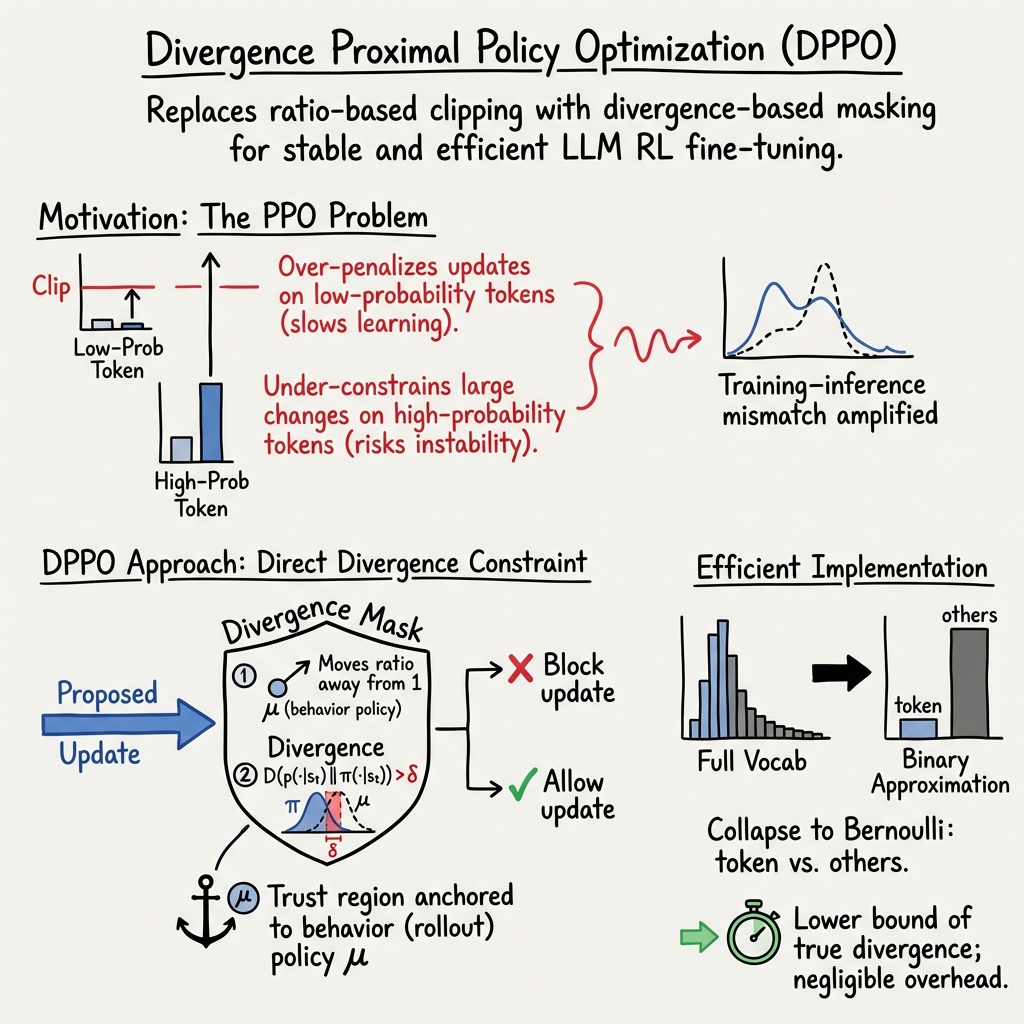

Abstract: Reinforcement learning (RL) has become a cornerstone for fine-tuning LLMs, with Proximal Policy Optimization (PPO) serving as the de facto standard algorithm. Despite its ubiquity, we argue that the core ratio clipping mechanism in PPO is structurally ill-suited for the large vocabularies inherent to LLMs. PPO constrains policy updates based on the probability ratio of sampled tokens, which serves as a noisy single-sample Monte Carlo estimate of the true policy divergence. This creates a sub-optimal learning dynamic: updates to low-probability tokens are aggressively over-penalized, while potentially catastrophic shifts in high-probability tokens are under-constrained, leading to training inefficiency and instability. To address this, we propose Divergence Proximal Policy Optimization (DPPO), which substitutes heuristic clipping with a more principled constraint based on a direct estimate of policy divergence (e.g., Total Variation or KL). To avoid a huge memory footprint, we introduce efficient Binary and Top-K approximations that capture the essential divergence with negligible overhead. Extensive empirical evaluations demonstrate that DPPO achieves superior training stability and efficiency compared to existing methods, offering a more robust foundation for RL-based LLM fine-tuning.

Explain it Like I'm 14

What is this paper about?

This paper looks at a popular way to train LLMs using reinforcement learning (RL). The standard method, called PPO (Proximal Policy Optimization), tries to keep training “safe” by limiting how much the model’s behavior can change at each step. The authors argue that PPO’s safety rule doesn’t work well for LLMs because these models choose from tens of thousands of possible next tokens (tiny pieces of text). They propose a new method, DPPO (Divergence Proximal Policy Optimization), that uses a better measure of “how much the model changed,” leading to faster and more stable training.

What questions did the researchers ask?

- Is the usual PPO “clipping” rule actually a good safety rule for LLMs?

- Can we design a safer, more principled rule based on how different the whole token distribution is (not just one token)?

- How can we make that safer rule efficient enough to run on big models?

- What exactly causes training runs to become unstable or “collapse”?

- Where should we “anchor” the safety rule—relative to the model that generated the data or a recomputed model?

- Does the new method work better across different models and tasks?

How did they study it?

Think of training as teaching the model to “stay close” to what it already does while nudging it toward better answers—this “stay close” zone is called the trust region (like a speed limit for learning).

- How PPO works today:

- PPO checks the ratio of the new probability vs. the old probability for the specific token the model just produced. If that ratio is too big or too small, PPO clips (limits) the update.

- Problem: This is like judging a whole movie by one frame. It uses only one sampled token and ignores the rest of the distribution. With huge vocabularies, that single ratio can be noisy and misleading.

- What DPPO changes:

- DPPO measures how different the entire token distribution is between the old and new model using standard “difference” measures called divergences (like Total Variation or KL divergence). These tell you how much the whole probability distribution changed.

- DPPO uses a mask (a rule to allow or block an update) that only blocks updates when:

- 1) the update moves in the wrong direction (hurts performance), and

- 2) the whole distribution has changed too much (beyond a threshold).

- This keeps updates inside a well-founded trust region, based on theory rather than a single token’s ratio.

- Making it efficient:

- Binary approximation: Compare just two buckets—“the sampled token” vs. “all other tokens.” This is very cheap but still captures big changes where it matters.

- Top-K approximation: Track only the K most likely tokens plus “other.” This focuses on the most important parts of the distribution with small extra cost.

- Experiments:

- The team tested on math reasoning datasets and benchmarks (like AIME24/AIME25) with several LLMs (including Qwen variants, both dense and mixture-of-experts).

- They compared DPPO to common baselines like GRPO and CISPO and checked learning speed, stability (no training collapse), and final scores.
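
The Binary and Top-K approximations and the mask described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the paper's implementation: all function names and the example distributions are hypothetical, the Top-K set is assumed to be chosen from the rollout distribution, and "wrong direction" is read by analogy with PPO clipping as "the gradient would push the sampled token's probability even further from the rollout policy", which the summary above does not spell out.

```python
from typing import List, Sequence


def total_variation(p: Sequence[float], q: Sequence[float]) -> float:
    """Exact TV distance: half the L1 difference between two distributions."""
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


def binary_tv(p: Sequence[float], q: Sequence[float], sampled: int) -> float:
    """TV after collapsing to two buckets: the sampled token vs. all others.

    For two Bernoulli distributions this reduces to |p[sampled] - q[sampled]|.
    """
    return abs(p[sampled] - q[sampled])


def top_k_tv(p: Sequence[float], q: Sequence[float], k: int) -> float:
    """TV over the k most likely tokens under p, plus one 'other' bucket.

    Assumption: the top-k set is picked from p (the rollout distribution).
    """
    top: List[int] = sorted(range(len(p)), key=lambda i: p[i], reverse=True)[:k]
    p_buckets = [p[i] for i in top] + [max(0.0, 1.0 - sum(p[i] for i in top))]
    q_buckets = [q[i] for i in top] + [max(0.0, 1.0 - sum(q[i] for i in top))]
    return total_variation(p_buckets, q_buckets)


def keep_update(advantage: float, old_prob: float, new_prob: float,
                divergence: float, threshold: float) -> bool:
    """Return True if the token's gradient is kept, False if it is blocked.

    Assumed rule: block only when the update keeps moving away from the
    rollout policy AND the estimated divergence already exceeds the threshold.
    """
    moving_away = (advantage > 0 and new_prob > old_prob) or (
        advantage < 0 and new_prob < old_prob
    )
    return not (moving_away and divergence > threshold)


# Hypothetical 4-token vocabulary.
rollout = [0.70, 0.20, 0.05, 0.05]  # policy that generated the data
current = [0.50, 0.40, 0.05, 0.05]  # policy being updated
sampled = 0

d_bin = binary_tv(rollout, current, sampled)
d_top2 = top_k_tv(rollout, current, k=2)
print(f"binary TV = {d_bin:.3f}, top-2 TV = {d_top2:.3f}")
print("negative-advantage update kept:",
      keep_update(-1.0, rollout[sampled], current[sampled], d_bin, threshold=0.1))
```

Both approximations need only a handful of probabilities per position rather than the full vocabulary, which is why the extra cost stays small.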

What did they find, and why is it important?

- The core flaw in PPO’s clipping:

- Rare (low-probability) tokens: Their probability ratios swing wildly even when the actual overall change is tiny. PPO over-penalizes these, slowing learning. But rare tokens often carry important exploratory “thinking” signals in reasoning tasks.

- Common (high-probability) tokens: Small ratio changes can hide big shifts in total probability mass. PPO under-penalizes these, which can cause large, destabilizing changes.

- Measurements confirm this: the ratio is volatile for rare tokens; divergence (like Total Variation) is much more stable and meaningful.

- DPPO is more stable and efficient:

- DPPO learns faster and more reliably than PPO-style methods in many setups, often reaching higher scores and avoiding collapses.

- It works well even without extra stabilizing tricks (like router replay in mixture-of-experts models).

- A trust region is necessary—even with tiny learning rates:

- Methods without a trust region (or with a bad one) accumulate “training vs. inference” mismatch and eventually crash.

- The trust region must be anchored to the rollout (data-generating) policy:

- If you anchor it to a recomputed policy instead, instability is likely and performance collapses. Anchoring to the rollout policy is both more stable and cheaper to train.

- A small number of “bad updates” cause most collapses:

- Specifically, these are large negative updates (applied when a sample gets a negative reward) that push the model too far outside the trust region. Blocking just these few bad updates stabilizes training.

- Truncated Importance Sampling (TIS) can hurt stability:

- Although TIS is meant to reduce variance, it tends to down-weight gradients for rare tokens (the very tokens that matter for exploration), introducing harmful bias.

- Relaxing constraints for low-probability tokens helps:

- Allowing more freedom on rare tokens speeds up learning and can improve efficiency. The direction of relaxation matters; relaxing both “sides” (increases and decreases) works best without causing entropy collapse.
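
The asymmetry between rare and common tokens is easy to check with arithmetic. The sketch below uses made-up probabilities and assumes a symmetric clip range of 0.2 (a common PPO default, not a value taken from the paper); it compares the probability ratio with the two-bucket Total Variation for one rare and one common token.

```python
def ratio(old_prob: float, new_prob: float) -> float:
    """PPO-style probability ratio for the sampled token."""
    return new_prob / old_prob


def binary_tv(old_prob: float, new_prob: float) -> float:
    """Two-bucket TV: absolute change in the sampled token's probability."""
    return abs(new_prob - old_prob)


def outside_clip_range(r: float, eps: float = 0.2) -> bool:
    """True if the ratio falls outside [1 - eps, 1 + eps] (eps is an assumption)."""
    return r < 1.0 - eps or r > 1.0 + eps


# Hypothetical (old, new) probabilities for the sampled token.
cases = {
    "rare token": (0.001, 0.003),   # tiny absolute change, huge ratio
    "common token": (0.90, 0.75),   # large absolute change, modest ratio
}

for name, (old, new) in cases.items():
    r = ratio(old, new)
    print(f"{name}: ratio={r:.3f}, "
          f"outside clip range={outside_clip_range(r)}, "
          f"binary TV={binary_tv(old, new):.3f}")
```

In this toy case the rare token's ratio is about 3 although only 0.002 of probability mass moved, while the common token's ratio of about 0.83 stays inside the clip range even though 0.15 of the mass moved.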

What does this mean going forward?

- Better training rules for LLMs: DPPO shows that using a real measure of distribution change (divergence) instead of a single-token ratio leads to faster and safer training.

- Practical guidelines:

- Keep a trust region, even with small learning rates.

- Anchor the trust region to the rollout policy (the one that generated your data).

- Watch out for a tiny fraction of large, negative updates—blocking them can prevent collapses.

- Be cautious with techniques like TIS that may unintentionally silence useful signals from rare tokens.

- Broader impact: These improvements can make RL fine-tuning of LLMs more stable, faster, and more compute-efficient across different models and tasks, helping build models that learn better from feedback without crashing.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a consolidated list of unresolved issues and open questions that future researchers could address to strengthen and generalize the paper’s contributions.

- Formal convergence guarantees for DPPO with approximate divergence masks are missing. Provide proofs that using Binary or Top-K divergence (which are lower bounds of the true divergence) with a given threshold still implies monotonic improvement or bounded degradation under the LLM finite-horizon setting.

- Threshold calibration remains heuristic. Develop principled methods to set and adapt the divergence threshold ε (and asymmetric clip parameters), explicitly accounting for sequence length T, reward scale max|R(y)|, and model size, possibly via an online controller driven by mismatch metrics.

- Safety margins for underestimation risk. Because Binary and Top-K divergences lower-bound the true divergence, quantify how often the mask permits unsafe updates (true divergence > ε) and propose correction factors or conservative scaling to ensure trust-region compliance.

- Sensitivity to Top-K design. Systematically study how K and the choice of anchor distribution (behavior vs recomputed) affect divergence estimation accuracy, mask decisions, and performance; explore dynamic K or adaptive token sets that track shifting heads/tails.

- Sequence-level budget allocation. The derived bounds depend on horizon T, but the algorithm applies per-token masks. Design and evaluate length-adaptive per-step divergence budgets that ensure the cumulative divergence satisfies the sequence-level trust region.

- Advantage estimation and credit assignment. The approach largely uses sequence-level advantages with token-level masks. Investigate token-level advantage estimation, variance-reduction baselines, and their impact on mask decisions, training stability, and efficiency.

- Theoretical explanation of TIS instability. Provide a formal analysis clarifying why truncated importance sampling degrades stability in this regime, and propose variance reduction techniques compatible with DPPO (e.g., control variates, baselines, advantage normalization) without biasing low-probability tokens.

- Anchoring the trust region under off-policy mixtures. Many RLHF pipelines use replay buffers with mixed behavior policies. Formalize and evaluate how to anchor the trust region when data originate from multiple historical policies (e.g., per-sample μ, mixture anchors, or importance-weighted anchors).

- Mask differentiability and optimization dynamics. The mask introduces non-differentiable gating. Study smooth relaxations (e.g., sigmoid masks, soft constraints, barrier terms), their optimization behavior, and whether they improve convergence or robustness.

- Robustness to training–inference engine mismatch across implementations. Expand the analysis beyond a single engine to multiple kernels, precisions (FP16/FP8), KV-cache implementations (e.g., PagedAttention), and sampling settings, and identify engine-specific mitigation strategies.

- Computational overhead characterization. Provide detailed throughput and latency benchmarks (forward/backward time, memory footprint) for Binary vs Top-K divergence in realistic large-batch, distributed settings; quantify the cost-benefit relative to PPO/GRPO.

- Exploration–exploitation trade-offs. Measure how divergence masking affects exploration (e.g., diversity, entropy trajectories, rare-token visitation) and whether relaxing constraints on low-probability tokens consistently improves sample efficiency without destabilizing high-probability tokens.

- Metric choice and calibration (TV vs KL vs others). Offer empirical and theoretical guidance on when to prefer TV or KL (or symmetric KL/JS/Rényi/Wasserstein), how to set ε across metrics, and how metric choice interacts with model scale and task characteristics.

- Sequence length and long-context scaling. The bound penalizes with T, but the paper does not assess very long contexts. Evaluate DPPO on long-context tasks (e.g., 128k tokens), adapt ε schedules, and test memory/performance trade-offs.

- Generalization beyond math reasoning. Validate DPPO on diverse RLHF tasks (helpfulness, harmlessness, safety, summarization, coding), measure alignment and safety metrics, and test for reward hacking or undesirable behaviors under divergence relaxation.

- Test set leakage and overfitting risk. The online evaluation heavily uses AIME24/25; design robust validation strategies (e.g., held-out unseen benchmarks, cross-domain tests) to ensure improvements are not due to overfitting to evaluation sets.

- Safety and negative feedback handling. The analysis identifies a small subset of destabilizing negative updates; investigate targeted strategies for negative samples (e.g., cap divergence on penalized tokens, specialized masks) and assess safety-side effects.

- MoE routing dynamics. While R3 is discussed, analyze how DPPO affects MoE router behavior (expert load balance, expert specialization), and whether DPPO obviates R3 across different MoE configurations; provide formal or empirical guidance.

- Interaction with LoRA and parameter-efficient tuning. Expand ablations across LoRA ranks, adapter placements, and hybrid full/PEFT setups to understand how trust-region thresholds should be adjusted for different adaptation regimes.

- Sampling strategy dependence. Study how temperature, top-p/top-k sampling, and beam search interact with divergence estimation and mask behavior; propose sampling-aware threshold schedules.

- Failure modes of Binary approximation. Identify conditions where Binary divergence misses harmful shifts (e.g., mass redistributed among non-sampled head tokens), quantify their frequency, and propose detection or fallback to richer approximations.

- Monitoring and mitigation of “bad updates.” Generalize the ad-hoc probability-difference threshold (e.g., 0.5) into a principled online detector using mismatch metrics, per-step divergence estimates, and risk scoring; provide intervention policies.

- Hyperparameter robustness. Report comprehensive sensitivity analyses for ε, K, ε_low/ε_high, learning rate, batch size, and reward scaling across models/tasks; develop default recipes that transfer reliably.

- Sample efficiency and gradient quality. Quantify improvements in sample complexity (reward per collected token), gradient signal retention for low-probability tokens, and compare against PPO/GRPO across fixed compute budgets.

- Distributed training considerations. Assess DPPO under real-world pipelines (micro-batching, gradient accumulation, ZeRO/offloading, parameter servers) and identify any interaction effects on trust-region enforcement and mismatch.

- Extending theory to mixed objectives. Many practical RLHF pipelines use decoupled or batched objectives. Provide theory/algorithms that preserve stability with decoupled evaluations or recomputed anchors, or formally show why they fail and how to fix them.

- Calibration to reward scales and normalization. The bound depends on max|R(y)|. Study the impact of reward normalization, clipping, and shaping on mask decisions and stability; provide standardized reward preprocessing guidelines.

- Broader divergence controllers. Explore adaptive controllers that adjust ε based on observed D_TV/D_KL trajectories, advantage distributions, or reward volatility, aiming to maintain a target mismatch level automatically.

- Reproducibility details. Include seeds, optimizer configs, numerical tolerances, engine versions, and ablation logs to enable faithful reproduction and cross-lab validation of DPPO’s stability and efficiency claims.
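
Several of the gaps above (underestimation risk, failure modes of the Binary approximation) come down to one measurable quantity: how often the cheap estimate stays under the threshold while the exact divergence does not. The sketch below is one hypothetical way to measure that on logged distributions; the function names, the toy batch, and the threshold value are illustrative assumptions, not part of the paper.

```python
from typing import List, Sequence, Tuple

# One logged token: (rollout distribution, current distribution, sampled index).
Sample = Tuple[Sequence[float], Sequence[float], int]


def full_tv(p: Sequence[float], q: Sequence[float]) -> float:
    """Exact TV distance over the whole vocabulary."""
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


def binary_tv(p: Sequence[float], q: Sequence[float], sampled: int) -> float:
    """Two-bucket TV: sampled token vs. everything else."""
    return abs(p[sampled] - q[sampled])


def missed_fraction(batch: List[Sample], threshold: float) -> float:
    """Fraction of tokens the binary estimate lets through (<= threshold)
    although the exact TV is above the threshold."""
    missed = sum(
        1 for p, q, s in batch
        if binary_tv(p, q, s) <= threshold and full_tv(p, q) > threshold
    )
    return missed / len(batch)


# Toy batch over a 3-token vocabulary (made-up numbers).
batch: List[Sample] = [
    # Mass moves between two NON-sampled tokens: binary TV is 0, exact TV is 0.3.
    ([0.70, 0.20, 0.10], [0.40, 0.50, 0.10], 2),
    # Same shift, but the sampled token is the one that moved: both see it.
    ([0.70, 0.20, 0.10], [0.40, 0.50, 0.10], 0),
    # Tiny shift: both estimates stay small.
    ([0.70, 0.20, 0.10], [0.69, 0.21, 0.10], 1),
]

print(f"missed fraction at threshold 0.1: {missed_fraction(batch, 0.1):.3f}")
```

Because merging buckets can only shrink Total Variation, the binary estimate never exceeds the exact value, so the only possible error is the under-count measured here.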

Glossary

- Advantage function: The difference between the action-value and state-value under a policy, indicating how much better an action is than average at a state. "advantage function A^π(s, a) = Q^π(s, a) - V^π(s)."

- Behavior policy: The policy used to generate data (rollouts) from which learning updates are computed. "behavior policy (for rollout)"

- Binary approximation: A divergence approximation that collapses a categorical distribution into a Bernoulli over “sampled token vs. others” to cheaply estimate shift. "Binary Approximation"

- CISPO: A policy-gradient method that applies truncated importance sampling; used as a baseline RL algorithm for LLMs. "CISPO"

- Clip-Higher: A heuristic that relaxes the upper clipping bound in PPO-like methods to reduce over-clipping of large ratios. "Clip-Higher suggests"

- Divergence Proximal Policy Optimization (DPPO): The proposed algorithm that replaces ratio clipping with a divergence-based mask to enforce a principled trust region. "Divergence Proximal Policy Optimization (DPPO)"

- Entropy collapse: A failure mode where the policy’s entropy drops sharply, leading to unstable training or mode collapse. "entropy collapse (Cui et al., 2025)"

- Finite-horizon, undiscounted setting: An episodic RL setup with fixed sequence length and no discounting, matching LLM generation. "finite-horizon, undiscounted setting of LLM generation"

- GRPO: A PPO-like algorithm used for LLM RL (e.g., in DeepSeek) that employs group-based advantages and clipping. "GRPO"

- Importance sampling: A technique that reweights samples collected under one policy to estimate objectives for another, correcting for the mismatch between the two. "importance sampling"

- KL divergence: A measure of difference between probability distributions often used to constrain policy updates. "KL divergence"

- LoRA (Low-Rank Adaptation): A parameter-efficient fine-tuning method that adapts large models via low-rank updates. "with LoRA (Hu et al., 2022)"

- Markov Decision Process (MDP): A formal framework for sequential decision making defined by states, actions, transitions, and rewards. "Markov Decision Process (MDP)"

- Minorize-Maximization (MM) algorithm: An optimization scheme that iteratively maximizes a surrogate lower bound touching the target objective. "Minorize-Maximization (MM) algorithm"

- Mixture-of-Experts (MoE): A model architecture that routes inputs to specialized expert modules to scale capacity. "MoE Base"

- Monte Carlo estimate: An estimate computed from random samples; here, a single sampled token’s ratio approximates divergence. "single-sample Monte Carlo estimate"

- Pinsker's inequality: A bound relating total variation and KL divergence, often used to justify KL-based trust regions. "Pinsker's inequality:"

- Policy improvement bound: A lower bound on return difference that trades off a surrogate advantage term with a divergence penalty. "Policy Improvement Bound"

- Proximal Policy Optimization (PPO): A first-order RL algorithm that stabilizes updates via ratio clipping instead of explicit constraints. "Proximal Policy Optimization (PPO)"

- Ratio clipping: The PPO heuristic that limits the probability ratio between new and old policy to a fixed range to avoid large updates. "ratio clipping"

- Rollout router replay (R3): A MoE-specific stabilization technique that reuses or aligns router decisions between training and inference. "rollout router replay (R3)"

- State-value function: The expected return starting from a state under a given policy. "state-value function V^π(s)"

- Surrogate objective: An analytically tractable objective that approximates the true performance improvement for policy optimization. "surrogate objective"

- Top-K approximation: A divergence approximation computed over the top-K tokens (plus an “other” bucket) to track head mass shifts. "Top-K Approximation"

- Total Variation (TV) divergence: A distributional distance (half the L1 difference) used to bound and control policy shifts. "Total Variation (TV) divergence"

- Training-inference mismatch: Numerical discrepancies between training and inference engines that can destabilize RL fine-tuning. "training-inference mismatch"

- Truncated Importance Sampling (TIS): A technique that caps importance weights to reduce variance, potentially introducing bias. "Truncated Importance Sampling (TIS)"

- Trust Region Policy Optimization (TRPO): A second-order algorithm that maximizes a surrogate objective subject to a KL (or TV) constraint. "Trust Region Policy Optimization (TRPO)"

- Trust region: A constraint limiting how far the updated policy may move from the behavior policy to ensure stable improvement. "trust region"
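
For reference, the standard textbook forms of several glossary entries are collected below. The notation is an assumption chosen for this summary (π_θ for the current policy, μ for the rollout policy, y_t for the sampled token, s_t for its context, ε for the clip range) and may differ from the paper's own symbols.

```latex
\begin{align*}
A^{\pi}(s, a) &= Q^{\pi}(s, a) - V^{\pi}(s)
  && \text{advantage function} \\
r_t(\theta) &= \frac{\pi_\theta(y_t \mid s_t)}{\mu(y_t \mid s_t)}
  && \text{probability ratio of the sampled token} \\
L^{\mathrm{clip}}(\theta) &= \mathbb{E}_t\!\left[\min\!\left(r_t(\theta)\,A_t,\
  \operatorname{clip}\!\left(r_t(\theta),\, 1-\epsilon,\, 1+\epsilon\right) A_t\right)\right]
  && \text{PPO ratio clipping} \\
D_{\mathrm{TV}}(p, q) &= \tfrac{1}{2} \sum_{a} \lvert p(a) - q(a) \rvert
  && \text{Total Variation} \\
D_{\mathrm{KL}}(p \,\|\, q) &= \sum_{a} p(a) \log \frac{p(a)}{q(a)}
  && \text{KL divergence} \\
D_{\mathrm{TV}}(p, q) &\le \sqrt{\tfrac{1}{2}\, D_{\mathrm{KL}}(p \,\|\, q)}
  && \text{Pinsker's inequality}
\end{align*}
```

Pinsker's inequality is what lets a KL constraint stand in for a TV constraint: keeping the KL divergence small forces the Total Variation to be small as well.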

Practical Applications

Immediate Applications

The following applications can be deployed now using the paper’s methods and guidance, with minimal additional research or engineering beyond integration and tuning.

- Stable RLHF/RLAIF training for LLMs in production (Software/AI)

- What: Replace PPO/GRPO clipping with DPPO’s divergence-based mask (Binary or Top-K TV/KL), anchor the trust region to the rollout policy, and monitor training–inference mismatch.

- Why: Immediate gains in training stability and efficiency; fewer collapses; faster convergence than GRPO; reduced sensitivity to low-probability token clipping.

- Tools/Workflows: Integrate DPPO into RLHF pipelines; use binary TV/KL approximation for scalability; set a divergence threshold δ; audit “bad updates” on negative samples; track mean |π−μ|.

- Assumptions/Dependencies: Availability of a reward model and on-policy rollouts; a deep learning framework such as PyTorch; proper advantage estimation; consistent inference/training engines.

- Cost-efficient MoE reinforcement learning without rollout router replay (Software/Cloud)

- What: Use DPPO to stabilize MoE training, reducing or eliminating the need for R3 (router replay) and decoupled objectives.

- Why: Lower training cost and complexity (≈25% savings reported from avoiding recompute); better stability than R3-enhanced baselines in experiments.

- Tools/Workflows: Apply DPPO masks per token at each step; keep the trust region anchored to the rollout policy μ; monitor router behavior for drift.

- Assumptions/Dependencies: MoE infrastructure (sharding, routing) in place; monitoring of router distributions; DPPO threshold tuning per model.

- Faster math and reasoning model fine-tuning for AI tutoring (Education/EdTech)

- What: Adopt DPPO for math reasoning datasets (e.g., AIME, DAPO-Math) to train tutors with higher efficiency and stability.

- Why: Demonstrated faster reward optimization and better online scores; mitigates entropy collapse; handles low-probability “reasoning” tokens effectively.

- Tools/Workflows: Use Top-K or Binary divergence masks; relax constraints symmetrically for low-probability tokens (“Relax-both”); entropy-aware monitoring.

- Assumptions/Dependencies: High-quality domain datasets; reliable reward functions (e.g., correctness checks); calibration of δ to maintain exploration without collapse.

- Safer negative-feedback handling in alignment (Healthcare, Finance, Safety-critical domains)

- What: Block only large-divergence updates on negatively rewarded samples to avoid destabilizing knowledge while still learning from penalties.

- Why: Paper shows a small fraction of large negative updates cause collapse; DPPO prevents catastrophic shifts on high-probability tokens.

- Tools/Workflows: Minimal mask on negative samples (e.g., block when Δprobability > δ); domain-specific reward models (guideline adherence, compliance).

- Assumptions/Dependencies: Accurate negative signal labeling; trustworthy reward models; careful δ selection to avoid over-blocking.

- LoRA-compatible stable RLHF for smaller budgets (Startups/SMBs)

- What: Apply DPPO with LoRA adapters to fine-tune LLMs economically while preserving stability.

- Why: DPPO demonstrated effectiveness with LoRA in experiments; reduces instability-induced restarts and wasted compute.

- Tools/Workflows: DPPO-LoRA training plugin; binary divergence masks to keep memory overhead negligible.

- Assumptions/Dependencies: LoRA integration; reward model; monitoring of mismatch and entropy to avoid collapse.

- MLOps monitoring for training–inference mismatch (MLOps/Platform)

- What: Introduce dashboards tracking |π−μ|, divergence per token, and “bad update” fractions; alert on rising mismatch.

- Why: Mismatch accumulation predicts collapse; DPPO maintains low mismatch; visibility helps early interventions.

- Tools/Workflows: Logging hooks for rollout and current policy distributions; periodic mismatch summaries; automated threshold tuning.

- Assumptions/Dependencies: Unified logging across training/inference stacks; reproducible inference kernels; basic observability stack.

- Agentic LLM skill learning with stable updates (Software/Robotics/Agents)

- What: Use DPPO for agent training (e.g., tool use, planning environments like GEM) to improve stability under sparse rewards.

- Why: Divergence masks reduce catastrophic policy shifts; better handling of high-entropy tokens driving exploration.

- Tools/Workflows: Masks anchored to on-policy rollouts; entropy-aware curriculum; binary divergence to limit memory footprint.

- Assumptions/Dependencies: Environment rewards available; stable tool APIs; alignment objectives defined.

- Token-level exploration analysis and control (Software/Research)

- What: Instrument training to identify frequently clipped/high-entropy tokens; relax constraints for low-probability tokens in both directions.

- Why: Low-probability/high-entropy tokens drive exploration; symmetric relaxation (“Relax-both”) yields efficient and stable learning.

- Tools/Workflows: Token entropy analyzer; per-token clipping statistics; adaptive relaxation when p(y_t|s_t) < α.

- Assumptions/Dependencies: Token logging; careful α, δ tuning; guardrails to prevent entropy collapse.

- Immediate framework adoption in open-source stacks (Academia/OSS)

- What: Integrate DPPO into RLHF frameworks (e.g., OAT, HybridFlow) and release “Stable-RL” trainers.

- Why: Low-overhead binary divergence makes DPPO practical; reproducible stability for research benchmarks.

- Tools/Workflows: Stable-RL GitHub (as referenced); unit tests for mismatch metrics; examples for PPO→DPPO migration.

- Assumptions/Dependencies: Open-source licensing; CI for benchmarks; community datasets and reward models.
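
Several of the workflows above rely on two logged quantities: the mean |π−μ| on sampled tokens and the fraction of "bad updates". A minimal sketch of how such a summary could be computed from per-token log-probabilities follows. The function name, the dictionary keys, and the input layout are hypothetical; the default threshold of 0.5 echoes the probability-difference example mentioned in the knowledge gaps and should be treated as a placeholder.

```python
import math
from typing import Dict, Sequence


def mismatch_summary(train_logprobs: Sequence[float],
                     rollout_logprobs: Sequence[float],
                     advantages: Sequence[float],
                     delta: float = 0.5) -> Dict[str, float]:
    """Summarize training-inference mismatch on the sampled tokens.

    train_logprobs:   log pi(y_t | s_t) from the training engine
    rollout_logprobs: log mu(y_t | s_t) recorded by the rollout engine
    advantages:       per-token advantages (negative = penalized sample)
    delta:            assumed threshold on the absolute probability difference
    """
    diffs = [abs(math.exp(t) - math.exp(r))
             for t, r in zip(train_logprobs, rollout_logprobs)]
    n = len(diffs)
    # Assumed definition of a "bad update": negative advantage combined with
    # a probability shift larger than delta.
    bad = sum(1 for d, a in zip(diffs, advantages) if a < 0 and d > delta)
    return {
        "mean_abs_prob_diff": sum(diffs) / n,
        "bad_update_fraction": bad / n,
    }


# Made-up example: three sampled tokens.
rollout_lp = [math.log(p) for p in (0.9, 0.5, 0.2)]
train_lp = [math.log(p) for p in (0.3, 0.5, 0.25)]
summary = mismatch_summary(train_lp, rollout_lp, advantages=[-1.0, 1.0, -1.0])
print(summary)
```

Logging these two numbers per training step is enough to plot a trend and alert when the mismatch starts to rise.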

Long-Term Applications

These applications will benefit from further research, scaling, standardization, or ecosystem development before broad deployment.

- Industry standardization of divergence-based trust regions for LLM training (Policy/Standards)

- What: Develop best-practice guidelines and benchmarks mandating divergence-anchored trust regions to reduce training collapses.

- Why: Evidence that ratio clipping is structurally mismatched for LLMs; divergence masks improve safety and reliability.

- Tools/Workflows: “Trust Region Auditor” certification; conformance tests (mismatch thresholds, bad-update rates).

- Assumptions/Dependencies: Multi-stakeholder consensus; standardized metrics and reporting; regulatory interest.

- Adaptive, auto-tuned trust region controllers (MLOps/AutoML)

- What: Controllers that automatically adjust δ, Top-K size, and mask directionality based on live mismatch/entropy signals.

- Why: Reduce manual tuning; maintain optimal balance between efficiency and stability across training phases.

- Tools/Workflows: Feedback loops; Bayesian or RL-based schedulers; anomaly detection on divergence trends.

- Assumptions/Dependencies: High-quality telemetry; safe auto-tuning policies; robust fallback behaviors.

- Safe on-device or online personalization with trust regions (Consumer/Daily life)

- What: Continual learning for personal assistants with divergence guards to prevent model drift or catastrophic forgetting on-device.

- Why: Users get tailored behavior without instability risks; divergence masks limit unsafe updates from noisy feedback.

- Tools/Workflows: Lightweight binary divergence masks; periodic consolidation; privacy-preserving reward estimation.

- Assumptions/Dependencies: Edge compute capability; privacy-safe data/rewards; incremental learning infrastructure.

- Cross-modal DPPO for multi-modal and embodied AI (Robotics/Multimodal AI)

- What: Extend divergence-based trust regions to images/audio/sensor streams and action spaces in embodied agents.

- Why: Stability benefits may translate to multi-modal distributions and robot policies under sparse rewards.

- Tools/Workflows: Modal-specific divergence approximations; joint trust regions across modalities; safety masks for physical actions.

- Assumptions/Dependencies: Well-defined multi-modal divergences; robust simulators; safety validation protocols.

- Hardware–software co-design to minimize training–inference mismatch (Semiconductors/Systems)

- What: Kernel/inference engine designs that reduce numerical discrepancies contributing to mismatch.

- Why: Paper highlights mismatch as a collapse driver; co-designed libraries could make DPPO even more robust.

- Tools/Workflows: Deterministic kernels; standardized precision strategies; hardware-level telemetry.

- Assumptions/Dependencies: Vendor collaboration; performance–determinism trade-offs; ecosystem adoption.

- Federated, privacy-preserving DPPO for sensitive domains (Healthcare/Finance)

- What: Stable federated RLHF with divergence masks to align models across institutions without sharing raw data.

- Why: Better safety under heterogeneous data; controlled negative feedback prevents destabilizing updates.

- Tools/Workflows: Secure aggregation; per-site trust region settings; mismatch monitoring across clients.

- Assumptions/Dependencies: Federated infrastructure; privacy compliance (HIPAA/GDPR); robust reward modeling.

- Safety certifications built on divergence audits (Policy/Regulation)

- What: Auditable pipelines requiring documented divergence bounds, bad-update rates, and mismatch control during fine-tuning.

- Why: Provides measurable safety guarantees; aligns with responsible AI mandates.

- Tools/Workflows: Compliance reports; third-party audits; continuous assurance pipelines.

- Assumptions/Dependencies: Regulatory frameworks; accredited auditors; standardized test suites.

- DPPO at trillion-scale model training (Big Tech/Frontier labs)

- What: Use divergence masks and Top-K approximations to stabilize training of ultra-large models and reduce catastrophic resets.

- Why: Instability costs escalate with scale; divergence-based trust regions promise fewer collapses and better utilization.

- Tools/Workflows: Hierarchical trust regions; distributed mismatch monitoring; router-aware masks in massive MoE.

- Assumptions/Dependencies: Scalable telemetry; efficient distributed divergence approximations; new scheduler designs.

- Entropy-aware optimizers combining exploration signals with divergence constraints (Research/Optimizers)

- What: Optimizers that explicitly leverage high-entropy token dynamics while enforcing divergence limits.

- Why: High-entropy minority tokens drive effective RL; formalizing this balance can improve reasoning model training.

- Tools/Workflows: Dual-objective schedules (entropy + divergence); adaptive relaxation for low-probability tokens.

- Assumptions/Dependencies: Further empirical validation; theoretical extensions; robust hyperparameter strategies.

- Domain-specific alignment for legal/medical assistants with constrained negative updates (Healthcare/Legal)

- What: Train assistants that robustly incorporate corrective feedback (e.g., guideline violations) without destabilizing core knowledge.

- Why: DPPO masks prevent harmful shifts on high-probability tokens; safer incorporation of penalties.

- Tools/Workflows: Domain reward models; curated negative examples; divergence-threshold curricula.

- Assumptions/Dependencies: Expert-labeled data; rigorous evaluation; liability-aware deployment protocols.
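
As a rough illustration of the "adaptive, auto-tuned trust region controller" idea above, the sketch below nudges the divergence threshold δ toward a target mismatch level. It is entirely hypothetical: the multiplicative update rule, the step size, and the clamping bounds are assumptions made for illustration, and nothing like it is evaluated in the paper.

```python
def adapt_threshold(delta: float,
                    observed_mismatch: float,
                    target_mismatch: float,
                    step: float = 0.1,
                    lower: float = 1e-3,
                    upper: float = 0.5) -> float:
    """Return an adjusted divergence threshold.

    Tighten the trust region (smaller delta) when the observed mismatch is
    above the target, loosen it otherwise, and clamp to [lower, upper].
    All parameter names and defaults are illustrative assumptions.
    """
    if observed_mismatch > target_mismatch:
        delta *= 1.0 - step
    else:
        delta *= 1.0 + step
    return min(upper, max(lower, delta))


# Made-up trajectory of observed mismatch values over four training steps.
delta = 0.10
for observed in (0.30, 0.25, 0.15, 0.05):
    delta = adapt_threshold(delta, observed, target_mismatch=0.20)
    print(f"observed={observed:.2f} -> delta={delta:.4f}")
```

A real controller would also need smoothing of the mismatch signal and a safe fallback, which is why this item sits among the long-term applications.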
