Position: Agentic Evolution is the Path to Evolving LLMs

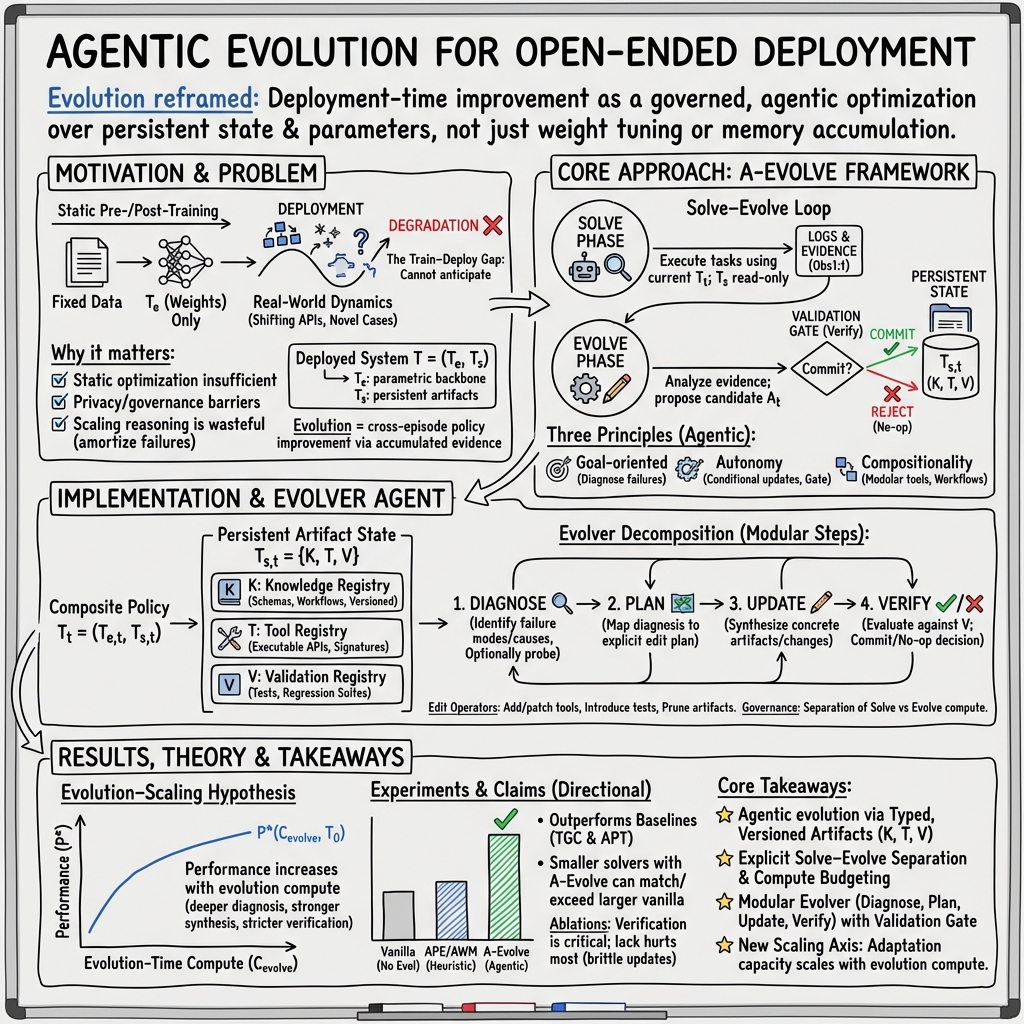

Abstract: As LLMs move from curated training sets into open-ended real-world environments, a fundamental limitation emerges: static training cannot keep pace with continual change in deployment environments. Scaling training-time and inference-time compute improves static capability but does not close this train-deploy gap. We argue that addressing this limitation requires a new scaling axis: evolution. Existing deployment-time adaptation methods, whether parametric fine-tuning or heuristic memory accumulation, lack the strategic agency needed to diagnose failures and produce durable improvements. Our position is that agentic evolution represents the inevitable future of LLM adaptation, elevating evolution itself from a fixed pipeline to an autonomous evolver agent. We instantiate this vision in a general framework, A-Evolve, which treats deployment-time improvement as a deliberate, goal-directed optimization process over persistent system state. We further propose the evolution-scaling hypothesis: the capacity for adaptation scales with the compute allocated to evolution, positioning agentic evolution as a scalable path toward sustained, open-ended adaptation in the real world.

Explain it Like I'm 14

What this paper is about

This paper talks about how to make LLMs—the kinds of AI that can read and write—get better on their own after they’re deployed in the real world. The authors argue that just training a model once and then using it isn’t enough, because the world keeps changing. They propose a new idea called “agentic evolution,” where an AI has a built-in “evolver” that acts like a coach and mechanic: it spots problems, figures out what to fix, and safely updates the system so it performs better over time.

The big questions the paper asks

- Why do LLMs struggle after they’re deployed, even if they’re powerful at the start?

- How can LLMs improve themselves during use, not just during training?

- What does a good “self-improvement” process look like—one that is safe, reliable, and keeps getting better?

- If we give more computing resources to this improvement process, will the AI adapt better in a predictable way?

How the authors approached the problem

Think of an AI agent like a student using a toolbox:

- The “brain” of the AI is its learned parameters (the model’s weights).

- The “toolbox” includes things like code tools, workflows, saved knowledge, and tests—these are called “persistent artifacts.”

When the AI runs into a new situation (like a log file format changing), just “thinking harder” every time is wasteful. Instead, the AI should learn once, add the fix to its toolbox, and reuse it later.

The authors introduce a framework called A-Evolve. It works in two loops:

- The “solve” loop: the AI tries to do tasks with what it already knows and the tools it has.

- The “evolve” loop: an evolver agent reviews what happened, diagnoses failures, plans changes, updates the toolbox (and sometimes the model), and then uses a “validation gate” (tests) to decide if the update should be accepted.
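The two loops and the validation gate can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: `ArtifactState`, `solve`, `passes_gate`, `evolve`, and the date-normalizing tools are all hypothetical names, and the "evolver" here is just a hand-written candidate fix rather than an agent that diagnoses and plans.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Sequence

Tool = Callable[[str], str]
Test = Callable[[Dict[str, Tool]], bool]


@dataclass
class ArtifactState:
    """Persistent toolbox (hypothetical): named tools plus the tests that guard them."""
    tools: Dict[str, Tool] = field(default_factory=dict)
    tests: List[Test] = field(default_factory=list)


def solve(state: ArtifactState, tool_name: str, task_input: str) -> str:
    """Solve loop: handle one task with whatever tools currently exist."""
    return state.tools[tool_name](task_input)


def passes_gate(tools: Dict[str, Tool], tests: Sequence[Test]) -> bool:
    """Validation gate: every test must pass; a crashing test counts as a failure."""
    for test in tests:
        try:
            if not test(tools):
                return False
        except Exception:
            return False
    return True


def evolve(state: ArtifactState, tool_name: str, proposed: Tool,
           new_tests: Sequence[Test] = ()) -> bool:
    """Evolve loop: commit a proposed tool only if old and new tests all pass."""
    candidate = {**state.tools, tool_name: proposed}
    all_tests = state.tests + list(new_tests)
    if not passes_gate(candidate, all_tests):
        return False  # rejected: the persistent state is left untouched
    state.tools, state.tests = candidate, all_tests
    return True


# --- Illustrative scenario: dates start arriving as DD/MM/YYYY ---
def iso_only(s: str) -> str:
    return s  # original tool: assumes input is already YYYY-MM-DD


def bad_fix(s: str) -> str:
    d, m, y = s.split("/")  # breaks on the old ISO format
    return f"{y}-{m}-{d}"


def good_fix(s: str) -> str:
    if "/" in s:
        d, m, y = s.split("/")
        return f"{y}-{m}-{d}"
    return s


def keeps_iso(tools: Dict[str, Tool]) -> bool:
    return tools["normalize_date"]("2024-01-31") == "2024-01-31"


def handles_slashes(tools: Dict[str, Tool]) -> bool:
    return tools["normalize_date"]("31/01/2024") == "2024-01-31"


state = ArtifactState(tools={"normalize_date": iso_only}, tests=[keeps_iso])
rejected = evolve(state, "normalize_date", bad_fix, [handles_slashes])
accepted = evolve(state, "normalize_date", good_fix, [handles_slashes])
```

In this sketch the first candidate is rejected because it regresses on the existing test, while the second is committed along with its new test, so later updates must keep both formats working.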

To keep things clear and safe, A-Evolve follows three simple principles:

- Goal-oriented: decide exactly what needs to change and why (like fixing a broken parser tool).

- Autonomous: only evolve when the evidence is strong, and decide whether to commit or reject changes.

- Compositional: make changes as structured, modular artifacts (like adding a tool, updating a schema, or writing a test), not just dumping more text into memory.

In everyday terms: it’s like fixing your bike by adding a proper part and a checklist, not just writing a note and hoping you remember next time.
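One way to picture "only evolve when the evidence is strong" is a simple recurrence threshold. The sketch below is an assumption made for illustration, not a rule taken from the paper: the class name `EvolutionTrigger`, the failure-signature strings, and the default threshold are all hypothetical.

```python
from collections import Counter
from typing import List


class EvolutionTrigger:
    """Hypothetical policy: evolve only once the same failure has recurred enough."""

    def __init__(self, min_occurrences: int = 3) -> None:
        self.min_occurrences = min_occurrences
        self.failures: Counter = Counter()

    def record(self, signature: str) -> None:
        """Log one observed failure, keyed by a short signature string."""
        self.failures[signature] += 1

    def ready(self) -> List[str]:
        """Return the signatures with enough evidence to justify an evolve step."""
        return sorted(sig for sig, n in self.failures.items()
                      if n >= self.min_occurrences)

    def resolve(self, signature: str) -> None:
        """Forget a failure once a fix for it has been committed."""
        self.failures.pop(signature, None)


trigger = EvolutionTrigger(min_occurrences=2)
trigger.record("ParseError:log_format")
after_one = trigger.ready()   # one occurrence: not enough evidence yet
trigger.record("ParseError:log_format")
after_two = trigger.ready()   # threshold reached: worth evolving
```

A one-off glitch never reaches the threshold, so no evolution compute is spent on it; a repeated failure does, which is the point at which a durable fix becomes worthwhile.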

What they found and why it matters

The authors ran experiments in a simulated app environment where agents use tools and must pass tests. They compared “agentic evolution” to more basic methods like just updating prompts or storing notes from past runs.

They found:

- Agentic evolution improved task success and reliability across different AI models, often more than simply using a larger model without evolution.

- The validation gate (tests before committing updates) is crucial; without it, bad fixes pile up and cause new problems.

- When they increased the computing power devoted to evolution—either by running more evolve steps or using a stronger evolver model—performance kept improving. This supports their “evolution-scaling hypothesis”: adaptation improves as you invest more compute into the evolution process.

This matters because it shows a practical, safe way for AI to get better over time in changing environments, not just by thinking longer during each task or retraining everything centrally.

What this could mean for the future

- More reliable AI: Systems can adapt to changes (like APIs or data formats) without breaking or needing constant manual updates.

- Better privacy: Improvements can happen locally using structured artifacts, reducing the need to send sensitive data back for retraining.

- Lower long-term cost: Instead of “re-solving” the same problem over and over, the AI learns once and adds a reusable tool or test, saving compute in the long run.

- Safer self-improvement: Because updates must pass tests before being accepted, it’s easier to audit changes and roll back if needed.
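The "lower long-term cost" point is a break-even argument: a one-time evolution cost pays off once the per-task savings add up to more than that cost. The sketch below works this out; the function name and all token counts are hypothetical numbers chosen for illustration, not measurements from the paper.

```python
import math
from typing import Optional


def break_even_reuses(evolve_cost: float, resolve_cost: float,
                      reuse_cost: float) -> Optional[int]:
    """Smallest number of future occurrences at which evolving once is strictly cheaper.

    Evolving costs evolve_cost up front, then reuse_cost per occurrence;
    not evolving costs resolve_cost per occurrence.
    Returns None if reusing the artifact is no cheaper than re-solving.
    """
    saving = resolve_cost - reuse_cost
    if saving <= 0:
        return None
    # Need n * saving > evolve_cost, so n is the next integer above the ratio.
    return math.floor(evolve_cost / saving) + 1


# Hypothetical costs in tokens: 50,000 to evolve a tool once,
# 4,000 to re-reason from scratch each time, 500 to call the saved tool.
n = break_even_reuses(evolve_cost=50_000, resolve_cost=4_000, reuse_cost=500)
```

With these made-up numbers, `n` is 15: from the fifteenth recurrence onward, learning once has cost less in total than re-solving every time.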

The authors suggest future work on:

- Benchmarks that better mimic real-world change.

- Stronger frameworks that diagnose, plan, and verify even more effectively.

- Theory to describe and predict how agentic evolution scales, so we can engineer adaptation the way we engineer training.

In short: the paper argues that the best way to make AI truly useful over long periods is to give it a smart, safe way to evolve itself during deployment—turning “learning how to improve” into a first-class capability.

Knowledge Gaps, Limitations, and Open Questions

The paper leaves several issues unresolved; below is a concrete, actionable list of gaps and open questions for future research.

- External validity beyond AppWorld: Results are limited to a single tool-use benchmark with 50/50 train/test tasks; evaluate agentic evolution on genuinely non-stationary, real-world environments with evolving APIs, data schemas, and user constraints, including privacy/governance settings the paper motivates.

- Absence of parametric baselines: No comparisons against test-time/online fine-tuning methods (e.g., LoRA, active fine-tuning, TTT) under matched compute and governance constraints; quantify catastrophic forgetting vs. agentic evolution empirically.

- Limited drift scenarios: Despite motivating schema/API drift, experiments do not simulate time-varying, adversarial, or abrupt distribution shifts; design controlled drift protocols and measure adaptation speed, stability, and recovery.

- Verification gate robustness: The Verify module relies on unit/regression tests, but the paper does not assess false positives/negatives, test suite coverage, or susceptibility to specification gaming; devise methods to detect overfitting-to-tests and measure generalization of committed artifacts.

- Safety and security of artifact updates: No concrete sandboxing, permissioning, or supply-chain controls for evolver-synthesized code/tools; evaluate whether the evolver introduces vulnerabilities, side effects, or privilege escalation, and establish hardened execution/validation policies.

- Privacy claims untested: The claimed benefit of local, governed evolution (reduced data centralization) is not measured; analyze data residency, leakage risks via artifacts, audit trails, and compliance with privacy regulations.

- Compute accounting for Cevolve: Evolution-time compute is proxied by “steps” and model size, not a standardized metric; define and report consistent compute budgets (tokens, wall-clock, tool calls, memory footprint) and energy costs for solve vs. evolve phases.

- Cost–benefit trade-offs: No measurement of total cost and amortized savings; quantify whether evolution reduces future inference-time compute and latency, and compare ROI against simply using a larger solver or adding test-time reasoning.

- Scaling law formalization: The evolution-scaling hypothesis lacks formal derivations and cross-domain evidence; characterize P*(Cevolve) with empirical scaling curves, regimes of diminishing returns, and sensitivity to environment complexity.

- Joint optimization of Ts and Te: Experiments focus on non-parametric artifacts; specify algorithms and criteria for when to update weights vs. artifacts, how to co-train them, and how to avoid interference or redundancy.

- Triggering and scheduling evolution: The autonomy principle lacks formal decision rules; develop cost-sensitive policies for when to evolve vs. no-op, including thresholds, bandit/meta-RL strategies, and asynchronous scheduling under compute constraints.

- Diagnosis accuracy: No metrics for the Diagnoser’s correctness; measure localization precision/recall (root-cause identification), error taxonomy coverage, and robustness to noisy or partial evidence.

- Long-horizon stability: Evolution is evaluated over up to 12 steps; study months-long deployment, accumulation of technical debt, dependency/version conflicts, regression risk, and effectiveness of pruning/rollback strategies.

- Artifact lifecycle management: Ts growth, indexing, retrieval, versioning, and pruning policies are underspecified; design scalable artifact stores with provenance, dependency resolution, conflict detection, and garbage collection.

- Generalization across domains and modalities: Evaluate agentic evolution on domains without tool APIs (pure reasoning), multi-modal tasks (vision/audio), continuous control/robotics, and knowledge-intensive QA to test framework breadth.

- Multi-agent evolution workflows: The paper mentions multi-agent decompositions but does not evaluate coordination protocols, conflict resolution, credit assignment, or scalability with multiple evolvers/solvers.

- Governance metrics: Beyond TGC/APT, define measures for durability, reuse frequency, safety incidents, rollback rates, and governance effectiveness (e.g., proportion of rejected updates and reasons).

- Robustness to adversarial environments: Assess whether malicious inputs or poisoned feedback can steer the evolver to commit harmful artifacts; develop adversarial detection, trust-weighted evidence selection, and isolation mechanisms.

- Interaction with human-in-the-loop review: Specify workflows, thresholds for escalation, reviewer burden, and the impact of human oversight on adaptation speed, safety, and cost.

- Catastrophic forgetting in agentic evolution: Although risks are shown for the no-verification ablation, quantify forgetting/regression under normal operation and propose metrics to monitor and prevent capability drift.

- Provenance and auditability standards: Define minimal metadata, reproducibility requirements, and audit trails for updates, including change impact analysis, differential testing, and explainable commit decisions.

- Reward and feedback modeling: Obs1:t includes traces and errors, but the framework lacks formal reward signals or feedback integration; explore structured feedback channels (user ratings, counterfactuals) and their effect on evolution quality.

- Environment–frontier coupling: P*(Cevolve, T0) is defined for fixed environments, while claims target open-ended ones; formalize how non-stationarity affects the frontier and whether monotonicity holds under shifting distributions.

- Baseline breadth and strength: Include stronger non-agentic baselines (e.g., optimized prompt-edit search, ReasoningBank-style memory, self-play fine-tuning) and ablations with equal verification gates to isolate agentic effects.

- Failure mode taxonomy: Provide systematic analysis of common evolution failures (e.g., brittle patches, incoherent edits, test overfitting) and mitigation strategies embedded in the framework.

- Data and code availability: Reliance on proprietary models (Claude, Gemini, “GPT-5”) limits reproducibility; release open-source implementations, benchmarks with drift generators, and standardized evaluation harnesses.

- Compute allocation strategies: Investigate optimal split between solve-time and evolve-time compute budgets, possibly via meta-optimization, to maximize long-run performance under fixed total compute.

- Separation results and regret bounds: Theoretical claims are deferred; formalize agentic evolution as optimization over combinatorial program spaces and prove separation from heuristic/non-agentic methods, with regret bounds vs. oracle fine-tuning.

Glossary

- A-Evolve: A general framework that implements agentic evolution for deployment-time improvement using governed, persistent artifacts. "A-Evolve is a general, implementation-agnostic framework"

- ablation studies: Experimental analysis that removes specific components to assess their individual contributions to system performance. "Ablation studies of A-Evolve using Claude Haiku 4.5 and Gemini 3 Flash as solvers."

- agentic evolution: A paradigm where an autonomous evolver agent diagnoses, plans, updates, and verifies changes to improve LLM systems during deployment. "In contrast, agentic evolution takes a different view."

- append-and-retrieve: A heuristic memory approach that appends experiences and retrieves them later without deep reasoning about relevance. "append-and-retrieve (Shinn et al., 2023)"

- autonomy principle: A core principle of agentic evolution that dictates when to evolve by conditionally deciding whether to commit updates. "The autonomy principle specifies when to change:"

- Average Passed Tests (APT): An evaluation metric measuring the average fraction of unit tests passed per task. "Average Passed Tests (APT)"

- combinatorial program space: The large, structured space of possible artifacts (e.g., tools, schemas, tests) over which the evolver optimizes. "formalizing evolution as optimization over a combinatorial program space"

- commit decision: The binary outcome that determines whether a proposed update is applied to the system. "returns a commit decision ct"

- composite policy: The joint representation of an LLM’s parametric model and persistent artifact state that governs behavior. "We model the system as a composite policy"

- compute-optimal evolution frontier: The best achievable performance as a function of the compute allocated to evolution. "the compute-optimal evolution frontier as"

- context saturation: Degradation that occurs when accumulated memories become too large/noisy for effective retrieval or use. "context saturation and inconsistent retrieval."

- cross-episode: Spanning multiple deployment episodes so improvements persist across tasks over time. "Evolution refers to cross-episode improvement"

- decision-theoretic control: The principled mechanism that allocates evolution compute only when updates are expected to be beneficial. "This decision-theoretic control allows evolution compute to be allocated"

- deployment-time adaptation: Methods that adapt models during deployment rather than solely in pre-training/post-training. "deployment-time adaptation methods"

- evolver agent: The autonomous component that diagnoses failures, plans, proposes, and verifies updates to the system. "an explicit evolver agent – a goal-directed optimizer"

- evolution-scaling hypothesis: The proposition that adaptation capacity increases predictably with more compute devoted to evolution. "We propose the Evolution-Scaling Hypothesis"

- evolution-time compute: The compute budget allocated to analysis, synthesis, and verification within the evolution loop. "strictly increasing with respect to evolution-time compute."

- FEvolve: The update mechanism that maps current policy and observations to new parameters and/or persistent state. "FEvolve is the update mechanism."

- goal-oriented principle: A core principle specifying what to change by targeting causes of failure for durable improvements. "The goal-oriented principle specifies what to change."

- governance assets: Validation artifacts (tests, reviews) used to ensure updates are safe and non-regressive. "governance assets such as unit tests, regression suites"

- governance gate: The mechanism that evaluates and approves or rejects candidate updates before they are committed. "from the governance gate C."

- inference-time compute: Compute used during problem solving (reasoning) at deployment time for individual instances. "inference-time compute via reasoning chains"

- knowledge registry: The component of the persistent state that stores schemas, workflows, contracts, and exemplars. "The knowledge registry Kt stores structured or textual artifacts"

- non-parametric persistent artifact state: The editable, non-weight components (tools, knowledge, tests) that shape system behavior across episodes. "Ts is a non-parametric persistent artifact state"

- non-stationary environments: Settings where data distributions and constraints change over time. "open-ended, non-stationary environments"

- online fine-tuning: Updating model parameters during deployment using recent observations. "online fine-tuning"

- parametric backbone: The model’s learned weights that define its core capabilities. "Te denotes the parametric backbone (e.g., LLM weights)"

- parametric plasticity: The capacity of model parameters to adapt via weight updates during deployment. "Why not rely on parametric plasticity?"

- provenance: Recorded lineage of changes and artifacts to support auditing and rollback. "with provenance and tests."

- schema drift: Changes in data schemas/interfaces over time that break existing logic or tools. "Under the schema drift scenario"

- scaling axis: An independent dimension along which increasing resources (e.g., compute) improves capability. "We identify this as a third scaling axis"

- solve-evolve loop: The operational cycle that separates instance-level solving from cross-episode evolution. "The Solve-Evolve Loop."

- solve-time compute: Compute budget allocated to solving tasks (not evolving the system). "a solve-time compute budget Csolve"

- Task Goal Completion (TGC): An evaluation metric measuring the fraction of tasks successfully completed. "Task Goal Completion (TGC)"

- test-time compute: Compute expended during inference; increasing it alone may not yield durable improvements. "test-time compute alone does not scale."

- test-time training: Adapting the model at test time based on immediate observations. "test-time training"

- tool registry: The repository of executable functions/APIs with signatures and tests used during solving and diagnosis. "The tool registry Tt contains executable functions"

- validation gate: The verification checkpoint (e.g., unit/regression tests) that fixes must pass before being adopted. "uses a validation gate to verify the fix"

- validation gating: The practice of requiring updates to pass verification before commit. "commits updates without validation gating."

- validation registry: The store of tests and review hooks that ground commit decisions and prevent regressions. "The validation registry Vt contains governance assets"

- versioned adapter: A maintained, versioned function that adapts to interface or schema changes for robustness. "synthesizing a versioned adapter function"
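The two evaluation metrics in the glossary, TGC and APT, can be computed from per-task test results. The sketch below follows the glossary wording, with one assumption stated loudly: a task counts as "completed" for TGC only when all of its unit tests pass. The function names and the sample results are hypothetical.

```python
from typing import List, Tuple

# Each task is summarized as (tests_passed, tests_total).
TaskResult = Tuple[int, int]


def _check(results: List[TaskResult]) -> None:
    if not results or any(total <= 0 for _, total in results):
        raise ValueError("need at least one task, each with at least one test")


def task_goal_completion(results: List[TaskResult]) -> float:
    """TGC: fraction of tasks completed (assumed here: all tests passed)."""
    _check(results)
    completed = sum(1 for passed, total in results if passed == total)
    return completed / len(results)


def average_passed_tests(results: List[TaskResult]) -> float:
    """APT: mean over tasks of the fraction of unit tests passed."""
    _check(results)
    return sum(passed / total for passed, total in results) / len(results)


# Hypothetical results for four tasks.
results = [(4, 4), (2, 4), (0, 2), (3, 3)]
tgc = task_goal_completion(results)   # 2 of 4 tasks fully pass -> 0.5
apt = average_passed_tests(results)   # (1.0 + 0.5 + 0.0 + 1.0) / 4 -> 0.625
```

The gap between the two numbers is informative: APT gives partial credit for a task that passes some tests, while TGC does not.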

Practical Applications

Immediate Applications

Below are actionable use cases that can be deployed now, leveraging the A-Evolve framework’s persistent artifact state (knowledge, tools, validation), solve–evolve loop, and evolver agent (diagnose–plan–update–verify). Each item includes sector tags, potential products/workflows, and key dependencies or assumptions.

- Auto-adapter synthesis for API and schema drift
  - Sectors: software, data engineering, cloud operations
  - Product/workflow: “Versioned adapter” generator that diagnoses parser/API failures, synthesizes adapters, and commits only after unit/regression tests pass
  - Dependencies/assumptions: Access to logs/traces and unit tests; tool-execution sandbox; evolver model and evolution compute budget; artifact versioning

- AgentOps Evolution Layer for enterprise LLM agents
  - Sectors: software, IT operations
  - Product/workflow: Middleware implementing A-Evolve (K/T/V registries, governance gate, audit/rollback, dashboards); integrates with existing agent frameworks
  - Dependencies/assumptions: Integration hooks to agents/tools; test assets for validation; organizational policies for commit/rollback; human-in-the-loop for high-risk changes

- Privacy-preserving local adaptation for on-device assistants
  - Sectors: consumer software, mobile, healthcare
  - Product/workflow: Local compilation of reusable tools/workflows from user interactions; updates gated by on-device validation; no central retraining required
  - Dependencies/assumptions: Sufficient edge compute/storage; secure local sandbox; curated validation assets to avoid harmful drift

- Customer support troubleshooting workflow evolution
  - Sectors: customer support, SaaS
  - Product/workflow: Evolving, validated playbooks (tools, SOPs, checklists) derived from ticket traces; persistent fixes amortize repeated reasoning
  - Dependencies/assumptions: Access to historic tickets; end-to-end test scenarios; approval gates for customer-facing changes

- Security operations: parsers and detection rule upkeep
  - Sectors: cybersecurity
  - Product/workflow: Evolver diagnoses log parsing failures and stale IOCs; synthesizes parser patches and detection rules; commits after regression suites
  - Dependencies/assumptions: SOC tooling integration; labeled alerts/ground truth; strict governance for production commits

- Compliance schema and rule maintenance
  - Sectors: finance, healthcare
  - Product/workflow: Versioned regulatory schemas and tool wrappers kept current via evolver; auditable change logs and validation gate reduce risk
  - Dependencies/assumptions: Up-to-date regulatory feeds; legal review in the governance loop; test harnesses that encode compliance requirements

- Self-healing ETL and analytics pipelines
  - Sectors: data engineering, BI
  - Product/workflow: Evolver localizes transform failures, patches steps, and adds tests to prevent regressions; pipelines stabilize in non-stationary data environments
  - Dependencies/assumptions: Pipeline observability; staging environment for verification; artifact registry for transforms and tests

- Education: course-specific tutoring artifacts
  - Sectors: education, edtech
  - Product/workflow: Library of typed artifacts (worked examples, graders, rubrics) evolved from assignments; validation via auto-grading and teacher review
  - Dependencies/assumptions: LMS integration; graded test sets; teacher-in-the-loop governance

- Cost optimization via “evolution compute” budgeting
  - Sectors: cloud cost management, AI platform ops
  - Product/workflow: Scheduler to reallocate compute from repeated long chains-of-thought to evolver steps; ROI dashboards using TGC/APT and incident reduction metrics
  - Dependencies/assumptions: Observability of solve/evolve budgets; policy to cap solve-time waste; performance tracking instrumentation

- Research benchmarking of train–deploy gap
  - Sectors: academia
  - Product/workflow: AppWorld-style environments with unit tests; datasets that induce schema/API drift; evaluation using TGC/APT; ablation studies of Diagnose/Plan/Verify
  - Dependencies/assumptions: Executable environments; reproducible traces; agreed metrics and protocols
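To make the first use case above concrete, here is a minimal sketch of what a "versioned adapter" for a drifting log format might look like. Everything in it is an assumption for illustration: the two log formats, the field names, and the `ADAPTERS` registry are invented, and in the workflow described above the `v2` parser would be synthesized by the evolver and committed only after tests pass.

```python
import json
from typing import Callable, Dict

Record = Dict[str, str]


def parse_v1(line: str) -> Record:
    """Original format (hypothetical): '<date> <LEVEL> <message>'."""
    ts, level, msg = line.split(" ", 2)
    return {"ts": ts, "level": level, "msg": msg}


def parse_v2(line: str) -> Record:
    """Drifted format (hypothetical): one JSON object per line with renamed fields."""
    obj = json.loads(line)
    return {"ts": obj["timestamp"], "level": obj["severity"], "msg": obj["message"]}


# Versioned registry: old adapters stay available, new ones are added alongside.
ADAPTERS: Dict[str, Callable[[str], Record]] = {"v1": parse_v1, "v2": parse_v2}


def detect_version(line: str) -> str:
    """Crude format detection: JSON lines start with '{'."""
    return "v2" if line.lstrip().startswith("{") else "v1"


def parse_log_line(line: str) -> Record:
    """Route each line to the adapter for its format; callers see one stable schema."""
    return ADAPTERS[detect_version(line)](line)


old = parse_log_line("2024-01-31 ERROR disk full")
new = parse_log_line(
    '{"timestamp": "2024-02-01", "severity": "WARN", "message": "retrying"}'
)
```

Keeping both versions in the registry means historical logs still parse after the format changes, and a regression test per version can guard each adapter.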

Long-Term Applications

These applications require further research, scaling, or development—especially stronger evolvers, larger evolution-time compute budgets, richer validation assets, and sector-specific governance.

- Autonomous software maintenance agents
  - Sectors: software engineering, DevOps
  - Product/workflow: Agents that triage bugs, generate tests, synthesize patches, and coordinate refactors; commits gated via CI/CD and formal checks
  - Dependencies/assumptions: Advanced evolver reliability; comprehensive test coverage; organizational policies for autonomy and rollback; codebase-scale artifact registries

- Standards and regulation for autonomous evolution
  - Sectors: policy, standards bodies, compliance
  - Product/workflow: Certification requirements for validation gates, auditability/provenance, rollback protocols, and alignment testing of evolver updates
  - Dependencies/assumptions: Multi-stakeholder consensus; regulatory buy-in; sector-specific safety benchmarks

- Evolver-as-a-Service and “Evolution Compute” in cloud platforms
  - Sectors: cloud, AI platforms
  - Product/workflow: Managed evolver APIs, evolution compute quotas, hosted artifact registries (tools/tests/schemas), governance plugins
  - Dependencies/assumptions: Secure multi-tenant isolation; pricing and quota models; integration with agent runtimes and CI; robust provenance and audit trails

- Adaptive digital twins and operational control
  - Sectors: energy, manufacturing, logistics
  - Product/workflow: Evolver updates control workflows/tools as sensors, constraints, or equipment change; verification via high-fidelity simulators and guardrail tests
  - Dependencies/assumptions: Accurate simulators; safety certification; fail-safe switching and rollback; domain-specific validation suites

- Healthcare clinical assistants with local evolving adapters and protocols
  - Sectors: healthcare, medtech
  - Product/workflow: EHR adapters and SOPs evolve in situ; clinician-in-the-loop; updates committed only after medical safety tests and prospective validation
  - Dependencies/assumptions: Regulatory compliance (e.g., HIPAA, FDA); medical-grade validation datasets; rigorous governance and auditability

- Finance: governance-constrained strategy evolution
  - Sectors: finance, trading, risk
  - Product/workflow: Agents that evolve strategies and compliance checks; commit gated by risk tests (VaR/ES), scenario stress, and audit review
  - Dependencies/assumptions: Robust risk modeling; regulatory sandboxes; stringent governance; market microstructure simulators for verification

- Robotics field adaptation
  - Sectors: robotics, autonomous systems
  - Product/workflow: Evolver synthesizes toolchains, adapters, and reusable capabilities as environments change; verify via sim-to-real tests and safety gates
  - Dependencies/assumptions: Reliable simulation; hardware safety interlocks; continuous integration for control stacks; strong compositional verification

- Self-evolving cyber defense
  - Sectors: cybersecurity
  - Product/workflow: Blue-team agents that evolve rules/tools using red-team feedback loops; strict gating to avoid false positives/negatives; continuous audit
  - Dependencies/assumptions: Attack simulation frameworks; measurable defense metrics; policy oversight; rapid rollback mechanisms

- Scientific lab automation and protocol evolution
  - Sectors: R&D, biotech, chemistry
  - Product/workflow: Evolver updates experiment workflows and protocols; verification through ground-truth experiments and statistical validation; provenance tracking
  - Dependencies/assumptions: Instrument control integration; experimental safety; reproducibility standards; data governance

- Marketplace of validated artifacts (tools, tests, schemas)
  - Sectors: software ecosystem, enterprise IT
  - Product/workflow: Curated registries of typed artifacts with provenance, versioning, and licenses; reusable modules for common drifts (APIs, file formats, workflows)
  - Dependencies/assumptions: Trust infrastructure; quality assurance; IP management; sector-specific compliance filters

Notes on Feasibility and Assumptions Across Applications

- Strong evolver capability and sufficient evolution-time compute are pivotal (evolution-scaling hypothesis): more compute allows deeper diagnosis, robust artifact synthesis, and stronger verification.

- Environments must support executable validation (unit/regression/end-to-end tests) and artifact versioning to make commit decisions safe and auditable.

- Governance (validation gates, audit logs, rollback) is a first-class requirement, especially in regulated sectors (healthcare, finance, critical infrastructure).

- Privacy constraints favor local, in-situ evolution using typed artifacts over global parameter tuning; however, device/edge compute must be adequate.

- Adoption will be accelerated by SDKs and platform support (registries, gates, dashboards), and by benchmarks that capture the train–deploy gap and measure durable capability gains.
