- The paper challenges the Linear Propagation Assumption (LPA) by showing empirically that the gradients involved in negation are strongly positively aligned (approximately 0.85), contradicting the anti-alignment (−1) that consistent negation requires.

- It introduces Systematic Linear Propagation (SLP) to formally analyze how first-order parameter updates fail to preserve logical structure in neural models.

- The findings imply that methods like tensor factorization and improved geometric alignment are essential for reliable logical propagation and effective knowledge editing.

Dynamics Reveals Structure: Challenging the Linear Propagation Assumption

Introduction

Neural networks adapt through first-order parameter updates. The paper "Dynamics Reveals Structure: Challenging the Linear Propagation Assumption" (2601.21601) questions the prevailing Linear Propagation Assumption (LPA) behind this mechanism, which postulates that local updates preserve logical coherence across related beliefs. It focuses on the functional limitations that arise when logical operations such as negation, converse, and composition are applied in a linearized context.

Theoretical Foundation and Motivation

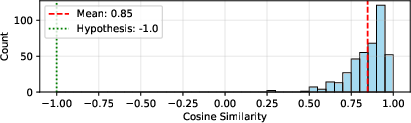

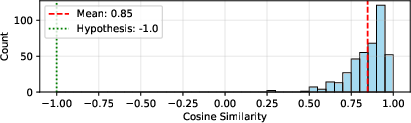

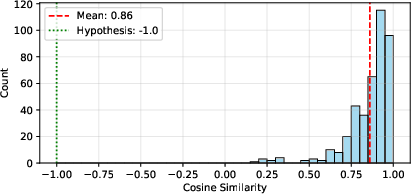

The study begins with empirical evidence that the LPA fails in practice. In current LLMs, the relevant gradients are positively aligned, whereas logical consistency under parameter updates requires them to be anti-aligned (Figure 1). This mismatch points to persistent structural deficiencies that hinder reliable logical propagation under the LPA. The authors use relation algebra as a formalism for the operations involved (negation flips truth values, converse swaps arguments, and composition forms multi-relational links) and examine the geometric alignment that coherence under first-order updates requires.

Figure 1: Gradient alignment hinders negation consistency. Contrary to the theoretical requirement for anti-alignment (=−1), empirical gradients are strongly positively aligned (≈0.85).
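A minimal sketch of why the sign of this alignment matters, assuming a linearized model in which a gradient step on a statement p changes the logit of any other query by roughly the learning rate times the inner product of the two gradients. The gradient vectors are synthetic, built only to reproduce the reported alignment of about 0.85 (read here as a cosine similarity, which is an assumption). `first_order_change` and all variable names are illustrative and not taken from the paper.

```python
import numpy as np


def cosine(a, b):
    """Cosine similarity between two gradient vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


def first_order_change(g_target, g_query, lr=0.1):
    """Approximate logit change at a query after one gradient step on a target."""
    return lr * float(g_query @ g_target)


rng = np.random.default_rng(0)
dim = 512
g_p = rng.normal(size=dim)  # synthetic gradient for statement p

# Build a synthetic gradient for "not p" with cosine 0.85 to g_p (assumed value).
noise = rng.normal(size=dim)
noise -= (noise @ g_p) / (g_p @ g_p) * g_p  # remove the component along g_p
u = g_p / np.linalg.norm(g_p)
v = noise / np.linalg.norm(noise)
target_cos = 0.85
g_not_p = np.linalg.norm(g_p) * (target_cos * u + np.sqrt(1 - target_cos**2) * v)

alignment = cosine(g_p, g_not_p)
delta_p = first_order_change(g_p, g_p)          # effect on p itself
delta_not_p = first_order_change(g_p, g_not_p)  # side effect on "not p"
delta_ideal = first_order_change(g_p, -g_p)     # side effect if gradients were anti-aligned

print(f"alignment={alignment:.2f}")
print(f"delta_p={delta_p:.3f} delta_not_p={delta_not_p:.3f} delta_ideal={delta_ideal:.3f}")
```

With positively aligned gradients, raising the logit of p also raises the logit of its negation. Only the anti-aligned case lowers the negation by the same amount.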

Systematic Linear Propagation and Its Constraints

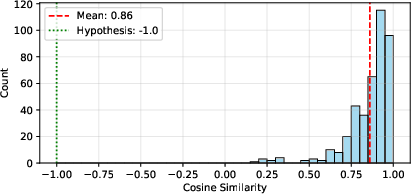

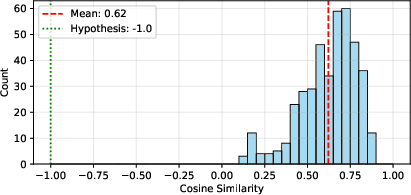

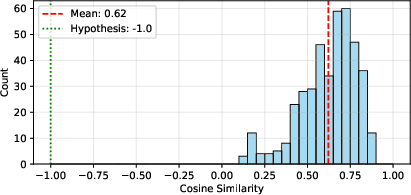

To formalize propagation under the LPA, the authors define Systematic Linear Propagation (SLP), which demands logical equivariance of features and independence among unrelated facts. Under these requirements, when a model updates its parameters for one query, the corresponding logical transformation should propagate to related queries. This in turn requires a specific geometric alignment within feature space, a condition that is not observed in practical models such as Qwen3-30B (Figure 2).

Figure 2: Empirical analysis of Qwen3-30B showing predominantly positive gradient alignment, contrary to what SLP requires.

The paper shows that negation-consistent updates require a tensor factorization that separates entity-pair context from relation content. This separation connects to the broader challenge of variable binding in connectionist models and underscores the need for structured feature representations.
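One way to picture such a factorization is a feature built as the Kronecker (tensor) product of an entity-pair vector and a relation vector, with negation acting as a sign flip on the relation factor only. This is a toy sketch under those assumptions, not the paper's construction. The names `context`, `relation`, and `feature`, the dimensions, and the linear readout `w` are all hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
context = rng.normal(size=8)   # hypothetical entity-pair embedding
relation = rng.normal(size=4)  # hypothetical relation embedding


def feature(context_vec, relation_vec):
    """Factorized feature: entity-pair context tensored with relation content."""
    return np.kron(context_vec, relation_vec)


phi_r = feature(context, relation)       # feature for the query (s, r, o)
phi_not_r = feature(context, -relation)  # negation flips only the relation factor

# For a linear readout w @ phi, the gradient with respect to w is phi itself,
# so the alignment of the two features is the gradient alignment.
alignment = float(
    phi_r @ phi_not_r / (np.linalg.norm(phi_r) * np.linalg.norm(phi_not_r))
)

# One gradient step that raises the score of the positive query.
w = np.zeros_like(phi_r)
w_new = w + 0.1 * phi_r
score_r = float(w_new @ phi_r)
score_not_r = float(w_new @ phi_not_r)

print(f"alignment={alignment:.1f} score_r={score_r:.3f} score_not_r={score_not_r:.3f}")
```

Because the Kronecker product is linear in each factor, flipping the sign of the relation factor flips the whole feature, so the update that raises the original query lowers the negated one by the same amount.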

Implications of Converse and Composition

For the converse operation, logical propagation further requires that the feature space decompose into symmetric and antisymmetric components. This means the representation must capture the directionality of relations, a property often absent in current models.
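The decomposition can be illustrated with a toy bilinear scorer, assuming a relation is represented by a matrix `W` and its converse by the transpose. Both the scorer and that choice are assumptions made for illustration, not the paper's formulation. Any square matrix splits into a symmetric part, which the converse leaves unchanged, and an antisymmetric part, whose sign the converse flips.

```python
import numpy as np

rng = np.random.default_rng(2)
d = 6
W = rng.normal(size=(d, d))  # hypothetical relation matrix: score(s, o) = s @ W @ o
s = rng.normal(size=d)       # hypothetical subject embedding
o = rng.normal(size=d)       # hypothetical object embedding

S = 0.5 * (W + W.T)  # symmetric part: direction-independent content
A = 0.5 * (W - W.T)  # antisymmetric part: carries directionality

W_converse = W.T  # assumed representation of the converse relation

score_forward = float(s @ W @ o)           # r(s, o)
score_converse = float(o @ W_converse @ s)  # converse of r applied to (o, s)

print(f"forward={score_forward:.4f} converse={score_converse:.4f}")
```

In this sketch a relation with no antisymmetric part cannot tell r(s, o) apart from r(o, s), which is one way to read the directionality requirement.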

Moreover, the paper highlights an intrinsic incompatibility between the bilinear structure required for conjunction and systematic propagation under linear operations. It formalizes that bilinear conjunction under logical negation collapses to trivial solutions, underscoring the limits of linear transformations in preserving logical structure.
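A simplified numerical illustration of the tension, not the paper's proof: assume negation acts as a sign flip on features and conjunction is an arbitrary bilinear map `conj`. The tensor `T` and all names are hypothetical. A bilinear map cannot see a sign flip applied to both arguments, so the conjunction of two statements and the conjunction of their negations get the same feature.

```python
import numpy as np

rng = np.random.default_rng(3)
d = 5
T = rng.normal(size=(d, d, d))  # arbitrary bilinear map: out_k = sum_ij T[k, i, j] a_i b_j


def conj(a, b):
    """Hypothetical bilinear conjunction of two feature vectors."""
    return np.einsum("kij,i,j->k", T, a, b)


a = rng.normal(size=d)  # feature of statement p
b = rng.normal(size=d)  # feature of statement q

both = conj(a, b)            # feature of "p and q"
both_negated = conj(-a, -b)  # feature of "not p and not q" under sign-flip negation

indistinguishable = bool(np.allclose(both, both_negated))
print(indistinguishable)
```

Under these assumptions, idempotence of conjunction (p and p is equivalent to p) would require `conj(a, a)` to equal `a` and `conj(-a, -a)` to equal `-a` at the same time. Since the two left-hand sides are identical, only the zero vector satisfies both.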

Practical and Theoretical Implications

This research contributes to the systematicity debate by highlighting the inadequacies of neural network architectures in capturing and preserving logical constraints through updates, thus questioning the LPA's fundamental assumptions. It suggests that these inadequacies may account for observed failures in knowledge editing, the reversal curse, and multi-hop reasoning.

By identifying geometric structures conducive to systematic logical propagation, this work advocates for a paradigm shift toward incorporating logical geometric deep learning principles, ensuring that logical transformations are intrinsic to neural representations rather than auxiliary constructs.

Conclusion

The analysis provided in "Dynamics Reveals Structure: Challenging the Linear Propagation Assumption" delineates clear geometric constraints imposed by the LPA on neural models. It elucidates that logical consistency under first-order updates is not merely a matter of optimization but requires adherence to specific structural formulations, particularly under negation and composition operations. The findings are poised to inform future developments focused on logical coherence in AI systems, moving toward architectures that are inherently structured to respect logical symmetries and dynamics.