- The paper establishes a novel framework for zero-shot sim-to-real policy transfer using domain-agnostic 3D keypoint representations that reduce the domain gap.

- It employs transformer-based policy learning over unified point-based representations, achieving up to 44% improvement on manipulation tasks.

- The framework supports efficient co-training with minimal real data, offering significant gains in sample efficiency and robustness to distractors.

Point Bridge: Domain-Agnostic 3D Keypoint Representations for Cross-Domain Policy Learning

Motivation and Problem Statement

A central bottleneck for generalist robot policy learning is the limited availability and high collection cost of large-scale real-world robotic manipulation datasets. In contrast to vision and language domains, scalable Internet-scale data is difficult to obtain for robotics, where embodied physical interactions are required. Simulation and synthetic data generation, leveraged by advances in generative models and high-fidelity simulators, represent a promising alternative. However, the utility of simulation data for real-world deployment is severely constrained by the domain gap—primarily visual, geometric, and sensing differences between simulated and physical environments.

To overcome these limitations, "Point Bridge: 3D Representations for Cross Domain Policy Learning" (2601.16212) presents a framework for sim-to-real policy transfer based on unified, domain-agnostic point-based representations. This architecture achieves robust zero-shot sim-to-real transfer without explicit visual or object-level alignment, and further enables effective co-training with small amounts of real data as well as multitask learning in a language-conditioned setting.

Point Bridge Framework

Point Bridge consists of three main stages: (1) automated extraction of compact task-relevant 3D keypoints from both simulation and real-world observations, (2) transformer-based policy learning over unified point-based representations, and (3) an efficient, flexible real-time perception pipeline for deployment.

Figure 1: The Point Bridge perception pipeline uses state-of-the-art vision-LLMs and segmentation methods to extract task-relevant 3D keypoints from real images and task descriptions.

Unified Point-Based Scene Representation

Point Bridge replaces image-based or dense point-cloud representations with a compact set of 3D keypoints representing the robot and task-relevant objects, all expressed in a common reference frame. In simulation, keypoints are sampled from object meshes, projecting to camera views and simulating real sensor noise. In real deployments, keypoints are extracted by:

- Object Identification: A VLM (Gemini-2.5) analyzes the scene image and natural language task description to identify relevant object categories.

- Object Localization: Molmo-7B localizes objects at the pixel level, initializing masks for semantic object segmentation (SAM-2) to generate robust 2D object masks.

- 3D Projection: Uniformly sampled interior mask points are projected to 3D using FoundationStereo’s depth estimation and camera calibration. Points are subsampled to maintain coverage and computational efficiency.

- End-Effector Representation: Gripper pose is encoded as a set of rigidly offset keypoints, paralleling object keypoint procedures.

This unified abstraction minimizes sim-to-real alignment requirements by enforcing domain-invariant object and robot representations.

Scalable Data Generation and Co-Training

Original human-provided teleoperated demonstrations in simulation are expanded via synthetic data generation tools (MimicGen). Segments are adapted to novel scenes by SE(3) transformations that preserve end-effector geometries relative to scene objects. This approach maximizes policy generalization from limited source data. For enhanced sim-to-real transfer, small sets of real-world demonstrations can be incorporated for joint training—unifying sim and real representations via the keypoint pipeline.

Policies are learned using a decoder-only multi-task transformer, architecturally following BAKU, with point embeddings derived from PointNet encoders (combining robot and object keypoints). For multitask learning, language instructions are embedded (MiniLM). Action prediction targets include the end-effector pose and gripper state, and smoothness regularization is achieved through action chunking and temporal averaging.

Experimental Validation

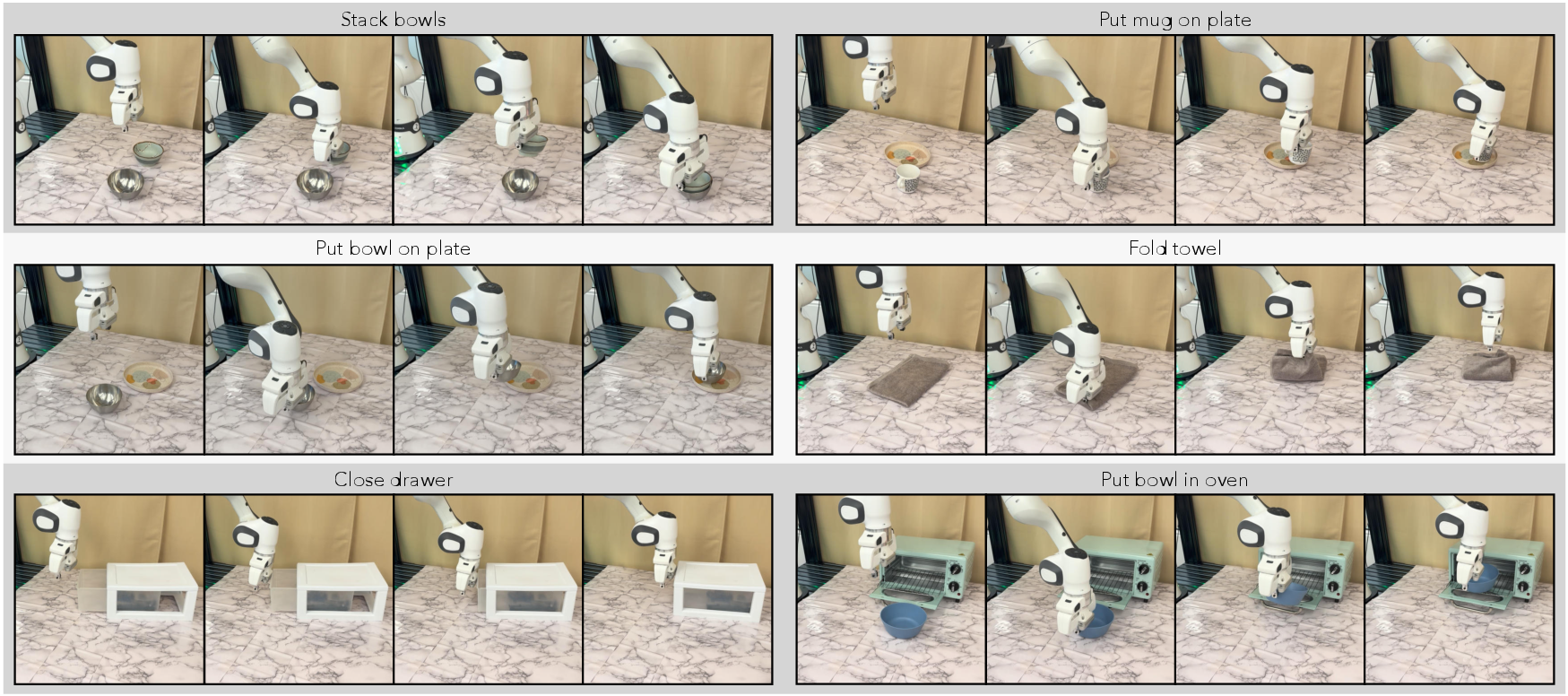

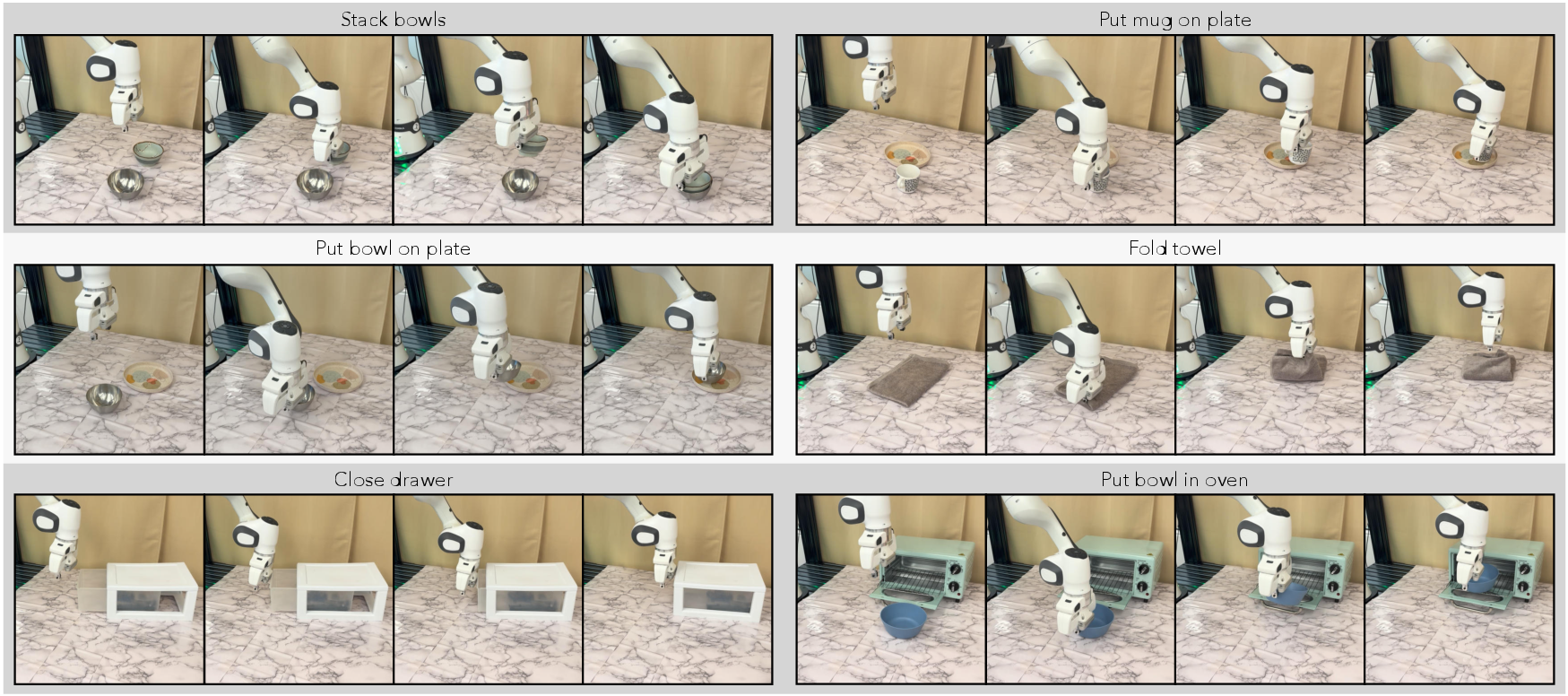

Point Bridge is evaluated on six real-world manipulation tasks, featuring substantial object and environment domain shifts. The experiments leverage both large-scale synthetic simulation data (augmented from demonstrations) and real-world teleoperated examples.

Figure 2: Real robot rollouts visualizing successful execution of six diverse physical manipulation tasks with Point Bridge policies.

Sim-to-Real Transfer and Robustness

Point Bridge enables zero-shot sim-to-real policy transfer with up to 44% improvement over strongest vision-based baselines in both single-task and multitask experiments. Notably, domain-invariant point-based abstraction outperforms approaches reliant on careful visual alignment or high-fidelity simulators.

- Zero-shot transfer is robust to large visual, object, and background discrepancies between sim and reality.

- Point Bridge generalizes across previously unseen object instances due to the abstraction of geometry over pixel-level features.

Adding small amounts of real data through co-training results in success rate gains of up to 66% in multitask settings compared to vision-based sim-and-real co-training methods, demonstrating superior sample efficiency.

Analysis of Critical Pipeline Design Choices

Depth estimation accuracy is a key determinant of success; FoundationStereo-based 3D lifting dramatically outperforms RGB-D sensors and triangulation alternatives, particularly under challenging visibility or reflectivity conditions. Camera view alignment between simulated and real data is a performance bottleneck, though diversity in simulated camera viewpoints alleviates this.

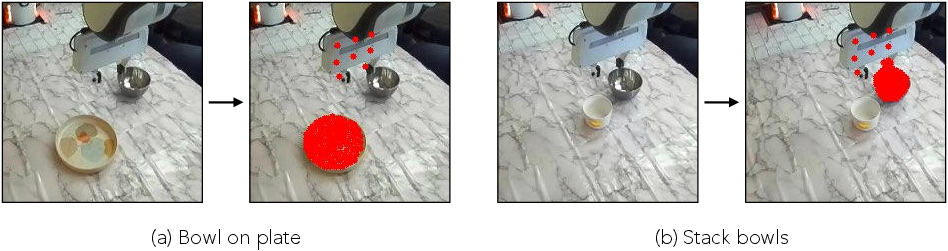

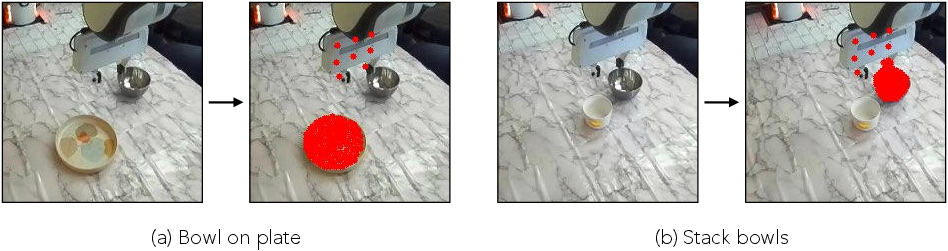

Clutter and Distractor Robustness

Point Bridge’s VLM-guided scene filtering pipeline is robust to background distractors, in contrast to point cloud methods that lack semantic filtering.

Figure 3: Example scenes with significant background clutter and distractors in the real-robot setup, illustrating the necessity for robust scene filtering.

Failure Cases and Limitations

Failure modes are largely attributable to VLM errors in object identification or segmentation, particularly under severe occlusions or ambiguous contexts. As VLM technology improves, these issues are expected to diminish.

Figure 4: Representative failure cases of the VLM-guided scene filtering pipeline, showing missed or incorrectly segmented objects.

Other practical limitations include (1) dependence on accurate scene-camera calibration for reference frame consistency, (2) reduced control frequency relative to image-based policies due to perception pipeline overhead, and (3) the potential loss of spatial context through overly sparse abstractions.

Theoretical and Practical Implications

The Point Bridge framework demonstrates that domain-agnostic 3D point-based representations, automated via foundation vision-LLMs, are sufficient for scalable sim-to-real and cross-domain generalization in robotic policy learning. This removes the need for feature-level domain adaptation or manual object annotation at scale. Furthermore, the unified pipeline supports multitask learning and is amenable to augmentation with real or internet-scale synthetic data. The extension of this paradigm to articulated or deformable object manipulation is corroborated by high task success rates beyond rigid-body scenarios.

The results indicate that as VLMs and large-scale synthetic scene generators improve, the domain gap will increasingly be addressed at the representation level, suggesting that future cross-domain robotic policy models can exploit “Internet-scale” synthetic and video datasets without object-level domain engineering. A promising direction for further study is the integration of hybrid, context-aware representations that provide both task-relevant abstraction and essential environmental spatial cues.

Conclusion

Point Bridge establishes a new paradigm for scalable cross-domain policy learning through domain-agnostic 3D representations and automated keypoint extraction, unlocking the vast potential of synthetic simulation data for highly sample-efficient, generalizable robotic manipulation. The architecture delivers robust zero-shot sim-to-real transfer, supports effective multitask and co-training with minimal real data, and is resilient to clutter and distractors by virtue of a strong VLM-guided filtering pipeline. Limitations regarding VLM failure modes, camera calibration dependence, and abstraction sparsity frame directions for continued research. The practical implication is a significant reduction in real-data collection costs and an expansion of scalable robotic generalization capabilities, accelerating progress toward practical, generalist embodied AI.