- The paper introduces ANO–VQC, a novel framework that jointly optimizes circuit rotations and adaptive non-local measurements to enhance super-resolution performance.

- At a 3× scaling factor, 3-local models achieve lower MSE (0.35 vs. 0.42) and higher SSIM (0.87 vs. 0.84) than their 2-local counterparts.

- The method leverages the exponential dimensionality of the quantum Hilbert space, aiming at resource-efficient, high-resolution image reconstruction suited to NISQ devices.

Quantum Super-resolution with Adaptive Non-local Observables

Introduction

The paper "Quantum Super-resolution by Adaptive Non-local Observables" (2601.14433) introduces a new quantum machine learning paradigm for super-resolution (SR), advancing the use of variational quantum circuits (VQCs) by incorporating adaptive non-local observables (ANO). In contrast to classical deep learning SR frameworks, which require deep models and large datasets to capture high-frequency correlations, this work leverages the exponential dimensionality of the quantum Hilbert space. By letting the measurement (readout) process adapt through learned Hermitian multi-qubit observables, the architecture improves both model expressivity and information extraction. The work demonstrates that compact ANO–VQC models can achieve up to five-fold super-resolution on downsampled MNIST images, signaling a promising direction for hybrid quantum-classical vision architectures.

Framework: Adaptive Non-local Observable Variational Quantum Circuits

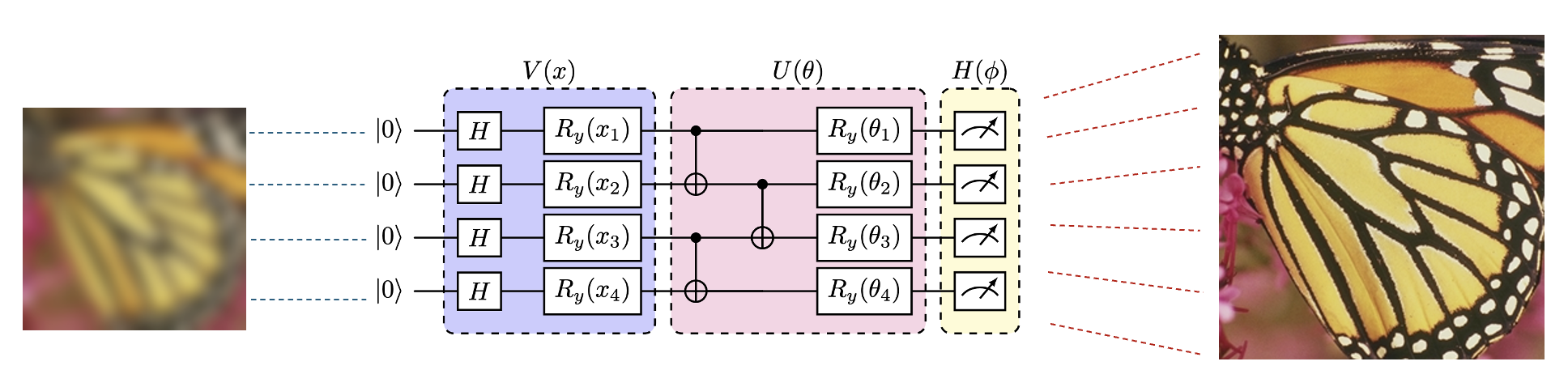

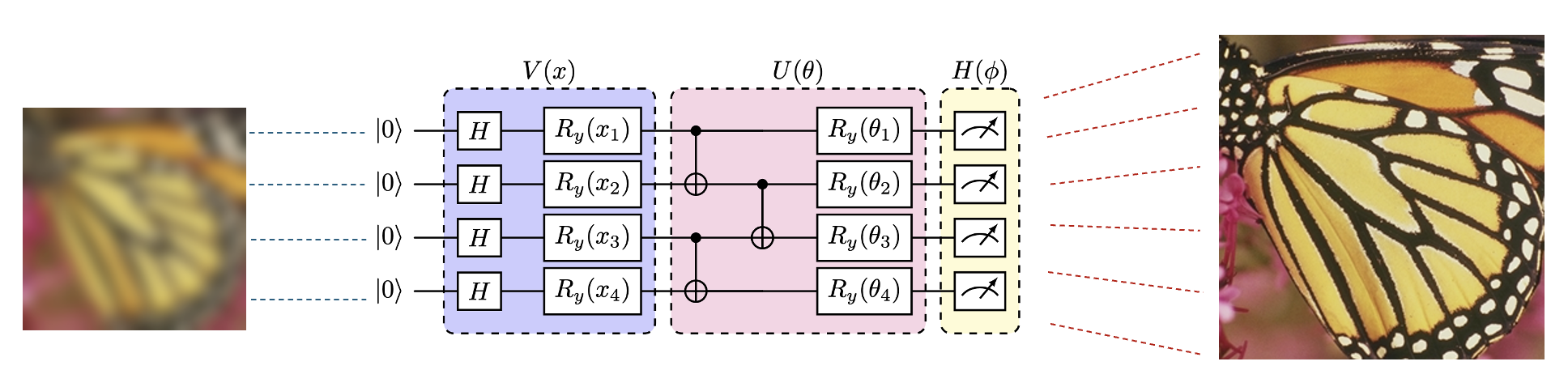

The core innovation is the ANO–VQC, which extends conventional VQC measurement from fixed local observables (typically Pauli strings) to trainable Hermitian operators acting on multiple qubits. A standard VQC has three stages: (1) encoding classical input into an n-qubit quantum state via a unitary V(x), (2) evolution under a parameterized unitary U(θ), and (3) projective measurement of a fixed Hermitian observable. In ANO–VQC the final measurement operator H(ϕ), parameterized as a k-local Hermitian matrix, is optimized jointly with the circuit rotation parameters θ, so each model output is the expectation value ⟨ψ|H(ϕ)|ψ⟩ of the state |ψ⟩ = U(θ)V(x)|0⟩^⊗n. This enlarges the function class the model can represent, since different Hermitian observables correspond to distinct measurement-induced projections in the Hilbert space.
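To make the k-local observable concrete, the sketch below maps a real parameter vector ϕ to a 2^k × 2^k Hermitian matrix and evaluates its expectation value on k chosen qubits of an n-qubit state vector. The parameterization (real diagonal plus real and imaginary parts of the upper triangle) and the helper names are assumptions for illustration, not necessarily the scheme used in the paper. It does show why a k-local observable carries 4^k real degrees of freedom (16 for k = 2, 64 for k = 3), whereas a fixed Pauli string has no trainable parameters.

```python
import numpy as np


def hermitian_from_params(phi: np.ndarray, k: int) -> np.ndarray:
    """Map 4**k real parameters to a 2**k x 2**k Hermitian matrix (hypothetical scheme)."""
    d = 2 ** k
    if phi.shape != (d * d,):
        raise ValueError("a k-local Hermitian observable has 4**k real parameters")
    H = np.zeros((d, d), dtype=complex)
    H[np.diag_indices(d)] = phi[:d]            # real diagonal
    iu = np.triu_indices(d, k=1)               # strictly upper triangle
    m = len(iu[0])                             # d*(d-1)/2 off-diagonal entries
    off = phi[d:d + m] + 1j * phi[d + m:]      # real and imaginary parts
    H[iu] = off
    H[(iu[1], iu[0])] = np.conj(off)           # mirror so that H equals its conjugate transpose
    return H


def local_expectation(psi: np.ndarray, H: np.ndarray, qubits: list[int], n: int) -> float:
    """Return <psi| H (on `qubits`) tensor I (elsewhere) |psi> for an n-qubit state vector."""
    k = len(qubits)
    rest = [q for q in range(n) if q not in qubits]
    # Bring the measured qubits to the front and view the state as a (2**k, 2**(n-k)) matrix
    t = np.transpose(psi.reshape([2] * n), qubits + rest).reshape(2 ** k, -1)
    return float(np.real(np.vdot(t, H @ t)))


rng = np.random.default_rng(0)
n, k = 4, 3
psi = rng.normal(size=2 ** n) + 1j * rng.normal(size=2 ** n)
psi /= np.linalg.norm(psi)
H = hermitian_from_params(rng.normal(size=4 ** k), k)
print(local_expectation(psi, H, [0, 1, 2], n))
```

Qubit 0 is the most significant index of the state vector here; other orderings work the same way with a different transpose.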

Prior work [lin2025ano, chen2025learning] reports that adaptive non-local observables markedly increase the representational and generalization capacity of quantum neural networks. The architectural diagram for ANO–VQC applied to super-resolution is shown below.

Figure 2: ANO–VQC for super-resolution: an LR input x is encoded by V(x), then transformed by U(θ), and finally reconstructed to HR output via measurement by adaptive k-local observable H(ϕ).

Quantum Super-resolution Protocol

The protocol for quantum super-resolution with ANO–VQC comprises:

- Encoding: LR images are vectorized and encoded onto quantum states.

- Variational Transformation: A trainable VQC U(θ) prepares the state for measurement.

- Adaptive Measurement: HR pixels are produced by repeated, parameterized readouts, each an expectation value of a trainable k-local Hermitian observable H(ϕ).

- Joint Optimization: Both θ (circuit rotations) and ϕ (observable parameters) are optimized jointly using a composite loss: MSE for pixel fidelity and LPIPS for perceptual quality. This dual objective encourages reconstructions that are both pixel-accurate and perceptually realistic.
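The four steps can be strung together in a small state-vector simulation. The sketch below is a minimal forward pass under stated assumptions: amplitude encoding of the 16 LR pixels into 4 qubits stands in for V(x), layers of RY rotations followed by a CNOT chain stand in for U(θ), and each HR pixel is read out by its own 3-local observable on a contiguous window of qubits. None of these choices is taken from the paper, all helper names are hypothetical, and the perceptual term is left as a pluggable callable because LPIPS requires a pretrained network.

```python
import numpy as np


def encode_amplitude(lr: np.ndarray) -> np.ndarray:
    """Hypothetical V(x): amplitude-encode 2**n pixel values into an n-qubit state."""
    v = lr.reshape(-1).astype(complex)
    return v / np.linalg.norm(v)


def apply_ry(state, theta, q, n):
    """Apply RY(theta) to qubit q (qubit 0 = most significant index)."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    ry = np.array([[c, -s], [s, c]])
    t = np.moveaxis(state.reshape([2] * n), q, 0)
    t = np.tensordot(ry, t, axes=([1], [0]))
    return np.moveaxis(t, 0, q).reshape(-1)


def apply_cnot(state, ctrl, tgt, n):
    """Flip qubit tgt on the subspace where qubit ctrl is 1."""
    t = state.reshape([2] * n).copy()
    idx = [slice(None)] * n
    idx[ctrl] = 1
    axis = tgt - 1 if tgt > ctrl else tgt      # axis index once ctrl is sliced away
    t[tuple(idx)] = np.flip(t[tuple(idx)], axis=axis).copy()
    return t.reshape(-1)


def ano_vqc_forward(lr, theta, phis, windows, n=4, k=3):
    """Encode, apply a hypothetical RY + CNOT-chain U(theta), read one pixel per observable."""
    state = encode_amplitude(lr)
    for layer in theta:                        # theta has shape (layers, n)
        for q in range(n):
            state = apply_ry(state, layer[q], q, n)
        for q in range(n - 1):
            state = apply_cnot(state, q, q + 1, n)
    pixels = []
    for phi, s in zip(phis, windows):          # phi has shape (2, 2**k, 2**k)
        A = phi[0] + 1j * phi[1]
        H = (A + A.conj().T) / 2               # trainable Hermitian H(phi)
        H_full = np.kron(np.kron(np.eye(2 ** s), H), np.eye(2 ** (n - s - k)))
        pixels.append(np.real(np.vdot(state, H_full @ state)))
    return np.array(pixels)


def composite_loss(pred, target, perceptual=None, lam=0.1):
    """MSE plus an optional weighted perceptual term (e.g. an LPIPS callable)."""
    mse = np.mean((pred - target) ** 2)
    return mse if perceptual is None else mse + lam * perceptual(pred, target)


rng = np.random.default_rng(0)
n, k, scale = 4, 3, 3
lr = rng.random((4, 4))                        # stand-in for a 4x4 LR digit
hr = rng.random((4 * scale, 4 * scale))        # stand-in for its 12x12 HR target
theta = rng.normal(size=(2, n))                # two hypothetical layers
phis = rng.normal(size=(hr.size, 2, 2 ** k, 2 ** k))
windows = [j % (n - k + 1) for j in range(hr.size)]   # contiguous qubit windows
pred = ano_vqc_forward(lr, theta, phis, windows, n, k).reshape(hr.shape)
print(pred.shape, composite_loss(pred, hr))
```

Training would update theta and phis together by gradient descent on composite_loss (for example through an autodiff framework or parameter-shift gradients); that loop is omitted here.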

Experimental Evaluation and Results

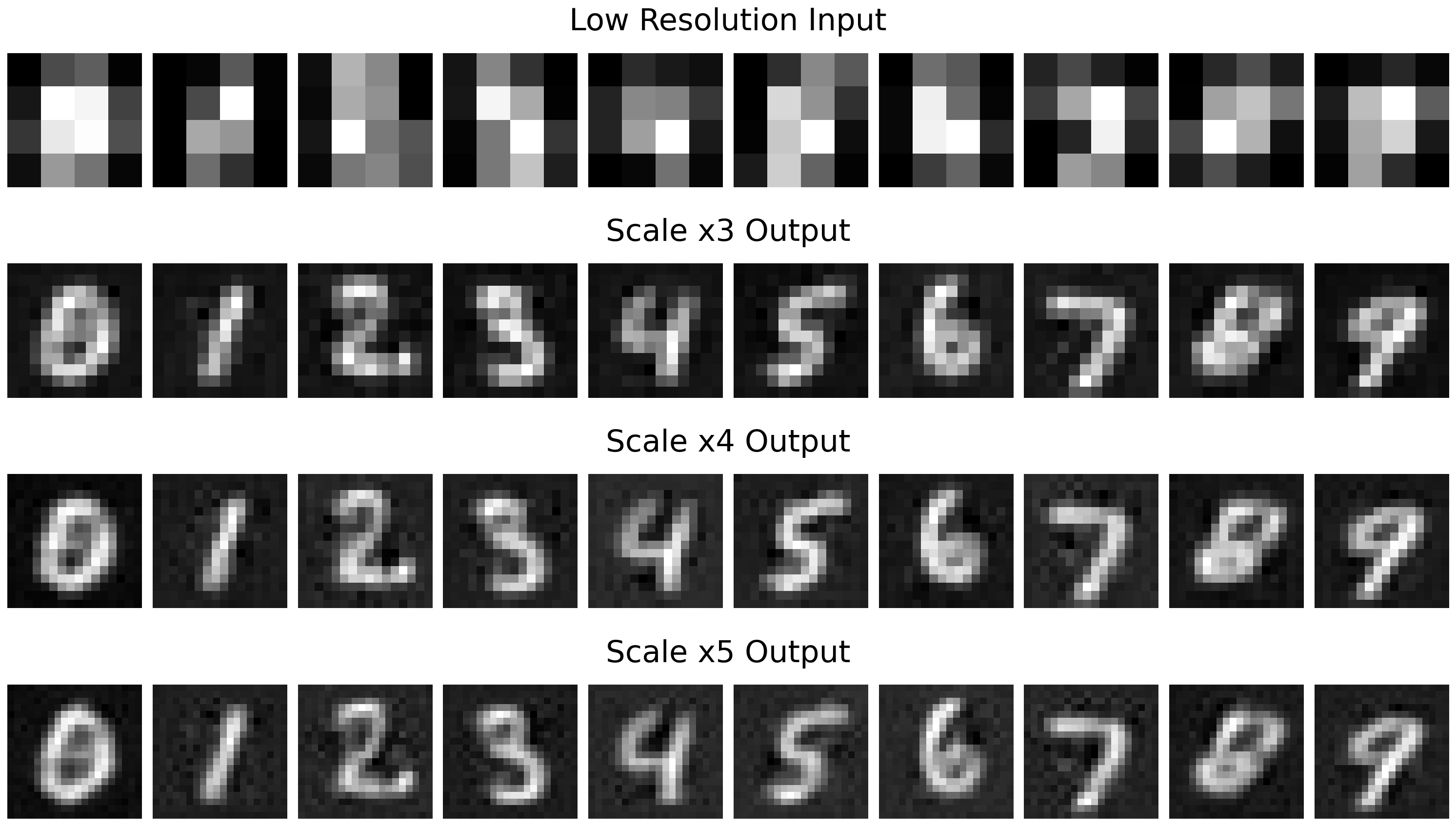

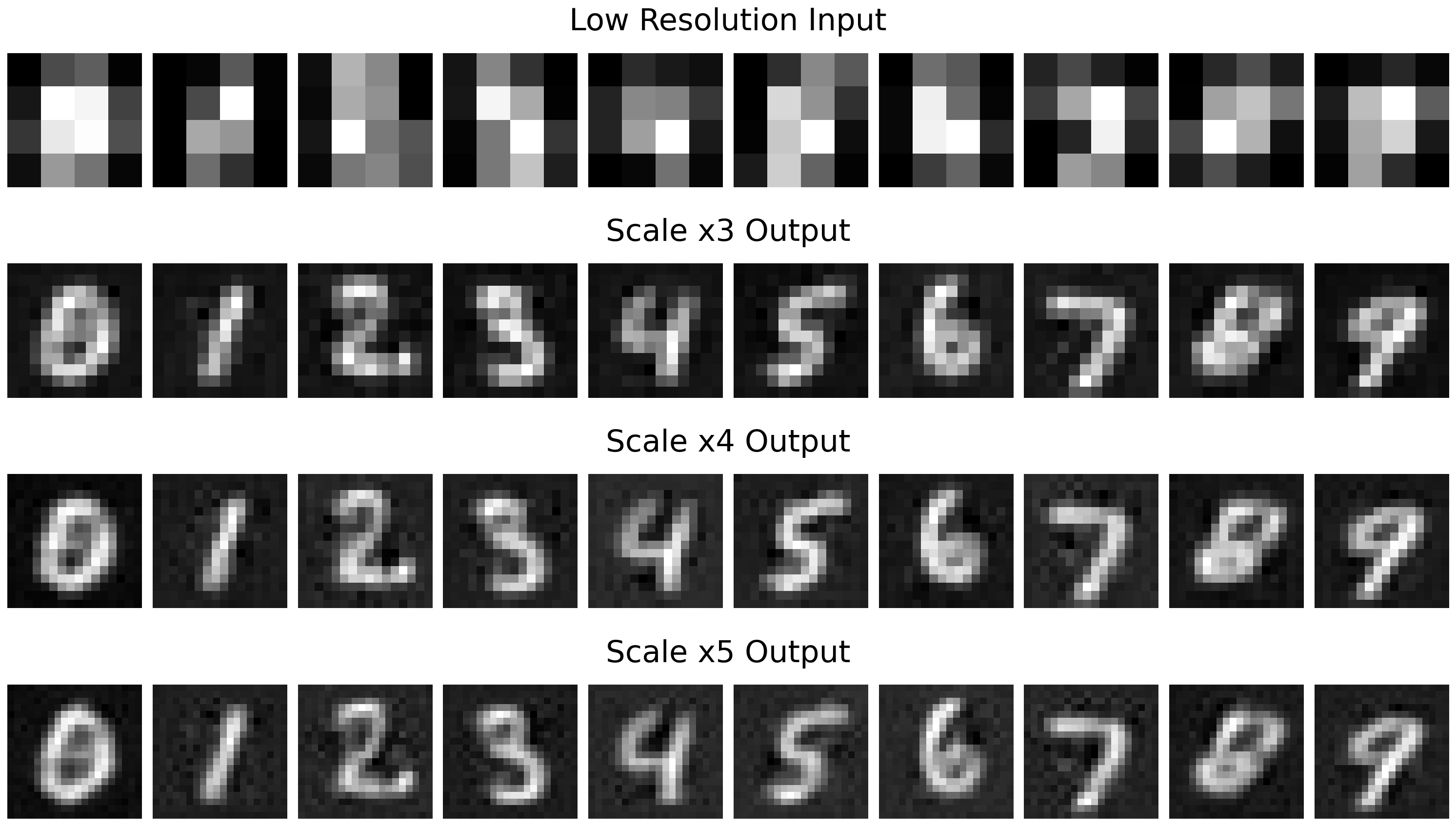

Experiments are conducted on the MNIST dataset with aggressive downsampling to 4×4 LR inputs and upscaling factors of ×3, ×4, and ×5, corresponding to 12×12, 16×16, and 20×20 HR outputs. Two variants, 2-local and 3-local ANO–VQC, are tested to assess the impact of observable range.
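The relationship between the LR input and the three HR targets is simple arithmetic: 4 × 3 = 12, 4 × 4 = 16, and 4 × 5 = 20, so the model must produce 9, 16, or 25 output pixels per input pixel. The sketch below assumes the LR images are obtained by block-averaging the HR targets; the exact downsampling procedure is not described here, so block_average_downsample is a hypothetical stand-in.

```python
import numpy as np


def block_average_downsample(hr: np.ndarray, factor: int) -> np.ndarray:
    """Hypothetical LR generation: average each factor x factor block of the HR image."""
    h, w = hr.shape
    if h % factor or w % factor:
        raise ValueError("HR size must be divisible by the scale factor")
    return hr.reshape(h // factor, factor, w // factor, factor).mean(axis=(1, 3))


for factor in (3, 4, 5):
    hr = np.random.default_rng(factor).random((4 * factor, 4 * factor))
    lr = block_average_downsample(hr, factor)
    print(factor, hr.shape, "->", lr.shape, hr.size // lr.size)
# 3 (12, 12) -> (4, 4) 9
# 4 (16, 16) -> (4, 4) 16
# 5 (20, 20) -> (4, 4) 25
```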

The 3-local model achieves lower MSE, higher PSNR, and higher SSIM than the 2-local model, most clearly at the smallest upscaling factor (×3), indicating higher pixel-level fidelity. At ×3, for instance, the 3-local model yields MSE = 0.35 and SSIM = 0.87 versus 0.42 and 0.84 for the 2-local model, a reduction in MSE of roughly 17%. However, this gain comes at a small cost in perceptual similarity (LPIPS, where lower is better, increases modestly), implying a trade-off between pixel-level accuracy and visual realism.
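For reference, PSNR is a fixed transform of MSE, PSNR = 10·log10(MAX²/MSE), so when both are computed from the same MSE over a common data range, the lower-MSE model necessarily has the higher PSNR; the two metrics are not independent evidence. The NumPy sketch below implements both (data_range = 1.0 is an assumption about pixel normalization) and computes the relative changes implied by the ×3 numbers quoted above.

```python
import numpy as np


def mse(pred, target) -> float:
    return float(np.mean((np.asarray(pred, float) - np.asarray(target, float)) ** 2))


def psnr(pred, target, data_range: float = 1.0) -> float:
    """PSNR in dB; data_range is the assumed span of valid pixel values."""
    err = mse(pred, target)
    return float("inf") if err == 0 else float(10 * np.log10(data_range ** 2 / err))


# Relative changes implied by the reported x3 results (2-local -> 3-local)
mse_2, mse_3 = 0.42, 0.35
ssim_2, ssim_3 = 0.84, 0.87
print(f"MSE reduction: {(mse_2 - mse_3) / mse_2:.1%}")  # MSE reduction: 16.7%
print(f"SSIM change: {ssim_3 - ssim_2:+.2f}")           # SSIM change: +0.03
```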

Qualitative results illustrate the reconstruction performance for various super-resolution scales:

Figure 4: Super-resolution with 3-local ANO–VQC: 4×4 LR digits (top) are reconstructed into 12×12, 16×16, and 20×20 HR images in subsequent rows.

By optimizing both the variational circuit and measurement process, ANO–VQC models can reconstruct salient image features from highly compressed quantum-encoded signals, exploiting the non-locality and entanglement inherent to quantum systems for spatial detail synthesis.

Implications and Future Directions

Introducing trainable, non-local observables as measurement operators in quantum neural models substantially enlarges the effective function space and offers a resource-efficient path to high-resolution vision tasks on quantum hardware. The approach enables “measurement programming” alongside unitary evolution, expanding beyond the expressivity limits of standard VQCs constrained by local measurement.

This raises several implications:

- Resource Efficiency: Achieving significant resolution enhancement with shallow circuits on few qubits makes the approach a practical fit for NISQ-era devices.

- Quantum Advantage: The ANO–VQC results suggest scenarios in which quantum models may match or exceed compact classical networks on tasks involving high-dimensional correlations.

- Interpretable Measurement: Adaptive observables introduce new dimensions for model interpretability by mapping eigenvalue spectra of learned measurement operators to output semantics.

- Transfer to Complex Domains: Scaling to color images, natural scenes, and hybrid models with classical postprocessing—potentially leveraging quantum advantage for selected sub-tasks—could extend applicability.
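One concrete handle on the interpretability point: for any normalized state, the expectation value of a Hermitian observable lies between its smallest and largest eigenvalues, and those extremes are attained at the corresponding eigenvectors. A fixed Pauli string has eigenvalues ±1, so its readouts are confined to [−1, 1], whereas a learned H(ϕ) sets its own output range through its spectrum. The NumPy sketch below checks this numerically, with a random Hermitian matrix standing in for a learned 2-local observable (an assumption for illustration).

```python
import numpy as np

rng = np.random.default_rng(1)
d = 2 ** 2                                   # dimension of a 2-local observable
A = rng.normal(size=(d, d)) + 1j * rng.normal(size=(d, d))
H = (A + A.conj().T) / 2                     # stand-in for a learned H(phi)
evals, evecs = np.linalg.eigh(H)             # eigenvalues in ascending order

# Every normalized state yields an expectation value inside [evals[0], evals[-1]]
for _ in range(1000):
    psi = rng.normal(size=d) + 1j * rng.normal(size=d)
    psi /= np.linalg.norm(psi)
    val = np.real(np.vdot(psi, H @ psi))
    assert evals[0] - 1e-9 <= val <= evals[-1] + 1e-9

# The extremes are attained at the corresponding eigenvectors
top = evecs[:, -1]
print("readout range:", evals[0], "to", evals[-1])
print("value at top eigenvector:", np.real(np.vdot(top, H @ top)))
```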

Theoretical directions include formal analysis of expressivity gains, extension to non-image domains, and comparison with quantum generative adversarial models for vision.

Conclusion

This paper systematically develops a quantum super-resolution framework using ANO–VQC, demonstrating that adaptive, multi-qubit measurement operators substantially enhance the expressive power of quantum neural architectures for vision tasks. Gains in pixel-level metrics across scaling factors from ×3 to ×5 attest to the model’s efficacy, despite a modest perceptual trade-off at larger upscaling ratios. The method opens practical and theoretical possibilities for resource-efficient quantum models in image synthesis and, more generally, for quantum representation learning that exploits Hilbert-space structure beyond what local observables can access. Future research should investigate scaling, real-device implementation, and cross-domain transfer for broader impact in quantum AI.