Cognition spaces: natural, artificial, and hybrid

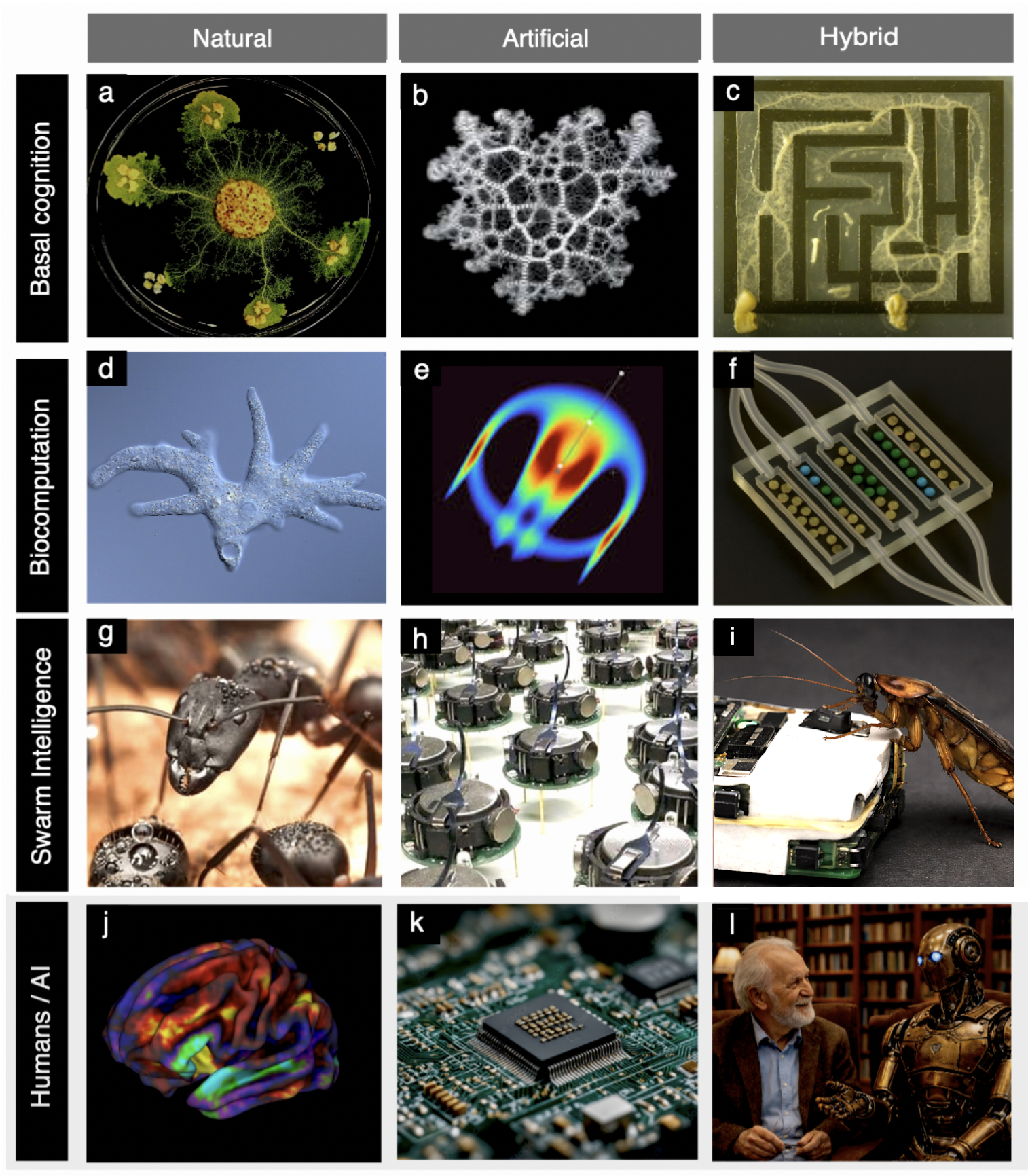

Abstract: Cognitive processes are realized across an extraordinary range of natural, artificial, and hybrid systems, yet there is no unified framework for comparing their forms, limits, and unrealized possibilities. Here, we propose a cognition space approach that replaces narrow, substrate-dependent definitions with a comparative representation based on organizational and informational dimensions. Within this framework, cognition is treated as a graded capacity to sense, process, and act upon information, allowing systems as diverse as cells, brains, artificial agents, and human-AI collectives to be analyzed within a common conceptual landscape. We introduce and examine three cognition spaces -- basal aneural, neural, and human-AI hybrid -- and show that their occupation is highly uneven, with clusters of realized systems separated by large unoccupied regions. We argue that these voids are not accidental but reflect evolutionary contingencies, physical constraints, and design limitations. By focusing on the structure of cognition spaces rather than on categorical definitions, this approach clarifies the diversity of existing cognitive systems and highlights hybrid cognition as a promising frontier for exploring novel forms of complexity beyond those produced by biological evolution.

Explain it Like I'm 14

Overview

This paper is about “cognition” — the ability to sense, process, and act on information — across many kinds of systems: living things (like cells and animals), machines (like robots and AIs), and mixtures of the two (hybrids). Instead of arguing over one strict definition of what counts as a mind, the authors build “maps” of cognition. These maps let us compare very different systems in the same picture and see where real examples cluster and where big gaps (empty regions) might be full of possibilities we haven’t explored yet.

Key questions the paper asks

The paper focuses on simple, practical questions:

- What kinds of minds exist in nature and technology today?

- What kinds of minds could exist but don’t (yet)?

- What limits or rules shape which minds are possible?

- How can we fairly compare a cell, a brain, a robot, and a human–AI team?

- Why are there “gaps” in what we see, and how might hybrids (living + artificial) fill them?

How the researchers approached it

The authors create three “cognition spaces.” You can think of each space like a 3D map where each axis measures a different feature. They place real and imagined systems on these maps to see patterns, clusters, and gaps.

- Basal (aneural) cognition space: systems without neurons or brains

  - What it includes: single cells, bacteria, amoebas, slime molds, organoids (mini-organs grown from cells), and living “bots” made from cells (like xenobots).

  - Axes (in simple terms): how complex their body layout is, how complex their information processing is, and how much development/self-building matters.

  - Key idea: even simple life without brains can sense, remember, and adapt. For example, a slime mold can find short paths in a maze. It doesn’t “think” like we do, but its body and flows act like a problem solver. Putting it in a human-made maze is a hybrid: the living system plus the designed environment together “compute” the solution.

- Neural cognition space: systems with neurons and/or brain-like control

  - What it includes: animals with nervous systems, human brains, swarms (like bees or fish schools), robot swarms, and robots wired to living neurons (“hybrots”).

  - Axes: individual agency (how much a system’s own choices matter for keeping itself going), how strongly agents interact with others, and overall computational complexity.

  - Key idea: many artificial systems are very “smart” at calculations but have low agency (their own choices don’t affect their survival much). Nature often shows the opposite: strong agency and rich social interaction. Some hybrids (like robot–insect groups) show that artificial agents can integrate into living collectives and steer group decisions.

- Human–AI hybrid space: people working tightly with AI

  - What it includes: everything from simple tools (like early chatbots) to social robots to deep partnerships between humans and advanced AI systems.

  - Axes: AI cognitive complexity, how much control the human has, and the depth of human–AI exchange (how rich and ongoing the interaction is).

  - Key idea: as AI gets more capable and the interaction becomes deeper and more constant, the pair behaves like a single, combined cognitive system. Control and responsibility can shift as the partnership tightens.

Helpful analogy used in the paper: reservoir computing

- Imagine poking a bowl of jelly: ripples spread and carry information about the poke. Many living systems work a bit like that. Their ongoing body dynamics (flows, pulses, chemistry) “store” recent inputs, so simple rules can produce smart, context-sensitive actions. This helps explain how cells and slime molds show “proto-thinking” without brains.
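The jelly picture can be made concrete with a toy simulation. The sketch below is not taken from the paper; it is a minimal illustration, under assumed settings, of the fading-memory property that reservoir computing relies on. The reservoir size, the weight scaling (`gain`), and the input sequences are all arbitrary choices made for this example. Two input histories that differ only in the distant past leave the reservoir in practically the same state, while a difference in the most recent input leaves a clear trace.

```python
import math
import random

def make_reservoir(n, seed=0, gain=0.9):
    """Random recurrent weights, rescaled so each row's absolute sum equals gain (< 1)."""
    rng = random.Random(seed)
    w = [[rng.uniform(-1.0, 1.0) for _ in range(n)] for _ in range(n)]
    w_in = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    for row in w:
        scale = gain / sum(abs(v) for v in row)
        for j in range(n):
            row[j] *= scale
    return w, w_in

def step(state, u, w, w_in):
    """One update: every unit mixes the previous state with the new input u."""
    return [math.tanh(sum(wij * sj for wij, sj in zip(row, state)) + b * u)
            for row, b in zip(w, w_in)]

def run(inputs, w, w_in):
    """Drive the reservoir from rest with a whole input sequence; return the final state."""
    state = [0.0] * len(w)
    for u in inputs:
        state = step(state, u, w, w_in)
    return state

def max_gap(state_a, state_b):
    """Largest per-unit difference between two reservoir states."""
    return max(abs(a - b) for a, b in zip(state_a, state_b))

w, w_in = make_reservoir(20)
rng = random.Random(1)
shared_tail = [rng.uniform(-1, 1) for _ in range(300)]
history_a = [rng.uniform(-1, 1) for _ in range(50)] + shared_tail
history_b = [rng.uniform(-1, 1) for _ in range(50)] + shared_tail

# Different distant past, same recent inputs: the old "pokes" have faded away.
gap_old = max_gap(run(history_a, w, w_in), run(history_b, w, w_in))

# Same past, different latest input: the newest "poke" is still visible.
gap_recent = max_gap(run(shared_tail + [0.8], w, w_in),
                     run(shared_tail + [-0.8], w, w_in))

print(gap_old, gap_recent)
```

A full reservoir computer would add a simple trained readout on top of these states; that step is left out here to keep the sketch short.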

Main findings and why they matter

What the maps show:

- Cognition is not all-or-nothing. It comes in degrees and many forms, from cells to societies to software.

- The maps are lumpy. Real systems cluster in certain regions, while large areas are empty.

- Those empty areas (voids) are informative. They likely reflect:

  - Evolutionary history and chance: nature explored some paths but not others.

  - Physical/biological limits: some designs are too costly, unstable, or impossible.

  - Design biases: today’s labs and tools make some systems easier to build and study than others.

Why that’s important:

- The gaps are not just dead zones — they are opportunity zones. By smart design, especially with hybrids (mixing living tissue and engineered environments), we might stabilize new kinds of cognition.

- Examples:

  - Slime molds in mazes: the maze acts as a “thinking scaffold,” helping the organism solve a problem.

  - Organoids on chips: microfluidic channels shape growth and function, unlocking complex patterns not seen in plain dishes.

  - Robot–insect societies: a few robots, designed with the right cues, can join insect groups and guide collective choices.

- Artificial systems often excel at computation but lag in agency. Hybrids and embodied systems offer paths to close that gap.

Implications and potential impact

- A clearer, fairer comparison: These cognition spaces avoid narrow, human-centered definitions and let us compare wildly different systems on shared terms.

- A roadmap for design: The maps highlight promising unexplored regions — where new biohybrids, organoid-based devices, neuromorphic hardware, or human–AI partnerships might succeed.

- Science and engineering benefits:

  - Better experiments: use structured environments to “scaffold” living computation.

  - Smarter collectives: integrate robots into animal groups to learn and guide group intelligence.

  - Stronger human–AI teams: tune control and information flow to make partnerships safer and more effective.

- Ethics and governance: As hybrids blur boundaries (Where does the organism end? Where does the tool begin?), we need careful rules about responsibility, welfare, safety, and consent.

- Big picture: Hybrid cognition is a promising frontier. It can reach forms of complexity that biology didn’t evolve on its own and that current AI can’t achieve alone. These spaces help us see what’s possible, what’s limited, and what to try next.

Knowledge Gaps

Below is a single, focused list of concrete knowledge gaps, limitations, and open questions that the paper leaves unresolved. Each point is phrased to enable actionable follow-up by future researchers.

- Operationalizing the cognition spaces: Define measurable, comparable metrics for each axis (e.g., “spatial complexity,” “computational complexity,” “developmental complexity,” “agency,” “agent–agent interactions,” “human–AI exchange”) and establish protocols for data collection and normalization across substrates and timescales.

- Making the morphospaces metric: Develop methods to assign quantitative coordinates (with uncertainty) to systems, enabling statistical analysis, clustering, and longitudinal tracking rather than purely qualitative placement.

- Validating “voids” in the spaces: Distinguish between truly inaccessible regions (due to physical/chemical/evolutionary constraints) and empty regions caused by sampling bias or design limitations via targeted construction of synthetic/hybrid exemplars and destabilization analyses.

- Cross-substrate benchmarking: Create unified task suites and evaluation metrics that test sensing, memory, learning, and perception–action loops across cells, organoids, robots, LLMs, and human–AI dyads, with timescale and energy normalization.

- Empirical agency measurement: Operationalize the viability function V(x, E), estimate policies π from observed behavior, and measure the sensitivity ∂J/∂π in living, artificial, and hybrid systems; quantify the impact of reset/clone/interrupt operations on agency.

- Hybrid system boundaries: Develop causal inference frameworks (e.g., structural causal models) to delineate system boundaries and quantify coupling strength among biological substrates, engineered boundary conditions (BCs), and digital control components.

- Stability and robustness of hybrids: Characterize long-term dynamics (homeostasis, drift, fragility, failure modes) under resource constraints and environmental perturbations; identify conditions for sustained viability of composite systems.

- Developmental complexity measurement: Specify and validate experimental proxies for “developmental complexity” (e.g., lineage diversity, patterning entropy, morphogenetic feedback richness) and quantify its relation to cognitive performance in organoids and xenobots.

- Reservoir computing in basal cognition: Demonstrate RC capacity empirically across biological reservoirs (e.g., slime molds, amoebae, intracellular chemical networks) by identifying natural “readouts,” measuring memory depth, task generalization, and trainability.

- Transition pathways and thresholds: Identify and test actionable mechanisms by which basal cognition scales into neural regimes (e.g., adding conductive tissues, bioelectric scaffolds, sensory channel diversification), and locate thresholds of qualitative change.

- Hybrid boundary condition design principles: Systematically explore BC topologies (graphs, flows, microfluidic geometries) that maximize computational and developmental complexity; map which BC features reliably induce targeted cognitive behaviors.

- Generality of hybrid animal collectives: Assess cross-species transfer and ecological validity of robot–animal integrations; determine minimal sensory/behavioral mimicry needed for social acceptance and quantify how artificial minorities steer group decisions.

- Scaling biohybrid control (plantoids, neural robots): Address limitations in learning capability, interface fidelity, and scalability of living neural control; standardize biophysical interfacing, training protocols, and reliability benchmarks.

- Human–AI exchange depth: Define measurable dimensions of “depth” (bandwidth, reciprocity, persistence, emotional valence, shared memory) and test their causal impact on dyad-level performance, stability, and risk.

- Control–autonomy trade-offs in dyads: Model and experimentally probe how control shifts between human and AI over time; identify failure points (e.g., over-reliance, learned helplessness, miscalibrated trust) and design guardrails and override mechanisms.

- Dynamic trajectories in cognition spaces: Track systems’ positions over developmental, training, and evolutionary time; identify bifurcations, path dependence, and reversible/irreversible transitions induced by interventions or environmental changes.

- Predictive models of space occupation: Build mechanistic models linking energetic budgets, material constraints, and ecological payoffs to expected regions of occupation; derive testable predictions about why certain clusters exist and voids persist.

- Consciousness and representation: Specify testable criteria for representational capacity or consciousness that can be mapped into the spaces; design experiments to separate graded versus categorical shifts in these capacities.

- Shared data infrastructure: Establish repositories with curated exemplars, axis values, uncertainty estimates, and experimental protocols to enable replication, meta-analysis, and comparative studies across labs and disciplines.

- Safety-by-design for hybrid collectives: Identify and mitigate emergent pathologies (runaway feedback, manipulation, social hijacking) in biohybrid swarms and human–AI ecosystems; develop detection, containment, and recovery strategies.

- Energy and resource accounting: Quantify energy budgets and metabolic/compute costs across substrates; relate efficiency constraints to attainable regions of the morphospaces and to the feasibility of sustained hybrid cognition.

- Legal, ethical, and governance gaps: Clarify consent, welfare, and liability in robot–animal integrations and human–AI dyads; define agency, identity, and accountability for composite systems; propose governance mechanisms aligned with empirical risk profiles.

- Cross-scale integration: Develop methodologies to connect measurements from cellular/organoid scales to swarm/ecosystem and human–AI scales; formalize how local bioelectric/chemical dynamics propagate to system-level cognition.

- Experimental testbeds to explore voids: Design standardized microfluidic platforms, embodied AI simulators, and mixed-reality environments that can systematically populate currently empty regions of the morphospaces.
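The agency-measurement item in the list above (viability function V, policy π, sensitivity of J to π, and the effect of reset operations) can be illustrated with a toy model. This summary does not give the paper's definitions, so everything in the sketch is an assumption made for illustration: viability is taken to be a normalized energy level, J is taken to be the mean viability over a fixed horizon, the policy is a single `effort` parameter, and the derivative ∂J/∂π is replaced by a coarse stand-in, the spread of J across the policy range. The comparison of interest is how that spread shrinks when an external operator resets the system.

```python
def mean_viability(effort, horizon=100, external_reset=False):
    """Average viability over one rollout of a toy energy-budget agent.

    effort: hypothetical policy parameter in [0, 1], the share of each step
    spent recharging. Viability is the energy level itself, i.e. the distance
    to the terminal boundary at 0 (an assumption of this sketch).
    """
    energy, total = 0.5, 0.0
    for _ in range(horizon):
        energy += 0.125 * effort - 0.0625    # recharge minus a fixed drain
        if external_reset and energy < 0.25:
            energy = 1.0                      # an operator restores the system
        energy = min(energy, 1.0)
        if energy <= 0.0:
            break                             # terminal boundary; later steps score 0
        total += energy
    return total / horizon

def policy_spread(**kwargs):
    """Coarse stand-in for the sensitivity of J to the policy: max J minus min J."""
    scores = [mean_viability(e / 10, **kwargs) for e in range(11)]
    return max(scores) - min(scores)

self_maintained = policy_spread(external_reset=False)
operator_maintained = policy_spread(external_reset=True)
print(self_maintained, operator_maintained)
```

In this toy setting the self-maintained agent's outcome depends far more on its own policy than the operator-maintained one, which is one way to read the claim that reset operations reduce agency. A range-based spread is used instead of a finite-difference derivative because the terminal boundary and the resets make J discontinuous in the policy parameter.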

Practical Applications

Immediate Applications

Below are actionable use cases that can be deployed with current methods, guided by the paper’s cognition-space framework and concrete exemplars (basal aneural systems, neural and collective systems, and human–AI dyads).

- Cognition-space–driven R&D portfolio planning

  - Sector: Software, robotics, biotech, R&D strategy

  - Use: Map existing products and prototypes into the proposed cognition spaces to identify crowded clusters and “voids” (unoccupied regions) as opportunity areas; perform gap analysis and roadmapping across product lines (e.g., from tool-like human–AI interfaces to deeper, higher-bandwidth hybrids).

  - Tools/workflows: Visualization dashboards of morphospace occupation; design-of-experiments for exploring adjacent regions; cross-disciplinary design reviews oriented around agency, interaction, and computational complexity axes.

  - Assumptions/dependencies: Requires internal taxonomy alignment; access to cross-functional data on systems’ interaction structures and autonomy; acceptance that the spaces are comparative, not metric.

- Boundary-condition programming for organoid-on-chip platforms

  - Sector: Healthcare, pharma, biotech

  - Use: Employ microfluidic boundary conditions (flow, geometry, gradients) to steer organoid development into more structured, functional states for disease modeling and drug screening.

  - Tools/workflows: Microfluidic perfusion chips; perfusion protocols; imaging and single-cell analytics; iterative design of boundary conditions to induce desired morphology/function.

  - Assumptions/dependencies: Robust culture protocols and quality control; ethics compliance for organoid research; reproducible microfluidics manufacturing; translational validity for disease models.

- Spatially distributed multicellular logic for biosensing (MCDC)

  - Sector: Environmental monitoring, industrial bioprocessing, diagnostics

  - Use: Deploy spatially segregated engineered cell consortia implementing Inverted Logic Formulations to detect and compute on multi-analyte inputs (e.g., pollutant combinations, industrial metabolites) with reduced wiring complexity.

  - Tools/workflows: Modular strain libraries; microfabrication or patterned substrates for spatial segregation; standardized input/output reporter modules.

  - Assumptions/dependencies: Biosafety and containment; stability of engineered strains; regulatory approvals for environmental deployment.

- Educational and prototyping kits using Physarum-on-graphs and morphological computation

  - Sector: Education, outreach, rapid prototyping

  - Use: Demonstrate path finding and optimization through constrained growth on human-defined graphs to teach hybrid cognition and analog optimization concepts.

  - Tools/workflows: Pre-patterned substrates, nutrient-node layouts, time-lapse imaging; simple Lagrangian-based teaching modules for constrained optimization.

  - Assumptions/dependencies: Classroom-compatible biosafety; logistics for live culture care.

- Soft robotic reservoir computing (morphological computation) for low-power control

  - Sector: Robotics, embedded systems

  - Use: Exploit body dynamics as reservoirs to simplify control (e.g., compliant grippers adapting to variable objects) without heavy learning pipelines.

  - Tools/workflows: Materials selection for rich internal dynamics; simple linear readouts on embedded microcontrollers; task-specific calibration.

  - Assumptions/dependencies: Task robustness to environmental variability; manufacturability of compliant materials; acceptance of performance trade-offs vs. full-state control.

- Agency-based evaluation for autonomous systems and swarms

  - Sector: Robotics, autonomy testing, safety and certification

  - Use: Apply a viability-based agency metric to quantify how much a system’s action policy affects its continued functioning; create acceptance criteria for autonomy levels.

  - Tools/workflows: Define viability functions for platform (power, damage thresholds); measure policy sensitivity; scenario-based testing for J(π) sensitivity.

  - Assumptions/dependencies: Carefully chosen viability proxies; standardized test environments; alignment with regulatory bodies’ metrics.

- Human–AI coupling design and risk assessment

  - Sector: Software (LLM copilots, decision support), regulated industries (health, finance, legal)

  - Use: Use the human–AI morphospace (AI cognitive complexity, human feedback control, depth of exchange) to tailor governance, guardrails, and UX (e.g., oversight intensity, audit trails) to coupling depth.

  - Tools/workflows: “Interaction contract” specifications; tiered supervision patterns by region in the space; coupling heatmaps for roles/tasks.

  - Assumptions/dependencies: Available telemetry on interactions; organizational willingness to calibrate control vs. efficiency; compliance and privacy frameworks.

- Mixed robot–animal experiments for mechanistic ethology

  - Sector: Academia (biology, behavior), agri-tech

  - Use: Insert socially integrated robots into animal groups to causally test collective decision mechanisms (e.g., leadership, consensus) and assess interventions for herd guidance or welfare.

  - Tools/workflows: Robotic platforms that replicate species cues (motion, chemical, tactile); controlled group trials; model-experiment loops.

  - Assumptions/dependencies: Species-specific cue fidelity; ethics/IACUC approvals; field-to-lab transferability.

- Plantoid-inspired soil and root-zone exploration

  - Sector: Environmental monitoring, precision agriculture

  - Use: Deploy distributed, growth-like robotic probes for non-destructive sensing (moisture, nutrients) in soils where mobility is constrained.

  - Tools/workflows: Modular root-like actuators; local rule controllers; power-efficient sensing packages.

  - Assumptions/dependencies: Durability in heterogeneous soils; maintenance and retrieval plans; cost-benefit vs. conventional probes.

- Neurons-on-chip for closed-loop embodied neuroscience

  - Sector: Academia (neuroscience, neuroengineering), medical device R&D

  - Use: Couple in vitro neural cultures to simple robots in sensorimotor loops to study learning, plasticity, and embodied computation.

  - Tools/workflows: MEA (multi-electrode array) culture systems; closed-loop control software; task design for motor feedback.

  - Assumptions/dependencies: Culture stability and reproducibility; limited behavioral scope; ethical considerations for advanced neural substrates.
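The Physarum-on-graphs item above describes path finding through constrained growth. The summary mentions a Lagrangian formulation but does not reproduce it, so the sketch below swaps in a different, simpler technique: a flux-reinforcement rule commonly used in the Physarum modelling literature, in which a tube thickens in proportion to the flow it carries and otherwise decays, dD/dt = |Q| - D. The graph is reduced to two parallel tubes between a food source and a sink, and the lengths, time step, and starting conductivities are illustrative assumptions rather than values from the paper.

```python
def physarum_two_paths(len_short=1.0, len_long=2.0, total_flux=1.0,
                       dt=0.1, steps=2000):
    """Euler-integrate dD/dt = |Q| - D for two parallel tubes from source to sink."""
    lengths = [len_short, len_long]
    cond = [0.5, 0.5]                     # both tubes start equally thick
    for _ in range(steps):
        # Both tubes see the same pressure drop, so flow splits in proportion to D / L.
        ease = [d / length for d, length in zip(cond, lengths)]
        flux = [total_flux * e / sum(ease) for e in ease]
        # Tubes carrying more flow thicken; all tubes decay at the same rate.
        cond = [d + dt * (abs(q) - d) for d, q in zip(cond, flux)]
    return cond

d_short, d_long = physarum_two_paths()
print(d_short, d_long)
```

In this reduced setting the shorter tube ends up carrying essentially all of the flow while the longer one withers, which is the qualitative behaviour a classroom kit would show on a patterned substrate. A general graph would also need a linear solve for the node pressures at every step, which is omitted here.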

Long-Term Applications

The following use cases require further research, scaling, standardization, or ethical and regulatory development before broad deployment.

- Biohybrid “living devices” for environmental remediation (e.g., xenobot-based micro-cleanup)

  - Sector: Environmental services, disaster response

  - Use: Engineered living constructs that self-organize to collect microplastics or localize contaminants in constrained environments under boundary-condition scaffolds.

  - Tools/products: AI-assisted morphology discovery; microfabricated deployment scaffolds; biosafety kill-switches.

  - Assumptions/dependencies: Reliable containment/recall; ecological risk assessment; stability in non-lab environments; public acceptance.

- Programmable hybrid collectives for wildlife and ecosystem management

  - Sector: Conservation, agriculture

  - Use: Small numbers of socially integrated robots steer animal group decisions (e.g., migration corridors, avoiding hazards) or mitigate pest swarms via behaviorally-informed interventions.

  - Tools/products: Species-specific social robots; adaptive control policies; real-time monitoring.

  - Assumptions/dependencies: Long-term animal habituation; ethics and regulatory oversight; ecosystem-level impact evaluation.

- In situ therapeutic consortia with distributed biological computation

  - Sector: Healthcare, synthetic biology

  - Use: Spatially organized microbial consortia implementing multicellular logic to sense multi-omic cues and make local therapeutic decisions in the gut, skin, or implanted scaffolds.

  - Tools/products: Clinical-grade strain libraries; spatial scaffolds; ILF-based circuit design; fail-safes.

  - Assumptions/dependencies: Microbiome-host safety; precision spatial control in vivo; rigorous regulatory pathways (GMP, trials).

- Energy-efficient bio-computation for edge AI

  - Sector: Computing, IoT, neuromorphic hardware

  - Use: Harness living or biochemical reservoirs and neuromorphic-inspired architectures for ultra-low-power adaptive control and signal processing at the edge.

  - Tools/products: Hybrid bio-electronic interfaces; stable biochemical reservoirs; standardized readout circuits.

  - Assumptions/dependencies: Long-term stability and calibration; manufacturing and maintenance; ethical and biosafety concerns.

- Adaptive organoids guided by boundary conditions for personalized medicine

  - Sector: Healthcare, precision oncology

  - Use: Patient-derived organoids matured under programmable boundary conditions to emulate patient-specific tissue states for therapy selection.

  - Tools/products: Automated microfluidic “organoid stewards”; analytics pipelines; therapy response assays.

  - Assumptions/dependencies: Predictive validity to patient outcomes; scalability and cost; consent and data governance.

- Agency-aware autonomy frameworks for certification and governance

  - Sector: Policy, standards bodies, safety engineering

  - Use: Incorporate viability-based agency metrics into certification regimes for drones, autonomous vehicles, and industrial robots to define autonomy tiers and required oversight.

  - Tools/products: Standard test suites; reference viability functions by class; continuous monitoring instrumentation.

  - Assumptions/dependencies: Consensus on agency proxies; legal alignment; interoperability across vendors.

- Humanbot ecosystems for co-creative and high-stakes decision-making

  - Sector: Knowledge work, design, healthcare, law

  - Use: Deep, persistent human–AI couplings with high-bandwidth exchange and reciprocal adaptation (e.g., surgical copilots, legal analysis partners), with explicit morphospace-based operating envelopes.

  - Tools/products: Adaptive UX that adjusts control/exchange; apprenticeship-like co-learning protocols; traceability and shared situational models.

  - Assumptions/dependencies: Robust, trustworthy AI; human cognitive load management; liability frameworks; training and accreditation.

- CAD for hybrid living systems (morphospace exploration engines)

  - Sector: Biodesign, robotics, materials

  - Use: AI-driven platforms that search cognition spaces for feasible biohybrids (e.g., xenobot morphologies or plantoid architectures) under physical and ethical constraints.

  - Tools/products: Generative design with constraint solvers; simulation-in-the-loop wet lab; digital twins for growth and behavior.

  - Assumptions/dependencies: Accurate simulators; fast design–build–test cycles; regulatory-ready documentation.

- Ethical and legal frameworks for hybrid cognition

  - Sector: Policy, law, ethics committees

  - Use: Governance for systems with blended agency (e.g., organoids with neural activity, animal–robot societies, deep human–AI couplings), including consent, welfare, and accountability.

  - Tools/products: Morphospace-based risk tiers; oversight protocols keyed to coupling depth; incident response templates.

  - Assumptions/dependencies: Interdisciplinary consensus; dynamic updating as capabilities advance; international harmonization.

- Distributed, stigmergy-driven industrial swarms with external scaffolds

  - Sector: Logistics, construction, inspection

  - Use: Swarms using environmental “cognitive scaffolds” (tags, beacons, dynamic maps) to achieve robust coordination in complex sites (e.g., warehouses, refineries).

  - Tools/products: Stigmergic middleware; scaffold deployment kits; fallback teleoperation frameworks.

  - Assumptions/dependencies: Site-specific infrastructure; cybersecurity; failure-mode safety design.

- Embodied neuroprosthetics informed by neurons-on-chip control studies

  - Sector: Medical devices, rehabilitation

  - Use: Transfer insights from in vitro embodied neural control to develop prosthetics that leverage bioelectrical dynamics for more natural, adaptive control loops.

  - Tools/products: Bioelectrical interface algorithms; adaptive controllers; training protocols coupling patient physiology with device dynamics.

  - Assumptions/dependencies: Translational leap from dish to patient; long-term interface stability; clinical trials.

- Public literacy and interdisciplinary education using cognition spaces

  - Sector: Education, workforce development

  - Use: Curricula and training grounded in morphospace thinking to unify biology, AI, and robotics concepts; hands-on modules with basal cognition exemplars.

  - Tools/products: Courseware, labs-in-a-box, visualization tools; teacher training.

  - Assumptions/dependencies: Curriculum adoption; supply of safe, maintainable kits; assessment models.

These applications hinge on the paper’s core innovation: representing cognition as graded, substrate-independent capacities situated in structured spaces. In practice, the spaces function as design maps, benchmarking tools, and governance guides—surfacing unoccupied regions as innovation targets while clarifying constraints and risks across natural, artificial, and hybrid systems.
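As a rough illustration of how a space might be used as a design map, the sketch below bins a handful of systems into a coarse three-axis grid and lists the empty cells (candidate voids) and the crowded ones (clusters). It is only a sketch under strong assumptions: the system names and coordinates are placeholders invented for this example, not placements from the paper, and binning presumes numeric coordinates, whereas the spaces as described here are comparative rather than metric.

```python
from itertools import product

# Placeholder coordinates in [0, 1]^3, invented for illustration only.
# The three axes stand in for whatever dimensions a given cognition space uses.
systems = {
    "system_a": (0.10, 0.15, 0.20),
    "system_b": (0.20, 0.10, 0.25),
    "system_c": (0.15, 0.30, 0.10),
    "system_d": (0.80, 0.85, 0.90),
    "system_e": (0.90, 0.75, 0.80),
    "system_f": (0.50, 0.10, 0.90),
}

def cell_of(point, bins):
    """Map a point in the unit cube to the index of its grid cell."""
    return tuple(min(int(c * bins), bins - 1) for c in point)

def occupancy(points, bins=3):
    """Count how many systems fall into each cell of a bins x bins x bins grid."""
    counts = {cell: 0 for cell in product(range(bins), repeat=3)}
    for p in points:
        counts[cell_of(p, bins)] += 1
    return counts

counts = occupancy(systems.values())
voids = sorted(cell for cell, n in counts.items() if n == 0)
clusters = sorted(cell for cell, n in counts.items() if n >= 2)

print(f"{len(voids)} empty cells out of {len(counts)}")
print("crowded cells:", clusters)
```

Whether an empty cell marks a true constraint or just a lack of sampling cannot be read off a grid like this; that distinction is one of the open questions listed under Knowledge Gaps.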

Glossary

- Active inference: A theoretical framework in which agents minimize expected surprise by acting to fulfill predictions about their sensory inputs. Example: "active inference~\cite{Friston2013Life, Kirchhoff2018Markov, Pezzulo2018Hierarchical, Rubin2020FutureClimates, Solms2019ConsciousnessFreeEnergy, Solms2024StrangeParticle, Parr2022ActiveInference}"

- Agency: The capacity of a system to regulate its own interactions to maintain its organization and goals over time. Example: "What is the origin of the agency gap?"

- Aneural: Lacking neurons; referring to systems without nervous tissue. Example: "Basal, aneural cognition space: single cells, simple multicellular organisms, xenobots, and organoids"

- Basal cognition: Minimal cognitive capacities (e.g., sensing, integration, adaptive response) arising without nervous systems. Example: "exemplify basal cognition"

- Bioelectrical networks: Networks of cells or tissues that process information via electrical signaling (e.g., voltage gradients). Example: "bioelectrical networks~\cite{Levin2023Bioelectric, martinez2019metabolic}"

- Biohybrid systems: Systems combining living biological components with engineered technological parts into a functional whole. Example: "biohybrid insect--robot systems (i)"

- Bilaterian: Relating to animals with bilateral symmetry and typically more centralized nervous systems. Example: "Bilaterian nervous systems exemplify this transition"

- Cytoskeletal plasticity: The ability of the cytoskeleton to reorganize dynamically, enabling adaptive cell behavior. Example: "cytoskeletal plasticity on the intracellular scale"

- Eusocial: Describing species with advanced social organization (e.g., overlapping generations, cooperative brood care). Example: "Eusocial insect societies combine low individual cognitive complexity"

- Homeostat: A self-regulating device that maintains stability by adapting internal parameters. Example: "Equally important was Ashby's homeostat"

- Humanbot: A tightly coupled human–AI composite agency exhibiting joint cognitive behavior. Example: "what we may call the humanbot, reflecting tightly-coupled but still distinctive agencies."

- Hybrid collective intelligence: Group-level problem solving emerging from mixed societies of biological and artificial agents. Example: "hybrid collective intelligence systems (Hybrid CollInt in Fig.~\ref{fig:agency})"

- Hybrots: Biohybrid robots that integrate living tissues (e.g., neurons) with machines in closed loops. Example: "Hybrots provide a distinct class of hybrid agents exhibiting basal cognition"

- Inverted Logic Formulation (ILF): A circuit design approach reformulating Boolean functions to enable distributed biological computation. Example: "This Inverted Logic Formulation (ILF) enables distributed computation"

- Laminar flow: Smooth, ordered fluid flow regime important in tissue engineering and organoid culture. Example: "exposed to laminar flow exhibit spatially differentiated domains"

- Lagrange multipliers: Auxiliary variables enforcing constraints in optimization problems. Example: "The Lagrange multipliers \lambda_i enforce local conservation of flow at each node."

- Lagrangian: A function combining objective and constraints whose minimization yields optimal system behavior. Example: "The Physarum Lagrangian is defined on the graph"

- Lenia: A continuous cellular automaton framework used to study artificial life and emergent behaviors. Example: "Lenia"

- Liquid brains: Distributed cognitive systems where computation is performed by transient, mobile, or reconfigurable agents. Example: "resembling biological ``liquid brains'' in its distributed control"

- Microbiome engineering: Designing and modifying microbial communities to achieve desired functional behaviors. Example: "microbiome engineering reveal how designed genetic circuits can reshape behavioral attractors"

- Microfluidic chips: Devices with micrometer-scale channels enabling precise control of cellular environments. Example: "most notably microfluidic chips providing controlled flows, geometries, and BCs"

- Morphogenetic regimes: Dynamical patterns that govern shape formation and tissue structuring during development. Example: "induces new morphogenetic regimes"

- Morphological computation: Exploiting a system’s physical form and dynamics to perform computation. Example: "exhibits morphological computation on the organismal scale"

- Morphospace: An abstract space mapping possible forms or organizations along selected dimensions. Example: "Our morphospace includes a diverse range of biological organizations"

- Multi-Agent Reinforcement Learning: RL involving multiple interacting learners, often yielding emergent coordination. Example: "Multi-Agent Reinforcement Learning further increases individual learning and social coupling"

- Neurons on a chip: In vitro neuronal cultures interfaced with microelectronic substrates for controlled experimentation. Example: "neurons on a chip \cite{eckmann2007physics}"

- Neuromorphic computers: Hardware systems emulating neural architectures and dynamics for efficient computation. Example: "neuromorphic computers (k)"

- Organoids: Self-organized multicellular mini-organs that recapitulate key features of tissues in vitro. Example: "organoids, that is, self-organized multicellular structures that recapitulate key features of organs."

- Perfusion: Continuous delivery of fluid (e.g., nutrients) through tissues or organoids to sustain and pattern growth. Example: "under perfusion develop cortical-like layered structures"

- Physarum polycephalum: A slime mold used as a model for distributed problem-solving and morphological computation. Example: "The slime mold Physarum polycephalum provides a canonical example of hybrid cognition"

- Plantoids: Robots inspired by plant growth and sensing, emphasizing distributed control and morphology. Example: "plantoids \cite{manca2014plantoid}, robotic systems inspired by plant organization"

- Reservoir computing: Computing paradigm leveraging rich internal dynamics as a high-dimensional memory for simple readouts. Example: "Reservoir computing (RC) provides a unifying framework for understanding basal cognition"

- Sociotechnical systems: Systems in which human social structures and technical components co-evolve and interact. Example: "distributed sociotechnical systems \cite{Hutchins1995, Hollan2000}."

- Stack Theory: A constraint-based framework modeling how agents shape viable environmental states and policies. Example: "Stack Theory \cite{bennett2025thesis}"

- Stigmergic signals: Indirect coordination cues left in the environment that guide collective behavior. Example: "stigmergic signals (as ants and termites do)"

- Symmetry breaking: Spontaneous emergence of asymmetric outcomes from symmetric conditions in dynamical systems. Example: "including symmetry breaking and consensus formation."

- Variational principle: An optimization principle where the system’s behavior minimizes (or extremizes) a functional. Example: "This can be conceptualized using a variational principle"

- Viability function: A measure of how far a system is from losing its organizational integrity or survival conditions. Example: "We define a viability function that measures how far the system is from a terminal boundary"

- Xenobots: Engineered living constructs made from amphibian cells that exhibit coordinated, goal-directed behaviors. Example: "xenobots, and organoids can exhibit minimal yet rich forms of memory"
