- The paper introduces an adversarial approach that leverages summary statistics to safeguard clinical AI models from temporal drift.

- It employs a convex-combination uncertainty set and minimax optimization to balance current performance with future resilience.

- Empirical evaluations on synthetic and real EHR datasets demonstrate ADAPT’s superior AUC retention and robustness to abrupt shifts.

Adversarial Drift-Aware Predictive Transfer (ADAPT): A Framework for Durable Clinical AI

Introduction

Clinical AI models deployed in high-stakes domains such as healthcare regularly encounter performance decay due to temporal distributional shifts. These shifts arise from factors including changes in population demographics, diagnostic coding updates (e.g., ICD-9 to ICD-10 transitions), and unexpected systemic events such as the COVID-19 pandemic. Conventional remedies, such as frequent retraining, are often infeasible due to privacy regulations, annotation costs, and operational burdens. The paper "Adversarial Drift-Aware Predictive Transfer: Toward Durable Clinical AI" (2601.11860) presents ADAPT, an adversarial framework that seeks durability by proactively safeguarding models against plausible future drift, using minimal retraining and only summary-level historical model statistics.

Methodology: The ADAPT Framework

The ADAPT framework is structured around adversarial, distributionally robust optimization (DRO), specifically designed to handle the problem of concept drift in temporally evolving clinical environments. The approach can be delineated in three steps:

- Extraction of Historical and Current Signals: ADAPT estimates separate models (e.g., GLMs) for each historical period and for the current target period using only summary statistics, explicitly circumventing the use of raw individual-level patient data to address privacy and regulatory constraints.

- Construction of an Uncertainty Set: The uncertainty set comprises convex combinations of historical and current model parameters subject to empirical adequacy on a held-out subset of contemporary data. This reflects a prior that plausible future mechanisms will lie within (or close to) the span of previously observed mechanisms, but allows for flexibility governed by a calibrated tolerance parameter.

- Adversarial Minimax Optimization: The final ADAPT estimator is computed by solving a tractable, closed-form minimax problem. The objective is the minimization of worst-case prediction error across all models in the uncertainty set, exploiting a second-order analytic approximation to achieve computational scalability.
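The three steps above can be illustrated with a minimal NumPy sketch. It is a sketch under stated assumptions, not the paper's estimator: least squares stands in for the GLM fit, prediction error is approximated by the quadratic form (b - beta)' Sigma (b - beta), the empirical-adequacy constraint on held-out data is omitted, and a simple Badoiu-Clarkson iteration replaces the paper's closed-form solution. All function names are hypothetical.

```python
import numpy as np

def fit_from_summaries(gram, xty, ridge=1e-8):
    """Step 1 (stand-in): coefficients from summary statistics X'X and X'y only.

    Least squares is an assumption made for brevity; no individual-level
    rows are needed once the two summaries have been computed.
    """
    p = gram.shape[0]
    return np.linalg.solve(gram + ridge * np.eye(p), xty)

def quadratic_errors(b, candidates, sigma):
    """Quadratic error (b - beta_k)' Sigma (b - beta_k) for each candidate k."""
    diffs = candidates - b
    return np.einsum("kp,pq,kq->k", diffs, sigma, diffs)

def minimax_center(candidates, sigma, n_iter=5000):
    """Steps 2-3 (sketch): minimize the worst-case quadratic error over the
    convex hull of the candidate coefficient vectors.

    A convex function attains its maximum over a convex hull at a vertex,
    so only the candidates themselves need to be checked. The update below
    is the Badoiu-Clarkson iteration: move toward the currently worst
    candidate with a shrinking step size.
    """
    c = candidates[0].astype(float).copy()
    for t in range(1, n_iter + 1):
        worst = candidates[np.argmax(quadratic_errors(c, candidates, sigma))]
        c += (worst - c) / (t + 1)
    return c

# Toy data: three periods whose true coefficients drift by a constant shift.
rng = np.random.default_rng(0)
p = 3
per_period = []
for shift in (0.0, 0.5, 1.0):
    X = rng.normal(size=(500, p))
    beta_true = np.array([1.0, -0.5, 0.2]) + shift
    y = X @ beta_true + rng.normal(scale=0.1, size=500)
    per_period.append(fit_from_summaries(X.T @ X, X.T @ y))

candidates = np.vstack(per_period)   # historical + current estimates
sigma = np.eye(p)                    # assumed second-moment matrix of features
b_robust = minimax_center(candidates, sigma)
worst_case = quadratic_errors(b_robust, candidates, sigma).max()
```

In this simplified form the result is the center of the smallest Sigma-norm ball enclosing the candidate estimates. Adding an adequacy constraint, as the framework describes, would shrink the set toward combinations that still fit current data, which is what keeps the estimator from becoming overly conservative.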

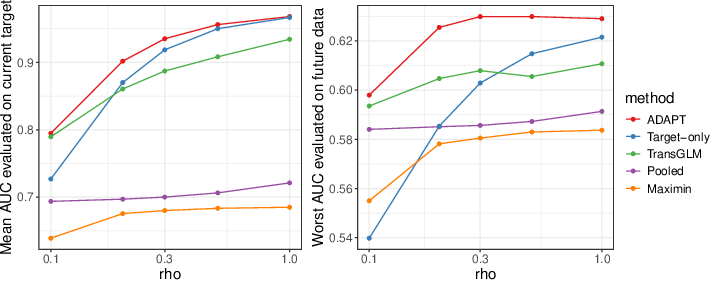

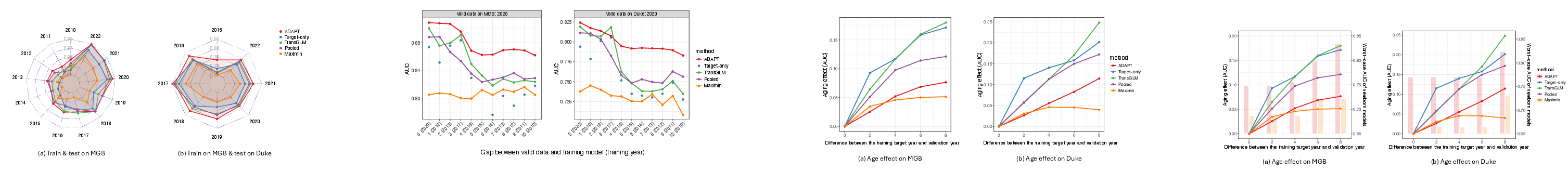


Figure 1: AUC as the current-to-historical data ratio increases. Left: validation and training periods coincide; right: model generalization to subsequent periods under drift.

This algorithmic structure aims to balance current-period accuracy with resilience to temporal degradation, in contrast to prior DRO methods (e.g., group DRO and maximin estimators) that often become overly conservative at the expense of current relevance.

Empirical Validation: Synthetic and Real-World Evaluation

Synthetic Experiments

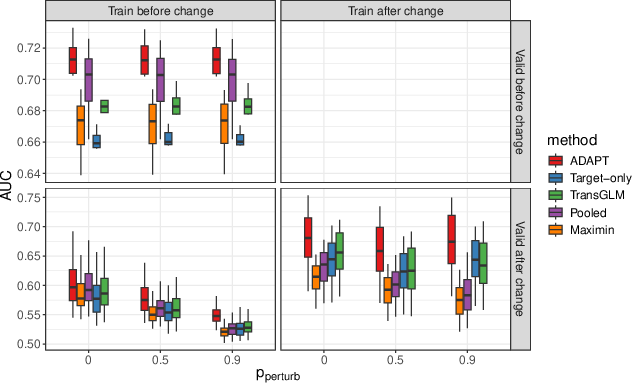

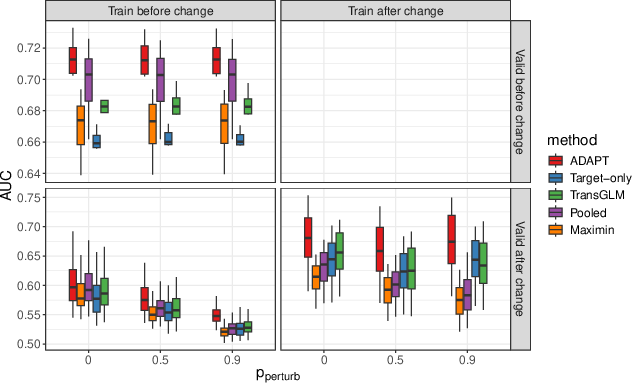

To rigorously characterize robustness to temporal and systemic drifts, the authors simulate data with both gradual and abrupt coefficient evolution, including controlled perturbations corresponding to real-world events like coding transitions.
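A simulation of this kind might be set up as in the following sketch. The logistic outcome model, the random-walk form of the gradual drift, the single-coefficient shock, and every parameter value are assumptions chosen for illustration; the paper's actual simulation design is not reproduced here.

```python
import numpy as np

def simulate_drifting_periods(n_periods=10, n_per_period=400, p=4,
                              gradual_step=0.05, shock_period=6,
                              shock_size=1.0, seed=0):
    """Generate one dataset per period with drifting logistic coefficients.

    Gradual drift: a small random-walk step added to every coefficient.
    Abrupt drift: a one-time jump in the first coefficient at `shock_period`,
    loosely mimicking an event such as a coding transition.
    """
    rng = np.random.default_rng(seed)
    beta = rng.normal(size=p)
    periods = []
    for t in range(n_periods):
        if t > 0:
            beta = beta + gradual_step * rng.normal(size=p)  # gradual evolution
        if t == shock_period:
            beta = beta.copy()
            beta[0] += shock_size                            # abrupt shift
        X = rng.normal(size=(n_per_period, p))
        prob = 1.0 / (1.0 + np.exp(-(X @ beta)))             # logistic link
        y = rng.binomial(1, prob)
        periods.append({"beta": beta.copy(), "X": X, "y": y})
    return periods

periods = simulate_drifting_periods()
# Per-period coefficient change in the first feature; the shock shows up
# as one large step among many small ones.
steps = np.diff([d["beta"][0] for d in periods])
```

Keeping the true coefficients alongside each period's data makes it possible to compare any estimator's output against the mechanism that generated a later period.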

Real-World Application: Suicide Risk Prediction

The main empirical demonstration involves longitudinal suicide risk prediction on multi-institutional EHR data from Mass General Brigham (MGB, 2005–2021) and external validation at Duke University Health Systems.

- Contemporaneous Performance: When trained and tested on the same year, ADAPT attains the highest mean AUC in both MGB (0.815) and Duke transfer (0.846), surpassing pooling, target-only, transGLM, and maximin approaches (Figure 3).

Figure 3: AUC for models trained and evaluated on the same year; ADAPT dominates across institutions.

- Temporal Robustness: When models are trained on prior years and validated on 2020 data, ADAPT exhibits the slowest AUC degradation as the training/validation gap widens (Figure 4). Even with decade-old training, ADAPT maintains high AUC, with only modest dips around documented systemic shifts (e.g., ICD code changes).

Figure 4: AUC on 2020 validation for models trained on prior years; ADAPT uniquely preserves accuracy under long-term drift.

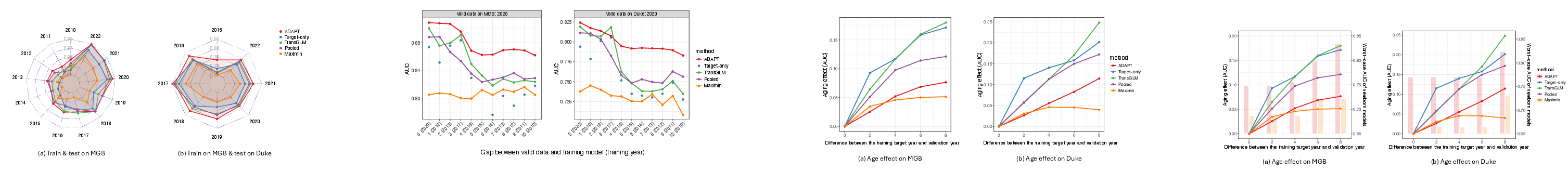

- Aging Effect Quantification: Relative AUC loss as a function of years since last retraining (the "age effect") is consistently minimized by ADAPT for both short (2-year) and longer (8-year) gaps, contrasting with the sharper decay in target-only and transGLM methods (Figure 5).

Figure 5: Relative AUC degradation ("age effect") versus training-validation gap. ADAPT shows minimal loss compared to alternatives; maximin is flat but with lower absolute AUC.

- Model Interpretability: Examination of top feature coefficients indicates that ADAPT coefficients exhibit tight inter-year stability bands, combining predictive power with resistance to overfitting contemporary noise. This stability matters for clinical translation.
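The AUC and "age effect" quantities used in these comparisons can be computed as in the sketch below. The definition of relative loss as (fresh AUC minus aged AUC) divided by fresh AUC is an assumption about how the metric is formed, the function names are hypothetical, and the numbers in the usage lines are made up for illustration rather than taken from the paper.

```python
import numpy as np

def auc(y_true, scores):
    """Rank-based AUC: probability that a random positive outscores a
    random negative, counting ties as one half."""
    y_true = np.asarray(y_true)
    scores = np.asarray(scores, dtype=float)
    pos = scores[y_true == 1]
    neg = scores[y_true == 0]
    diff = pos[:, None] - neg[None, :]   # all positive-negative score gaps
    return float(np.mean((diff > 0) + 0.5 * (diff == 0)))

def relative_auc_loss(auc_fresh, auc_aged):
    """Assumed 'age effect': fraction of the fresh model's AUC lost when the
    model is evaluated some years after its last training period."""
    return (auc_fresh - auc_aged) / auc_fresh

# Illustrative values only (not results from the paper).
example_auc = auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
example_loss = relative_auc_loss(auc_fresh=0.80, auc_aged=0.72)
```

A flat relative-loss curve is only informative alongside the absolute AUC, which is why the maximin baseline can look stable while still performing worse overall.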

Theoretical and Practical Implications

ADAPT operationalizes the principle of adversarial robustness for temporal generalization, circumventing the pitfalls of both naïve updating and overly pessimistic DRO. Its analytic, privacy-preserving structure is computationally scalable and can be deployed in live clinical environments without extensive retraining. The reliance solely on model summaries supports regulatory compliance (e.g., HIPAA constraints) and opens the possibility of federated deployments. The empirical results indicate that durable AI systems are achievable in clinical practice, substantially reducing retraining frequency without sacrificing current accuracy or future reliability.

For theory, the convex-combination uncertainty set and minimax criterion offer a template for robust transfer in other non-i.i.d. environments. The framework could in principle be extended to non-linear architectures, network embeddings, or semi-supervised settings with unlabeled target data.

Future Directions

Immediate avenues for extension involve integration of ADAPT with deep neural networks by generalizing the parameter uncertainty set to higher-order or manifold-valued representations and leveraging large unannotated cohorts for semi-supervised robustness. Further, systematic study of settings with severe or unprecedented shocks—beyond the historical span—will require compositional robustness mechanisms or incorporation of explicit out-of-support detection modules.

Conclusion

ADAPT offers a principled, adversarially robust approach for sustaining clinical AI utility in the face of endemic temporal drift. By leveraging summary statistics and analytical optimization, it aligns regulatory, computational, and performance imperatives, and demonstrates a significant reduction in model aging and performance decay across diverse clinical environments (2601.11860). These properties recommend ADAPT as a strong candidate for reliable, real-world deployment of clinical AI systems requiring high trust and long-term stability.