Thinking Before Constraining: A Unified Decoding Framework for Large Language Models

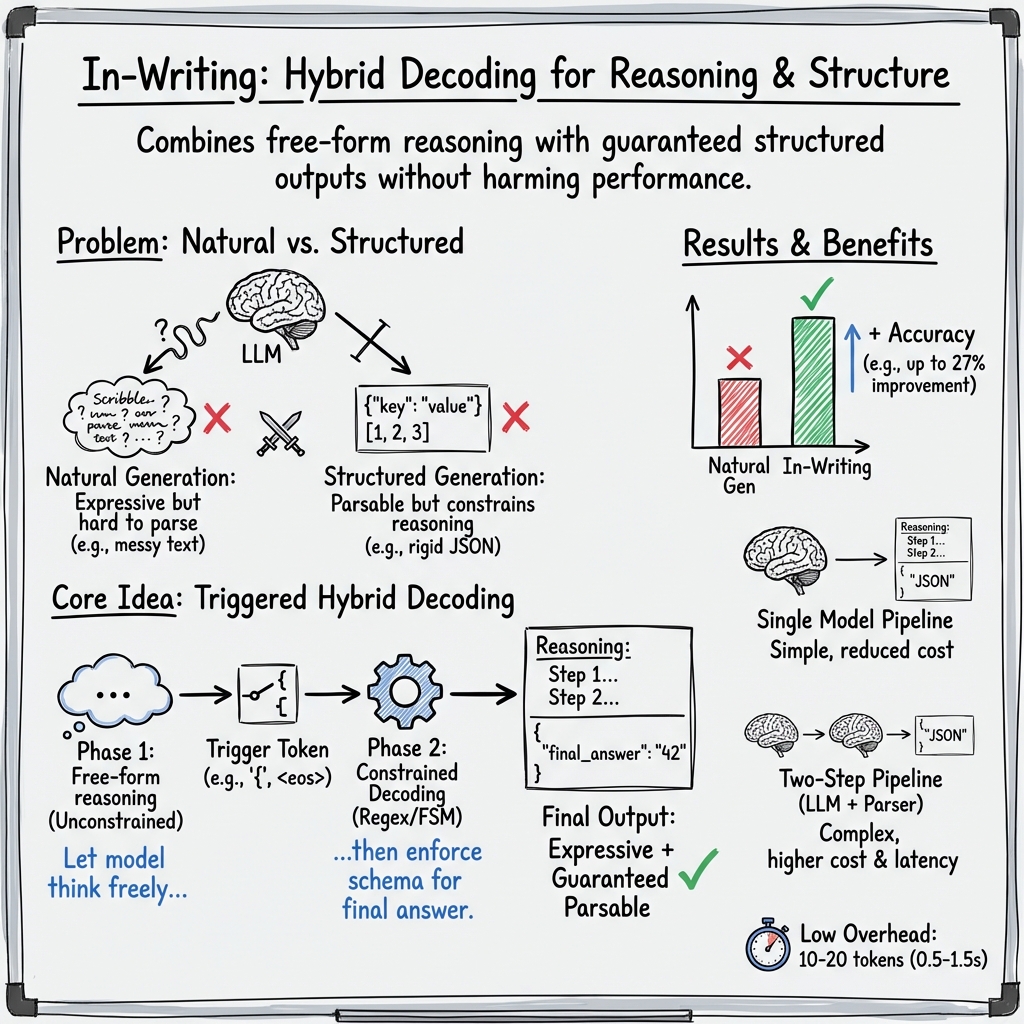

Abstract: Natural generation allows LLMs (LMs) to produce free-form responses with rich reasoning, but the lack of guaranteed structure makes outputs difficult to parse or verify. Structured generation, or constrained decoding, addresses this drawback by producing content in standardized formats such as JSON, ensuring consistency and guaranteed-parsable outputs, but it can inadvertently restrict the model's reasoning capabilities. In this work, we propose a simple approach that combines the advantages of both natural and structured generation. By allowing LLMs to reason freely until specific trigger tokens are generated, and then switching to structured generation, our method preserves the expressive power of natural language reasoning while ensuring the reliability of structured outputs. We further evaluate our approach on several datasets, covering both classification and reasoning tasks, to demonstrate its effectiveness, achieving a substantial gain of up to 27% in accuracy compared to natural generation, while requiring only a small overhead of 10-20 extra tokens.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

A simple explanation of “Thinking Before Constraining: A Unified Decoding Framework for LLMs”

Overview: What is this paper about?

LLMs are great at explaining their thinking in natural sentences, but their answers can be messy and hard for computers to read. On the flip side, if we force them to write in a strict format (like a filled-out form or JSON), the answers are easy for apps to use—but the model’s thinking can get worse.

This paper proposes a simple middle ground called In-Writing: let the model “think out loud” freely first, then switch to a strict, computer-friendly format only at the end. It’s like doing your math on scratch paper and then neatly filling in the final answer on the answer sheet.

Objectives: What were the researchers trying to find out?

- Can we combine free thinking and strict formatting so we get the best of both worlds?

- Does this approach improve accuracy on tasks like math problems, logic puzzles, and multiple-choice questions?

- Can smaller models (which are cheaper and faster) also benefit from this method?

Methods: How does the approach work?

The method is called In-Writing. Here’s the idea in everyday terms:

- Free thinking phase: The model writes its reasoning normally, without any rules. This is the “scratch paper.”

- Trigger moment: When the model writes a special token (like a “{”, “final answer:”, or end-of-answer marker), that’s the signal to switch into strict mode.

- Strict output phase: Now the model must produce the final answer in a fixed format, such as JSON. For example:

{"final_answer": "A"}.

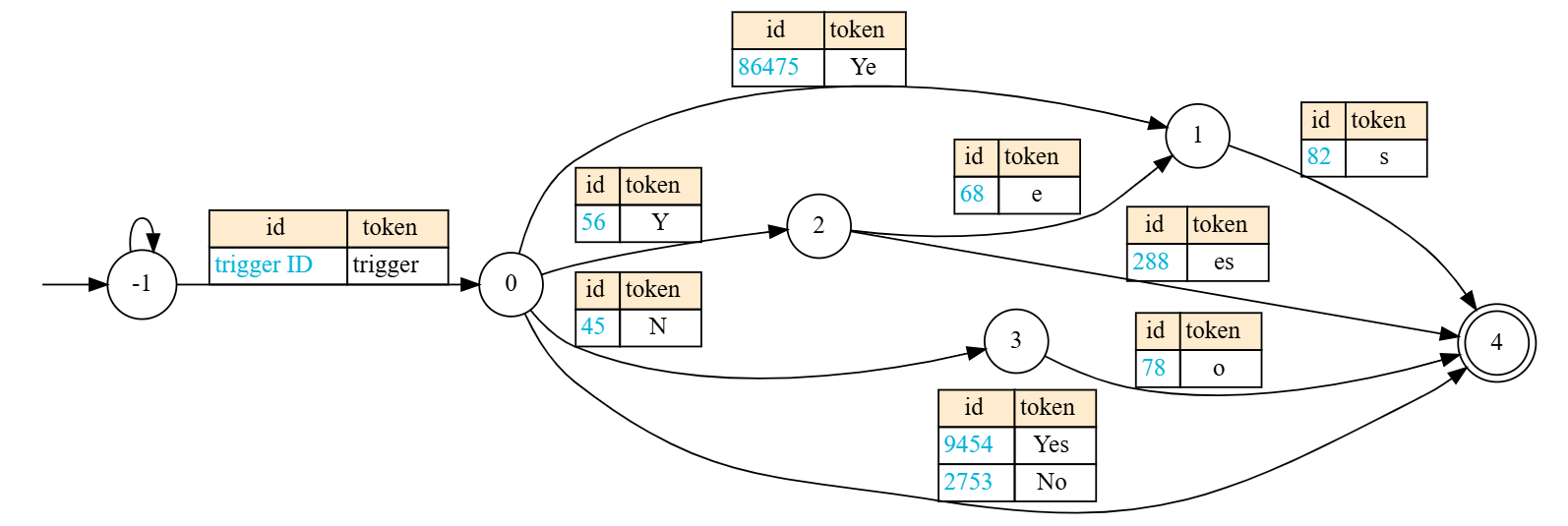

To enforce the strict phase, the paper uses:

- Regular expressions (regex): Patterns that describe what the final answer should look like (e.g., only “Yes” or “No”, or only the letters A–E).

- Finite-State Machines (FSMs): Think of this like a flowchart that only allows certain next characters or tokens. During the strict phase, the model is only allowed to pick tokens that fit the pattern, so the output is guaranteed to be valid and easy to parse.

Analogy: It’s like a hallway with doors (states). At each step, only certain doors are open (allowed tokens), so you can’t wander into the wrong room (invalid format).

They tested this approach on:

- Reasoning tasks: grade-school math (GSM8K), last-letter puzzles, and object shuffling logic.

- Classification tasks: medical diagnosis multiple-choice, finance category labeling, sports plausibility, and stereotype classification.

They compared:

- Natural generation (free-form writing only).

- A two-step pipeline (model writes freely, then a separate model parses it).

- Their In-Writing approach (one model, think-then-structure). They also tried variations with better prompts and tested small models to see if the method still helps.

Main findings: What did they discover, and why does it matter?

Key results (in simple terms):

- Better accuracy: In-Writing often beats plain free-form answers and usually beats the two-step pipeline. In some cases, accuracy improved by up to about 27%.

- Tiny cost: It only adds around 10–20 extra tokens to produce the final structured answer (about 0.5–1.5 seconds more in their setup).

- Stronger with good instructions: Using clearer, better-aligned prompts improved results even more.

- Works on small models too: Smaller, cheaper models also benefited in many tasks, though they can be more sensitive to “triggering” too early.

- Real example: In a partner-switching puzzle (like a dance), the model could explain the steps freely, then neatly output the final choice as valid JSON, making it easy for a computer to read and check.

Why this matters:

- Reliability: Apps need answers they can trust and parse, like

{"final_answer": "No"}instead of a messy paragraph. - Reasoning preserved: By delaying the strict rules until the end, the model can still think clearly and explain itself before submitting the final, clean answer.

- Simpler systems: You don’t need a second, bigger model to parse things. One model does both thinking and formatting.

A few caveats (and fixes):

- Early triggers: Sometimes the model accidentally writes the trigger symbol (like “{”) too soon and jumps into strict mode early, hurting its reasoning. Fixes include choosing safer triggers (like only switching at the end-of-answer) or using better prompts.

- Endless rambling: If the free-thinking part goes on too long, you can set a max “scratch paper” length before switching to the strict answer.

Implications: What could this change in the real world?

- More dependable AI tools: Chatbots, data extractors, and assistants can give answers that are both well-reasoned and guaranteed to be in formats apps expect (like JSON).

- Lower costs: No need for a second big model just to clean up the output, which saves time and money.

- Better for devices at the edge: Small models on phones or embedded devices can produce trustworthy, structured answers without heavy infrastructure.

- Easier integration: Developers can plug model outputs directly into other systems (APIs, databases) without worrying about broken formats.

In short, In-Writing is like telling the model: “Think freely first, then fill in the form.” It keeps the brainpower of free text and the reliability of structured outputs—making AI answers both smarter and easier to use.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise, actionable list of gaps and unresolved questions to guide future research:

- Formal scope of constraints: The method is limited to regular languages (regex/FSM). It does not support context-free or context-sensitive grammars, hierarchical JSON with nested structures, or cross-field dependencies. How can In-Writing be extended to richer constraint classes (e.g., CFGs) while preserving flexibility?

- Semantic constraints: Beyond syntax, the framework cannot enforce semantic conditions (e.g., numeric ranges, arithmetic relations, cross-key consistency). Can dynamic, value-dependent constraints be integrated into the guided decoding?

- Trigger token design: There is no principled method for choosing trigger tokens; current choices like

{or<eos>are ad hoc and model/tokenizer-dependent. How can we systematically select or learn robust triggers that minimize premature switching across models and tasks? - Premature triggering: The paper acknowledges early emission of trigger tokens that truncate reasoning, but lacks quantitative analysis or general mitigation strategies. What detection and recovery mechanisms (e.g., adaptive trigger thresholds, trigger confirmation sequences, logit penalties) can reduce premature switch events?

- Adaptive switching policy: The switch occurs on first trigger token without verifying readiness. Can we design a policy that detects the completion of reasoning (e.g., via confidence measures, discourse markers, or self-verification) before enforcing constraints?

- Multi-field schemas: Experiments focus on a single

final_answerfield. How does In-Writing perform with multi-field outputs, nested JSON, optional fields, and cross-field constraints? What is the parse success rate and error profile in such settings? - Structural validity metrics: Accuracy is reported, but structural validity (e.g., well-formed JSON rate, schema adherence, parse success rate) is not systematically quantified. Can we benchmark structural correctness separately from task accuracy?

- Robustness to adversarial or noisy inputs: The approach may be vulnerable to inputs that induce early triggers (e.g., user-injected

{). How robust is In-Writing to prompt injection, adversarial formatting, or hostile inputs, and what defenses are effective? - Tokenization dependence: Trigger detection and FSM masks depend on tokenizer behavior; cross-model inconsistencies are not analyzed. How sensitive is performance to tokenization differences, and can normalization strategies mitigate this?

- Decoding hyperparameters: Temperature, top-p, and other sampling settings likely affect trigger timing and parsing reliability, but are not studied. What are the effects of decoding parameters on premature switching, answer accuracy, and structural validity?

- Theoretical guarantees: There is no formal analysis proving that deferred constraints preserve reasoning quality or affect the distribution of generated tokens. Can we provide theoretical guarantees or bounds on reasoning preservation and constrained decoding impacts?

- Error taxonomy: The paper provides anecdotal failures but lacks a systematic breakdown (e.g., parse failure vs. wrong mapping vs. incorrect reasoning). Can we construct an error taxonomy and quantify failure modes to guide targeted improvements?

- Cross-lingual generalization: Experiments are English-centric. How does In-Writing perform on multilingual tasks, non-Latin scripts, and languages with complex tokenization or formatting conventions?

- Task diversity: Evaluation excludes code generation, SQL, tool/function calling with complex schemas, and real-world extraction pipelines. Does In-Writing improve performance and reliability on these practical, structure-heavy tasks?

- Industrial-scale constraints: FSM complexity and mask construction costs for large schemas are not analyzed. What are the scalability limits (time/memory) when masking against large or nested schemas, and how can they be optimized?

- Streaming and multi-turn settings: The method is demonstrated in single-turn generation. How does In-Writing operate in streaming outputs, multi-turn dialogues, or agent settings where constraints must be enforced intermittently?

- Integration with verification: The approach acts as a syntactic corrector but does not incorporate verifiers (e.g., math or logic checks). Can combined syntactic-semantic verification pipelines be designed to catch reasoning and formatting errors jointly?

- Small-model reliability: Small LMs show loops and early triggers; mitigations (e.g., max token budget) are heuristic. What training or instruction-tuning strategies help small models avoid premature triggering while preserving reasoning?

- EOS-only triggering trade-offs: Using

<eos>as the sole trigger can waste tokens or miss final answers embedded mid-output. Can we devise adaptive or task-specific policies to balance accuracy and efficiency? - Efficiency profiling: Token/time overhead is reported for three reasoning tasks; classification tasks, multi-field schemas, and larger grammars are not profiled. What is the comprehensive efficiency/latency profile across tasks, models, and schema complexities?

- Fair baselines: Comparisons to other hybrid frameworks (e.g., CRANE) and grammar-based libraries (Guidance, Outlines variants) are limited. Can we run controlled, reproducible benchmarks against strong, open baselines with matched prompts?

- Prompt quality and sensitivity: The paper reuses prompts criticized elsewhere and shows sensitivity in some tasks. What prompt design principles reliably prevent early triggers and improve parsing, and how can we automate prompt optimization?

- Reproducibility details: Decoding settings, seeds, and full code links are incomplete or deferred. Can the authors provide a complete, reproducible protocol (prompts, settings, versions) to validate findings across hardware and libraries?

- Safety and privacy: Reasoning traces remain unconstrained and may leak sensitive data; this is not assessed. How can we bound or sanitize free-form reasoning while ensuring structured, safe outputs?

- Automatic schema induction: The method assumes a known output schema. Can schemas or regexes be induced automatically from task descriptions or examples, and how reliable are learned schemas during constrained decoding?

- Dynamic constraints during reasoning: Constraints are applied only after triggering; dynamic masking earlier (without harming reasoning) is unexplored. Can lightweight, soft constraints guide reasoning phases to reduce off-task loops without restricting fluency?

- Calibration and confidence: The impact on model calibration, uncertainty estimation, and confidence tagging for structured outputs is not studied. Does In-Writing improve or degrade calibration, and can confidence be encoded in the schema?

- Edge deployment: While small-model feasibility is explored, energy use, memory footprint, quantization effects, and throughput on typical edge hardware are not reported. What are the practical deployment trade-offs and optimizations for edge devices?

Glossary

- 4-bit quantized: A model compression approach where weights are stored using 4 bits to reduce memory and speed up inference, often with minimal accuracy loss. "4-bit quantized"

- accept states: In finite automata, the subset of states in which input strings are accepted. "the set of accept states."

- allow_preamble: A feature in a decoding library that lets models generate unconstrained text before enforcing a schema. "allow_preamble feature."

- Application Programming Interfaces (APIs): Interfaces that allow software components to communicate; LLMs can call them to use external tools. "Application Programming Interfaces (APIs)"

- autoregressive sampling: A generation process where each token is sampled conditioned on all previously generated tokens. "an autoregressive sampling procedure"

- constrained decoding: Decoding that enforces structural or syntactic constraints (e.g., via grammars or schemas) on model outputs. "constrained decoding"

- context-free grammar: A formal grammar class capable of generating nested structures; sometimes used to constrain LLM outputs. "context-free grammar cases."

- decoder logits: The raw, pre-softmax scores over the vocabulary produced by a model’s decoder at each step. "masking the decoder logits"

- Diffusion Probabilistic Models: Generative models that iteratively denoise data from noise; referenced here via the inpainting analogy. "Diffusion Probabilistic Models"

- downstream tasks: Tasks that consume model outputs (e.g., extraction results) for further processing or evaluation. "downstream tasks"

- edge devices: Resource-constrained hardware (e.g., mobile or IoT) where smaller/faster models are deployed. "edge devices"

- end delimiter: A marker that signals the end of a constrained segment during hybrid generation. "end delimiter (>>)"

- exact match accuracy: An evaluation metric that requires the predicted output to match the gold answer exactly. "exact match accuracy"

- Finite-State Machine (FSM): A computational model with a finite set of states and transitions, often used to enforce regular constraints during decoding. "Finite-State Machine (FSM)"

- FSM states: The individual states of a finite-state machine that the decoding process can track and transition between. "FSM states"

- function calling: Mechanism enabling LLMs to invoke predefined functions/tools with structured arguments. "function calling"

- few-shot setting: A prompting setup where only a few examples are provided to guide the model’s behavior. "few-shot setting"

- guided decoding: Decoding that follows externally imposed constraints or schemas to ensure valid outputs. "guided decoding"

- Guidance: A structured generation toolkit that constrains LLM outputs to specified formats or grammars. "Guidance"

- hybrid constrained decoding: A strategy that mixes free-form generation with periods of constrained, schema-enforced decoding. "hybrid constrained decoding"

- inpainting: In generative modeling, filling in missing parts using context; used here as an analogy for constraining only parts of text. "inpainting technique"

- instruction format: A structured prompt organization (e.g., system/user/assistant roles) to guide LLM behavior. "instruction format"

- In-Writing: The proposed framework that lets models reason freely, then switches to constrained decoding upon triggers. "In-Writing"

- JSON schemas: Formal specifications of JSON structure used to enforce valid outputs during constrained decoding. "JSON schemas"

- Litelines: An open-source library (based on Outlines) supporting constrained decoding with features like allow_preamble. "Litelines"

- NL-to-Format: A two-step approach where the model outputs natural text first and a separate parser converts it to a structured format. "NL-to-Format"

- Outlines: An open-source library for grammar- or schema-guided generation with LLMs. "Outlines"

- Perfect Parser: An assumed highly reliable parser model used to convert free-form outputs into structured answers. "Perfect Parser"

- Pydantic: A Python library for defining and validating data models, here used to generate JSON schemas. "Pydantic"

- regular expression (regex): A formal pattern specification used to define allowable strings; can be compiled into FSMs for decoding. "regular expression (or regex)"

- regular languages: Languages recognizable by finite automata; equivalent in power to regular expressions. "regular languages"

- schema-based information extraction: Extracting data according to a predefined schema to ensure structure and consistency. "schema-based information extraction"

- start delimiter: A marker that signals entry into a constrained segment during hybrid generation. "start delimiter (<<)"

- structured generation: Producing outputs that adhere to specified formats or schemas (e.g., JSON), ensuring parsability. "Structured generation"

- Tokenization: The process of splitting text into tokens according to a model’s vocabulary and rules. "Tokenization details may vary across LLMs"

- tokenizer: The component that performs tokenization, mapping text to tokens and back. "tokenizer"

- transition function: In an FSM, the function that maps a state and an input symbol to the next state. "the transition function"

- trigger tokens: Specific tokens that, when generated, cause the decoder to switch from free-form to constrained mode. "trigger tokens"

- tool calling: Model-initiated invocation of external tools or APIs to perform actions or retrieve information. "Tool calling"

- XGrammar: A grammar-based approach/tool for constraining LLM outputs to predefined structures. "XGrammar"

- zero-shot prompting: Prompting without providing examples, relying only on instructions or task descriptions. "Zero-shot prompting results"

Practical Applications

Overview

The paper introduces In-Writing, a hybrid decoding framework for LLMs that lets models reason freely in natural language until a trigger token is emitted, then switches to constrained decoding (via regex/FSM/JSON schema) to guarantee syntactically valid, easily parsable outputs. Experiments across reasoning and classification tasks show up to 27% accuracy gains over natural generation with only 10–20 extra tokens, and feasibility on small/edge models using open-source libraries (e.g., Outlines/Litelines).

Below are actionable, real-world applications derived from these findings, methods, and innovations.

Immediate Applications

The following uses can be deployed now with current LLMs and open-source tooling (Outlines/Litelines) and standard JSON/regex schemas.

- Reliable function/API calling and tool orchestration

- Sector: Software, AI agents, DevOps

- Description: Use free-form reasoning to determine the next action, then switch to schema-validated JSON for function arguments and tool calls (e.g., search, database lookup, calculator).

- Tools/Workflow: LangChain/LlamaIndex agents wrapped with In-Writing; JSON schemas via Pydantic; regex/FSM masks via Outlines/Litelines allow_preamble.

- Assumptions/Dependencies: Target outputs expressible by regular languages; careful trigger selection (e.g., { or EOS) to avoid premature constraint.

- Production-grade structured outputs in RAG and chatbots

- Sector: Enterprise software, customer support, CRM

- Description: Guarantee template-adherent replies (e.g., summarization fields, action items, SLA flags) after unconstrained reasoning.

- Tools/Workflow: “Think-then-format” prompt templates; FSM-enforced JSON with final_answer and additional fields; A/B testing with EOS vs brace triggers.

- Assumptions/Dependencies: Output schema fixed; prompt hygiene to prevent early triggers.

- Compliance-ready information extraction pipelines

- Sector: Finance, Legal, Governance/Risk/Compliance (GRC)

- Description: Extract clauses, obligations, risk indicators, or regulatory tags into validated schemas with minimal post-processing.

- Tools/Workflow: Document parsing → free-form rationale → constrained JSON extraction (e.g., SEC/KYC/AML forms).

- Assumptions/Dependencies: Schema coverage of target fields; outputs fit regex/JSON; evaluation for recall/precision; handling long-tail entity formats.

- Healthcare triage and documentation structuring

- Sector: Healthcare/MedTech

- Description: Convert clinical free-text to structured fields (symptoms, duration, likely diagnosis options, ICD codes) while preserving chain-of-thought for auditing (internally).

- Tools/Workflow: On-device/small models for parsing on edge; JSON schemas validated by Pydantic; HL7/FHIR-aligned field mapping.

- Assumptions/Dependencies: Human-in-the-loop review; regulatory compliance; medical safety guardrails; outputs restricted to allowed code sets.

- Financial text classification and standardized reporting

- Sector: Finance

- Description: Assign categories/risk labels to narratives and emit formatted outputs (e.g., reason codes, confidence scores) guaranteed to parse downstream.

- Tools/Workflow: Batch/stream inference with In-Writing; schema-locked enumerations; analytics ingestion (BI/warehouse).

- Assumptions/Dependencies: Category sets known; stability of schemas across teams and systems.

- Programmatic grading and exam workflows

- Sector: Education/EdTech

- Description: Tutoring agents reason freely, then emit exact-answer formats (MCQ letters, numeric results) for auto-grading with high accuracy and low latency overhead.

- Tools/Workflow: GSM8K-like math tutors; final_answer regex (A–E, integers); ICS/LMS integration.

- Assumptions/Dependencies: Answer spaces modeled by regex; safeguards to prevent early finalization during worked solutions.

- Configuration, policy, and template synthesis

- Sector: IT/Cloud/SRE, Public sector

- Description: Generate configurations/policies with guaranteed syntactic validity (e.g., JSON/YAML snippets, Terraform blocks, access policies).

- Tools/Workflow: Constrained decoding inside code blocks; validation pipelines; partial autocomplete with “think-then-constrain.”

- Assumptions/Dependencies: Grammar approximable by regex or regular subsets; fallback validators for complex schemas.

- Robotic/IoT command issuance with safe schemas

- Sector: Robotics, IoT/Smart Home

- Description: Plan in natural language, then issue control commands constrained to a small, verified DSL or JSON schema for device safety.

- Tools/Workflow: FSM-limited command spaces; “SafeAction” schemas; runtime enforcement on the device/controller.

- Assumptions/Dependencies: Command language is regular or approximated; strict trigger control; safety interlocks.

- Contact center summarization and case filing

- Sector: Customer service, BPO

- Description: Free-form summaries mapped to structured case fields (issue type, sentiment, next steps), ensuring ingestion into CRMs without fragile post-processing.

- Tools/Workflow: Schematized CRM payloads; In-Writing switch at “Final JSON:” marker; monitoring of token overhead (10–20 tokens typical).

- Assumptions/Dependencies: Field definitions fixed; careful prompt design for multi-turn chats.

- ETL for semi-structured/unstructured data

- Sector: Data engineering/BI

- Description: Use LLMs as schema-locking mappers: free-form interpretation followed by guaranteed-formatted outputs for pipelines and dashboards.

- Tools/Workflow: Batch processing; “allow_preamble” decoding; robust schema evolution/versioning.

- Assumptions/Dependencies: Outputs are regular-language-compliant; robust error handling for missing fields.

- On-device parsing and privacy-preserving assistants

- Sector: Mobile/Edge, Privacy/Trust

- Description: Small LMs parse to structured outputs locally (e.g., expense extraction, to-do capture) with low overhead and no cloud round-trip.

- Tools/Workflow: Qwen3-1.7B/SmolLM2 variants; quantization; In-Writing to ensure parseability.

- Assumptions/Dependencies: Device resources sufficient; outputs simple enough for small models; throttle loops with max token budgets.

- Test harnesses and evaluation frameworks

- Sector: ML/QA engineering, Academia

- Description: Benchmarks that require exact-match or schema adherence (e.g., final_answer fields) to reduce evaluation brittleness.

- Tools/Workflow: FSM gates for answer spans; auto-metric computation; prompt variants for robustness studies.

- Assumptions/Dependencies: Benchmarks’ answers codified as finite sets/regex.

- “Answer-only” low-latency inference when reasoning text is not needed

- Sector: Ads/Ranking, Ops automations

- Description: Force immediate schema-constrained answer emission to reduce tokens/time when intermediate reasoning is irrelevant.

- Tools/Workflow: Early triggers; minimal “preamble” budget; aggressive mask enforcement.

- Assumptions/Dependencies: Business accepts no visible chain-of-thought; answer space is constrained.

Long-Term Applications

These uses are promising but may require further research, scaling, formalization, or integration beyond regex-level schemas.

- Generalized grammar-constrained agents beyond regular languages

- Sector: Software agents, Programming tools

- Description: Extend In-Writing to context-free/attribute grammars (e.g., full AST constraints) for code synthesis, SQL, and complex DSLs.

- Tools/Workflow: Parser generators + decoding masks; hybrid CFG+regex guards; incremental validation.

- Assumptions/Dependencies: Efficient masking for richer grammars; tokenizer/grammar alignment; performance optimizations.

- Safety-critical decision support with verified outputs

- Sector: Healthcare, Aviation, Industrial control

- Description: Combine free-form reasoning with formally verified, schema-locked outputs and audited rationales for high-stakes settings.

- Tools/Workflow: Guardrails + clinical/operational ontologies; provenance capture; human-in-the-loop sign-off.

- Assumptions/Dependencies: Regulatory approvals; rigorous validation data; robust defenses against premature triggers.

- Regulatory reporting standards for AI-generated structured content

- Sector: Finance, Public policy

- Description: Standardize “machine-verifiable” report schemas so LLM outputs can be filed directly to regulators with minimal human editing.

- Tools/Workflow: Industry schemas (XBRL-like) + FSM enforcement; audit logs of reasoning vs finalization.

- Assumptions/Dependencies: Interoperable standards; stakeholder adoption; certification frameworks.

- Curriculum-aligned tutors with verified final answers and hints

- Sector: Education

- Description: Multi-stage pedagogical dialogues where final answers and rubrics are schema-locked and traceable for fairness and consistency.

- Tools/Workflow: Role-based prompt flows; per-skill schemas; adaptive feedback loops.

- Assumptions/Dependencies: Long-context stability; alignment with assessment policies; bias monitoring.

- Multimodal structured outputs (vision/speech → structured forms)

- Sector: Insurance, Logistics, Field service

- Description: Extract structured claims/inspection reports from images/audio via free-form multimodal reasoning then schema-finalization.

- Tools/Workflow: VLMs + In-Writing; domain ontologies; on-device capture → cloud validation.

- Assumptions/Dependencies: Mature multimodal models; domain-specific schemas; robust OCR/ASR.

- Contract execution and automated workflow binding

- Sector: LegalTech, Enterprise automation

- Description: Natural-language negotiation/reasoning followed by schema-locked “execution payloads” to trigger downstream workflows (payments, access changes).

- Tools/Workflow: Verified DSLs for actions; policy-as-code; irreversible action safeguards.

- Assumptions/Dependencies: Strong identity/auth; rollback mechanisms; liability frameworks.

- Learned trigger management and training-time alignment

- Sector: ML research/engineering

- Description: Train models to defer triggers until reasoning completeness; RL to optimize state transitions and minimize premature constraint.

- Tools/Workflow: Reinforcement learning with penalties for early triggers; curriculum learning; decoding-time heuristics.

- Assumptions/Dependencies: Task-specific reward design; stable training; generalization across domains.

- Streaming and interactive protocols with alternating constraint bands

- Sector: Realtime assistants, Collaboration tools

- Description: Conversational protocols that interleave free-form and constrained spans for co-editing documents, forms, and code.

- Tools/Workflow: Delimiter-driven state machines; partial schema completion; conflict resolution.

- Assumptions/Dependencies: UI/UX standards for mixed-mode content; latency constraints; concurrency handling.

- Formal verification of agent outputs against policies

- Sector: Security, Governance

- Description: Map final outputs to formal policy checks (e.g., data handling, PII redaction) enforced at decoding time.

- Tools/Workflow: Policy-as-automata; composable masks; compliance proofs/logs.

- Assumptions/Dependencies: Expressive yet tractable policy grammars; compositional masking efficiency.

- Edge-native assistants with private, structured life admin

- Sector: Consumer/daily life

- Description: Personal devices performing local extraction and structured planning (budgets, shopping lists, schedules) with guaranteed parseability.

- Tools/Workflow: Small LMs with quantization; local vector stores; In-Writing wrappers for personal schemas.

- Assumptions/Dependencies: Continued advances in small models; energy/latency limits; private storage and syncing.

- Industry benchmarks emphasizing structure adherence and robustness

- Sector: Academia, Standards

- Description: New benchmarks that score both reasoning correctness and structure validity across prompt perturbations.

- Tools/Workflow: Prompt-variant suites; structure robustness metrics; error taxonomies (premature trigger, loop).

- Assumptions/Dependencies: Community adoption; reproducible testbeds; open baselines.

- Compiler-like toolchains for LLM outputs

- Sector: Software engineering

- Description: “Compile” LLM outputs: parse → type-check → optimize → emit verified artifacts (configs, scripts, manifests).

- Tools/Workflow: Typed schemas; intermediate representations; static analyzers integrated with constrained decoding.

- Assumptions/Dependencies: Standardized IRs; integrated dev tooling; performance tuning for large artifacts.

Notes on Feasibility and Dependencies

- Output regularity: Most immediate gains assume outputs can be expressed as regular languages (regex/JSON). Complex grammars may require extended methods or hybrid CFG approaches.

- Trigger tuning: Choice of trigger tokens (e.g., {, “Final answer:”, EOS) impacts reasoning completeness and latency. EOS-only triggers maximize reasoning but may increase tokens/time.

- Prompt design: Instruction formatting and few-shot demonstrations reduce premature triggering and improve mapping to final_answer fields.

- Model size: Small models can act as on-device parsers, but are more prone to early triggers and loops; set max preamble budgets and implement loop guards.

- Libraries and infra: Adoption hinges on mature support (Outlines/Litelines), tokenizer/grammar alignment, and production observability (logs for state transitions, parse failures).

- Governance: In regulated domains, pair In-Writing with human oversight, audit trails, and domain-specific ontologies/codes to ensure safe deployment.

Collections

Sign up for free to add this paper to one or more collections.