- The paper reveals that strict JSON mode degrades LLM reasoning tasks by causing output misordering.

- It compares constrained decoding, FRI, and NL-to-Format methods across various datasets and models such as GPT-3.5-turbo.

- Findings suggest that while structured formats enhance classification accuracy, they undermine nuanced reasoning, necessitating tailored approaches.

The paper "Let Me Speak Freely? A Study on the Impact of Format Restrictions on Performance of LLMs" explores the intricate relationship between format constraints such as JSON, XML, and YAML, and the efficacy of LLMs across reasoning and classification tasks. This examination is pivotal in understanding how structured output requirements impact the inherent performance capabilities of LLMs, particularly in real-world applications necessitating consistent and parseable outputs.

Methodological Exploration of Structured Generation

The study dissects structured generation by employing three primary techniques—Constrained Decoding, Format-Restricting Instructions (FRI), and NL-to-Format transitions. Constrained Decoding, exemplified by JSON-mode, restricts output to predefined token spaces, crucial for industrial applications where JSON compliance is non-negotiable. In contrast, FRI employs schema adherence without explicit decoding constraints, offering a more flexible approach compared to JSON-mode. Lastly, the NL-to-Format method first generates answers in natural language before converting them into target formats, mitigating potential performance degradation by decoupling content generation from formatting.

Experimental Design and Evaluation

The empirical analysis leverages datasets categorized into reasoning tasks (GSM8K, Last Letter Concatenation, Shuffled Objects) and classification tasks (DDXPlus, MultiFin, Sports Understanding, Task 280). The evaluation involves multiple LLMs, including gpt-3.5-turbo, claude-3-haiku, and LLaMA-3-8B, assessing performance through metrics such as accuracy for classification and exact match for reasoning tasks.

The evaluation method integrates task-specific metrics to provide a nuanced assessment of each model's capability. A notable feature is the use of Perfect Text Parser, an LLM-based tool, to minimize format errors during evaluation, offering a more reliable performance metric across different format-adherent outputs.

Main Findings: Performance Variability Under Format Constraints

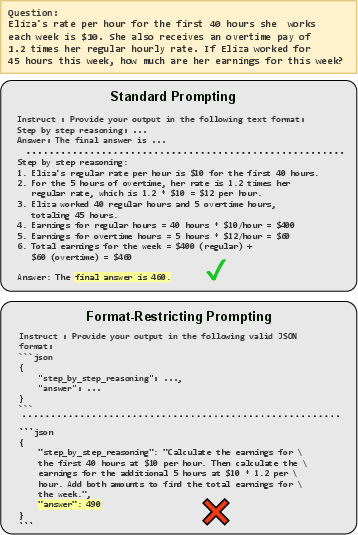

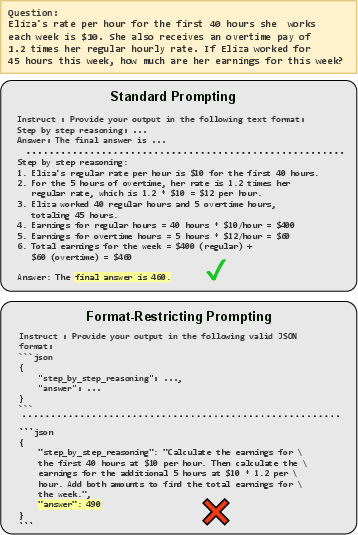

A critical finding is the dichotomy between the impact of format restrictions on reasoning versus classification tasks. In reasoning tasks, stricter format constraints, like JSON-mode, significantly degrade performance (Figure 1). This degradation underscores the pitfalls of forced structured adherence, which often undermines the reasoning process—exemplified by the misordering of reasoning and final answer keys in structured outputs.

Figure 1: GPT-3.5-turbo prompted with GSM8K math questions in standard natural language answered correctly, but failed when format restrictions were applied.

Conversely, classification tasks exhibited a contrasting trend, where JSON-mode either matched or exceeded performance across models due to its structured nature facilitating precise classification (Figure 2). This suggests that constraint-driven formats like JSON can enhance performance in tasks where stringent output specifications reduce ambiguity in answer selection.

Theoretical and Practical Implications

The paper posits that while structured outputs are imperative for seamless integration into computational workflows, their rigidity can be detrimental to the nuanced reasoning capabilities of LLMs. The observed variation in performance across reasoning and classification tasks suggests a task-dependent impact of format restrictions, advocating for a tailored approach when implementing structured generation.

From a theoretical standpoint, these findings urge a reevaluation of how structured generation methods are employed, prompting future investigations into optimizing performance while adhering to necessary format constraints. In practice, this research implies that industry practitioners must judiciously balance strict format requirements with maintaining the inherent strengths of LLMs in reasoning-intensive applications.

Conclusion

This study elucidates the multifaceted impact of format restrictions on LLM performance, highlighting the importance of adaptive methodologies that harmonize structured output demands with LLMs' reasoning prowess. As the deployment of LLMs continues to proliferate across sectors, understanding these dynamics becomes crucial, particularly in devising strategies that preserve the integrity and capability of these models amidst stringent format adherence requirements. Future work will hopefully extend these insights, broadening the applicability and robustness of LLMs across diverse industrial applications.