Higher-Order Knowledge Representations for Agentic Scientific Reasoning

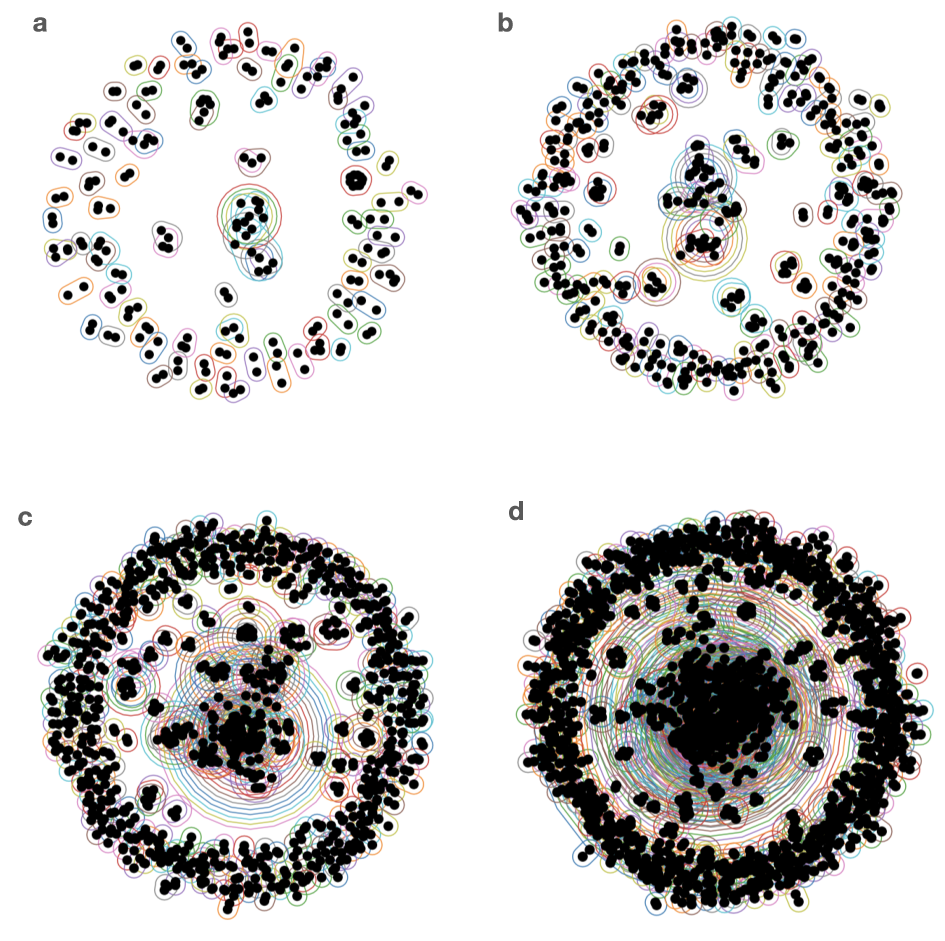

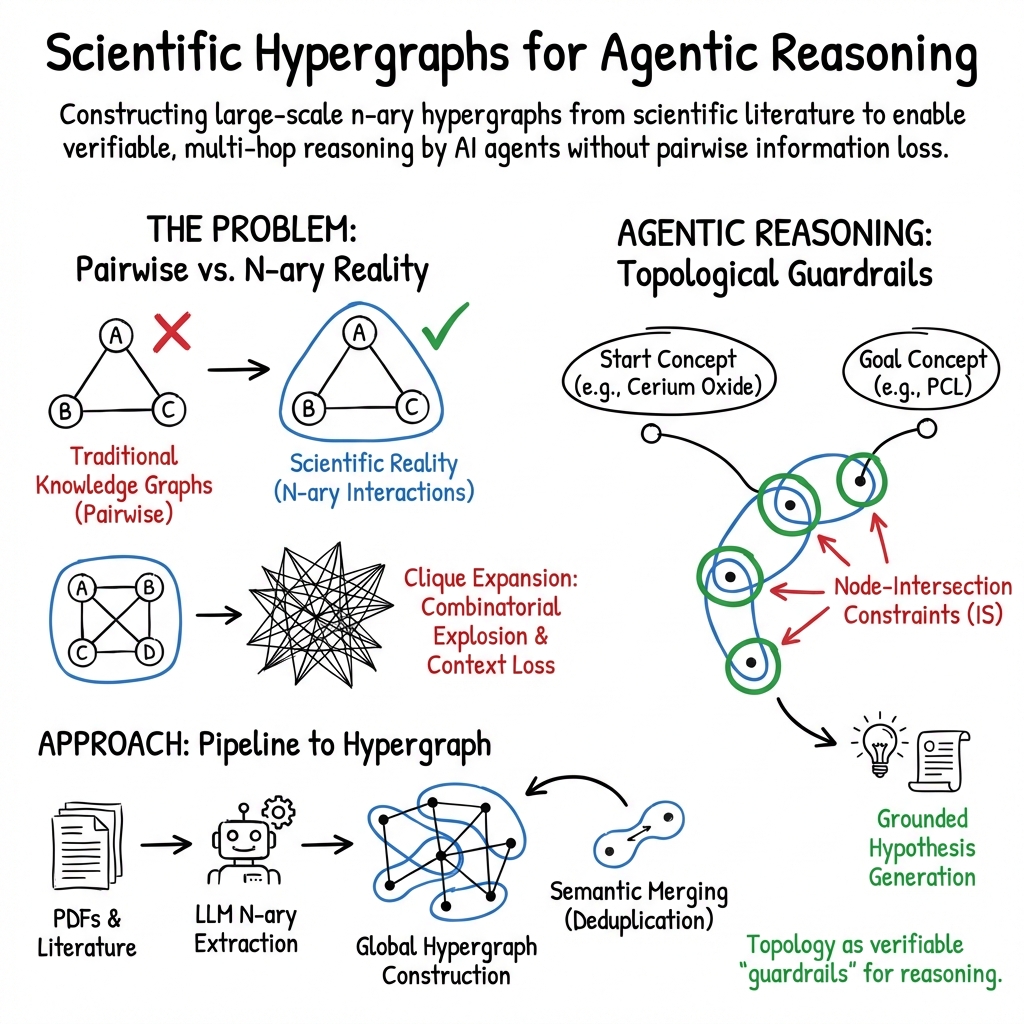

Abstract: Scientific inquiry requires systems-level reasoning that integrates heterogeneous experimental data, cross-domain knowledge, and mechanistic evidence into coherent explanations. While LLMs offer inferential capabilities, they often depend on retrieval-augmented contexts that lack structural depth. Traditional Knowledge Graphs (KGs) attempt to bridge this gap, yet their pairwise constraints fail to capture the irreducible higher-order interactions that govern emergent physical behavior. To address this, we introduce a methodology for constructing hypergraph-based knowledge representations that faithfully encode multi-entity relationships. Applied to a corpus of ~1,100 manuscripts on biocomposite scaffolds, our framework constructs a global hypergraph of 161,172 nodes and 320,201 hyperedges, revealing a scale-free topology (power law exponent ~1.23) organized around highly connected conceptual hubs. This representation prevents the combinatorial explosion typical of pairwise expansions and explicitly preserves the co-occurrence context of scientific formulations. We further demonstrate that equipping agentic systems with hypergraph traversal tools, specifically using node-intersection constraints, enables them to bridge semantically distant concepts. By exploiting these higher-order pathways, the system successfully generates grounded mechanistic hypotheses for novel composite materials, such as linking cerium oxide to PCL scaffolds via chitosan intermediates. This work establishes a "teacherless" agentic reasoning system where hypergraph topology acts as a verifiable guardrail, accelerating scientific discovery by uncovering relationships obscured by traditional graph methods.

Explain it Like I'm 14

Simple Explanation of the Paper

What is this paper about?

This paper shows a new way for AI to “think” like a scientist by organizing knowledge as groups of things that go together, not just pairs. The authors use a structure called a “hypergraph” to help AI connect ideas across many research papers and come up with new, testable scientific ideas, especially in materials science.

What questions are the authors trying to answer?

The paper focuses on a few clear questions:

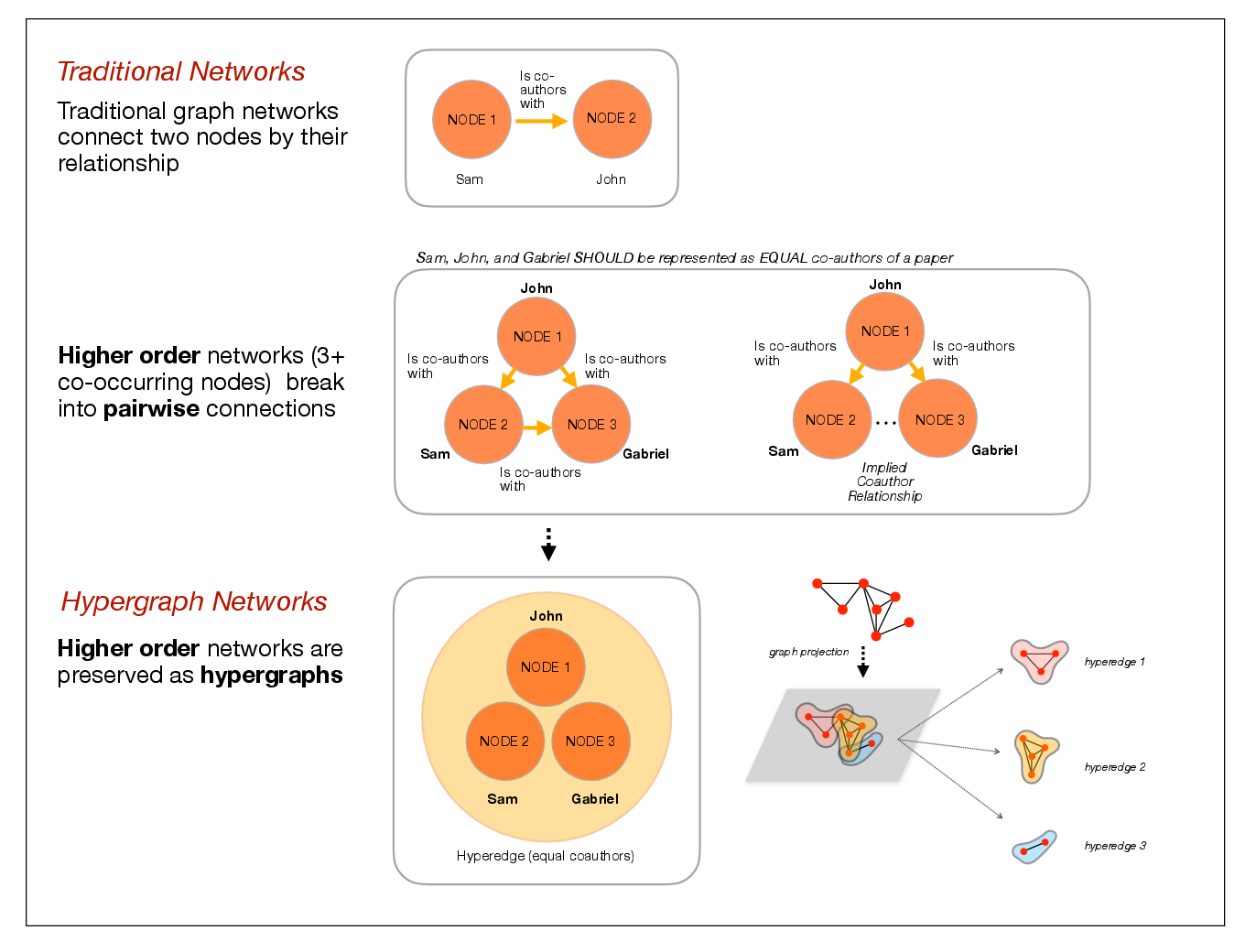

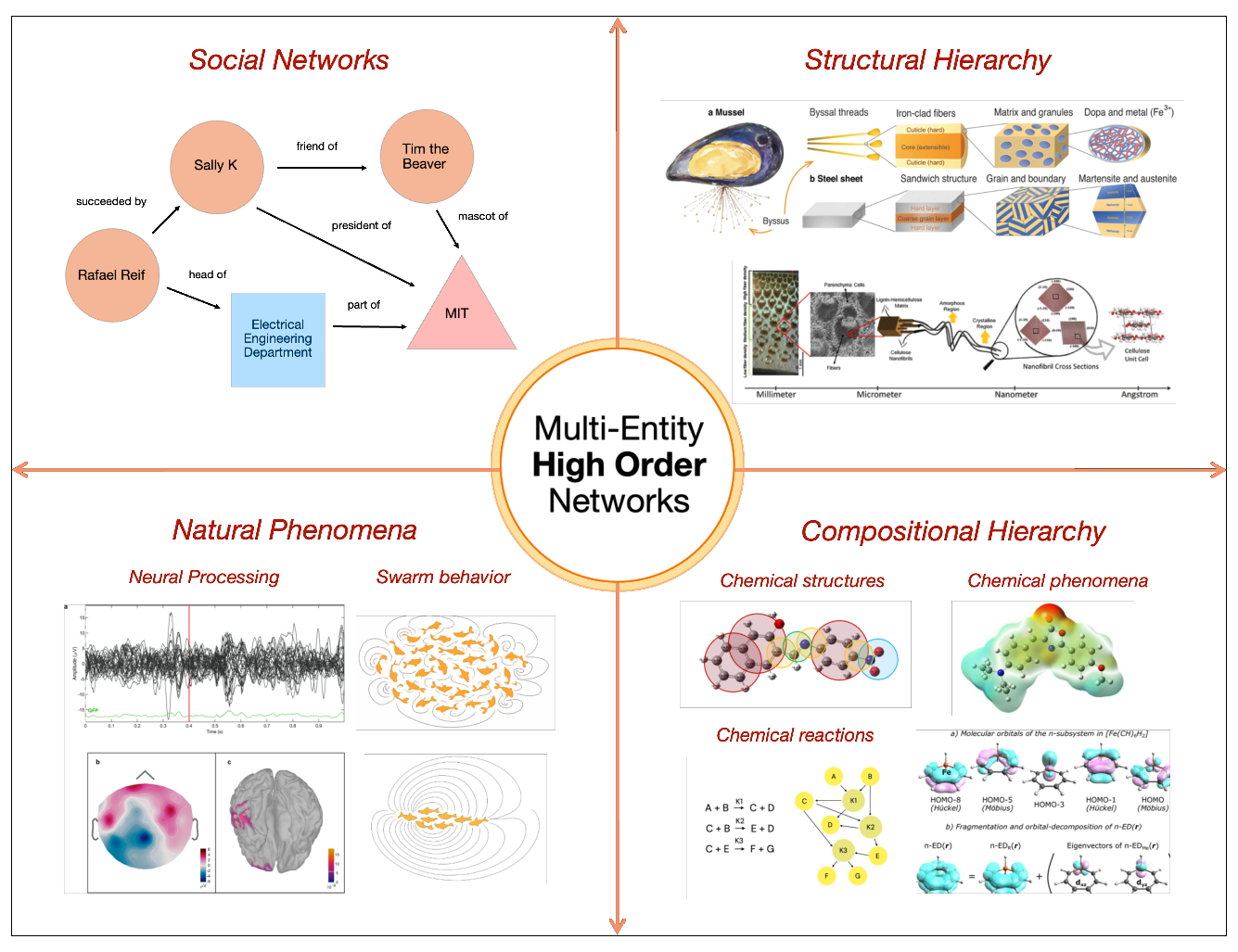

- How can we represent scientific knowledge so it captures real “group” relationships (like several ingredients working together in one experiment), not just one-to-one links?

- Can this representation help AI reason more reliably and avoid making things up?

- Will this help AI generate useful, grounded scientific hypotheses (new ideas that could be tested in the lab)?

How did they do it? (Methods in everyday language)

Think of scientific knowledge like a big map of ideas. Most maps used today connect ideas two at a time (A–B, B–C). That’s called a graph. But science often happens in groups: a formula may involve three chemicals together; a paper may study five factors at once. If you break that into pairs, you lose the full picture.
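
To make the "lose the full picture" point concrete, here is a minimal Python sketch. The item names `A`, `B`, `C` and the helper `pairwise_expansion` are made up for illustration and are not from the paper. It shows that one experiment using three items together and three separate experiments using two items each look identical once everything is broken into pairs.

```python
from itertools import combinations

def pairwise_expansion(groups):
    """Flatten each group into the set of all unordered pairs it contains."""
    pairs = set()
    for group in groups:
        for a, b in combinations(sorted(group), 2):
            pairs.add((a, b))
    return pairs

# One experiment that used three items together.
one_group = [{"A", "B", "C"}]
# Three separate experiments that each used only two of the items.
three_pairs = [{"A", "B"}, {"B", "C"}, {"A", "C"}]

# Both situations collapse to the same set of pairs, so a pair-only map
# cannot tell "all three together" apart from "two at a time".
same = pairwise_expansion(one_group) == pairwise_expansion(three_pairs)

# A group of k items turns into k * (k - 1) / 2 pairs; five factors give 10.
five_factor_pairs = len(pairwise_expansion([{"f1", "f2", "f3", "f4", "f5"}]))
```

Keeping each group as a single record avoids this ambiguity, which is the motivation for the group-based structure described next.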

- Hypergraph: Instead of drawing only one-to-one connections (like handshakes), a hypergraph lets you draw a single “group link” that connects many items at once (like a group hug). One “hyperedge” can tie together several entities that belong together in the same statement or experiment.

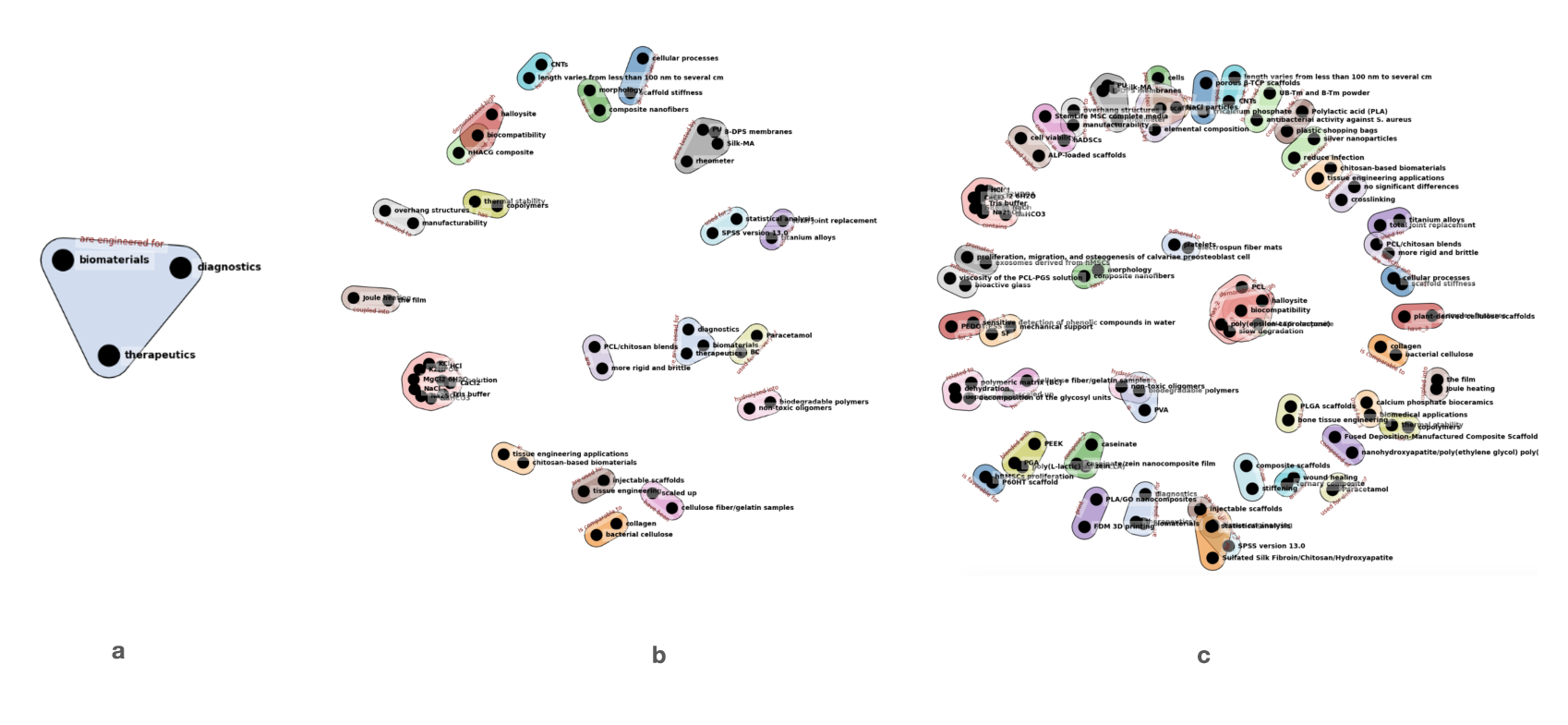

- Building the hypergraph: The authors collected about 1,100 research papers on biocomposite scaffolds (materials used to support tissue growth). An AI read the papers and pulled out multi-entity facts, such as “material X and material Y were used together to make scaffold Z for purpose P.” Each of these facts became one hyperedge that connects all involved items together.

- Two-pass reading strategy: 1) First, the AI captured clear, grammatical facts straight from sentences (who did what with what). 2) Then, it added facts that were only implied by the wording (like turning “fabrication of X” into “fabricate(X)” or “X for Y” into “X is used for Y”), while taking care not to infer more than the text supports.

- Cleaning up names: Different papers use different names for the same thing (like “PLA” vs. “polylactic acid”). The team used a tool that measures how similar words are in meaning (embeddings) to merge synonyms, while keeping the most common term.

- Multi-agent reasoning: Think of a team of AI helpers.

  - One “map-reader” agent can walk the hypergraph (the group-based map) to find paths that connect distant ideas.

  - Other “expert” agents focus on the science details, checking and improving suggestions.

  - Together, they propose and refine new material designs backed by evidence found in the hypergraph.

- A key trick: node-intersection constraints. This is like finding students who are in both the “math club” and the “robotics club” at the same time. In the hypergraph, it means the AI looks for concepts that show up together with multiple targets, helping bridge distant topics in a grounded way.
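
The group links and the "both clubs" lookup described above can be sketched in a few lines of Python. This is only an illustration of the idea as this summary explains it: the hyperedges below are toy data invented for the example (not taken from the paper's corpus), the function names are hypothetical, and the paper's actual traversal tool may define its intersection constraint differently.

```python
from collections import defaultdict

# Toy hyperedges invented for illustration; each one is a "group link".
HYPEREDGES = {
    "e1": frozenset({"cerium oxide", "chitosan", "antioxidant coating"}),
    "e2": frozenset({"chitosan", "PCL", "electrospun scaffold"}),
    "e3": frozenset({"PCL", "hydroxyapatite", "bone regeneration"}),
}

def build_index(hyperedges):
    """Map each node to the ids of the hyperedges that contain it."""
    index = defaultdict(set)
    for edge_id, nodes in hyperedges.items():
        for node in nodes:
            index[node].add(edge_id)
    return index

def neighbors(node, hyperedges, index):
    """All nodes that share at least one hyperedge with `node`."""
    found = set()
    for edge_id in index.get(node, ()):
        found |= hyperedges[edge_id]
    found.discard(node)
    return found

def bridging_nodes(source, target, hyperedges, index):
    """Node-intersection query: concepts that co-occur with both endpoints."""
    return neighbors(source, hyperedges, index) & neighbors(target, hyperedges, index)

index = build_index(HYPEREDGES)
# No toy hyperedge contains both endpoints, but one concept sits in both "clubs".
bridges = bridging_nodes("cerium oxide", "PCL", HYPEREDGES, index)
```

In this toy data, `bridges` contains only `"chitosan"`, mirroring the cerium oxide to PCL example mentioned in the abstract.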

What did they find, and why does it matter?

Here are the main results and why they’re important:

- They built a very large hypergraph from the literature: 161,172 nodes (things like materials, methods, purposes) and 320,201 hyperedges (multi-entity facts from the papers). This kept the original “who-appeared-with-whom” context from each paper, instead of breaking it into many pair links that can distort meaning.

- The hypergraph had a “scale-free” pattern (the abstract reports a power-law exponent of about 1.23): a few big hubs (very common concepts) and many smaller ones. That’s like airline networks where a few airports have flights to many places. This structure helps the AI jump efficiently from known ideas to new ones.

- It avoided the mess of pairwise graphs. If you turn every group into all possible pairs, a group of k items becomes k(k−1)/2 separate links (10 links for a group of 5), and you lose the identity of the original group (like which exact paper or experiment tied them together). The hypergraph kept that context intact.

- It improved reasoning. Using hypergraph paths and overlaps, the AI could discover new, plausible material combinations. For example, it linked cerium oxide to PCL (a polymer) using chitosan as a bridge—suggesting a concrete, testable materials recipe, with explanations grounded in the literature.

- Built-in guardrails. Because every step runs along real group relationships pulled from papers, the AI’s ideas are easier to trace and verify. This reduces “hallucinations” (made-up facts).
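
One way to picture the guardrail idea is a check that every step of a proposed chain of concepts is backed by at least one recorded group. The sketch below is an assumption about how such a check could look, not the authors' implementation: the hyperedges, the `source` labels (`paper-A`, `paper-B`), and the function names are all hypothetical.

```python
# Toy hyperedges with hypothetical source labels, invented for illustration.
HYPEREDGES = [
    {"id": "e1", "nodes": {"cerium oxide", "chitosan"}, "source": "paper-A"},
    {"id": "e2", "nodes": {"chitosan", "PCL"}, "source": "paper-B"},
]

def supporting_edges(a, b, hyperedges):
    """Ids and sources of the hyperedges that contain both a and b."""
    return [
        (edge["id"], edge["source"])
        for edge in hyperedges
        if a in edge["nodes"] and b in edge["nodes"]
    ]

def verify_path(path, hyperedges):
    """Return per-step evidence, or None if any step has no supporting hyperedge."""
    evidence = []
    for a, b in zip(path, path[1:]):
        support = supporting_edges(a, b, hyperedges)
        if not support:
            return None  # this step is not grounded in any recorded group
        evidence.append(((a, b), support))
    return evidence

# A chain that follows recorded groups comes back with its evidence trail.
grounded = verify_path(["cerium oxide", "chitosan", "PCL"], HYPEREDGES)
# A direct jump with no shared group is rejected.
ungrounded = verify_path(["cerium oxide", "PCL"], HYPEREDGES)
```

Here `grounded` lists which hyperedge and source supports each step, while `ungrounded` is `None`, which is the kind of traceability the bullet above refers to.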

Why is this important for science?

This approach helps AI act more like a careful scientist:

- It sees group relationships as they truly appear in experiments and papers.

- It can connect far-apart ideas through shared groups, revealing hidden links.

- It speeds up hypothesis generation—creating new material designs that could be tried in the lab.

- It’s “teacherless” in the sense that the structure of the hypergraph itself guides the AI’s reasoning, without needing constant human retraining or special tuning for each task.

- It’s update-friendly: as new papers come out, you can add them to the hypergraph without retraining the AI model.

Bottom line

By switching from pairwise graphs to hypergraphs, the authors give AI a better map of scientific knowledge—one that respects how experiments and ideas actually come in groups. This helps AI explore more safely and creatively, generating realistic, testable scientific hypotheses faster, with clearer evidence trails.
