BEDS : Bayesian Emergent Dissipative Structures : A Formal Framework for Continuous Inference Under Energy Constraints

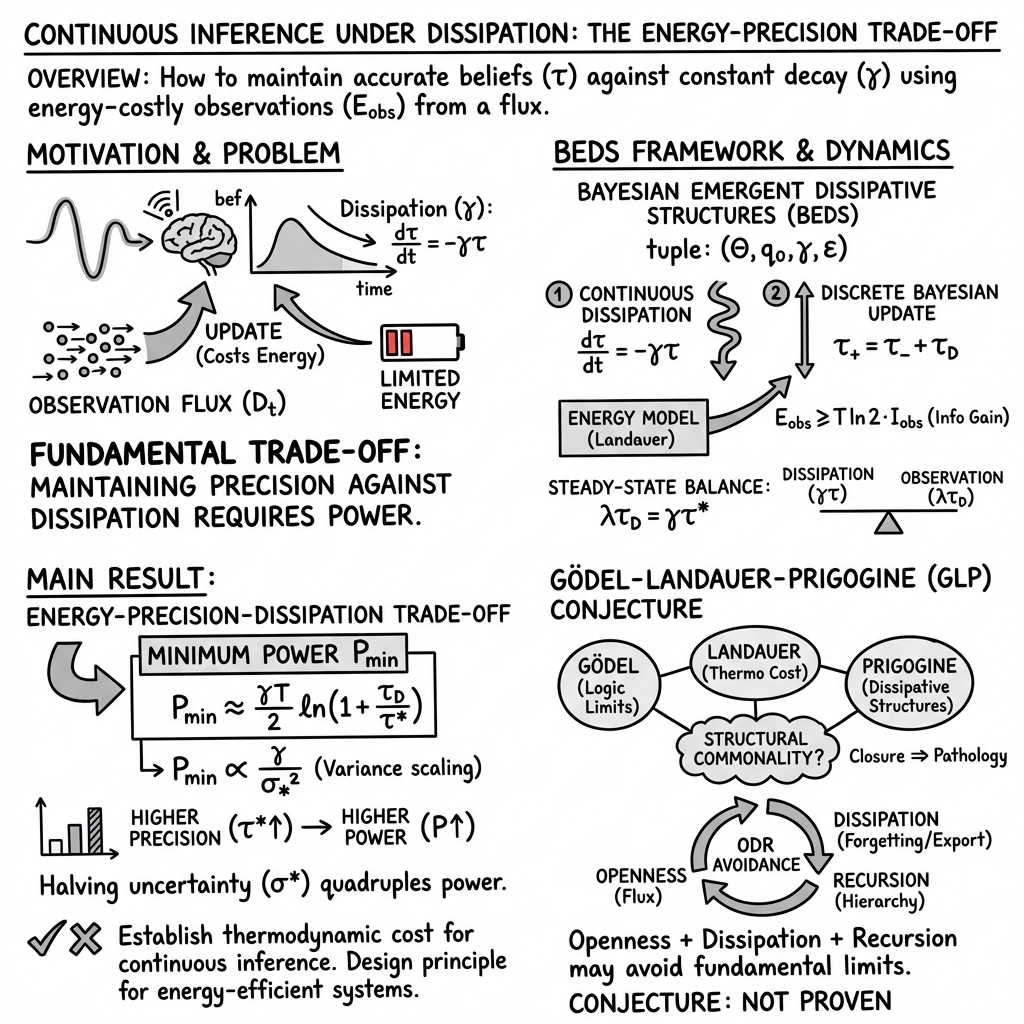

Abstract: We introduce BEDS (Bayesian Emergent Dissipative Structures), a formal framework for analyzing inference systems that must maintain beliefs continuously under energy constraints. Unlike classical computational models that assume perfect memory and focus on one-shot computation, BEDS explicitly incorporates dissipation (information loss over time) as a fundamental constraint. We prove a central result linking energy, precision, and dissipation: maintaining a belief with precision $τ$ against dissipation rate $γ$ requires power $P \geq γk_{\rm B} T / 2$, with scaling $P \propto γ\cdot τ$. This establishes a fundamental thermodynamic cost for continuous inference. We define three classes of problems -- BEDS-attainable, BEDS-maintainable, and BEDS-crystallizable -- and show these are distinct from classical decidability. We propose the Gödel-Landauer-Prigogine conjecture, suggesting that closure pathologies across formal systems, computation, and thermodynamics share a common structure.

Explain it Like I'm 14

Overview

This paper introduces BEDS, which stands for Bayesian Emergent Dissipative Structures. The main idea is simple: in the real world, many systems (like brains, sensors, and robots) don’t just compute an answer once and stop. They have to keep their beliefs and estimates accurate all the time, even while their memory slowly fades or “leaks” and the environment keeps changing. Doing that takes energy. BEDS is a math framework that explains how much energy is needed to keep your beliefs sharp when they would naturally get blurrier over time.

The central result of the paper is a clean rule: if your system is constantly forgetting at rate γ (gamma), then keeping a belief sharp requires a minimum power (energy per second) of about γ·k_B·T/2, where k_B is Boltzmann’s constant and T is the temperature of the surroundings. In short, fighting “forgetting” forever costs a steady amount of energy.

Key Objectives and Questions

The paper asks and answers questions like:

- How do we formally describe systems that keep updating their beliefs continuously, while their memory naturally fades?

- What kinds of problems can such systems solve, and how do those differ from traditional “one-shot” computations?

- What is the fundamental energy cost of maintaining a certain level of belief accuracy over time?

- Is there a deeper pattern linking limits in logic (Gödel), computation (Landauer), and physics (Prigogine)?

Methods and Approach (with everyday analogies)

Think of maintaining a belief like keeping a photo sharp on a foggy window:

- Dissipation (forgetting): Over time, the picture gets blurry. The paper models this as the belief becoming less precise. Precision is written as τ (tau), and it shrinks over time at a rate γ (gamma). Higher γ means faster blurring.

- Observations: To re-sharpen the picture, you look again (take a new measurement). Each new observation adds some sharpness, measured by its precision τ_D.

- Bayesian update: This is a smart way to combine your old belief with the new observation, like blending a blurry photo with a clearer snapshot to get a better estimate.

- Energy cost (Landauer’s principle): Making your belief more precise (reducing uncertainty) costs energy. It’s like wiping fog off the window: you have to spend effort. The paper uses a known physics rule: removing one nat of uncertainty (the natural-log unit of information) requires at least k_B·T of energy, where k_B is Boltzmann’s constant and T is the temperature of the system’s environment.
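The dissipation and Bayesian-update steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper's implementation. It assumes that beliefs are Gaussian, that precision decays exponentially (dτ/dt = −γτ) between observations, and that dissipation widens the belief without moving its mean. The names `dissipate` and `bayes_update` are hypothetical.

```python
import math


def dissipate(tau: float, gamma: float, dt: float) -> float:
    """Blur the belief: precision decays as d(tau)/dt = -gamma * tau for dt seconds."""
    return tau * math.exp(-gamma * dt)


def bayes_update(mu: float, tau: float, x: float, tau_d: float) -> tuple[float, float]:
    """Conjugate Gaussian update with an observation x of precision tau_d.

    Precisions add, and the new mean is the precision-weighted average
    of the old mean and the observation.
    """
    tau_post = tau + tau_d
    mu_post = (tau * mu + tau_d * x) / tau_post
    return mu_post, tau_post


# A belief with mean 0 and precision 1 blurs for 0.5 s at gamma = 0.2,
# then absorbs one observation x = 2.0 of precision 1.0.
tau = dissipate(1.0, gamma=0.2, dt=0.5)
mu, tau = bayes_update(0.0, tau, x=2.0, tau_d=1.0)
print(f"mean={mu:.3f}, precision={tau:.3f}")
```

Under these assumptions each observation adds the same precision τ_D whatever the current state is. That property is what lets the gain and loss be balanced linearly.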

Putting it all together:

- If your belief gets blurrier at rate γ, you must add sharpness from observations fast enough to keep up.

- The system balances the “loss” (blurring) and “gain” (new data) to stay at a desired sharpness level.

- Power (energy per second) = observation rate × energy per observation.

This balance leads to the central energy rule.
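Written as equations, the balance and the resulting floor look like this. This is a sketch of one way to reach the abstract's bound, not the paper's proof. It assumes Gaussian beliefs, exponential precision decay at rate γ, and observations arriving at rate λ that each add precision τ_D. It then applies Landauer's cost of k_B·T per nat of entropy removed.

```latex
\begin{align*}
\frac{d\tau}{dt} &= -\gamma\,\tau + \lambda\,\tau_D
  &&\Rightarrow\quad \tau^* = \frac{\lambda\,\tau_D}{\gamma}
  \quad(\text{i.e. } \lambda\,\tau_D = \gamma\,\tau^*) \\
H &= \tfrac{1}{2}\ln\frac{2\pi e}{\tau}
  &&\Rightarrow\quad \frac{dH}{dt}\Big|_{\text{dissipation}}
  = -\frac{1}{2\tau}\,(-\gamma\,\tau) = \frac{\gamma}{2}\ \text{nats/s} \\
P &\ge k_B T \cdot \frac{\gamma}{2} = \frac{\gamma\,k_B T}{2}
\end{align*}
```

In this continuous limit the floor does not depend on τ*. The P ∝ γ·τ* scaling appears instead when each observation carries a fixed energy cost E_obs, since P = λ·E_obs = γ·τ*·E_obs/τ_D.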

Main Findings and Why They Matter

Here are the main results in simple terms:

- Energy-precision-dissipation trade-off: To maintain a fixed sharpness (precision τ*) against forgetting rate γ, the minimum power needed scales like γ·τ*. In the most efficient regime, there’s a clean bound: P ≥ γ·k_B·T/2.

- Intuition: If you forget faster (larger γ), you must spend more energy to stay accurate.

- If you want much higher precision (very sharp beliefs), that also raises your energy needs.

- Variance scaling: Variance is the “blurriness” of your belief; precision is its inverse (τ = 1/σ²). Because the required power grows in proportion to precision, reducing uncertainty is expensive. Halving the uncertainty (the standard deviation σ) quadruples τ, so it can require roughly four times the power.

- Problem classes:

- BEDS-crystallizable: You can get beliefs sharp enough (below a threshold) in finite time, then stop. Think “finish the job and freeze the result.”

- BEDS-attainable: You can keep getting closer to the target with finite energy over time.

- BEDS-maintainable: You can stay within a certain accuracy forever with bounded power.

- These notions are different from classical “decidable” problems for Turing machines, because BEDS focuses on continuous tracking instead of a single final answer.

- Comparison with classical computation:

- Turing machines assume perfect memory, a one-time input, and a final output. BEDS assumes fading memory, a stream of inputs, and a continuously maintained belief.

- The fundamental limits are different too: Turing machines face undecidability (some problems have no halting solution), while BEDS faces energy limits (precision costs power).

- The GLP conjecture (Gödel–Landauer–Prigogine): The paper suggests a deep connection between three famous limits:

- Gödel: Some truths can’t be proven within a fixed formal system.

- Landauer: Erasing information costs energy.

- Prigogine: Systems that keep order must export entropy (be “open”).

- The conjecture claims these are different faces of the same pattern: closed systems run into paradoxes or costs; open systems avoid them by allowing flow (of heat, information, or levels of description).
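The BEDS-crystallizable class in the list above can be made concrete with a small calculation: how long until the belief is sharp enough to freeze. The sketch is illustrative, not the paper's method. It assumes Gaussian beliefs and treats the flux as a continuous precision inflow λ·τ_D against decay γ·τ. It uses the variance-only threshold ε, and the helper `crystallization_time` is hypothetical.

```python
import math


def crystallization_time(tau0: float, gamma: float, lam: float, tau_d: float, eps: float) -> float:
    """Time until posterior variance 1/tau first drops to eps or below.

    Uses the continuous-flux approximation d(tau)/dt = -gamma*tau + lam*tau_d,
    whose solution is tau(t) = tau_star + (tau0 - tau_star) * exp(-gamma * t).
    Returns math.inf when the steady-state precision never reaches 1/eps.
    """
    tau_target = 1.0 / eps          # variance <= eps  <=>  precision >= 1/eps
    tau_star = lam * tau_d / gamma  # steady state from lam * tau_d = gamma * tau_star
    if tau0 >= tau_target:
        return 0.0                  # already crystallized
    if tau_star <= tau_target:
        return math.inf             # approaches tau_star asymptotically, never crosses
    return math.log((tau_star - tau0) / (tau_star - tau_target)) / gamma


# Start vague (tau0 = 0.1), dissipation 0.5/s, 10 observations/s of precision 0.2 each.
# tau_star = 10 * 0.2 / 0.5 = 4.0, so the threshold eps = 0.5 (tau >= 2) is reachable.
print(crystallization_time(0.1, gamma=0.5, lam=10.0, tau_d=0.2, eps=0.5))
```

The `math.inf` branch illustrates how a problem can fail to be crystallizable under a given flux: the belief stays bounded but never becomes sharp enough.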

Why this matters:

- It gives engineers and scientists a “physics price tag” for staying accurate over time.

- It helps design energy-efficient sensors, robots, and learning systems.

- It suggests a unified way to think about limits across math, computing, and physics.

Implications and Potential Impact

For real-world systems:

- Brains: Your brain spends energy to keep beliefs stable even while synapses change and memories fade. This framework explains part of why brains need steady power to stay accurate.

- Sensor networks and robots: If you want long-term accurate tracking (like monitoring weather or self-driving), plan for a baseline energy cost that scales with how fast the system forgets and how sharp you need the estimates.

- Machine learning: Models that learn continuously and also “forget” outdated information (structured forgetting) could avoid some failures that frozen models display, but they’ll have an energy budget tied to their desired precision.

For theory and foundations:

- It reframes the “cost of knowledge” in physical terms: keeping accurate beliefs isn’t free—it’s like constantly pumping out a leaky boat.

- The GLP conjecture, if developed further, could bridge logic, computing, and thermodynamics, offering a common language for understanding their fundamental limitations.

In short, the paper says: if you want a system to stay smart and accurate all the time in a changing world, you must pay steady energy to fight natural forgetting. It turns continuous inference into a physics-aware activity with clear limits and design rules.

Knowledge Gaps

Unresolved gaps, limitations, and open questions

Below is a single, actionable list of what remains missing, uncertain, or unexplored in the paper. Each item is phrased to guide future research.

- Physical constants and units: replace the ambiguous temperature symbol $T$ with $k_B T$ and ensure consistent units when converting between nats and bits (e.g., clarify when factors like $\ln 2$ are needed), and avoid reusing $T$ for both time and temperature.

- Applicability of Landauer to Bayesian updates: rigorously justify when and how entropy reduction in probabilistic updates corresponds to logically irreversible operations that incur Landauer costs; distinguish sensing, storage, and computation energy components.

- Non-Gaussian beliefs and likelihoods: extend the energy–precision–dissipation trade-off beyond Gaussian assumptions to heavy-tailed, multi-modal, and constrained distributions; specify a precision surrogate (e.g., Fisher information) and rederive bounds.

- Non-exponential dissipation models: analyze memory decay that is power-law, multi-timescale, or state-dependent, and quantify how the bound on $P_{\min}$ changes under these alternatives.

- Time-varying and stochastic observation processes: replace constant $\lambda$ and fixed $\tau_D$ with models for bursty arrivals, variable-quality observations, and adversarial or endogenous flux; derive optimal policies $\lambda(t), \tau_D(t)$ under uncertainty.

- High-precision asymptotics: correct and clarify the limit behavior of $P_{\min}$ as $\tau^* \to \infty$; confirm monotonicity and whether $P_{\min}$ approaches $(k_B T/2)\,\gamma$ or $0$, and reconcile with the linear-regime approximation.

- Multi-dimensional covariance dynamics: generalize to $\Theta \subseteq \mathbb{R}^d$ with anisotropic dissipation and observation precision; provide matrix-valued rate equations and bounds in terms of covariance/precision matrices and information geometry.

- Crystallization criterion robustness: replace variance-only thresholds with criteria that handle multi-modality (e.g., entropy, credible sets, or posterior concentration rates) and specify guarantees on $|E[q_T] - \theta^*|$ under non-Gaussian posteriors.

- Maintainability conditions: characterize necessary and sufficient conditions for BEDS-maintainability (bounded power) in terms of $\gamma$, attainable $\lambda$, and achievable $\tau_D$; include stability analysis and existence of fixed points under realistic constraints.

- Optimal observation design: formulate and solve the full energy minimization over $\lambda$ and a distribution of observation precisions $\tau_D$ (including sensing/processing costs), prove convexity or provide algorithms, and quantify suboptimality gaps.

- Empirical validation: provide reproducible experiments (hardware setup, datasets, protocols) that measure actual energy and precision over time, test $P \propto \gamma \cdot \tau^*$, and quantify deviations from the bound across platforms and conditions.

- Temperature and environment dependence: model ambient temperature dynamics, cooling limits, and their impact on attainable precision and energy costs; evaluate whether temperature control is a dominant lever.

- Complete energy accounting: incorporate energy for sensing, communication, memory refresh/retention, storage leakage, and compute overhead, not only bit erasures; separate these contributions in the bound and in experiments.

- Divergence measure clarity: define $D(q_t \| \pi^*)$ precisely (KL divergence, total variation, Wasserstein, etc.), justify its choice, and state assumptions under which $\lim_{t\to\infty} D(q_t \| \pi^*) = 0$ holds.

- Classical computation comparison correctness: replace the flawed halting-problem example with rigorous separations (e.g., decidable problems requiring unbounded memory for finite-state devices vs. BEDS-maintainable tasks) and provide proofs or citations.

- GLP conjecture formalization: define “logical entropy” formally, construct a mapping between logical/computational irreversibility and thermodynamic entropy, and state testable, falsifiable propositions rather than analogies.

- GLP empirical tests: design toy models and empirical studies to assess whether ODR conditions reduce “closure pathologies” (e.g., hallucinations), quantify “structured forgetting,” and measure energy costs of maintaining consistency.

- Multi-agent energy bounds: specify a network model (communication topology, synchronization, redundancy) and derive $f(N, \text{topology})$ with explicit communication and consensus costs; compare centralized vs. decentralized strategies.

- Moving targets and process noise: rigorously derive tracking bounds for $\theta(t)$ with velocity and acceleration (e.g., connect to Kalman–Bucy filtering), include process noise, and quantify the added power required for dynamics ($v^2$ term) under dissipation.

- Model misspecification robustness: analyze how incorrect likelihoods or priors affect energy requirements and precision guarantees; develop minimax or robust bounds and adaptive strategies.

- Safety and graceful degradation: specify how accuracy degrades when energy budgets are breached, define fail-safe policies, and provide guarantees on maximum error under energy shortfalls.

- Mapping dissipation $\gamma$ to physical memory decay: operationalize $\gamma$ for real devices (DRAM refresh, analog drift, synaptic decay), measure it empirically, and link device-level parameters to BEDS-level dynamics.

- Recursive composition semantics: formalize how crystallized outputs become priors for higher-level systems, define composition operators, and analyze energy/precision propagation across levels.

- Entropy export quantification: for open systems, model and measure entropy flows to the environment during continuous inference, and verify compliance with the second law in realistic implementations.

- Coordinate invariance: ensure precision and energy bounds are invariant under reparameterization; express results in terms of Fisher information metrics to avoid coordinate artifacts.

- Edge-case regimes: analyze behavior as $\tau_D \to 0$ or $\lambda \to \infty$, and under discrete-time updates; identify feasibility limits and practical constraints (e.g., sensor saturation, bandwidth).

- Tightness and achievability: provide upper/lower bounds around $P_{\min}$, construct protocols that approach the bound, and quantify the gap due to nonidealities (finite precision arithmetic, hardware overhead).

- Notational and typographical clarity: fix broken equations (e.g., missing braces in $E_{\text{obs}}$, mismatched parentheses), remove symbol overloading, and standardize notation to avoid ambiguity in future derivations.

Practical Applications

Immediate Applications

Below are applications that can be deployed now using the BEDS framework’s energy–precision–dissipation trade-offs, steady-state balance, and observation-rate optimization.

- Edge sensor networks: energy-aware Bayesian tracking

- Sectors: IoT, energy, industrial monitoring, smart cities

- What it does: Use the precision balance λ·τ_D = γ·τ* and Landauer-informed power bounds to schedule sensor sampling and inference so that maintained accuracy (precision τ*) is delivered within battery budgets.

- Tools/products/workflows:

- Power-precision schedulers that set observation rate λ and per-observation precision τ_D based on target τ* and measured dissipation γ

- Firmware libraries implementing BEDS-aware Bayesian filters (e.g., Gaussian filters with adjustable precision and sampling)

- Edge orchestration policies that throttle sensing/compute when P approaches γ·k_B·T/2

- Assumptions/dependencies: Ability to estimate γ (information decay), Gaussian belief approximation, access to sensors with tunable τ_D (e.g., adjustable integration time or resolution), mapping device temperature T to effective energy cost.
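A power-precision scheduler of the kind listed for edge sensor networks could start from the precision balance. The sketch is a hypothetical helper, not a published library. It picks λ from λ·τ_D = γ·τ*, prices the plan as λ·E_obs, and reports the γ·k_B·T/2 floor alongside it. The per-reading energy E_obs is an assumed, device-measured input.

```python
from dataclasses import dataclass

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


@dataclass
class Schedule:
    rate_hz: float           # observation rate lambda
    power_w: float           # lambda * E_obs, the planned sensing power
    landauer_floor_w: float  # gamma * k_B * T / 2, the BEDS lower bound
    within_budget: bool


def plan_sampling(tau_star: float, gamma: float, tau_d: float,
                  e_obs_j: float, budget_w: float, temp_k: float = 300.0) -> Schedule:
    """Hypothetical scheduler: choose lambda so that lambda * tau_d = gamma * tau_star."""
    rate = gamma * tau_star / tau_d
    power = rate * e_obs_j
    floor = gamma * K_B * temp_k / 2.0
    return Schedule(rate, power, floor, power <= budget_w)


# Target precision 100, decay 0.01/s, each reading adds precision 4 and costs 50 uJ.
plan = plan_sampling(tau_star=100.0, gamma=0.01, tau_d=4.0, e_obs_j=50e-6, budget_w=20e-6)
print(plan)
```

Real per-reading energies (conversion, radio, compute) are usually far above the Landauer floor. In this sketch the floor therefore serves as a sanity check, not as the binding constraint.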

- Wearables and medical implants: battery-accuracy policies for continuous monitoring

- Sectors: healthcare, consumer electronics

- What it does: Dynamically adjust sampling frequency and inference precision to keep diagnostic uncertainty below thresholds while meeting battery-life targets, using P_min ≈ γ·k_B·T/2 in the efficient regime.

- Tools/products/workflows:

- “Accuracy vs battery” control profiles in device firmware

- Clinical workflows that specify maintained variance σ² and compute power budgets using P ∝ γ/σ²

- Assumptions/dependencies: Clinical acceptance of probabilistic accuracy thresholds, stable estimates of γ for each physiological signal, patient-specific variability, regulatory constraints.
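The "P ∝ γ/σ²" budgeting step for wearables can be turned into a battery-life estimate. This is a sketch under assumptions: τ* = 1/σ², λ = γ·τ*/τ_D, and a fixed energy E_obs per reading. The battery size, γ, τ_D, and E_obs below are illustrative numbers, not clinical data, and both helper functions are hypothetical.

```python
def power_for_variance(sigma2: float, gamma: float, tau_d: float, e_obs_j: float) -> float:
    """P = lambda * E_obs, with tau_star = 1 / sigma2 and lambda = gamma * tau_star / tau_d."""
    return (gamma / sigma2) * e_obs_j / tau_d


def battery_life_hours(battery_j: float, sigma2: float, gamma: float,
                       tau_d: float, e_obs_j: float) -> float:
    """Hours until a battery of battery_j joules is spent on sensing alone."""
    return battery_j / power_for_variance(sigma2, gamma, tau_d, e_obs_j) / 3600.0


# Illustrative numbers only: 2,000 J battery, decay 0.05/s, reading precision 2, 1 mJ per reading.
for sigma in (1.0, 0.5):
    hours = battery_life_hours(2000.0, sigma**2, gamma=0.05, tau_d=2.0, e_obs_j=1e-3)
    print(f"sigma={sigma}: {hours:.0f} h")
```

Halving σ multiplies the precision target by four. Under this model the sensing power therefore rises fourfold and the battery life falls fourfold, consistent with the variance-scaling point in the summary.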

- Robotics localization and SLAM: observation-rate control under thermal/power limits

- Sectors: robotics, autonomous vehicles, drones

- What it does: Modulate sensor updates (e.g., camera frames, LIDAR scans) and estimator precision to maintain τ* without exceeding thermal envelopes, guided by λ = γ·τ*/τ_D and P_min calculations.

- Tools/products/workflows:

- Perception stack module that computes real-time γ and sets λ to meet τ* under P_max

- Graceful degradation strategies that widen σ*² when power is scarce

- Assumptions/dependencies: Near-Gaussian error models for pose/landmark estimates, accurate γ estimation for map/state decay, sensor precision controllability.
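The graceful-degradation strategy for robots can be read as inverting the balance under caps. The sketch is a hypothetical policy, not the paper's. It computes the λ that a variance target needs, clips λ by the sensor's maximum rate and by P_max/E_obs, and then reports the variance σ*² = γ/(λ·τ_D) actually maintained. All numbers are illustrative.

```python
def degrade_gracefully(sigma2_target: float, gamma: float, tau_d: float,
                       e_obs_j: float, p_max_w: float, lam_max_hz: float) -> tuple[float, float]:
    """Return (sigma2_used, lam_hz): the tightest maintained variance the caps allow.

    Hypothetical policy: the lambda needed for the target comes from
    lambda * tau_d = gamma / sigma2; it is clipped by the sensor's maximum
    rate and by the power cap p_max_w / e_obs_j.
    """
    lam_needed = gamma / (sigma2_target * tau_d)
    lam_cap = min(lam_max_hz, p_max_w / e_obs_j)
    lam = min(lam_needed, lam_cap)
    sigma2_used = gamma / (lam * tau_d)  # equals sigma2_target when no cap binds
    return sigma2_used, lam


# LIDAR-like example: gamma = 0.5/s, each scan adds precision 10 and costs 20 mJ,
# a 0.2 W thermal budget, a scanner limited to 20 Hz, and a target variance of 0.001.
print(degrade_gracefully(0.001, gamma=0.5, tau_d=10.0, e_obs_j=0.02, p_max_w=0.2, lam_max_hz=20.0))
```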

- Data-center inference services: energy–accuracy SLAs for continuous models

- Sectors: software, cloud, finance, ad-tech

- What it does: Establish service-level agreements that specify maintained precision τ* and corresponding power budgets, enabling autoscaling and throttling consistent with P ≥ γ·k_B·T/2.

- Tools/products/workflows:

- Monitoring dashboards tracking τ*, γ, λ, and E_obs

- Schedulers that set update cadence (λ) and batch sizes (affecting τ_D) to meet SLAs

- Assumptions/dependencies: Instrumentation for precision/error tracking, proxy for γ in non-physical data domains (e.g., model drift rate), temperature T is metaphorical (translate Landauer bound to practical energy per bit on hardware).
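The data-center item notes that batch size affects τ_D. One way to see the trade-off is a sketch under two assumptions. First, samples are independent Gaussian observations of precision τ_1, so a batch of n gives τ_D = n·τ_1. Second, each batch costs a fixed overhead plus a per-sample cost. This cost model and the helper `batch_tradeoff` are hypothetical.

```python
def batch_tradeoff(n: int, tau_star: float, gamma: float, tau_1: float,
                   e_fixed_j: float, e_sample_j: float) -> tuple[float, float]:
    """Return (update_rate_hz, power_w) for batches of n samples.

    Assumes each sample is an independent Gaussian observation of precision tau_1,
    so a batch contributes tau_d = n * tau_1, and that a batch costs a fixed
    overhead e_fixed_j plus e_sample_j per sample (a hypothetical cost model).
    """
    tau_d = n * tau_1
    lam = gamma * tau_star / tau_d      # from lambda * tau_d = gamma * tau_star
    power = lam * (e_fixed_j + n * e_sample_j)
    return lam, power


for n in (1, 10, 100):
    lam, p = batch_tradeoff(n, tau_star=1e4, gamma=0.1, tau_1=1.0, e_fixed_j=5.0, e_sample_j=0.1)
    print(f"batch={n:>3}: {lam:8.1f} updates/s, {p:8.1f} W")
```

Under these assumptions the sample throughput λ·n = γ·τ*/τ_1 stays fixed. Larger batches therefore only amortize the fixed overhead, and they do so at the price of fewer, coarser updates with longer gaps in which the belief blurs.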

- Smart grid and industrial control: measurement scheduling for state estimation

- Sectors: energy, manufacturing

- What it does: Optimize PMU/SCADA measurement rates to maintain state estimation precision within power/communication budgets using the BEDS steady-state equation and variance scaling.

- Tools/products/workflows:

- Scheduler that selects which sensors to poll and how often (λ) given τ* targets

- Control-room analytics showing trade-offs between σ*² and total power

- Assumptions/dependencies: Linear-Gaussian estimator regimes or good approximations, reliable γ estimation for grid states, network latency constraints.
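The "which sensors to poll and how often" scheduler can be posed as a small linear program in a deliberately simplified setting. The setting assumes one scalar state, independent Gaussian readings whose precisions add, a fixed energy per reading, and a per-sensor rate cap. Meeting Σλ_i·τ_i = γ·τ* at minimum Σλ_i·E_i is then a fractional knapsack, and filling from the best precision-per-joule sensor first is optimal. The sensor names and numbers are hypothetical.

```python
def select_polling(sensors, tau_star: float, gamma: float):
    """Greedy polling plan for one scalar state (hypothetical simplification).

    sensors: iterable of (name, tau_i, e_i_joules, lam_max_hz).
    Meets sum(lam_i * tau_i) = gamma * tau_star at minimum sum(lam_i * e_i):
    with one linear constraint and per-sensor rate caps, filling from the
    highest precision-per-joule sensor first is optimal (fractional knapsack).
    """
    need = gamma * tau_star              # precision inflow required per second
    rates, power = {}, 0.0
    for name, tau_i, e_i, lam_max in sorted(sensors, key=lambda s: s[1] / s[2], reverse=True):
        if need <= 0.0:
            break
        lam = min(lam_max, need / tau_i)
        rates[name] = lam
        power += lam * e_i
        need -= lam * tau_i
    if need > 1e-9 * gamma * tau_star:
        raise ValueError("rate caps cannot sustain the target precision")
    return rates, power


sensors = [
    ("pmu_a", 50.0, 0.5, 30.0),    # (name, precision per reading, joules per reading, max Hz)
    ("pmu_b", 20.0, 0.1, 30.0),
    ("scada_c", 5.0, 0.01, 1.0),
]
rates, power = select_polling(sensors, tau_star=1000.0, gamma=0.2)
print(rates, round(power, 3))
```

Real grid state estimation is multi-dimensional with correlated measurements, so this greedy rule is a starting intuition, not a replacement for a full estimator-aware design.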

- Smartphone OS features: accuracy–battery slider for continuous services

- Sectors: consumer software, mobile

- What it does: System setting that maps user-selected accuracy targets (e.g., navigation, activity tracking) to sampling rates and precision, enforcing power lower bounds informed by P_min.

- Tools/products/workflows:

- OS API exposing τ*, γ, λ knobs to apps

- App-level policies for GPS, IMU, camera that tune τ_D and λ dynamically

- Assumptions/dependencies: User acceptance of probabilistic accuracy, per-sensor precision controls, background power management integration.

- MLOps for continuously updated models: structured forgetting and retrain budgets

- Sectors: software, AI, e-commerce, content moderation

- What it does: Treat model drift as dissipation (γ) and schedule updates/retraining to maintain accuracy while respecting energy/compute budgets, aligning with BEDS-maintainable problem definitions.

- Tools/products/workflows:

- Drift monitors estimating γ from accuracy decay

- Retraining cadence optimization using λ·τ_D = γ·τ* with τ_D as per-update precision contribution

- Assumptions/dependencies: Reliable drift estimation, mapping training compute to “observation energy,” Gaussian surrogate for uncertainty, data availability.
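Retraining happens at discrete times rather than as a continuous flux, so the balance λ·τ_D = γ·τ* should be read with care. The sketch rests on strong simplifying assumptions: drift acts as exponential precision decay at rate γ, and each retrain adds a fixed τ_D. Under these assumptions it shows that the balance holds exactly for the time-averaged precision, while the worst case just before a retrain is lower. That worst case is the number an accuracy target would need to bound. `sawtooth` and `max_interval` are hypothetical helpers.

```python
import math


def sawtooth(tau_d: float, gamma: float, interval: float) -> tuple[float, float, float]:
    """Precision (just before update, time-average, just after update) under periodic updates.

    Assumes exponential decay between updates and that each update adds tau_d.
    Solving tau_high * exp(-gamma * interval) + tau_d = tau_high gives the cycle.
    """
    decay = math.exp(-gamma * interval)
    tau_high = tau_d / (1.0 - decay)
    tau_low = tau_high * decay
    tau_mean = tau_d / (gamma * interval)  # equals lambda * tau_d / gamma with lambda = 1/interval
    return tau_low, tau_mean, tau_high


def max_interval(tau_d: float, gamma: float, tau_min: float) -> float:
    """Longest update interval whose worst-case precision (just before an update) stays >= tau_min."""
    return math.log(1.0 + tau_d / tau_min) / gamma


# Drift of 0.1 per day, each retrain adds precision 5, and precision must never drop below 20.
gap = max_interval(tau_d=5.0, gamma=0.1, tau_min=20.0)  # in days
low, mean, high = sawtooth(5.0, 0.1, gap)
print(f"retrain every {gap:.2f} days; precision cycles {low:.1f} to {high:.1f}, mean {mean:.1f}")
```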

- Research instrumentation: estimating dissipation rate γ and energy–precision profiling

- Sectors: academia, hardware R&D, neuroscience

- What it does: Empirically measure γ (rate of uncertainty growth without updates) and validate P ∝ γ·τ* scaling in controlled settings.

- Tools/products/workflows:

- Experimental pipelines to hold priors fixed, with/without observations, tracking σ²(t)

- Profilers that report τ*, γ, λ, E_obs and compute whether operations are near the P_min regime

- Assumptions/dependencies: Controlled environments, Gaussian approximations, precise power metering, stable temperature T.
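Estimating γ from a run with observations switched off reduces to a line fit, provided one assumes the exponential model. Under dτ/dt = −γτ the variance grows as σ²(t) = σ²(0)·e^{γt}, so ln σ² is linear in t with slope γ. The sketch fits that slope by ordinary least squares. The variance samples would in practice come from repeated trials and be noisy, which the synthetic check below ignores. `estimate_gamma` is a hypothetical helper.

```python
import math


def estimate_gamma(times, variances) -> float:
    """Least-squares slope of ln(sigma^2) versus t, recorded with observations switched off.

    Under d(tau)/dt = -gamma * tau, variance grows as sigma^2(t) = sigma^2(0) * exp(gamma * t),
    so ln(sigma^2) is linear in t with slope gamma.
    """
    ys = [math.log(v) for v in variances]
    n = len(times)
    t_bar = sum(times) / n
    y_bar = sum(ys) / n
    num = sum((t - t_bar) * (y - y_bar) for t, y in zip(times, ys))
    den = sum((t - t_bar) ** 2 for t in times)
    return num / den


# Synthetic check: variance starting at 0.5 and growing with gamma = 0.3 per second.
ts = [0.0, 1.0, 2.0, 3.0, 4.0]
vs = [0.5 * math.exp(0.3 * t) for t in ts]
print(estimate_gamma(ts, vs))
```

A clear curvature in ln σ²(t) would indicate non-exponential dissipation, one of the open questions listed under Knowledge Gaps.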

Long-Term Applications

Below are applications that require further research, scaling, or development, including extensions beyond Gaussian cases, better γ estimation, and hardware/organizational changes.

- BEDS-aware hardware and near-Landauer architectures

- Sectors: semiconductors, energy, HPC

- What it could do: Architect compute pipelines and memory systems that approach Landauer limits for observation updates, integrating thermal management tuned to continuous inference workloads.

- Tools/products/workflows:

- Reversible or adiabatic computing modules for inference updates

- Sensor/ADC designs with tunable τ_D and energy-per-bit characterization

- Assumptions/dependencies: Manufacturing advances, practical reversible logic, accurate mapping from Landauer bounds to system-level energy.
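For a sense of scale for near-Landauer architectures, the floors can be evaluated at room temperature. The sketch takes P ≥ γ·k_B·T/2 from the abstract and the standard k_B·T·ln 2 per erased bit. The 1 µW tracker is a hypothetical figure, not a measurement, and the helper names are illustrative.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K (exact SI value)


def landauer_per_bit(temp_k: float) -> float:
    """Minimum energy to erase one bit: k_B * T * ln 2."""
    return K_B * temp_k * math.log(2)


def beds_floor_w(gamma: float, temp_k: float) -> float:
    """BEDS power floor from the abstract: P >= gamma * k_B * T / 2."""
    return gamma * K_B * temp_k / 2.0


def gap_to_floor(measured_power_w: float, gamma: float, temp_k: float) -> float:
    """How many times above the floor a measured (here hypothetical) power draw sits."""
    return measured_power_w / beds_floor_w(gamma, temp_k)


print(f"{landauer_per_bit(300.0):.3e} J per bit at 300 K")
print(f"{beds_floor_w(1.0, 300.0):.3e} W floor for gamma = 1/s")
print(f"{gap_to_floor(1e-6, 1.0, 300.0):.1e}x gap for a hypothetical 1 uW tracker")
```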

- Neuromorphic and bio-inspired systems implementing openness, dissipation, recursion (ODR)

- Sectors: robotics, AI hardware, healthcare

- What it could do: Build systems that embody structured forgetting, continuous learning, and hierarchical crystallization, potentially reducing hallucinations and improving stability (per GLP predictions).

- Tools/products/workflows:

- Learning algorithms with explicit forgetting budgets and crystallization thresholds ε

- Hierarchical modules that “halt” and export stabilized priors to higher levels

- Assumptions/dependencies: Robust algorithms for controlled forgetting, safe online learning, hardware support for continual updates.

- Standards and policy for energy–accuracy labeling

- Sectors: policy, consumer tech, healthcare devices, sustainability

- What it could do: Create labeling and compliance regimes specifying maintained precision, dissipation rates, and minimum power for continuous inference devices (e.g., wearables, implants, home hubs).

- Tools/products/workflows:

- Testing protocols to measure γ, τ*, and power under specified use cases

- Regulatory guidance incorporating P ≥ γ·k_B·T/2 bounds into device certification

- Assumptions/dependencies: Agreement on metrics (τ, σ²), experimental reproducibility, stakeholder buy-in.

- Continuous-learning AI architectures guided by GLP

- Sectors: software, AI, education, content platforms

- What it could do: Design AI systems with ongoing data flux, structured dissipation (forgetting), and meta-level recursion to mitigate closure pathologies (e.g., hallucinations, drift).

- Tools/products/workflows:

- Online knowledge management that prunes stale beliefs at rates matching γ

- Meta-level validators that crystallize sub-beliefs and export them to higher reasoning layers

- Assumptions/dependencies: Safe deployment of online learning, methods to quantify “logical entropy,” governance to manage data openness.

- Formal extensions beyond Gaussian beliefs and moving targets

- Sectors: academia (statistics, control, information theory)

- What it could do: Generalize Energy–Precision Theorem to non-Gaussian distributions, multivariate cases, and targets with dynamics (e.g., tracking bounds with velocity v).

- Tools/products/workflows:

- New theoretical results and simulation toolkits supporting heavy-tailed/mixture models

- Benchmarks for continuous inference under motion and non-exponential decay

- Assumptions/dependencies: Mathematical advances, validated approximations, domain-specific parameterization of γ and τ_D.

- Multi-agent inference networks and topology-aware energy allocation

- Sectors: sensor networks, swarm robotics, distributed systems

- What it could do: Optimize collective power P_total ∝ γ·τ*·f(N, topology) for teams of agents maintaining shared beliefs.

- Tools/products/workflows:

- Protocols for distributing λ and τ_D across agents based on network structure

- Coordinated crystallization of sub-beliefs to reduce global energy

- Assumptions/dependencies: Reliable inter-agent communication, consensus mechanisms, topology-aware γ estimation.

- Healthcare regulation for continuous diagnostic accuracy

- Sectors: healthcare policy, medical devices

- What it could do: Codify minimum maintained precision for continuous monitors (e.g., glucose, cardiac), linking accuracy requirements to energy budgets and device longevity via BEDS.

- Tools/products/workflows:

- Clinical trial designs that measure dissipation and power–accuracy trade-offs

- Post-market surveillance tracking γ over real-world usage

- Assumptions/dependencies: Clinical validation, patient safety protocols, standardized metrics.

- Educational curricula and benchmarking for continuous inference under constraints

- Sectors: academia, professional training

- What it could do: Teach engineers and scientists to design systems for maintained beliefs under energy limits; establish benchmarks and competitions for BEDS-maintainable solutions.

- Tools/products/workflows:

- Course modules on thermodynamics of inference and continuous Bayesian methods

- Open datasets and evaluation harnesses tracking τ*, γ, λ, and power

- Assumptions/dependencies: Community adoption, resource support, cross-disciplinary collaboration.

Glossary

- Bayesian Update: The Bayesian rule for adjusting a belief distribution when new data is observed. "Bayesian Update."

- BEDS: The proposed framework (Bayesian Emergent Dissipative Structures) for continuous inference under explicit dissipation and energy constraints. "We introduce BEDS (Bayesian Emergent Dissipative Structures), a formal framework for analyzing inference systems that must maintain beliefs continuously under energy constraints."

- BEDS-attainable: A property meaning the target distribution can be approached asymptotically with finite total energy. "BEDS-attainable, BEDS-maintainable, and BEDS-crystallizable"

- BEDS-crystallizable: A property meaning the system can reduce uncertainty below a threshold and emit a point estimate within finite time. "BEDS-attainable, BEDS-maintainable, and BEDS-crystallizable"

- BEDS-maintainable: A property meaning the system can keep its belief close to the target after some time using bounded power. "BEDS-attainable, BEDS-maintainable, and BEDS-crystallizable"

- Crystallization: The event where posterior variance drops below a threshold, after which the system outputs its estimate and halts or escalates it. "Crystallization"

- Crystallization threshold: The variance bound that defines when crystallization occurs. "ε > 0 is the crystallization threshold"

- Dissipation: The modeled process of information loss (increasing uncertainty) over time. "Dissipation."

- Dissipation rate: The parameter governing how quickly precision decays in time. "γ > 0 is the dissipation rate"

- Dissipative structures: Open systems that maintain order by exporting entropy to the environment. "Prigogine [prigogine1977] characterized dissipative structures that maintain order through entropy export."

- Energy-Precision-Dissipation Trade-off: The named theorem establishing the minimum power required to maintain a target precision against dissipation. "Energy-Precision-Dissipation Trade-off"

- Flux: A time-indexed stream of observations that drive updates. "A flux is a sequence of observations {(t_i, x_i)}, where t_i is the arrival time and x_i is the observation."

- Free Energy Principle: A theoretical framework suggesting biological systems act to minimize a free-energy bound on surprise. "Friston's Free Energy Principle [friston2010] proposes that biological systems minimize variational free energy."

- Gödel-Landauer-Prigogine conjecture: The conjectured cross-domain link between incompleteness, computational thermodynamic costs, and entropy in closed systems. "We propose the Gödel-Landauer-Prigogine conjecture, suggesting that closure pathologies across formal systems, computation, and thermodynamics share a common structure."

- Landauer bound: The minimum energy cost per logically irreversible information reduction, as applied here to observations. "(i) Landauer bound:"

- Landauer's principle: The principle that erasing information has an unavoidable thermodynamic cost. "By Landauer's principle, reducing entropy by ΔH nats requires energy E ≥ k_B T ΔH."

- Mutual information: The information gained about parameters from an observation, used to lower-bound observation energy cost. "…is the mutual information gained from the observation."

- Normalization constant: The factor ensuring a posterior density integrates to one after applying Bayes’ rule. "…is the normalization constant."

- ODR conditions: A triad (Openness, Dissipation, Recursion) posited to avoid closure pathologies. "Define the ODR conditions:"

- Observation rate: The rate at which observations arrive or are processed, affecting steady-state precision and power. "where λ is the observation rate."

- Precision: Inverse variance of a belief distribution, used to track certainty and its dynamics. "Equivalently, precision decays:"

- Precision balance: The steady-state equality equating precision gain from observations to loss from dissipation. "Precision Balance"

- Precision per observation: The incremental precision contributed by a single observation in the Gaussian case. "where λ is the observation rate and τ_D is the precision per observation."

- Steady state: The regime where precision no longer changes because gains and losses balance. "In steady state, the precision gained from observations must equal the precision lost to dissipation:"

- Variational free energy: An objective used in approximate Bayesian inference and the Free Energy Principle to bound surprise. "minimize variational free energy."
