- The paper proposes a method that discovers strongly coordinated joint options by compressing joint state spaces via Fermat state abstraction.

- It employs a neural graph Laplacian and feature-disentangled n-distances to capture temporal synchronization across agents in high-dimensional spaces.

- Empirical results on Level-Based Foraging and Overcooked environments demonstrate significant downstream performance gains relative to existing methods.

Discovering Strongly Coordinated Joint Options via Inter-Agent Relative Dynamics

Introduction

This work proposes a principled approach to multi-agent option discovery that explicitly targets strongly coordinated, temporally extended behaviors in cooperative multi-agent reinforcement learning (MARL). To address the curse of dimensionality in joint state spaces, the method introduces an inter-agent relative joint state abstraction centered on the Fermat state: the point of maximal team alignment in the latent state space. By representing joint states in terms of relative agent distances and using a neural graph Laplacian for option discovery, the framework compresses combinatorially large joint state spaces while preserving the structure needed for coordination, which the paper positions as an advance over existing multi-agent option discovery paradigms.

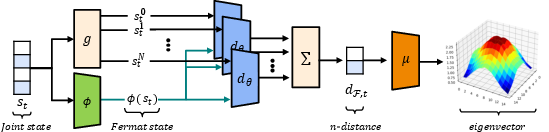

Inter-Agent Relative State Abstraction

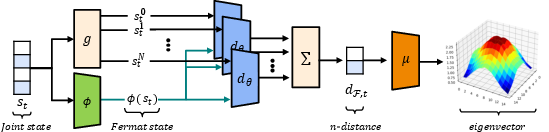

The core technical innovation is to factorize the joint state s∈S into N agent-wise local states and to measure the inter-agent configuration via multi-dimensional n-distance metrics. The Fermat state encoder ϕ approximates the point with minimal average distance to all team members, generalizing the center of mass to spaces equipped with an arbitrary distance function dθ(⋅,⋅). The n-distance estimator is learned offline, jointly with the Fermat encoder, using temporal (successor) distances for greater expressiveness. This lets the abstraction capture how spread out the team is, and how that spread evolves, even in high-dimensional and semantically complex state spaces.

Figure 1: The proposed architecture factorizes the joint state, estimates the Fermat state and agent-wise distances, and computes Laplacian eigenvectors on the resulting inter-agent relative representation.
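To make the Fermat state concrete, the following minimal sketch searches a small grid for the point with the lowest average distance to all agents. It is an illustration only: the Manhattan distance stands in for the learned distance dθ, the exhaustive search stands in for the learned encoder ϕ, and the names `manhattan` and `fermat_state` are hypothetical rather than taken from the paper.

```python
import itertools
import numpy as np

def manhattan(a, b):
    """Hand-coded stand-in for the learned distance d_theta (an assumption)."""
    return float(np.abs(np.asarray(a) - np.asarray(b)).sum())

def fermat_state(agent_states, candidates, dist=manhattan):
    """Return the candidate with minimal average distance to all agents,
    together with that minimal average distance."""
    costs = [np.mean([dist(x, s) for s in agent_states]) for x in candidates]
    best = int(np.argmin(costs))
    return candidates[best], float(costs[best])

# Three agents on a 5x5 grid; every cell is a candidate Fermat state.
agents = [(0, 0), (4, 0), (2, 4)]
grid = list(itertools.product(range(5), range(5)))
point, spread = fermat_state(agents, grid)
print(point, round(spread, 3))  # (2, 0) 2.667
```

The minimal average distance returned alongside the point is one simple measure of how spread out the team is around its Fermat state. In the setting described above, neither an enumerable candidate set nor a closed-form distance is available, which is why both components are learned.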

A salient technical aspect is the use of disentangled, feature-wise n-distances, regularized by a conditional mutual information (CMI) penalty. The penalty forces each predicted feature distance to depend primarily on its corresponding state dimension, which mitigates representation collapse and lets option discovery exploit different axes of coordination (e.g., spatial position, inventory, orientation) independently.
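A small example suggests why a feature-wise distance can carry more information than a scalar one. The sketch below is an assumption-laden illustration, not the paper's estimator: it uses the per-feature median as a stand-in for the Fermat state and the mean absolute deviation as a stand-in for the learned n-distance, and the function names are hypothetical.

```python
import numpy as np

def featurewise_spread(agent_states):
    """Per-feature mean absolute deviation from the per-feature median.
    The median is a hand-coded stand-in for the learned Fermat state."""
    states = np.asarray(agent_states, dtype=float)  # shape (N agents, F features)
    center = np.median(states, axis=0)
    return np.abs(states - center).mean(axis=0)     # shape (F,)

def scalar_spread(agent_states):
    """Collapse the per-feature spreads into one number."""
    return float(featurewise_spread(agent_states).sum())

# Features are (x, y). One team is aligned in y, the other in x.
row_aligned = [(0, 2), (2, 2), (4, 2)]
col_aligned = [(2, 0), (2, 2), (2, 4)]

print(featurewise_spread(row_aligned))  # spread only along x
print(featurewise_spread(col_aligned))  # spread only along y
print(scalar_spread(row_aligned) == scalar_spread(col_aligned))  # True
```

The scalar summary cannot tell the two configurations apart, while the feature-wise vector identifies the axis on which the team is already aligned.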

Option Discovery with Graph Laplacians on Relative Representations

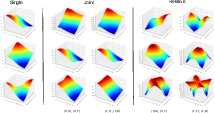

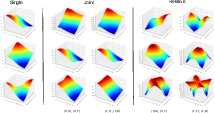

Options are extracted as policies corresponding to the eigenvectors of a graph Laplacian constructed from the trajectory-induced state transition graph in the compressed relative state space. This process, inspired by the eigenoptions formulation, differs fundamentally from prior multi-agent approaches in that state transitions are indexed by the team's relative configuration rather than by absolute joint states. The resulting options drive agents toward or away from specific alignment patterns, both synchronized and anti-synchronized, across one or more feature axes.
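The eigenvector step can be sketched in tabular form. The code below is a simplified illustration under stated assumptions: it treats relative states as integer indices, builds an exact combinatorial Laplacian with NumPy instead of the neural approximation described here, and assumes the transition graph is connected. The function names are hypothetical; the intrinsic reward follows the standard tabular eigenoption form, e[s'] - e[s].

```python
import numpy as np

def laplacian_eigenvectors(trajectories, num_states, k=3):
    """Build an undirected transition graph from trajectories of state
    indices and return the k smallest non-trivial Laplacian eigenpairs."""
    A = np.zeros((num_states, num_states))
    for traj in trajectories:
        for s, s_next in zip(traj[:-1], traj[1:]):
            if s != s_next:                      # ignore self-loops
                A[s, s_next] = A[s_next, s] = 1.0
    L = np.diag(A.sum(axis=1)) - A               # combinatorial Laplacian D - A
    eigvals, eigvecs = np.linalg.eigh(L)         # eigenvalues in ascending order
    # Index 0 is the constant eigenvector when the graph is connected.
    return eigvals[1:k + 1], eigvecs[:, 1:k + 1]

def eigenoption_reward(e, s, s_next):
    """Intrinsic reward for moving in the direction in which e increases."""
    return float(e[s_next] - e[s])

# Toy chain of five relative states visited in order.
eigvals, eigvecs = laplacian_eigenvectors([[0, 1, 2, 3, 4]], num_states=5, k=2)
e1 = eigvecs[:, 0]
print(round(float(eigvals[0]), 3))  # 0.382
```

On this chain the first non-trivial eigenvector is monotone along the states, so maximizing `eigenoption_reward` with `e1` drives the system to one end of the chain and using `-e1` drives it to the other. This mirrors the toward-or-away behavior of the discovered options.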

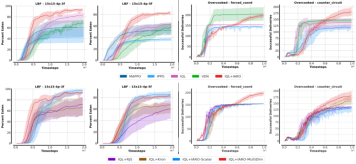

Figure 2: The first three non-trivial Laplacian eigenvectors are shown under different state encodings, highlighting the compactness and coordination-specific structure induced by the relative representation.

This approach alleviates the redundancy and inefficacy of standard eigenvector-based methods applied to raw joint states, where eigenvectors often encode non-coordinated, edge-seeking behaviors. In contrast, by centering the state representation around the Fermat state, the induced geometry is highly sensitive to mutual agent arrangement, promoting the discovery of temporally extended, coordinated behaviors that are robust across varying team sizes and compositions.

Execution and Theoretical Properties of Joint Options

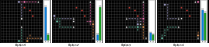

The discovered options are integrated into a modified MacDec-POMDP framework with decentralized execution but explicit support for joint macro-actions. Under two key assumptions (permissive information sharing and synchronized joint option execution), the system can select, execute, and terminate options in a manner that preserves decentralized operation while drawing on strongly coordinated behavior templates. This structure generalizes classical macro-action formalisms and can be instantiated in both fully homogeneous and (with slight modification) heterogeneous agent settings.
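A minimal sketch of synchronized joint option execution follows. It does not reproduce the paper's MacDec-POMDP formulation; it assumes only that each agent acts on its own local state and that, under information sharing, all agents evaluate the same termination condition and therefore stop together. `JointOption`, `run_joint_option`, and the toy one-dimensional dynamics are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple

State = Tuple[int, ...]  # one local state per agent (1-D positions here)

@dataclass
class JointOption:
    policies: Sequence[Callable[[int], int]]  # per-agent policy: local state -> action
    terminate: Callable[[State], bool]        # shared termination condition

def run_joint_option(option, state, step, max_steps=50):
    """Execute the option until its shared termination condition fires."""
    trajectory = [state]
    for _ in range(max_steps):
        if option.terminate(state):           # every agent stops at the same step
            break
        # Decentralized action selection: each policy sees only its own state.
        actions = tuple(pi(s_i) for pi, s_i in zip(option.policies, state))
        state = step(state, actions)
        trajectory.append(state)
    return trajectory

# Toy option: both agents walk toward position 3 and stop once they coincide.
toward_3 = lambda s: (3 > s) - (3 < s)        # action in {-1, 0, +1}
option = JointOption(policies=[toward_3, toward_3],
                     terminate=lambda st: len(set(st)) == 1)
step = lambda st, acts: tuple(s + a for s, a in zip(st, acts))

trajectory = run_joint_option(option, (0, 6), step)
print(trajectory)  # [(0, 6), (1, 5), (2, 4), (3, 3)]
```

Keeping the termination condition shared while the policies stay local is one simple way to combine decentralized action selection with a jointly terminated macro-action.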

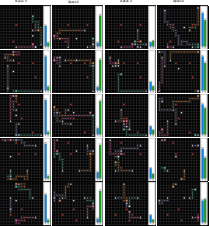

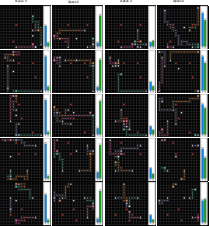

Figure 3: Policy rollouts of the first four discovered joint options in a four-agent gridworld exhibiting distinct synchronized movement and convergence patterns toward emergent Fermat states.

Empirical Analysis

The empirical study targets three key hypotheses:

- Coordinated joint options improve downstream task performance relative to baseline non-option methods.

- Inter-agent relative option discovery yields superior coordination (and, consequently, task performance) compared to existing multi-agent option discovery frameworks (e.g., Kronecker product-based, or direct joint state-based approaches).

- Feature-wise (multi-dimensional) n-distance representations are strictly more expressive and robust than scalar n-distance baselines, especially as state semantics grow more complex.

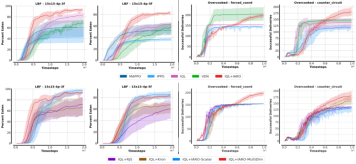

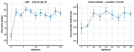

Results are demonstrated on Level-Based Foraging (LBF) and Overcooked environments, which exhibit differing coordination requirements and state space complexity. In all considered settings, introducing joint options via inter-agent relative discovery robustly boosts performance, often by significant margins.

Figure 4: Downstream performance gains achieved by IQL augmented with inter-agent relative options (IARO) are consistent across environments and surpass several strong baseline algorithms and alternative option discovery schemes.

Comparative results indicate that Kronecker-style and raw joint state eigenoption methods either plateau or reduce agent coordination, with their eigenvectors predominantly encoding uncorrelated or team-dispersion behaviors. In contrast, the proposed framework accelerates exploration and induces policies that reliably synchronize agent behaviors on the subspaces relevant to the collaborative task.

A systematic analysis of option set cardinality shows strong positive returns from relatively small numbers of options. This is consistent with the eigenoption view that the leading eigenvectors capture the long-range transitions that matter most for efficient exploration and coordination.

Figure 5: Performance scaling with the number of discovered joint options, highlighting saturation effects and demonstrating the strong utility of a small, well-chosen option set.

An extended comparison of feature-wise, multi-dimensional representations against scalar variants shows that performance is similar in low-dimensional gridworlds, but only the disentangled variant generalizes successfully to semantically richer domains such as Overcooked.

Option and Representation Visualization

Visualization of eigenvectors and induced behaviors reveals that, compared to joint-state and Kronecker eigenoptions, the relative approach produces eigenvectors that adaptively track team reconfiguration and enable diverse modes of axis-aligned and feature-subset synchronization. Rollouts of sample options further demonstrate their qualitative distinctiveness and alignment-driven utility.

Figure 6: Example option rollouts in a four-agent grid demonstrating the diversity of emergent alignment strategies achieved by the proposed method.

Implications and Future Directions

This work establishes the feasibility of compressing multi-agent state spaces to tractable inter-agent relative manifolds that preserve, and even amplify, coordination structures. Practically, these advances enable scalable MARL in domains where coordination is paramount and direct learning on the full joint state is computationally prohibitive. Theoretically, the Fermat-state based construction and feature-disentangled regularization highlight new classes of abstractions for option discovery in MARL, fundamentally refocusing the search on relational, rather than absolute, state geometry.

Further research avenues include:

- Extension to heterogeneous agent populations with minimal inductive bias.

- Relaxation of the information sharing and consensus assumptions (e.g., joint option triggering under partial observability or asynchronous execution).

- Leveraging the framework for emergent communication learning by tying option triggering and selection to communication acts.

- Application to competitive or mixed-cooperative/competitive settings where alignment may be transient or adversarial.

Conclusion

The paper introduces a representation-driven framework for discovering strongly coordinated joint options in MARL, using inter-agent relative dynamics to compress state spaces and induce rich, multi-feature coordinated behaviors. Across the collaborative domains evaluated, the method consistently outperforms both classic and recent baselines, demonstrating its practical utility and contributing to the theoretical understanding of abstraction and temporal hierarchy in decentralized cooperative AI.