AI Meets Brain: Memory Systems from Cognitive Neuroscience to Autonomous Agents

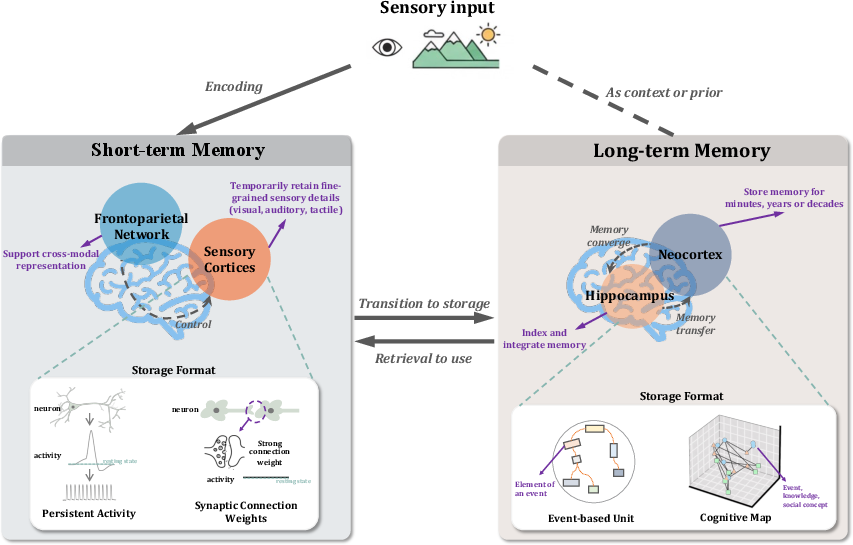

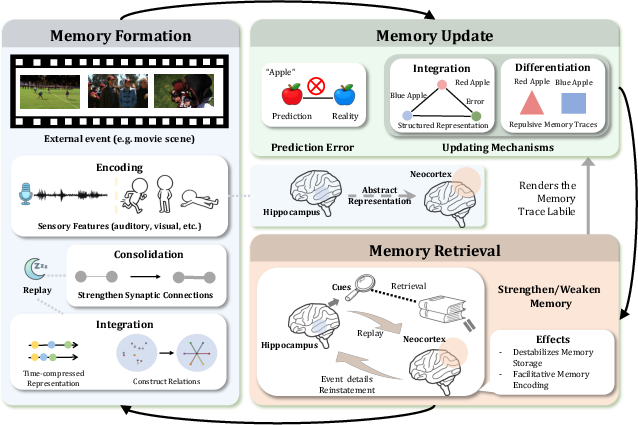

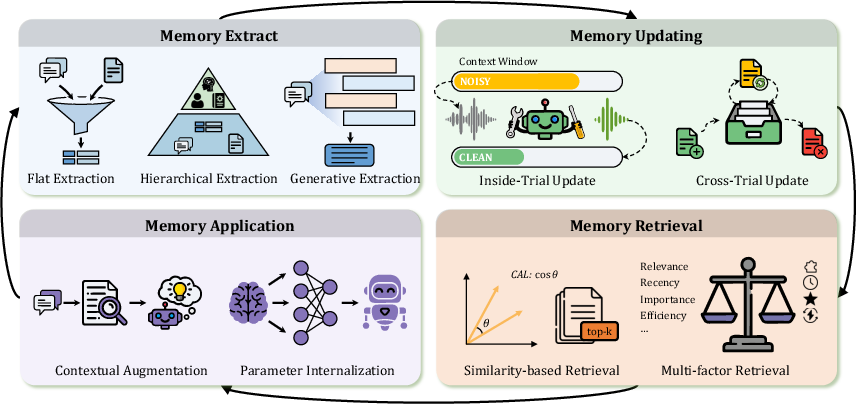

Abstract: Memory serves as the pivotal nexus bridging past and future, providing both humans and AI systems with invaluable concepts and experience to navigate complex tasks. Recent research on autonomous agents has increasingly focused on designing efficient memory workflows by drawing on cognitive neuroscience. However, constrained by interdisciplinary barriers, existing works struggle to assimilate the essence of human memory mechanisms. To bridge this gap, we systematically synthesize interdisciplinary knowledge of memory, connecting insights from cognitive neuroscience with LLM-driven agents. Specifically, we first elucidate the definition and function of memory along a progressive trajectory from cognitive neuroscience through LLMs to agents. We then provide a comparative analysis of memory taxonomy, storage mechanisms, and the complete management lifecycle from both biological and artificial perspectives. Subsequently, we review the mainstream benchmarks for evaluating agent memory. Additionally, we explore memory security from the dual perspectives of attack and defense. Finally, we envision future research directions, with a focus on multimodal memory systems and skill acquisition.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following points summarize unresolved issues and concrete research opportunities left open by the paper.

- Lack of an experimentally validated mapping between cognitive neuroscience mechanisms (e.g., hippocampal–neocortical consolidation, replay, reconsolidation) and specific agent memory modules (encoding, retrieval, updating, forgetting); design controlled, ablation-based studies that implement brain-inspired variants and quantify their impact on agent performance.

- Absence of standardized definitions and metrics for “agent memory quality” (e.g., relevance, fidelity, temporal accuracy, provenance integrity, utilization cost, personalization alignment); propose and validate a metric suite and reporting protocol.

- Limited long-horizon benchmarks with months-long, multi-session interactions and ground-truth annotations of what should be remembered, updated, or forgotten; build open datasets with episodic and semantic targets, decay schedules, and contradiction cases.

- No comparative quantification of trade-offs among parametric, working, and explicit external memory across tasks (latency, accuracy, robustness, maintenance/update cost, privacy risk); conduct systematic head-to-head evaluations with cost–benefit analyses.

- Heuristic vs. learnable memory management remains under-specified in terms of safety and reliability; develop constrained RL/optimization frameworks for summarization, deletion, and folding actions with safeguards, auditing, and rollback.

- Context-window mitigation strategies (folding, paging, summarization) lack model-agnostic, generalizable prescriptions; test policies across diverse LLM architectures and quantify how much each policy reduces the lost-in-the-middle effect versus how much information it discards.

- Retrieval noise and spurious context injection are not robustly handled; design calibrated retrieval with uncertainty estimates, provenance filters, and conflict-aware reranking to minimize irrelevant or adversarial memory usage.

- Memory consolidation and forgetting policies are ad hoc; create adaptive retention/decay algorithms that balance recency, relevance, diversity, and redundancy with performance guarantees on downstream tasks.

- Multimodal memory systems are proposed but not concretely architected; develop cross-modal indexing, alignment, and consolidation for text–image–audio–video, and evaluate cross-modal recall, temporal grounding, and compression effectiveness.

- Skill acquisition and memory sharing across agents lack standardized interfaces and safety guarantees; specify skill schemas (preconditions, effects, versioning), compatibility checks, and negative-transfer detection/mitigation.

- The distinction between agent memory and RAG lacks rigorous evaluation criteria; design tasks with temporal evolution and interactive feedback to measure when dynamic agent memory outperforms static RAG (and vice versa).

- Security threat models for memory (poisoning, backdoors, exfiltration, privacy leaks) are incomplete; adopt formal adversarial evaluations and end-to-end secure memory stores (encryption-at-rest/in-transit, access control, differential privacy).

- Provenance and auditability of memory entries are underdeveloped; implement tamper-evident logs, edit histories, and causal tracing from outputs back to the memory records that influenced them.

- Personalization introduces bias, fairness, and consent risks that are not deeply analyzed; build consent-aware profiling pipelines, bias audits for memory-derived behaviors, and user-facing controls (opt-out, right-to-be-forgotten).

- Scalability of large knowledge graphs/vector stores under continual updates is not addressed; investigate incremental indexing, shard placement, compaction/garbage-collection policies, and their impact on retrieval latency and recall.

- Quantitative evaluation of cognitive processing modules (reflection, abstraction, workflow induction) is limited; define controlled tasks and metrics isolating their contributions beyond anecdotal demonstrations.

- Inter-agent memory coordination remains an open problem; design concurrency control, conflict resolution, and eventual-consistency mechanisms (e.g., CRDT-like models) for shared or federated memory.

- Catastrophic forgetting during parametric updates is unresolved; explore continual-learning protocols (rehearsal, adapters, modular networks) to ingest new memory without degrading prior competencies.

- Energy/computational cost accounting for memory operations (write/read/compress/update) is missing; add cost-aware benchmarks and optimization objectives that incorporate resource budgets.

- Human–AI memory alignment is not systematically studied; test how human phenomena (primacy/recency, false memories, confabulation) translate to agent design and develop mitigation techniques.

- Ethics and regulatory compliance (GDPR, data minimization, retention policies) for agent memory are not operationalized; codify retention schedules, consent tracking, data lineage, and compliance checks within memory lifecycles.

- Robustness under distribution shift and conflicting new evidence is underexplored; build conflict-detection, revalidation, and memory versioning pipelines to reconcile stale or contradictory entries.

- Formal reliability guarantees for evolving memory stores are absent; investigate invariants, consistency checks, and verification methods to ensure correctness and safe convergence of memory updates.
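
The consolidation and forgetting item above calls for retention policies that balance recency, relevance, and redundancy. The Python sketch below shows one simple heuristic baseline of that kind. It is not a method from the paper. Each memory receives a score that mixes exponential recency decay (with an assumed `half_life`) and a precomputed relevance value. A greedy pass then keeps the top-scoring items within a budget and skips near-duplicates by cosine similarity, which serves as a crude proxy for diversity. All names (`MemoryItem`, `retention_score`, `consolidate`), the weights, and the thresholds are hypothetical. The sketch offers none of the downstream performance guarantees that the gap asks for.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class MemoryItem:
    text: str
    created_at: float          # timestamp in hours (assumed unit)
    relevance: float           # assumed precomputed relevance to the current task, in [0, 1]
    embedding: Sequence[float]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retention_score(item: MemoryItem, now: float, half_life: float = 24.0,
                    w_recency: float = 0.5, w_relevance: float = 0.5) -> float:
    """Weighted mix of exponential recency decay and relevance (hypothetical weights)."""
    age = max(0.0, now - item.created_at)
    recency = 0.5 ** (age / half_life)  # halves every `half_life` hours
    return w_recency * recency + w_relevance * item.relevance


def consolidate(items: List[MemoryItem], now: float, budget: int,
                redundancy_threshold: float = 0.95) -> List[MemoryItem]:
    """Greedily keep the highest-scoring items, skipping near-duplicates, up to `budget`."""
    ranked = sorted(items, key=lambda m: retention_score(m, now), reverse=True)
    kept: List[MemoryItem] = []
    for item in ranked:
        if len(kept) >= budget:
            break
        # Redundancy check: drop items too similar to something already retained.
        if any(cosine(item.embedding, k.embedding) >= redundancy_threshold for k in kept):
            continue
        kept.append(item)
    return kept


memories = [
    MemoryItem("user likes tea", created_at=0.0, relevance=0.9, embedding=(1.0, 0.0)),
    MemoryItem("user enjoys tea", created_at=1.0, relevance=0.9, embedding=(0.99, 0.01)),
    MemoryItem("meeting on Friday", created_at=47.0, relevance=0.2, embedding=(0.0, 1.0)),
]
print([m.text for m in consolidate(memories, now=48.0, budget=3)])
# -> ['meeting on Friday', 'user enjoys tea']
```

In this toy run, the older paraphrase "user likes tea" is dropped as redundant with "user enjoys tea". The recent but less relevant meeting note survives because of its recency. A learnable policy could replace the fixed weights. The fixed form simply makes the trade-off explicit.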
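
The provenance item above mentions tamper-evident logs and edit histories. A common way to make a log tamper-evident is a hash chain, in which each record stores the SHA-256 hash of its predecessor. The Python sketch below applies that idea to memory edits. The class and field names (`MemoryEditLog`, `source`, `prev`) are hypothetical. The sketch covers only the logging half of the item, not causal tracing from outputs back to records.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(record: Dict[str, str]) -> str:
    """SHA-256 over a canonical JSON encoding of the record's content fields."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class MemoryEditLog:
    """Append-only, hash-chained log of memory edits (hypothetical class)."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, str]] = []

    def append(self, op: str, key: str, value: str, source: str) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        record = {"op": op, "key": key, "value": value, "source": source, "prev": prev}
        record["hash"] = _digest(record)  # hash covers every field except itself
        self.entries.append(record)
        return record["hash"]

    def verify(self) -> bool:
        """Recompute the chain; editing or reordering entries, or deleting one mid-chain, breaks it."""
        prev = GENESIS
        for rec in self.entries:
            body = {k: v for k, v in rec.items() if k != "hash"}
            if rec["prev"] != prev or rec["hash"] != _digest(body):
                return False
            prev = rec["hash"]
        return True

    def history(self, key: str) -> List[Dict[str, str]]:
        """Edit history for one memory key, oldest first, for tracing provenance."""
        return [rec for rec in self.entries if rec["key"] == key]


log = MemoryEditLog()
log.append("write", "user.diet", "vegetarian", source="session-1")
log.append("update", "user.diet", "vegan", source="session-7")
print(log.verify())                    # True
log.entries[0]["value"] = "omnivore"   # simulate tampering with a stored edit
print(log.verify())                    # False
```

A hash chain alone does not detect truncation of the newest entries. It also does not detect a wholesale rewrite by anyone able to recompute every hash. Deployed systems typically also sign the latest hash or publish it externally.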
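
The inter-agent coordination item above points to CRDT-like models for shared or federated memory. As a hedged illustration, the sketch below implements one standard CRDT for a memory store shared by several agents. It is a last-writer-wins (LWW) map with Lamport-clock timestamps and tombstones for deletions. It is not a design proposed by the paper. The class name, the `(clock, replica_id)` stamp format, and the key names are assumptions.

```python
from typing import Dict, Optional, Tuple

Stamp = Tuple[int, str]  # (Lamport clock, replica id); compared lexicographically


class LWWMemory:
    """Last-writer-wins map replicated across agents (hypothetical class).

    Each key stores (stamp, value); a value of None is a tombstone marking a deletion.
    Merging keeps the entry with the larger stamp, so merge is commutative,
    associative, and idempotent, and replicas that exchange state converge.
    """

    def __init__(self, replica_id: str) -> None:
        self.replica_id = replica_id
        self.clock = 0
        self.state: Dict[str, Tuple[Stamp, Optional[str]]] = {}

    def _tick(self) -> Stamp:
        self.clock += 1
        return (self.clock, self.replica_id)

    def put(self, key: str, value: str) -> None:
        self.state[key] = (self._tick(), value)

    def delete(self, key: str) -> None:
        self.state[key] = (self._tick(), None)  # tombstone, so the deletion also propagates

    def get(self, key: str) -> Optional[str]:
        entry = self.state.get(key)
        return entry[1] if entry is not None else None

    def merge(self, other: "LWWMemory") -> None:
        for key, (stamp, value) in other.state.items():
            if key not in self.state or stamp > self.state[key][0]:
                self.state[key] = (stamp, value)
        self.clock = max(self.clock, other.clock)  # Lamport rule: never fall behind a peer


a, b = LWWMemory("agent-a"), LWWMemory("agent-b")
a.put("user.city", "Paris")
b.put("user.city", "Berlin")  # concurrent write with the same clock value
a.merge(b)
b.merge(a)
print(a.get("user.city"), b.get("user.city"))  # Berlin Berlin (tie broken by replica id)
```

LWW silently discards one of two concurrent writes. That is acceptable for overwritable preferences but not when both writes are evidence the agent should reconcile. A multi-value register keeps both concurrent values and can pass them to an explicit conflict-resolution step. Lamport clocks order events causally, not by wall-clock time.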
