Are We Ready for RL in Text-to-3D Generation? A Progressive Investigation

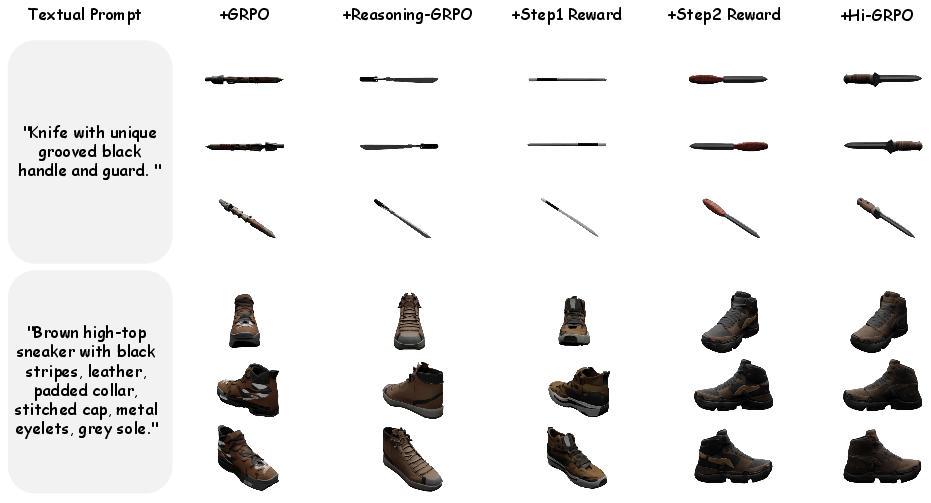

Abstract: Reinforcement learning (RL), previously shown to be effective in large language and multi-modal models, has recently been extended successfully to enhance 2D image generation. However, applying RL to 3D generation remains largely unexplored because of the higher spatial complexity of 3D objects, which require globally consistent geometry and fine-grained local textures. This makes 3D generation highly sensitive to reward designs and RL algorithms. To address these challenges, we conduct the first systematic study of RL for text-to-3D autoregressive generation across several dimensions. (1) Reward designs: We evaluate reward dimensions and model choices, showing that alignment with human preference is crucial, and that general multi-modal models provide robust signals for 3D attributes. (2) RL algorithms: We study GRPO variants, highlighting the effectiveness of token-level optimization, and further investigate the scaling of training data and iterations. (3) Text-to-3D Benchmarks: Since existing benchmarks fail to measure implicit reasoning abilities in 3D generation models, we introduce MME-3DR. (4) Advanced RL paradigms: Motivated by the natural hierarchy of 3D generation, we propose Hi-GRPO, which optimizes the global-to-local hierarchical 3D generation through dedicated reward ensembles. Based on these insights, we develop AR3D-R1, the first RL-enhanced text-to-3D model, which is proficient from coarse shape generation to texture refinement. We hope this study provides insights into RL-driven reasoning for 3D generation. Code is released at https://github.com/Ivan-Tang-3D/3DGen-R1.

Explain it Like I'm 14

Overview: What this paper is about

This paper explores how to make computer models that turn text descriptions (like “a red robot with wheels”) into 3D objects more reliable and high‑quality using reinforcement learning (RL). The authors ask: “Are we ready for RL in text‑to‑3D generation?” and propose a new training approach called Hi‑GRPO. They also carefully test different types of feedback signals (called rewards) to see which ones help the model build better 3D shapes and textures.

Goals: What the researchers wanted to find out

The paper focuses on a few simple, big questions:

- Does RL actually improve text‑to‑3D generation compared to standard training?

- If the model first plans the overall shape and then adds details (a “two‑step” process), will that make 3D results better?

- Which rewards (feedback from automatic “judges”) are most useful—human‑preference, prompt alignment, appearance consistency, or part‑level checks?

- How should we combine these rewards fairly, so one doesn’t overpower the others?

Methods: How they approached the problem (in everyday terms)

Think of making a clay sculpture from a description. You first shape the rough form (body, arms, legs), then polish it (colors, materials, small features). The paper trains the 3D model in two steps just like that:

- High‑level planning (Step 1)

- The model creates “reasoning tokens” (like notes or a plan) that describe the overall geometry.

- It then generates a coarse 3D mesh (the rough shape).

- Technical note: The 3D is decoded from compact “tokens” using a tool called a VQVAE. You can think of tokens like LEGO pieces that, when put together, form the object.

- Low‑level detailing (Step 2)

- The model adds visual reasoning (notes focused on details).

- It refines the mesh with textures, materials, and fine features.
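To make the LEGO analogy from the technical note concrete, here is a minimal sketch of the lookup step at the heart of a VQVAE: each continuous feature vector is replaced by the index of its nearest codebook entry (its "token"), and decoding begins by looking those entries back up. The codebook size, feature dimension, and function names are illustrative assumptions, not the paper's configuration.

```python
import numpy as np

def quantize(features, codebook):
    """Map each feature vector to the index of its nearest codebook entry."""
    # Squared Euclidean distance between every feature and every code: shape (N, K)
    d2 = ((features[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=-1)
    return d2.argmin(axis=1)

def dequantize(tokens, codebook):
    """Look tokens back up in the codebook (the first step of decoding)."""
    return codebook[tokens]

rng = np.random.default_rng(0)
codebook = rng.normal(size=(16, 4))            # hypothetical: 16 codes of dimension 4
# Features that sit very close to codes 3, 7, 7 and 0
features = codebook[[3, 7, 7, 0]] + 0.01 * rng.normal(size=(4, 4))

tokens = quantize(features, codebook)          # discrete "LEGO piece" indices
recon = dequantize(tokens, codebook)           # continuous vectors handed to the decoder
```

A real VQVAE learns the codebook jointly with an encoder and decoder; only the nearest-neighbor lookup is shown here.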

To judge how good the model’s outputs are, they use a team of automatic “judges” (reward models). Each judge looks at the object from multiple camera angles (6 viewpoints), like turning the sculpture around to inspect it:

- Human Preference Score (HPS): Tries to match human tastes; checks how well the rendered 3D images match the text.

- UnifiedReward: Scores prompt alignment (does it fit the description?), logic (does the structure make sense?), and style appeal.

- Qwen2.5‑VL (a vision‑LLM): Checks category correctness (is it actually a “chair”?) and appearance consistency (colors, materials, textures agree across views).

- ShapeLLM (a 3D‑aware model): Examines the 3D point cloud (a dense set of points on the surface) to verify parts—existence (is the handle there?) and completeness (is it the right size/shape and the right number?).
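Because each image-based judge sees 6 rendered views, its per-view scores have to be collapsed into one number. The open-questions list later in this summary says the image-based rewards take the max over views and that alternatives were not examined. The sketch below compares a few aggregators on made-up scores; the function name and the numbers are hypothetical.

```python
import numpy as np

def aggregate_views(view_scores, how="max"):
    """Collapse per-view reward scores into a single scalar."""
    scores = np.asarray(view_scores, dtype=float)
    if how == "max":
        return float(scores.max())
    if how == "mean":
        return float(scores.mean())
    if how == "min":
        return float(scores.min())
    raise ValueError(f"unknown aggregator: {how}")

# Made-up scores for 6 views: one flattering angle, five mediocre ones
scores = [0.9, 0.4, 0.5, 0.45, 0.5, 0.55]
by_max = aggregate_views(scores, "max")
by_mean = aggregate_views(scores, "mean")
by_min = aggregate_views(scores, "min")
```

With these numbers, the max rewards the single flattering view, while the mean and min penalize the weaker angles. That difference is exactly the concern about view-specific optimization.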

Fair reward mixing

- Some judges score multiple things (for example, 3 dimensions at once). To keep things fair, the authors normalize each reward by the number of dimensions it evaluates, so no single judge dominates.

- They also let the detailed Step‑2 reward influence Step‑1, so high‑level planning learns from final quality (like a teacher grading the final sculpture and also giving feedback on your sketch).
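The two ideas above can be sketched in a few lines. This is one plausible reading, not the paper's exact formula: each judge's dimension scores are averaged (so a 3-dimension judge counts the same as a 1-dimension judge), the judges are summed per step, and the Step-1 reward adds the Step-2 reward scaled by a coefficient λ (the knowledge-gaps list reports λ fixed to 1.0). Judge names, score ranges, and values are hypothetical.

```python
def judge_reward(dimension_scores):
    """Normalize one judge's reward by the number of dimensions it scores."""
    return sum(dimension_scores) / len(dimension_scores)

def ensemble_reward(judges):
    """Sum the dimension-normalized rewards of all judges used in a step."""
    return sum(judge_reward(scores) for scores in judges.values())

# Hypothetical scores in [0, 1]; each list holds one value per scored dimension
step1_judges = {"hps": [0.6], "unified": [0.9, 0.6, 0.3]}
step2_judges = {"hps": [0.7], "appearance": [0.8, 0.6, 0.7], "parts": [1.0, 0.5]}

lam = 1.0                                        # cross-step coefficient (assumed additive form)
r_step2 = ensemble_reward(step2_judges)          # 0.7 + 0.7 + 0.75
r_step1 = ensemble_reward(step1_judges) + lam * r_step2
```

Without the per-judge division, the three-dimension judges would contribute up to three times as much as HPS simply because they report more numbers.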

Training and evaluation

- Base model: ShapeLLM‑Omni (a strong text‑to‑3D model).

- Datasets for training prompts: Objaverse‑XL, HSSD, ABO.

- Evaluation set: Toys4K (about 4000 toy‑like 3D objects).

- Metrics: CLIP score (how well images match the text; higher is better) and KD_incep (a visual quality/stability metric; lower is better).
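For intuition, a CLIP-style score boils down to the cosine similarity between an image embedding and a text embedding. The sketch below assumes the embeddings are already computed and multiplies by 100, a common convention that matches the scale of the numbers reported here; the paper's exact scaling and any clamping are not specified in this summary, so treat both as assumptions. KD_incep is not sketched because it is not defined in the source.

```python
import numpy as np

def clip_style_score(image_emb, text_emb):
    """Cosine similarity between two embedding vectors, scaled by 100 (assumed convention)."""
    image_emb = np.asarray(image_emb, dtype=float)
    text_emb = np.asarray(text_emb, dtype=float)
    cos = image_emb @ text_emb / (np.linalg.norm(image_emb) * np.linalg.norm(text_emb))
    return 100.0 * float(cos)

# Toy 3-D embeddings; real CLIP embeddings have hundreds of dimensions
same_direction = clip_style_score([1.0, 0.0, 0.0], [2.0, 0.0, 0.0])
orthogonal = clip_style_score([1.0, 0.0, 0.0], [0.0, 3.0, 0.0])
```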

In RL terms

- The model uses a group‑based RL method (GRPO) and the authors’ hierarchical version (Hi‑GRPO).

- They carefully control learning with techniques like clipping and KL regularization (think of seatbelts that keep training stable and prevent wild swings).
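As a rough illustration of these pieces, the sketch below computes group-relative advantages (each sample's reward compared with the other samples for the same prompt) and a PPO-style clipped loss averaged over valid tokens. It is a simplified NumPy sketch, not the authors' implementation: the KL penalty is omitted, and the clipping thresholds `eps_low` and `eps_high` are placeholder values because the summary does not report the ones used.

```python
import numpy as np

def group_advantages(rewards, eps=1e-6):
    """Normalize rewards within one prompt group: (r - mean) / std."""
    r = np.asarray(rewards, dtype=float)
    return (r - r.mean()) / (r.std() + eps)

def clipped_token_loss(logp_new, logp_old, adv, mask, eps_low=0.2, eps_high=0.28):
    """Clipped surrogate loss with asymmetric thresholds, averaged over valid tokens.

    logp_new, logp_old: (G, T) per-token log probabilities
    adv:                (G,)   one advantage per sampled output
    mask:               (G, T) 1 for real tokens, 0 for padding
    """
    ratio = np.exp(logp_new - logp_old)                      # policy ratio per token
    clipped = np.clip(ratio, 1.0 - eps_low, 1.0 + eps_high)  # the "seatbelt"
    a = adv[:, None]                                         # broadcast over tokens
    per_token = -np.minimum(ratio * a, clipped * a)
    return float((per_token * mask).sum() / mask.sum())      # token-level averaging

adv = group_advantages([1.0, 2.0, 3.0, 4.0])   # group of G = 4 samples
logp = np.zeros((4, 5))                         # toy log-probs, T = 5 tokens each
mask = np.ones((4, 5))
loss = clipped_token_loss(logp, logp, adv, mask)
```

When the new and old policies agree (ratio of 1 everywhere), the loss reduces to the negative mean advantage, which is approximately zero after normalization.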

Findings: What they discovered and why it matters

Key results from their tests:

- Two‑step generation helps

- Planning globally first (shape) and then refining (details) produces better geometry and textures than doing everything at once.

- Adding “textual reasoning” (the model’s internal notes) improves alignment with the prompt and overall structure.

- Reward design is crucial

- Using only detailed (Step‑2) rewards to control both steps doesn’t work well; the coarse shape still needs its own early‑stage rewards.

- Step‑specific rewards (different judges for Step‑1 and Step‑2) give clear improvements.

- Part‑level rewards (checking existence and completeness on 3D point clouds) are especially important for getting the right number and placement of parts (for example, two wheels instead of three, correct handle position, etc.).

- Numbers that show the gains

- In one ablation table, progressively adding rewards increased CLIP score from 25.0 to 29.3 and reduced KD_incep from 0.235 to 0.156.

- In another comparison, the full Hi‑GRPO setup achieved a CLIP score of 28.7 with KD_incep 0.182, notably better than the plain baseline (22.7, 0.249) and better than simpler RL setups.

Why this matters:

- Better geometry: Objects look correct from all angles (no weird shapes).

- Better details: Colors, materials, and textures are more consistent and realistic.

- Better alignment: Outputs match the prompt more faithfully.

Implications: What this could mean going forward

- More reliable text‑to‑3D tools: Designers, game developers, and VR creators could describe what they want and get high‑quality 3D assets that are consistent and physically plausible.

- Stronger evaluation practices: Combining multi‑view image checks with true 3D part checks makes training smarter and less likely to be fooled by camera angles.

- Clear path for RL in 3D: RL can boost text‑to‑3D models, but success depends on good planning (global‑to‑local steps) and carefully balanced, multi‑dimensional rewards.

- Future improvements: The approach invites better reward models, smarter reasoning steps, and broader tests on complex objects and scenes.

In short, the paper shows we are getting ready for RL in text‑to‑3D—especially when we guide the model with a two‑step plan and a fair, well‑designed set of “judges” that look at both the whole object and its parts.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following list distills what remains missing, uncertain, or unexplored in the paper, written as concrete, actionable items for future research:

- Quantitative evaluation breadth is limited to CLIP and an undefined KD_incep metric on Toys4K; rigorous, clearly defined 3D-specific metrics (e.g., watertightness, self-intersection rate, normal consistency, topology validity, geometric plausibility, physical affordance checks) are absent.

- Human evaluation is missing; there is no validation that improvements in model-based reward scores correlate with human judgments of 3D quality, usability, or fidelity across diverse prompts.

- The reliance on “max over 6 viewpoints” for rewards (HPS, UnifiedReward) may incentivize view-specific optimization rather than global consistency; alternative aggregation strategies (mean, min, percentile, learned aggregators) are not examined.

- The choice of 6 uniformly distributed viewpoints is not justified; sensitivity to the number and distribution of viewpoints and its effect on reward stability and model behavior is not studied.

- Reward model calibration and reliability are not assessed; inter-model agreement, score variance across seeds, and correlations among HPS, UnifiedReward, Qwen2.5-VL, and ShapeLLM are unknown.

- The dimension-normalized reward weighting is assumed to ensure “fair contribution,” but there is no empirical analysis of reward dominance, trade-offs, or optimal weighting; adaptive or learned reward weighting remains unexplored.

- The cross-step supervision coefficient λ is fixed to 1.0; there is no sensitivity analysis on λ or exploration of dynamic scheduling strategies to balance high-level planning vs. low-level detail.

- Decoupled clipping thresholds (ε_low, ε_high) in Hi-GRPO are introduced but concrete values, tuning procedures, and their impact on exploration/stability are not ablated.

- Group size (G) and advantage normalization within groups are fixed; the effects of group size, prompt grouping strategy, and intra-group variance on training stability and performance are not analyzed.

- The approach depends heavily on LMM reward models served through vLLM for reward inference; latency, cost, non-determinism, version drift, and reproducibility impacts are not measured or mitigated.

- The part reward requires extracting component lists and expected quantities from prompts, but the extraction pipeline (methods, accuracy, failure cases) is not specified or evaluated; misparsed components could misguide training.

- ShapeLLM-based point-cloud part evaluation is used without benchmarking its accuracy across categories, clutter, occlusions, or mesh densities; robustness to sampling density (ρ) and point-cloud quality is not examined.

- Reward hacking risks are not discussed; there is no analysis of whether the generator learns to exploit quirks in HPS/UnifiedReward/Qwen2.5-VL/ShapeLLM rather than improving genuine 3D quality.

- Only GRPO-style optimization is ablated; direct comparisons to DPO, RLHF, off-policy actor-critic, GSPO, or hybrid IL/RL baselines on the same backbone are missing.

- The base model is fixed to ShapeLLM-Omni; generalization of Hi-GRPO to other 3D backbones (e.g., Trellis-like diffusion, flow-matching, MeshGPT variants, Gaussian splatting pipelines) is not tested.

- Token-type weighting within the loss (reasoning tokens vs. mesh tokens) is not explored; treating these tokens equally may be suboptimal for credit assignment and could be improved by differential weighting or hierarchical credit strategies.

- Training is short (1,200 steps on 8 GPUs) with small batches; sample efficiency, scaling behavior, and stability at larger scales (longer training, larger datasets, higher-resolution tokens) are unknown.

- Generalization beyond single-object meshes to multi-object scenes, occlusions, and relational constraints (e.g., support, containment, symmetry) is not studied; scene-level text-to-3D remains open.

- The method optimizes appearance via 2D LMM assessments; robustness to lighting/view-dependent effects and consistency between render-time shading and true material properties is not quantified.

- Prompt complexity robustness is unclear; performance under long, ambiguous, contradictory, or constraint-heavy prompts (e.g., specific dimensions, quantities, styles) is not evaluated.

- The “two-step” design is fixed; whether more than two hierarchical steps (e.g., coarse shape → topology refinement → material/texture → physics plausibility) further improve outcomes remains an open question.

- Failure mode analysis is limited; detailed breakdowns of typical errors (e.g., part placement mistakes, UV distortions, mesh artifacts, topology breaks) and which rewards address them are not provided.

- Diversity/novelty metrics and coverage across categories are absent; it is unknown whether Hi-GRPO reduces mode collapse or improves variety while maintaining quality.

- KD_incep is referenced but not defined; reproducible metric definitions, code, and statistical significance testing (e.g., confidence intervals, paired tests) are missing.

- No exploration of physical plausibility constraints (e.g., gravity, structural stability) or integration of physics-based rewards; mechanical affordances are shown visually but not quantitatively evaluated.

- The paper does not examine the impact of rendering settings (lighting, materials, shaders) on reward reliability; standardized rendering protocols or reward-robust rendering strategies are not described.

- Potential data contamination or category overlap between training prompts (Objaverse-XL, HSSD, ABO) and evaluation sets (Toys4K/MME-3DR) is not checked; out-of-distribution generalization is uncertain.

- The extraction and use of “semantic/visual reasoning tokens” are not analyzed; how reasoning content quality affects downstream mesh generation, and whether explicit reasoning supervision improves outcomes, remains unexplored.

- Open-source reproducibility is uncertain; although the abstract states that code is released, it is unclear whether full training/inference scripts, reward prompts, seeds, and model checkpoints are provided for independent verification and longitudinal studies.

Glossary

- Advantage normalization: Normalizing per-sample advantage values to reduce reward scale differences across groups. "For each step, advantages are normalized within prompt groups to eliminate reward scale differences across prompts:"

- Area-weighted sampling: Sampling points on a mesh surface proportionally to triangle area to obtain a dense, unbiased point cloud. "Area-Weighted Sampling:"

- Autoregressive models: Generative models that produce outputs token-by-token conditioned on previous tokens. "Autoregressive models alleviate these limitations by discretizing 3D content into token sequences."

- Barycentric coordinates: Coordinates used to express points inside a triangle as convex combinations of its vertices. "generate random barycentric coordinates"

- Barycentric uniform sampling: Uniformly sampling points within a triangle using barycentric coordinates. "Barycentric Uniform Sampling:"

- Chain-of-Thought (CoT): Explicit, step-by-step reasoning traces used to guide model outputs. "combining Chain-of-Thought (CoT) reasoning with reinforcement learning (RL)."

- CLIP score: A metric measuring text–image semantic alignment using CLIP embeddings. "improving CLIP scores by 2.1 point."

- Completion mask: A per-token indicator specifying whether a token should contribute to loss/metrics. "m_{i,t}^{(k)} is the completion mask."

- Decoupled clipping: PPO-style clipping with asymmetric thresholds to allow greater increases for low-probability tokens. "Decoupled Clipping: Asymmetric clipping thresholds ε_low and ε_high."

- Decoder-only transformer: A transformer architecture using only the decoder stack for autoregressive generation. "uses decoder-only transformer to model triangle meshes as sequences,"

- Diffusion models: Generative models that learn to reverse a noise-adding process to synthesize data. "For diffusion models, Dance-GRPO introduces a stepwise, motion-aware reward that aligns policy updates with temporal dynamics, enabling more coherent and physically plausible generation."

- Direct Preference Optimization (DPO): A learning paradigm that optimizes models directly from preference comparisons without explicit reward modeling. "applies DPO accordingly."

- Flow-matching models: Generative models trained to match probability flows between data and noise distributions. "Flow-GRPO extends GRPO to flow-matching models by coupling policy optimization with flow objectives, yielding smoother training and improved stability."

- GRPO (Group Relative Policy Optimization): A reinforcement learning algorithm that updates policy using group-relative comparisons. "GRPO offers better text–image alignment and aesthetic quality through group-relative policy updates."

- Group-relative policy updates: Policy optimization steps that compare candidates within a group to drive improvements. "group-relative policy updates."

- Hierarchical reward ensemble: Combining multiple rewards across levels (global to local) to guide training. "Hierarchical Reward Ensemble Design"

- Hi-GRPO: A hierarchical GRPO framework aligning global planning and local refinement with step-specific rewards. "To validate Hi-GRPO, we conduct ablation studies using the baseline GRPO algorithm."

- Human Preference Score (HPS): A learned metric modeling human preferences for image–text alignment and quality. "We adopt HPS V2.1 in both steps."

- KL divergence: A measure of discrepancy between two probability distributions used for regularization. "Token-level KL divergence"

- KL regularization: Penalizing divergence from a reference policy to stabilize training. "KL Regularization: Token-level KL divergence"

- Large multimodal model (LMM): Models that jointly process multiple modalities (e.g., text and images). "2D LMMs struggle to accurately detect 3D components from multi-view observations."

- Neural radiance field: A continuous volumetric representation modeling view-dependent appearance for rendering. "refine it as a neural radiance field"

- Policy ratio: The ratio of current to old policy probabilities for a token, used in PPO-style objectives. "Policy Ratio:"

- Point cloud: A set of 3D points (often with colors) representing object surfaces for geometric analysis. "employ direct evaluation based on 3D point clouds in step 2."

- Rectified Flow: A flow-based generative framework used for rendering or decoding latent representations. "using Rectified Flow model for rendering."

- Reference policy: A fixed or slowly updated policy used to constrain training via KL penalties. "Reference policy log probabilities"

- Token-level averaging: Averaging loss contributions over valid tokens to normalize across sequences. "Token-Level Averaging: The loss is normalized by the token count"

- Token-level log probabilities: Log-likelihoods computed per token for reasoning and generation sequences. "we compute token-level log probabilities by concatenating the log probabilities of reasoning tokens and mesh tokens."

- UV coordinates: 2D texture coordinates mapped to mesh vertices for sampling colors from a texture. "Interpolate UV coordinates using barycentric coordinates"

- Unified Reward Model: A multimodal reward model that evaluates alignment, logic, and style across views. "UnifiedReward-2.0-qwen-7b performs three-dimensional evaluation of textured objects:"

- Vector-Quantized Variational Autoencoder (VQVAE): A discrete latent generative model used to tokenize and decode 3D shapes. "decoded through the VQVAE decoder."

- Voxel grid: A 3D volumetric representation discretized into voxels for shape decoding or conversion. "decoded by the VQVAE into voxel grids"

- vLLM: A high-throughput inference and serving framework for LLMs. "Our reward models are deployed via the vLLM API framework."
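The glossary entries on area-weighted sampling, barycentric uniform sampling, and point clouds fit together into one standard mesh-to-point-cloud routine. The sketch below is a generic NumPy version of that well-known technique, not the paper's code; the function name and the toy square mesh are illustrative assumptions.

```python
import numpy as np

def sample_mesh_points(vertices, faces, n_points, seed=0):
    """Sample points uniformly on a triangle mesh surface."""
    rng = np.random.default_rng(seed)
    tri = vertices[faces]                                   # (F, 3, 3) triangle corners
    a, b, c = tri[:, 0], tri[:, 1], tri[:, 2]
    # Area-weighted sampling: larger triangles are picked more often
    areas = 0.5 * np.linalg.norm(np.cross(b - a, c - a), axis=1)
    face_idx = rng.choice(len(faces), size=n_points, p=areas / areas.sum())
    # Barycentric uniform sampling: the square root avoids clustering near one corner
    r1 = np.sqrt(rng.random(n_points))
    r2 = rng.random(n_points)
    weights = np.stack([1.0 - r1, r1 * (1.0 - r2), r1 * r2], axis=1)   # (N, 3)
    points = (weights[:, :, None] * tri[face_idx]).sum(axis=1)          # (N, 3)
    return points, face_idx, weights

# Toy mesh: a unit square in the z = 0 plane, split into two triangles
vertices = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [1.0, 1.0, 0.0], [0.0, 1.0, 0.0]])
faces = np.array([[0, 1, 2], [0, 2, 3]])
points, face_idx, weights = sample_mesh_points(vertices, faces, n_points=1000)
```

The same barycentric `weights` can be reused to interpolate per-vertex UV coordinates, which is how a color from the texture would be attached to each sampled point.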

Practical Applications

Immediate Applications

Below is a set of actionable use cases that can be deployed now, grounded in the paper’s hierarchical RL method (Hi-GRPO), two-step global-to-local generation, dimension-normalized reward ensemble, and multi-view/3D component evaluation.

- 3D asset quality assurance and auto-curation for marketplaces and datasets — sectors: software, media, e-commerce — tools/workflows: multi-view rendering (6 views), Qwen2.5-VL prompts for category/appearance consistency, ShapeLLM point-cloud part checks, dimension-normalized reward ensemble as a QA score — assumptions/dependencies: access to Qwen2.5-VL-7B, ShapeLLM-13B, HPS V2.1, UnifiedReward models via vLLM API; reliable multi-view renderers; legal/licensing for model use.

- RL fine-tuning plug-in for existing autoregressive 3D generators (e.g., MeshGPT, MeshAnything, ShapeLLM-Omni) to improve geometry-text alignment and texture fidelity — sectors: software, gaming, VFX — tools/workflows: Hi-GRPO trainer with step-specific rewards (step 1 coarse geometry; step 2 local appearance), group-relative advantage normalization, decoupled clipping and KL regularization; deploy on 8-GPU nodes as in paper — assumptions/dependencies: base model tokenization/VQVAE compatibility; datasets (Objaverse-XL/HSSD/ABO-like prompts); compute budget; reward APIs running stably.

- Two-step text-to-3D content production pipeline for studios — sectors: gaming, film/animation, AR/VR — tools/workflows: semantic reasoning tokens for global planning (step 1), visual reasoning tokens for local detail (step 2); render-and-score loop to gate progression from coarse to refined assets — assumptions/dependencies: integrating VQVAE decoders, rendering farm capacity; trained base model checkpoint; pipeline orchestration.

- Automated category verification for user-uploaded 3D content — sectors: e-commerce, 3D model repositories — tools/workflows: Qwen2.5-VL category-matching prompt (binary 0/1) over 6 views to flag mislabeled or off-category assets — assumptions/dependencies: robust multi-view rendering; prompt clarity; model generalization to catalog classes.

- Component existence/completeness checks for CAD and robotics parts — sectors: manufacturing, robotics — tools/workflows: mesh-to-point-cloud sampling; ShapeLLM per-component existence (0/1) and completeness (0–1) scoring against expected quantities; JSON audit reports — assumptions/dependencies: accurate component lists and quantities; domain-specific parts ontology; sufficient point density and clean meshes.

- Reward-as-a-service microservice for 3D generation teams — sectors: software R&D — tools/workflows: vLLM-served ensemble (HPS V2.1 + UnifiedReward + Qwen2.5-VL + ShapeLLM) with dimension-normalized aggregation; REST API returning step-1/step-2 scores — assumptions/dependencies: hosting and scaling LMMs; latency budgets; model licenses; monitoring for drift.

- Educational modules demonstrating hierarchical planning in 3D generative RL — sectors: education — tools/workflows: curated notebooks showing two-step reasoning, reward ablations, and multi-view consistency prompts; small-scale training on Toys4K subset — assumptions/dependencies: access to modest GPUs; open-source reward substitutes; instructor expertise.

- Benchmarking/procurement evaluation of 3D generators — sectors: academia, enterprise R&D — tools/workflows: standardized evaluation with CLIP score and KD_incep metrics on Toys4K; visual inspection using the paper’s prompt suites — assumptions/dependencies: metrics correlate with human judgment for target use; reproducible rendering configuration.

- Multi-view appearance consistency QA for product imagery — sectors: retail/e-commerce — tools/workflows: Qwen2.5-VL appearance JSON (color smoothness, material realism, texture rationality) to detect cross-view inconsistencies before publishing — assumptions/dependencies: consistent lighting setups; trustworthy LMM material perception; integration in CMS workflows.

- Structural plausibility checks to catch impossible geometry — sectors: policy/compliance, marketplaces — tools/workflows: shape outline consistency metric (0–1) across views; threshold-based rejection or manual review queue — assumptions/dependencies: calibrated thresholds to reduce false positives; policy definitions of “plausible geometry”.

- Texture/material refinement for existing meshes (step-2-only RL) — sectors: VFX, gaming — tools/workflows: apply visual reasoning tokens and step-2 reward ensemble to improve color/material/texture coherence without regenerating geometry — assumptions/dependencies: refined mesh decoders; high-quality textures; reward signal aligns with art style.

- Synthetic object dataset generation for robotics simulation with part correctness — sectors: robotics — tools/workflows: two-step RL fine-tuning to produce objects with consistent parts and quantities; component-level reward ensures manipulable affordances — assumptions/dependencies: simulation-friendly asset specs (scale, watertightness); domain-specific reward extensions.

Long-Term Applications

These use cases require further research, scaling, or integration beyond what the paper demonstrates (e.g., model efficiency, domain-specific reward design, scene-level generation).

- Real-time consumer text-to-3D apps for AR/VR — sectors: software, consumer AR/VR — tools/products: on-device or edge Hi-GRPO-style generation with compressed LMMs and lightweight reward proxies — assumptions/dependencies: model distillation/compression; hardware acceleration; streaming render pipelines; low-latency reward computation.

- Standards and certification for 3D asset quality using multi-dimensional rewards — sectors: policy, industry consortia — tools/products: public benchmarks, reference implementations of consistency, preference, and component completeness metrics; certification badges — assumptions/dependencies: stakeholder consensus; open evaluation suites; governance for bias and transparency.

- Closed-loop robotics training with generative 3D assets and part-aware rewards — sectors: robotics — tools/products: simulators that auto-generate task-specific objects with verified parts (existence/completeness) to train grasping/manipulation — assumptions/dependencies: integration with physics engines; safety validation; domain rewards covering affordances and tolerances.

- Generative CAD co-pilots that respect engineering constraints via domain rewards — sectors: manufacturing, engineering — tools/products: parametric CAD integration where rewards encode tolerances, materials, fastener standards, assembly constraints — assumptions/dependencies: CAD-native tokenization; constraint-aware reward models; verification bridges to FEA/DFM tools.

- Scene-level text-to-3D with hierarchical RL across object and scene layers — sectors: gaming, architecture, digital twin — tools/products: multi-object planners (global layout, local detail), scene consistency rewards (occlusion, scale, style) — assumptions/dependencies: scalable scene tokenization; new reward designs; significant compute; dataset coverage of scenes.

- Fidelity-preserving digital twin pipelines with cross-sensor consistency rewards — sectors: energy, infrastructure, industrial IoT — tools/products: assets validated against multi-view and point-cloud rewards; continual RL refinement from live sensor data — assumptions/dependencies: data availability; sensor fusion to reward functions; update policies that ensure stability.

- Unified 3D LMMs for generation, understanding, and editing trained with hierarchical reward ensembles — sectors: software R&D — tools/products: ShapeLLM-Omni-like models extended with multi-task rewards and step-to-step supervision — assumptions/dependencies: large-scale multimodal datasets; robust training infrastructure; task-balanced reward design.

- Marketplace quality scoring integrated into pricing/search and provenance — sectors: e-commerce, finance (valuation of digital goods) — tools/products: composite “quality” indices derived from human preference, alignment, consistency, and parts completeness — assumptions/dependencies: fairness and bias audits; IP/provenance checks; user acceptance and transparency.

- Energy-efficient RL training schedules for 3D generation (“green RL”) — sectors: energy/policy, academia — tools/products: adaptive reward weighting, early stopping via consistency metrics, curriculum learning to reduce GPU-hours — assumptions/dependencies: empirical validation of energy/quality trade-offs; tooling for carbon reporting.

- IP compliance/deduplication via reward-guided similarity and structure checks — sectors: policy/compliance, marketplaces — tools/products: structural and appearance consistency signals combined with retrieval embeddings to detect clones or derivative content — assumptions/dependencies: legal frameworks; robust similarity baselines; false-positive mitigation.

- Medical 3D asset generation with anatomy-aware rewards — sectors: healthcare — tools/products: anatomical correctness rewards (parts, proportions, textures), validated by clinical datasets for training simulators or patient education — assumptions/dependencies: medical data access; expert-annotated component lists; regulatory validation.

- Adaptive 3D reasoning curricula and assessments — sectors: education — tools/products: student-facing sandboxes where hierarchical planning and reward feedback scaffold learning; automated grading via multi-view consistency — assumptions/dependencies: age-appropriate datasets; classroom-friendly compute; pedagogy integration.

Cross-cutting assumptions and dependencies

- Model availability and licensing: Qwen2.5-VL-7B, ShapeLLM-13B, HPS V2.1, UnifiedReward; vLLM serving stack.

- Rendering and discretization: reliable 6-view rendering, VQVAE tokenization/decoding, mesh→point-cloud sampling.

- Compute and scaling: multi-GPU training (e.g., 8 GPUs), inference throughput for reward scoring, orchestration.

- Metric validity: CLIP/KD_incep correlation with human preference; calibration of consistency/parts rewards to domain needs.

- Data governance: rights to datasets (Objaverse-XL, HSSD, ABO); bias audits for human preference and alignment rewards.

- Integration readiness: pipelines to propagate step-2 rewards back to step 1 (λ supervision), decoupled clipping/KL stability, group-normalized advantages.
