Resolution Where It Counts: Hash-based GPU-Accelerated 3D Reconstruction via Variance-Adaptive Voxel Grids

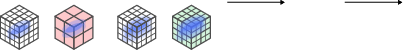

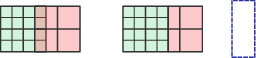

Abstract: Efficient and scalable 3D surface reconstruction from range data remains a core challenge in computer graphics and vision, particularly in real-time and resource-constrained scenarios. Traditional volumetric methods based on fixed-resolution voxel grids or hierarchical structures like octrees often suffer from memory inefficiency, computational overhead, and a lack of GPU support. We propose a novel variance-adaptive, multi-resolution voxel grid that dynamically adjusts voxel size based on the local variance of signed distance field (SDF) observations. Unlike prior multi-resolution approaches that rely on recursive octree structures, our method leverages a flat spatial hash table to store all voxel blocks, supporting constant-time access and full GPU parallelism. This design enables high memory efficiency and real-time scalability. We further demonstrate how our representation supports GPU-accelerated rendering through a parallel quad-tree structure for Gaussian Splatting, enabling effective control over splat density. Our open-source CUDA/C++ implementation achieves up to 13x speedup and 4x lower memory usage compared to fixed-resolution baselines while maintaining comparable reconstruction accuracy, offering a practical and extensible solution for high-performance 3D reconstruction.

Explain it Like I'm 14

What this paper is about

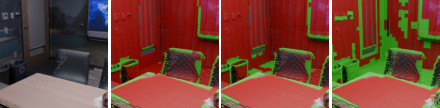

This paper is about making fast, memory‑efficient 3D maps of the world from sensors like depth cameras (RGB‑D) and laser scanners (LiDAR). The authors introduce a new way to store and update 3D information so computers can build detailed models in real time without using too much memory. They call their approach a variance‑adaptive voxel grid with a GPU‑friendly hash table—think of it as using small building blocks where detail matters and bigger blocks where it doesn’t, all organized for super‑fast access on a graphics card.

What the researchers wanted to figure out

- How to build accurate 3D models in real time while using much less memory.

- How to automatically use fine detail only where it’s needed (edges, corners, complex shapes) and use coarser detail elsewhere (flat walls, empty space).

- How to make this work well on GPUs (graphics cards), which are great at doing lots of things in parallel.

- How to support both reconstruction (making a 3D mesh) and rendering (making realistic images) from the same data.

How their method works, in simple terms

Here are the key ideas, explained with everyday language:

Voxels and TSDF: 3D “pixels” and distances to surfaces

- A voxel is like a 3D pixel—a tiny cube in space.

- The system stores a TSDF (Truncated Signed Distance Function), which is a fancy way of saying: for each voxel, keep the distance to the nearest surface (positive in front of the surface, negative behind it; far distances are clipped).

- As new sensor data arrives (from RGB‑D images or LiDAR point clouds), the system updates those distances by averaging many observations to get a stable surface.
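The averaging step described above can be sketched as a running weighted mean per voxel. This is a minimal illustration, not the paper's CUDA code: the `Voxel` class, the `integrate` function, and the truncation distance `tau=0.10` are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Voxel:
    tsdf: float = 0.0    # running average of truncated signed distances
    weight: float = 0.0  # accumulated observation weight


def truncate(sdf: float, tau: float) -> float:
    """Clip a signed distance to the band [-tau, tau]."""
    return max(-tau, min(tau, sdf))


def integrate(voxel: Voxel, sdf: float, tau: float, w: float = 1.0) -> None:
    """Fold one observation into the voxel's running weighted mean."""
    d = truncate(sdf, tau)
    voxel.tsdf = (voxel.tsdf * voxel.weight + d * w) / (voxel.weight + w)
    voxel.weight += w


v = Voxel()
# The last reading is far from the surface, so it is clipped to tau before averaging.
for observed in (0.04, 0.06, 0.05, 0.90):
    integrate(v, observed, tau=0.10)
print(round(v.tsdf, 4), v.weight)
```

Because only the mean and the accumulated weight are stored, each new frame updates a voxel in constant time without keeping past observations.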

Variance‑adaptive resolution: detail where it matters

- The system tracks not just the average distance in each voxel, but also the variance (how much those readings change).

- Low variance = flat, simple areas → use bigger voxels to save memory.

- High variance = edges or complex shapes → use smaller voxels for more detail.

- This way, the map automatically “zooms in” on details and “zooms out” in simple regions.
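One way to picture the variance test is a running variance per region combined with a threshold rule. The sketch below uses Welford's algorithm, which is an assumption on my part (the summary does not say how the variance is accumulated). The voxel sizes, the threshold value, and the direct comparison of the variance against the threshold are likewise illustrative.

```python
from dataclasses import dataclass


@dataclass
class VarianceTracker:
    """Running mean and variance of SDF samples (Welford's algorithm)."""
    count: int = 0
    mean: float = 0.0
    m2: float = 0.0  # sum of squared deviations from the running mean

    def update(self, sdf: float) -> None:
        self.count += 1
        delta = sdf - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (sdf - self.mean)

    @property
    def variance(self) -> float:
        # Population variance; every sample counts equally.
        return self.m2 / self.count if self.count else 0.0


def choose_voxel_size(variance: float, threshold: float,
                      fine: float = 0.02, coarse: float = 0.04) -> float:
    """Hypothetical rule: refine only where the variance exceeds the threshold."""
    return fine if variance > threshold else coarse


def track(samples):
    tracker = VarianceTracker()
    for s in samples:
        tracker.update(s)
    return tracker


flat_wall = track([0.050, 0.051, 0.049, 0.050])     # consistent readings
sharp_edge = track([0.090, -0.080, 0.070, -0.090])  # readings flip sign near an edge

THRESHOLD = 1e-3  # illustrative value, not taken from the paper
print(choose_voxel_size(flat_wall.variance, THRESHOLD))   # coarse voxel size
print(choose_voxel_size(sharp_edge.variance, THRESHOLD))  # fine voxel size
```

The tracker needs only three numbers per region, which suits a GPU setting where storing every past sample would be too costly.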

Fast lookup with a spatial hash table on the GPU

- Instead of using a tree‑like structure (like an octree) that is slow to step through, they put all voxel blocks into a flat hash table. Think of a hash table like a super‑fast dictionary that points you straight to the right block.

- This design is perfect for GPUs, which can update many voxels at once, making the system fast and scalable.
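To make the "dictionary" idea concrete, here is a small CPU-side sketch of a spatial hash over voxel block coordinates. The prime-multiply-and-XOR hash follows the commonly used scheme from Teschner et al. that Voxel Hashing popularized. Whether the paper uses the same hash is an assumption, and the linear-probing collision handling, the `BlockTable` class, and the 0.16 m block size are simplifications made for this example.

```python
import math

# Large primes from the widely used spatial hash of Teschner et al. (an assumption here).
P1, P2, P3 = 73856093, 19349669, 83492791


def block_coord(point, block_size):
    """Integer coordinates of the block containing a 3D point."""
    return tuple(math.floor(c / block_size) for c in point)


def spatial_hash(coord, num_buckets):
    x, y, z = coord
    return ((x * P1) ^ (y * P2) ^ (z * P3)) % num_buckets


class BlockTable:
    """Flat, fixed-size table; collisions are resolved by linear probing."""

    def __init__(self, num_buckets=1024):
        self.num_buckets = num_buckets
        self.slots = [None] * num_buckets  # each slot holds (coord, payload) or None

    def _probe(self, coord):
        """Index of the slot holding coord, or of the first free slot; -1 if full."""
        start = spatial_hash(coord, self.num_buckets)
        for i in range(self.num_buckets):
            idx = (start + i) % self.num_buckets
            entry = self.slots[idx]
            if entry is None or entry[0] == coord:
                return idx
        return -1

    def get_or_allocate(self, coord, make_block):
        idx = self._probe(coord)
        if idx < 0:
            raise MemoryError("hash table is full")
        if self.slots[idx] is None:
            self.slots[idx] = (coord, make_block())
        return self.slots[idx][1]

    def find(self, coord):
        idx = self._probe(coord)
        if idx < 0 or self.slots[idx] is None:
            return None
        return self.slots[idx][1]


table = BlockTable(num_buckets=8)
a = table.get_or_allocate(block_coord((0.05, -0.30, 1.20), 0.16), dict)
b = table.get_or_allocate(block_coord((0.10, -0.25, 1.25), 0.16), dict)
print(a is b)                   # both points fall into the same block
print(table.find((5, 5, 5)))    # unallocated block
```

A lookup touches one slot in the common case, with no tree levels to descend. A GPU version would additionally need atomic operations so that many threads can allocate blocks concurrently.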

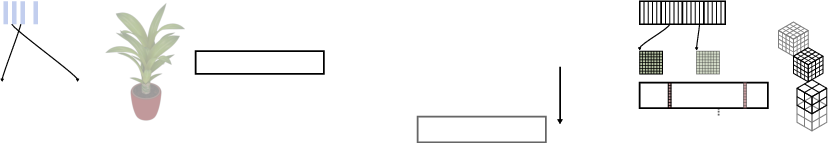

From voxels to meshes and images

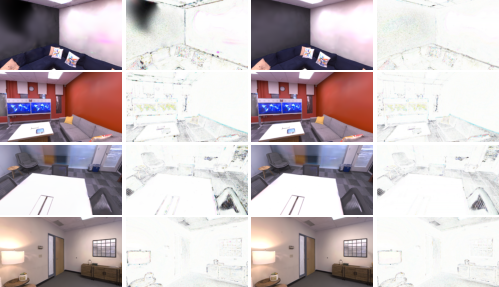

- To turn the voxel data into a 3D surface (a mesh), they use an algorithm called Marching Cubes, adapted to work across areas where voxel sizes change.

- For rendering (making images from the 3D model), they support 3D Gaussian Splatting, and manage it with a GPU‑accelerated quad‑tree to control how many “splats” are used in different regions—again, more where it matters, fewer where it doesn’t.

Works with both RGB‑D and LiDAR

- RGB‑D cameras give a depth image, so voxels are updated by projecting them into the image and comparing distances.

- LiDAR gives 3D points and rays; the system follows each laser ray and updates the voxels it passes through.
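For the LiDAR case, the paper is quoted in the glossary as using a DDA traversal from the sensor origin to each point. The sketch below shows the general idea of such a grid walk in the style of Amanatides and Woo. It is a simplified single-resolution version, and the function name `traverse` and the example coordinates are made up for illustration.

```python
import math


def traverse(origin, endpoint, voxel_size):
    """Return the integer voxel coordinates a ray segment passes through, in order."""
    direction = [e - o for o, e in zip(origin, endpoint)]
    voxel = [math.floor(o / voxel_size) for o in origin]
    last = [math.floor(e / voxel_size) for e in endpoint]

    # Per axis: step direction, ray parameter t of the next boundary crossing,
    # and the increase in t needed to cross one whole voxel.
    step, t_max, t_delta = [], [], []
    for o, d, v in zip(origin, direction, voxel):
        if d > 0:
            step.append(1)
            t_max.append(((v + 1) * voxel_size - o) / d)
            t_delta.append(voxel_size / d)
        elif d < 0:
            step.append(-1)
            t_max.append((v * voxel_size - o) / d)
            t_delta.append(-voxel_size / d)
        else:
            step.append(0)
            t_max.append(math.inf)
            t_delta.append(math.inf)

    visited = [tuple(voxel)]
    # t runs from 0 (origin) to 1 (endpoint); stop at the endpoint's voxel.
    while voxel != last:
        axis = t_max.index(min(t_max))
        if t_max[axis] > 1.0:
            break  # the next boundary lies beyond the measured point
        voxel[axis] += step[axis]
        t_max[axis] += t_delta[axis]
        visited.append(tuple(voxel))
    return visited


cells = traverse(origin=(0.5, 0.5, 0.5), endpoint=(3.5, 1.2, 0.5), voxel_size=1.0)
print(cells)
```

Each voxel returned by the walk would then receive a signed-distance update based on how far it lies from the measured point along the ray.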

What they found and why it matters

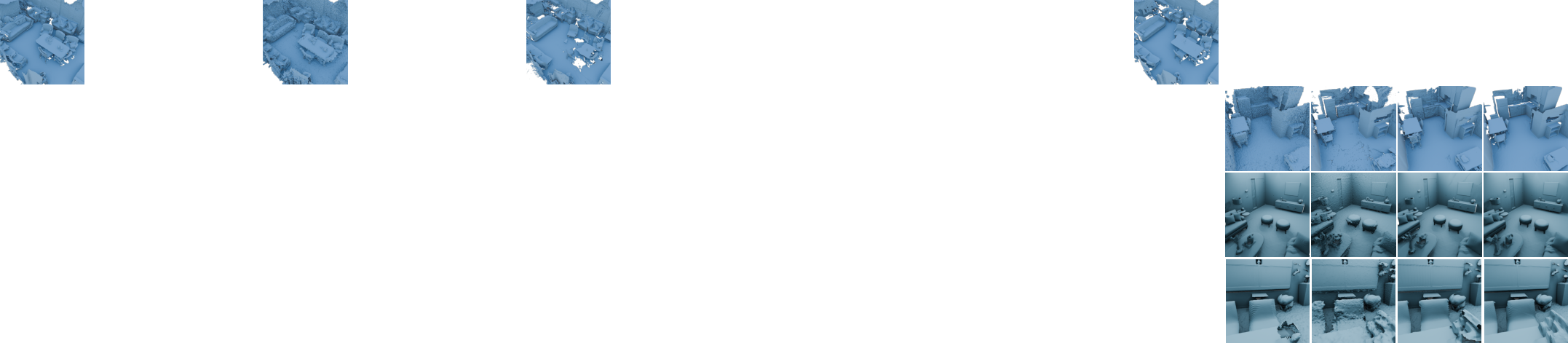

Across several datasets (indoor RGB‑D like ScanNet and Replica, and outdoor LiDAR like Oxford Spires and Newer College):

- Speed: Their system is very fast—often the fastest. It hits around 59–64 FPS in some RGB‑D tests and up to about 35 FPS in LiDAR tests, up to 13× faster than some existing methods.

- Memory: It uses a lot less memory (about 2× to 4× less on average), especially with the multi‑resolution setup. That means smaller meshes and cheaper storage.

- Accuracy: It keeps accuracy close to or better than the best traditional systems. In many RGB‑D cases, it matches or beats others on F‑score (a measure of how correct and complete the surface is).

- Rendering quality: For image rendering (Novel View Synthesis), their multi‑resolution setup improves image quality metrics (PSNR, SSIM, LPIPS) and still runs fast on the GPU.

- Robustness: Some competing methods failed on certain datasets or sensor types; theirs ran reliably across both RGB‑D and LiDAR.
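The F-score mentioned in the accuracy bullet combines precision (how much of the reconstruction lies near the true surface) and recall (how much of the true surface was reconstructed). A minimal brute-force sketch on point sets follows. The point lists are invented for illustration, and real evaluations would sample points from meshes and use a spatial index rather than the quadratic search shown here.

```python
import math


def fraction_within(source, target, threshold):
    """Share of source points whose nearest target point is within threshold."""
    if not source or not target:
        return 0.0
    hits = sum(
        1 for p in source
        if min(math.dist(p, q) for q in target) <= threshold
    )
    return hits / len(source)


def f_score(reconstructed, ground_truth, threshold):
    """Harmonic mean of precision and recall at a given distance threshold."""
    precision = fraction_within(reconstructed, ground_truth, threshold)
    recall = fraction_within(ground_truth, reconstructed, threshold)
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


ground_truth = [(0, 0, 0), (1, 0, 0), (2, 0, 0), (3, 0, 0)]
reconstructed = [(0, 0.05, 0), (1, 0.05, 0), (2, 0.5, 0)]

# 0.10 m matches the RGB-D threshold quoted in the glossary.
score = f_score(reconstructed, ground_truth, threshold=0.10)
print(round(score, 4))
```

In this toy case two of three reconstructed points are accurate (precision 2/3) and two of four true points are covered (recall 1/2), so the score lands between the two.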

There’s an important trade‑off:

- Multi‑resolution shines with dense data (like RGB‑D), keeping quality while saving memory.

- With sparse data (like LiDAR), using coarser voxels can hurt detail. Their tests show single‑resolution can be a bit more accurate for very sparse scenes, while multi‑resolution still wins on memory.

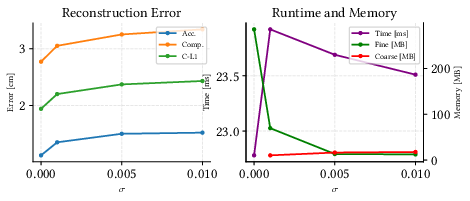

They also include a “knob” (a variance threshold, called σ) you can turn:

- Lower σ → more fine voxels → higher detail but more memory and time.

- Higher σ → fewer fine voxels → lower memory and faster, but you may lose tiny details.

Why this work is useful

This approach makes real‑time 3D reconstruction more practical:

- For robots and drones: faster mapping with less memory helps navigation and exploration.

- For AR/VR: quick, high‑quality scene capture makes experiences smoother and more immersive.

- For large‑scale scanning: using detail only where needed keeps projects efficient and affordable.

- It’s GPU‑friendly and open‑source, so others can build on it for research and real‑world apps.

In short: The paper shows how to “put resolution where it counts.” By adapting voxel size based on how complex the local geometry is, and organizing everything in a GPU‑friendly way, they achieve fast, accurate, and memory‑efficient 3D reconstruction and rendering across different sensors and scenes.

Knowledge Gaps

Below is a consolidated list of the paper’s unresolved knowledge gaps, limitations, and open questions. Each item is phrased to be actionable for future research.

- Evaluation relies on ground-truth, fixed poses; the method’s robustness under realistic SLAM pose noise, drift, and loop closure has not been tested.

- No assessment in dynamic scenes (moving objects). It is unclear how variance-driven adaptivity reacts to transient surfaces and whether temporal filtering is needed to avoid allocating fine resolution to dynamics.

- The adaptivity criterion uses unweighted TSDF sample variance with w_k=1. There is no study on sensor-aware (weighted) variance, robust statistics, or outlier rejection, and how these would affect resolution decisions.

- The choice of variance threshold σ is only ablated on limited settings; there is no principled procedure to set σ per sensor, per scene, or adapt it online as data statistics change.

- Limited resolution hierarchy (e.g., 20–40 cm in LiDAR experiments). It’s unknown how many levels are optimal, or whether continuous or finer-grained multi-scale schedules improve performance.

- The claimed “constant-time” hash-table access is not accompanied by an analysis of collision handling, load factors, warp divergence, or worst-case behavior at high occupancy.

- Out-of-core scalability is not addressed. There is no mechanism or evaluation for streaming, eviction, or map paging when the reconstruction exceeds GPU memory.

- No analysis of memory fragmentation or allocator behavior over long sessions with frequent split/merge of voxel blocks; potential fragmentation or performance degradation is unquantified.

- The merging/downsampling procedure (how TSDF, weights, colors, and variances are aggregated when reducing resolution) lacks algorithmic detail and a quantitative evaluation of the induced bias.

- The Marching Cubes extension for meshing across resolution boundaries is described at a high level; there is no evaluation of artifacts (cracks, non-manifold topology, normal discontinuities) or metrics that quantify boundary quality.

- Sensitivity to core fusion hyperparameters (truncation distance τ, block size, voxel size per level) is not explored; guidelines for selecting these parameters across different sensors and scene densities are missing.

- The LiDAR TSDF integration model assumes ray-direction-based signed distance without modeling beam divergence, multi-echo returns, intensity, or per-range noise; the impact on reconstruction quality is unknown.

- Sparse-data regimes (e.g., outdoor LiDAR) degrade under coarser voxels; no alternatives (density-aware adaptivity, anisotropic voxels, learned criteria) are proposed or evaluated to mitigate this.

- No cross-dataset generalization analysis for the GS rendering pipeline; Replica (synthetic) results may not reflect real-world photometric complexity, exposure variations, or sensor artifacts.

- The GPU-based quadtree for Gaussian distribution control is under-specified; parallel build/update costs, concurrency control (atomics), and stability across varying scene complexities are not analyzed.

- Mesh-to-Gaussian coupling is not dissected: how changes in voxel resolution and meshing affect GS initialization, optimization stability, and NVS quality under real data remains open.

- Failure analysis for baselines is absent (e.g., N3-Mapping and Supereight failures on certain datasets). Without root-cause investigation and fair reconfiguration, comparative conclusions remain tentative.

- There is no theoretical justification linking TSDF variance to optimal resolution allocation (e.g., bounds on reconstruction error or sample complexity as a function of σ and voxel size).

- No evaluation of long-horizon, large-scale mapping (city-scale, multi-session) in terms of cumulative runtime, memory growth, and degradation; the system’s practical limits are unknown.

- The approach is sensor-agnostic in principle, but experiments cover only RGB-D and LiDAR. Applicability to other modalities (stereo, event cameras, radar) and multi-modal fusion is untested.

- Color handling is mentioned but not deeply evaluated (especially for LiDAR). The effect of color fusion strategies on meshing and GS quality is unclear.

- Hardware variability is not discussed. Runtimes are reported without detailing GPU architectures, memory bandwidth effects, or portability to different vendors/drivers.

- Reproducibility gaps: key implementation details (hashing scheme, collision resolution, meshing boundary rules, streaming strategy) are not fully documented; unit tests and standardized benchmarks are lacking.

Practical Applications

Practical Applications of “Resolution Where It Counts: Hash-based GPU-Accelerated 3D Reconstruction via Variance-Adaptive Voxel Grids”

The paper introduces MrHash, a variance-adaptive, GPU-native voxel grid for TSDF-based 3D reconstruction that uses a flat spatial hash for constant-time access, plus a GPU quad-tree for controlling 3D Gaussian Splatting (3DGS) density. It delivers up to 13× speedups and ~2–4× lower memory than fixed-resolution baselines while maintaining comparable accuracy. Below are concrete applications enabled by these findings, with sector links, candidate tools/workflows, and feasibility notes.

Immediate Applications

- Real-time indoor mapping for mobile robots (AMRs, service robots)

  - Sectors: robotics, logistics, retail, hospitality

  - What: Onboard TSDF mapping with adaptive resolution to capture fine details (e.g., shelving edges, doorframes) while coarsening uniform areas (walls/floors) to save memory and compute. Use “Ours (single)” for sparse LiDAR; “multi” for dense RGB-D.

  - Tools/workflow: ROS2 node wrapping MrHash CUDA/C++; integration with existing odometry (VIO/LiDAR SLAM); export meshes/ESDF for planning; threshold σ tuning by environment.

  - Dependencies/assumptions: CUDA-capable GPU (e.g., NVIDIA Jetson Orin/Xavier), accurate and timely poses from a SLAM front-end, sensor calibration/time sync, handling of dynamics (ghosting mitigation).

- On-site as-built capture and progress tracking

  - Sectors: AEC (architecture, engineering, construction)

  - What: Real-time reconstruction from RGB-D/LiDAR backpacks or cart-mounted rigs to generate as-built meshes for clash detection and progress quantification; lower memory footprint simplifies laptop-on-site workflows.

  - Tools/workflow: MrHash + GNSS/IMU-aided odometry; export meshes to BIM tools (Navisworks, Revit) and QA tools; use σ to balance detail vs speed depending on phase (rough-in vs finish).

  - Dependencies/assumptions: Reliable pose estimation; large-scene management (tiling/chunking); safety constraints on scanning in active sites.

- Rapid digital twins for facilities and plants

  - Sectors: energy, manufacturing, utilities, real estate

  - What: Fast, memory-efficient scanning for updates to facility twins; adaptive voxels preserve valves, conduits, cable trays while coarsening large planar regions.

  - Tools/workflow: Edge GPU capture → mesh/TSDF server; incremental updates; 3DGS for photorealistic walkthroughs; connectors to Omniverse/Digital Twin platforms.

  - Dependencies/assumptions: Controlled environments or robust SLAM; asset governance; privacy/security policies.

- On-set environment capture for VFX and games

  - Sectors: media/entertainment, game development

  - What: Near-real-time meshing for set dressing and lighting; 3DGS module for photoreal proxies; smaller meshes speed DCC ingest.

  - Tools/workflow: MrHash capture rig → USD/FBX export; 3DGS splat control via GPU quad-tree; iterative refinement with σ tweaks; engine plugins (Unreal/Unity).

  - Dependencies/assumptions: High-end GPU laptops; color-calibrated RGB-D; controlled lighting for NVS.

- Telepresence and virtual tours with 3DGS acceleration

  - Sectors: real estate, tourism, remote collaboration

  - What: Capture-and-stream scenes with high FPS (e.g., 48–64 FPS on RGB-D) for low-latency novel view synthesis; adaptive splat density reduces bandwidth/render load.

  - Tools/workflow: MrHash reconstruction → 3DGS rendering on GPU; live streaming to thin clients; bitrate control through splat culling.

  - Dependencies/assumptions: Good uplink; GPU server; privacy (PII redaction) and interior scanning permissions.

- Warehouse/store mapping for inventory and layout analysis

  - Sectors: retail, e-commerce logistics

  - What: Nightly scans for planogram compliance, aisle clearance, fixture changes; finer resolution around shelves, coarser on floors/walls increases throughput.

  - Tools/workflow: AMR with MrHash; mesh diff vs baseline; anomaly detection; H&S compliance checks (e.g., fire exits).

  - Dependencies/assumptions: Access windows; SLAM robustness in repetitive environments; reflective packaging handling.

- Education and research platform for GPU mapping

  - Sectors: academia, R&D labs

  - What: Open-source CUDA/C++ pipeline for courses on mapping, GPU data structures, and NVS; benchmark alternative adaptivity criteria (variance vs information gain).

  - Tools/workflow: Datasets (ScanNet/Replica/Oxford/Newer College); ablation of σ; compare single vs multi-resolution; extend meshing across resolution boundaries.

  - Dependencies/assumptions: CUDA-capable lab infrastructure; reproducible pose sources; baseline frameworks for comparison.

- Disaster response and inspection robotics

  - Sectors: public safety, infrastructure

  - What: Fast mapping of interiors/underground spaces with mixed sensors; adaptive resolution saves compute for longer operations; single-res mode for sparse LiDAR in low-light/dust.

  - Tools/workflow: Rugged robot with MrHash + LiDAR; remote operator UI; mesh overlays on floor plans; hazard hot-spot marking.

  - Dependencies/assumptions: Challenging dynamics/dust; intermittent GPS; careful σ selection to avoid loss of thin obstacles.

- Cultural heritage digitization on location

  - Sectors: culture, museums, archaeology

  - What: Field-friendly, memory-efficient scanning of rooms and artifacts; coarser background, finer detail on artifacts; 3DGS for public-facing immersive content.

  - Tools/workflow: Portable RGB-D+LiDAR rigs; artifact-focused σ profiles; mesh/texture export; web viewers with splat streaming.

  - Dependencies/assumptions: Delicate surfaces (specular/transparent) may need special handling; photometric consistency.

- Cloud-scale reconstruction services with cost savings

  - Sectors: software/cloud, mapping services

  - What: Lower GPU hours and storage via reduced mesh sizes (often 2–4×); faster job turnaround (up to 13×) for batch reconstructions.

  - Tools/workflow: Containerized MrHash microservice; autoscaling; cost-aware σ and voxel policies; API for uploads and callbacks.

  - Dependencies/assumptions: GPU instances; robust queuing and quota control; user data privacy.

Long-Term Applications

- On-device AR mapping for smartphones and AR headsets

  - Sectors: consumer software, XR hardware

  - What: Real-time room scanning with adaptive voxels enabling longer sessions and better detail retention; on-device 3DGS previews.

  - Tools/workflow: Port MrHash to Vulkan/Metal/WebGPU; mixed-precision kernels; integration with ARKit/ARCore.

  - Dependencies/assumptions: Non-CUDA GPU support; thermal budgets; mobile memory limits; motion tracking quality.

- City-scale, real-time digital twins with adaptive streaming

  - Sectors: smart cities, urban planning, public safety

  - What: Continuous multi-agent scanning (vehicles, drones, pedestrians) with adaptive resolution streams; 3DGS for photoreal telepresence.

  - Tools/workflow: Edge capture → cloud fusion; out-of-core streaming; standardized TSDF/mesh tiles; hierarchical σ policies by zone/mission.

  - Dependencies/assumptions: Bandwidth; privacy-by-design; persistent pose graph and loop closure; open interchange formats.

- Autonomous driving HD mapping and continual updates

  - Sectors: automotive, mobility

  - What: High-throughput LiDAR mapping; careful σ and single-vs-multi policy to avoid fidelity loss on sparse scans; fast updates to road furniture and construction zones.

  - Tools/workflow: Fleet-scale ingestion; IMU/GNSS/SLAM fusion; semantic layers atop TSDF; change detection.

  - Dependencies/assumptions: Safety-grade validation; robust handling of dynamics; regulatory constraints on data collection.

- Semantics- and uncertainty-aware adaptive reconstruction

  - Sectors: robotics, software, research

  - What: Replace variance-only criterion with information gain/uncertainty/semantic cues to better preserve thin/safety-critical structures (cables, signs) in sparse LiDAR.

  - Tools/workflow: Learn σ policies; multi-sensor confidence maps; adaptive meshing that guarantees minimum thickness preservation.

  - Dependencies/assumptions: Additional models/sensors; more complex calibration; validation datasets.

- Real-time telepresence and holoportation with photoreal NVS

  - Sectors: communications, enterprise collaboration

  - What: Live 3DGS-based meetings with adaptive splat control for network-aware rendering; MrHash geometry for stable pose and occlusions.

  - Tools/workflow: Capture rigs → MrHash → 3DGS → streaming; QoS-driven splat budgets; multi-user synchronization.

  - Dependencies/assumptions: Low-latency networks; compression; privacy and consent management.

- Regulatory and standards development for indoor scanning

  - Sectors: policy, standards bodies

  - What: Guidelines for memory-efficient, privacy-preserving scanning and storage; minimum fidelity for safety-critical uses (e.g., egress, accessibility).

  - Tools/workflow: Benchmarks referencing F-score thresholds and σ ranges by use-case; conformance tests; recommended file formats.

  - Dependencies/assumptions: Multi-stakeholder alignment; public datasets; legal frameworks.

- Adaptive reconstruction for drones in GNSS-denied, cluttered spaces

  - Sectors: public safety, inspection, mining

  - What: Onboard mapping with adaptive compute budgets; dynamic σ informed by motion uncertainty; conservative preservation near obstacles.

  - Tools/workflow: Tight VIO + MrHash; risk-aware σ scheduling; ESDF extraction for local planning.

  - Dependencies/assumptions: High-reliability VIO; robust lighting; dynamic obstacle handling.

- Consumer-grade 3D scanning for fabrication and interior design

  - Sectors: consumer apps, e-commerce, fabrication

  - What: “Scan-to-3D-print” and “scan-to-furnish” apps that deliver lighter meshes while retaining critical geometric details.

  - Tools/workflow: Mobile port; device-to-cloud refinement; automatic σ presets (e.g., “print”, “AR staging”).

  - Dependencies/assumptions: Mobile GPU support; intuitive UX for trade-offs; standards for mesh quality.

- Energy-efficient mapping on edge devices

  - Sectors: IoT/edge computing, sustainability

  - What: Lower energy per scan via fewer memory ops and faster kernels; adaptive resolution minimizes unnecessary work.

  - Tools/workflow: Power profiling; dynamic scaling of voxel sizes based on battery/thermal budget.

  - Dependencies/assumptions: Hardware telemetry; OS-level DVFS; algorithm-level schedulers.

- Open ecosystem: SDKs, plugins, and data standards

  - Sectors: software platforms, engine/tool vendors

  - What: Mature SDK with Python bindings, ROS2 packages, Omniverse connectors, Unreal/Unity plugins; exchange standards for variance-adaptive grids.

  - Tools/workflow: Versioned APIs; sample apps; large-scene tiling and streaming; CI for performance regressions.

  - Dependencies/assumptions: Community adoption; cross-vendor GPU support; governance for spec evolution.

- Safety-critical robotics with guaranteed minimum detail

  - Sectors: industrial automation, healthcare, defense

  - What: Formalized σ policies and verification to ensure thin obstacle preservation; certified mapping modules.

  - Tools/workflow: Offline proofs/tests; runtime monitors triggering local refinement when uncertainty spikes.

  - Dependencies/assumptions: Certification processes; traceability; robust fail-safes.

Notes on feasibility and configuration across applications:

- Sensors: Dense RGB-D benefits most from multi-resolution; sparse LiDAR often prefers single-resolution or carefully tuned σ to avoid detail loss.

- Compute: The current implementation targets CUDA; non-NVIDIA platforms require a port (e.g., Vulkan/Metal) for mobile/headset use.

- Poses: The evaluation uses fixed ground-truth poses; real-world deployments must integrate a reliable SLAM/odometry front-end.

- Dynamics: TSDF fusion can “ghost” moving objects; dynamic scene handling (segmentation/outlier filtering) may be needed.

- Scale: For very large scenes, out-of-core map tiling and loop-closure-aware fusion should be added to maintain global consistency.

Glossary

- Ablation study: A controlled analysis to evaluate the effect of a specific parameter or component of a system. "Ablation study on variance threshold σ."

- CUDA: A parallel computing platform and API model by NVIDIA enabling GPU acceleration of general-purpose computations. "An open-source CUDA/C++ implementation supporting real-time reconstruction and rendering, designed for extensibility and community use."

- DDA traversal: Digital Differential Analyzer; a grid traversal algorithm often used for ray marching through voxel grids. "using a DDA traversal \cite{amanatides1987fast} from the sensor origin to each point."

- F-score: The harmonic mean of precision and recall; used here as a reconstruction quality metric with a spatial error threshold. "the F-score is computed with a 10 cm error threshold for the RGB-D and 20 cm for the LiDAR."

- Gaussian Splatting: A rendering technique that represents scenes with 3D Gaussian primitives, enabling fast novel view synthesis. "parallel quad-tree structure for Gaussian Splatting, enabling effective control over splat density."

- Implicit surface representation: An approach where surfaces are defined implicitly by a scalar field (e.g., SDF/TSDF) rather than explicit meshes. "The TSDF provides an implicit surface representation, where each voxel encodes a scalar value indicating the signed distance..."

- Isosurface: A surface extracted from a volumetric field at a constant value (e.g., TSDF = 0) to reconstruct geometry. "extract the isosurface from the volumetric representation"

- KinectFusion: A real-time TSDF-based reconstruction system using a dense voxel grid. "KinectFusion \cite{newcombe2011kinectfusion} demonstrated real-time TSDF integration on a dense, uniform voxel grid, enabling accurate reconstructions in small, bounded scenes."

- LiDAR: A laser-based sensor that measures distances to produce 3D point clouds. "For 3D point cloud measurements (i.e., LiDAR sensors), we perform ray-based geometric integration"

- LPIPS: Learned Perceptual Image Patch Similarity; a metric for perceptual image similarity based on deep features. "Comparison of perceptual metrics (PSNR, SSIM, LPIPS) and rendering speed (FPS) on Replica dataset \cite{straub2019replica}."

- Marching Cubes: An algorithm to extract polygonal meshes (isosurfaces) from volumetric data. "We extend Marching Cubes to support seamless meshing across resolution boundaries."

- N3-Mapping: A neural mapping method that optimizes scene-specific representations; noted for failures in certain datasets. "N3-Mapping fails on one RGB-D sequence and all the LiDAR sequences;"

- NKSR: A neural surface reconstruction approach that can fail under certain conditions or datasets. "NKSR fails on all Replica sequences but runs on Oxford Spires."

- Novel View Synthesis: Generating images of a scene from unseen viewpoints using learned or explicit scene representations. "Novel View Synthesis"

- Octrees: Hierarchical spatial data structures that adaptively refine space, often used for sparse volumetric representations. "Octrees hierarchically allocate memory where needed but suffer from recursive traversal and poor GPU performance."

- OpenVDB: A sparse hierarchical volumetric data structure widely used in graphics and VFX. "OpenVDB provides sparse multi-resolution storage through a tree structure"

- PIN-SLAM: A learning-based SLAM system leveraging hierarchical feature grids to reconstruct geometry. "Methods such as InstantNGP \cite{muller2022instant}, NICER-SLAM \cite{zhu2024nicerslam}, and PIN-SLAM \cite{pan2024pinslam} leverage hierarchical feature grids and MLP decoding to reconstruct high-quality geometry while incorporating SLAM constraints."

- PSNR: Peak Signal-to-Noise Ratio; a traditional metric for image reconstruction quality. "Comparison of perceptual metrics (PSNR, SSIM, LPIPS) and rendering speed (FPS) on Replica dataset \cite{straub2019replica}."

- Quad-tree: A hierarchical 2D spatial partitioning structure; used here on the GPU to control Gaussian splat density. "A fully parallel GPU-based quad-tree for controlling the spatial distribution of Gaussians for novel view synthesis"

- Ray bundling: An optimization that groups rays to reduce computation during volumetric updates. "Voxblox uses block-wise hashing and ray bundling to support onboard updates on resource-constrained UAVs"

- RGB-D: Color images paired with per-pixel depth information used for volumetric integration. "The RGB-D data are sequences of ScanNet \cite{dai2017scannet}"

- Signed Distance Function (SDF): A scalar field where each point stores the signed distance to the nearest surface; negative inside, positive outside. "signed distance field (SDF) observations"

- SLAM: Simultaneous Localization and Mapping; jointly estimating a sensor’s pose and building a map. "incorporating SLAM constraints."

- Spatial hash table: A hash-based structure mapping spatial locations to entries, enabling efficient sparse voxel storage. "a flat spatial hash table to store all voxel blocks, supporting constant-time access and full GPU parallelism."

- Supereight2: A multi-resolution volumetric mapping system using hierarchical structures for adaptivity. "Supereight2 represents single resolution grid, Supereight2 represents multi-resolution grid."

- Truncated Signed Distance Function (TSDF): An SDF truncated to a fixed band around surfaces to limit updates and improve efficiency. "Volumetric methods, particularly the TSDF, are widely used for this purpose."

- VDBFusion: A TSDF fusion system built on OpenVDB for sparse volumetric reconstruction. "variants such as VDBFusion \cite{vizzo2022vdbfusion} have extended it to TSDF fusion."

- Variance-adaptive voxel grid: A multi-resolution voxel structure where resolution changes based on local TSDF variance. "We propose a novel variance-adaptive, multi-resolution voxel grid that dynamically adjusts voxel size based on the local variance of signed distance field (SDF) observations."

- Volumetric fusion: Integrating depth observations over time into a volumetric field (e.g., TSDF). "using a standard volumetric fusion strategy \cite{curless1996volumetric}"

- Voxel block: A fixed-size group of voxels managed together, often for hashing and memory allocation. "Voxel Hashing \cite{niessner2013real} addressed this limitation by dynamically allocating voxel blocks using a spatial hash table"

- Voxel Hashing: A technique that uses spatial hashing to dynamically allocate and access voxel blocks efficiently. "Voxel Hashing \cite{niessner2013real} addressed this limitation by dynamically allocating voxel blocks using a spatial hash table, reducing memory usage and enabling real-time mapping for mobile platforms."

- Voxblox: A block-wise hashed TSDF system optimized for onboard, real-time mapping. "Voxblox uses block-wise hashing and ray bundling to support onboard updates on resource-constrained UAVs"
