- The paper introduces a unified approach that integrates RGB-D data into a composite voxel-based feature field to model visual, semantic, and spatial uncertainties.

- It employs 2D and 3D CNNs for feature extraction and refinement, allowing zero-shot adaptability and efficient scene reconstruction as validated on the ScanNet dataset.

- The methodology enhances robotic scene interpretation by leveraging uncertainty modeling to focus on error-prone regions, improving decision-making in dynamic environments.

UniFField: A Generalizable Unified Neural Feature Field

Introduction

This paper introduces UniFField, a neural feature field designed to address challenges in robotic 3D scene interpretation. The architecture uses RGB-D images to build a unified feature field that captures visual, semantic, and geometric properties, each paired with an uncertainty estimate. This matters because prior methods are mostly scene-specific and omit uncertainty modeling, which is vital for robust robotic operation in unknown environments.

Constructing UniFField

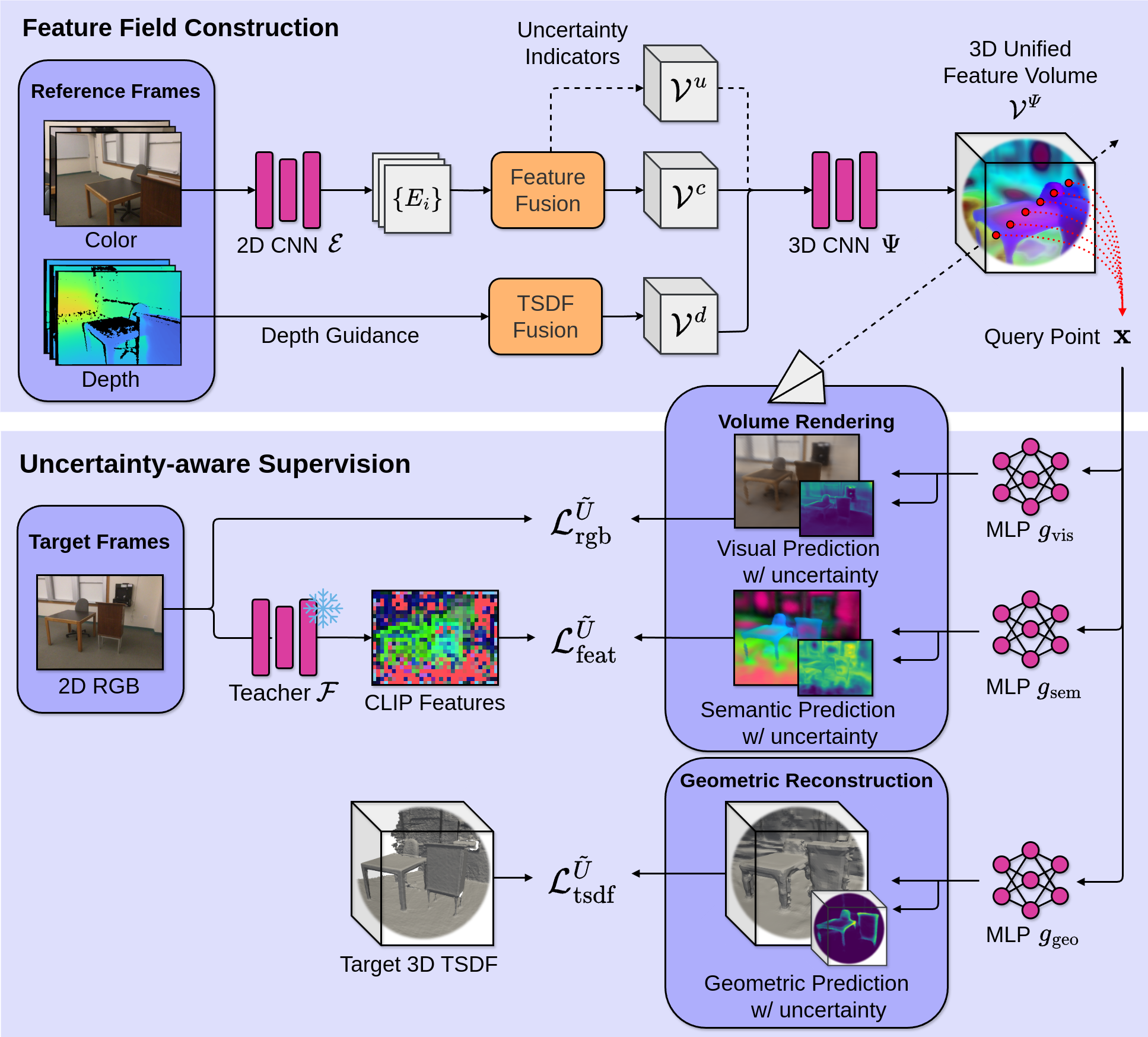

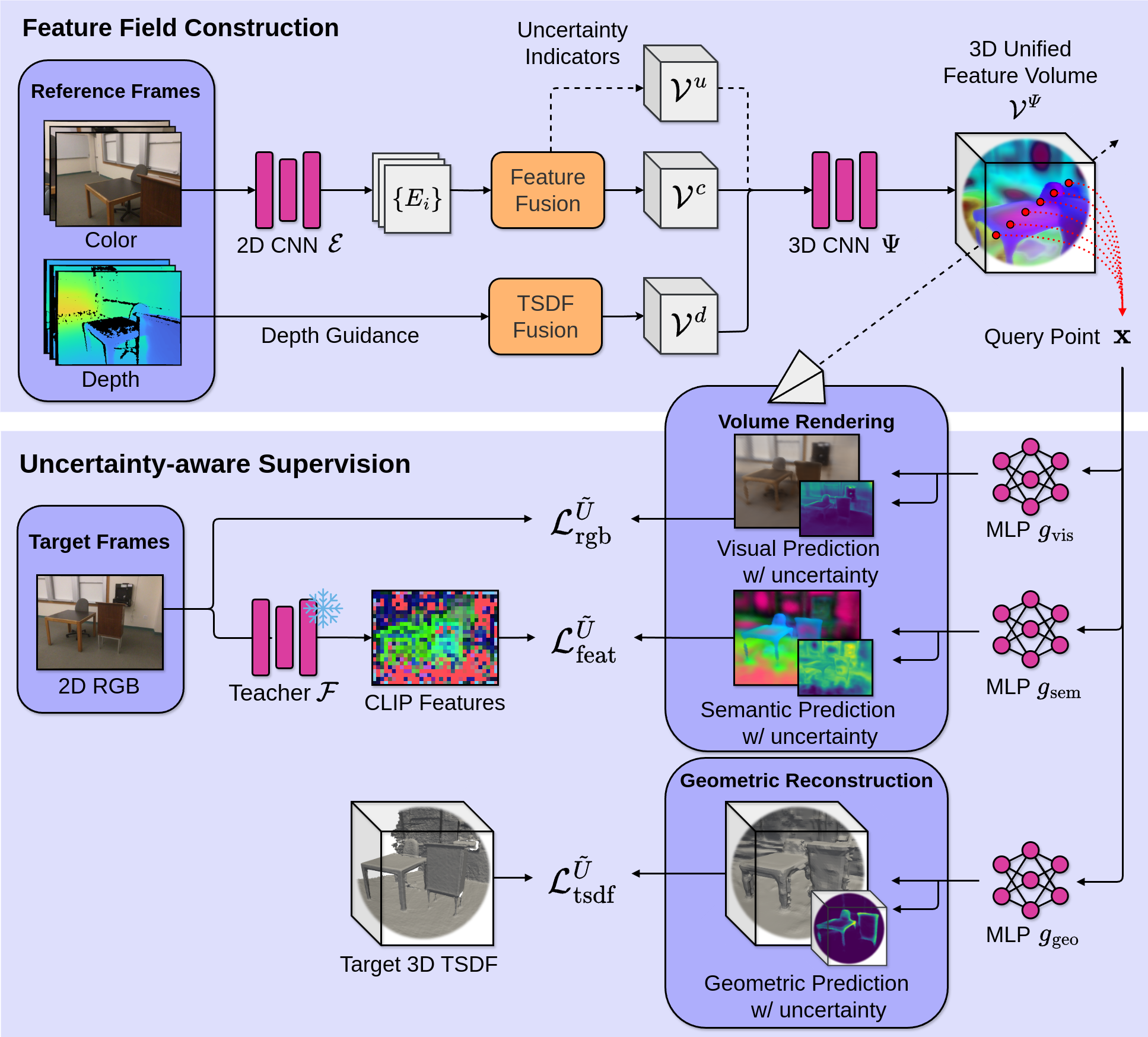

UniFField constructs its representation by integrating multiple RGB-D frames into a voxel-based feature volume. It uses the depth signal to create an initial TSDF volume for spatial alignment and combines it with image-derived features and uncertainty indicators (Figure 1). In the initial processing stage, a 2D CNN extracts image features, which are then projected into 3D space to form a composite feature volume.

Figure 1: Overview of UniFField, integrating image features, TSDF volume, and uncertainty indicators.
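
The projection of 2D image features into the voxel volume can be illustrated with a minimal sketch. It assumes a pinhole camera model, a feature map at image resolution, and nearest-pixel sampling; the function name `backproject_features` and its parameters are hypothetical and are not taken from the paper.

```python
import numpy as np

def backproject_features(feat_map, K, T_cam_world, voxel_centers):
    """Sample a 2D feature map at the image projections of 3D voxel centers.

    feat_map:      (H, W, C) image features (assumed to be at image resolution)
    K:             (3, 3) pinhole camera intrinsics
    T_cam_world:   (4, 4) transform from world to camera coordinates
    voxel_centers: (N, 3) voxel centers in world coordinates
    Returns an (N, C) feature array and an (N,) boolean visibility mask.
    """
    H, W, C = feat_map.shape
    n = voxel_centers.shape[0]

    # Transform voxel centers into the camera frame.
    homog = np.concatenate([voxel_centers, np.ones((n, 1))], axis=1)
    cam = (T_cam_world @ homog.T).T[:, :3]

    # Only points in front of the camera can be observed.
    z = cam[:, 2]
    in_front = z > 1e-6
    safe_z = np.where(in_front, z, 1.0)  # avoid division by zero

    # Perspective projection to (nearest) pixel coordinates.
    pix = (K @ cam.T).T
    u = np.rint(pix[:, 0] / safe_z).astype(int)
    v = np.rint(pix[:, 1] / safe_z).astype(int)
    visible = in_front & (u >= 0) & (u < W) & (v >= 0) & (v < H)

    # Voxels that are not visible keep a zero feature vector.
    out = np.zeros((n, C), dtype=feat_map.dtype)
    out[visible] = feat_map[v[visible], u[visible]]
    return out, visible
```

With several frames, the per-voxel features from each view would still need to be fused (for example by averaging over the views in which a voxel is visible); that fusion step is left out of this sketch.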

The model refines the volume incrementally with a 3D CNN, so the scene can be updated continuously without retraining. This architecture enables zero-shot adaptation to new environments, which underlines UniFField's potential scalability and efficiency.

Decoding Features and Uncertainty Estimation

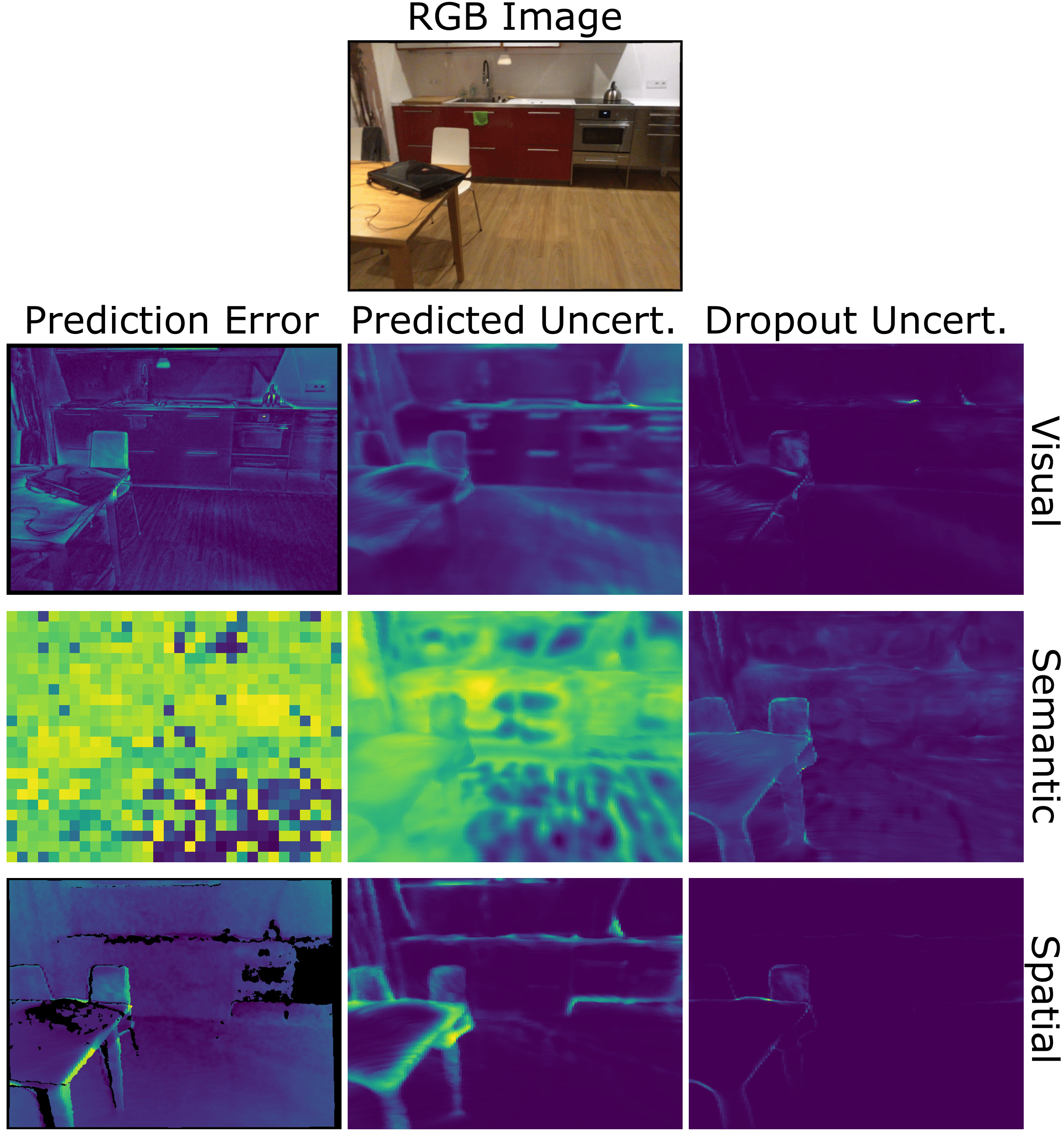

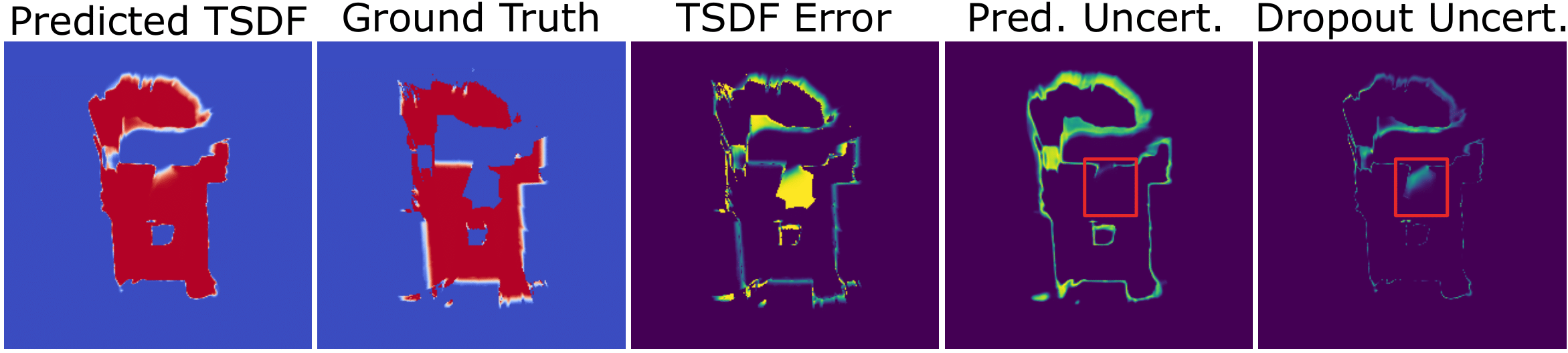

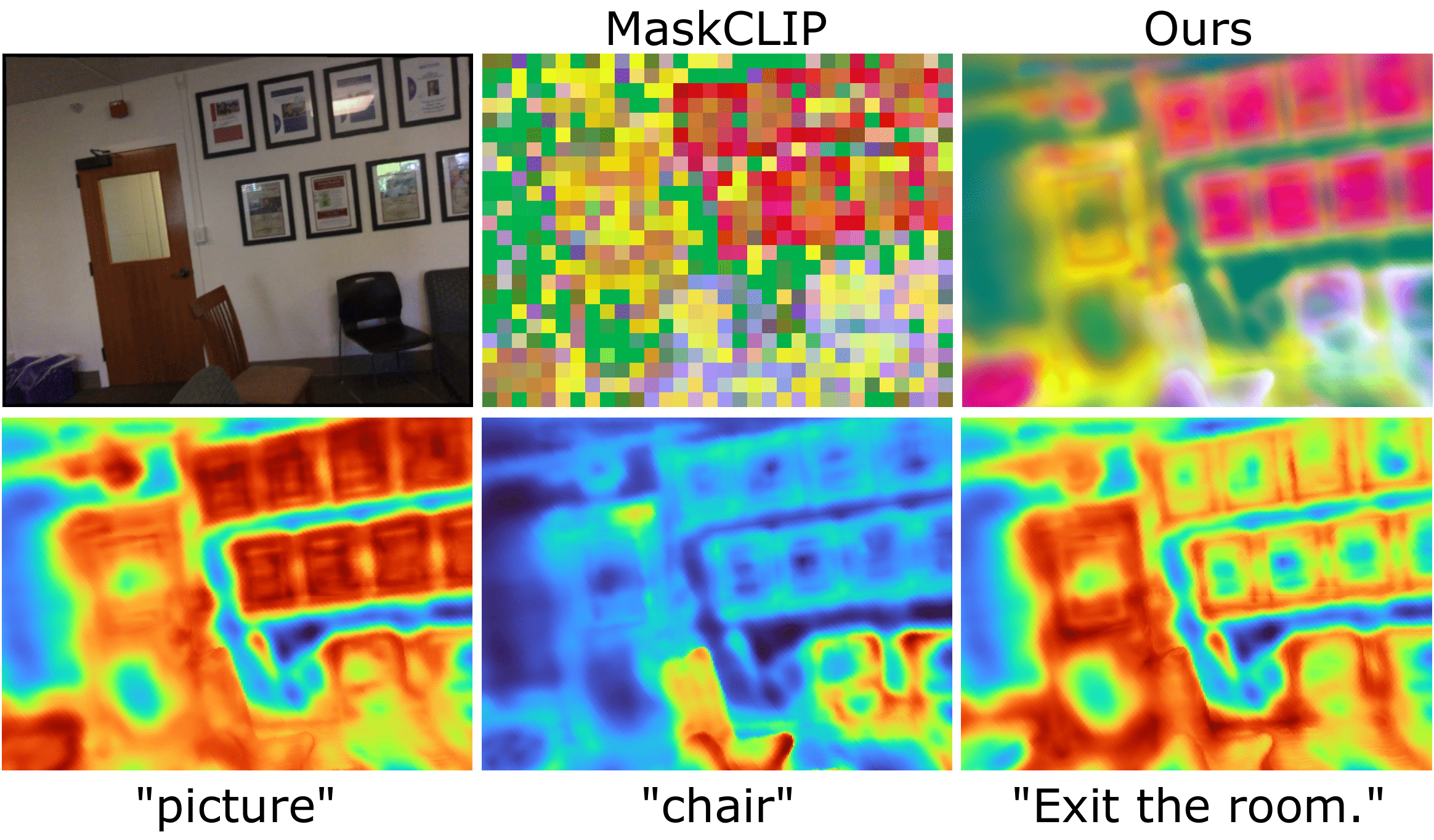

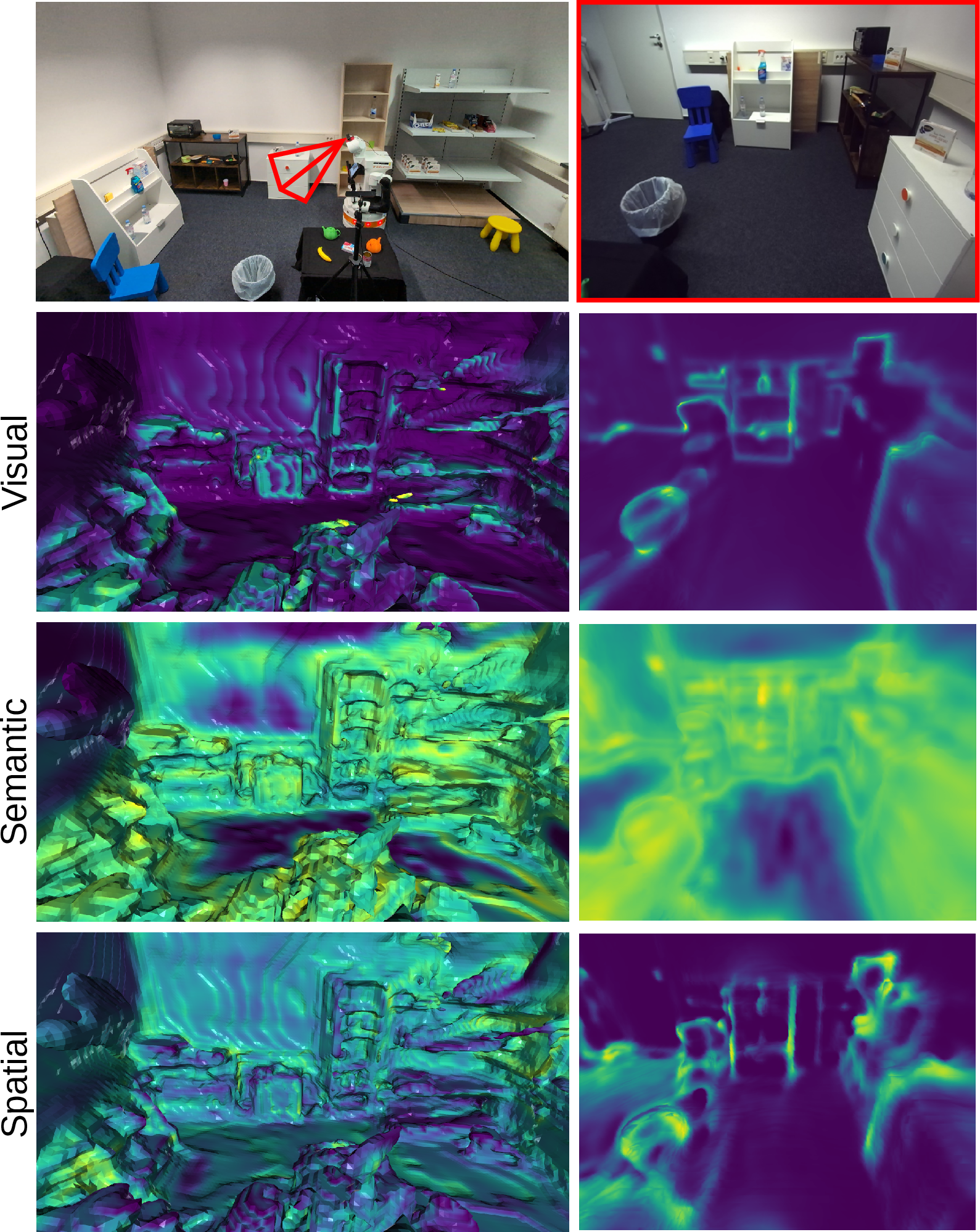

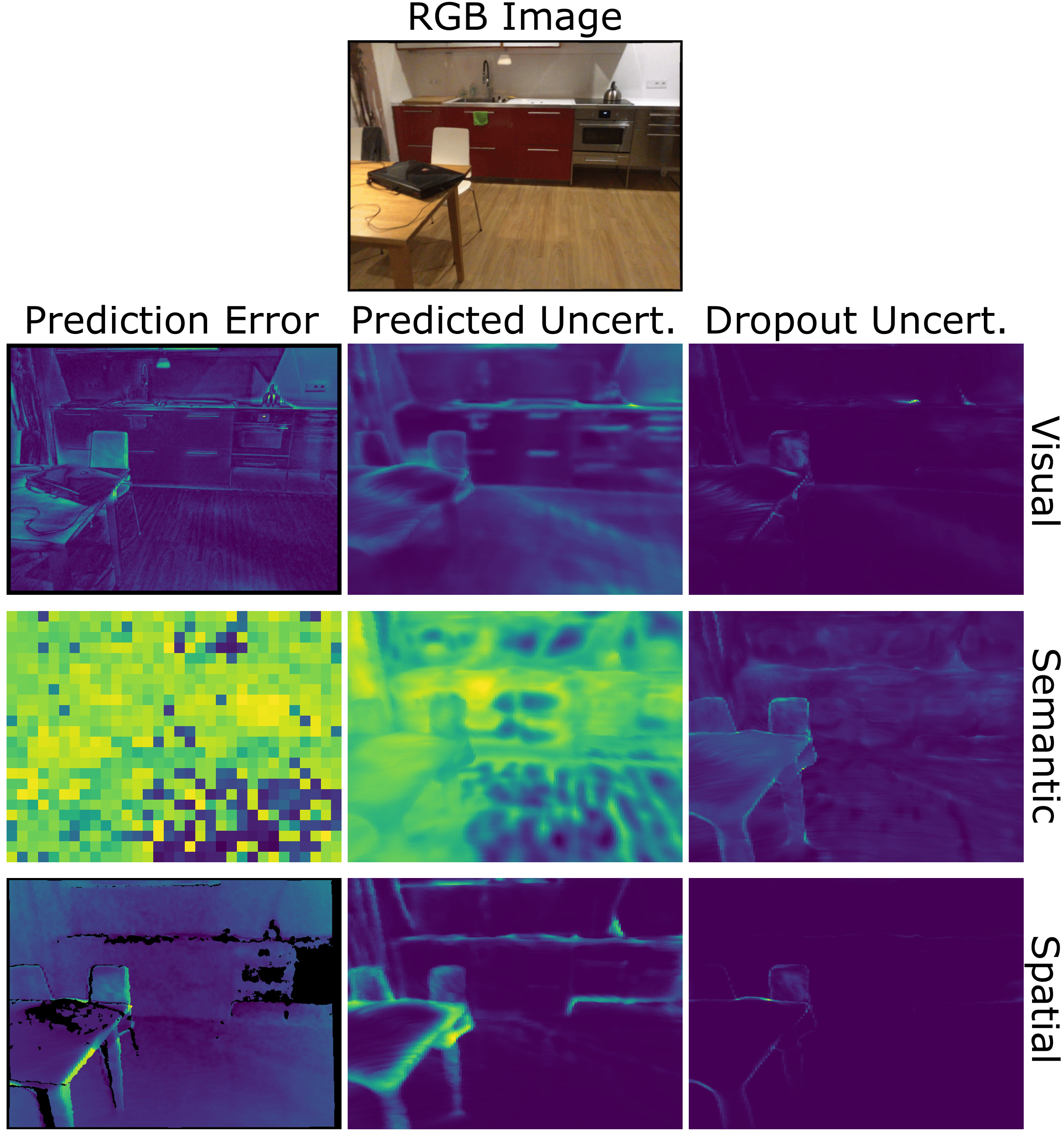

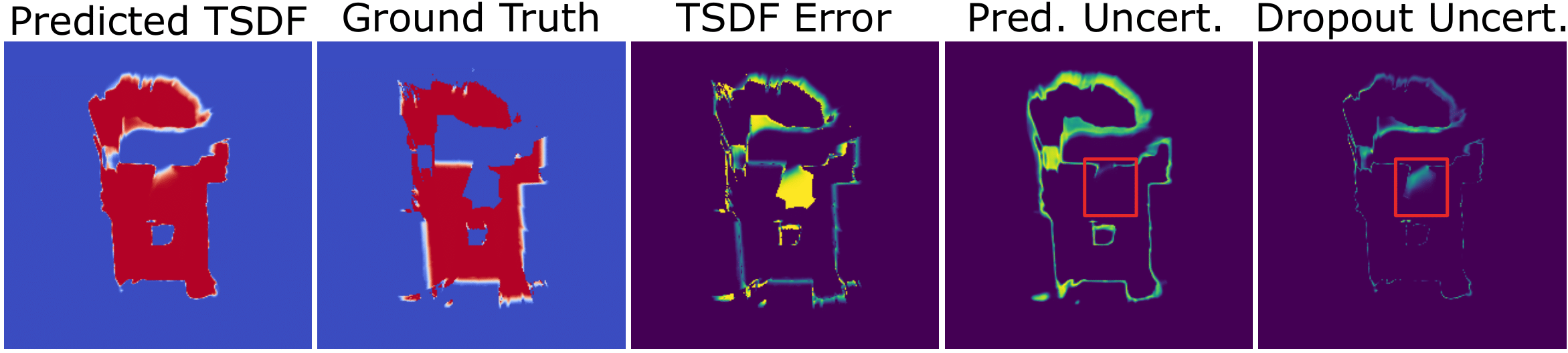

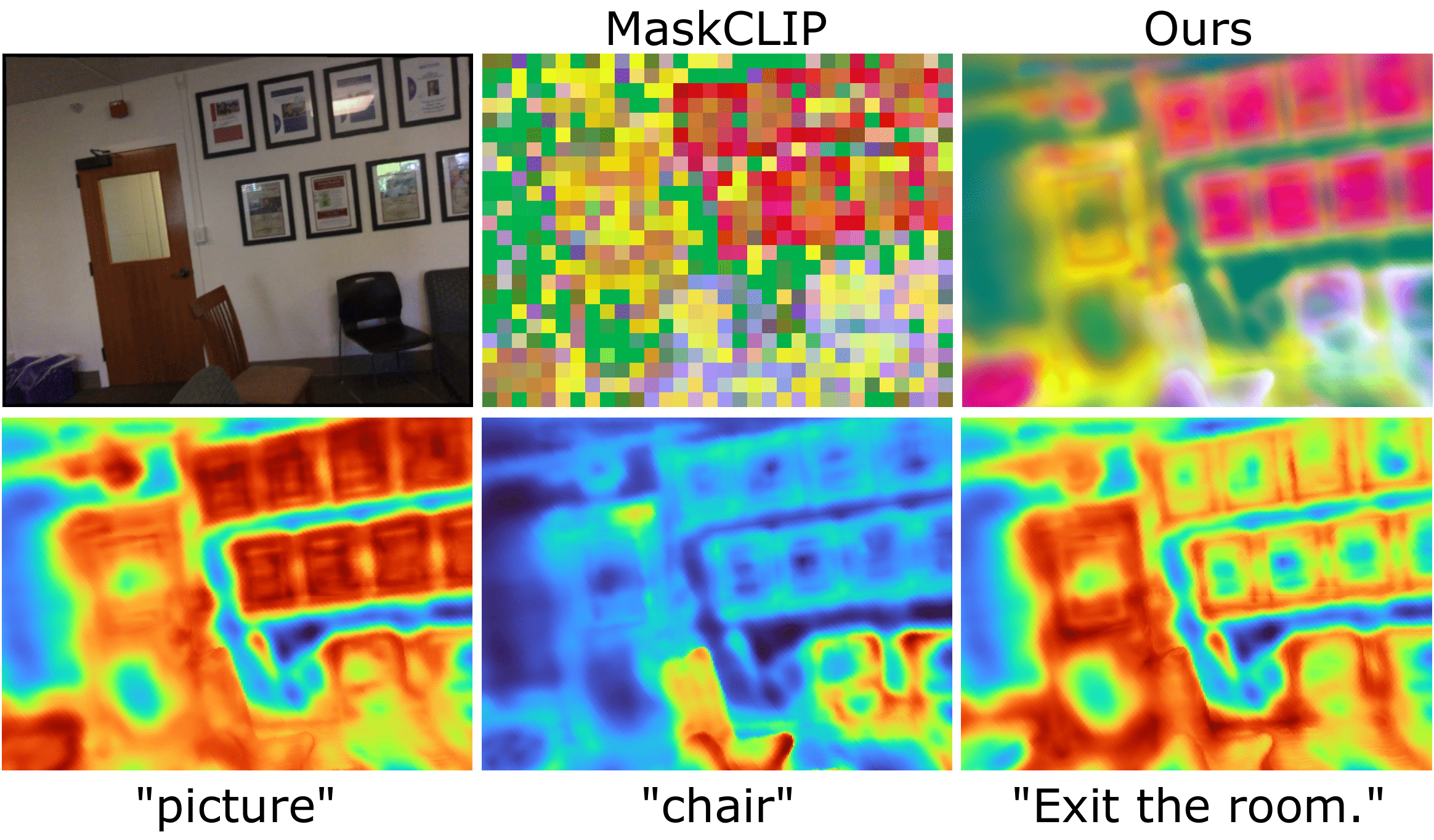

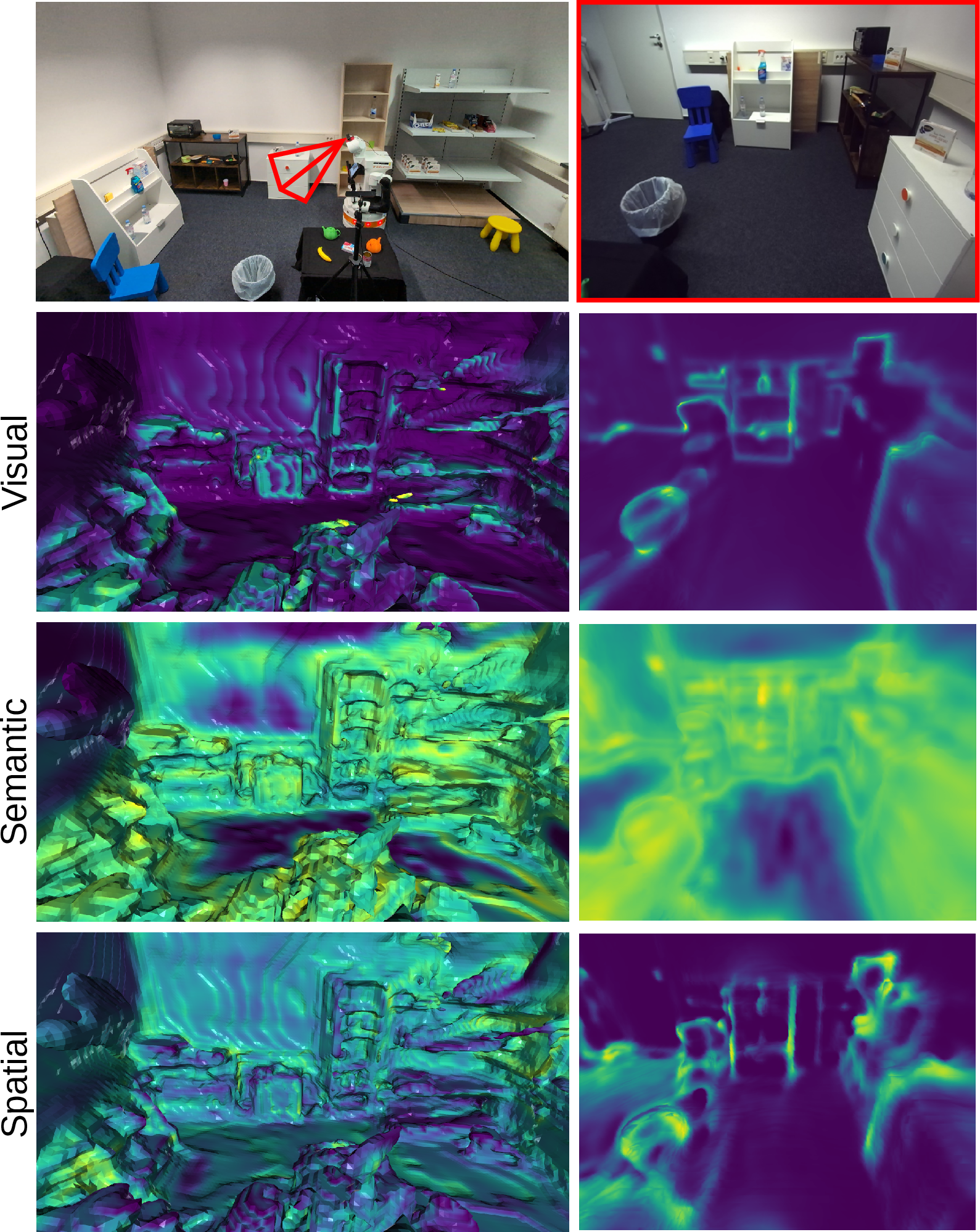

UniFField predicts scene properties using dedicated networks that output a mean and a variance for the RGB, semantic, and geometric attributes, which makes the uncertainty of each prediction directly available. The uncertainty covers the visual, semantic, and spatial modalities and is visualized as color maps that highlight where prediction errors are likely, giving insight into the error-prone regions of the predicted scene (Figures 2 and 3).

Figure 2: 2D uncertainty visualization highlighting prediction error correlation.

Figure 3: 3D spatial uncertainty with significant correlation to the TSDF error.
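
A head that outputs both a mean and a variance is commonly trained with a Gaussian negative log-likelihood. The sketch below shows that standard loss as an illustration only; the summary does not state which loss UniFField actually uses, and the function name and the log-variance parameterization are assumptions.

```python
import numpy as np

def gaussian_nll(target, mean, log_var):
    """Per-element negative log-likelihood of `target` under N(mean, exp(log_var)).

    Predicting the log-variance (an assumption of this sketch) keeps the
    variance positive without needing an explicit constraint.
    """
    target = np.asarray(target, dtype=float)
    mean = np.asarray(mean, dtype=float)
    log_var = np.asarray(log_var, dtype=float)
    squared_error = (target - mean) ** 2
    return 0.5 * (log_var + squared_error / np.exp(log_var) + np.log(2.0 * np.pi))
```

Under this loss, a large error is penalized less when the predicted variance is also large, which is what encourages the predicted variance to track the actual prediction error.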

Deriving the uncertainties from both epistemic and aleatoric sources reduces the need for large dropout ensembles, which improves computational efficiency while keeping the error predictions accurate.
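
One common way to combine the two sources is the law of total variance over a small number of stochastic forward passes. The sketch below shows that textbook decomposition; it is an assumption about how such estimates can be combined, not the paper's exact formulation, and `decompose_uncertainty` is a hypothetical name.

```python
import numpy as np

def decompose_uncertainty(means, variances):
    """Split predictive variance into aleatoric and epistemic parts.

    means, variances: arrays of shape (S, ...) holding the predicted mean and
    predicted variance from S stochastic forward passes (e.g. with dropout).
    Returns (aleatoric, epistemic, total), each with shape (...).
    """
    means = np.asarray(means, dtype=float)
    variances = np.asarray(variances, dtype=float)
    aleatoric = variances.mean(axis=0)  # average predicted data noise
    epistemic = means.var(axis=0)       # disagreement between passes
    return aleatoric, epistemic, aleatoric + epistemic
```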

Experimental Evaluation

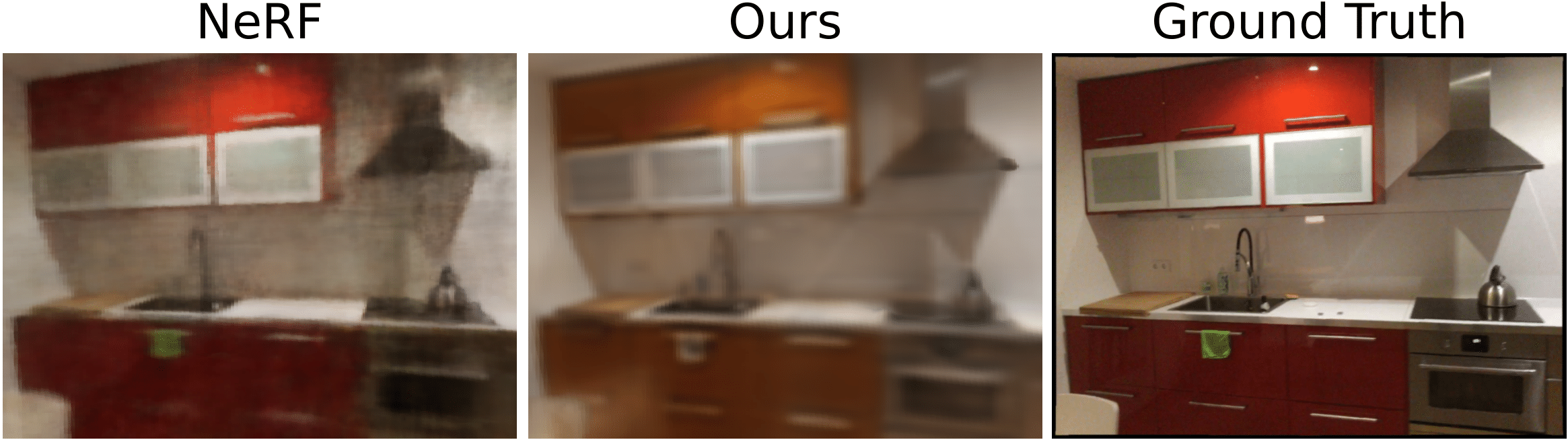

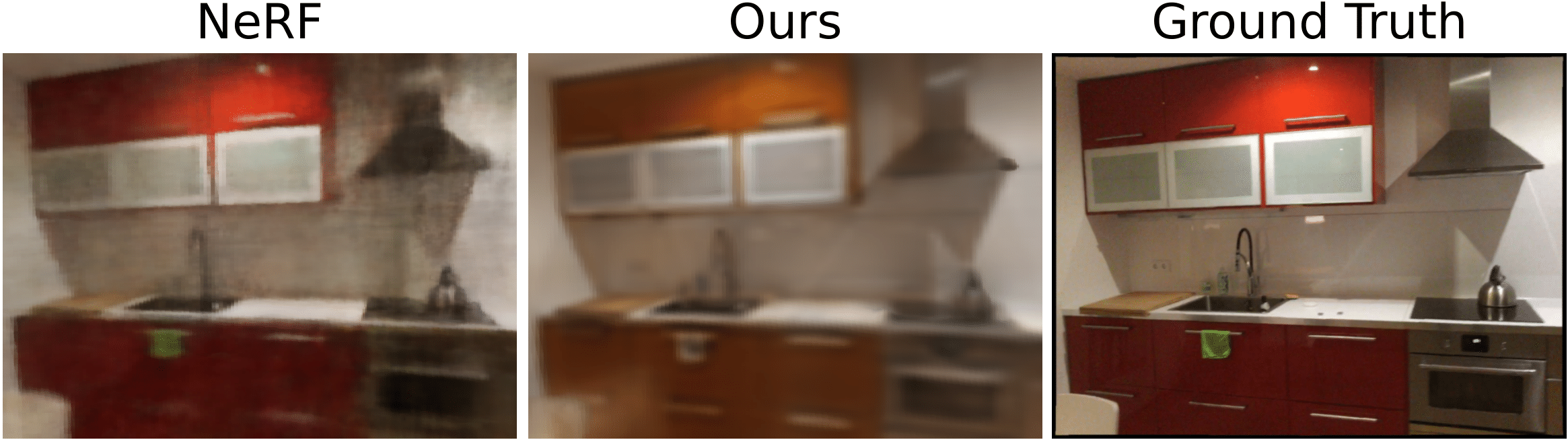

Experimental validation on the ScanNet dataset demonstrates UniFField's proficiency in scene understanding and uncertainty prediction. In comparative analyses, the synthesized outputs show effective scene reconstruction and semantic agreement, the latter measured by cosine similarity against features from vision-language models such as CLIP (Figure 4).

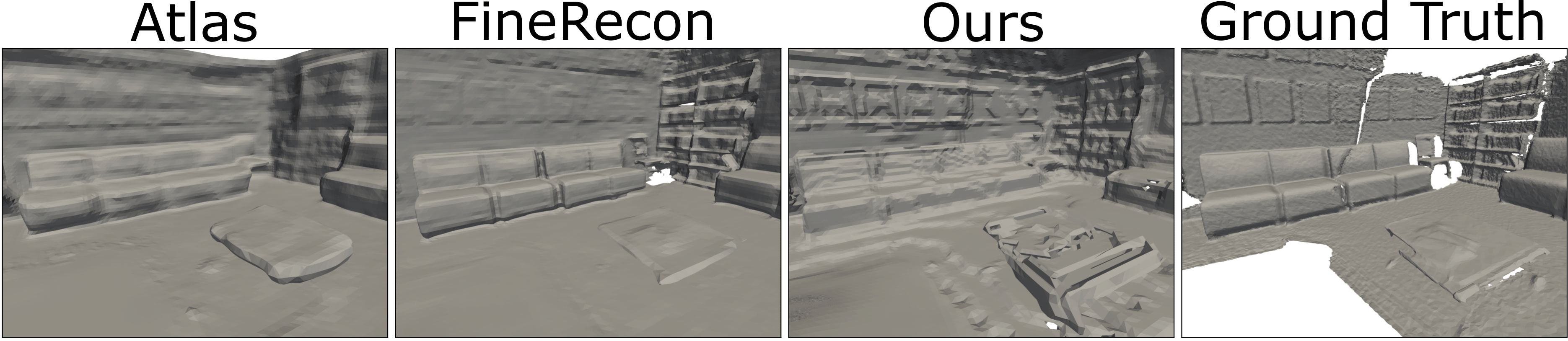

Figure 4: Novel view synthesis contrasting UniFField's zero-shot capabilities against NeRF techniques.

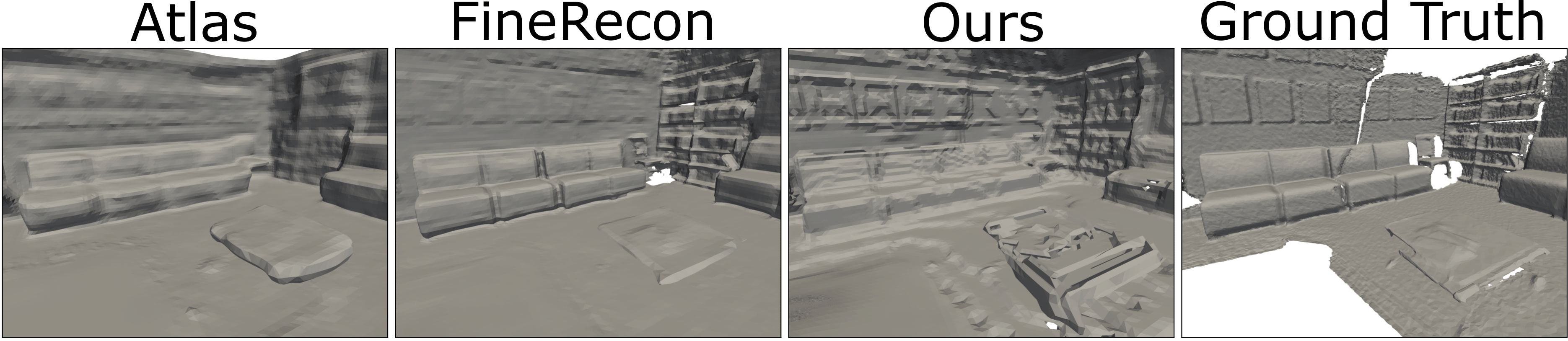

The reconstruction metrics show close alignment with the geometric ground truth: UniFField outperforms prior models such as Atlas in visual quality and depth accuracy, albeit at the potential expense of noisier geometry in some cases. Real-time integration with mobile manipulator robots further illustrates UniFField's applicability (Figure 5).

Figure 5: 2D and 3D uncertainty predictions showcasing spatial consistency.

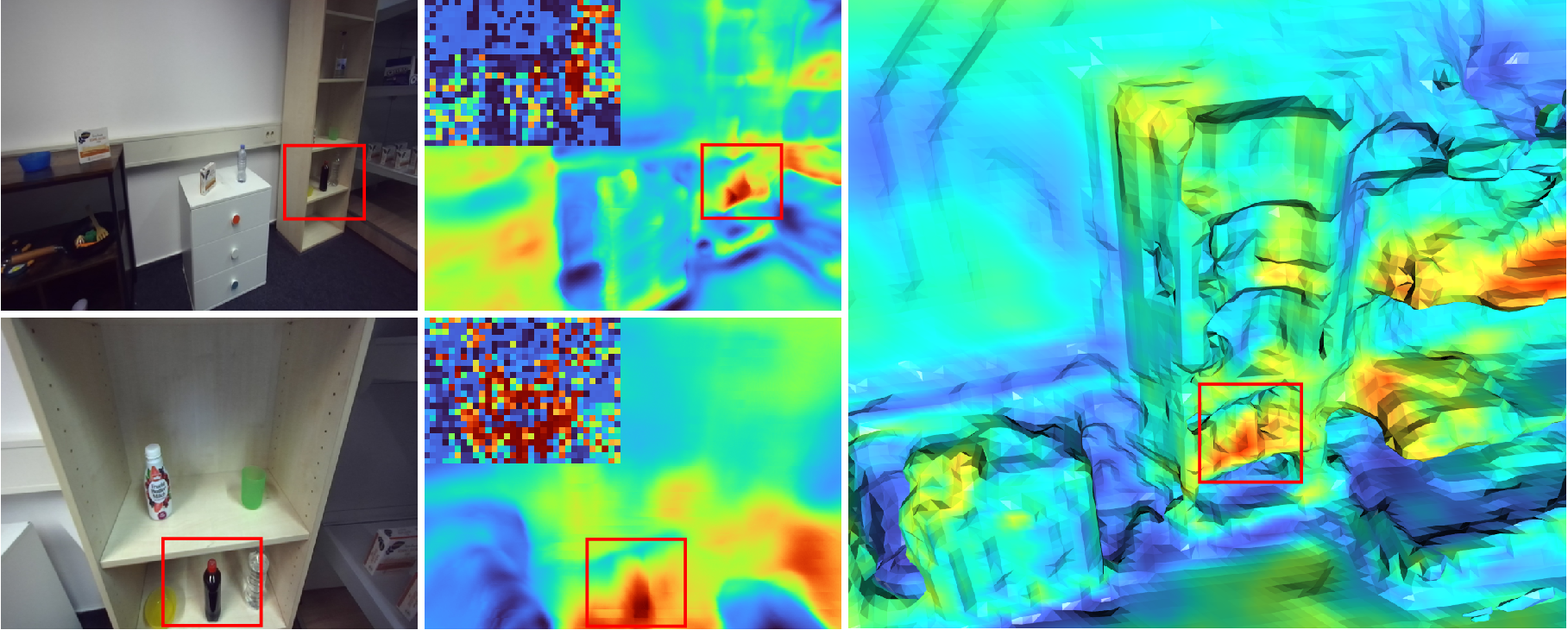

Active Object Search Scenario

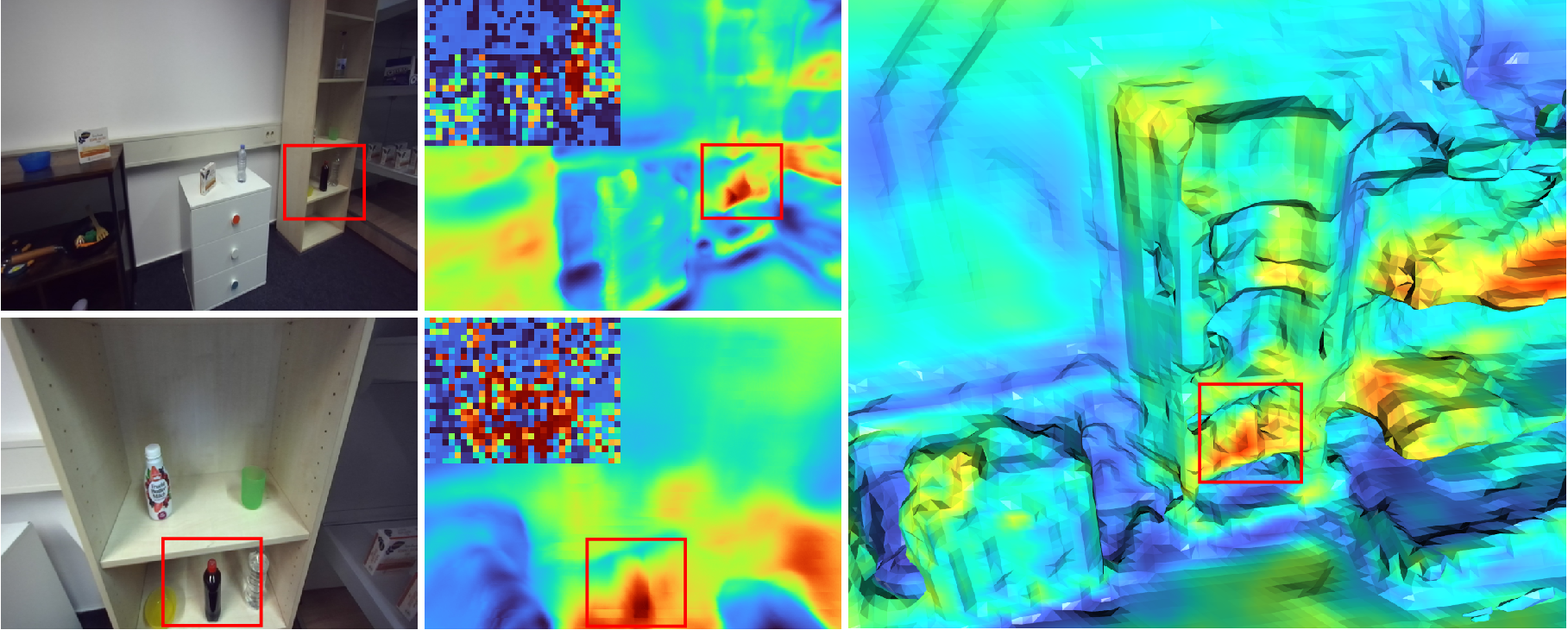

In practice, UniFField proves useful in perception-guided robotic tasks. By computing language-based similarity in 3D space, the model relies on semantic priors from CLIP features to localize objects, which supports better decisions in active object search scenarios (Figure 6).

Figure 6: Object localization using language-based similarity search in 2D and 3D.
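
The language-based similarity search can be sketched as a cosine similarity between a text embedding and the per-voxel semantic features. The sketch assumes both already live in the same embedding space (for example, CLIP space) and are supplied as plain arrays; `cosine_similarity_field` is a hypothetical helper, not an API from the paper or from CLIP.

```python
import numpy as np

def cosine_similarity_field(voxel_feats, text_embedding, eps=1e-8):
    """Cosine similarity between each voxel feature and one text embedding.

    voxel_feats:    (N, D) semantic features, one row per voxel
    text_embedding: (D,) embedding of the language query
    Returns an (N,) array of similarities in [-1, 1].
    """
    voxel_feats = np.asarray(voxel_feats, dtype=float)
    text_embedding = np.asarray(text_embedding, dtype=float)
    # Normalize both sides; eps guards against zero-length vectors.
    v = voxel_feats / (np.linalg.norm(voxel_feats, axis=1, keepdims=True) + eps)
    t = text_embedding / (np.linalg.norm(text_embedding) + eps)
    return v @ t
```

The voxel with the highest similarity, `int(np.argmax(sims))`, would then be the most likely location of the queried object.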

Incorporating the uncertainty estimates improves exploration by giving more weight to regions of high uncertainty, which focuses the search on informative parts of the scene while adding only minor computational overhead.
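
One simple way to weight uncertain regions is an upper-confidence-bound style score that adds a multiple of the predicted standard deviation to the similarity. This is an illustrative heuristic under stated assumptions, not the scoring rule used in the paper; the function name and the `beta` trade-off parameter are hypothetical.

```python
import numpy as np

def exploration_score(similarity, variance, beta=1.0):
    """Score candidate regions by similarity plus an uncertainty bonus.

    similarity: (N,) language-query similarity per candidate region
    variance:   (N,) predicted (non-negative) semantic variance per region
    beta:       weight of the uncertainty bonus; 0 ignores uncertainty
    """
    similarity = np.asarray(similarity, dtype=float)
    variance = np.asarray(variance, dtype=float)
    return similarity + beta * np.sqrt(variance)

def select_next_target(similarity, variance, beta=1.0):
    """Return the index of the candidate with the highest exploration score."""
    return int(np.argmax(exploration_score(similarity, variance, beta)))
```

With `beta=0` the robot goes straight to the best current match; larger values make it prefer regions whose predictions are still uncertain.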

Conclusion and Future Prospects

UniFField is a significant step toward generalizable neural feature fields, achieving robust scene interpretation without sacrificing adaptation performance. Future work could explore optimization strategies for the uncertainty models and real-time applicability in larger, more complex environments beyond indoor settings. The foundation UniFField provides could also be adapted to other dynamic, real-world scenarios.