- The paper introduces a parameter-efficient fine-tuning method that integrates LoRA with functionally enhanced rank-1 matrices to mitigate catastrophic forgetting.

- It applies trigonometric and exponential transformations to the adaptation matrices of U-Net convolutional layers and is evaluated on incremental CIFAR10, CIFAR100, and ImageNet100 tasks.

- In the ablations, cosine functions with learnable hyperparameters give the highest accuracy while keeping computational cost and memory usage low.

Deep Generative Continual Learning using Functional LoRA: FunLoRA

Introduction

The paper "Deep Generative Continual Learning using Functional LoRA: FunLoRA" (arXiv:2510.02631) proposes a method for training deep generative models in a continual learning setting. The main challenge it addresses is catastrophic forgetting, in which a model trained incrementally on new data overwrites the representations it learned from earlier data. The paper introduces FunLoRA, a parameter-efficient fine-tuning (PEFT) approach that combines Low-Rank Adaptation (LoRA) with functional conditioning to mitigate forgetting while remaining computationally efficient.

Methodology

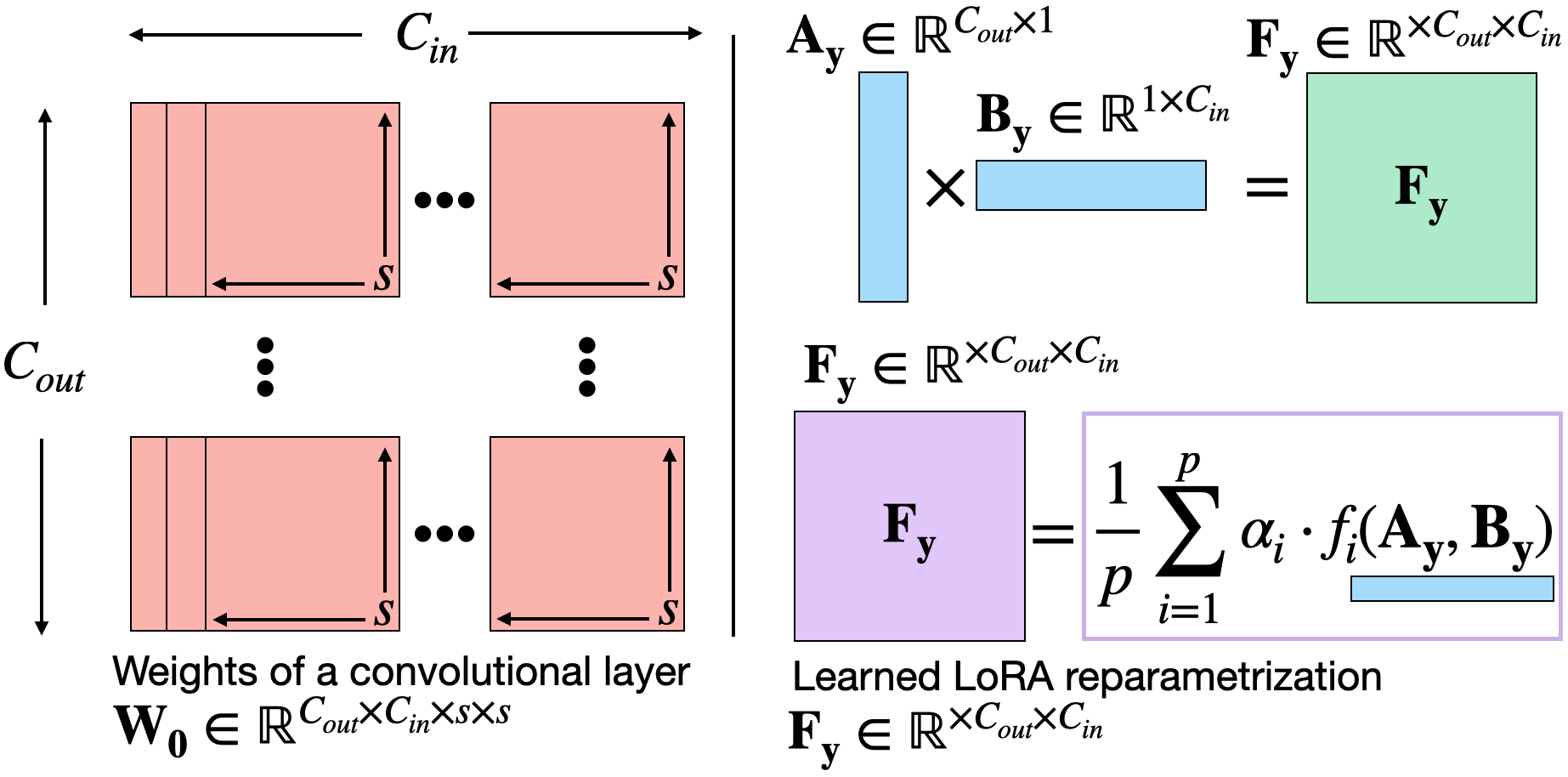

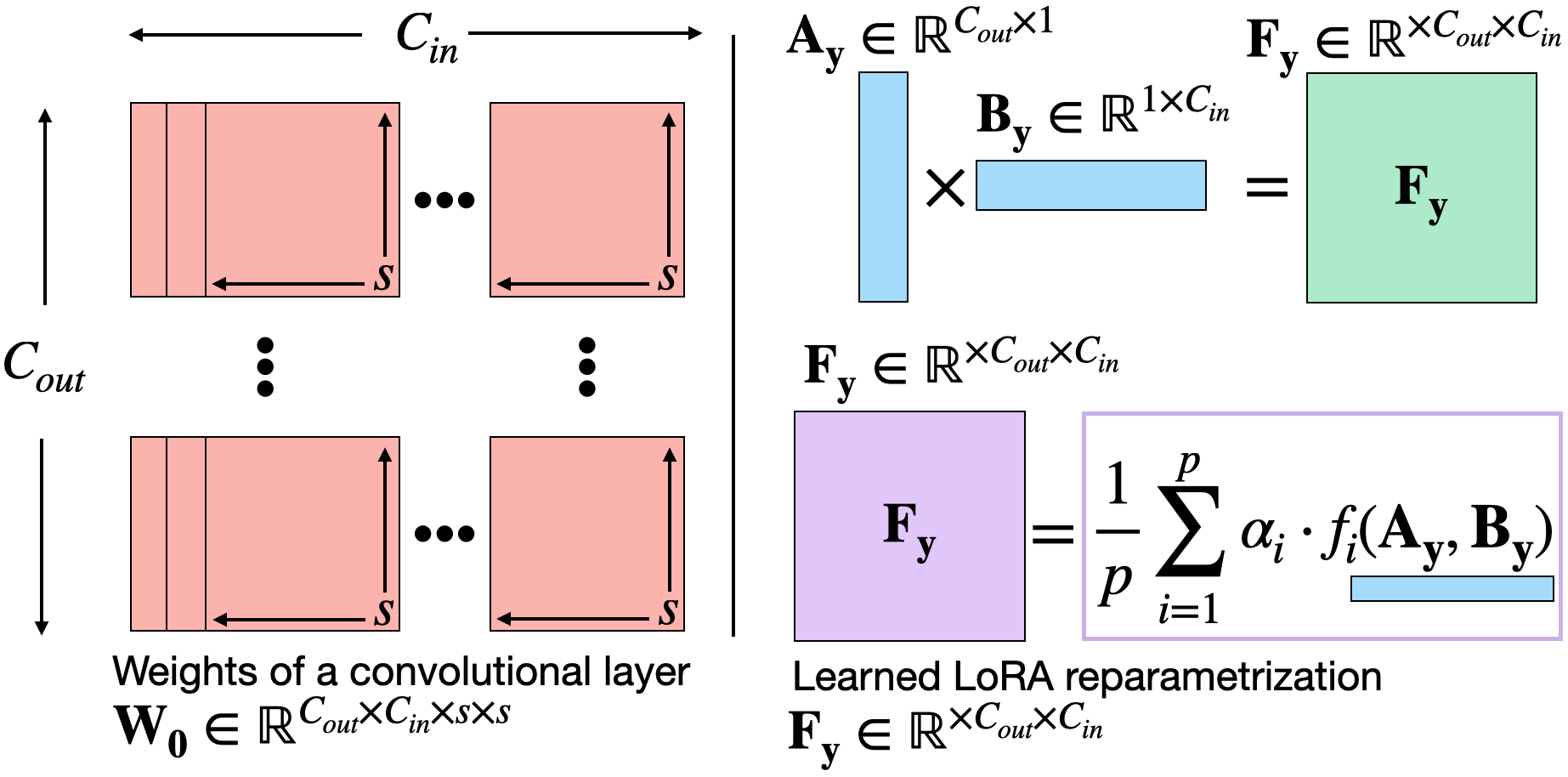

FunLoRA combines LoRA with functional transformations that raise the rank, and therefore the expressiveness, of the adaptation matrices while storing only rank-1 factors. Typical LoRA applications gain expressiveness by using higher-rank factors; FunLoRA instead increases the rank of the update by applying a function to it. The functions considered are trigonometric and exponential, which add expressiveness while keeping the memory and compute cost of each incremental update under control.
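The rank argument can be checked numerically. The sketch below is not the paper's implementation: it assumes the function is applied element-wise to the outer product of two vectors, and the sizes, the seed, and the names `a`, `b`, `outer`, and `functional` are illustrative. The rank grows because the cosine is a sum of even powers, and each element-wise power of a rank-1 matrix is itself a rank-1 matrix with different factors, so the sum is generally no longer rank 1.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: 8 output channels and 6 input channels.
a = rng.normal(size=(8, 1))   # left rank-1 factor
b = rng.normal(size=(1, 6))   # right rank-1 factor

outer = a @ b                 # plain rank-1 update
functional = np.cos(outer)    # element-wise cosine of the same update (assumed form)

rank_plain = np.linalg.matrix_rank(outer)
rank_functional = np.linalg.matrix_rank(functional)
n_params = a.size + b.size    # stored parameters are identical in both cases

print("rank without function:", rank_plain)
print("rank with cosine:", rank_functional)
print("stored parameters:", n_params)
```

Both matrices are built from the same 14 stored numbers; only the transformed one has rank above 1.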

Figure 1: Visualization of the proposed LoRA reparametrization applied to rescale all the filters in a convolutional layer.

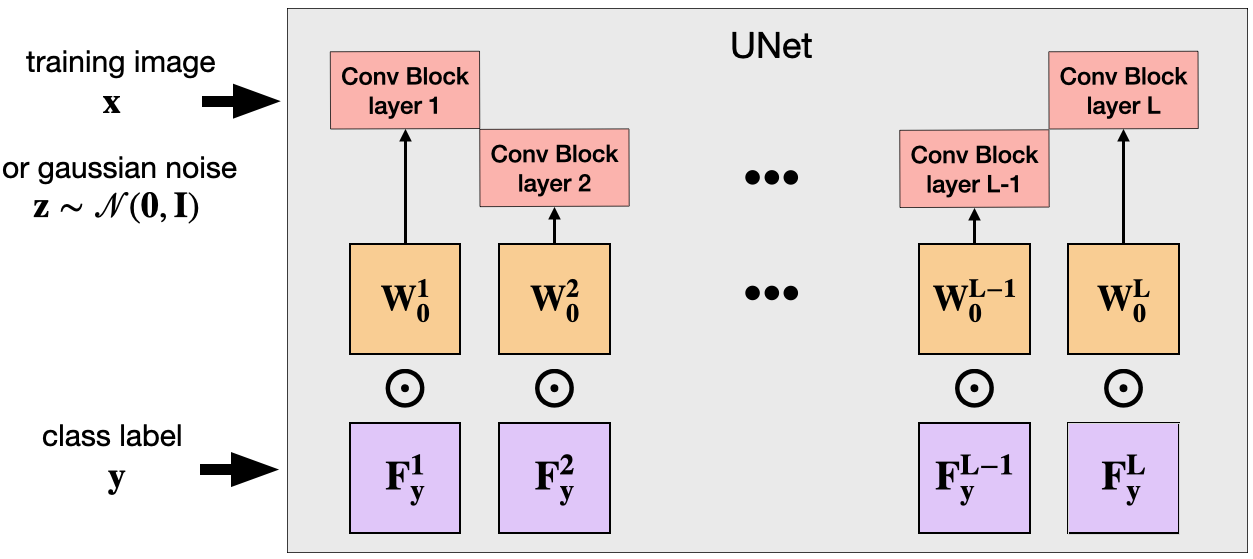

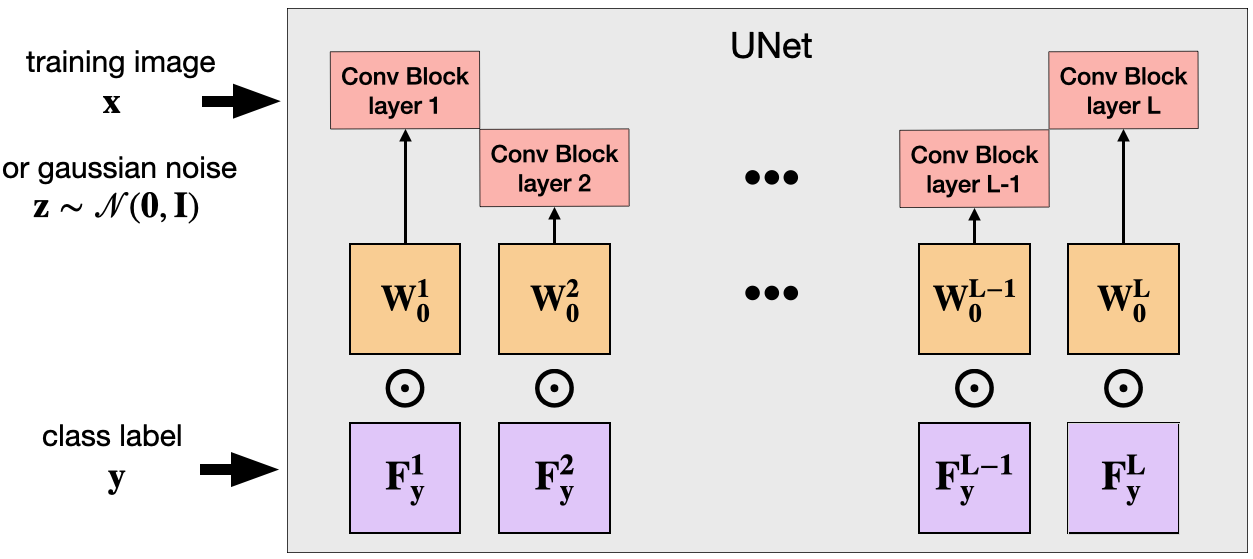

FunLoRA is demonstrated on the convolutional layers of U-Net architectures, which are commonly used in generative models. Each layer is adapted through a class-specific functional matrix so that the model can learn successive tasks more effectively.
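One way to read the filter rescaling described in the Figure 1 caption is as one scalar per (output channel, input channel) filter, taken from the functional matrix of the current class. The sketch below works under that reading and is an assumption, not the paper's code: the cosine form, the frequency `omega`, the helper names `functional_matrix` and `adapt_conv_weight`, and all sizes are hypothetical.

```python
import numpy as np

def functional_matrix(a, b, omega=1.0):
    """Element-wise cosine of the rank-1 product a b^T (illustrative choice)."""
    return np.cos(omega * np.outer(a, b))

def adapt_conv_weight(weight, a, b, omega=1.0):
    """Rescale every k x k filter of a frozen conv weight by one scalar."""
    scale = functional_matrix(a, b, omega)       # shape (C_out, C_in)
    return weight * scale[:, :, None, None]      # broadcast over the k x k kernel

rng = np.random.default_rng(0)
c_out, c_in, k, n_classes = 16, 8, 3, 10         # hypothetical layer and class count

frozen_weight = rng.normal(size=(c_out, c_in, k, k))   # shared, never updated
class_a = rng.normal(size=(n_classes, c_out))          # one left vector per class
class_b = rng.normal(size=(n_classes, c_in))           # one right vector per class

y = 3                                            # class used for conditioning
adapted = adapt_conv_weight(frozen_weight, class_a[y], class_b[y])

params_per_class = c_out + c_in                  # stored per class for this layer
full_weight_params = frozen_weight.size          # cost of storing a full copy
print(adapted.shape, params_per_class, full_weight_params)
```

Under these assumptions each class adds `c_out + c_in` numbers per layer, compared with `c_out * c_in * k * k` for a separate copy of the weights.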

Experimental Results

The experiments use CIFAR10, CIFAR100, and ImageNet100, each split into incremental learning tasks, to evaluate how well the model resists catastrophic forgetting. FunLoRA is reported to outperform the baselines and competing methods on all three datasets, reaching higher classification accuracy with significantly fewer parameters.
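Splitting a dataset into incremental tasks usually means partitioning its class labels into disjoint groups that are presented one after another. A minimal sketch of such a partition follows. The task counts are assumptions, since the summary does not state how many tasks each dataset is split into, and `split_classes_into_tasks` is a hypothetical helper.

```python
def split_classes_into_tasks(n_classes, n_tasks):
    """Partition class ids 0..n_classes-1 into equally sized, disjoint tasks."""
    if n_classes % n_tasks != 0:
        raise ValueError("n_classes must be divisible by n_tasks")
    per_task = n_classes // n_tasks
    return [list(range(t * per_task, (t + 1) * per_task)) for t in range(n_tasks)]

# Assumed setting: a 10-class dataset presented as 5 tasks of 2 classes each.
tasks = split_classes_into_tasks(10, 5)
for task_id, class_ids in enumerate(tasks):
    print(f"task {task_id}: classes {class_ids}")
```

During training on a given task, only samples whose labels fall in that task's class list would be available to the model.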

Extensive ablations were conducted to examine the influence of various functional configurations like power, cosine, and circular shifts on model performance. The cosine function, particularly when its hyperparameters were learnable, achieved the highest accuracy, indicating its utility in enhancing LoRA’s adaptability without compromising computational efficiency.

Figure 2: Illustration of the U-Net whose convolutional layers are adapted using the class-conditional functional matrix $F_y^l$.

Implications

The findings suggest that FunLoRA can strengthen continual learning in generative models by incorporating new knowledge with minimal memory overhead. This makes generative models more practical in settings that require rapid adaptation and scalability without extensive retraining, such as real-time data analysis and online learning.

Conclusion

FunLoRA addresses catastrophic forgetting in generative models trained under continual learning. By applying functions to rank-1 LoRA matrices, it balances expressiveness against resource efficiency. The work contributes to the understanding of low-rank adaptation in this setting and points toward practical applications in which continual learning is integral. Future work could explore more complex functional transformations and extend the approach to other types of neural architectures.