- The paper finds that human-like memory decay in transformers enhances language learning with improved validation loss and BLiMP accuracy.

- It introduces a novel non-trainable decay mechanism in the self-attention layer to simulate cognitive echoic memory.

- However, the fleeting memory models underperform in predicting human reading times, exposing a trade-off in model design.

The paper "Human-like fleeting memory improves language learning but impairs reading time prediction in transformer LLMs" (2508.05803) examines how memory constraints affect transformer LLMs in two respects: language learning and the prediction of human reading times. It revisits classic cognitive theories that propose memory limitations as a possible enabler of language acquisition, and it tests whether such limitations can be built into modern neural architectures.

Cognitive Motivation and Theoretical Background

Human memory is limited: during language processing, the exact form of a word decays rapidly. Cognitive theories, many of them rooted in classical connectionist models, argue that these limitations may benefit language acquisition by promoting abstraction and directing the learner toward immediate, relevant linguistic cues [elman1993learning, christiansen_now-or-never_2016]. State-of-the-art models such as transformers, however, typically have perfect memory within their context windows, which seems to challenge these ideas [hu2020systematic, linzen2021syntactic].

Method of Simulating Human-Like Memory

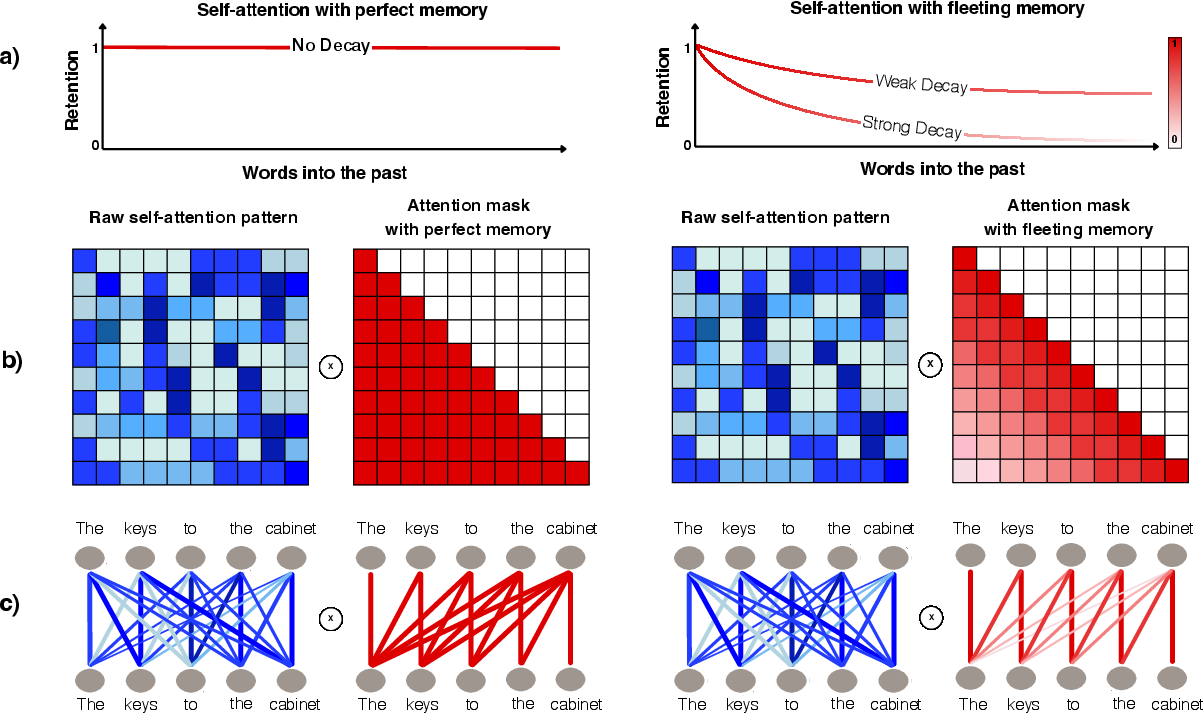

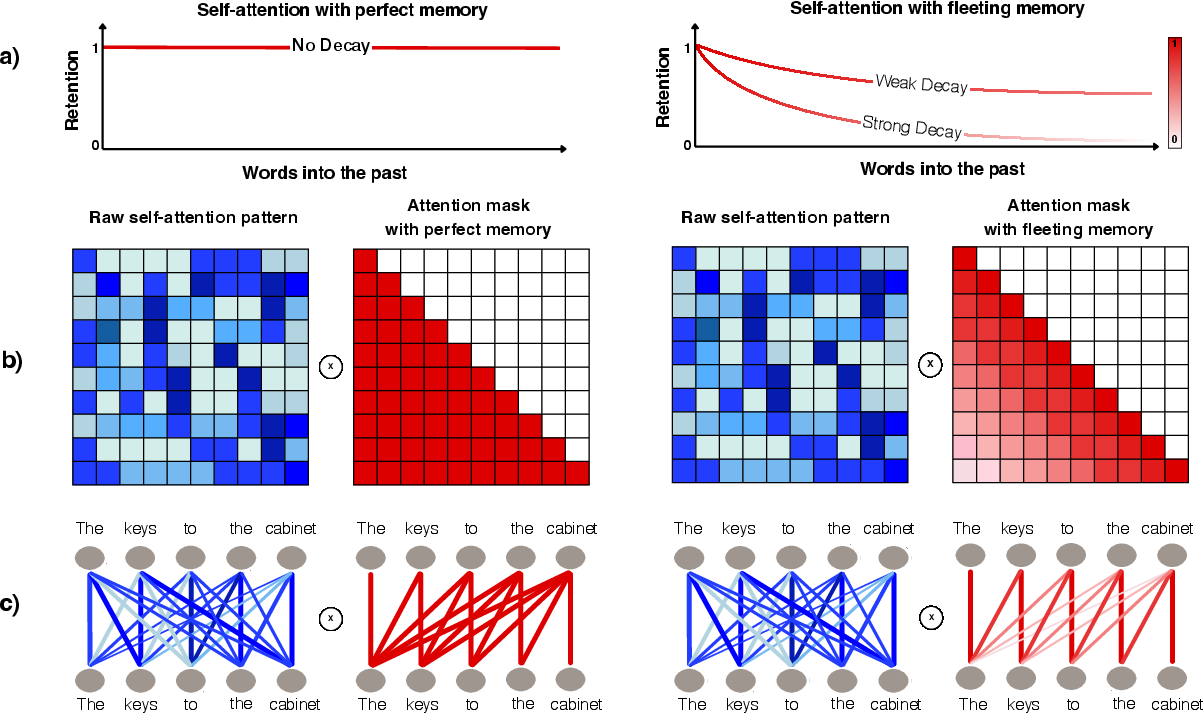

The study introduces a modification to the transformer architecture termed "fleeting memory transformers," which builds a decay mechanism into the self-attention operation to simulate memory loss (Figure 1). The decay is applied through a non-trainable matrix B, which reduces attention weights as a function of token distance. An "echoic memory buffer" models a short span of perfect retention before the gradual loss sets in.

Figure 1: A comparison between standard Transformers' context retention and fleeting memory implementation demonstrating decreasing retention as a function of distance.
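
The decay mechanism described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the paper's implementation: the power-law shape of the decay, the `buffer` and `rate` parameters, the choice to apply B to the post-softmax weights, and the renormalization step are all hypothetical choices made here for concreteness. Only the general idea (a fixed, distance-based matrix B with an initial span of perfect retention) comes from the description above.

```python
import numpy as np

def retention_matrix(seq_len, buffer=2, rate=0.5):
    """Fixed (non-trainable) retention weights B[i, j] for query i and key j.

    Assumed form, for illustration only: retention is 1.0 for distances up to
    `buffer` (the echoic buffer), then falls off as a power law of the
    distance beyond the buffer. Future positions get 0.0 (causal masking).
    """
    idx = np.arange(seq_len)
    dist = idx[:, None] - idx[None, :]        # i - j; negative means a future token
    beyond = np.maximum(dist - buffer, 0)     # how far past the buffer the key lies
    B = (1.0 + beyond) ** (-rate)             # 1.0 inside the buffer, decaying after
    return np.where(dist >= 0, B, 0.0)

def fleeting_attention(Q, K, V, B):
    """Single-head causal attention whose weights are scaled by B.

    Assumption: B multiplies the softmax weights, which are then renormalized
    so that each row sums to 1. Other placements of B are possible.
    """
    d = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d)
    scores = np.where(B > 0, scores, -np.inf)             # causal mask
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    weights = weights * B                                 # distance-based decay
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ V, weights
```

Because B is computed once from positions alone, it adds no trainable parameters. Setting `rate=0.0` in this sketch recovers ordinary causal attention, which gives a convenient perfect-memory control.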

Empirical Findings: Language Learning vs. Reading Time Prediction

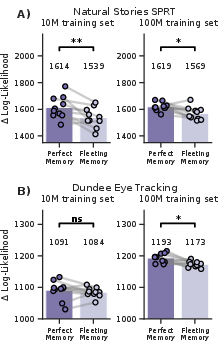

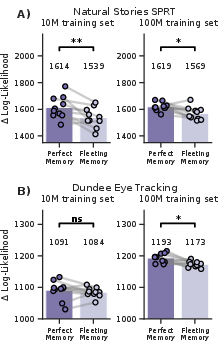

In experiments with transformers trained on BabyLM, a scaled-down dataset that approximates developmental data exposure, the fleeting memory models consistently outperformed perfect-memory controls on language modeling and syntactic tasks. The result held across multiple configurations and on larger datasets, with improvements in both validation loss and syntactic evaluation metrics such as BLiMP accuracy (Figure 2).

Figure 2: Improvements in validation loss and BLiMP accuracy for fleeting memory models compared to perfect memory models, indicating enhanced language learning capabilities.
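
BLiMP is a benchmark of minimal pairs: each item contrasts a grammatical sentence with a minimally different ungrammatical one, and a model is counted as correct when it assigns the grammatical sentence the higher probability. The sketch below shows that scoring rule. The function names and the input format (per-token log-probabilities already extracted from a model) are assumptions made for illustration.

```python
def sentence_logprob(token_logprobs):
    """Log-probability of a sentence as the sum of its token log-probabilities."""
    return sum(token_logprobs)

def minimal_pair_accuracy(pairs):
    """Fraction of pairs where the grammatical sentence scores higher.

    `pairs` is an iterable of (logprob_grammatical, logprob_ungrammatical).
    Ties count as incorrect in this sketch.
    """
    pairs = list(pairs)
    if not pairs:
        raise ValueError("need at least one minimal pair")
    correct = sum(1 for good, bad in pairs if good > bad)
    return correct / len(pairs)
```

Because only the ordering within each pair matters, the metric is insensitive to how well calibrated the model's absolute probabilities are.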

Conversely, the same models were worse at predicting human reading times on two datasets, the Natural Stories and Dundee corpora. Despite their superior language modeling scores, the fleeting memory transformers predicted reading behavior less accurately than their perfect-memory counterparts, suggesting a complex interaction between model architecture and psycholinguistic tasks (Figure 3).

Figure 3: Differences in predictive accuracy for human reading times, showing that fleeting memory models fall short of perfect memory models.
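
This summary does not state how predictive accuracy was measured. One common approach in the reading-time literature is to compute each word's surprisal under the language model and ask how much adding surprisal improves a regression on reading times over a baseline with control predictors. The sketch below illustrates that idea with ordinary least squares and an in-sample log-likelihood difference. The function names, the choice of OLS, and the baseline features are assumptions, not details taken from the paper.

```python
import numpy as np

def surprisal_bits(probs):
    """Surprisal in bits: -log2 of the model's probability for each word."""
    return -np.log2(np.asarray(probs, dtype=float))

def gaussian_loglik(y, X):
    """Log-likelihood of an OLS fit with Gaussian noise (MLE variance)."""
    X = np.column_stack([np.ones(len(y)), X])       # add an intercept column
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    sigma2 = np.mean(resid ** 2)
    n = len(y)
    return -0.5 * n * (np.log(2 * np.pi * sigma2) + 1.0)

def delta_loglik(reading_times, baseline_features, surprisal):
    """Gain in log-likelihood from adding surprisal to the baseline regression."""
    y = np.asarray(reading_times, dtype=float)
    base = np.asarray(baseline_features, dtype=float).reshape(len(y), -1)
    full = np.column_stack([base, surprisal])
    return gaussian_loglik(y, full) - gaussian_loglik(y, base)
```

Under this kind of metric, a model can have lower validation loss yet yield a smaller gain if its surprisal values track human processing difficulty less closely. In practice the comparison is usually made on held-out data rather than in-sample as in this sketch.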

Theoretical Implications and Future Directions

This study reinforces the cognitive perspective that certain memory limitations can indeed serve as beneficial inductive biases for language acquisition in models. However, it illustrates a paradox where architectural constraints intended to render models more human-like may degrade their ability to simulate human processing behaviors. This dichotomy exemplifies the nuanced relationship between model design and its alignment with human linguistic capabilities.

Notably, the impairment cannot be explained away by superhuman data exposure or by memorization of low-frequency words, which suggests that it reflects an inherent architectural bias rather than a data-driven anomaly. Future work could explore dynamic or content-sensitive memory mechanisms whose retention adapts over time or to the relevance of the input, potentially reconciling the two roles of memory in cognitive modeling.

Conclusion

The research presented in "Human-like fleeting memory improves language learning but impairs reading time prediction in transformer LLMs" (2508.05803) provides valuable insights into the interaction between memory simulation and language modeling. It shows that human-like memory constraints can facilitate learning, but at the cost of less accurate prediction of human reading behavior. These findings invite further exploration of adaptive memory systems that might improve both learning capability and cognitive alignment in LLMs.