- The paper proposes PhotonSplat, a framework that transforms binary SPAD images into precise 3D reconstructions and vivid colorizations.

- It employs a redefined Gaussian splatting model with spatial smoothing regularization to reduce noise and improve scene fidelity.

- Experiments on simulated and real-world datasets report higher PSNR and SSIM than the baselines, underscoring its potential for avoiding motion blur in dynamic scenes.

PhotonSplat: 3D Scene Reconstruction and Colorization from SPAD Sensors

Introduction

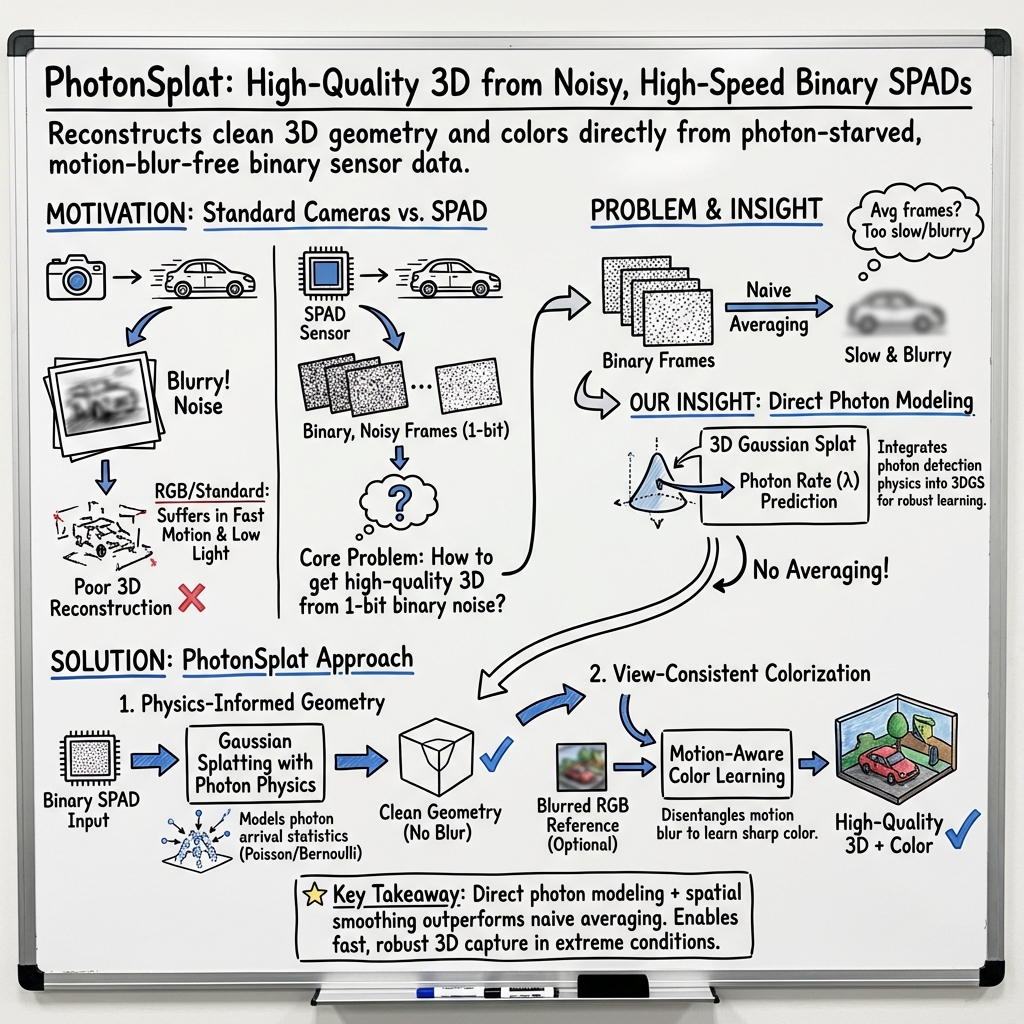

Single-Photon Avalanche Diode (SPAD) arrays offer a way around the persistent problem of motion blur in neural rendering. This paper introduces PhotonSplat, a framework that transforms binary SPAD images into accurate 3D reconstructions while balancing the trade-off between noise and blur. The framework uses a 3D spatial filtering technique to reduce noise in the renderings, and it supports both no-reference and reference-based colorization strategies that benefit downstream tasks such as object detection and scene editing.

Single-Photon Imaging

SPAD cameras count individual photons, which makes them attractive for low-light, high-dynamic-range, and fast-motion scenarios. The same property creates a challenge: photon arrivals are stochastic, so the captured data consists of noisy binary images. Existing methods based on NeRF have demonstrated 3D reconstruction from multi-view SPAD images but often struggle with view smoothness and image fidelity. Gaussian Splatting offers fast training and rendering, and PhotonSplat builds on it with SPAD-specific representations to address these issues.
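To make the binary, stochastic nature of SPAD data concrete, here is a minimal simulation sketch. It assumes the commonly used model in which photon arrivals are Poisson distributed, so a pixel fires with probability `1 - exp(-flux)`. That model, the function name `simulate_binary_frames`, and the example flux values are illustrative assumptions, not details taken from the paper; quantum efficiency and dark counts are ignored.

```python
import numpy as np

def simulate_binary_frames(flux, n_frames, rng):
    """Draw binary SPAD frames for a per-pixel photon flux map.

    flux: expected photons per pixel per exposure (non-negative array).
    Returns a boolean array of shape (n_frames, *flux.shape).
    Assumption: Poisson arrivals, no dark counts, ideal quantum efficiency.
    """
    flux = np.asarray(flux, dtype=np.float64)
    # Probability that at least one photon arrives during the exposure.
    p_detect = 1.0 - np.exp(-flux)
    # Each pixel in each frame is an independent Bernoulli trial.
    return rng.random((n_frames,) + flux.shape) < p_detect

rng = np.random.default_rng(0)
flux = np.array([[0.05, 0.5], [1.0, 3.0]])  # hypothetical flux values
frames = simulate_binary_frames(flux, n_frames=20000, rng=rng)

# Averaging many binary frames approaches the detection probability.
mean_frame = frames.mean(axis=0)
```

A single frame is almost pure noise at low flux, while the average over many frames converges to the detection probability. Averaging over time, however, is exactly what reintroduces motion blur in a dynamic scene.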

Methodological Advancements

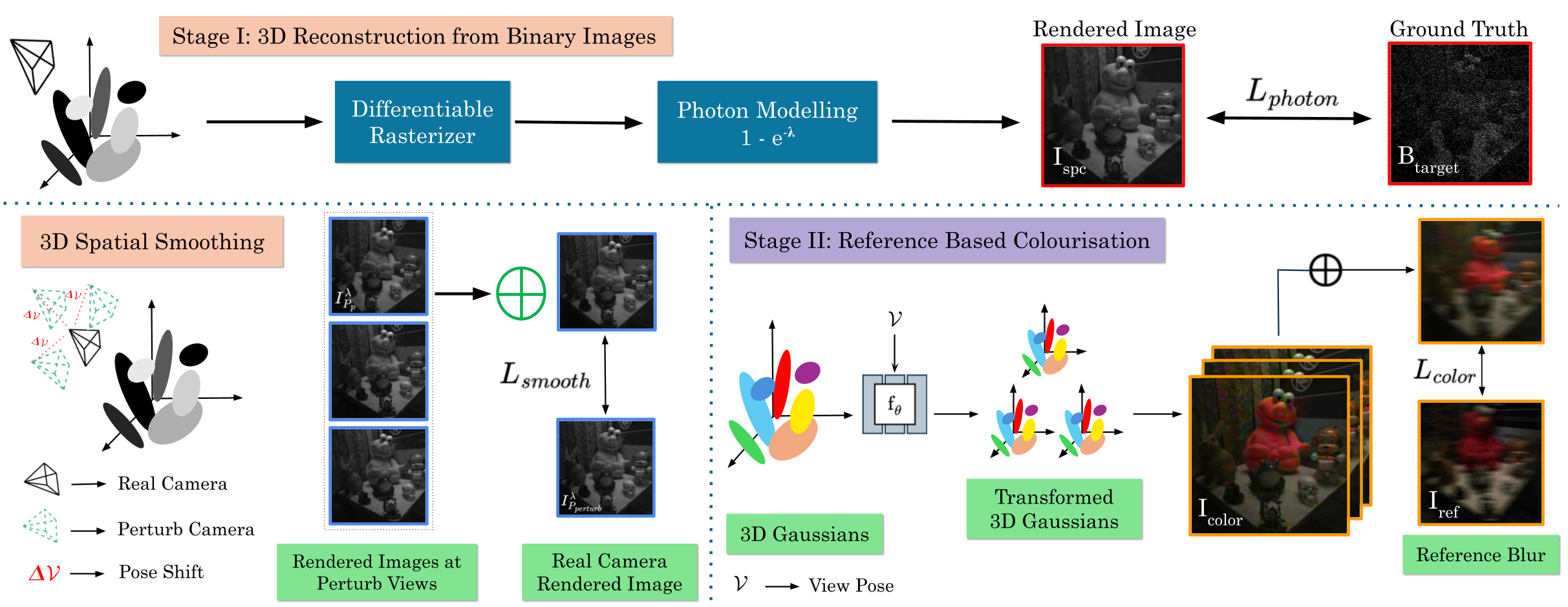

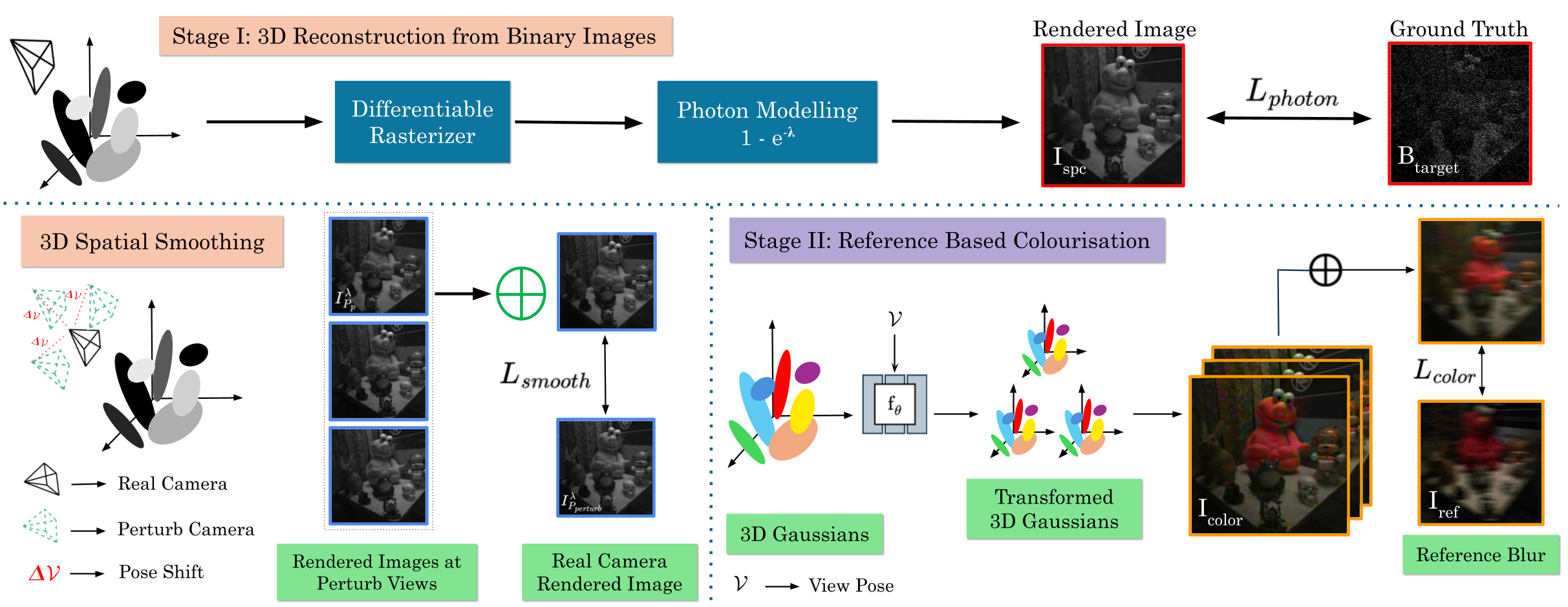

PhotonSplat utilizes a redefined Gaussian Splatting model incorporating photon detection probabilities, thereby modeling the SPAD imaging formation process more accurately. By adapting the conventional Gaussian Splatting framework to predict continuous photon probabilities from binary counts, it significantly enhances the 3D radiance field's accuracy.
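One way to read "predicting continuous photon probabilities from binary counts" is as a Bernoulli likelihood fit. The sketch below is an illustration under that assumption only: the source does not state the loss PhotonSplat uses, and the names `bernoulli_nll` and `flux_from_probability` are hypothetical. It scores a predicted probability map against binary frames and inverts the Poisson detection model to recover flux.

```python
import numpy as np

def bernoulli_nll(p_pred, binary_frame, eps=1e-6):
    """Mean negative log-likelihood of binary detections under p_pred.

    Assumption: independent Bernoulli pixels; not confirmed as the paper's loss.
    """
    p = np.clip(np.asarray(p_pred, dtype=np.float64), eps, 1.0 - eps)
    b = np.asarray(binary_frame, dtype=np.float64)
    return float(-np.mean(b * np.log(p) + (1.0 - b) * np.log(1.0 - p)))

def flux_from_probability(p, eps=1e-6):
    """Invert p = 1 - exp(-flux) to get photon flux per exposure."""
    p = np.clip(np.asarray(p, dtype=np.float64), 0.0, 1.0 - eps)
    return -np.log1p(-p)

rng = np.random.default_rng(1)
p_true = np.array([0.1, 0.4, 0.8])       # hypothetical detection probabilities
frames = rng.random((5000, 3)) < p_true  # simulated binary observations

p_hat = frames.mean(axis=0)              # maximum-likelihood estimate of p
loss_good = bernoulli_nll(p_true, frames)
loss_bad = bernoulli_nll(np.full(3, 0.5), frames)
flux_hat = flux_from_probability(p_hat)
```

In this toy setup the true probabilities score a lower loss than an uninformative guess of 0.5, which is the signal a differentiable renderer could follow when its output is treated as a probability map.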

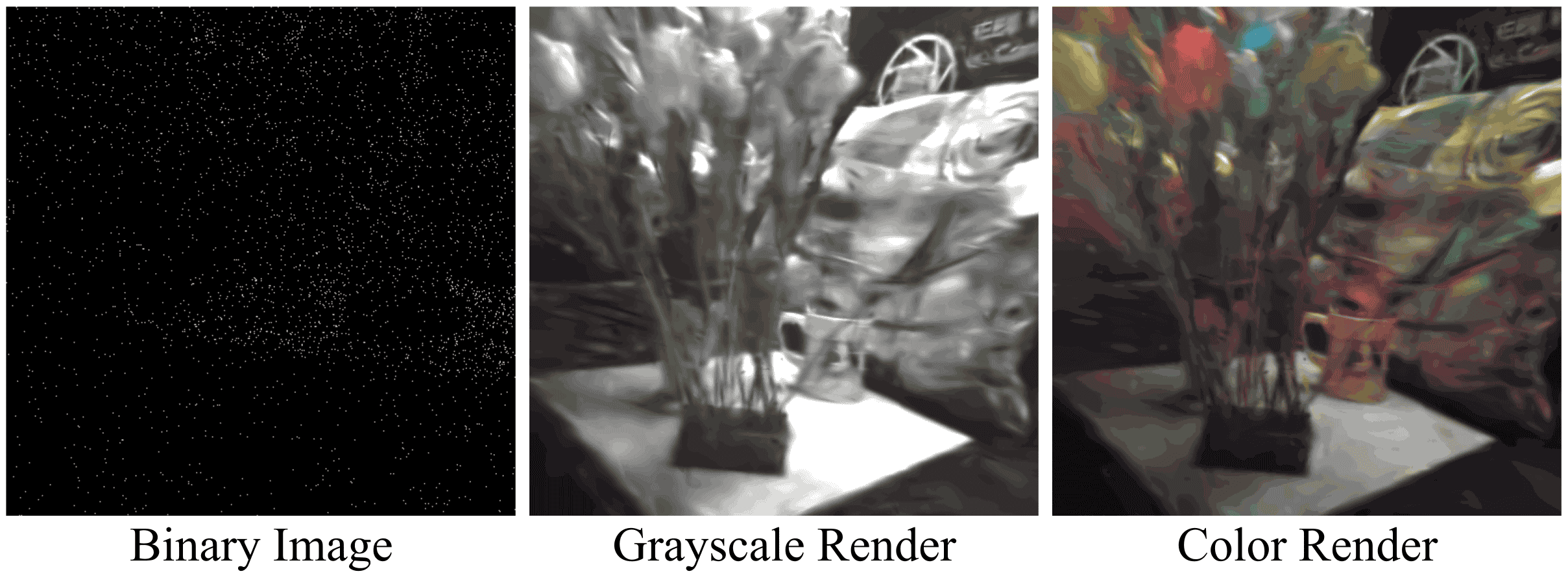

Figure 1: Method Overview. PhotonSplat learns to recover a 3D scene from binary SPAD images.

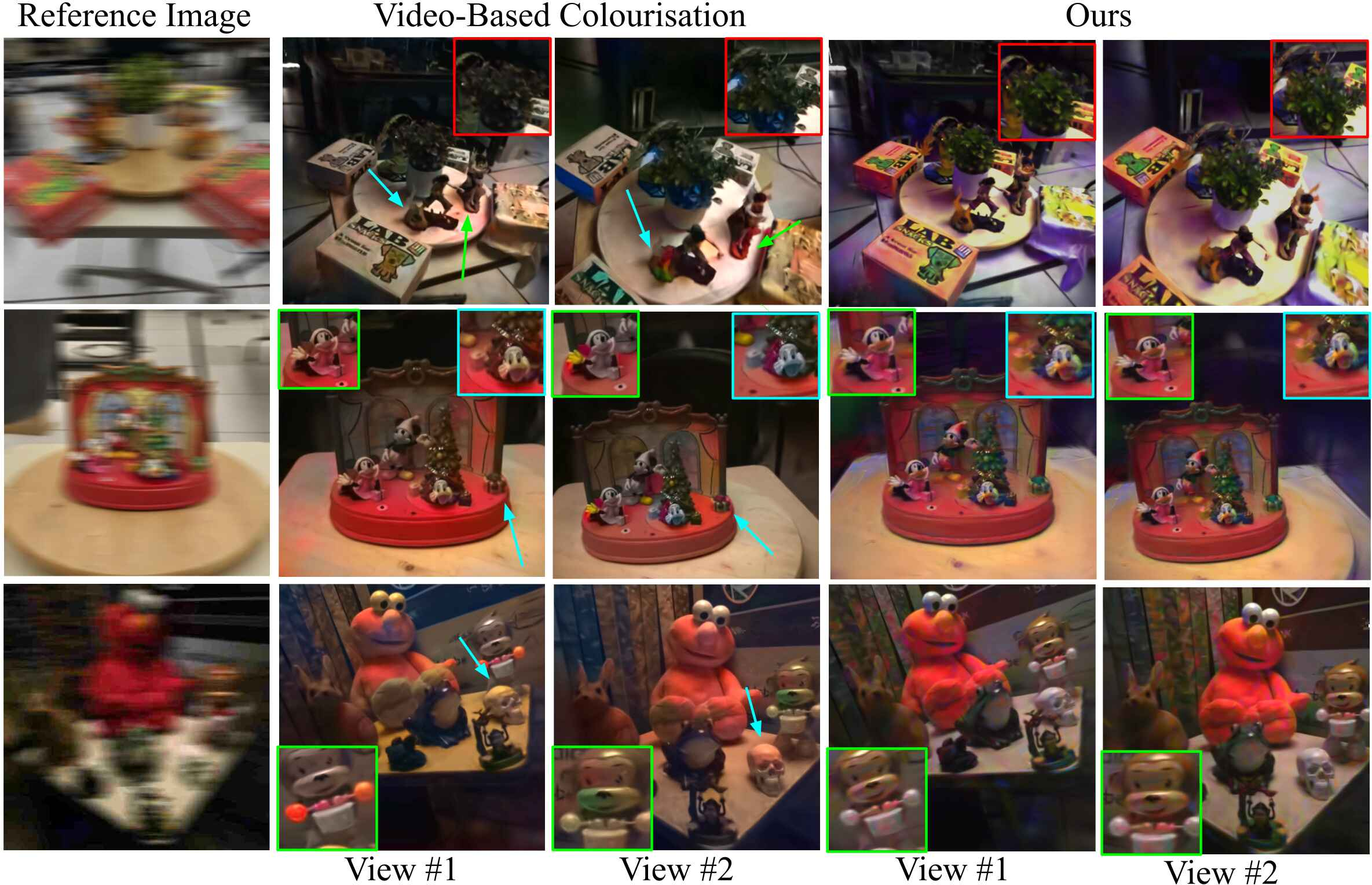

Spatial smoothing regularization mitigates noise from binary SPAD captures by enforcing consistency across nearby views, which makes scene geometry recovery more robust. In addition, a view-consistent colorization approach recovers color appearance from a single motion-blurred image, which helps applications that require realistic scene visualization.

Experimental Validation

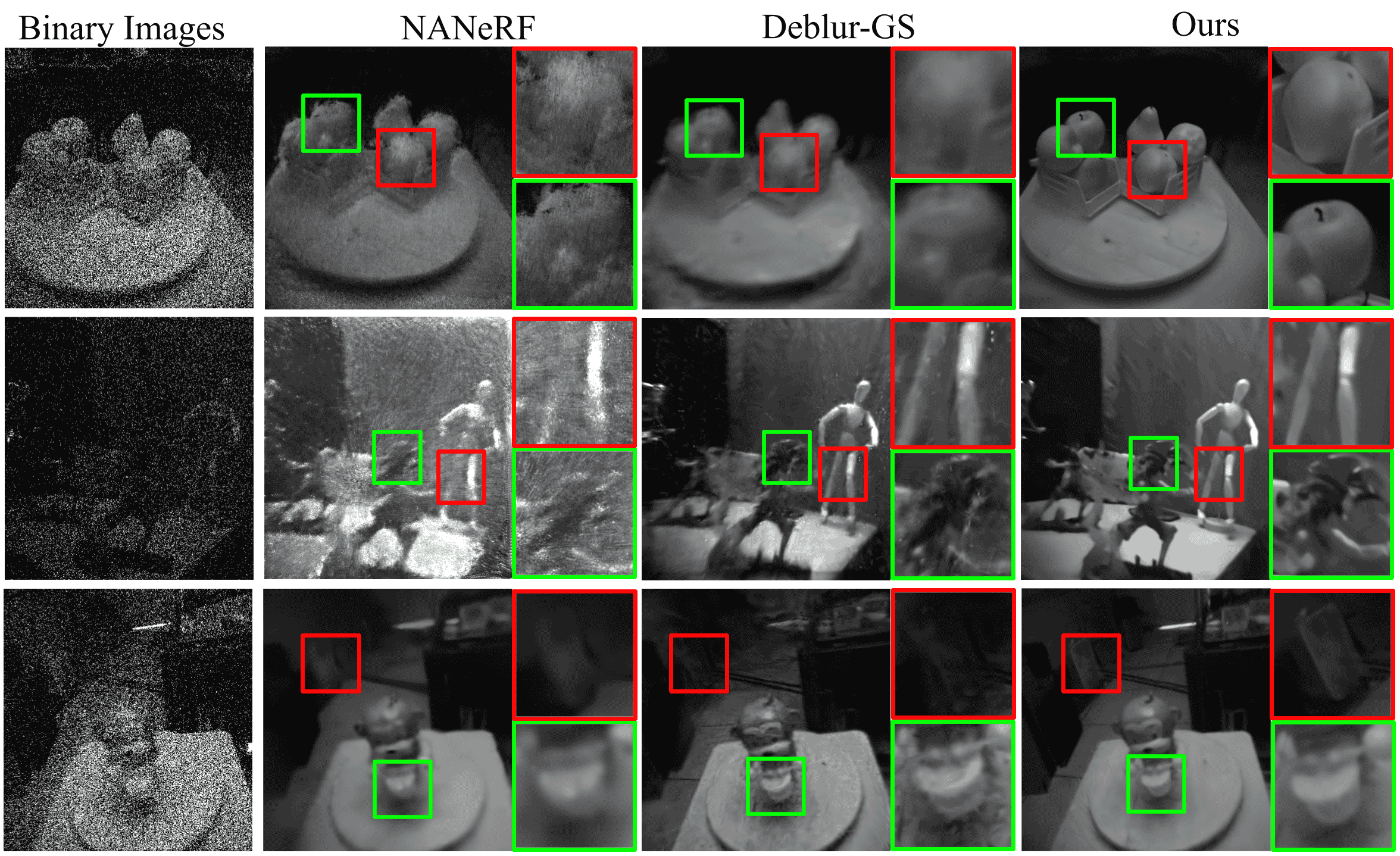

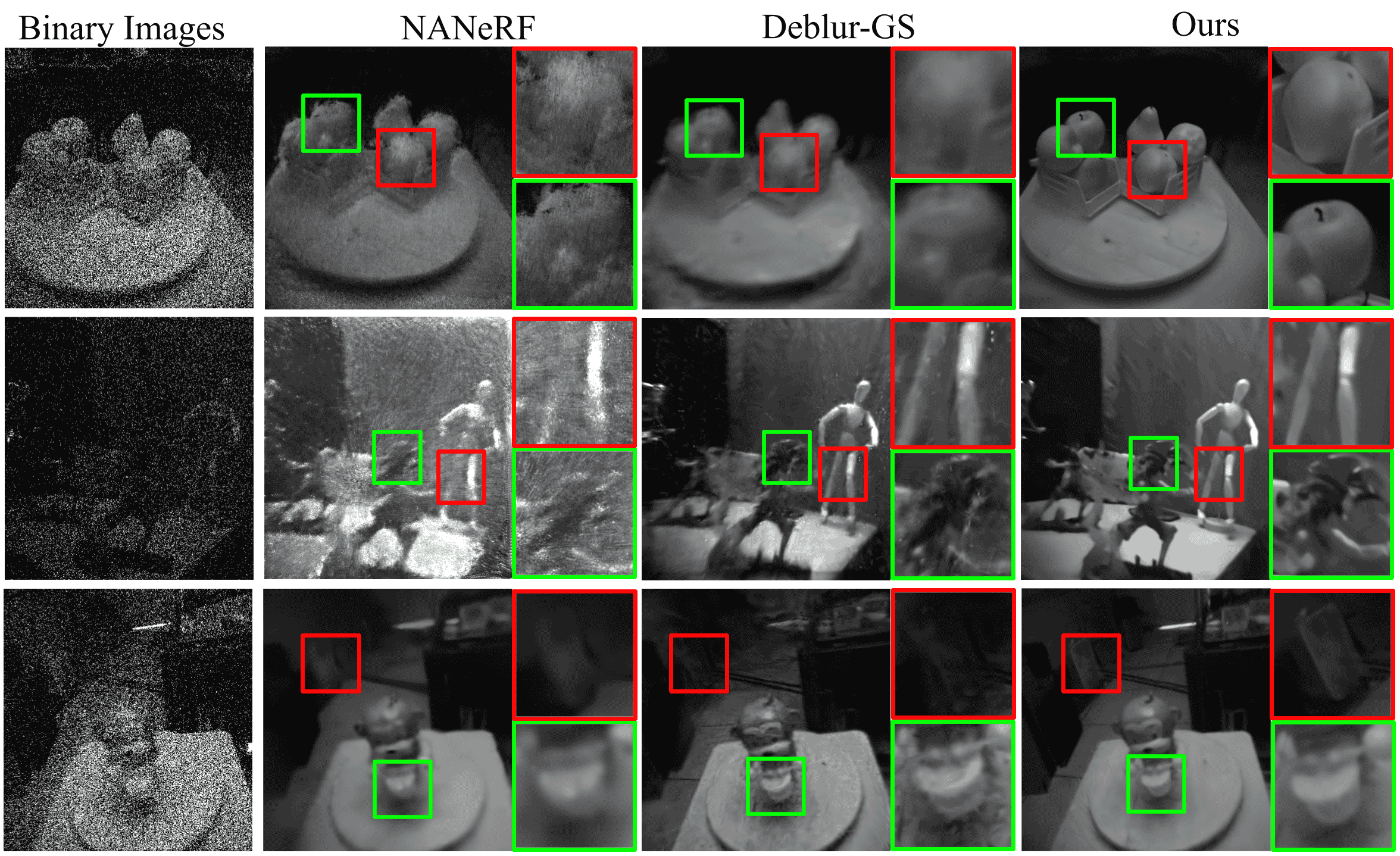

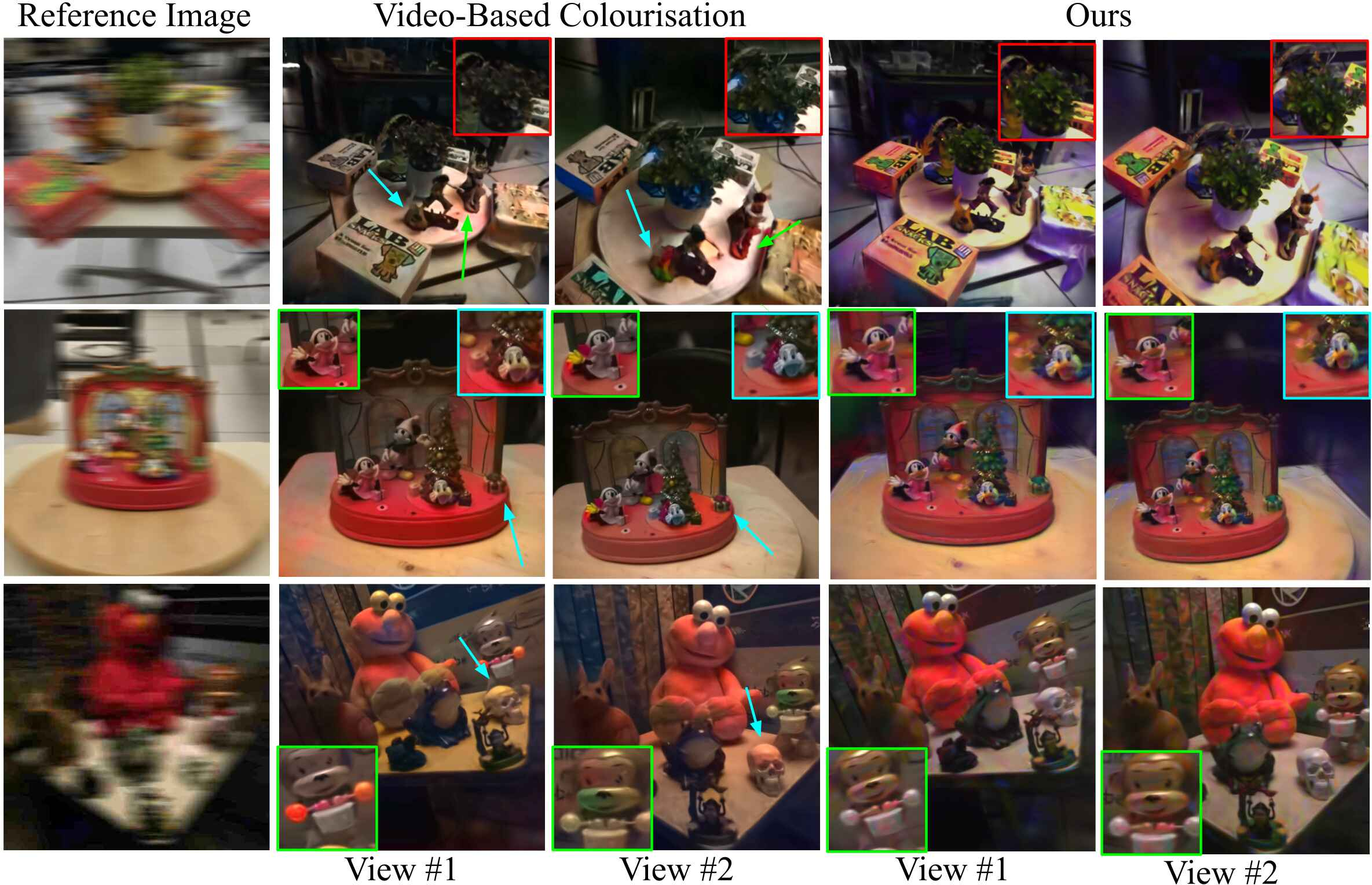

Experiments evaluate PhotonSplat on both simulated and real-world SPAD datasets, demonstrating significant advancements over baseline methods such as NANeRF and Deblur-GS in both geometric fidelity and visual consistency of synthesized views.

Figure 2: Qualitative results for novel view synthesis from multi-view binary frames on our real-world PhotonScenes dataset.

Quantitatively, PhotonSplat achieves higher PSNR and SSIM than the baselines, underscoring its effectiveness in noise reduction and detail preservation. The framework's robustness extends to colorization, where consistency metrics remain favorable even without reference images.
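For readers unfamiliar with the first metric, PSNR is a logarithmic transform of the mean squared error between a rendered view and its reference. The sketch below uses the standard definition; the function name `psnr`, the `max_val` default, and the toy images are illustrative and do not reproduce the paper's evaluation code.

```python
import numpy as np

def psnr(reference, estimate, max_val=1.0):
    """Peak signal-to-noise ratio in dB for images scaled to [0, max_val]."""
    ref = np.asarray(reference, dtype=np.float64)
    est = np.asarray(estimate, dtype=np.float64)
    mse = np.mean((ref - est) ** 2)
    if mse == 0.0:
        return float("inf")  # identical images have unbounded PSNR
    return float(10.0 * np.log10(max_val ** 2 / mse))

reference = np.full((4, 4), 0.5)   # toy reference image
estimate = reference + 0.1         # uniform error of 0.1, so MSE is 0.01
score = psnr(reference, estimate)  # roughly 20 dB
```

Higher values mean the rendering is closer to the reference. SSIM complements PSNR by comparing local structure rather than per-pixel error.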

Figure 3: Qualitative results for colorization based on reference image on the PhotonScenes Dataset.

Implications and Future Work

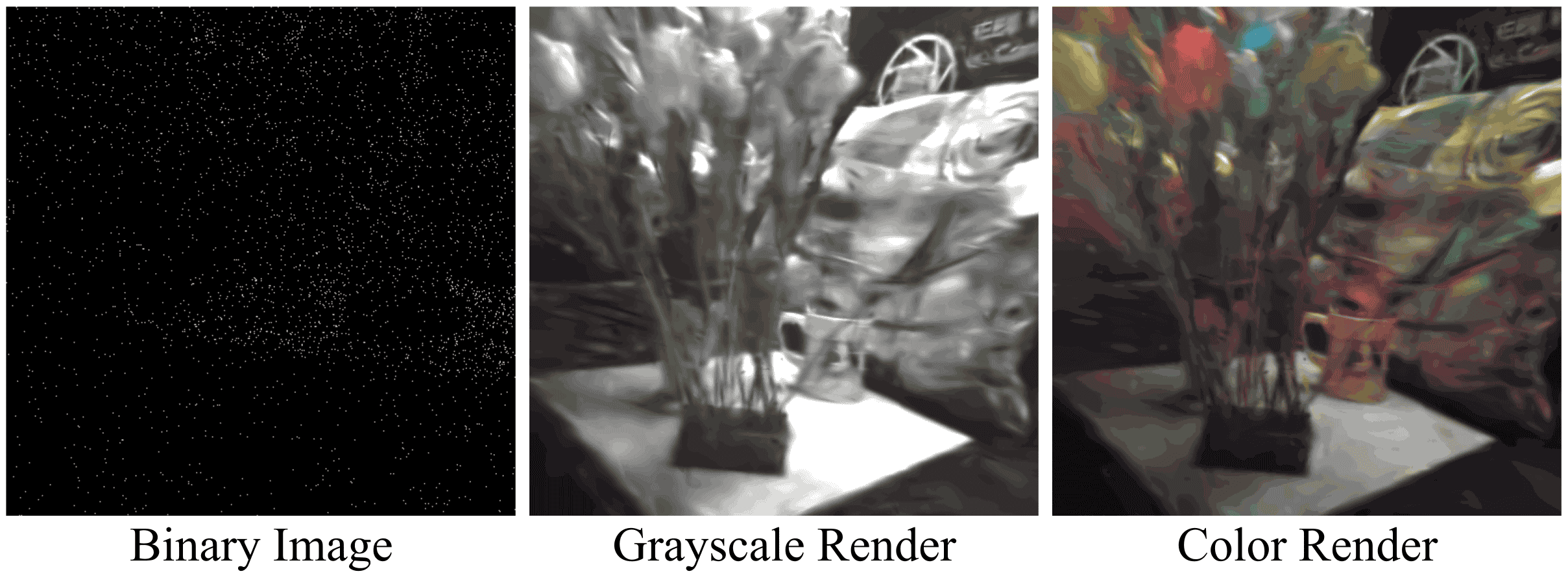

PhotonSplat's SPAD-based 3D reconstruction targets high-speed dynamic capture scenarios, such as drone navigation and autonomous vehicle operation, where conventional imaging fails. With SPAD-based solutions gaining traction, this research paves the way for further exploration in augmented reality (AR), virtual reality (VR), and defense-related applications. However, extreme low-light conditions remain a challenge.

Figure 4: Our method fails to reconstruct images in extreme low-light due to insufficient information in the input SPAD images.

Future research could integrate pose estimation directly into the training pipeline, potentially using IMU data, to ease the remaining obstacles to real-time use. Additionally, supporting color-filtered SPAD sensors could extend PhotonSplat’s role in comprehensive scene reconstruction tasks.

Conclusion

PhotonSplat is a notable step forward in using SPAD sensors for detailed 3D scene reconstruction and photorealistic rendering. Its methodological contributions and experimental results make it a strong option in environments where traditional RGB imaging is insufficient, opening new possibilities in computational imaging.