- The paper introduces Tropical attention, leveraging tropical geometry to preserve the crisp decision boundaries crucial for dynamic programming algorithms.

- It formulates attention with tropical semiring operations and shows that the resulting mechanism achieves universal approximation of max-plus combinatorial algorithms.

- Empirical results on tasks like Quickselect and Floyd-Warshall demonstrate superior out-of-distribution performance and adversarial robustness compared to softmax attention.

Tropical Attention: Neural Algorithmic Reasoning for Combinatorial Algorithms

This research introduces Tropical attention, a novel attention mechanism for neural algorithmic reasoning on combinatorial optimization tasks. It operates natively in the max-plus semiring of tropical geometry, which lets it preserve the piecewise-linear, polyhedral structure integral to these problems. The paper's main contributions are theoretical and empirical evidence for the expressivity and robustness of Tropical attention compared with conventional softmax-based approaches.

Introduction

Dynamic programming (DP) algorithms are pivotal for combinatorial optimization, relying on operations in the max-plus semiring. Existing neural algorithmic models, however, typically employ softmax-normalized attention, which can obscure the crisp decision boundaries vital to these algorithms. By leveraging tropical geometry, Tropical attention preserves these boundaries while enhancing out-of-distribution (OOD) performance on algorithmic reasoning tasks.
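A toy illustration of how softmax blurs a crisp decision helps here. This setup is our own and does not come from the paper. One key scores slightly higher than all the others. As the sequence grows, the softmax weight on that key shrinks toward zero. A hard max, which is tropical addition, still selects it exactly. The function names below are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def weight_on_best(n, margin=1.0):
    """Softmax weight on the single best key when the other n - 1 keys
    score `margin` lower (a hypothetical toy setup, not from the paper)."""
    scores = [margin] + [0.0] * (n - 1)
    return softmax(scores)[0]


def hard_max_index(scores):
    """Hard (tropical-style) selection: the argmax does not depend on n."""
    return max(range(len(scores)), key=lambda i: scores[i])


if __name__ == "__main__":
    for n in (8, 64, 1024):
        scores = [1.0] + [0.0] * (n - 1)
        print(f"n={n:5d}  softmax weight={weight_on_best(n):.4f}  argmax={hard_max_index(scores)}")
```

With a margin of 1.0, the weight on the correct key is about 0.28 at n = 8 and falls below 0.003 at n = 1024. Over the same range, the argmax stays at index 0.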

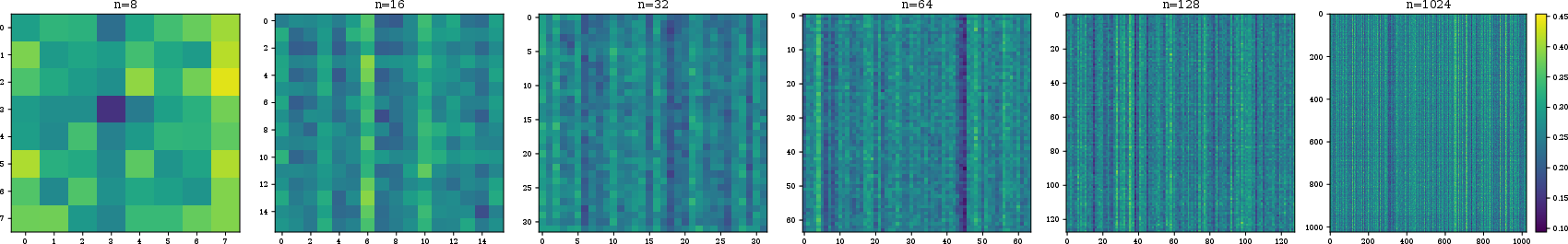

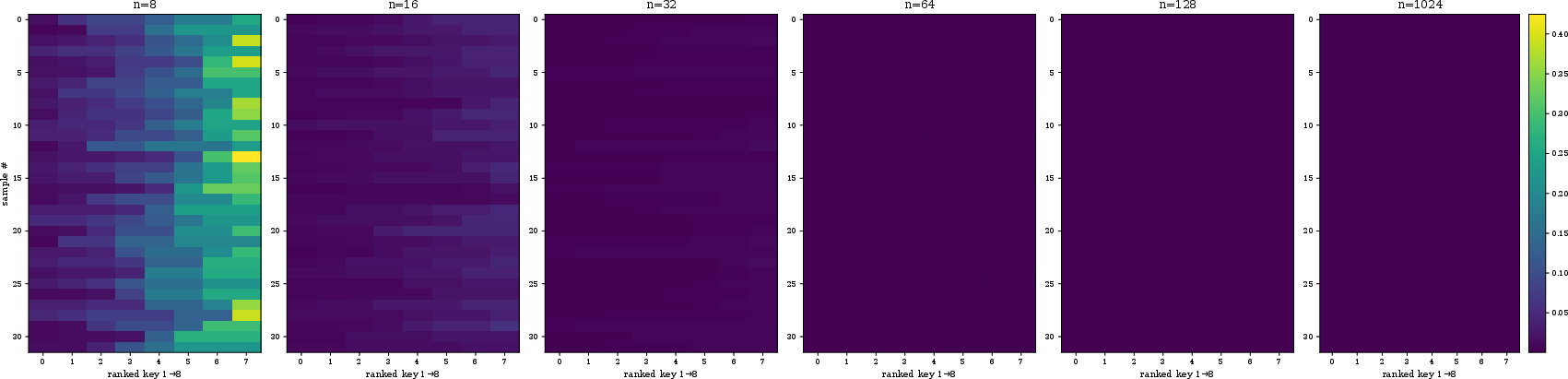

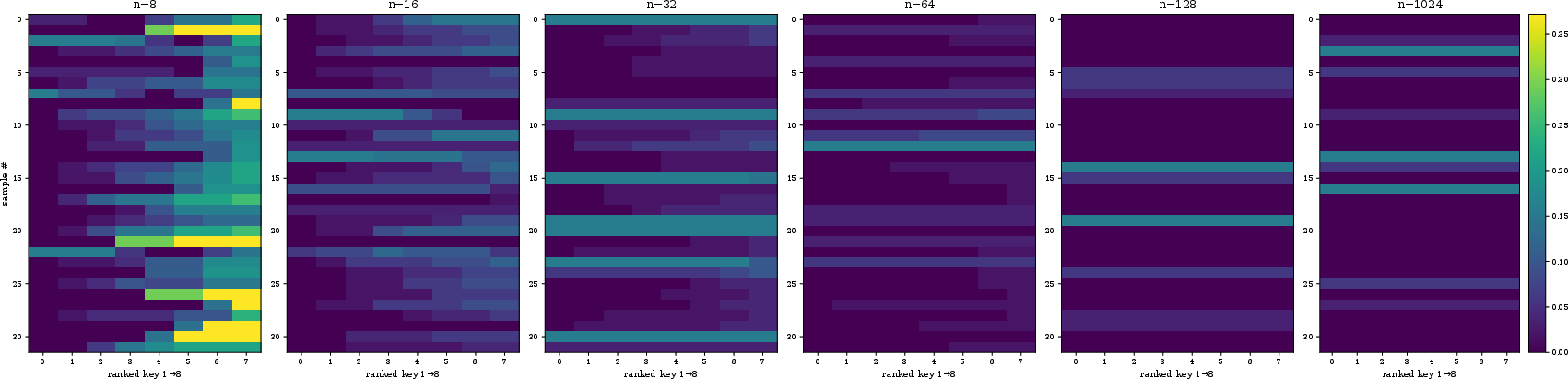

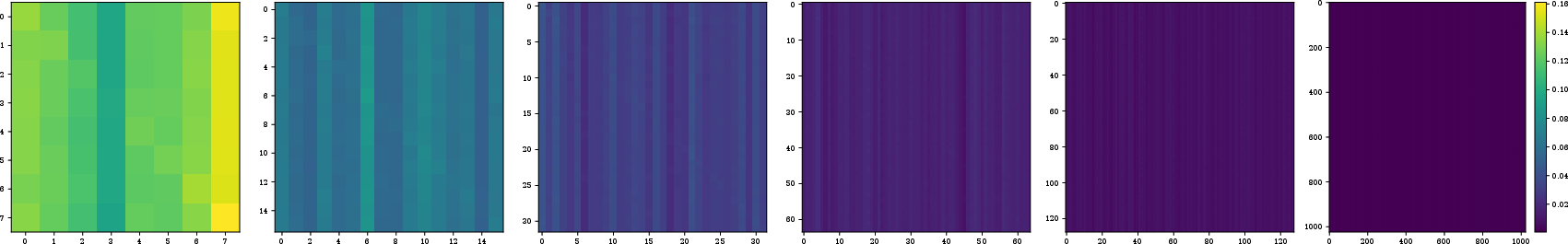

Figure 1: Tropical attention produces sharp attention maps when learning the Quickselect algorithm, showing size invariance and OOD length generalization beyond training (from length 8 to 1024).

Tropical Attention Mechanism

Tropical attention maps Euclidean inputs into the tropical semiring, applies tropical geometric operations there, and maps the results back to Euclidean space. These operations are Lipschitz continuous, so a bounded input perturbation can cause only a bounded change in the output, which supports stability under adversarial perturbations. Attention weights are computed from the tropical Hilbert metric, which helps the mechanism approximate the tropical circuits underlying DP-like combinatorial algorithms.
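The Lipschitz property can be checked on the basic max-plus building block. This is a minimal sketch that covers only the tropical matrix-vector product, not the full attention layer. The reasoning is short: if every input coordinate moves by at most δ, then every term a_ij + x_j moves by at most δ, so their maximum does too. The helper names are hypothetical.

```python
import random


def maxplus_matvec(A, x):
    """Tropical matrix-vector product: (A ⊙ x)_i = max_j (A[i][j] + x[j])."""
    return [max(a + xj for a, xj in zip(row, x)) for row in A]


def sup_dist(u, v):
    """Sup-norm (l-infinity) distance between two equal-length vectors."""
    return max(abs(a - b) for a, b in zip(u, v))


def worst_lipschitz_ratio(trials=1000, n=6, m=4, seed=0):
    """Largest observed ||A⊙x - A⊙y||_inf / ||x - y||_inf over random
    finite A, x and small perturbations y. In exact arithmetic it never exceeds 1."""
    rng = random.Random(seed)
    worst = 0.0
    for _ in range(trials):
        A = [[rng.uniform(-5, 5) for _ in range(n)] for _ in range(m)]
        x = [rng.uniform(-5, 5) for _ in range(n)]
        y = [xi + rng.uniform(-0.1, 0.1) for xi in x]
        d_in = sup_dist(x, y)
        if d_in > 0:
            d_out = sup_dist(maxplus_matvec(A, x), maxplus_matvec(A, y))
            worst = max(worst, d_out / d_in)
    return worst


if __name__ == "__main__":
    print(f"worst observed ratio: {worst_lipschitz_ratio():.6f}")
```

The observed ratio stays at or below 1, up to floating-point rounding. In other words, the max-plus product is 1-Lipschitz in the sup norm.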

In the tropical domain, operations are defined as:

- Tropical addition: x⊕y=max(x,y)

- Tropical multiplication: x⊙y=x+y
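The two operations above form a semiring. Its "zero" is -∞, the identity for ⊕, and its "one" is 0, the identity for ⊙. The sketch below encodes these definitions and checks the semiring laws on a few sample values. The function names are hypothetical.

```python
import itertools

NEG_INF = float("-inf")  # tropical "zero": identity for ⊕, since max(x, -inf) == x
T_ONE = 0.0              # tropical "one": identity for ⊙, since x + 0 == x


def t_add(x: float, y: float) -> float:
    """Tropical addition: x ⊕ y = max(x, y)."""
    return max(x, y)


def t_mul(x: float, y: float) -> float:
    """Tropical multiplication: x ⊙ y = x + y."""
    return x + y


def check_semiring_laws(values):
    """Check the identities, idempotency of ⊕, and distributivity of ⊙ over ⊕
    on every triple drawn from `values` (exact for small floats like these)."""
    for x in values:
        assert t_add(x, NEG_INF) == x and t_mul(x, T_ONE) == x
        assert t_add(x, x) == x  # ⊕ is idempotent: x ⊕ x = x
    for x, y, z in itertools.product(values, repeat=3):
        assert t_mul(x, t_add(y, z)) == t_add(t_mul(x, y), t_mul(x, z))
    return True


if __name__ == "__main__":
    print(check_semiring_laws([-2.0, 0.0, 1.5, 3.0, NEG_INF]))
```

The distributive law here reads x + max(y, z) = max(x + y, x + z). This identity lets DP recurrences be written as max-plus products.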

Attention scores are computed from the tropical Hilbert metric between queries and keys. Values are then aggregated robustly with a tropical (max-plus) matrix-vector product instead of a softmax-weighted sum. The resulting mechanism aligns naturally with the recursive max-plus structure of DP.
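The following is a minimal single-head sketch of this idea under stated assumptions. First, queries, keys, and values are given directly. The learned tropical projections and the maps to and from Euclidean space are omitted. Second, the score between a query and a key is the negative Hilbert projective distance, d_H(x, y) = max_i (x_i - y_i) - min_i (x_i - y_i). Third, each output is a max-plus combination of the values. This is an illustration, not the paper's exact layer, and `tropical_attention_head` is a hypothetical name.

```python
from typing import List

Vector = List[float]


def hilbert_dist(x: Vector, y: Vector) -> float:
    """Tropical (Hilbert projective) distance:
    d_H(x, y) = max_i (x_i - y_i) - min_i (x_i - y_i).
    Adding a constant to every entry of x or y leaves it unchanged."""
    diffs = [a - b for a, b in zip(x, y)]
    return max(diffs) - min(diffs)


def tropical_attention_head(Q: List[Vector], K: List[Vector], V: List[Vector]) -> List[Vector]:
    """Hypothetical sketch: scores S_ij = -d_H(q_i, k_j), and
    o_i = ⊕_j (S_ij ⊙ v_j), i.e. o_i[d] = max_j (S_ij + V[j][d]).
    No softmax normalization is applied."""
    dim = len(V[0])
    outputs = []
    for q in Q:
        scores = [-hilbert_dist(q, k) for k in K]
        outputs.append([max(s + v[d] for s, v in zip(scores, V)) for d in range(dim)])
    return outputs


if __name__ == "__main__":
    # k0 equals q shifted by 5, so its projective distance to q is 0.
    print(tropical_attention_head([[0.0, 1.0]], [[5.0, 6.0], [0.0, 3.0]], [[10.0, 0.0], [0.0, 10.0]]))
```

In the usage line, the first key is the query shifted by a constant, so it receives the top score of 0. The second key is at distance 2. The output is the coordinate-wise maximum of the score-shifted values, [10.0, 8.0].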

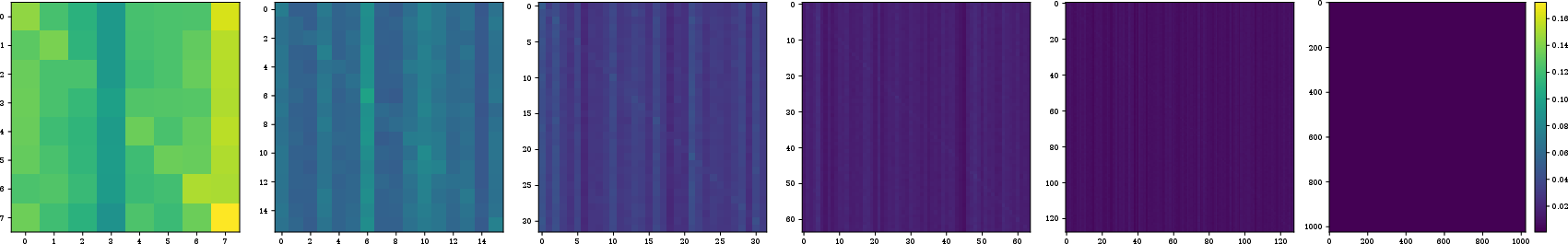

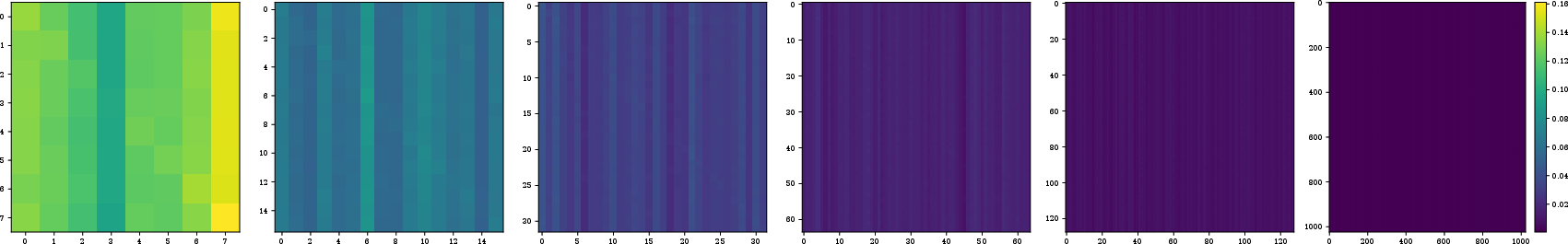

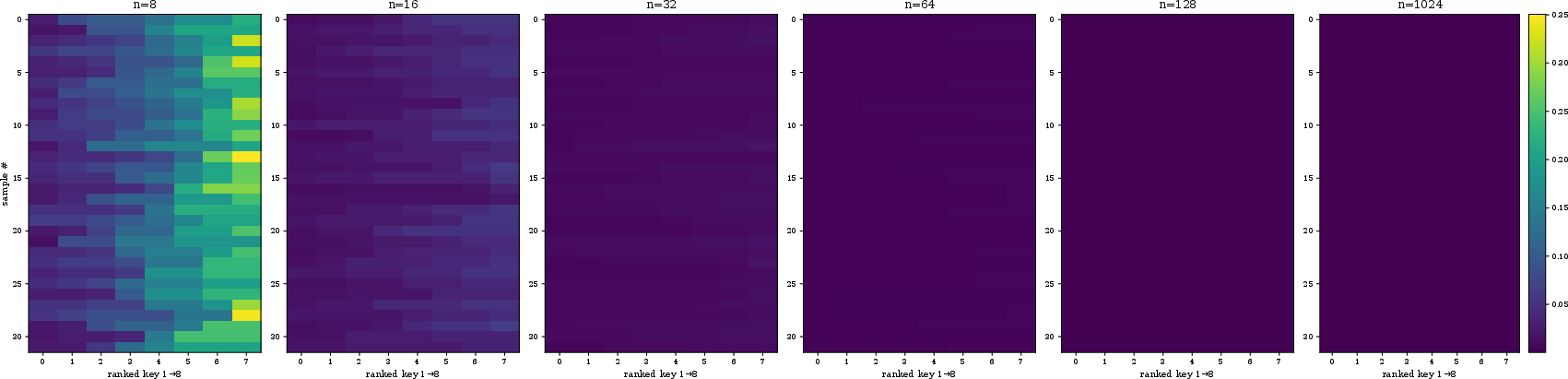

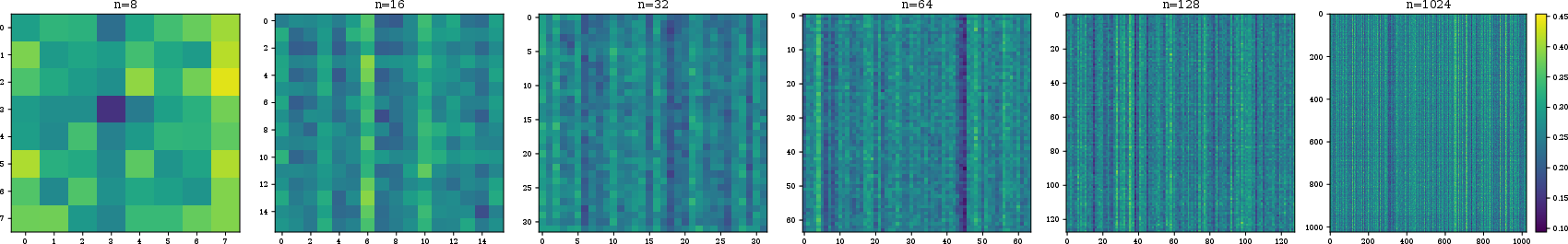

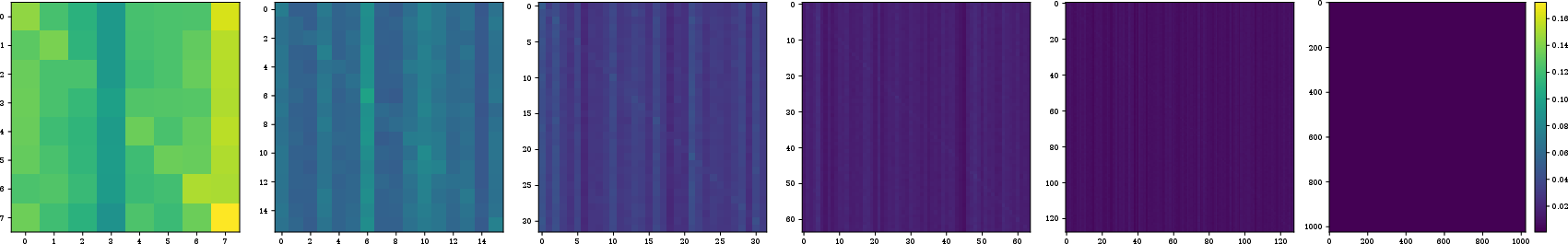

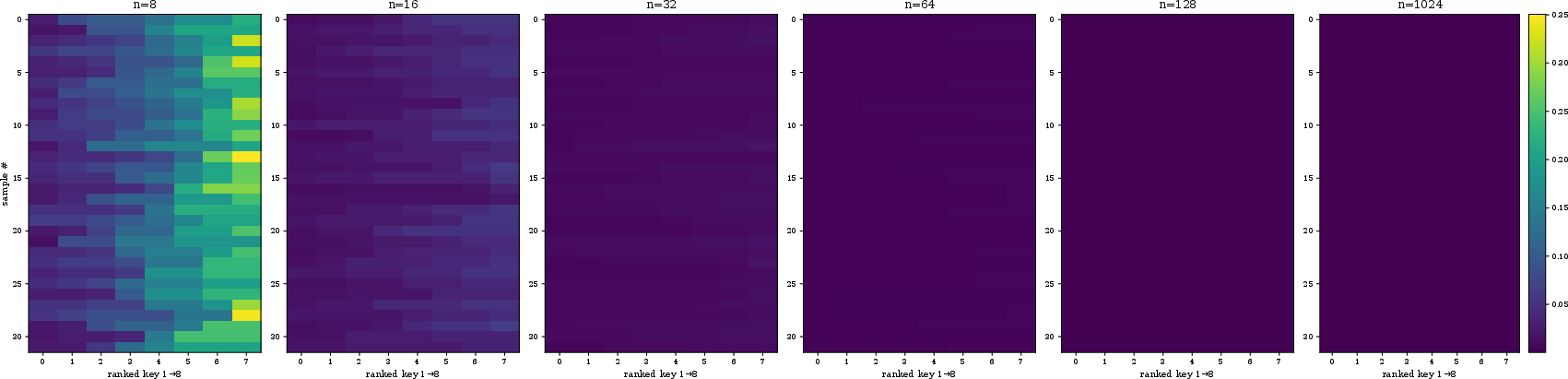

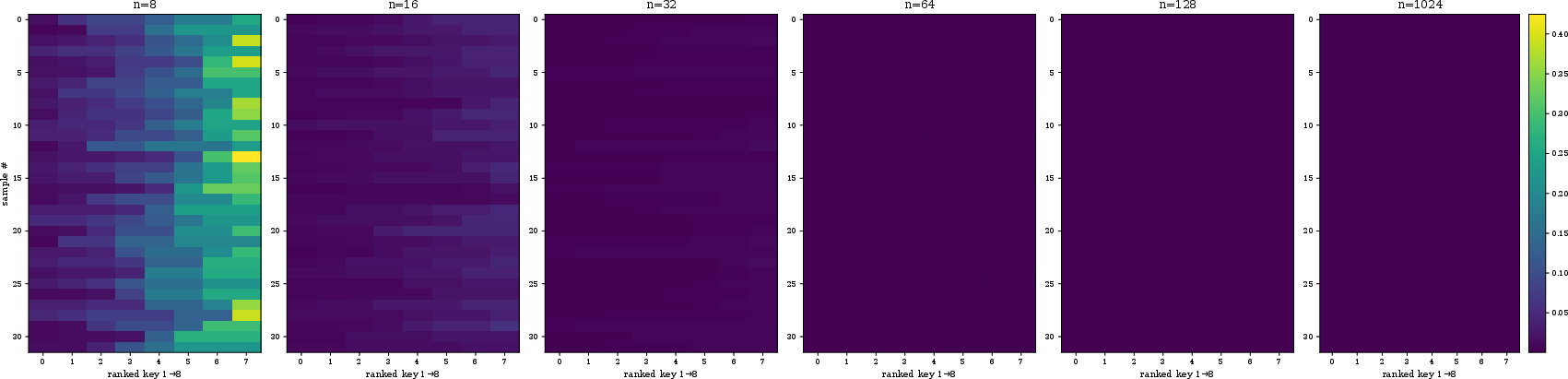

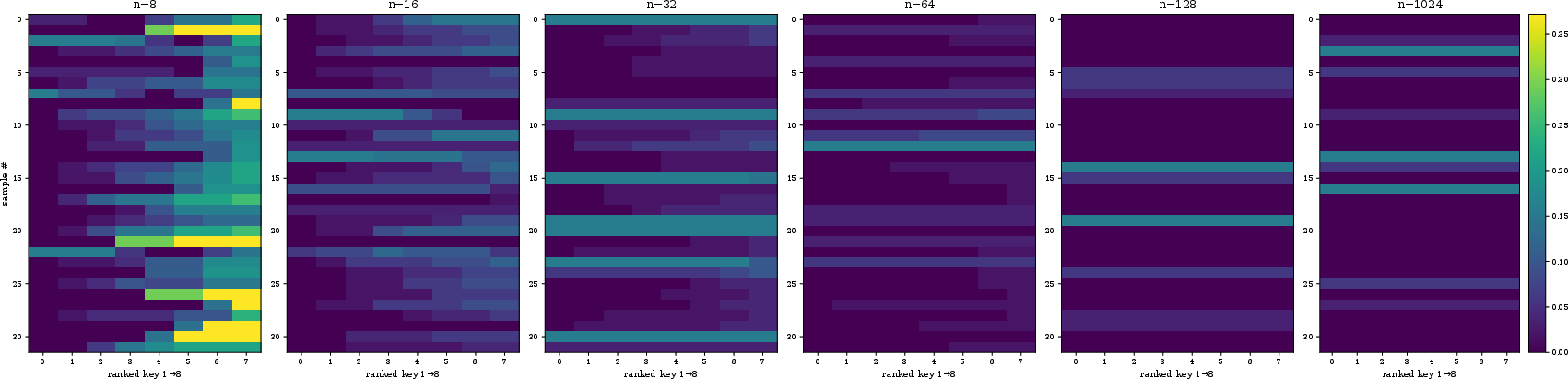

Figure 2: Stacked attention head representations for Quickselect under different models, evaluated on sequences from length 16 to 1024. Tropical attention maintains focus while vanilla and adaptive heads dilute.

Theoretical Expressivity and Robustness

Tropical attention has universal approximation properties: it can approximate any max-plus combinatorial algorithm. The paper proves that the mechanism simulates tropical circuits without requiring excessive width or depth, which keeps it computationally efficient.

Theorems and Proofs

- Expressivity: The paper proves that multi-head tropical attention can simulate any DP built from max-plus computations.

- Universal Approximation: A single layer of tropical attention can approximate tropical polynomials (maxima of finitely many affine functions), so this function class does not require a deep stack of layers.
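To make the target function class concrete, recall that a tropical polynomial is p(x) = ⊕_k c_k ⊙ x^{⊙a_k} = max_k (c_k + ⟨a_k, x⟩). This is a convex, piecewise-linear function, and each affine piece is active on a polyhedral region. The sketch below evaluates such a polynomial and reports the active piece. It illustrates the function class only, not the paper's approximation construction, and the helper names are hypothetical.

```python
from typing import List, Sequence, Tuple

Term = Tuple[float, Sequence[int]]  # (coefficient c_k, exponent vector a_k)


def _piece_values(terms: List[Term], x: Sequence[float]) -> List[float]:
    """Value of each affine piece c_k + <a_k, x> at the point x."""
    return [c + sum(ai * xi for ai, xi in zip(a, x)) for c, a in terms]


def tropical_poly(terms: List[Term], x: Sequence[float]) -> float:
    """Evaluate p(x) = max_k (c_k + <a_k, x>)."""
    return max(_piece_values(terms, x))


def active_term(terms: List[Term], x: Sequence[float]) -> int:
    """Index of the affine piece attaining the max, i.e. which polyhedral
    region of the input space contains x (ties go to the lowest index)."""
    values = _piece_values(terms, x)
    return values.index(max(values))


if __name__ == "__main__":
    # p(x) = max(0, 1 + x, -1 + 2x): breakpoints at x = -1 and x = 2.
    p = [(0.0, (0,)), (1.0, (1,)), (-1.0, (2,))]
    for x in (-3.0, 0.0, 5.0):
        print(x, tropical_poly(p, [x]), active_term(p, [x]))
```

For the one-variable example in the usage block, the regions are x ≤ -1, -1 ≤ x ≤ 2, and x ≥ 2. These are the crisp decision boundaries the text refers to.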

Empirical Evaluation

On canonical combinatorial tasks such as Floyd-Warshall, Quickselect, and 3SUM-Decision, a Tropical transformer achieves state-of-the-art OOD performance and greater adversarial robustness than vanilla softmax-based models.
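Floyd-Warshall suits a tropical model because its core update is already a tropical operation. The update is D[i][j] = min(D[i][j], D[i][k] + D[k][j]), which works in the min-plus semiring. Min-plus is equivalent to max-plus under negation, since min(a, b) = -max(-a, -b). The sketch below implements the classical target algorithm, not the Tropical transformer. It assumes nonnegative edge weights and uses float("inf") for missing edges. For comparison, it recovers the same distances by repeated min-plus matrix squaring. The function names are hypothetical.

```python
INF = float("inf")  # min-plus "zero": marks a missing edge


def floyd_warshall(W):
    """All-pairs shortest paths. Each relaxation is a min-plus update:
    D[i][j] = min(D[i][j], D[i][k] + D[k][j])."""
    n = len(W)
    D = [row[:] for row in W]
    for i in range(n):
        D[i][i] = min(D[i][i], 0.0)
    for k in range(n):
        for i in range(n):
            for j in range(n):
                D[i][j] = min(D[i][j], D[i][k] + D[k][j])
    return D


def minplus_matmul(A, B):
    """Min-plus product: (A ⊗ B)_ij = min_k (A_ik + B_kj)."""
    return [[min(A[i][k] + B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]


def shortest_paths_by_squaring(W):
    """Same distances via repeated min-plus squaring of W with a zero diagonal.
    After squaring, D covers paths of up to `steps` edges; n - 1 edges suffice."""
    n = len(W)
    D = [[0.0 if i == j else W[i][j] for j in range(n)] for i in range(n)]
    steps = 1
    while steps < n - 1:
        D = minplus_matmul(D, D)
        steps *= 2
    return D


if __name__ == "__main__":
    W = [[0, 3, INF, 7], [8, 0, 2, INF], [5, INF, 0, 1], [2, INF, INF, 0]]
    print(floyd_warshall(W))
    print(shortest_paths_by_squaring(W))
```

Both functions return the same distance matrix. The whole computation is built from min and + operations, which is the structure tropical attention is designed to mirror.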

- Length Generalization: Tropical transformers consistently generalize to inputs beyond the training regime.

- Adversarial Robustness: Tropical transformers are more resilient to adversarial perturbations than softmax-based baselines.

The architecture's ability to maintain sharp attention maps in OOD settings (Figures 1 and 2) underscores its potential for broader reasoning tasks beyond synthetic benchmarks.

Results and Discussion

The empirical analysis shows that Tropical transformers outperform vanilla models on all tested OOD metrics. Tropical attention stays focused where vanilla attention disperses, which suggests that the model learns algorithmic structure rather than memorizing training data. However, scaling tropical methods to domains such as natural language processing remains challenging because tropical operations add computational overhead.

Conclusion

Tropical attention advances neural algorithmic reasoning by building the max-plus structure of algorithms directly into the attention mechanism. The approach opens promising avenues for hybrid semiring architectures and for models that explicitly preserve polyhedral decision boundaries. Future work will address scaling constraints and extend the method to more complex combinatorial and graph-theoretic domains. These results highlight the potential of tropical geometry to improve the robustness and generalization of neural networks in algorithmic settings.