- The paper introduces MM-Agent, a framework that advances LLMs from reasoning to structured mathematical modeling.

- It details a four-phase process and a benchmark (MM-Bench) to assess modeling rigor, practicality, and bias.

- Experiments show MM-Agent outperforming baselines and scoring over 11% higher than human solutions, and highlight the framework’s cost efficiency.

Towards Autonomous Mathematical Modeling with LLMs

Introduction to MM-Agent

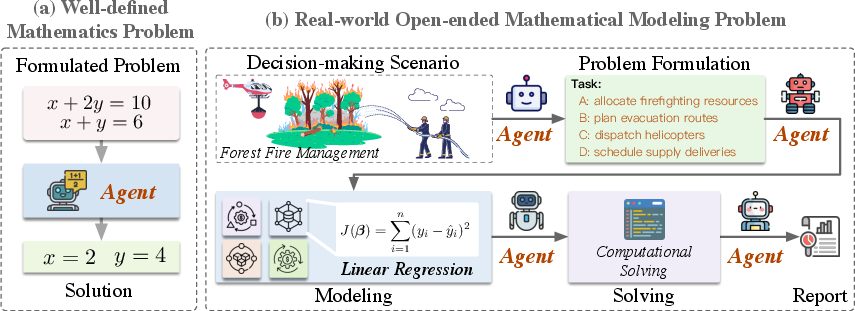

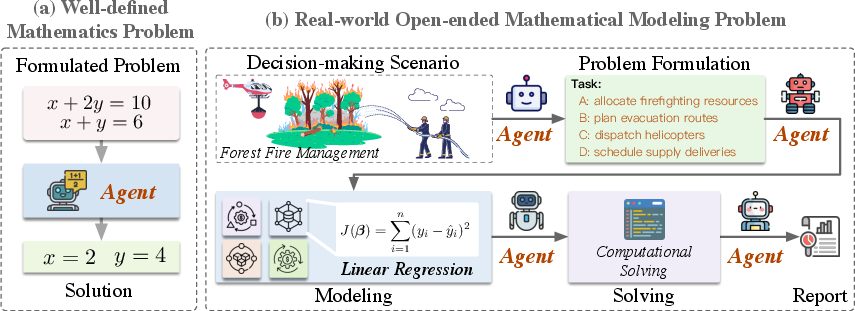

Mathematical modeling converts real-world problems into structured analytical frameworks and is indispensable across scientific and engineering domains. Unlike mathematical reasoning on well-defined problems, modeling requires open-ended problem decomposition and principled formalization before any solving can begin, which is what makes it applicable to real-world settings. LLMs are proficient at reasoning but have shown clear limitations in constructing rigorous models, which restricts their usefulness for real-world problem-solving. This paper introduces MM-Agent, a framework for LLM-based mathematical modeling that moves these models beyond pure reasoning toward building models themselves.

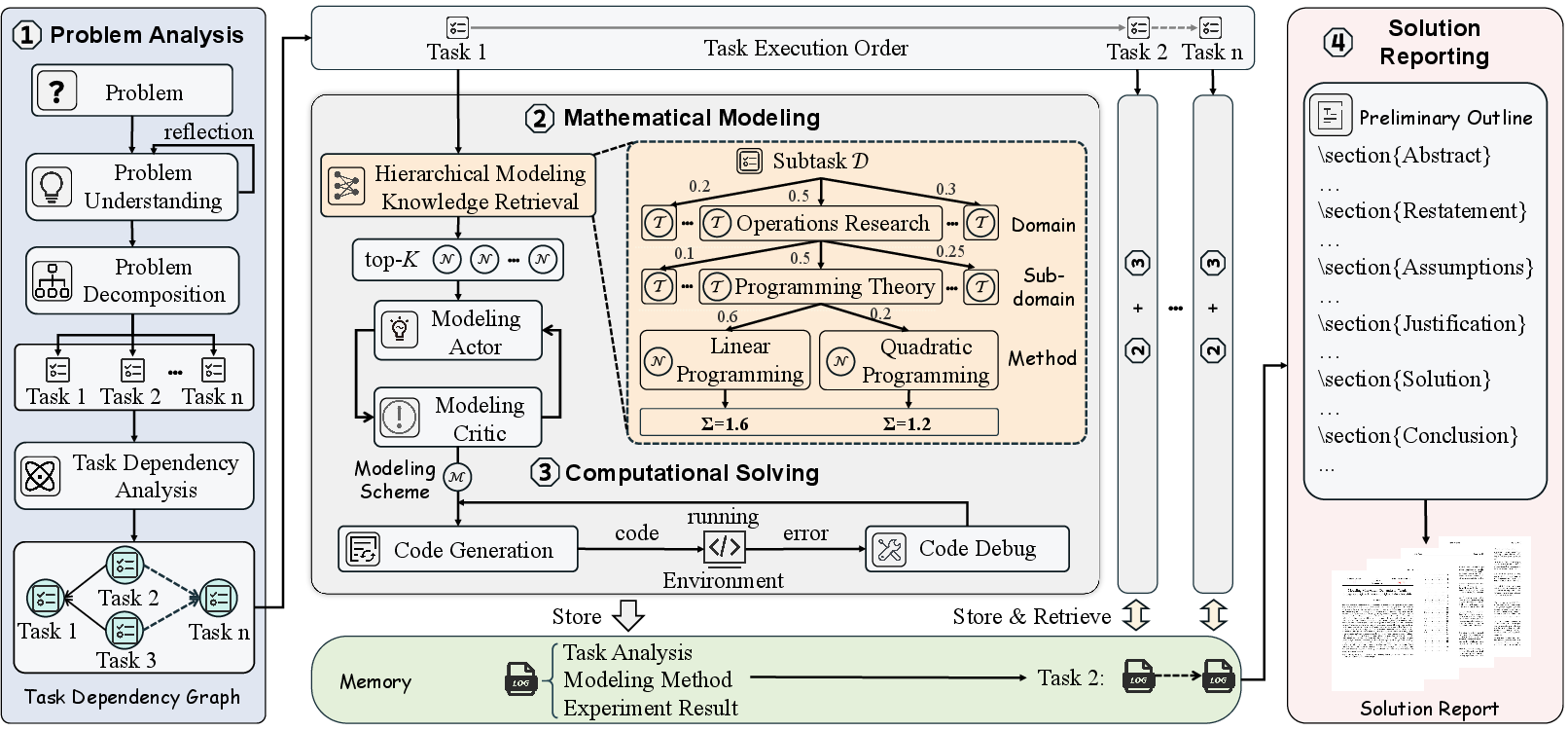

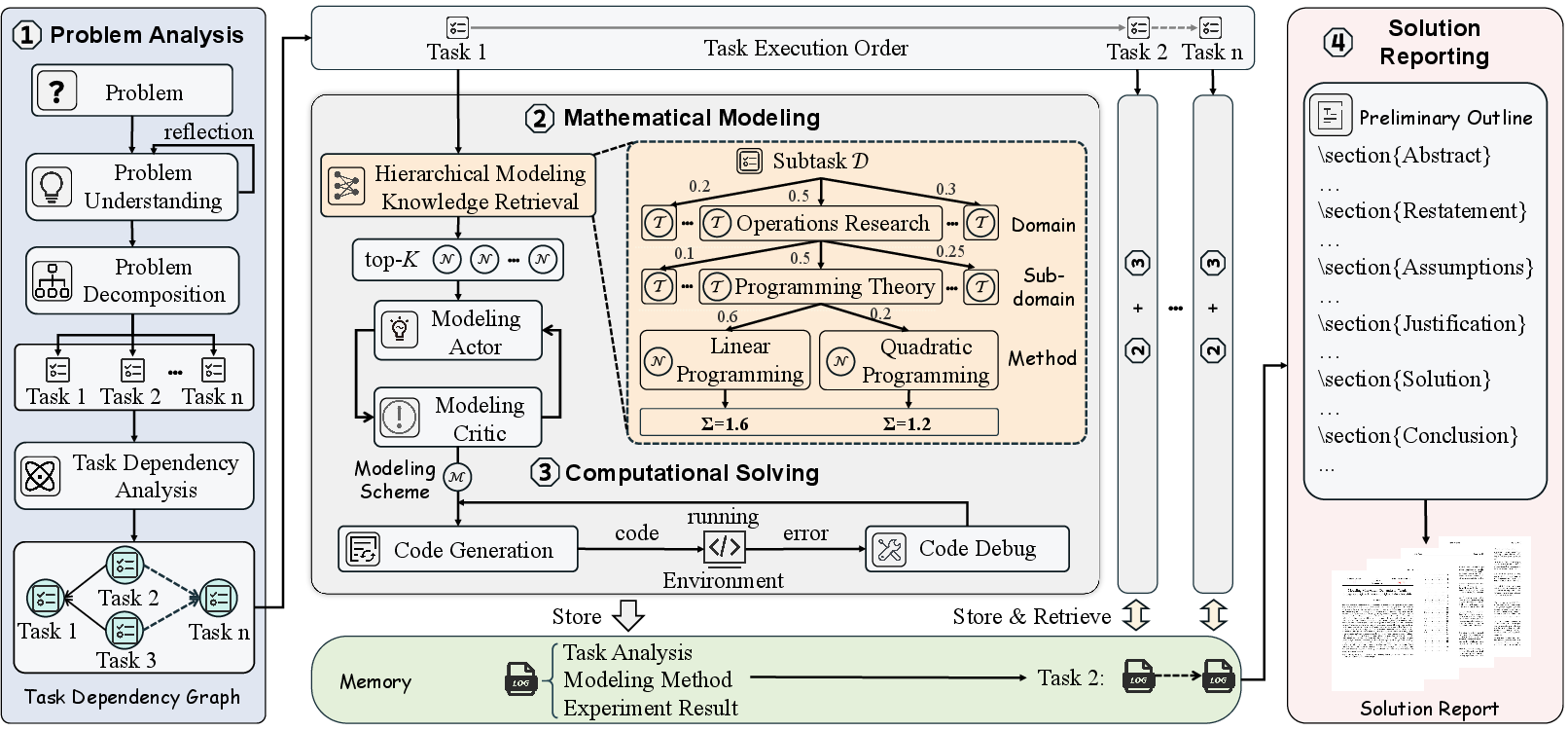

The MM-Agent framework operates through four core phases: Problem Analysis, Mathematical Modeling, Computational Solving, and Solution Reporting. Together, these phases guide the model systematically from understanding the problem to producing actionable insights, and the framework significantly outperforms standard LLM-based approaches on real-world modeling tasks.

Figure 1: Traditional well-defined mathematics problem vs LLM-powered open-ended mathematical modeling problem.

MM-Bench: A Benchmark for Evaluation

To evaluate MM-Agent, the authors built MM-Bench, a dataset derived from the Mathematical Contest in Modeling (MCM/ICM). It consists of 111 problems spanning diverse domains such as physics and economics, and it challenges LLM-based systems on intricate problem analysis, domain-appropriate modeling, and solution synthesis.

Evaluation Metrics: Performance on MM-Bench is assessed along four dimensions: Analysis Evaluation, Modeling Rigorousness, Practicality and Scientificity, and Result and Bias Analysis. Together, these dimensions cover the whole modeling process, from how well a problem is understood to how critically its results and biases are examined.
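To make the four dimensions concrete, here is a minimal Python sketch of recording per-dimension scores for one solution and aggregating them. The 0 to 10 scale, the unweighted mean, and the names `MMBenchScores`, `overall`, and `relative_improvement` are illustrative assumptions, not MM-Bench's actual scoring code; the percentage helper is only one plausible way to express an improvement figure such as the 11% reported for MM-Agent.

```python
from dataclasses import dataclass, fields
from statistics import mean


@dataclass
class MMBenchScores:
    """Hypothetical per-dimension scores for one solution (assumed 0-10 scale)."""
    analysis_evaluation: float
    modeling_rigorousness: float
    practicality_and_scientificity: float
    result_and_bias_analysis: float

    def overall(self) -> float:
        # Assumed aggregation: unweighted mean of the four dimensions.
        return mean(getattr(self, f.name) for f in fields(self))


def relative_improvement(candidate: float, reference: float) -> float:
    """Percentage by which `candidate` exceeds `reference` (hypothetical helper)."""
    return (candidate - reference) / reference * 100


agent = MMBenchScores(8.0, 7.0, 8.0, 7.0)
print(agent.overall())                              # 7.5
print(relative_improvement(agent.overall(), 6.0))   # 25.0
```

A weighted mean would be an equally reasonable choice if some dimensions were considered more important than others.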

Hierarchical Mathematical Modeling Library (HMML)

To support MM-Agent, the Hierarchical Mathematical Modeling Library (HMML) organizes modeling methods into a structured ontology of domains, subdomains, and specific techniques. This hierarchy enables systematic retrieval of modeling strategies, which is crucial for problem-aware method selection and sophisticated mathematical abstraction.
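A three-level library of this kind can be pictured as nested mappings from domain to subdomain to methods. The minimal Python sketch below assumes that layout and ranks methods by keyword overlap with the problem; the class names, keyword tags, and example entries are hypothetical, and keyword matching is a stand-in chosen for brevity, not a claim about MM-Agent's actual retrieval strategy.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Method:
    name: str
    keywords: set[str]  # hypothetical tags used for matching


@dataclass
class HMML:
    """Toy three-level library: domain -> subdomain -> methods."""
    tree: dict[str, dict[str, list[Method]]] = field(default_factory=dict)

    def add(self, domain: str, subdomain: str, method: Method) -> None:
        self.tree.setdefault(domain, {}).setdefault(subdomain, []).append(method)

    def retrieve(self, problem_keywords: set[str], top_k: int = 3) -> list[tuple[str, str, str]]:
        """Rank methods by keyword overlap, returning (domain, subdomain, method) paths."""
        scored = []
        for domain, subdomains in self.tree.items():
            for subdomain, methods in subdomains.items():
                for m in methods:
                    overlap = len(m.keywords & problem_keywords)
                    if overlap:
                        scored.append((overlap, domain, subdomain, m.name))
        # Highest overlap first; ties broken alphabetically by method name.
        scored.sort(key=lambda t: (-t[0], t[3]))
        return [(d, s, n) for _, d, s, n in scored[:top_k]]


lib = HMML()
lib.add("Optimization", "Mathematical Programming",
        Method("Linear Programming", {"allocate", "budget", "maximize"}))
lib.add("Optimization", "Metaheuristics",
        Method("Genetic Algorithm", {"search", "maximize"}))
lib.add("Dynamic Systems", "Differential Equations",
        Method("Logistic Growth Model", {"population", "growth"}))
print(lib.retrieve({"maximize", "budget"}))
```

Returning the full (domain, subdomain, method) path keeps the hierarchy visible to whatever component consumes the retrieved candidates.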

Figure 2: Overview of the MM-Agent framework. The workflow consists of four sequential phases: Problem Analysis, Mathematical Modeling, Computational Solving, and Solution Reporting.

Methodology of MM-Agent

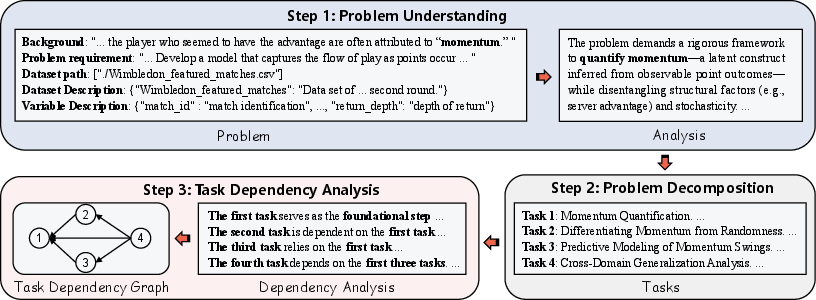

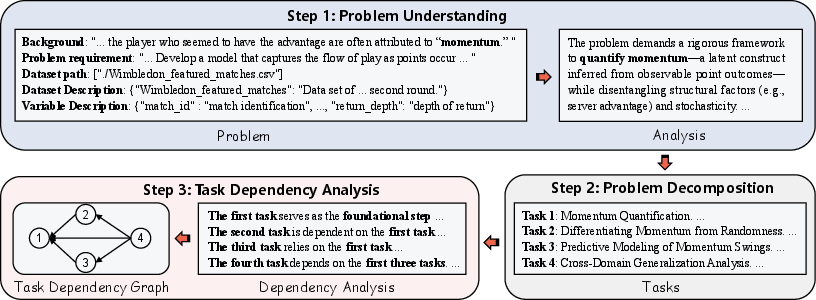

Problem Analysis Phase: MM-Agent begins by decomposing a complex problem statement into structured subtasks. This step identifies assumptions, objectives, and the logical dependencies among subtasks, which are then linked into a coherent dependency graph.
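A dependency graph of subtasks can be represented as a mapping from each subtask to the subtasks it relies on; processing them in topological order ensures prerequisites are handled first. The sketch below uses Python's standard `graphlib` module (Python 3.9+). The subtask names are hypothetical, and consuming the graph as a strict topological schedule is an assumption about how such a graph might be used.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical subtasks for a resource-allocation problem.
# Each key maps to the set of subtasks it depends on.
subtasks = {
    "estimate_demand": set(),
    "model_costs": set(),
    "optimize_allocation": {"estimate_demand", "model_costs"},
    "sensitivity_analysis": {"optimize_allocation"},
}


def solve_order(graph: dict[str, set[str]]) -> list[str]:
    """Return an order in which every subtask follows its prerequisites.

    Raises graphlib.CycleError if subtasks depend on each other circularly,
    which would signal an incoherent decomposition.
    """
    return list(TopologicalSorter(graph).static_order())


order = solve_order(subtasks)
print(order)

try:
    solve_order({"a": {"b"}, "b": {"a"}})
except CycleError:
    print("circular dependency detected")
```

Independent subtasks such as `estimate_demand` and `model_costs` may appear in either order, since neither depends on the other.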

Figure 3: The workflow of the problem analysis phase in MM-Agent.

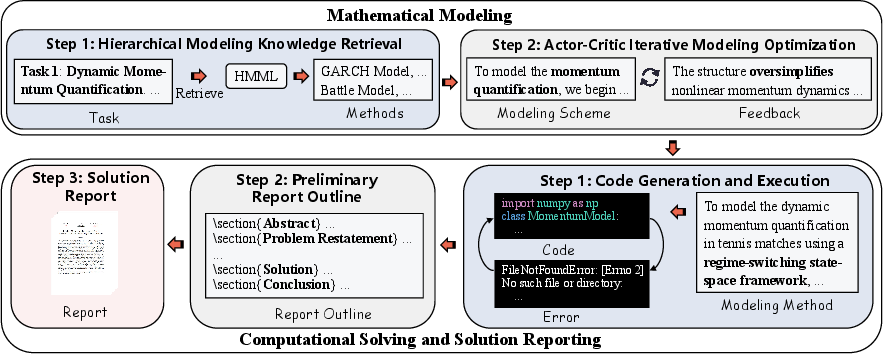

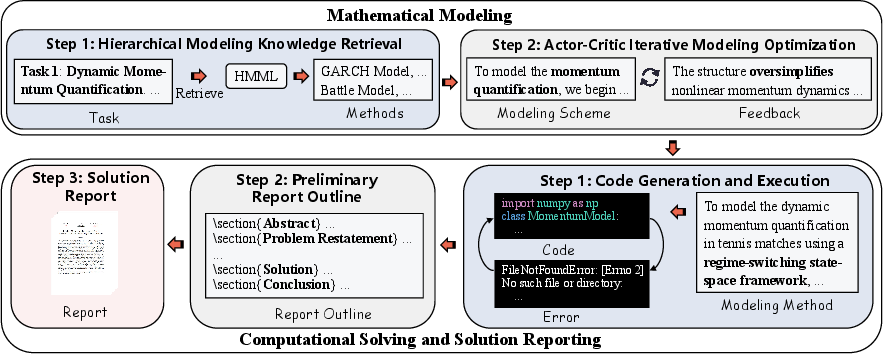

Mathematical Modeling Phase: The modeling phase is central to MM-Agent. Methods retrieved from HMML form the basis for mathematical abstraction, and an actor-critic mechanism refines the modeling strategy iteratively until the formulation reaches a satisfactory representation.
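The actor-critic refinement can be sketched as a propose, critique, and revise loop. In this minimal Python version, `actor` and `critic` are hypothetical callables standing in for model calls; the 0 to 10 score, the acceptance threshold, and the round limit are assumptions. This is not reinforcement-learning actor-critic, only the critique-driven refinement pattern described above.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Critique:
    score: float    # assumed 0-10 quality score
    feedback: str   # suggestions passed back to the actor


def refine(problem: str,
           actor: Callable[[str, str], str],
           critic: Callable[[str, str], Critique],
           threshold: float = 8.0,
           max_rounds: int = 3) -> tuple[str, Critique]:
    """Iteratively propose and critique formulations; return the best one found."""
    if max_rounds < 1:
        raise ValueError("max_rounds must be at least 1")
    feedback = ""
    best: Optional[tuple[str, Critique]] = None
    for _ in range(max_rounds):
        formulation = actor(problem, feedback)      # propose or revise
        critique = critic(problem, formulation)     # evaluate
        if best is None or critique.score > best[1].score:
            best = (formulation, critique)
        if critique.score >= threshold:             # good enough: stop early
            break
        feedback = critique.feedback
    return best


# Toy stand-ins for the actor and critic.
def toy_actor(problem: str, feedback: str) -> str:
    base = "maximize total profit"
    return base + " subject to the budget limit" if "budget" in feedback else base


def toy_critic(problem: str, formulation: str) -> Critique:
    if "subject to" in formulation:
        return Critique(9.0, "")
    return Critique(5.0, "add a budget constraint")


formulation, critique = refine("allocate a fixed budget", toy_actor, toy_critic)
print(formulation, critique.score)
```

Keeping the best-scoring formulation, rather than the last one, means a regression in a later round cannot overwrite an earlier, stronger model.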

Computational Solving and Solution Reporting: In these phases, MM-Agent executes the mathematical model with computational algorithms and then automatically synthesizes the findings into a structured report. The report explains the modeling process, experimental results, and derived insights, which keeps the solution transparent and interpretable.
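To illustrate the hand-off from solving to reporting, the Python sketch below numerically integrates a simple stand-in model, the logistic growth equation, checks the result against its closed-form solution, and packages the outcome into a report covering the modeling process, results, and insights. The model, the forward Euler solver, and the report keys are illustrative assumptions chosen for brevity, not MM-Agent's actual solver or report format.

```python
import math


def logistic_euler(p0: float, r: float, k: float, dt: float, steps: int) -> list[float]:
    """Integrate dP/dt = r * P * (1 - P / K) with forward Euler; return the trajectory."""
    trajectory = [p0]
    p = p0
    for _ in range(steps):
        p += dt * r * p * (1 - p / k)
        trajectory.append(p)
    return trajectory


def logistic_exact(p0: float, r: float, k: float, t: float) -> float:
    """Closed-form logistic solution, used to validate the numerical result."""
    return k / (1 + (k / p0 - 1) * math.exp(-r * t))


def build_report(p0: float, r: float, k: float, dt: float, steps: int) -> dict:
    """Run the model and assemble a structured summary of process, results, and insight."""
    trajectory = logistic_euler(p0, r, k, dt, steps)
    final = trajectory[-1]
    error = abs(final - logistic_exact(p0, r, k, dt * steps))
    return {
        "modeling_process": f"Logistic growth, r={r}, K={k}, forward Euler with dt={dt}",
        "results": {"final_value": final, "abs_error_vs_closed_form": error},
        "insights": f"After t={dt * steps:g}, the population is at {final / k:.1%} of capacity.",
    }


report = build_report(p0=10, r=0.5, k=100, dt=0.01, steps=1000)
print(report["insights"])
```

Validating against a known solution before writing up results is one simple way to make a generated report more trustworthy.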

Figure 4: The workflow of the mathematical modeling phase and computational solving and solution reporting phase of MM-Agent.

Experimental Results and Practical Applications

Experiments show that MM-Agent outperforms baseline modeling agents and scores over 11% higher than human solutions on MM-Bench. MM-Agent has also proven effective in competition, helping teams achieve top-tier results in MCM/ICM.

Cost Efficiency: The framework is inexpensive to run, at roughly \$0.88 per task, which makes it practical for large-scale deployment.
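As a back-of-the-envelope check, assuming the reported average of 0.88 USD per task applies uniformly to every problem (real per-task costs will vary), the following Python snippet estimates the cost of running all 111 MM-Bench problems. `Decimal` avoids floating-point rounding in currency arithmetic.

```python
from decimal import Decimal

COST_PER_TASK_USD = Decimal("0.88")  # reported average cost per task


def estimated_cost(num_tasks: int) -> Decimal:
    """Total cost under the (assumed) uniform per-task average."""
    return COST_PER_TASK_USD * num_tasks


print(estimated_cost(111))  # 97.68
```

Under that assumption, a full pass over the benchmark stays below 100 USD.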

Conclusion

MM-Agent marks a significant advance in applying LLMs beyond traditional reasoning tasks. By enabling autonomous mathematical modeling, it provides a robust framework for real-world problem-solving and extends the utility of LLMs across scientific and engineering contexts. Continued refinement of MM-Agent and exploration of new applications could further advance scalable, efficient AI-driven analysis and decision-making.