- The paper demonstrates that human-aware AI design improves team scores and enhances user satisfaction in collaborative tasks.

- Experimental results reveal that agents considering human intentions achieve more balanced human and AI scores, and Bayesian logistic regression analysis identifies the factors that predict participant preferences.

- The study underscores the need to fine-tune AI strategies to integrate both quantitative performance and qualitative user metrics for broader applications.

Human-AI collaboration requires balancing effective task performance against user satisfaction. This paper explores how AI agents can be designed to work in harmony with human users, evaluating them not only on quantitative performance metrics but also on qualitative measures of user experience.

Experimental Framework and Game Design

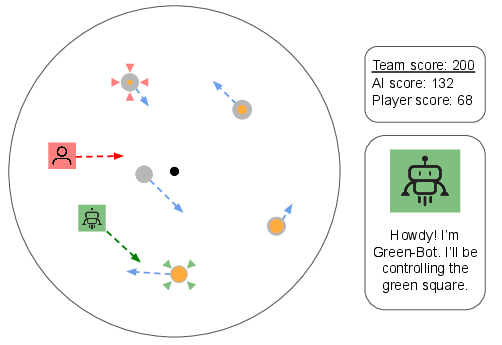

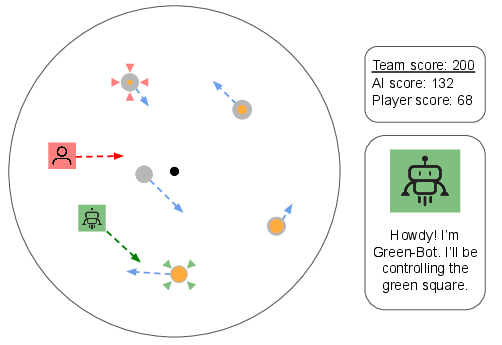

The study employs a collaborative target interception task, designed to simulate real-world decision-making scenarios where human and AI agents must work towards common goals. Participants engage with AI agents in a virtual, circular environment where both parties intercept targets to accumulate points.

Figure 1: Illustration of the collaborative target interception task where human and AI agents strive to achieve high scores by intercepting moving targets.

In this setup, targets appear with varying densities and values, demanding strategic planning and teamwork. The AI agents were programmed with differing collaborative strategies, ranging from egocentric performance-maximizing behaviors to human-aware strategies intended to complement the human player’s actions.

Analysis of AI Agent Strategies

Agent Variations and Their Impact

The five AI agents are all variations of the same basic search-based planning algorithm. Each variant extends that algorithm with specific rules meant to produce desirable collaborative traits, such as avoiding interference with human actions, maintaining spatial separation, and mimicking human player strategies.

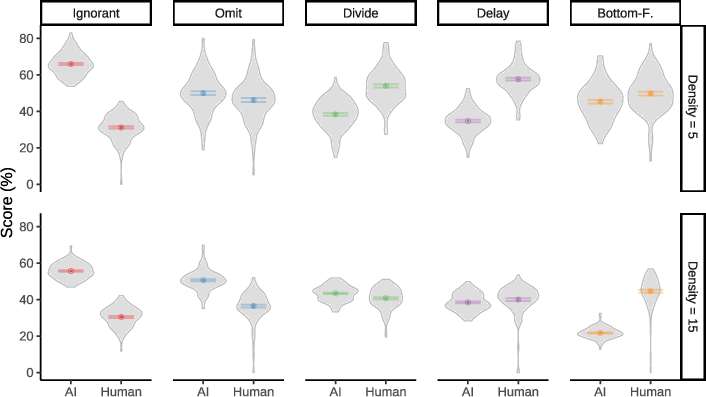

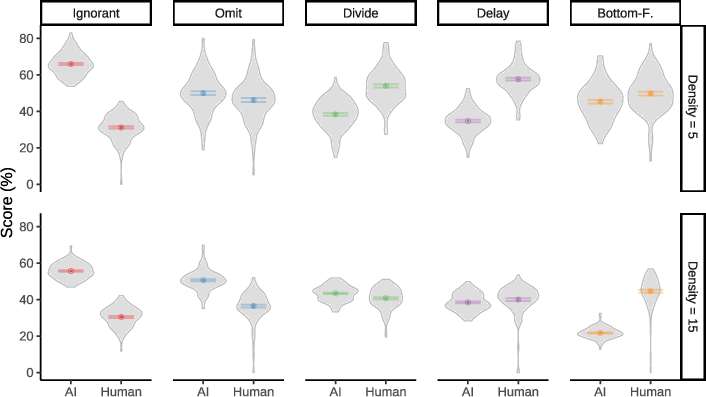

Performance analysis centered on both individual and team scores under varying target densities. The Ignorant agent, which disregarded human intentions, consistently showed high self-centered performance but at the cost of hindering human partner scoring.

Figure 2: Performance metrics across AI agents reflect varying AI-human performance dynamics influenced by AI strategy and target density.

Agents such as Omit, which take the human's intended targets into account, produced more balanced individual scores and often improved team scores, even under high target densities. This suggests that human-aware design can effectively align AI actions with those of human partners.
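The contrast between an agent that ignores the human and one that takes the human's intended target into account can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: the greedy value-per-distance heuristic, the `pick_target` function, the target layout, and the assumption that the human's intended target is already known are all hypothetical.

```python
import math

def pick_target(agent_pos, targets, human_target_id=None):
    """Greedily pick the target with the best value-per-distance ratio.

    If human_target_id is given, that target is skipped so the agent
    does not compete with the human for it (hypothetical rule).
    Returns None when no eligible target remains.
    """
    best_id, best_score = None, -math.inf
    for target_id, (x, y, value) in targets.items():
        if target_id == human_target_id:
            continue  # leave the human's intended target alone
        distance = math.hypot(x - agent_pos[0], y - agent_pos[1])
        score = value / (distance + 1e-9)  # small constant avoids division by zero
        if score > best_score:
            best_id, best_score = target_id, score
    return best_id

# Made-up layout: target id -> (x, y, point value)
targets = {"a": (1.0, 0.0, 5.0), "b": (0.0, 4.0, 8.0), "c": (-3.0, 0.0, 3.0)}
agent_pos = (0.0, 0.0)

ignorant_choice = pick_target(agent_pos, targets)
human_aware_choice = pick_target(agent_pos, targets, human_target_id="a")
print(ignorant_choice, human_aware_choice)
```

With this made-up layout, the unconstrained call selects target `a`, while the call that skips the human's intended target falls back to `b` and leaves `a` for the human.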

Subjective Preferences and User Experience

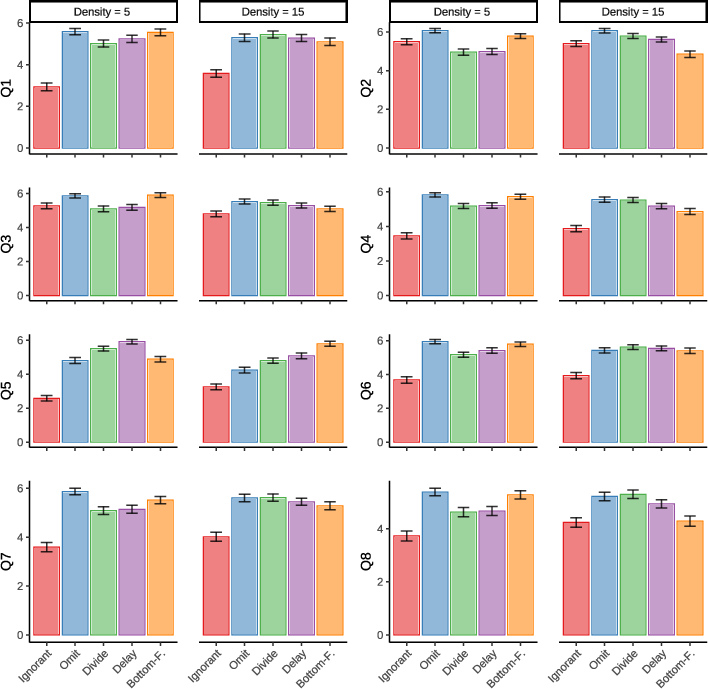

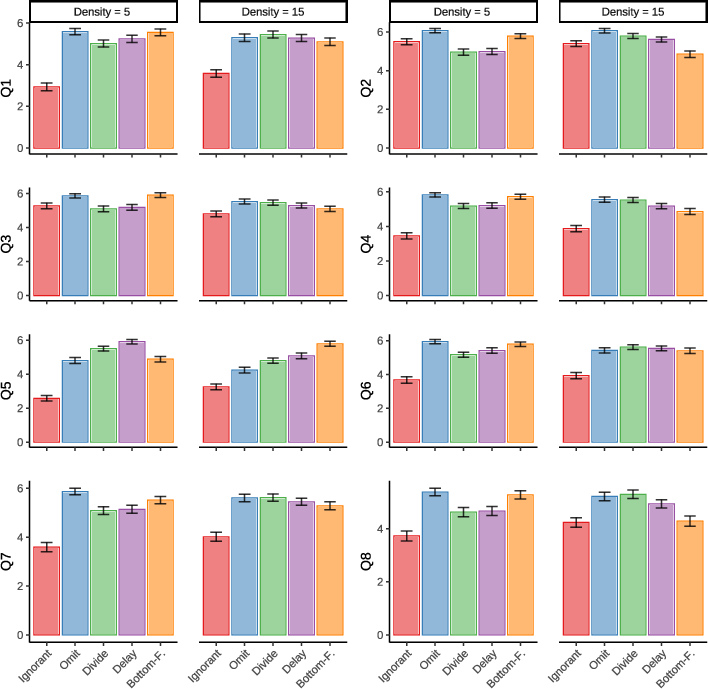

The questionnaire results reveal participants' preferences leaning towards agents perceived as more considerate of human intentions. The preference divergence highlights the complexity of human-AI interaction, where simple improvements in AI behavior can lead to substantial gains in user satisfaction.

Figure 3: Questionnaires assessed participant perceptions of AI agents, revealing trends towards preferences for agents displaying human-centric behaviors.

Participants favored agents that left room for meaningful human contributions. They were averse to large performance gaps between the two players, unless the AI sacrificed its own performance to elevate the human’s role. This pattern is consistent with inequality aversion: balanced performance between human and AI players was favored.

Predictive Models for AI Preferences

Bayesian logistic regression models were used to identify the key factors influencing human preferences. Both objective indicators, such as score equality, and subjective measures, such as perceived teamwork and ease of collaboration, showed notable predictive strength. Understanding human-AI team dynamics therefore requires considering both kinds of measure together.
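To make the modeling step concrete, the following sketch fits a logistic regression with a zero-mean Gaussian prior on the coefficients and returns the posterior mode (MAP estimate). It is a simplified stand-in under stated assumptions: the paper's actual priors, predictors, and inference procedure are not given here, a full Bayesian analysis would characterize the whole posterior rather than a single point, and the data, feature names, and `fit_map_logistic` function are invented for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(weights, bias, features):
    """Probability that an agent is preferred, given its features."""
    return sigmoid(bias + sum(w * x for w, x in zip(weights, features)))

def fit_map_logistic(X, y, prior_sd=1.0, lr=0.1, steps=2000):
    """Gradient ascent on the log posterior of a logistic regression.

    A zero-mean Gaussian prior with standard deviation prior_sd is
    placed on every coefficient, including the bias (an assumption).
    """
    weights = [0.0] * len(X[0])
    bias = 0.0
    n = len(X)
    for _ in range(steps):
        # Gradient of the log prior
        grad_w = [-w / prior_sd**2 for w in weights]
        grad_b = -bias / prior_sd**2
        # Gradient of the log likelihood
        for features, label in zip(X, y):
            error = label - predict_proba(weights, bias, features)
            for j, x in enumerate(features):
                grad_w[j] += error * x
            grad_b += error
        weights = [w + lr * g / n for w, g in zip(weights, grad_w)]
        bias += lr * grad_b / n
    return weights, bias

# Invented, standardized data: [score_equality, perceived_teamwork]
X = [[0.9, 0.8], [0.7, 0.6], [0.8, 0.2], [0.3, 0.9], [0.5, 0.4],
     [-0.2, -0.5], [-0.8, -0.3], [-0.6, -0.9], [-0.9, 0.1], [-0.4, -0.7]]
y = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]  # 1 = agent was preferred

weights, bias = fit_map_logistic(X, y)
p_balanced = predict_proba(weights, bias, [0.9, 0.8])
p_unbalanced = predict_proba(weights, bias, [-0.9, -0.8])
print(weights, bias, p_balanced, p_unbalanced)
```

In this toy data set both coefficients come out positive, so an agent with more equal scores and higher perceived teamwork receives a higher predicted preference probability. The prior acts as a regularizer that keeps the coefficients finite even though the invented data are linearly separable.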

Implications and Future Directions

Collaborative Design Considerations

The study shows that AI design choices shape collaborative outcomes in nuanced ways. Future algorithmic enhancements should consider not only raw performance metrics but also adaptability to human preferences, with the aim of complementing the human partner rather than competing with them.

Broader Socio-Technical Implications

These insights may extend beyond gaming contexts to fields where AI complements human decision-making, such as healthcare or autonomous driving. As AI systems become integral to collaborative environments, fostering user satisfaction alongside efficiency is crucial.

Conclusion

Designing AI agents around human preferences for equitable and considerate interaction can foster more effective and satisfying collaborations. This study supports the case for human-centered AI designs that respect user contributions, and for AI systems that integrate subjective user metrics with objective performance.

Through continued refinement of collaborative strategies, the field of human-AI interaction can move toward not only optimized performance but also greater user involvement and satisfaction.