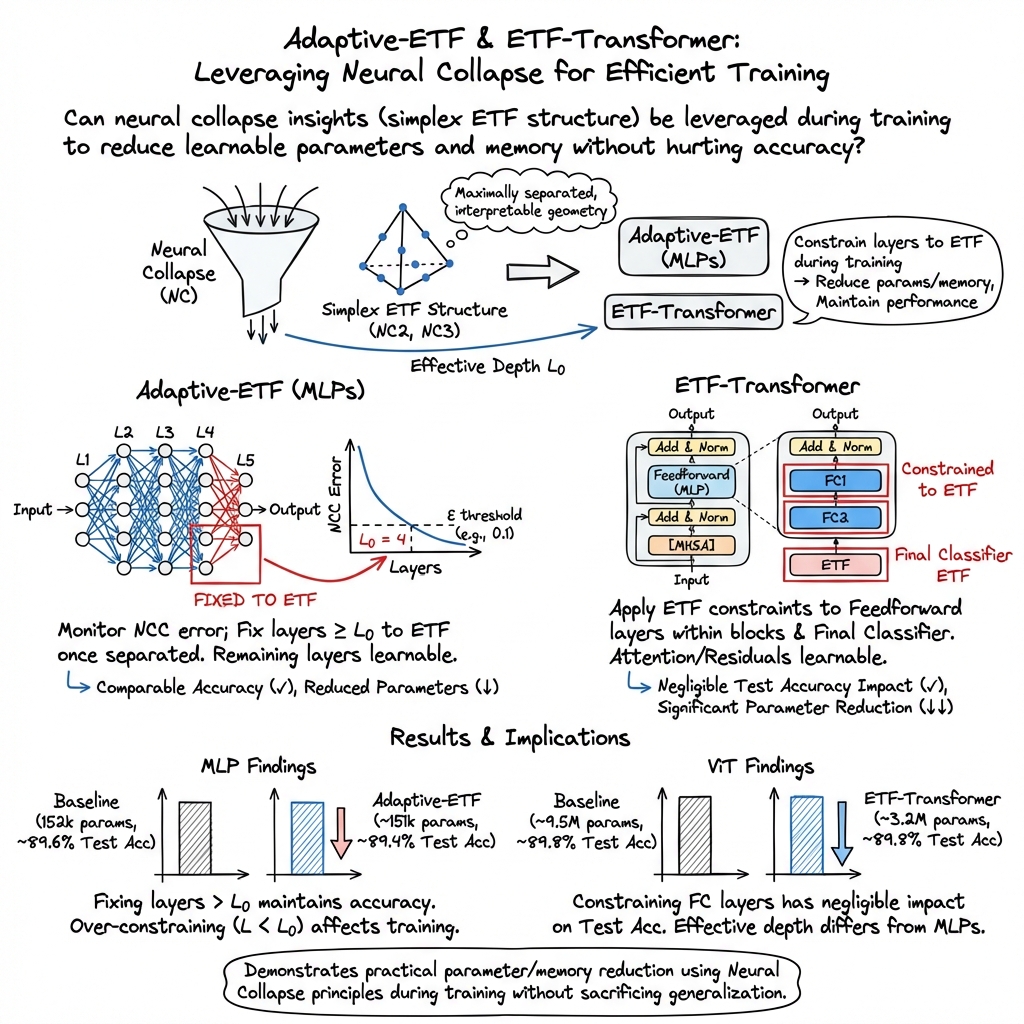

Leveraging Intermediate Neural Collapse with Simplex ETFs for Efficient Deep Neural Networks

Abstract: Neural collapse is a phenomenon observed during the terminal phase of neural network training, characterized by the convergence of network activations, class means, and linear classifier weights to a simplex equiangular tight frame (ETF), a configuration of vectors that maximizes mutual distance within a subspace. This phenomenon has been linked to improved interpretability, robustness, and generalization in neural networks. However, its potential to guide neural network training and regularization remains underexplored. Previous research has demonstrated that constraining the final layer of a neural network to a simplex ETF can reduce the number of trainable parameters without sacrificing model accuracy. Furthermore, deep fully connected networks exhibit neural collapse not only in the final layer but across all layers beyond a specific effective depth. Using these insights, we propose two novel training approaches: Adaptive-ETF, a generalized framework that enforces simplex ETF constraints on all layers beyond the effective depth, and ETF-Transformer, which applies simplex ETF constraints to the feedforward layers within transformer blocks. We show that these approaches achieve training and testing performance comparable to those of their baseline counterparts while significantly reducing the number of learnable parameters.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.