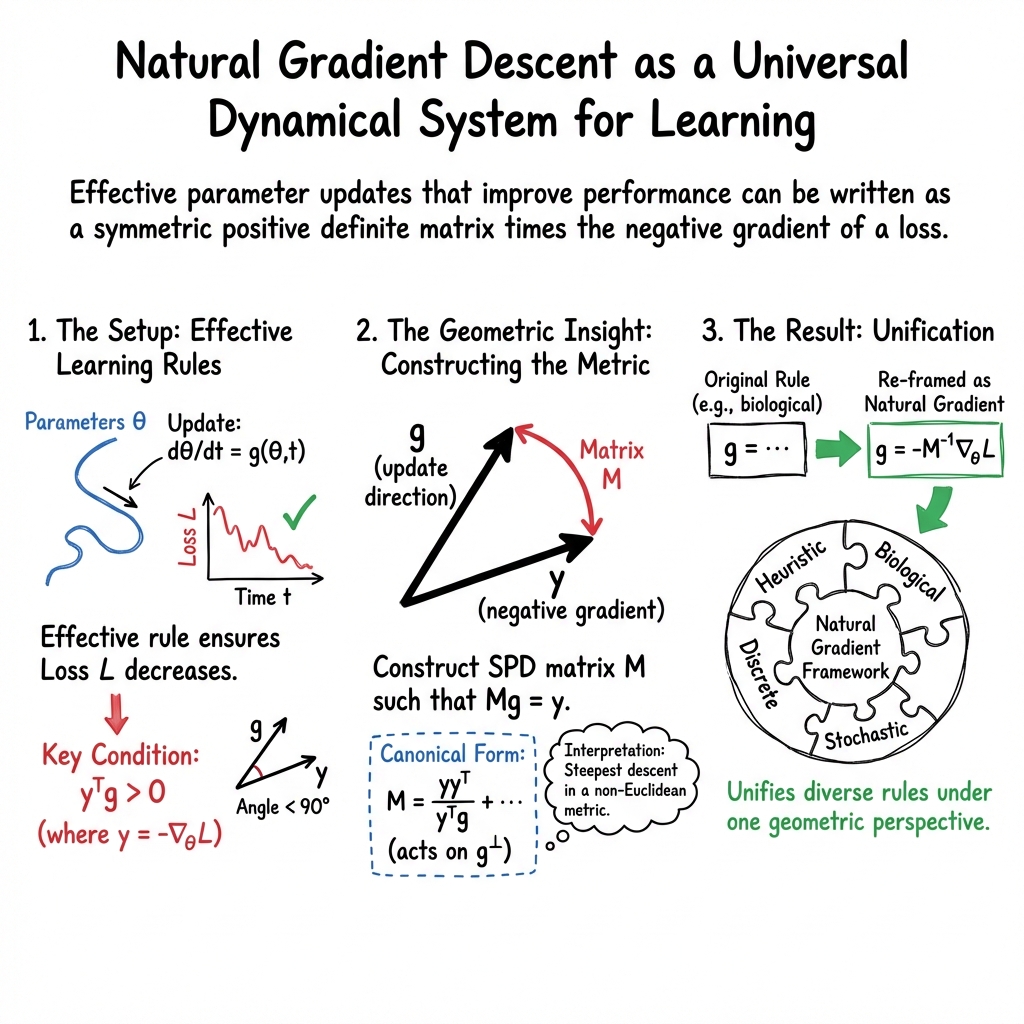

Is All Learning (Natural) Gradient Descent?

Abstract: This paper shows that a wide class of effective learning rules -- those that improve a scalar performance measure over a given time window -- can be rewritten as natural gradient descent with respect to a suitably defined loss function and metric. Specifically, we show that parameter updates within this class of learning rules can be expressed as the product of a symmetric positive definite matrix (i.e., a metric) and the negative gradient of a loss function. We also demonstrate that these metrics have a canonical form and identify several optimal ones, including the metric that achieves the minimum possible condition number. The proofs of the main results are straightforward, relying only on elementary linear algebra and calculus, and are applicable to continuous-time, discrete-time, stochastic, and higher-order learning rules, as well as loss functions that explicitly depend on time.
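To make the central claim concrete, the following is a minimal sketch, not the paper's own construction. It takes a parameter update `u` and a negative gradient `g` with positive overlap (`u · g > 0`, meaning the update decreases the loss to first order) and builds one symmetric positive definite matrix `M` satisfying `M g = u`. The formula `M = u uᵀ / (u · g) + (I − g gᵀ / (g · g))` is an assumption chosen for simplicity. The function names and numeric values are hypothetical, and no claim is made that this `M` matches the canonical or optimal metrics mentioned in the abstract.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def metric_from_update(update, neg_grad):
    """Return a symmetric positive definite M (list of rows) with M @ neg_grad == update.

    Requires dot(update, neg_grad) > 0. Illustrative construction only:
    M = u u^T / (u.g) + (I - g g^T / (g.g)).
    """
    overlap = dot(update, neg_grad)
    if overlap <= 0:
        raise ValueError("update must have positive overlap with the negative gradient")
    g_norm_sq = dot(neg_grad, neg_grad)
    n = len(update)
    return [
        [
            update[i] * update[j] / overlap
            + (1.0 if i == j else 0.0)
            - neg_grad[i] * neg_grad[j] / g_norm_sq
            for j in range(n)
        ]
        for i in range(n)
    ]


def matvec(matrix, vec):
    return [dot(row, vec) for row in matrix]


def is_positive_definite(matrix):
    """Attempt a Cholesky factorization; for symmetric input it succeeds iff the matrix is PD."""
    n = len(matrix)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            acc = matrix[i][j] - sum(lower[i][k] * lower[j][k] for k in range(j))
            if i == j:
                if acc <= 0:
                    return False
                lower[i][j] = math.sqrt(acc)
            else:
                lower[i][j] = acc / lower[j][j]
    return True


# Made-up example values: g is the negative gradient, u is some learning rule's step.
neg_grad = [1.0, 0.0, -2.0]
update = [0.5, 1.0, -0.3]  # overlap u.g = 0.5 + 0.6 = 1.1 > 0

M = metric_from_update(update, neg_grad)
recovered = matvec(M, neg_grad)  # should reproduce `update` up to rounding
```

The first term maps `g` onto `u`, while the second is the projector onto the complement of `g`, which annihilates `g` and keeps `M` positive definite in every other direction. If the overlap is not positive, no such metric exists, which is why the sketch raises an error in that case.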
