Making Large Language Models into World Models with Precondition and Effect Knowledge

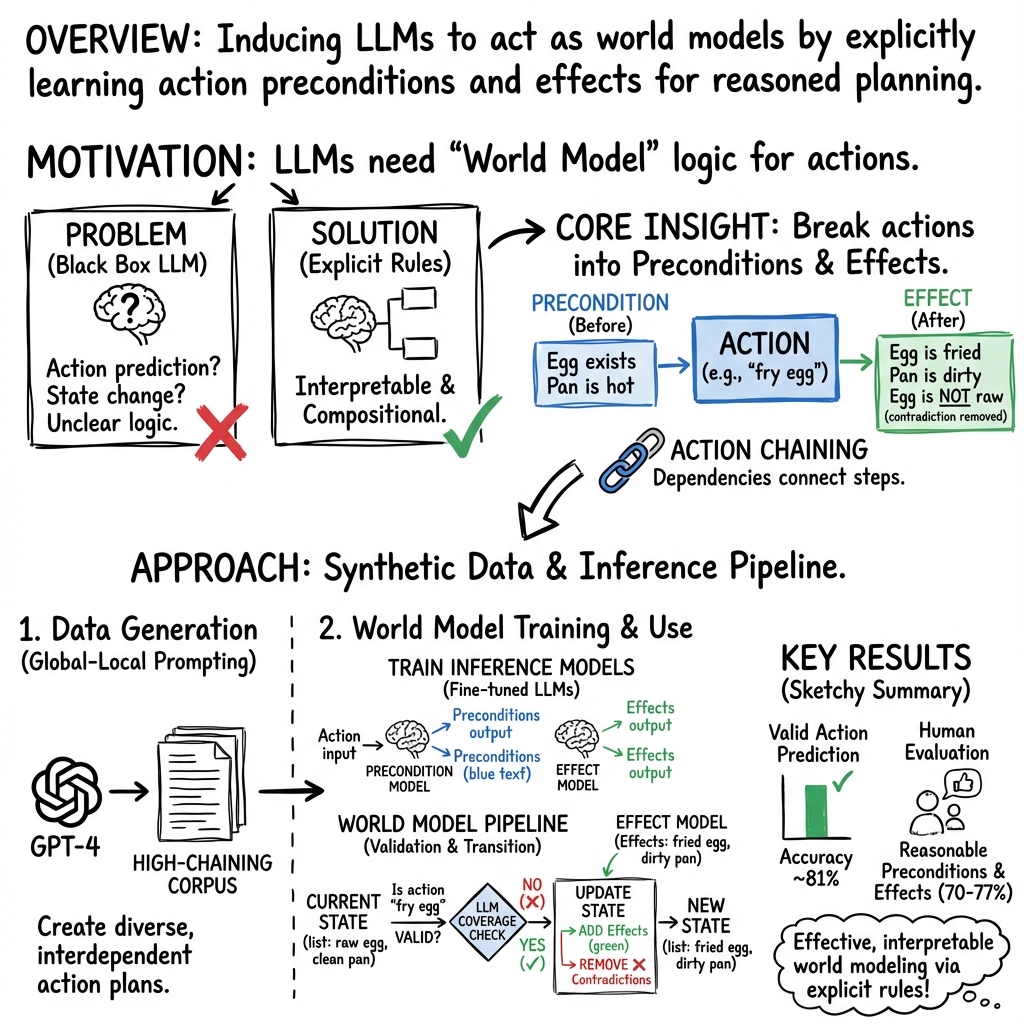

Abstract: World models, which encapsulate the dynamics of how actions affect environments, are foundational to the functioning of intelligent agents. In this work, we explore the potential of LLMs to operate as world models. Although LLMs are not inherently designed to model real-world dynamics, we show that they can be induced to perform two critical world model functions: determining the applicability of an action based on a given world state, and predicting the resulting world state upon action execution. This is achieved by fine-tuning two separate LLMs, one for precondition prediction and another for effect prediction, while leveraging synthetic data generation techniques. Through human-participant studies, we validate that the precondition and effect knowledge generated by our models aligns with human understanding of world dynamics. We also analyze the extent to which the world model trained on our synthetic data results in an inferred state space that supports the creation of action chains, a necessary property for planning.

Explain it Like I'm 14

Overview

This paper explores a big question in AI: can large language models (LLMs), the kind of AI that reads and writes text, learn to predict how actions change the world? The authors show a way to make LLMs act like “world models” by teaching them two things: when an action is possible and what changes after the action is done. They focus on real-life tasks like cooking, where actions depend on each other (for example, you need boiling water before you can cook pasta).

Objectives

The paper aims to answer two simple questions:

- Can an AI decide if an action can be done right now, given the current situation? (This is called checking “preconditions.”)

- If the action can be done, can the AI predict how the situation will change after doing it? (This is called predicting “effects,” or the new “state.”)

Methods (Explained Simply)

Think of a world model like a smart recipe engine. It needs to know:

- Preconditions: what must be true before you can do something.

- Effects: what becomes true after you do it.

To build this, the authors trained two separate LLMs:

- One model reads an action (like “add sea salt to boiling water”) and writes its preconditions (for example, “water is boiling,” “sea salt is available”).

- Another model reads the same action and writes its effects (for example, “water is seasoned with sea salt”).

Because there wasn’t a good dataset with lots of action dependencies (where steps really rely on other steps), they created one using GPT-4. To make sure the data was useful and had strong “action chaining” (steps depending on other steps), they used a “global-local prompting” technique:

- First, ask the AI to write a full plan (like a cooking plan with many steps).

- Next, remove steps that don’t connect well to others, and sometimes add better steps to improve the plan.

- Then, ask the AI to produce preconditions and effects for each step.

- Check and fix steps that still don’t connect well.

- Finally, filter out whole plans with weak connections and keep the ones with strong chaining.
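The final filtering step can be pictured with a small sketch. This is not the authors' pipeline (they prompt GPT-4 to judge the connections); it is a minimal illustration that assumes exact string matching between preconditions and earlier effects. The names `Step`, `chained_steps`, `chaining_ratio`, `keep_plan`, and the 0.5 threshold are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    """One plan step with its natural-language preconditions and effects."""
    action: str
    preconditions: list[str] = field(default_factory=list)
    effects: list[str] = field(default_factory=list)


def chained_steps(plan: list[Step]) -> list[bool]:
    """For each step, report whether at least one precondition is produced
    by the effects of an earlier step (exact match; an assumption)."""
    seen_effects: set[str] = set()
    flags = []
    for step in plan:
        flags.append(any(p in seen_effects for p in step.preconditions))
        seen_effects.update(step.effects)
    return flags


def chaining_ratio(plan: list[Step]) -> float:
    """Fraction of non-initial steps that depend on an earlier step."""
    flags = chained_steps(plan)[1:]  # the first step has no predecessors
    return sum(flags) / len(flags) if flags else 0.0


def keep_plan(plan: list[Step], threshold: float = 0.5) -> bool:
    """Keep a plan only if enough of its steps are chained (hypothetical rule)."""
    return chaining_ratio(plan) >= threshold


plan = [
    Step("boil water", ["pot is filled with water"], ["water is boiling"]),
    Step("add sea salt to boiling water",
         ["water is boiling", "sea salt is available"],
         ["water is seasoned with sea salt"]),
    Step("cook pasta", ["water is boiling"], ["pasta is cooked"]),
    Step("set the table", ["plates are available"], ["table is set"]),
]
print(chained_steps(plan), chaining_ratio(plan), keep_plan(plan))
```

In this toy plan, "set the table" does not depend on any earlier step, so a local check might discard it, while the plan as a whole would pass the global filter.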

Once they had this dataset, they fine-tuned two models (based on FLAN-T5) to predict preconditions and effects. They also built “semantic matching” tools (using GPT-4) that compare the predicted preconditions or effects to the current state, even if the wording is different, to decide if an action is valid and how the state should be updated.

A cooking example helps explain:

- Old state: “sea salt is available,” “pepper has been used up,” “the water is boiling.”

- Action: “add pepper to the boiling water.”

- Result: invalid, because the precondition “pepper is available” isn’t true.

- Action: “add sea salt to the boiling water.”

- Result: valid; new state includes “the water is seasoned with sea salt.”
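The cooking example above can be traced in code. The sketch below is illustrative only: the hard-coded `KNOWLEDGE` table stands in for the two fine-tuned models, and `exact_match` stands in for the GPT-4-based semantic matcher, so every name here is an assumption rather than the paper's implementation.

```python
from typing import Callable, Iterable, Optional

# A matcher decides whether `fact` holds in `state`. The paper uses a
# GPT-4-based semantic matcher; exact string matching is a stand-in here.
Matcher = Callable[[str, frozenset], bool]


def exact_match(fact: str, state: frozenset) -> bool:
    return fact in state


def is_valid(preconditions: Iterable[str], state: frozenset,
             matches: Matcher = exact_match) -> bool:
    """Valid action prediction: every precondition must hold in the state."""
    return all(matches(p, state) for p in preconditions)


def transition(state: frozenset, preconditions: Iterable[str],
               effects: Iterable[str], contradicted: Iterable[str] = (),
               matches: Matcher = exact_match) -> Optional[frozenset]:
    """State transition prediction: return the new state, or None if the
    action is invalid. `contradicted` lists facts to delete; in the paper
    these are found by GPT-based contradiction detection."""
    if not is_valid(preconditions, state, matches):
        return None
    return (state - frozenset(contradicted)) | frozenset(effects)


# Hypothetical precondition/effect knowledge for the two example actions.
KNOWLEDGE = {
    "add pepper to the boiling water": (
        ["pepper is available", "the water is boiling"],
        ["the water is seasoned with pepper"],
    ),
    "add sea salt to the boiling water": (
        ["sea salt is available", "the water is boiling"],
        ["the water is seasoned with sea salt"],
    ),
}

old_state = frozenset({
    "sea salt is available",
    "pepper has been used up",
    "the water is boiling",
})

pepper_result = transition(old_state, *KNOWLEDGE["add pepper to the boiling water"])
salt_result = transition(old_state, *KNOWLEDGE["add sea salt to the boiling water"])
print(pepper_result)        # invalid action, so no new state
print(sorted(salt_result))  # old facts plus the new seasoning fact
```

Passing the matcher as a parameter keeps the validity check and the state update separate from the question of how two differently worded facts are judged to mean the same thing.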

Main Findings

The authors tested their approach with both computer-based metrics and human reviews. Here’s what they found:

- The synthetic dataset was high quality:

  - 93% of sampled action entries (with their preconditions/effects) were judged reasonable by human reviewers.

  - 87% of sampled plans showed strong action chaining (steps depended on each other in meaningful ways).

- The precondition and effect models worked well:

  - Human reviewers agreed that 77% of predicted preconditions and 70% of predicted effects matched common sense.

  - Computer scoring also showed strong performance, and removing their special filtering steps (“global-local” checks) made the models worse, so those steps were important.

- The full world model (preconditions + effects + matching) did the two main jobs accurately:

  - It correctly judged whether actions were valid about 82% of the time.

  - It predicted state changes in a way that matched human reasoning for about 63% of tested cases.

- The “search space” (all the states and actions the system can reason about) was flexible:

  - For new, never-before-seen actions, 83.5% could be made valid by the model’s state space (meaning their preconditions could be satisfied).

  - On average, there were about 9.7 different ways to satisfy a new action’s preconditions, showing the model isn’t just memorizing one recipe; it can combine steps creatively.
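To give a feel for what "ways to satisfy a new action's preconditions" could mean, here is one possible counting scheme. It is an assumption for illustration, not the paper's procedure: each precondition is satisfied by any known action whose effects contain it (exact match), and the number of ways is the product of those counts. The helper names and the toy action library are hypothetical.

```python
from math import prod


def providers(precondition: str, effects_by_action: dict[str, list[str]]) -> list[str]:
    """Known actions whose effects contain the precondition (exact match)."""
    return sorted(a for a, effs in effects_by_action.items() if precondition in effs)


def ways_to_satisfy(preconditions: list[str],
                    effects_by_action: dict[str, list[str]]) -> int:
    """Number of provider combinations, one provider per precondition.
    Returns 0 when some precondition has no provider at all."""
    return prod(len(providers(p, effects_by_action)) for p in preconditions)


# Hypothetical library of known actions and their effects.
library = {
    "boil water on the stove": ["the water is boiling"],
    "boil water in an electric kettle": ["the water is boiling"],
    "buy sea salt": ["sea salt is available"],
    "chop onions": ["onions are chopped"],
}

salt_ways = ways_to_satisfy(["the water is boiling", "sea salt is available"], library)
pepper_ways = ways_to_satisfy(["the water is boiling", "pepper is available"], library)
print(salt_ways, pepper_ways)
```

Under this scheme, an action counts as "could be made valid" when the result is greater than zero, and averaging the counts over many new actions would give a figure comparable in spirit to the one reported.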

Why This Is Important

World models are key for planning and decision-making. If an AI can tell you whether an action is possible and what will happen next, it can help plan tasks in the real world—like cooking, assembling furniture, or even guiding robots—without needing perfect physical simulations.

This paper shows a practical way to turn LLMs into useful world models by:

- Teaching them action rules (preconditions and effects).

- Letting them check those rules against a current situation.

- Predicting how things will change.

This makes the AI’s reasoning more understandable and more reliable than just guessing or “freewriting” answers.

Implications and Potential Impact

In simple terms, this research could help build AIs that plan better, explain their decisions, and adapt to new tasks. For example:

- Smart assistants could guide you through complex tasks step-by-step, checking that each step is possible before doing it.

- Robots could use these models to plan actions in kitchens, warehouses, or hospitals.

- Educational tools could teach students how actions lead to outcomes, like science experiments or cooking projects.

However, there are limits. The AI still learns from text, not from real physics, and unusual situations might confuse it. The synthetic data, while helpful, can also carry biases. Even so, this approach is a solid step toward AIs that can reason about the world in a more grounded and explainable way.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, consolidated list of what remains missing, uncertain, or unexplored in the paper, written to be concrete and actionable for future research.

- Generalization beyond the cooking domain: The approach is only tested on dish cooking; it is unclear how well it transfers to domains with different ontologies (e.g., robotics, healthcare workflows, manufacturing) and richer dynamics.

- Grounded validation: No evaluation with real-world or simulated execution to verify that predicted preconditions/effects and state transitions lead to successful task completion when actions are actually carried out.

- Planning performance: The paper analyzes “search space” but does not integrate or benchmark an actual planner end-to-end (e.g., plan synthesis success rate, plan optimality, sample efficiency) using the induced world model.

- Causal reasoning vs. pattern matching: The models do not explicitly learn causal structure; there is no assessment of whether the induced preconditions/effects support counterfactual reasoning or causal inference.

- Uncertainty and stochasticity: Effects are treated as deterministic text updates; there is no modeling or evaluation of uncertain outcomes, probabilistic preconditions, or stochastic state transitions.

- Temporal dynamics: Preconditions/effects lack temporal semantics (durations, deadlines, ordering constraints, persistence/decay), and there is no handling of time-dependent or concurrent actions.

- Resource and quantity constraints: The state representation is purely textual and does not capture numeric resources (e.g., amounts of ingredients, timing, temperature), capacities, or continuous variables.

- Contradiction handling: “Deletion” relies on GPT-based contradiction detection with unclear criteria; there is no formal semantics for conflict resolution, negation, or mutually exclusive state facts.

- State canonicalization: World states are free-form natural language; there is no investigation into canonicalization, normalization, or schema design to reduce ambiguity and improve match consistency.

- Scalability of semantic matching: Matching preconditions/effects to states uses GPT-4-based modules; the computational cost, latency, and scalability to long state histories or large action sets are unquantified.

- Model dependency and circularity: GPT-4 is used to generate the synthetic corpus, perform semantic matching, and refactor states; this introduces potential circularity and bias, and the paper does not quantify its impact.

- Reproducibility with open models: Reliance on proprietary GPT-4/GPT-4o for data generation and evaluation limits reproducibility; it is unclear how performance degrades with fully open-source LLMs.

- Data quality and bias: The synthetic corpus creation process may encode biases or idiosyncratic GPT-4 behaviors; there is no systematic bias audit or error taxonomy of the generated preconditions/effects.

- Prompt sensitivity: The effectiveness of the global-local prompting pipeline is not evaluated under prompt variations or different seed LLMs; robustness to prompt changes remains unknown.

- Coverage vs. diversity trade-offs: The corpus emphasizes action chaining, but there is no analysis of coverage (breadth of action types) versus depth (chain length), nor of how this affects generalization.

- Granularity of state facts: The paper does not study how granular preconditions/effects should be (atomic vs. composite facts), or how granularity impacts matching accuracy and downstream planning.

- Negative and disabling conditions: While enabling preconditions are inferred, there is limited exploration of disabling conditions, constraints, or safeguards (e.g., safety rules, do-not-execute states).

- Long-horizon consistency: There is no evaluation of error accumulation across long action chains, nor mechanisms for state consistency checking or repair over extended sequences.

- Partial observability: The approach assumes full knowledge of the current state; handling hidden variables, noisy observations, and belief states is not addressed.

- Multi-agent and exogenous events: Interactions among multiple agents or external events that affect the state (e.g., environment changes) are not modeled or tested.

- Formal semantics and interoperability: There is no mapping to structured planning formalisms (e.g., PDDL, STRIPS) or evaluation of interoperability with classical planners and verification tools.

- Comparative baselines: The paper lacks comparisons to existing methods for extracting action models from text (e.g., ProPara, VerbNet-based systems, narrative model acquisition) or to world-model baselines.

- Evaluation metrics: Textual metrics (F1, BLEU, ROUGE, SMS) may not reflect correctness of state transitions; task-level, causal, or formal validation metrics are not employed.

- Human evaluation scope: Human studies are small-scale and domain-specific; there is no evaluation across diverse annotator populations, tasks, or adversarial cases to stress-test commonsense alignment.

- Error analysis: No detailed analysis of typical failure modes (e.g., missing preconditions, spurious effects, incorrect deletions), their root causes, or targeted mitigation strategies.

- Safety and robustness: There is no examination of robustness to adversarial prompts, misleading states, or safety-critical actions; fail-safe mechanisms and uncertainty-aware decision-making are absent.

- Data leakage risks: Using GPT-4 to evaluate or refactor outputs may leak training priors into evaluation; the paper does not control for or report such leakage.

- Domain adaptation: There is no study of how much fine-tuning data is needed to adapt to new domains, nor of transfer learning from the cooking corpus to other settings.

- Efficiency and compute: Training/inference compute costs and throughput are not reported, which limits understanding of practical deployment in large-scale agents.

- Multilinguality: The framework is English-only; it is unknown whether precondition/effect induction and matching are reliable across languages and cultural contexts.

- Continuous improvement loop: No mechanism is proposed to iteratively validate, correct, and update the world model from grounded experiences or user feedback to reduce model drift and errors.

Practical Applications

Immediate Applications

The following applications can be deployed now, leveraging the paper’s demonstrated approach to induce LLMs to perform valid action prediction and state transition prediction via precondition/effect knowledge.

- Healthcare (administration and training): Procedure and checklist validators for non-clinical workflows (e.g., patient intake, sterilization prep, inventory management)

- Potential tools/products/workflows: “Procedure Validator” that flags missing preconditions, simulates state transitions after steps; integration with hospital SOP documentation systems

- Assumptions/Dependencies: Domain-specific fine-tuning with vetted corpora; restricted to non-safety-critical tasks until formal verification; access to LLMs capable of semantic matching

- Education (STEM labs and vocational training): Interactive tutors that assess students’ lab protocols or cooking plans by checking preconditions and predicting effects

- Potential tools/products/workflows: Lab protocol coach; recipe planning app that adapts to pantry constraints and offers alternative chains (the paper reports about 9.7 ways, on average, to satisfy a new action’s preconditions in its cooking corpus)

- Assumptions/Dependencies: Accurate mapping from textual states to real classroom/lab contexts; curated domain corpora for lab safety and materials; human oversight for grading and feedback

- Software (DevOps, SRE): Runbook auditor that validates whether operational steps (e.g., “restart service,” “rotate keys”) are applicable given current system state, and predicts resulting state changes

- Potential tools/products/workflows: CI/CD “Precondition Checker” before deployments; runbook linting; change-impact simulator

- Assumptions/Dependencies: Structured representation of system state (inventory, service status, configs); connectors to observability data; domain-specific fine-tuning on ops manuals

- Manufacturing (procedural documentation QA): Authoring assistant for assembly instructions that enforces action chaining (preconditions of step N covered by effects of steps 1…N−1)

- Potential tools/products/workflows: SOP coherence checker; action-chain coverage analyzer; “missing preconditions/effects” detector

- Assumptions/Dependencies: Textual SOPs that can be parsed to states/actions; domain adaptation of the models; limited deployment to documentation QA (not autonomous control)

- Robotics (simulation and high-level planning): Text-based planning assistant for simulated environments (e.g., household tasks) that determines action applicability and projected states

- Potential tools/products/workflows: Planner scaffolding for robot task design; validation of task sequences before executing in simulation

- Assumptions/Dependencies: Stays in simulation or controlled demos; no direct actuation without perception grounding; requires mapping from natural-language states to simulator variables

- Knowledge engineering (planning domain modeling): Automatic extraction of action schemas (preconditions/effects) from manuals to seed PDDL models or model-based planners

- Potential tools/products/workflows: “Schema Extractor” to bootstrap planners; bridging natural language SOPs to formal action models

- Assumptions/Dependencies: Domain text availability; human-in-the-loop validation; alignment between natural language and planner formalisms

- Games and interactive fiction: World-model-as-a-service for text games that validates actions in a given narrative state and predicts state transitions to improve causal consistency

- Potential tools/products/workflows: Narrative planning engine; authoring tool that ensures causal coherence in branching stories

- Assumptions/Dependencies: Purely textual environments; reliance on semantic matching robustness; tuning for narrative domains

- Data science/AI tooling: Synthetic corpus generation for action-chained domains using the paper’s global-local prompting technique

- Potential tools/products/workflows: “Synthetic Dataset Maker” for precondition/effect corpora; benchmarking suites for action chaining

- Assumptions/Dependencies: Access to high-quality base models (e.g., GPT-4) for data generation; bias checks; domain expert review

- Personal productivity (daily life): Smart assistants for complex tasks (meal planning, home organization) that check whether steps are valid (e.g., “Do I have the tools? Is the surface clean?”) and simulate outcomes

- Potential tools/products/workflows: Recipe companion that validates ingredients and equipment; step-by-step planner with alternatives (leveraging the search-space analysis)

- Assumptions/Dependencies: User-provided or tracked “world state” (inventory, tools); consumer-grade LLM access; clarity in textual state descriptions

- Policy and compliance (documentation hygiene): Prototype checkers for SOP completeness and internal consistency in non-safety-critical domains

- Potential tools/products/workflows: “SOP Consistency Checker” that identifies steps lacking preconditions/effects or weak chaining

- Assumptions/Dependencies: Constrained scope; human compliance officers review outputs; domain adaptation to policy language

Long-Term Applications

The following applications require further research, scaling, multi-modal integration, or domain certification, especially given limitations noted in the paper (e.g., lack of true causal reasoning, synthetic data biases).

- Healthcare (clinical decision support and procedure execution): Verifiable action-chain planners for clinical workflows (e.g., medication administration, surgical prep), with formal guarantees

- Potential tools/products/workflows: “Clinical Plan Validator” integrated with EHR; precondition checks against patient state; audit trails of predicted state transitions

- Assumptions/Dependencies: Regulatory approval; multimodal grounding (labs, vitals); formal verification; extensive domain-specific training

- Energy and industrial operations (digital twins): Natural-language interfaces to plant digital twins that validate procedures and simulate next-state impacts across systems

- Potential tools/products/workflows: Procedure simulation assistants; precondition/effect-aware scenario planning and HAZOP prototyping

- Assumptions/Dependencies: Real-time data integration; mapping textual states to formal plant models; safety certifications; domain-grade accuracy

- Autonomous robotics (embodied agents): Multi-modal world models that combine LLM precondition/effect reasoning with perception and control for robust household and industrial tasks

- Potential tools/products/workflows: High-level planner using preconditions/effects with sensor-grounded state updates; task generalization across environments

- Assumptions/Dependencies: Reliable perception-to-text state encoding; continuous learning; safety constraints; sample-efficient training

- Software (self-healing systems): Automated remediation planners that derive actions from incident states, verify preconditions, simulate effects, and execute or recommend fixes

- Potential tools/products/workflows: Ops copilots that close tickets autonomously; rollback/roll-forward simulators; “world model” backed AIOps engines

- Assumptions/Dependencies: High-quality observability and configuration state modeling; guardrails; change-management integration

- Public policy and governance (procedural standards): Standardization of machine-readable precondition/effect representations for SOPs, enabling automated audits and simulations

- Potential tools/products/workflows: National/sector standards for action schemas; compliance testing tools that simulate procedure chains under varying conditions

- Assumptions/Dependencies: Stakeholder agreement on formats; legal/regulatory buy-in; tooling ecosystem; risk assessment methods

- Education (competency-based training with simulations): Scenario-based evaluation platforms that track learner actions, verify preconditions, and model outcomes for complex tasks (e.g., EMT training)

- Potential tools/products/workflows: Multi-step skill assessment with dynamic state tracking; adaptive tutoring via alternative action chains

- Assumptions/Dependencies: High-fidelity domain modeling; integration with simulators; validation against expert ground truth

- Finance (operational risk and process compliance): World-model-based auditors for back-office processes (e.g., reconciliation, KYC), checking step validity and downstream effects

- Potential tools/products/workflows: “Process Chain Auditor” for compliance; effect-aware change management in workflows

- Assumptions/Dependencies: Sensitive-data handling; formal mapping of process states; regulatory alignment; high accuracy thresholds

- Logistics and supply chain (contingency planning): Action-chain planners that validate steps (e.g., “allocate stock,” “reroute shipment”) and simulate state changes under constraints

- Potential tools/products/workflows: Alternative route/sourcing planners using precondition/effect chains; stress-test scenarios

- Assumptions/Dependencies: Accurate state visibility (inventory, ETAs); integrations with TMS/WMS; domain-specific training

- Knowledge graph and planning integration (cross-domain): Unified pipelines that translate textual SOPs to formal planners (PDDL) and knowledge graphs, supporting model-based planning across sectors

- Potential tools/products/workflows: NL-to-PDDL compilers; KG-backed planners that enforce action chaining; hybrid neurosymbolic systems

- Assumptions/Dependencies: Robust semantic mapping; schema alignment; explainability tooling; performance on long horizons

- Consumer assistants (generalist task planning): Household copilots that plan multi-step tasks (home repair, cooking, travel), verify prerequisites, predict outcomes, and propose alternatives

- Potential tools/products/workflows: “Home Task Planner” with inventory/tool tracking; dynamic replanning when constraints change

- Assumptions/Dependencies: Persistent state tracking; multimodal perception (images of pantry/tools); user trust and safety guardrails

- Safety-critical verification (formal methods): Combining precondition/effect LLM outputs with symbolic verification to ensure procedural safety and detect contradictions before execution

- Potential tools/products/workflows: LLM-to-formal verification bridges; contradiction detectors in SOPs; counterfactual simulators

- Assumptions/Dependencies: High-precision extraction; formal semantics for actions; certified toolchains; human oversight

Cross-cutting assumptions and dependencies

- Domain adaptation: High-quality, domain-specific precondition/effect corpora (preferably human-verified) are necessary to move beyond cooking into safety-critical areas.

- Model access and orchestration: Availability of capable LLMs (for inference and semantic matching) and pipelines to orchestrate “precondition model + effect model + semantic matcher.”

- State representation: Clear, structured mappings between natural-language states and operational/system states; multimodal grounding (sensors, logs) for real-world deployments.

- Verification and governance: Human-in-the-loop review, bias detection in synthetic data, and formal verification where risk is high.

- Scalability: Efficient data generation (global-local prompting), evaluation frameworks, and integration with existing tools (EHR, CI/CD, digital twin platforms).

Glossary

- Ablation study: An experimental procedure where specific components or steps are removed to measure their impact on performance. "We perform similar ablation studies as subsection 4.2 on Ablation-Local and Ablation-Global, and the results demonstrate that both global and local step discard are necessary and beneficial for establishing a capable world model."

- AdamW: An optimizer for training neural networks that decouples weight decay from the gradient update to improve generalization. "using AdamW (Loshchilov and Hutter, 2019) with the default learning rate linearly decaying from 5E-5."

- Action chaining: A phenomenon where actions depend on each other such that preconditions and effects link across steps, enabling multi-step plans. "We call this phenomenon of dependency among actions as action chaining."

- BLEU (BLEU-2/BLEU-3): An n-gram overlap metric for evaluating the similarity between generated text and reference text; BLEU-2/3 consider 2- or 3-grams. "BLEU score (Papineni et al., 2002) (BLEU-2 and BLEU-3)"

- Causal reasoning: The process of inferring and reasoning about cause-and-effect relationships. "Improvements in world modeling have led to advances in causal reasoning (Yu et al., 2023; Richens and Everitt, 2024)"

- Corpus refactoring: Reorganizing or transforming a dataset into formats suitable for specific tasks or evaluations. "we perform a corpus refactoring to adapt the data into appropriate input-output formats for the two prediction tasks."

- Few-shot annotated examples: A small number of labeled examples used to guide or condition a model during prompting or training. "Prompt GPT-4 with few-shot annotated examples to selectively discard the action steps..."

- Fleiss's kappa: A statistical measure of agreement for categorical ratings among multiple annotators. "we measure the average inter-annotator agreement using Fleiss's kappa (Fleiss, 1971)."

- FLAN-T5: An instruction-tuned variant of the T5 LLM family designed for better generalization on prompted tasks. "We train two FLAN-T5 models (Chung et al., 2024), flan-t5-large, on our action precondition and effect corpus"

- Global-local prompting: A multi-step prompting strategy that uses both step-level (local) and plan-level (global) filtering to generate high-quality, interdependent action preconditions and effects. "We propose a global-local prompting technique to induce high-quality action preconditions and effects with significant action chaining."

- Greedy decoding: A decoding strategy that selects the most probable next token at each step without lookahead. "During inference, we perform greedy decoding."

- Holdout test set: A subset of data reserved from training, used only for evaluating model performance. "We maintain a holdout test set which is composed of 200 action plans with a series of action steps."

- Hugging Face Transformers: A popular open-source library for training and deploying transformer-based NLP models. "We use Hugging Face Transformers (Wolf et al., 2020) during implementation."

- Institutional Review Board (IRB): A committee that reviews and approves research involving human participants to ensure ethical standards. "These human evaluations have been approved by our institution's Institutional Review Board (IRB)."

- Inter-annotator agreement: A measure of consistency among multiple human annotators’ judgments. "we measure the average inter-annotator agreement using Fleiss's kappa (Fleiss, 1971)."

- Model-based planning: Planning methods that use an explicit model of environment dynamics to simulate and choose actions. "For model-based planning, Guan et al. (2023) argue that LLMs themselves are not sufficient to tackle difficult planning problems"

- Monte Carlo Tree Search: A heuristic search algorithm that builds and explores a tree of possible actions using random sampling and value estimates. "and applies Monte Carlo Tree Search to build a reasoning tree that is explored iteratively to provide reasoning guidance."

- Precondition/effect corpus: A dataset composed of actions paired with their required preconditions and resulting effects. "we design a technique to create a synthetic precondition/effect corpus from the LLM that is essential for building a world model;"

- Precondition/Effect Inference Module: The component that predicts an action’s required preconditions and its effects in natural language. "Specifically, our world model consists of two main sub-modules: a precondition/effect inference module (§3.1) and a semantic matching module (§3.2)."

- Reinforcement learning (RL): A learning paradigm where agents learn to act by receiving rewards from interactions with an environment. "World modeling has emerged in reinforcement learning as an effective way to learn both the transition function and policy for a given RL task (Ha and Schmidhuber, 2018)."

- ROUGE-L: A text evaluation metric based on the longest common subsequence between generated and reference texts. "ROUGE score (Lin, 2004) (ROUGE-L)"

- Sample efficiency: The ability of a learning approach to achieve high performance with relatively few training samples. "in order to improve sample efficiency of RL agents."

- Semantic matching module: The component that semantically aligns inferred preconditions/effects with the current world state to check applicability and update states. "a semantic matching module (§3.2)."

- Self-supervised world models: World models trained without explicit labels by predicting aspects of future states or observations. "demonstrate that self-supervised world models can be used to help RL agents more efficiently explore environments via planning"

- Sentence Mover's Similarity (SMS): A similarity metric that measures semantic closeness between multi-sentence texts using word or sentence embeddings. "sentence mover's similarity (Clark et al., 2019) (SMS)."

- State addition and deletion: The process of updating a world state by adding effects and removing contradicted elements after an action. "by combining the state addition and deletion as the final prediction"

- State-space models: Mathematical models that represent systems in terms of state variables and their transitions over time. "by integrating state-space models into the DreamerV3 world model to capture and maintain long-range relational knowledge."

- State transition prediction: Predicting the next world state after applying a valid action to the current state. "State transition prediction means: given a world state at a certain time point and a valid action that has been taken, predicting what the new world state is,"

- Synthetic data generation: Creating artificial training data (e.g., via LLMs) to augment or replace manually annotated datasets. "while leveraging synthetic data generation techniques."

- Token-level F1 score: The harmonic mean of precision and recall computed over tokens to evaluate overlap with reference text. "token-level F1 score (Tjong Kim Sang and De Meulder, 2003) (F1)"

- Transition model P(s'|s, a): A function that specifies the probability of transitioning to state s' given state s and action a. "A world model can also be referred to as a transition model, P(s'|s, a)"

- Valid action prediction: Determining whether an action’s preconditions are satisfied in the current state so the action can be executed. "Valid action prediction means: given a world state at a certain time point, predicting which actions are valid to be taken."

- Weak supervision: Training with limited, noisy, or indirect labels instead of fully labeled datasets. "introduces an approach that leverages weak supervision and contextual information to improve action condition inference."

- World model: A model of environment dynamics that predicts how actions change the state of the world. "World models, which encapsulate the dynamics of how actions affect environments, are foundational to the functioning of intelligent agents."
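The token-level F1 entry in the glossary can be made concrete with a short sketch. The source does not specify the paper's tokenization, so lowercasing and whitespace splitting are assumptions here, and `token_f1` is a hypothetical helper name.

```python
from collections import Counter


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall.
    Tokenization (lowercase + whitespace split) is an assumption."""
    pred = prediction.lower().split()
    ref = reference.lower().split()
    if not pred or not ref:
        # Both empty counts as a perfect match; one empty counts as a miss.
        return float(pred == ref)
    # Multiset intersection counts each shared token at most as often
    # as it appears in both texts.
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


score = token_f1("the water is boiling", "water is boiling")
print(round(score, 3))
```

In the usage line, three of the four predicted tokens appear in the reference (precision 0.75) and all three reference tokens are covered (recall 1.0), which gives an F1 of 6/7. Because the score depends on surface tokens, two differently worded but equivalent facts can score low, which is consistent with the summary's note that textual metrics may not reflect the correctness of state transitions.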
