- The paper demonstrates a novel application of YOLO models for accurate bee detection, achieving up to 85.6% mAP with YOLOv5m.

- It introduces a comprehensive dataset of 9,664 annotated images and applies rigorous preprocessing to optimize model performance.

- An explainable AI interface translates detection events into actionable reports, enabling real-time decision-making for non-technical stakeholders.

Enhancing Pollinator Conservation towards Agriculture 4.0: Monitoring of Bees through Object Recognition

Introduction

The paper "Enhancing Pollinator Conservation towards Agriculture 4.0: Monitoring of Bees through Object Recognition" addresses a significant environmental challenge: the decline of pollinators, particularly bees, and its implications for global food security. The research uses computer vision and object recognition to track and report bee behavior autonomously, applying state-of-the-art models such as YOLOv5 to support conservation efforts aligned with Agriculture 4.0. The authors cover the full pipeline, from data collection to the deployment of a user-friendly interface for non-technical users, bridging sophisticated AI models and practical apiculture applications.

Dataset and Preprocessing

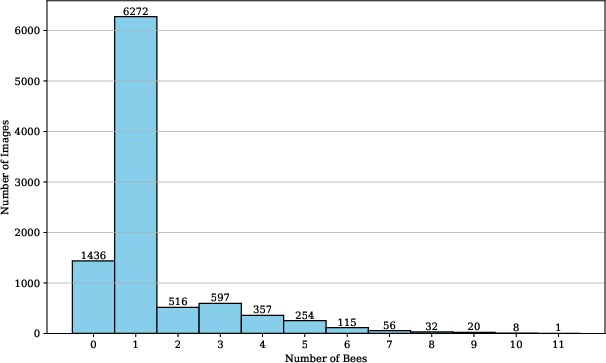

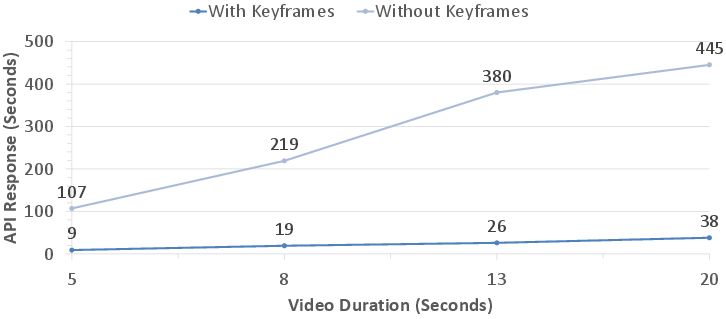

The research introduces a novel dataset specifically crafted for bee detection and tracking. This dataset comprises 9,664 images, each annotated with bounding boxes to identify individual bees. The distribution of bee counts per image (Figure 1) demonstrates that most images contain a single bee, which aligns well with typical field observations. The data preprocessing involved resizing the images to 416x416 pixels, suitable for YOLO model architectures, ensuring compatibility while maintaining a balance between computational efficiency and recognition accuracy.

Figure 1: Presence of the number of bees per image within the dataset.
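Resizing images to 416x416 also means the bounding-box annotations have to be rescaled with them. The following is a minimal sketch of that bookkeeping, not the authors' code. It assumes a plain stretch to the target size (the summary does not say whether the images were stretched or letterboxed) and boxes given in pixel coordinates. The names `resize_boxes` and `to_yolo` are hypothetical.

```python
from typing import List, Tuple

# A box in pixel coordinates: (x_min, y_min, x_max, y_max).
Box = Tuple[float, float, float, float]


def resize_boxes(
    boxes: List[Box],
    src_size: Tuple[int, int],
    dst_size: Tuple[int, int] = (416, 416),
) -> List[Box]:
    """Rescale boxes from a (width, height) source image to the target size.

    Assumes the image is stretched to dst_size without padding, so the
    x and y axes may be scaled by different factors.
    """
    src_w, src_h = src_size
    dst_w, dst_h = dst_size
    sx, sy = dst_w / src_w, dst_h / src_h
    return [(x0 * sx, y0 * sy, x1 * sx, y1 * sy) for x0, y0, x1, y1 in boxes]


def to_yolo(box: Box, img_size: Tuple[int, int]) -> Tuple[float, float, float, float]:
    """Convert a pixel box to the normalized (x_center, y_center, width, height)
    layout commonly used for YOLO label files."""
    w, h = img_size
    x0, y0, x1, y1 = box
    return ((x0 + x1) / 2 / w, (y0 + y1) / 2 / h, (x1 - x0) / w, (y1 - y0) / h)
```

As an illustration, a box at (100, 50, 300, 250) in an 832x624 image maps to roughly (50, 33.3, 150, 166.7) after resizing, because the horizontal scale is 0.5 and the vertical scale is about 0.667.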

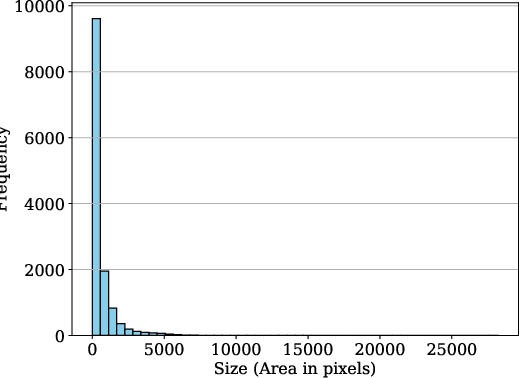

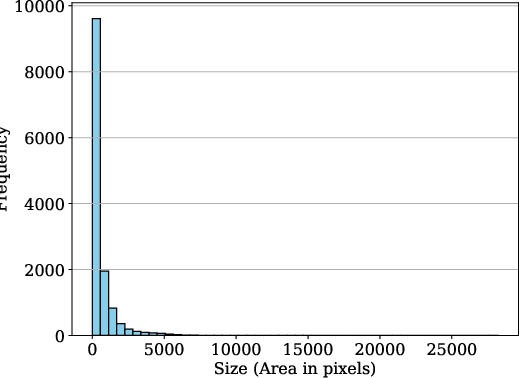

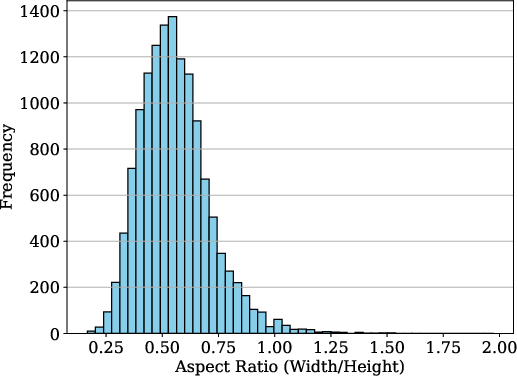

The diversity in annotation sizes and aspect ratios (Figure 2) ensures that the dataset encompasses a wide variety of bee positions and orientations, contributing to the robustness of the detection models. This dataset is a significant resource released to the research community, supporting further explorations into pollinator conservation.

Figure 2: Distribution of Annotation Sizes.
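Distributions like those in Figures 1 and 2 can be derived directly from the annotation files. The sketch below assumes YOLO-style labels, where each box is stored as a class id followed by a normalized center, width, and height; the summary does not state the dataset's actual label format. The helper names `bees_per_image` and `box_shapes` are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Tuple

# One label: (class_id, x_center, y_center, width, height),
# with everything except class_id normalized to the range [0, 1].
Label = Tuple[int, float, float, float, float]


def bees_per_image(dataset: Dict[str, List[Label]]) -> Counter:
    """Map 'number of annotated bees' to 'number of images with that count'."""
    return Counter(len(labels) for labels in dataset.values())


def box_shapes(
    labels: List[Label], img_size: Tuple[int, int] = (416, 416)
) -> List[Tuple[float, float]]:
    """Return (area as a fraction of the image, width/height aspect ratio)
    for each box, skipping degenerate boxes with zero width or height."""
    img_w, img_h = img_size
    shapes = []
    for _cls, _xc, _yc, w, h in labels:
        if w <= 0 or h <= 0:
            continue
        shapes.append((w * h, (w * img_w) / (h * img_h)))
    return shapes
```

Plotting the values returned by these helpers as histograms would give a rough counterpart to the two figures for any dataset in this layout.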

Object Detection Methodology

The study evaluates several object detection models, primarily within the YOLO family, to determine their efficacy in real-time bee detection. YOLOv5m emerged as the most accurate model with an mAP@0.5 of 85.6%, while YOLOv5s was best suited to real-time applications due to its lower inference time of 5.1 ms per frame. The models were compared on precision, recall, and mean average precision (mAP), highlighting YOLOv5m's superior accuracy, albeit with a marginal increase in inference time compared to the lighter YOLOv5s.
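To make the evaluation metrics concrete, the sketch below computes intersection over union (IoU) and then precision and recall for a single image. A prediction counts as correct when its IoU with an unmatched ground-truth box reaches 0.5, which is the usual convention for mAP reported at a 0.5 threshold. The greedy matching and the function names are illustrative assumptions, not the paper's evaluation code.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def precision_recall(
    preds: List[Tuple[Box, float]], truths: List[Box], iou_thr: float = 0.5
) -> Tuple[float, float]:
    """Greedily match predictions (highest confidence first) to ground truth.

    Each ground-truth box can be matched at most once; unmatched predictions
    are false positives and unmatched ground-truth boxes are false negatives.
    """
    matched = set()
    tp = 0
    for box, _score in sorted(preds, key=lambda p: p[1], reverse=True):
        best_j, best_iou = -1, 0.0
        for j, truth in enumerate(truths):
            if j in matched:
                continue
            value = iou(box, truth)
            if value > best_iou:
                best_j, best_iou = j, value
        if best_j >= 0 and best_iou >= iou_thr:
            matched.add(best_j)
            tp += 1
    precision = tp / len(preds) if preds else 0.0
    recall = tp / len(truths) if truths else 0.0
    return precision, recall
```

Average precision is the area under the precision-recall curve traced out as the confidence threshold varies, and mAP averages that value over classes. On the speed side, 5.1 ms per frame corresponds to roughly 196 frames per second (1000 / 5.1).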

Figure 3: Examples of annotated (yellow bounding boxes) and preprocessed images within the dataset, selected at random.

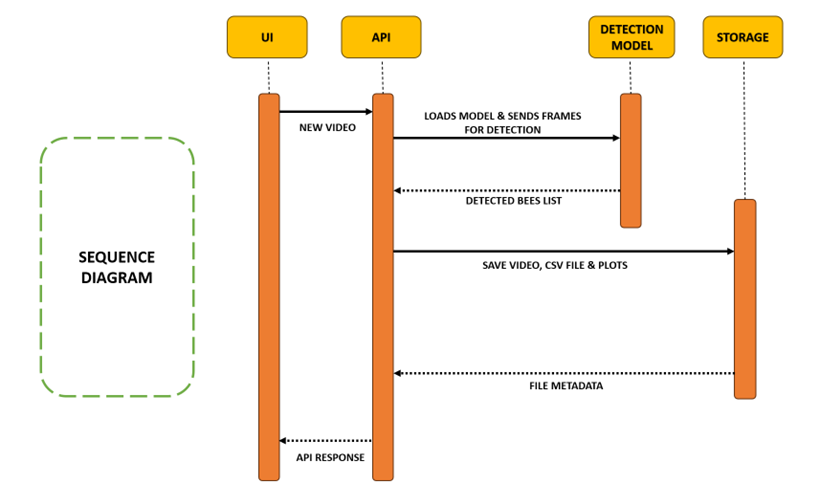

Implementation and Stakeholder Interaction

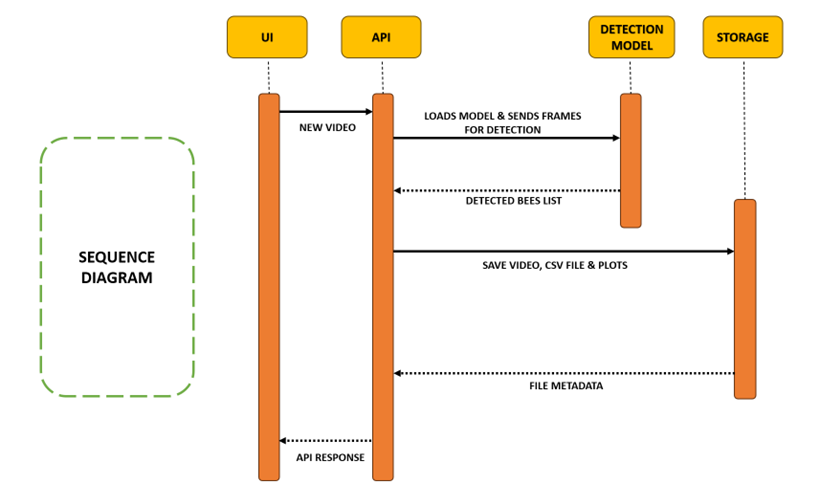

A significant component of the research is an explainable AI interface designed to convert detection events into timestamped reports and visual charts, effectively communicating complex information to non-technical stakeholders. This interface, depicted in Figure 4, democratizes access to advanced AI tools, allowing apiculture experts and farmers to utilize these insights without in-depth technical knowledge.

Figure 4: Graphical overview of the workflow for bee detection and timestamping.
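One way to picture the step from detection events to a timestamped report is the sketch below. It assumes each event is a (frame index, number of bees detected) pair from a video with a known start time and frame rate, and it groups events into fixed time buckets. The function name `build_report`, the field names, and the one-minute bucket are hypothetical choices, not details taken from the paper.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from typing import Dict, List, Tuple


def build_report(
    detections: List[Tuple[int, int]],
    start: datetime,
    fps: float,
    bucket_seconds: int = 60,
) -> List[Dict[str, object]]:
    """Aggregate (frame_index, bee_count) events into timestamped rows.

    The count in each row is the sum of per-frame detections in that time
    bucket, so a bee visible in several frames is counted several times.
    """
    buckets: Dict[datetime, int] = defaultdict(int)
    for frame_idx, count in detections:
        seconds = frame_idx / fps
        offset = int(seconds // bucket_seconds) * bucket_seconds
        buckets[start + timedelta(seconds=offset)] += count
    return [
        {"time": ts.isoformat(), "bee_detections": n}
        for ts, n in sorted(buckets.items())
    ]
```

Rows in this shape can be rendered as a table or fed to a charting library, which is the kind of output a non-technical user would read instead of raw model predictions.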

The study emphasizes the importance of integrating such AI systems into practical applications, in line with the principles of Agriculture 4.0, which rely on data-centric automation to make agricultural practices faster and more efficient.

Results and Discussion

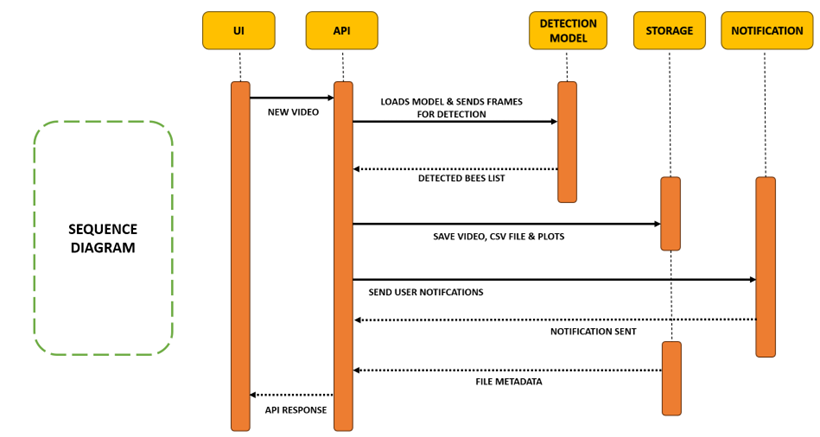

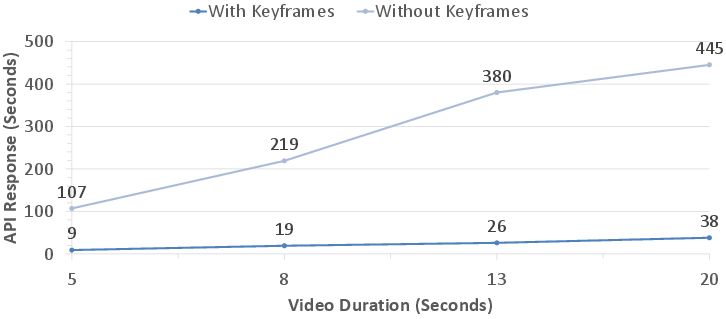

The models' performance metrics showed a clear trade-off between computational efficiency and detection accuracy. YOLOv5m's higher precision and recall made it suitable for in-depth analysis scenarios, while YOLOv5s offered the responsiveness required for real-time monitoring. The discussion also underlines the critical role of keyframe selection in reducing the computational load and improving response times (Figure 5).

Figure 5: Impact of keyframe selection on API Response.
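The summary does not describe how keyframes are chosen, so the following is only one plausible sketch of the idea: run detection on a frame only when it differs enough from the last frame that was kept. Frames are represented as flat lists of grayscale pixel values, and the function names and the threshold of 10 are assumptions made for illustration.

```python
from typing import List, Optional, Sequence


def mean_abs_diff(a: Sequence[int], b: Sequence[int]) -> float:
    """Mean absolute pixel difference between two equally sized frames."""
    if len(a) != len(b) or not a:
        raise ValueError("frames must be non-empty and of equal size")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def select_keyframes(
    frames: Sequence[Sequence[int]], threshold: float = 10.0
) -> List[int]:
    """Return indices of frames that differ from the previous keyframe
    by more than `threshold`; the first frame is always kept."""
    keyframes: List[int] = []
    last: Optional[Sequence[int]] = None
    for idx, frame in enumerate(frames):
        if last is None or mean_abs_diff(frame, last) > threshold:
            keyframes.append(idx)
            last = frame
    return keyframes
```

Only the selected indices would be sent to the detector, so a mostly static scene produces far fewer inference calls than processing every frame. A fixed stride (every Nth frame) is a simpler alternative with the same goal.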

Future Directions

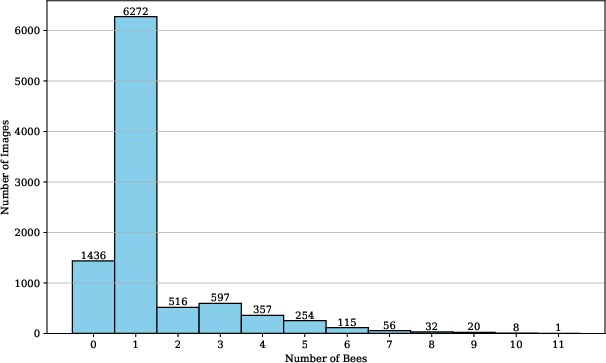

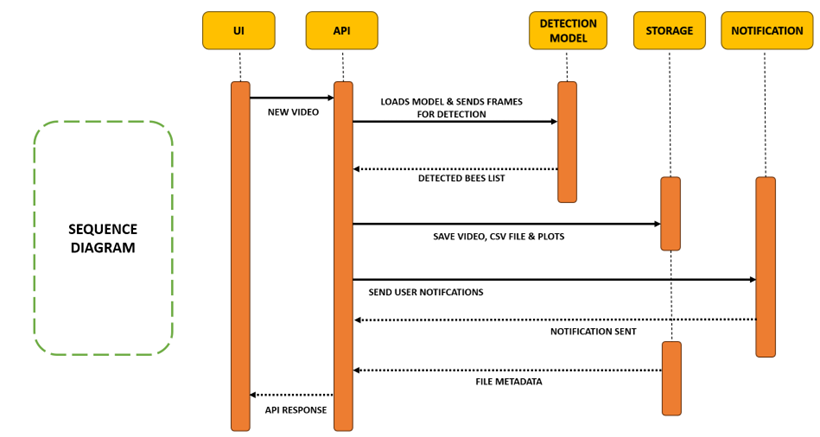

The paper proposes expanding the framework to support additional recognition tasks, such as identifying specific bee behaviors or differentiating species, which could broaden the utility of AI in environmental monitoring. Figure 6 illustrates a future pipeline that involves cloud-based data processing and real-time notifications, a step towards more integrated and scalable conservation strategies.

Figure 6: Proposed future pipeline for improved use in industry.

Conclusion

This research sets a precedent for using AI in pollinator conservation, providing a template for integrating technology into ecological studies. By releasing the dataset and models as open-source resources, the paper encourages broader participation in environmental monitoring initiatives. The findings emphasize the viability of deploying AI solutions in agricultural contexts, particularly for conservation efforts critical to food security and ecosystem sustainability.