- The paper introduces a framework that pairs generative AI with rejection sampling, a technique borrowed from reinforcement learning, to boost user engagement.

- It employs a reward model to select email subject lines that better align with user feedback, addressing common content quality issues.

- Online experiments show measurable improvements, including a 1% increase in sessions and a 0.4% increase in weekly active users.

"Let AI Entertain You: Increasing User Engagement with Generative AI and Rejection Sampling" (2312.12457)

Introduction

This paper describes a framework that uses generative AI to increase user engagement in social networks by applying rejection sampling, a technique from reinforcement learning. It addresses two limitations of current generative AI models: they often fail to incorporate user feedback, and their output tends to lack the authenticity of human-generated content. The framework is applied to the generation of email subject lines, where it achieves measurable improvements in engagement metrics: sessions (+1%) and weekly active users (+0.4%).

Challenges in Generative AI

Generative AI models, especially LLMs, are adept at creating text and media content, yet they are not designed to maximize user interactions. Two primary challenges limit engagement:

- Lack of User Feedback Integration: Generative models often produce content without considering explicit or implicit user interactions, failing to boost activities such as clicks effectively.

- Content Quality Issues: LLMs often generate content that is generic and lacks the distinctive quality and trustworthiness of human-generated content, sometimes generating fabricated information (hallucinations).

Proposed Framework

The paper introduces a method that combines LLMs with a reward model to enhance content engagement by applying rejection sampling. This method guides LLMs to generate content that better aligns with user preferences, and was implemented specifically for generating engaging email subject lines for Nextdoor, a social networking service.

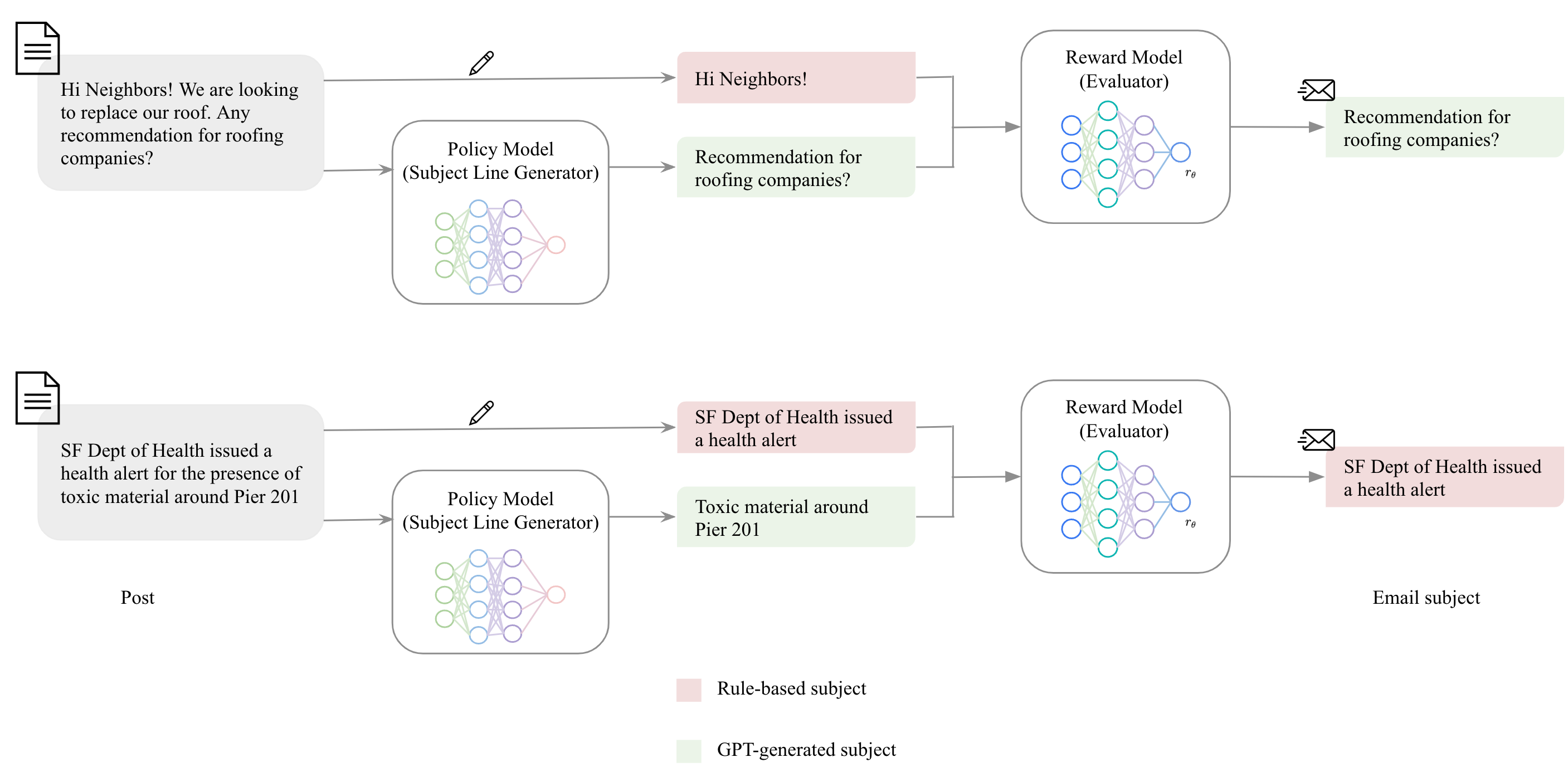

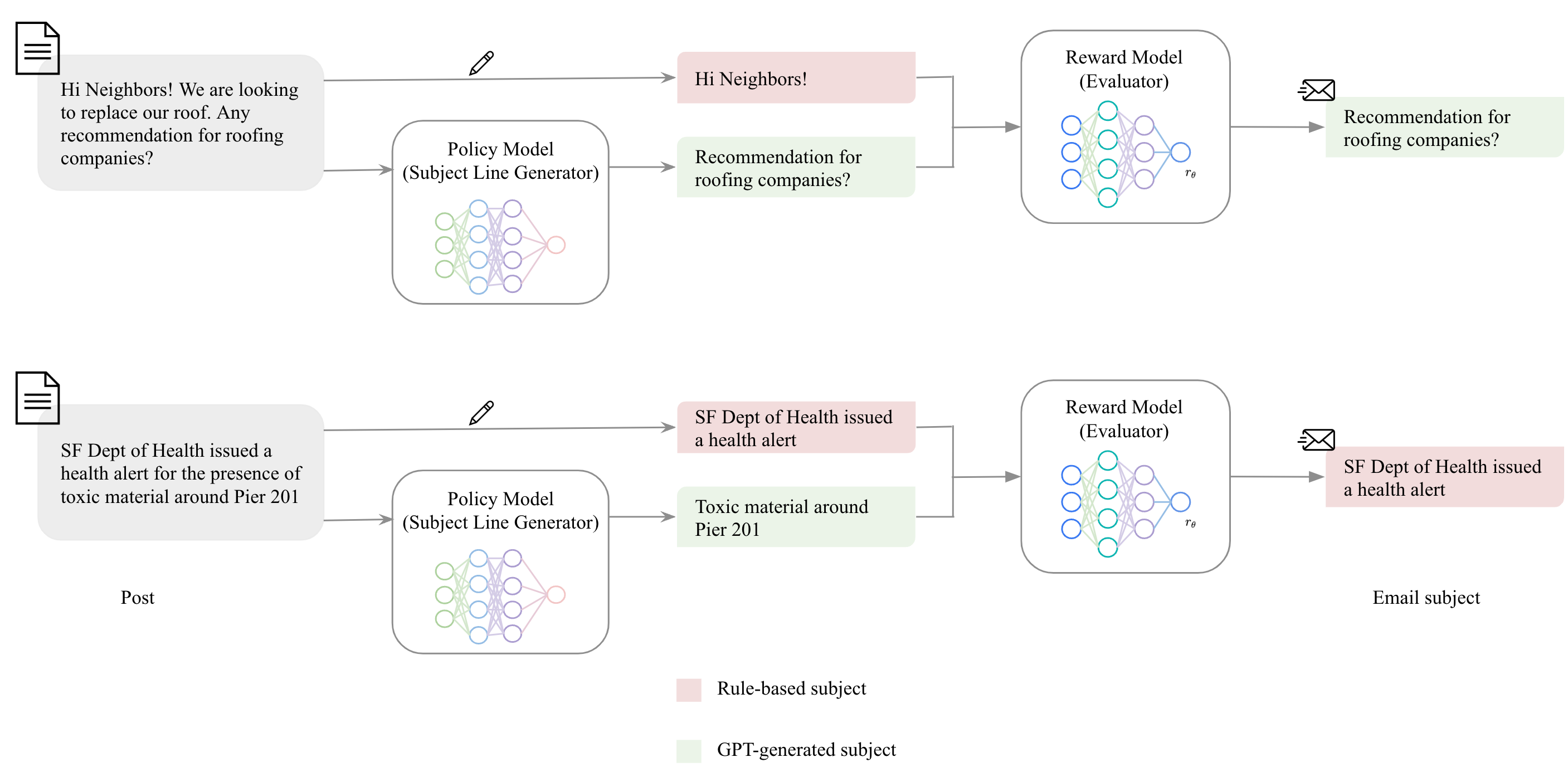

Figure 1: An Overview of the Framework Used in Email Subject Line Generation.

Components

- Generative AI (GPT): Employed for creating multiple candidate subject lines.

- Reward Model: Utilized to evaluate the engagement potential of each generated line, selecting the most promising variant through rejection sampling.
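
The interaction between the two components can be sketched as a best-of-N selection loop. This is a minimal illustration, not the paper's implementation: `best_of_n`, `toy_generate`, and `toy_reward` are hypothetical names, and the toy generator and reward function stand in for the GPT call and the trained reward model.

```python
from typing import Callable, List, Tuple


def best_of_n(
    post: str,
    generate: Callable[[str, int], List[str]],
    reward: Callable[[str, str], float],
    n: int = 4,
) -> Tuple[str, float]:
    """Generate n candidates, score each one, and keep only the top scorer."""
    candidates = generate(post, n)
    if not candidates:
        raise ValueError("generator returned no candidates")
    scored = [(reward(post, c), c) for c in candidates]
    # All candidates except the highest-scoring one are rejected.
    best_score, best = max(scored, key=lambda pair: pair[0])
    return best, best_score


# Toy stand-ins (assumptions): a real system would call an LLM and a
# trained reward model here.
def toy_generate(post: str, n: int) -> List[str]:
    words = post.split()
    return [" ".join(words[:k]) for k in range(1, n + 1)]


def toy_reward(post: str, subject: str) -> float:
    # Pretend users prefer subject lines of about three words.
    return -abs(len(subject.split()) - 3)
```

Passing the generator and reward model in as callables keeps the selection logic independent of any particular model API.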

Implementation

The implementation centers on generating email subject lines to improve engagement on Nextdoor. Adding a reward model to the pipeline lets the system select subject lines that better reflect user preferences while avoiding common issues such as hallucinations or a promotional tone.

Key methodologies include:

- In-Context Learning and Few-Shot Prompts: Utilize tailor-made prompts to generate initial text with GPT models, minimizing unwanted stylistic choices and improving authenticity.

- Supervised Fine-Tuning (SFT) on User Preferences: Employ user-feedback-focused training to align the generative model's outputs with actual user preferences, honing the engagement potential of LLM-generated content.
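
As a rough illustration of the few-shot prompting step, the sketch below assembles a prompt from example (post, subject line) pairs. The function name, the instruction wording, and the word limit are all assumptions made for illustration; the paper's actual prompt is not reproduced here.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    post: str,
    examples: List[Tuple[str, str]],
    max_words: int = 10,
) -> str:
    """Assemble a few-shot prompt from (post, subject line) example pairs."""
    lines = [
        "Write an email subject line for the post below.",
        # Assumed constraints aimed at reducing hallucination and promotional tone.
        f"Use at most {max_words} words, stay close to the post's own wording, "
        "and do not add facts or marketing language.",
        "",
    ]
    for example_post, example_subject in examples:
        lines += [f"Post: {example_post}", f"Subject: {example_subject}", ""]
    # The final entry is left open for the model to complete.
    lines += [f"Post: {post}", "Subject:"]
    return "\n".join(lines)
```

The resulting string would be sent to the generative model, and the text it produces after the final `Subject:` becomes one candidate subject line.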

Deployment and Monitoring

Key deployment strategies include caching to reduce cost and stay within rate limits, daily monitoring of model performance, and fallback strategies.
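
One way caching and fallback could fit together is sketched below. The class name, the retry policy, and the exponential backoff are assumptions for illustration rather than details taken from the paper; `generate` stands in for the model call and `fallback` for whatever default subject line the system uses.

```python
import time
from typing import Callable, Dict


class CachedSubjectLines:
    """Cache one generated subject line per post; fall back if generation fails."""

    def __init__(
        self,
        generate: Callable[[str], str],
        fallback: Callable[[str], str],
        max_retries: int = 2,
        backoff_seconds: float = 1.0,
    ) -> None:
        self.generate = generate
        self.fallback = fallback
        self.max_retries = max_retries
        self.backoff_seconds = backoff_seconds
        self._cache: Dict[str, str] = {}

    def get(self, post_id: str, post: str) -> str:
        # A cache hit avoids a repeat model call for the same post.
        if post_id in self._cache:
            return self._cache[post_id]
        for attempt in range(self.max_retries + 1):
            try:
                subject = self.generate(post)
            except Exception:
                # Assumed failure mode: a rate limit or transient API error.
                if attempt < self.max_retries:
                    time.sleep(self.backoff_seconds * (2 ** attempt))
                continue
            self._cache[post_id] = subject
            return subject
        # Every attempt failed: use the fallback and do not cache it,
        # so a later request can try generation again.
        return self.fallback(post)
```

Leaving fallback results out of the cache is a design choice in this sketch: it trades a few extra calls for the chance to serve a generated subject line once the service recovers.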

Experimental Results

Extensive A/B testing compared various versions of subject lines generated via rule-based, GPT-only, and GPT with reward model selection approaches:

- Engagement Metrics: The framework led to a 1% increase in sessions and a 0.4% increase in weekly active users.

- Comparative Evaluation: GPT-generated content improved user clicks significantly when coupled with the reward model's selection mechanism, demonstrating the efficacy of reinforcement learning principles applied to enhancing user engagement.
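
For readers unfamiliar with how such lifts are typically computed, the sketch below shows relative lift and a standard two-proportion z-test on click counts. The paper's own statistical procedure is not described in this summary, so the choice of test is an assumption and the function names are hypothetical.

```python
import math
from statistics import NormalDist
from typing import Tuple


def relative_lift(control_rate: float, treatment_rate: float) -> float:
    """Relative change of the treatment rate over the control rate."""
    return (treatment_rate - control_rate) / control_rate


def two_proportion_z_test(
    clicks_control: int,
    sends_control: int,
    clicks_treatment: int,
    sends_treatment: int,
) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for a difference in click-through rates."""
    p_control = clicks_control / sends_control
    p_treatment = clicks_treatment / sends_treatment
    # Pooled rate under the null hypothesis that both arms share one rate.
    pooled = (clicks_control + clicks_treatment) / (sends_control + sends_treatment)
    std_err = math.sqrt(
        pooled * (1 - pooled) * (1 / sends_control + 1 / sends_treatment)
    )
    z = (p_treatment - p_control) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value
```

A reported "+1%" corresponds to `relative_lift` returning 0.01; whether such a lift is statistically distinguishable from noise depends on the sample sizes in each arm.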

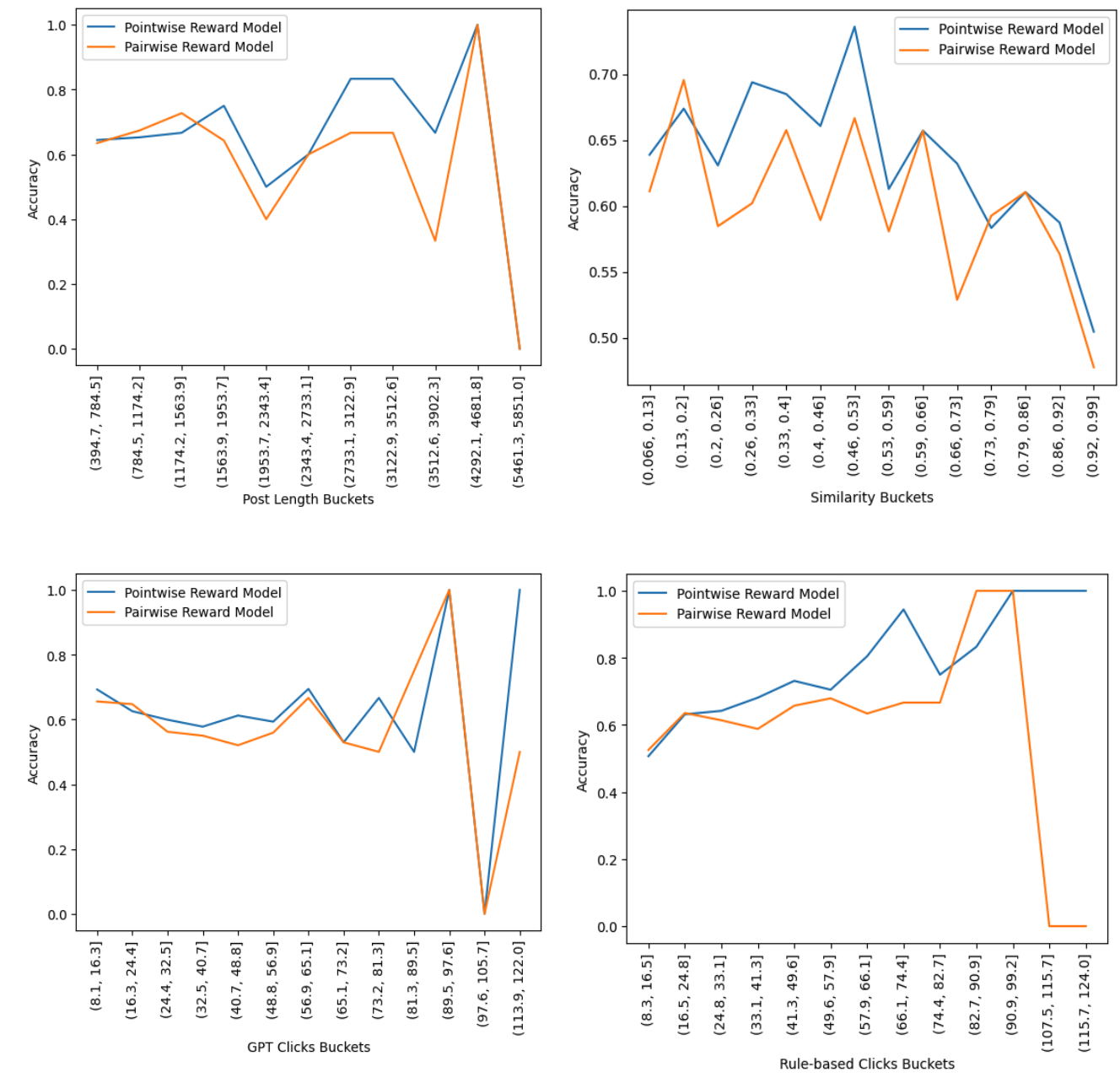

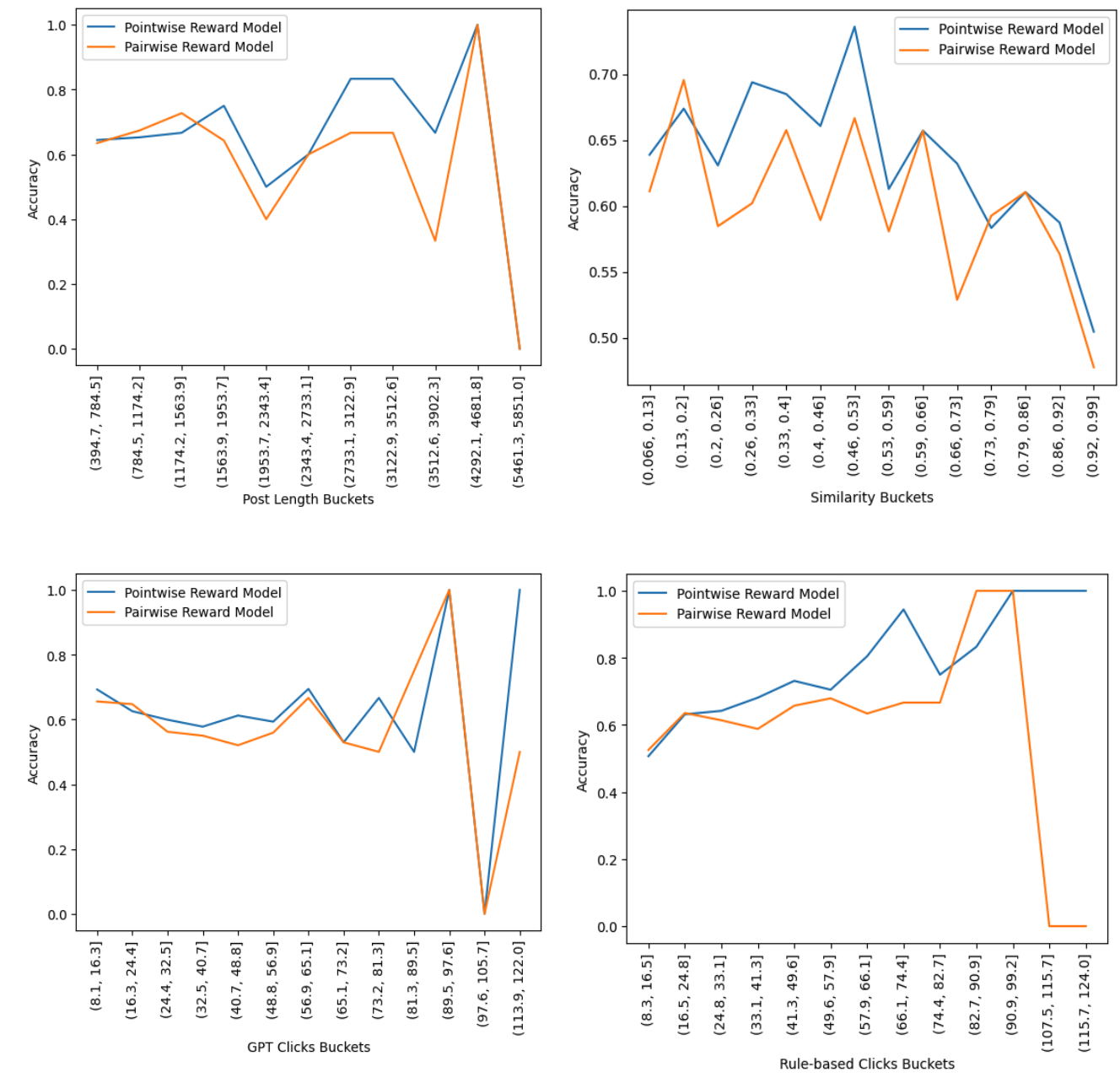

Figure 2: Accuracy Bucketed by Different Dimensions of Similarity, Post Length, Number of Rule-based Clicks, and Number of GPT Clicks.

Conclusion

This study provides evidence that a rejection sampling framework rooted in reinforcement learning is a practical way to improve user engagement with generative AI. The approach makes AI-generated content more appealing by grounding it in user feedback, and it lays the groundwork for future improvements in personalized content generation. The authors suggest future work on further fine-tuning the generative model and on enhancing real-time personalization.