- The paper introduces a novel paradigm for evaluating and governing AI agent behaviors beyond traditional model-centric approaches.

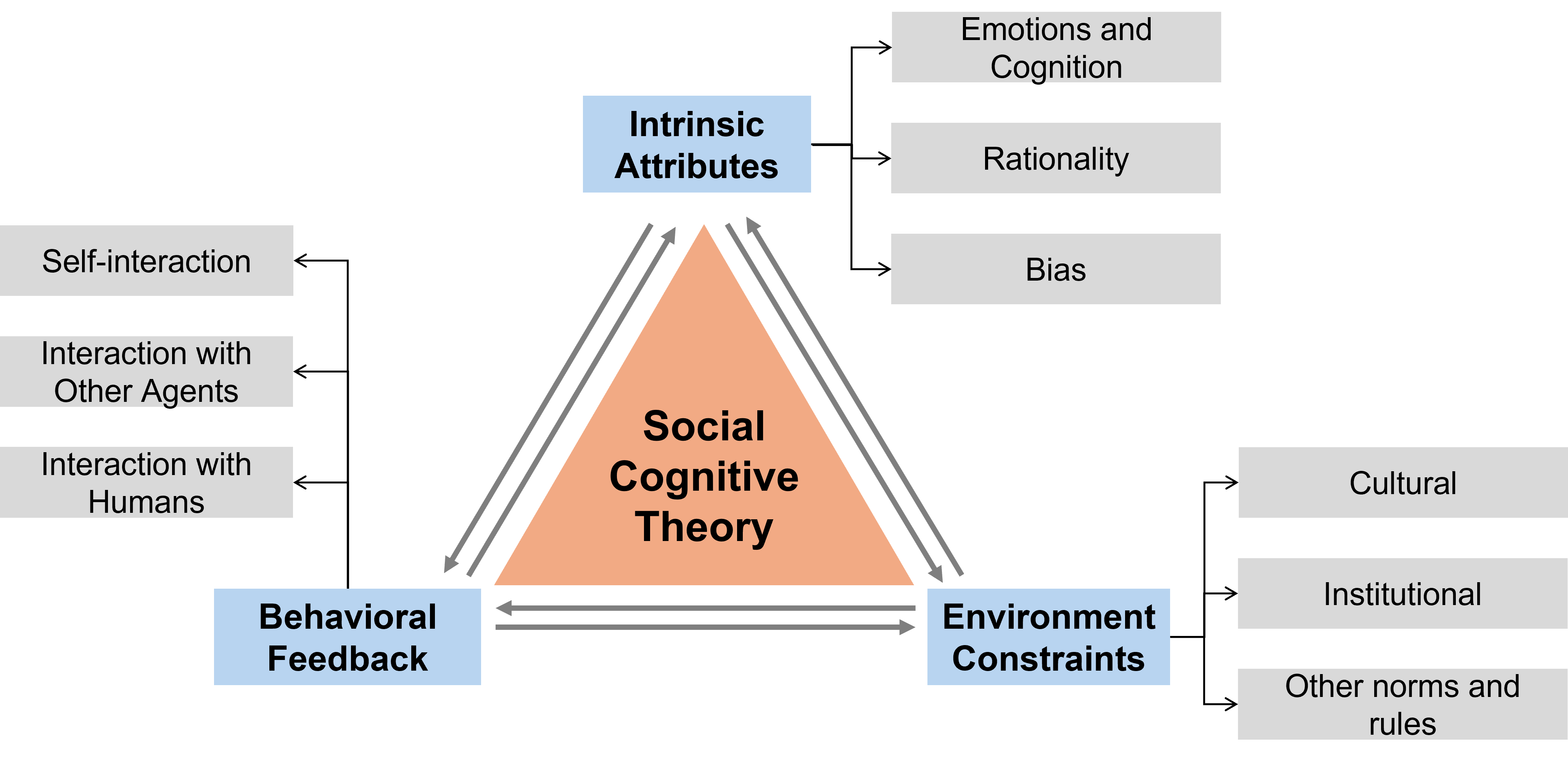

- It employs a social cognitive perspective to analyze intrinsic attributes, environmental constraints, and behavioral feedback in both individual and multi-agent contexts.

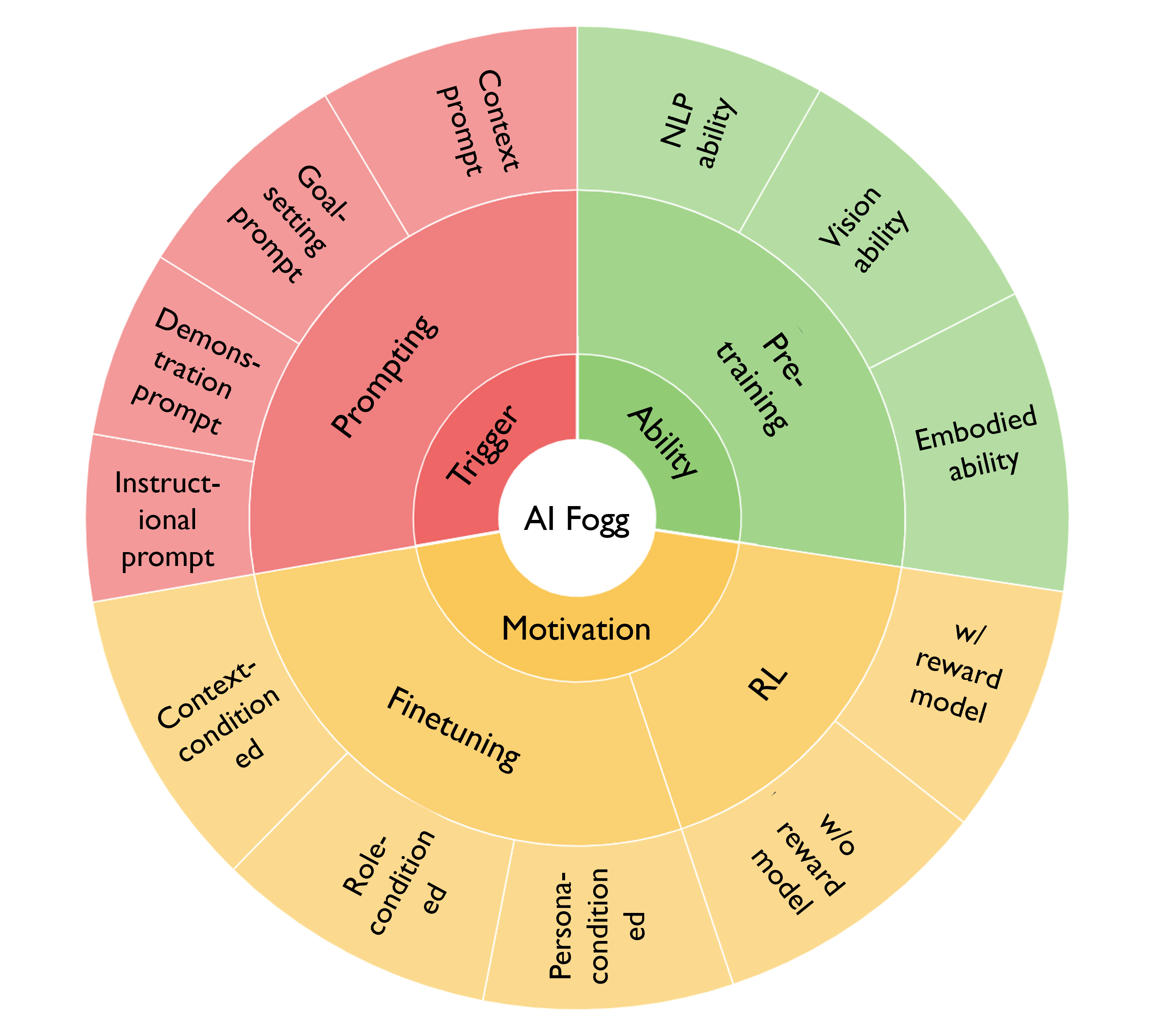

- The study applies frameworks like the Fogg Behavior Model to adapt AI actions, emphasizing fairness, safety, and transparency for responsible AI deployment.

AI Agent Behavioral Science

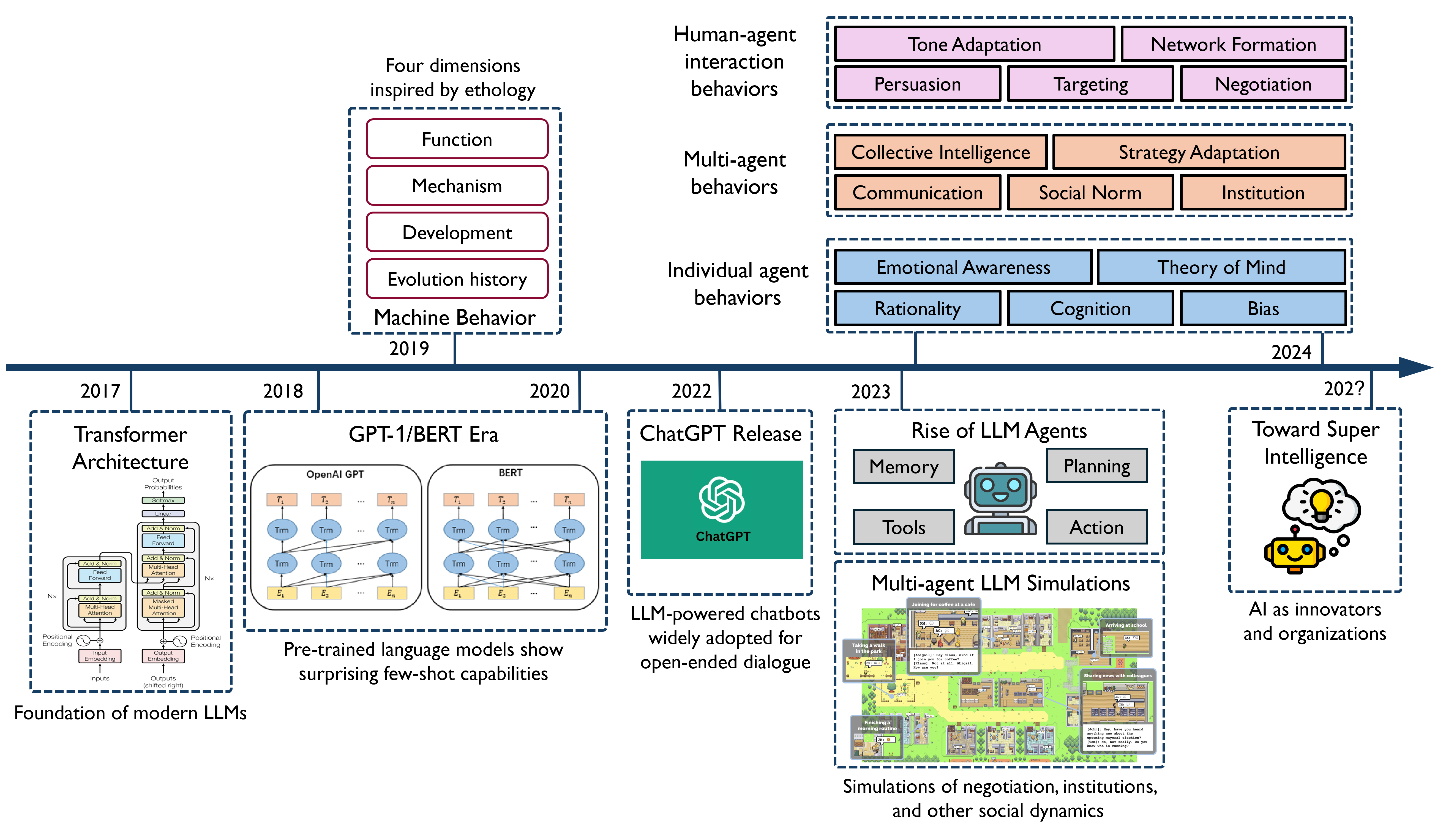

The paper "AI Agent Behavioral Science" explores the emerging scientific field dedicated to understanding, evaluating, and governing the behavior of AI agents. This new paradigm extends beyond traditional model-centric approaches by emphasizing how AI systems act, adapt, and interact within specific contexts. This essay provides a detailed exploration of the paper, focusing on its main contributions, insights into emergent behaviors, adaptation frameworks, and implications for responsible AI.

Introduction to AI Agent Behavioral Science

Recent advancements in large language models (LLMs) have revolutionized the capabilities of AI systems, enabling them not only to perform static prediction tasks but also to exhibit dynamic behaviors such as planning, adaptation, and social interaction. The integration of these models into agentic systems (systems that can understand and react to feedback, goals, and social dynamics) has highlighted the need for AI Agent Behavioral Science. This new field aims to systematically observe AI behavior, design interventions, and develop theories that explain how AI agents operate over time.

Traditional approaches to AI focus on internal mechanisms such as architectures and training objectives, and they assume that behavior can be explained purely from internal computations. In contrast, AI Agent Behavioral Science views AI agents as dynamic entities whose actions are shaped by situated interactions within their environments. This shift in perspective is crucial for understanding complex behaviors like negotiation and deception, which emerge not from model capabilities alone but from the deployment of models in interactive settings.

Emergent Individual AI Agent Behaviors

The emergent behaviors of individual AI agents are analyzed using a social cognitive perspective, which categorizes influencing factors into intrinsic attributes, environmental constraints, and behavioral feedback.

Emergent Multi-agent Behaviors

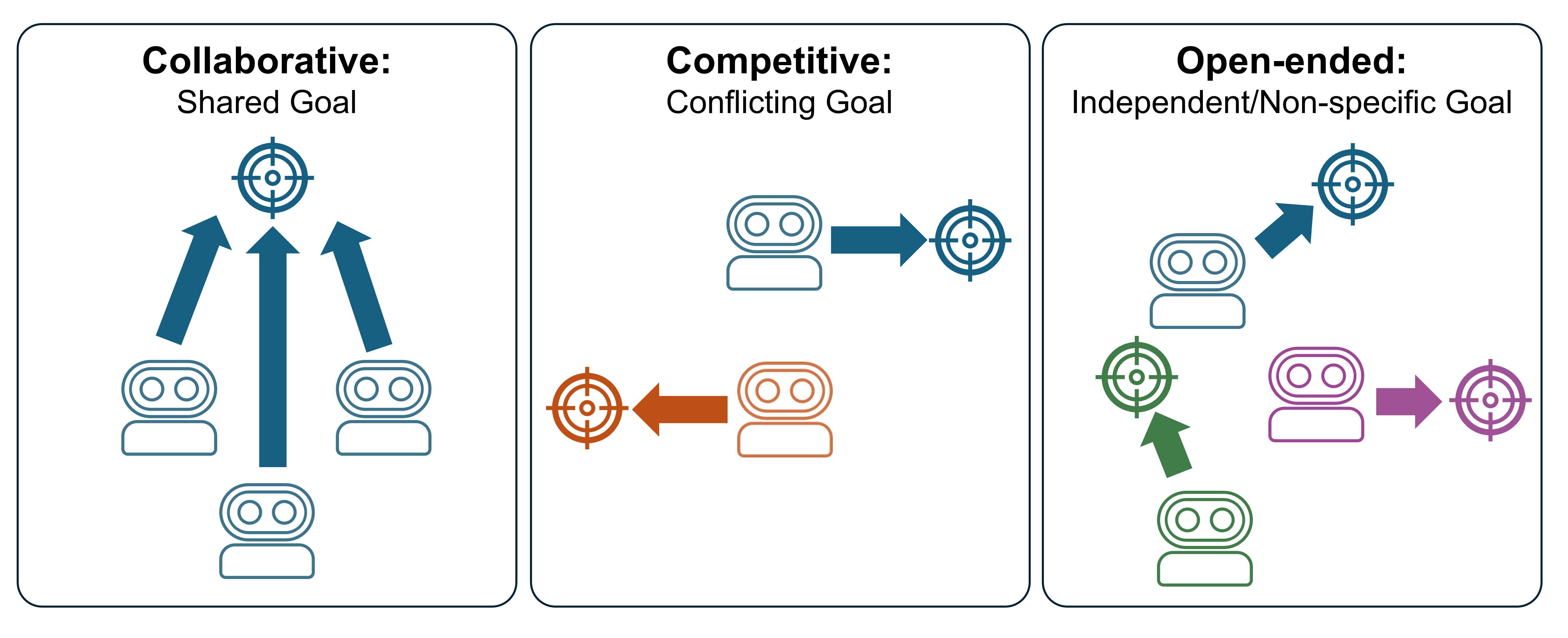

The paper examines how AI agents interact in multi-agent scenarios, highlighting cooperation, competition, and emergent open-ended interactions.

Emergent AI Agent Behaviors in Human-Agent Interaction

The behaviors that emerge in human-agent interaction depend on the role the agent plays: companion, catalyst, or clarifier in cooperative contexts, and contender or manipulator in rivalrous settings.

- Cooperative Roles: AI agents can act as companions fostering social attunement, catalysts stimulating idea generation, or clarifiers supporting decision-making. LLMs have demonstrated effective cooperation in tasks requiring emotional and motivational alignment with humans (Wu et al., 2024).

- Rivalrous Roles: In competitive or adversarial scenarios, agents engage in strategic opposition or manipulation. These behaviors have been studied in negotiations and influence operations, showcasing the capacity of LLMs to engage in complex strategic decision-making (Sel et al., 2024).

AI Agent Behavior Adaptation

The paper frames the adaptation of AI agent behavior through the Fogg Behavior Model, which decomposes behavior into three components: ability, motivation, and trigger. Viewing adaptation through these components is intended to make agent actions more human-aligned and interpretable.
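The three-component decomposition can be illustrated with a small sketch. The Fogg Behavior Model is qualitative, so everything numeric here is an assumption made for illustration and is not taken from the paper: the `AgentState` class, the scores normalized to [0, 1], the product `motivation * ability`, and the `threshold` value are all hypothetical. The sketch only captures the core idea that a behavior occurs when a trigger arrives while motivation and ability are jointly sufficient.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentState:
    """Hypothetical snapshot of an agent, with scores normalized to [0, 1]."""
    motivation: float  # how strongly the agent is driven toward the behavior
    ability: float     # how capable the agent is of performing the behavior
    triggered: bool    # whether a trigger (cue) is present at this moment


def behavior_occurs(state: AgentState, threshold: float = 0.25) -> bool:
    """Return True when a trigger arrives and motivation * ability clears the threshold.

    The product form and the default threshold are illustrative assumptions,
    not part of the Fogg Behavior Model itself or of the paper.
    """
    for name in ("motivation", "ability"):
        value = getattr(state, name)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    if not state.triggered:
        # Without a trigger, no behavior occurs regardless of the other components.
        return False
    return state.motivation * state.ability >= threshold


if __name__ == "__main__":
    print(behavior_occurs(AgentState(0.9, 0.9, triggered=True)))   # True
    print(behavior_occurs(AgentState(0.9, 0.9, triggered=False)))  # False
    print(behavior_occurs(AgentState(0.9, 0.1, triggered=True)))   # False
```

Under this reading, each component is a separate lever: raising ability, raising motivation, or supplying a trigger can each change whether the behavior occurs, which is what makes the decomposition useful for reasoning about adaptation.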

AI Agent Behavioral Science for Responsible AI

The paper treats behavioral science principles as integral to responsible AI, with a focus on fairness, safety, interpretability, accountability, and privacy.

Conclusion

The paradigm of AI Agent Behavioral Science offers a comprehensive framework for understanding and guiding the behavior of AI systems. By integrating insights from behavioral science, this approach improves the ability to evaluate, adapt, and govern AI agents in a manner that aligns with human and societal values. Future research directions include refining behavioral adaptation models, exploring scalable evaluation frameworks, and further embedding ethical principles in AI design, so that AI agents become integrated into our lives responsibly and transparently.