- The paper demonstrates that The AI Scientist-v2 can autonomously generate a manuscript that meets the acceptance criteria of a peer-reviewed workshop, without relying on human-coded templates.

- It employs a parallelized agentic tree search and a four-stage process to systematically explore hypotheses, tune hyperparameters, and conduct ablation studies.

- In the evaluation, one of three generated manuscripts achieved an average reviewer score of 6.33, placing it in the top 45% of submissions and highlighting AI's research potential.

AI-Driven Scientific Discovery: The AI Scientist-v2

The paper "The AI Scientist-v2: Workshop-Level Automated Scientific Discovery via Agentic Tree Search" (arXiv:2504.08066) introduces an enhanced AI system for automating scientific discovery that goes beyond the capabilities of its predecessor, The AI Scientist-v1. The updated system emphasizes increased autonomy, the elimination of human-coded templates, and the integration of a Vision-Language Model (VLM) for figure refinement. The paper's primary contribution is demonstrating that The AI Scientist-v2 can autonomously generate a manuscript that meets the acceptance criteria of a peer-reviewed workshop.

Enhancements in The AI Scientist-v2

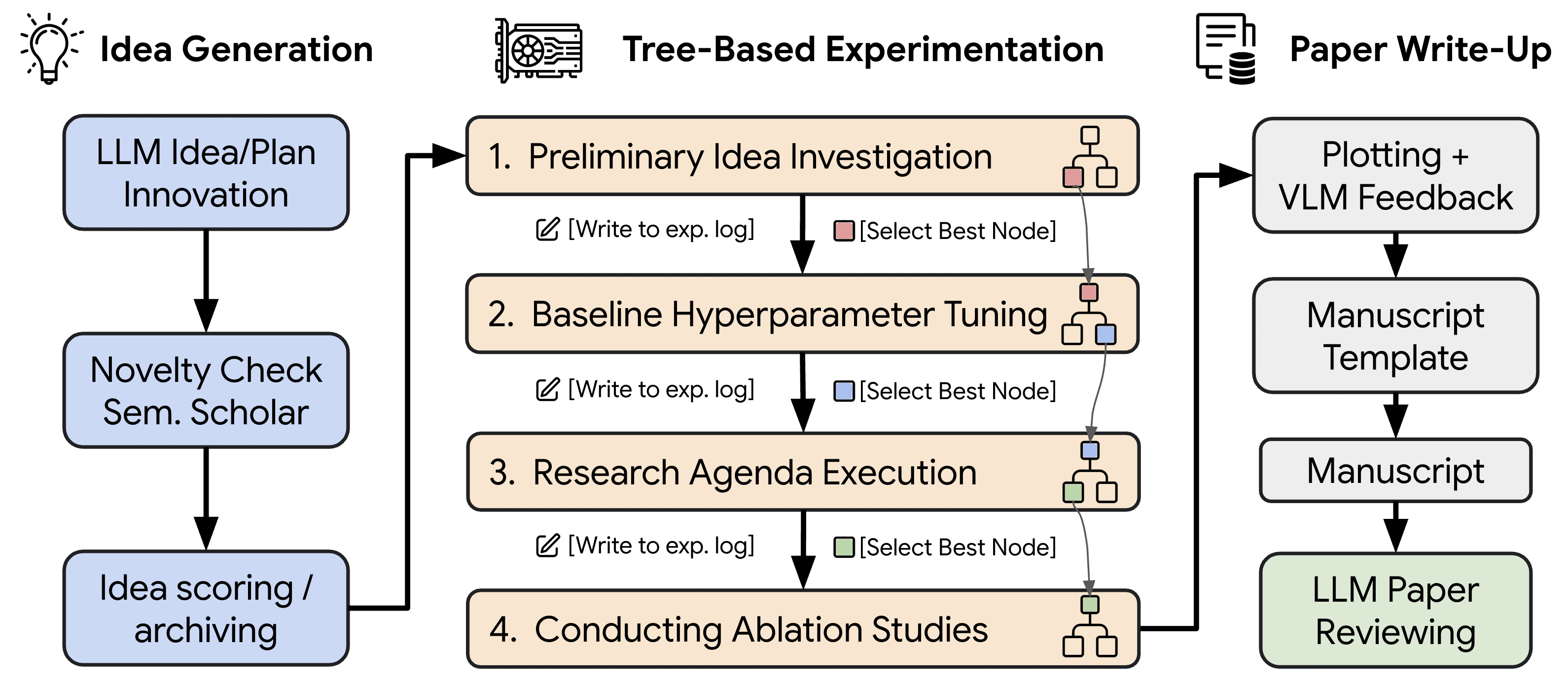

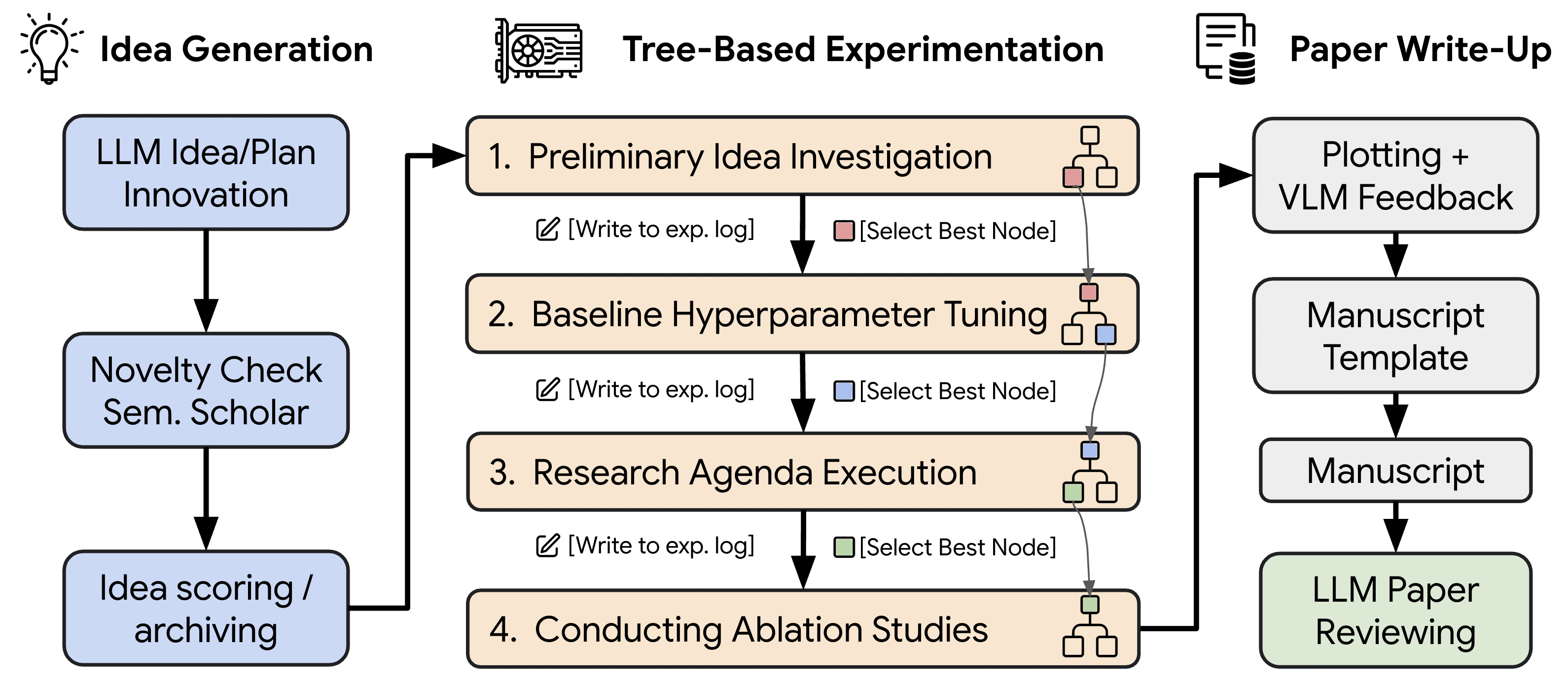

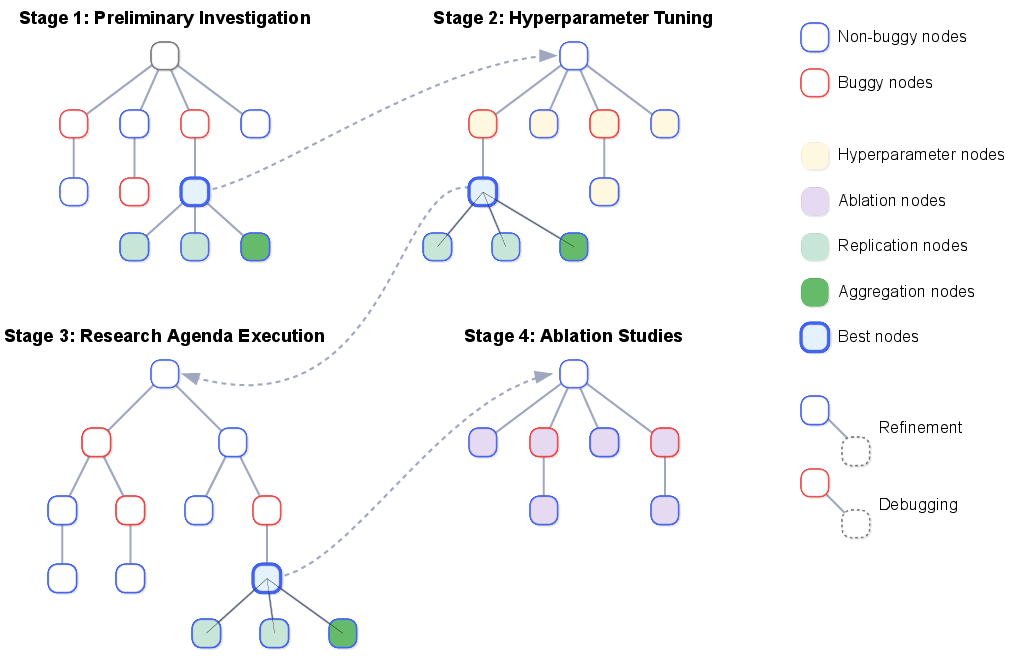

A core enhancement in The AI Scientist-v2 is its move away from human-authored code templates, which broadens its applicability across diverse machine learning domains (Figure 1). This is achieved through an agentic tree-search methodology, managed by a dedicated experiment progress manager agent, which enables more systematic exploration of complex hypotheses. The manager agent organizes experimentation into four distinct stages: preliminary investigation, hyperparameter tuning, research agenda execution, and ablation studies. In addition, a VLM-based feedback mechanism refines the quality, clarity, and alignment of the generated figures and their text interpretations. The end-to-end workflow covers idea generation, experiment execution, figure visualization, and manuscript writing and reviewing.

Figure 1: The AI Scientist-v2 Workflow.
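The four-stage organization described above can be illustrated with a minimal Python sketch. The stage names follow the text; everything else is an assumption made for illustration, including the `run_stages` helper, the `run_stage` callback, and the rule that a failed stage halts the pipeline. The summary does not describe how the actual manager agent decides when a stage is complete.

```python
from enum import Enum
from typing import Callable, List


class Stage(Enum):
    """The four experimentation stages, in the order the text lists them."""
    PRELIMINARY_INVESTIGATION = 1
    HYPERPARAMETER_TUNING = 2
    RESEARCH_AGENDA_EXECUTION = 3
    ABLATION_STUDIES = 4


def run_stages(run_stage: Callable[[Stage], bool]) -> List[Stage]:
    """Run the stages in order and return the ones that completed.

    `run_stage` is a hypothetical callback that returns True when a stage
    meets its (unspecified) completion criteria. Stopping at the first
    failure is an assumption of this sketch, not a documented behavior.
    """
    completed: List[Stage] = []
    for stage in Stage:  # Enum iteration follows definition order
        if not run_stage(stage):
            break
        completed.append(stage)
    return completed
```

Calling `run_stages(lambda stage: True)` walks through all four stages, while a callback that rejects one stage returns only the stages completed before it.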

Agentic Tree Search for Experimentation

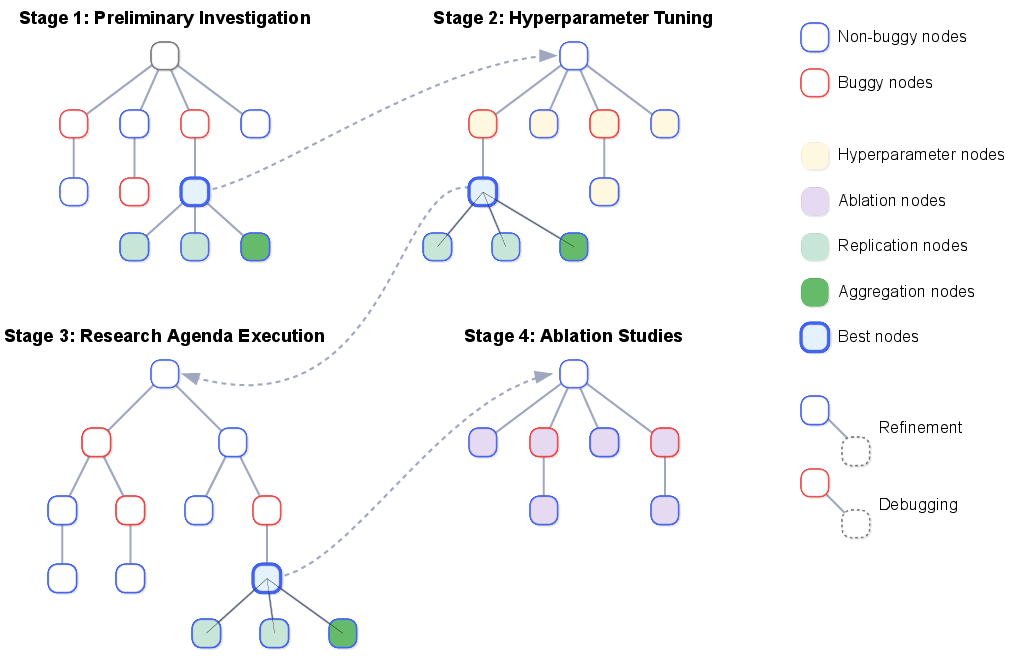

The AI Scientist-v2 uses a parallelized agentic tree search to explore scientific hypotheses (Figure 2). This contrasts with the linear operation of The AI Scientist-v1 and enables a more flexible, exploratory workflow. Each experimental node in the tree goes through a cycle of LLM-based code generation, execution, and VLM-based review of the generated visualizations. The system uses several node types, including hyperparameter nodes, ablation nodes, replication nodes, and aggregation nodes, to support systematic experimentation.

Figure 2: The AI Scientist-v2 workflow showing different stages of tree-based experimentation.
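To make the node cycle concrete, here is a hedged Python sketch of a tree search in which each node is generated, executed, and reviewed, and sibling nodes are evaluated in parallel. The node types come from the text. The best-first selection rule, the thread pool, the `Node` fields, and the `propose`, `generate_code`, `execute`, and `review` callables are all assumptions standing in for the LLM, the sandbox, and the VLM; the summary does not specify how the real system selects or repairs nodes.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class NodeType(Enum):
    HYPERPARAMETER = "hyperparameter"
    ABLATION = "ablation"
    REPLICATION = "replication"
    AGGREGATION = "aggregation"


@dataclass
class Node:
    plan: str
    node_type: NodeType
    parent: Optional["Node"] = None
    score: Optional[float] = None  # set once the node has been evaluated
    buggy: bool = False
    children: List["Node"] = field(default_factory=list)


def evaluate(node, generate_code, execute, review):
    """One node cycle: generate code, run it, then review the resulting figure."""
    code = generate_code(node.plan)        # stand-in for LLM code generation
    ok, metric, figure = execute(code)     # stand-in for sandboxed execution
    node.buggy = not (ok and review(figure))  # stand-in for VLM figure review
    node.score = None if node.buggy else metric
    return node


def tree_search(root, propose, generate_code, execute, review, steps=3, width=2):
    """Best-first search (an assumed policy): expand the best working node."""
    def run(n):
        return evaluate(n, generate_code, execute, review)

    def best_of(nodes):
        working = [n for n in nodes if not n.buggy]
        return max(working, key=lambda n: n.score) if working else None

    run(root)
    nodes = [root]
    with ThreadPoolExecutor(max_workers=width) as pool:
        for _ in range(steps):
            parent = best_of(nodes) or root  # fall back to root if all failed
            children = [Node(plan, kind, parent)
                        for plan, kind in propose(parent, width)]
            list(pool.map(run, children))    # evaluate siblings in parallel
            parent.children.extend(children)
            nodes.extend(children)
    return best_of(nodes)
```

Here `propose(parent, width)` is expected to return `(plan, node_type)` pairs, so a caller can mix hyperparameter, ablation, replication, and aggregation nodes within one tree. The function returns the highest-scoring non-buggy node, or `None` if every node failed.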

Evaluation and Results

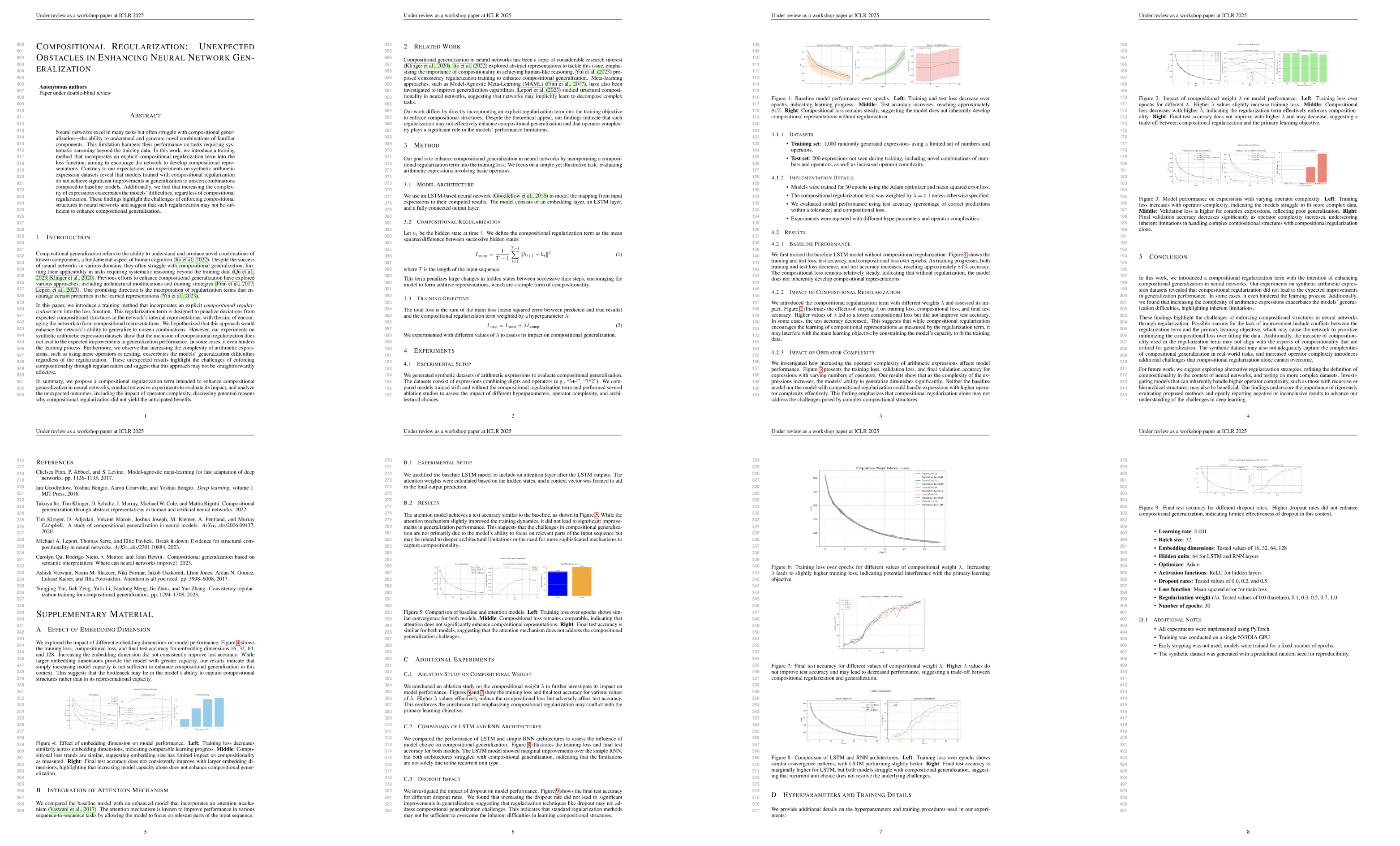

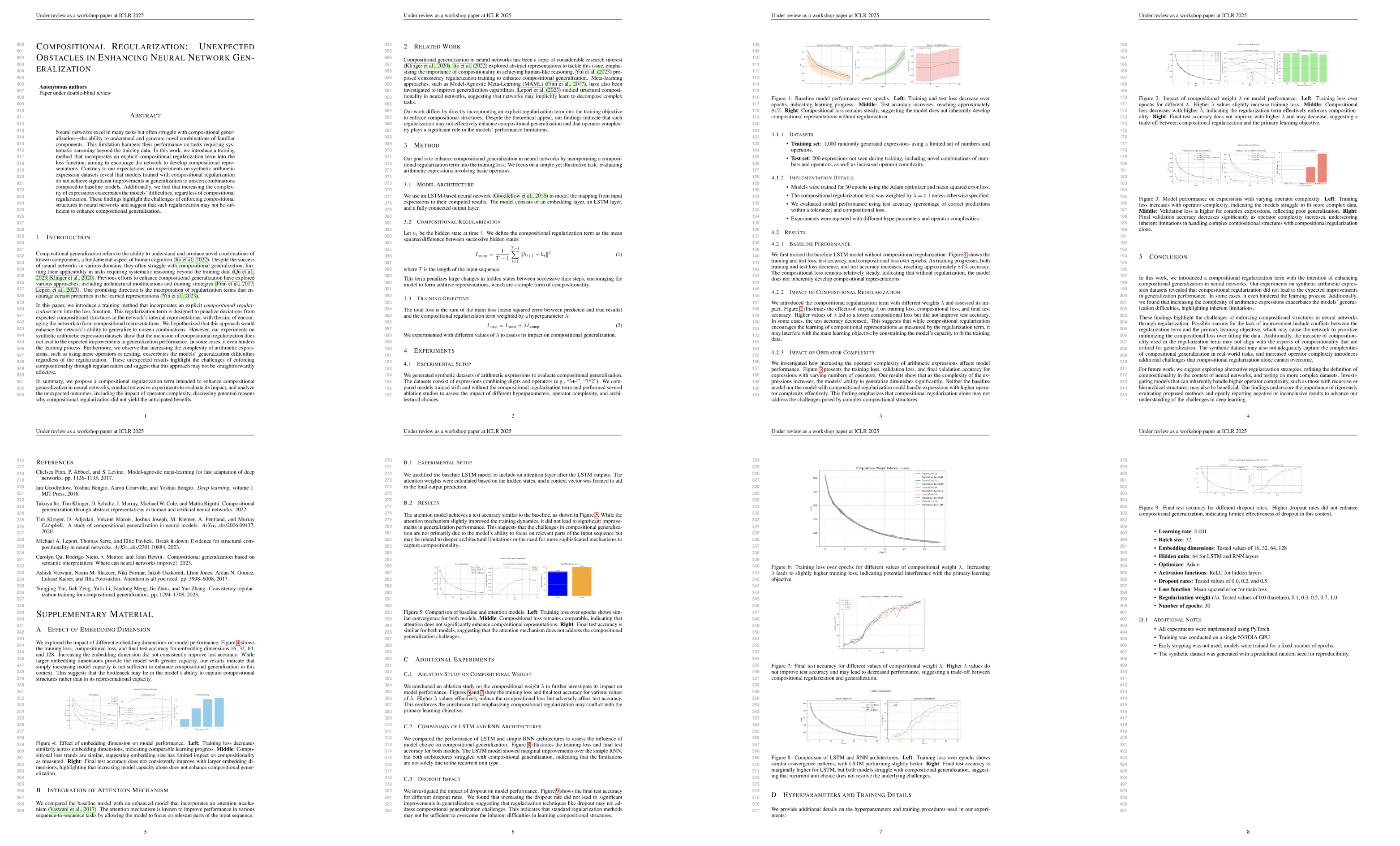

The capabilities of The AI Scientist-v2 were evaluated in a controlled experiment in which three autonomously generated manuscripts were submitted to the peer-reviewed ICBINB workshop at ICLR. One manuscript achieved an average reviewer score of 6.33, placing it within the top 45% of submissions and meeting the workshop's acceptance criteria (Figure 3). The accepted paper explored the impact of incorporating an explicit compositional regularization term into neural network training. Reviewers noted the challenges of effective compositional regularization and appreciated the reporting of negative results.

Figure 3: Peer-reviewed ICBINB workshop paper generated by The AI Scientist-v2.

Implementation Details

Several implementation choices support the system's autonomy. The system relies on the Hugging Face Hub for dataset handling, using the `datasets.load_dataset` function to download datasets automatically. Code generation uses Claude 3.5 Sonnet (v2), while GPT-4o powers the LLM/VLM feedback agents and summary report generation.
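As a rough illustration of the dataset handling mentioned above, the sketch below wraps `datasets.load_dataset` from the Hugging Face `datasets` library. The `load_split` helper and its injectable `loader` parameter are hypothetical conveniences introduced here so the function can be exercised without network access; they are not part of the system described in the paper.

```python
from typing import Any, Callable, Optional


def load_split(
    name: str,
    split: str = "train",
    loader: Optional[Callable[..., Any]] = None,
) -> Any:
    """Fetch one split of a Hub dataset by its ID.

    `loader` defaults to `datasets.load_dataset`, which downloads and caches
    the dataset on first use. Passing a stub is a hypothetical hook that
    keeps this sketch testable offline.
    """
    if loader is None:
        # Imported lazily so the module loads even without `datasets` installed.
        from datasets import load_dataset
        loader = load_dataset
    return loader(name, split=split)
```

With the `datasets` package installed and network access available, a call such as `load_split("imdb")` would fetch the training split of that Hub dataset; the dataset ID is only an example.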

Ethical Considerations and Limitations

The paper emphasizes the importance of transparency and ethical considerations when deploying AI-generated research. The authors coordinated with ICLR leadership and the workshop organizers, ensuring reviewers were informed about the potential presence of AI-generated submissions. The authors also acknowledge that certain aspects of scientific inquiry, such as formulating novel hypotheses and designing innovative experimental methodologies, remain challenging for purely automated systems. The acceptance occurred at the workshop level rather than in the main conference track, and only one of the three AI-generated submissions was accepted. The reviewers also collectively highlighted shortcomings, including insufficient justification and a lack of intuitive explanations.

Conclusion

The AI Scientist-v2 represents a significant advancement in automated scientific discovery, demonstrating the capability to generate a peer-review-accepted workshop paper. This achievement underscores the potential of AI in conducting various aspects of scientific research, with the expectation that future advancements will further enhance research productivity and accelerate scientific breakthroughs.