- The paper's main contribution is a comprehensive review comparing LLMs' language processing with human cognitive functions, highlighting both similarities and differences.

- It demonstrates that fine-tuning on psychological data enables LLMs to replicate human decision-making, offering new perspectives on cognitive modeling.

- It discusses strategies for integrating LLMs with cognitive architectures to enhance reasoning accuracy while mitigating inherent cognitive biases.

LLMs and Cognitive Science

Introduction

LLMs have significantly impacted the landscape of artificial intelligence and cognitive science by emulating certain elements of human cognition. The paper "LLMs and Cognitive Science: A Comprehensive Review of Similarities, Differences, and Challenges" (2409.02387) presents an in-depth analysis of the interplay between LLMs and human cognitive processes. By examining both the similarities and dissimilarities, this paper offers a nuanced understanding of LLMs' potential to serve as cognitive models and their implications for cognitive science.

Comparison of LLMs and Human Cognitive Processes

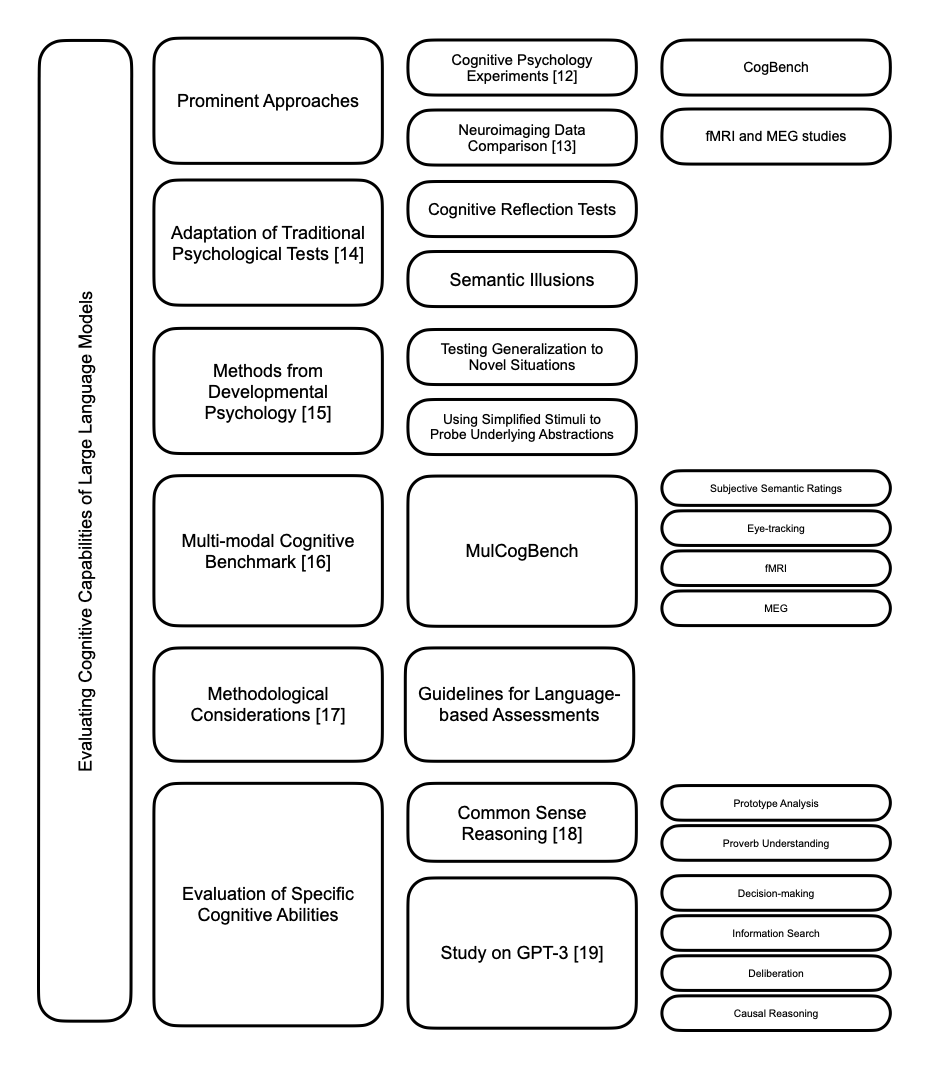

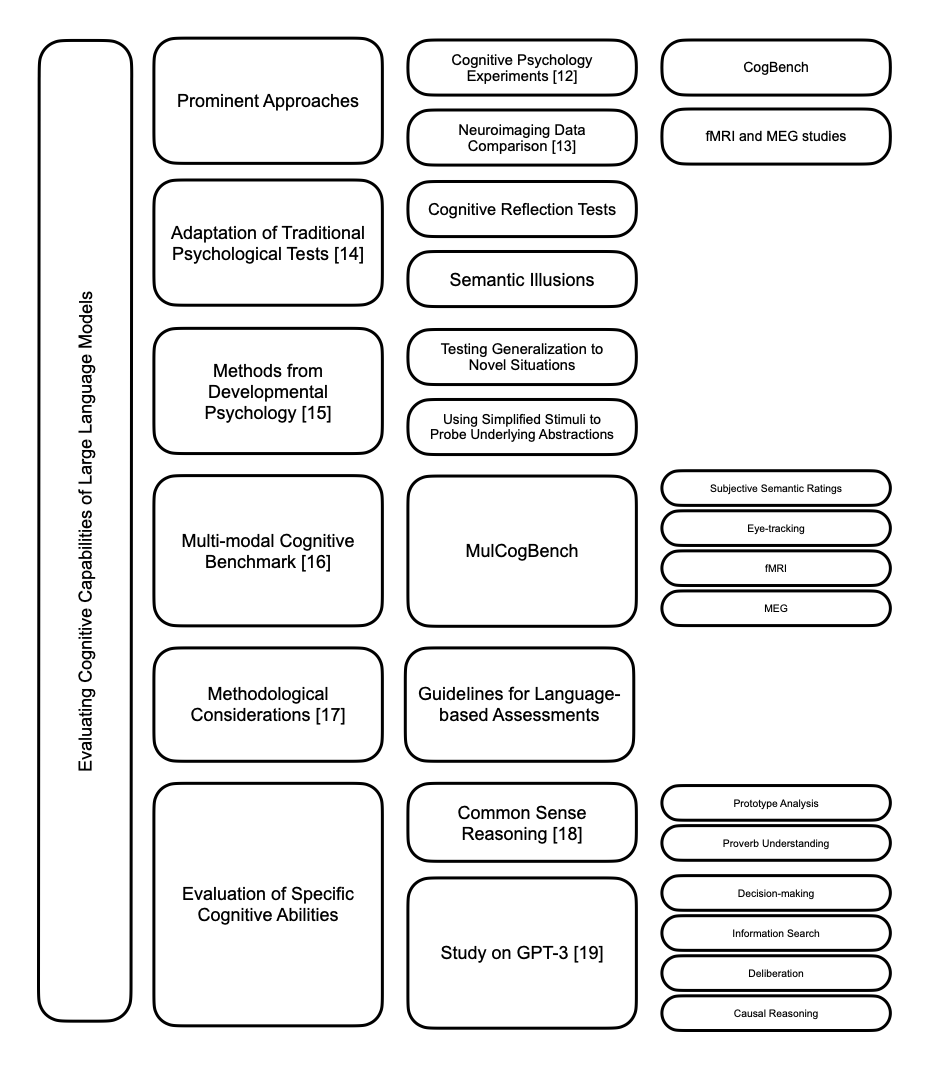

The capabilities of LLMs in mimicking human language processing and reasoning have garnered significant attention. LLMs display similarities to human cognitive behavior, particularly in language tasks, where they have been shown to achieve performance levels on par with humans in terms of word prediction and linguistic representation as evidenced by correlating neural patterns (Figure 1).

Figure 1: Evaluating Cognitive Capabilities of LLMs.

Despite these advances, LLMs exhibit discernible weaknesses compared to human cognition, particularly in their limited capacity to generalize reasoning across novel contexts. Human reasoners typically surpass LLMs in problem-solving capabilities, showing robustness when faced with novel and unexpected situations. Furthermore, the intricacies of human functional linguistic competence are yet to be fully captured by LLMs, which often fail to demonstrate the nuanced understanding required for in-depth semantic processing.

Applications of LLMs in Cognitive Science

LLMs have been instrumental as cognitive models, serving as tools for introspection into human cognitive processes. They have shown significant promise in replicating human judgments across various modalities and tasks, challenging existing cognitive theories and offering new insights. For instance, fine-tuning LLMs on psychological data allows for high fidelity in replicating human decision-making patterns, sometimes exceeding traditional models' predictive capabilities.

However, while LLMs provide new perspectives on linguistic comprehension and cognitive modeling, there remains a cautionary stance within the research community regarding over-reliance on these models for cognitive representations. The discrepancy in learning paradigms between LLMs and human cognition suggests that while parallels exist, the path to fully assimilating human-like intelligence remains intricate.

Limitations and Improvement of LLMs

LLMs, despite their sophistication, are not immune to cognitive biases and shortcomings inherent in human reasoning processes. They demonstrate biases such as overconfidence and framing effects, necessitating strategies for bias mitigation. Furthermore, LLMs have been critiqued for lacking deep semantic understanding, which poses a challenge for their application in tasks requiring nuanced reasoning and abstract thinking.

To address these limitations, various strategies, including integrating cognitive principles into training processes and enhancing model architectures, have been proposed. These methods aim to refine LLMs' ability to mimic the depth and breadth of human reasoning capabilities, thereby increasing their utility as reliable cognitive models.

Integration of LLMs with Cognitive Architectures

The fusion of LLMs with cognitive architectures represents a potential frontier for expanding AI capabilities. By leveraging LLMs within structured cognitive frameworks, researchers aim to develop systems that capitalize on the strengths of both models, enhancing reasoning abilities while reducing vulnerabilities. This integrative approach offers pathways for more adaptive and transparent AI systems capable of complex tasks traditionally reserved for human cognition.

Conclusion

The interaction between LLMs and cognitive science illuminates exciting avenues for enhancing our understanding of cognitive processes and developing advanced AI systems. While LLMs offer promising new perspectives and modeling opportunities, their current constraints underscore the necessity of disciplined research to overcome their cognitive limitations. The ongoing collaboration between AI development and cognitive insights promises to accelerate advancements in both fields, paving the way for systems that not only replicate but also enrich human cognitive capacities.