Softened Symbol Grounding for Neuro-symbolic Systems

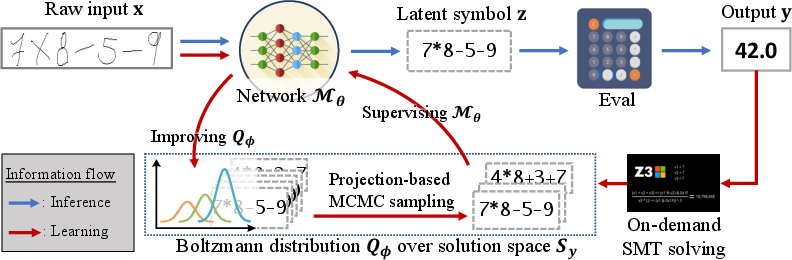

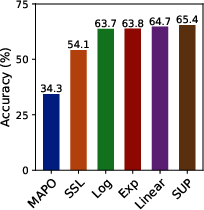

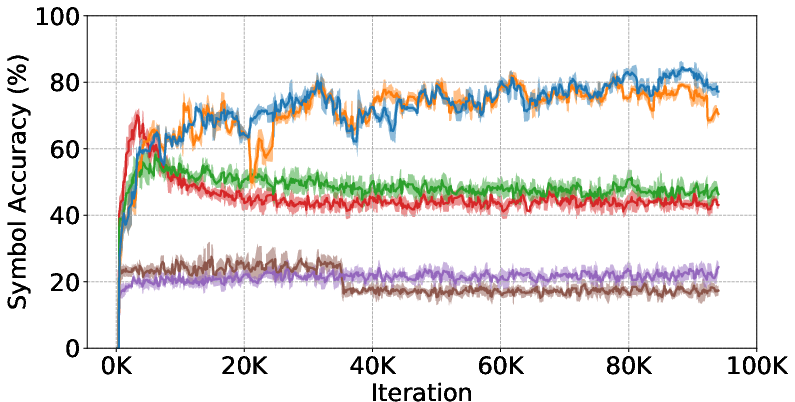

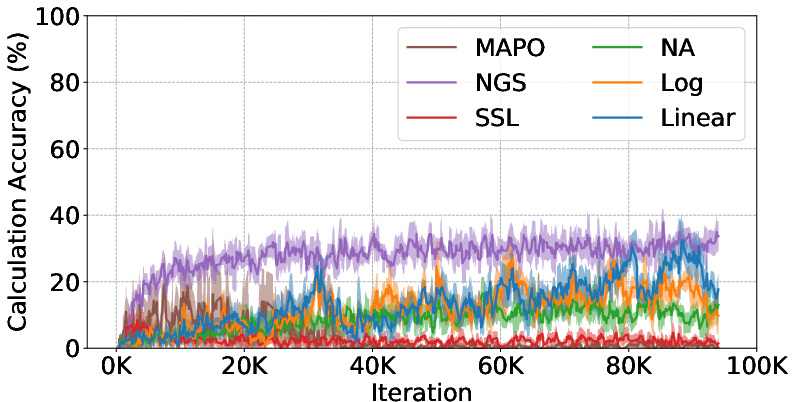

Abstract: Neuro-symbolic learning generally consists of two separate worlds, i.e., neural network training and symbolic constraint solving, whose success hinges on symbol grounding, a fundamental problem in AI. This paper presents a novel, softened symbol grounding process that bridges the gap between the two worlds and results in an effective and efficient neuro-symbolic learning framework. Technically, the framework features (1) modeling of symbol solution states as a Boltzmann distribution, which avoids expensive state searching and facilitates mutually beneficial interactions between network training and symbolic reasoning; (2) a new MCMC technique leveraging projection and SMT solvers, which efficiently samples from disconnected symbol solution spaces; (3) an annealing mechanism that can escape from sub-optimal symbol groundings. Experiments with three representative neuro-symbolic learning tasks demonstrate that, owing to its superior symbol grounding capability, our framework successfully solves problems well beyond the frontier of the existing proposals.
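
To make features (1) and (3) concrete, the following is a minimal sketch under stated assumptions, not the paper's implementation. It assumes a toy constraint (two digits must sum to a known target), hypothetical per-position network outputs `log_probs`, and an energy equal to the negative log-likelihood of a grounding, so that P(z) is proportional to exp(log p(z) / T) over the feasible states. The feasible set is enumerated exhaustively here; the MCMC sampler with projection and SMT solving named in (2) is not reproduced. All function names are illustrative.

```python
import math
from itertools import product


def feasible_states(target, num_digits=2, base=10):
    """Enumerate every digit tuple whose sum equals `target` (toy constraint)."""
    return [z for z in product(range(base), repeat=num_digits) if sum(z) == target]


def boltzmann(states, log_probs, temperature):
    """Return P(z) proportional to exp(log p(z) / T), restricted to `states`."""
    scores = [
        sum(log_probs[i][d] for i, d in enumerate(z)) / temperature
        for z in states
    ]
    top = max(scores)  # subtract the maximum for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [w / total for w in weights]


def entropy(dist):
    """Shannon entropy in nats; zero-probability states contribute nothing."""
    return -sum(p * math.log(p) for p in dist if p > 0)


# Hypothetical network outputs: position 0 favors digit 3, position 1 favors digit 4.
probs = [
    [0.55 if d == 3 else 0.05 for d in range(10)],
    [0.55 if d == 4 else 0.05 for d in range(10)],
]
log_probs = [[math.log(p) for p in row] for row in probs]

states = feasible_states(target=7)

# Assumed cooling schedule: high temperature first, then progressively lower.
for temperature in (100.0, 1.0, 0.05):
    dist = boltzmann(states, log_probs, temperature)
    best_prob, best_state = max(zip(dist, states))
    print(f"T={temperature}: entropy={entropy(dist):.3f}, "
          f"mode={best_state}, P(mode)={best_prob:.3f}")
```

At a high temperature the distribution is close to uniform over the feasible groundings, which keeps alternative groundings in play early in training; as the temperature is lowered, the mass concentrates on the grounding the network currently finds most likely.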

