NeU-NBV: Next Best View Planning Using Uncertainty Estimation in Image-Based Neural Rendering

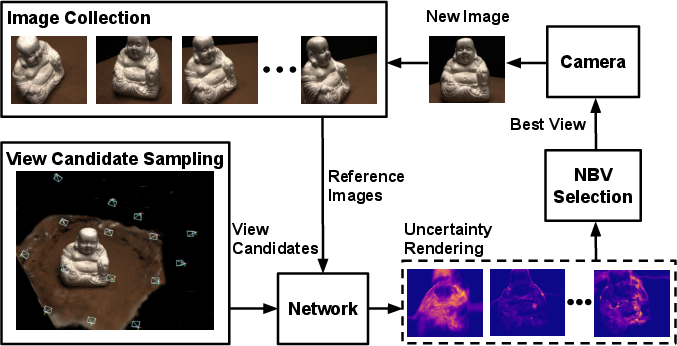

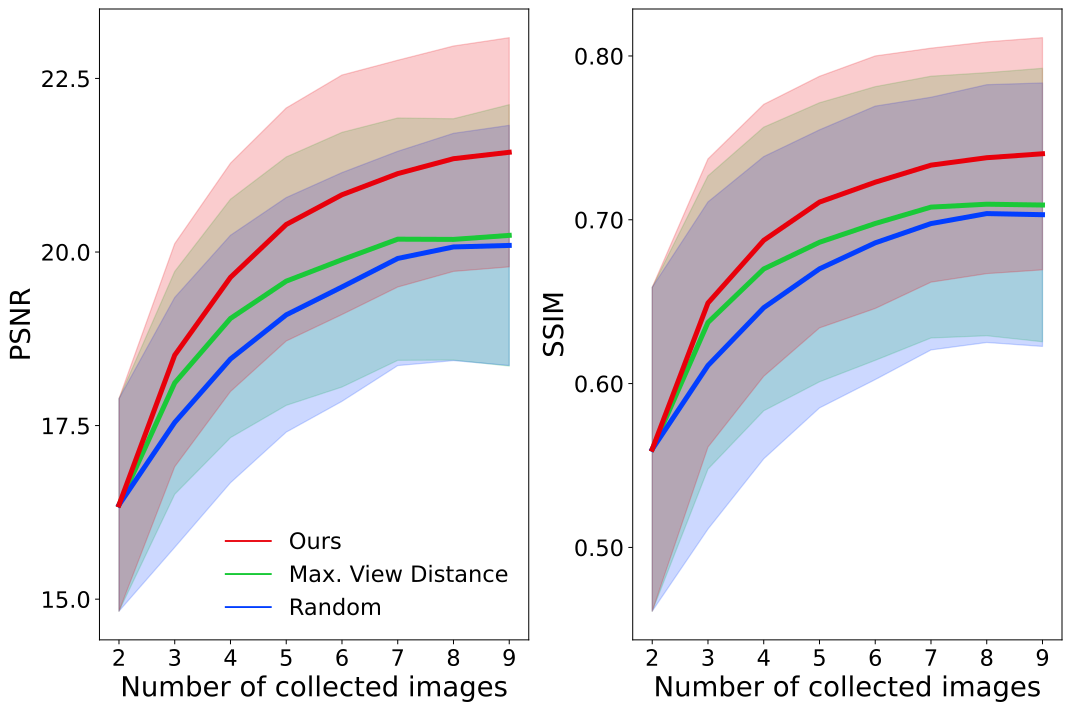

Abstract: Autonomous robotic tasks require actively perceiving the environment to achieve application-specific goals. In this paper, we address the problem of positioning an RGB camera to collect the most informative images to represent an unknown scene, given a limited measurement budget. We propose a novel mapless planning framework to iteratively plan the next best camera view based on collected image measurements. A key aspect of our approach is a new technique for uncertainty estimation in image-based neural rendering, which guides measurement acquisition at the most uncertain view among view candidates, thus maximising the information value during data collection. By incrementally adding new measurements into our image collection, our approach efficiently explores an unknown scene in a mapless manner. We show that our uncertainty estimation is generalisable and valuable for view planning in unknown scenes. Our planning experiments using synthetic and real-world data verify that our uncertainty-guided approach finds informative images leading to more accurate scene representations when compared against baselines.
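
The planning loop described in the abstract can be sketched as follows. This is a minimal illustration, not the authors' implementation: the `View` parameterisation, the `predict_uncertainty` callable, the fixed candidate list, and the use of mean per-pixel uncertainty as the score are all assumptions made for the example. `toy_uncertainty` is a hypothetical stand-in for the network's per-pixel uncertainty output.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical view parameterisation: (azimuth, elevation) in degrees.
View = Tuple[float, float]
# Hypothetical predictor: per-pixel uncertainties for a candidate view,
# conditioned on the views collected so far.
UncertaintyFn = Callable[[View, Sequence[View]], Sequence[float]]


def select_next_best_view(
    candidates: Sequence[View],
    collected: Sequence[View],
    predict_uncertainty: UncertaintyFn,
) -> View:
    """Return the candidate with the highest mean per-pixel uncertainty."""

    def score(view: View) -> float:
        pixels = predict_uncertainty(view, collected)
        return sum(pixels) / len(pixels)

    return max(candidates, key=score)


def plan_views(
    initial_view: View,
    candidates: Sequence[View],
    predict_uncertainty: UncertaintyFn,
    budget: int,
) -> List[View]:
    """Greedily add the most uncertain candidate until the budget is used."""
    collected = [initial_view]
    while len(collected) < budget:
        collected.append(
            select_next_best_view(candidates, collected, predict_uncertainty)
        )
    return collected


def toy_uncertainty(view: View, collected: Sequence[View]) -> List[float]:
    """Stand-in predictor: uncertainty grows with the azimuth gap to the
    nearest collected view. Returns a constant 4-pixel 'image'."""

    def azimuth_gap(a: float, b: float) -> float:
        d = abs(a - b) % 360.0
        return min(d, 360.0 - d)

    gap = min(azimuth_gap(view[0], c[0]) for c in collected)
    return [gap] * 4
```

Calling `plan_views((0.0, 30.0), [(10.0, 30.0), (90.0, 30.0), (180.0, 30.0)], toy_uncertainty, budget=3)` first selects the view opposite the initial one and then the view halfway between the two. In a real system the candidates would typically be resampled at every step rather than kept fixed.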

Glossary

- Active perception: A robotic strategy where the agent deliberately selects sensor viewpoints or actions to gather informative data for its goals. "Active perception and exploration is a core prerequisite for embodied robotic intelligence."

- Acquisition function: A scoring function used to evaluate and select among candidate actions or views based on expected utility or information gain. "using an acquisition function capturing their expected utility based on the current map state."

- Adam optimiser: An adaptive stochastic gradient optimization algorithm that uses estimates of first and second moments of gradients. "We use the Adam optimiser with a learning rate of and exponential decay of $0.999$."

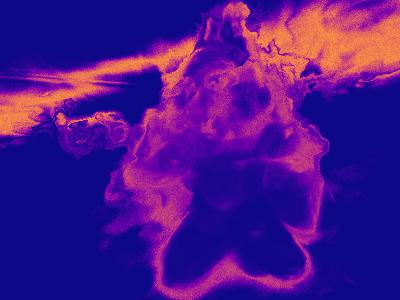

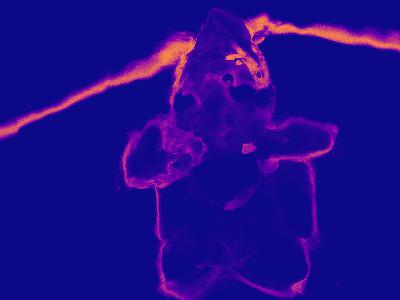

- Area Under the Sparsification Error (AUSE): A metric that evaluates how well uncertainty correlates with error by progressively removing high-uncertainty pixels and measuring residual error. "we report the Area Under the Sparsification Error (AUSE) curve~\citep{Ilg2018}, which reveals how strongly the uncertainty coincides with the rendering error pixel-wise."

- Bilinear interpolation: A method for interpolating values on a 2D grid using linear interpolation in both directions, commonly used to sample image features. "by grid sampling with bilinear interpolation~\citep{Yu2021}."

- Differentiable volume rendering: A rendering technique that integrates radiance and density along rays with differentiable operations, enabling gradient-based learning. "The final RGB and depth estimate of the ray is calculated by differentiable volume rendering."

- Entropy (uncertainty measure): A measure of uncertainty in a probability distribution; here used to quantify ambiguity in density predictions along a ray. "propose calculating the entropy of the density prediction along the ray as an uncertainty measure with respect to the scene geometry."

- Ensemble (of models): A collection of models whose aggregated predictions can capture uncertainty via variance across the ensemble. "train an ensemble of NeRF models for a single scene, and measure uncertainty using the variance of the ensemble's prediction, which is utilised for NBV selection."

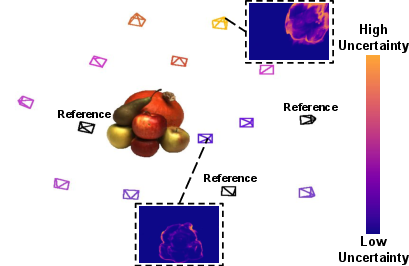

- Frustum: The truncated pyramidal volume that bounds a camera's field of view in 3D space. "Given reference images from the current image collection of the scene (black frustums), our network outputs per-pixel uncertainty estimates at sampled view candidates (coloured frustums)."

- Image-based neural rendering: Methods that synthesize novel views by conditioning an implicit representation on features extracted from nearby images, without per-scene optimization. "another line of work focuses on image-based neural rendering~\citep{Yu2021, Rosu2021, Wang2021, Trevithick2021}."

- Implicit neural representations: Neural networks that parameterize continuous signals (e.g., radiance fields) implicitly in their weights, enabling differentiable query of values at arbitrary coordinates. "Implicit neural representations parameterise a continuous differentiable signal with a neural network~\citep{Tewari2022}."

- Instant-NGP: A fast framework for training and rendering neural graphics primitives (including NeRFs), often used to accelerate scene reconstruction. "we use Instant-NGP~\citep{mueller2022} to train NeRF models using images collected by the three planning approaches, respectively, under the same training conditions."

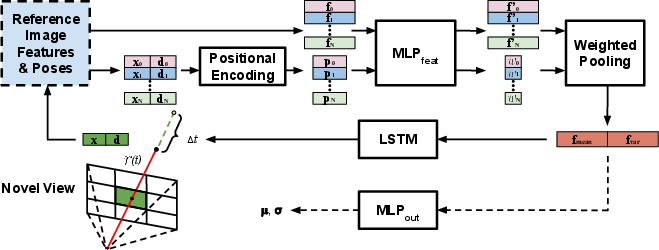

- Long short-term memory (LSTM): A recurrent neural network architecture that maintains long-range dependencies via gated memory cells. "we adopt a long short-term memory (LSTM) module~\citep{Hochreiter1997} to adaptively predict the jumping distance to the next sampling point"

- Logistic normal distribution: A distribution formed by applying the logistic (sigmoid) transform to a normal variable, yielding support on (0,1); used to model bounded channel values. "we model each channel value of the RGB prediction , where , as an independent logistic normal distribution described by:"

- Multilayer perceptron (MLP): A feedforward neural network composed of multiple layers of nonlinear transformations. "a multilayer perceptron (MLP) is trained to interpret the aggregated feature into appearance and geometry information at a novel view."

- Negative log-likelihood (NLL): A loss function that penalizes unlikely predictions under a probabilistic model, commonly used for maximum likelihood training. "we minimise the negative log-likelihood given ground truth RGB channel values"

- Neural radiance fields (NeRFs): Implicit models that learn a continuous 3D radiance and density field from images to render novel views via volume rendering. "NeRFs~\citep{Mildenhall2020} learn a density and radiance field supervised only by 2D images."

- Next Best View (NBV): The planning problem of selecting the next sensor viewpoint to maximize information gain given current knowledge. "we present a new framework for iteratively planning the next best view (NBV) for an RGB camera to explore an unknown scene."

- Peak signal-to-noise ratio (PSNR): A logarithmic metric (in dB) for image reconstruction quality based on mean squared error. "The rendering quality is measured by the peak signal-to-noise ratio (PSNR) and structural similarity index measure (SSIM)~\citep{Mildenhall2020}."

- Positional encoding: A mapping that lifts coordinates into a higher-dimensional space (e.g., via sinusoids) to enable learning high-frequency variations. "the point position is mapped into higher-dimensional space by the positional encoding operation proposed by~\citet{Mildenhall2020}."

- Receding-horizon (planning): A strategy that optimizes actions over a finite horizon and re-plans at each step as new information becomes available. "find the NBV in a receding-horizon fashion by generating a random tree"

- Scene priors: Prior knowledge of scene structure learned across many scenes, enabling generalization to new environments. "This allows training the network across multiple scenes to learn scene priors, enabling it to generalise to new scenes without test time optimisation."

- Scene-centric hemisphere: An action space where viewpoints lie on a hemisphere centered around the scene, used for view sampling and planning. "For view planning, we consider a scene-centric hemisphere action space."

- Spearman's Rank Correlation Coefficient (SRCC): A non-parametric statistic measuring monotonic association between two ranked variables. "We use Spearman's Rank Correlation Coefficient (SRCC)~\citep{Spearman1904} to assess the monotonic relationship between averaged uncertainty estimate and rendering error over a test view."

- Structural similarity index measure (SSIM): An image quality metric assessing perceived structural similarity between images. "The rendering quality is measured by the peak signal-to-noise ratio (PSNR) and structural similarity index measure (SSIM)~\citep{Mildenhall2020}."

- Uncertainty estimation: Quantifying the confidence of predictions, often to guide data acquisition or assess model reliability. "A key aspect of our approach is a new technique for uncertainty estimation in image-based neural rendering, which guides measurement acquisition at the most uncertain view among view candidates"

- Utility function: A scalar function that ranks candidate actions or views by expected benefit; used here to select NBV. "In this setup, we propose a simple utility function defined as:"

- Variational inference: An optimization-based approach to approximate complex posterior distributions in probabilistic models. "and uses variational inference to approximate their posterior distribution after training."

- Voxel map: A volumetric grid representation of 3D space where each voxel encodes occupancy or other properties. "\citet{Zaenker2021} maintain a voxel map of the scene and select the NBV among candidates obtained by targeted region-of-interest sampling and frontier-based exploration sampling."

- Volume rendering: Rendering method that integrates contributions along rays through a volumetric field (e.g., density, radiance). "PixelNeRF uses a volume rendering technique requiring dense sampling along the ray at predefined intervals"
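
The "Volume rendering" and "Differentiable volume rendering" entries above can be illustrated with the standard NeRF-style quadrature along a single ray. This is a sketch under assumptions: the function name `composite_ray` and its arguments are hypothetical, and the discretisation is the common textbook one rather than necessarily the paper's exact formulation. Plain Python is used for readability; an autodiff framework would be needed to make the same arithmetic differentiable.

```python
import math
from typing import List, Sequence, Tuple


def composite_ray(
    densities: Sequence[float],
    colours: Sequence[Tuple[float, float, float]],
    depths: Sequence[float],
    deltas: Sequence[float],
) -> Tuple[Tuple[float, float, float], float, List[float]]:
    """Composite per-sample density and colour into one RGB and depth value.

    densities: non-negative density at each sample along the ray
    colours:   RGB colour at each sample
    depths:    distance of each sample from the camera
    deltas:    spacing between consecutive samples
    """
    transmittance = 1.0  # probability that the ray reaches the current sample
    rgb = [0.0, 0.0, 0.0]
    depth = 0.0
    weights: List[float] = []
    for sigma, colour, t, delta in zip(densities, colours, depths, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this segment
        weight = transmittance * alpha
        weights.append(weight)
        for k in range(3):
            rgb[k] += weight * colour[k]
        depth += weight * t
        transmittance *= 1.0 - alpha
    return (rgb[0], rgb[1], rgb[2]), depth, weights
```

With an empty first sample and an opaque second sample, the result takes the colour and depth of the second sample, and the weights never sum to more than one.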

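The "Logistic normal distribution" and "Negative log-likelihood (NLL)" entries in the glossary can be tied together with a short worked example. The sketch below assumes the standard logit-normal density, $p(x) = \frac{1}{\sigma\sqrt{2\pi}}\,\frac{1}{x(1-x)}\exp\!\left(-\frac{(\operatorname{logit}(x)-\mu)^2}{2\sigma^2}\right)$ for $x \in (0,1)$. The names `mu`, `sigma`, and the clamping constant `eps` are assumptions introduced for illustration, not values taken from the paper.

```python
import math
from typing import Sequence


def logit(x: float) -> float:
    """Inverse of the logistic (sigmoid) function."""
    return math.log(x / (1.0 - x))


def logistic_normal_nll(x: float, mu: float, sigma: float, eps: float = 1e-6) -> float:
    """Negative log-likelihood of one channel value x in (0, 1).

    mu and sigma parameterise the normal distribution of logit(x).
    eps clamps x away from 0 and 1, where logit is undefined.
    """
    x = min(max(x, eps), 1.0 - eps)
    z = logit(x)
    return (
        math.log(sigma)
        + 0.5 * math.log(2.0 * math.pi)
        + math.log(x * (1.0 - x))
        + (z - mu) ** 2 / (2.0 * sigma ** 2)
    )


def pixel_nll(
    rgb: Sequence[float], mus: Sequence[float], sigmas: Sequence[float]
) -> float:
    """Sum the NLL over channels, treating them as independent."""
    return sum(logistic_normal_nll(x, m, s) for x, m, s in zip(rgb, mus, sigmas))
```

For a fixed `sigma`, the loss is smallest when `mu` matches `logit(x)`. A larger `sigma` reduces the penalty for a mismatched mean at the cost of the `log(sigma)` term, which is what lets a network trained with this kind of loss express uncertainty.
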