- The paper introduces EMVLight, a multi-agent reinforcement learning framework that integrates dynamic routing with adaptive signal control to reduce EMV travel times by up to 42.6%.

- The methodology employs a multi-agent advantage actor-critic model with local observations and spatial reward adjustments to foster cooperative traffic management.

- Simulations on synthetic and real-world maps demonstrate significant improvements in emergency and overall traffic flows, highlighting the benefits of decentralized control.

Multi-agent Reinforcement Learning Framework for Emergency Vehicle Routing

Introduction

The paper "EMVLight: a Multi-agent Reinforcement Learning Framework for an Emergency Vehicle Decentralized Routing and Traffic Signal Control System" (2206.13441) presents an approach to jointly optimizing emergency vehicle (EMV) navigation and traffic signal control in urban settings. Using decentralized reinforcement learning, EMVLight addresses the coupling between dynamic EMV routing and traffic signal pre-emption, with the goal of improving travel efficiency for both EMVs and non-EMV traffic.

Methodology

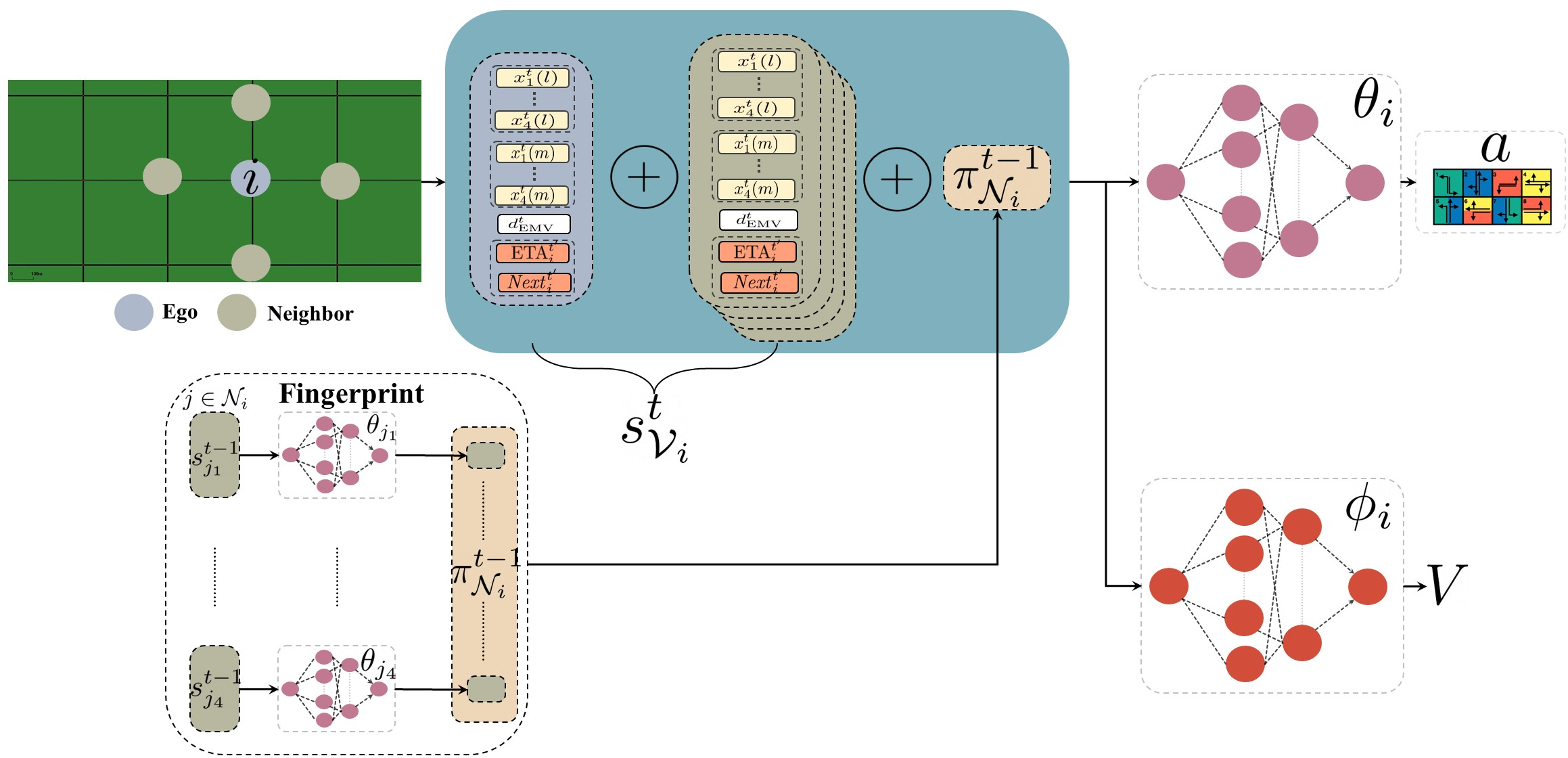

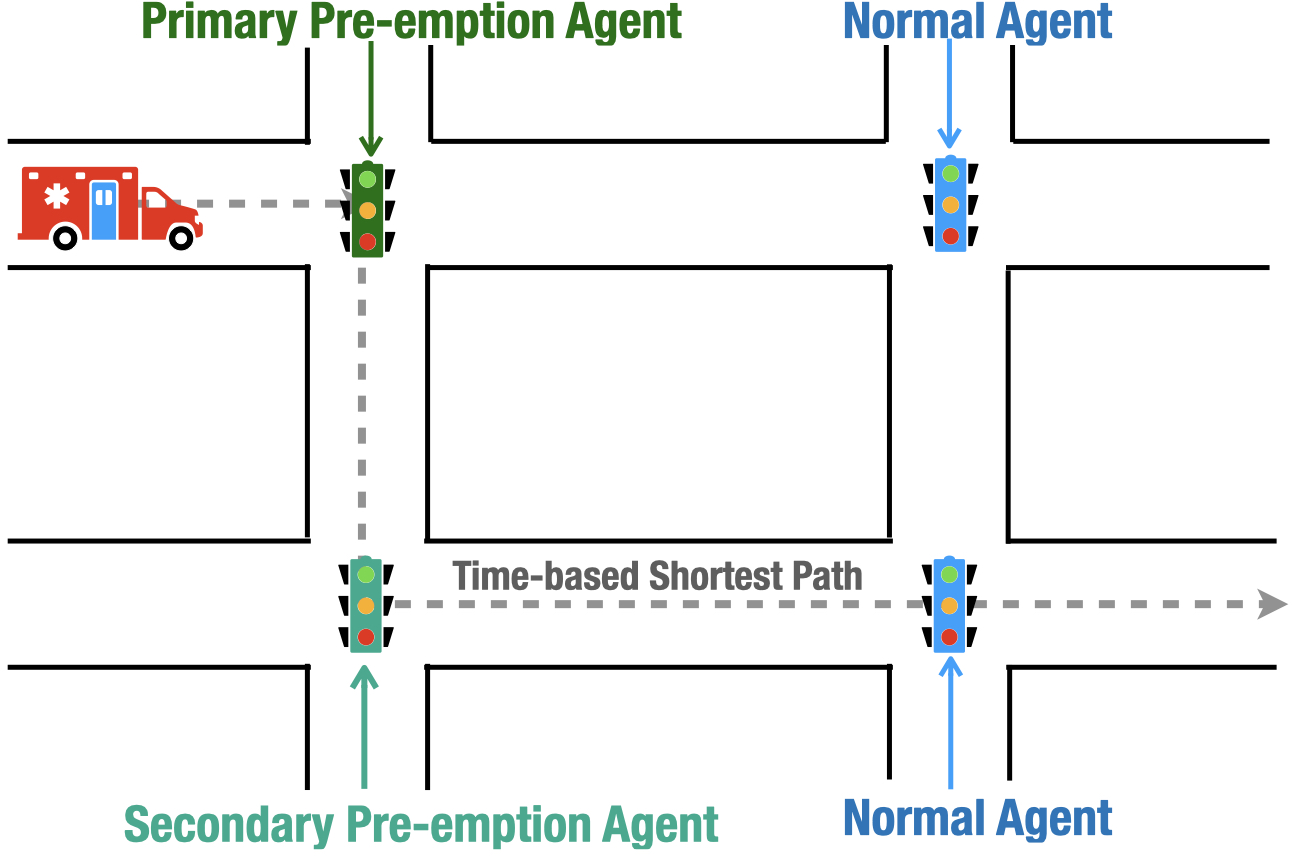

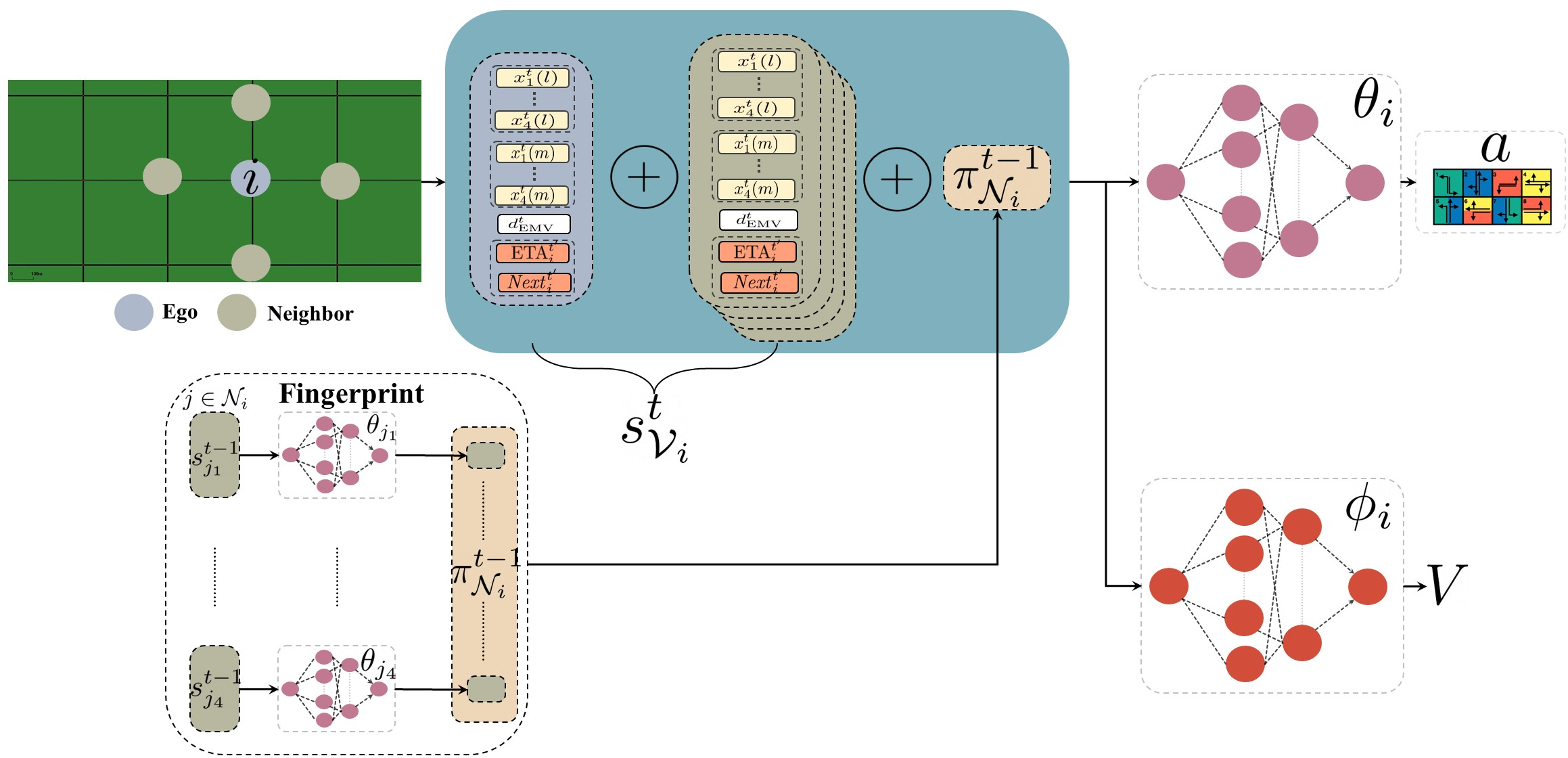

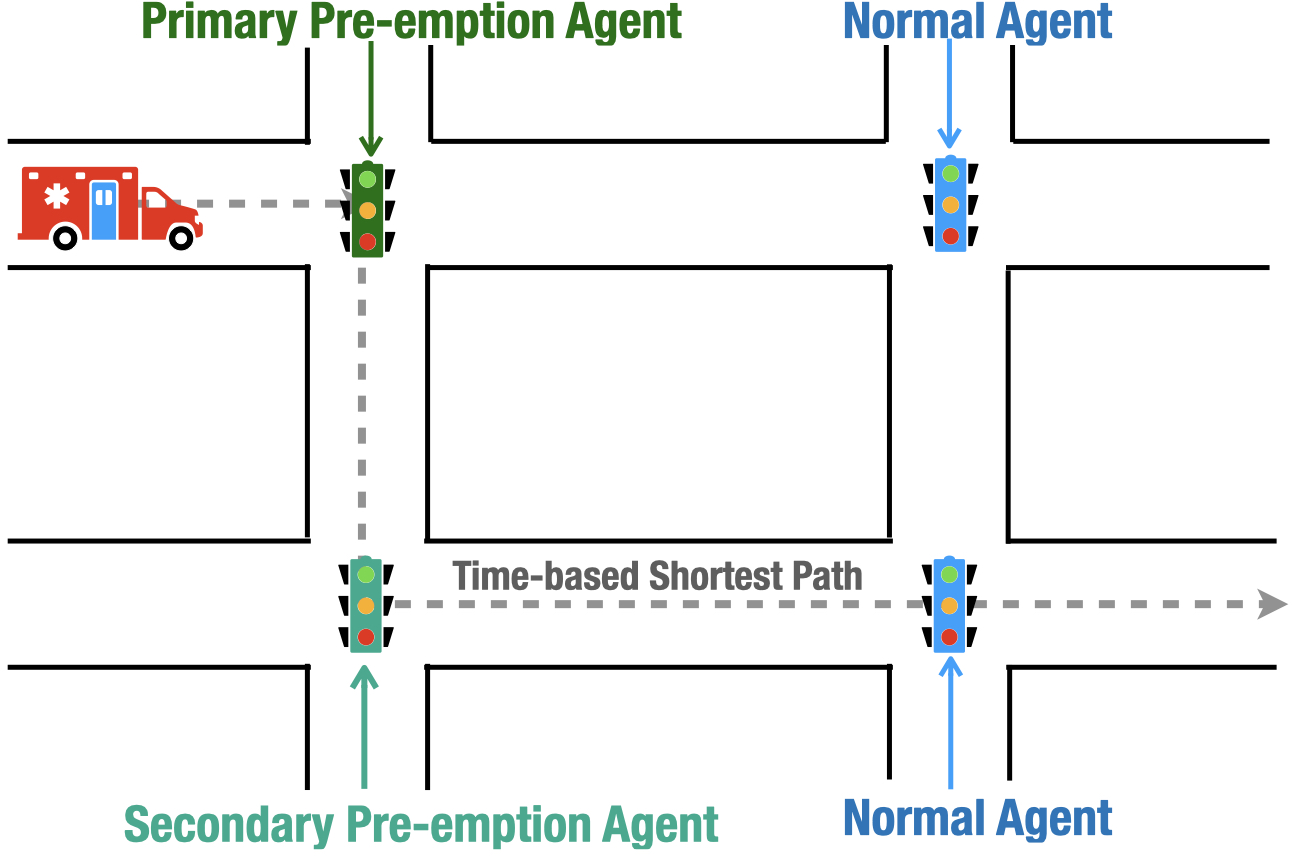

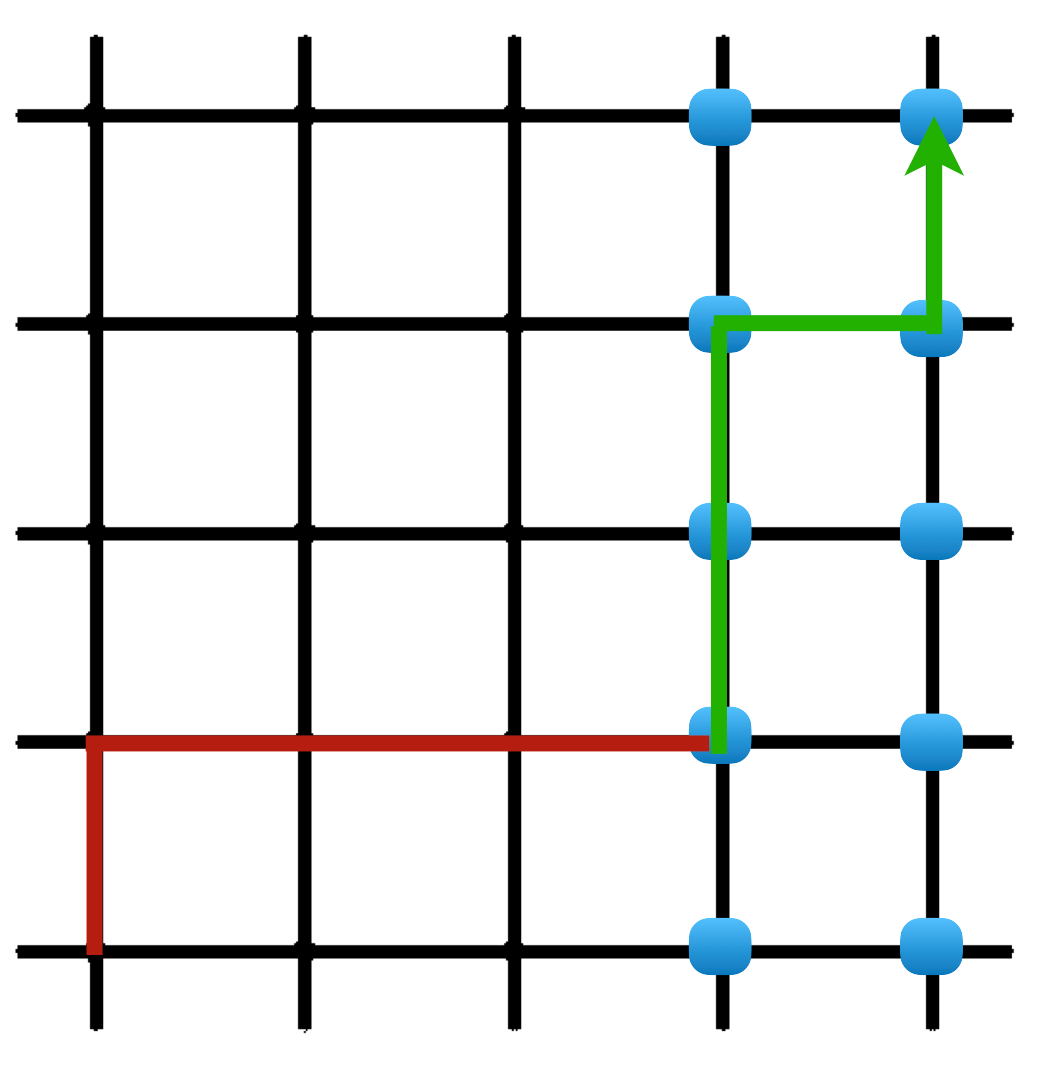

EMVLight builds on a multi-agent advantage actor-critic (MA2C) framework that combines dynamic routing with adaptive traffic signal control. Each intersection is controlled by an RL agent, and agents are divided into classes according to their role in EMV navigation. Primary and secondary pre-emption agents clear the way for the EMV by adapting their traffic signals as it approaches. Because routing is coupled with these agents, the EMV's route can be updated in real time as traffic conditions change.

Figure 1: Overview of MA2C framework for EMVLight’s navigation and traffic signal control.
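To make the agent classes concrete, here is a minimal sketch of how intersections might be labeled relative to the EMV's position. It is an illustration under stated assumptions, not the paper's implementation: the names `AgentRole` and `assign_roles`, the `lookahead` parameter, and the rule that the next intersection is "primary" while the following few are "secondary" are all hypothetical choices made for this example.

```python
from enum import Enum


class AgentRole(Enum):
    PRIMARY = "primary pre-emption"
    SECONDARY = "secondary pre-emption"
    NORMAL = "normal"


def assign_roles(intersections, emv_route, emv_index, lookahead=2):
    """Label each intersection by its role relative to the EMV.

    intersections: iterable of all intersection ids in the network.
    emv_route: ordered list of intersections on the EMV's current route.
    emv_index: position in emv_route of the intersection the EMV reaches next.
    lookahead: assumed number of downstream intersections to pre-clear.
    """
    # Every intersection starts as a normal agent.
    roles = {node: AgentRole.NORMAL for node in intersections}
    if emv_index < len(emv_route):
        # The intersection the EMV is about to enter (assumed rule).
        roles[emv_route[emv_index]] = AgentRole.PRIMARY
        # The next few intersections along the route (assumed rule).
        for node in emv_route[emv_index + 1 : emv_index + 1 + lookahead]:
            roles[node] = AgentRole.SECONDARY
    return roles


if __name__ == "__main__":
    roles = assign_roles(["A", "B", "C", "D", "E"], ["A", "B", "C", "D"], emv_index=0)
    for node, role in roles.items():
        print(node, role.value)
```

Re-running `assign_roles` whenever the EMV advances or its route changes keeps the labels in step with the dynamic route.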

The agents utilize local observations, including EMV distance, estimated time of arrival (ETA), and upcoming link choices, alongside spatial discount factors to adjust rewards based on traffic interactions. This spatially aware reward system encourages cooperative signal phasing, reducing congestion and enhancing overall travel efficiency.
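The spatial discounting idea can be sketched as follows. This assumes the generic form commonly used with MA2C, in which an agent's reward is the sum of all agents' local rewards weighted by `alpha ** d`, where `d` is the hop distance between intersections. EMVLight's exact reward terms may differ. The function names, the `alpha` and `max_hops` parameters, and the toy network are hypothetical.

```python
from collections import deque


def hop_distances(adjacency, source):
    """Breadth-first search: hop count from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nbr in adjacency[node]:
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    return dist


def spatially_discounted_reward(adjacency, local_rewards, agent, alpha=0.9, max_hops=None):
    """Sum local rewards weighted by alpha ** hop_distance from `agent`.

    alpha = 0 gives a purely selfish agent; alpha = 1 weights all
    reachable intersections equally (assumed interpretation).
    """
    total = 0.0
    for node, d in hop_distances(adjacency, agent).items():
        if max_hops is None or d <= max_hops:
            total += (alpha ** d) * local_rewards[node]
    return total


if __name__ == "__main__":
    # Toy corridor A - B - C with negative rewards (e.g. queue penalties).
    adjacency = {"A": ["B"], "B": ["A", "C"], "C": ["B"]}
    local_rewards = {"A": -1.0, "B": -2.0, "C": -4.0}
    print(spatially_discounted_reward(adjacency, local_rewards, "A", alpha=0.5))
```

A smaller `alpha` makes each agent focus on its own intersection, while a larger value pushes it to account for congestion it causes downstream.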

Experimental Setup

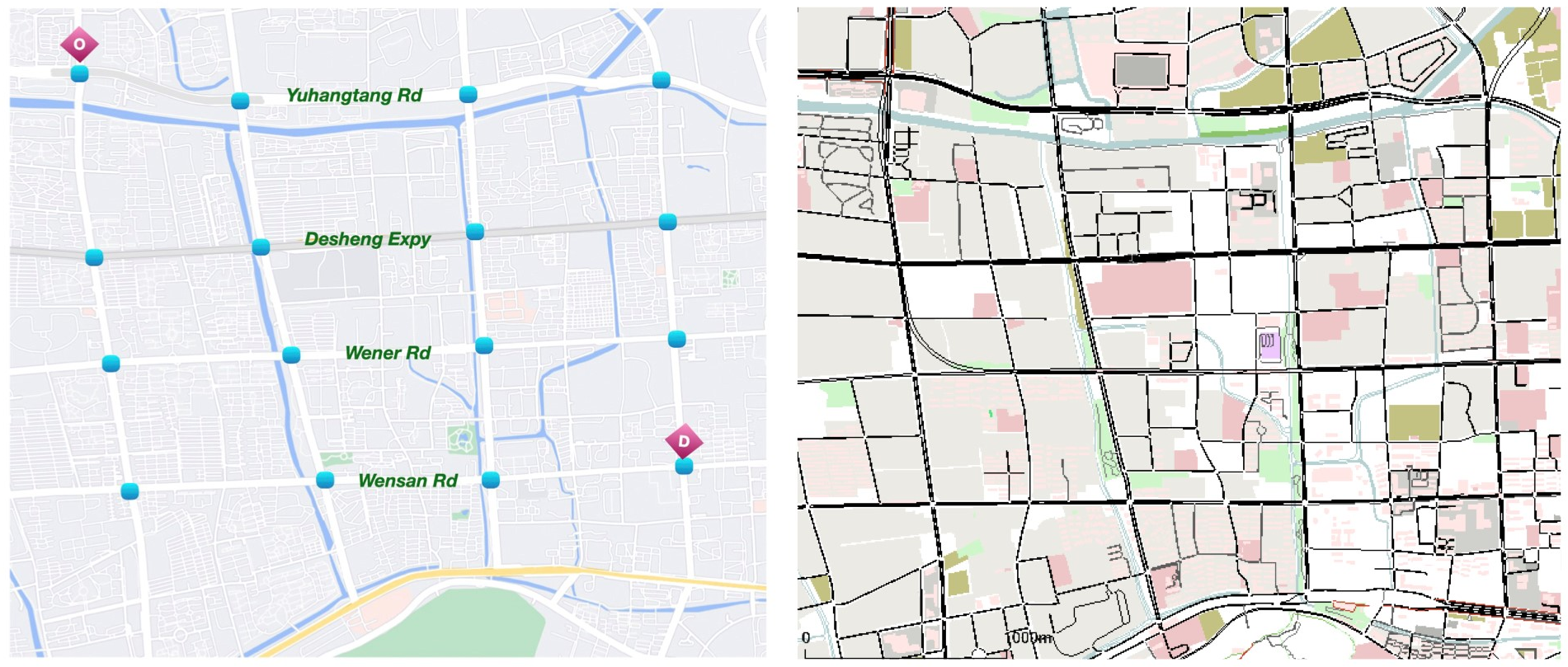

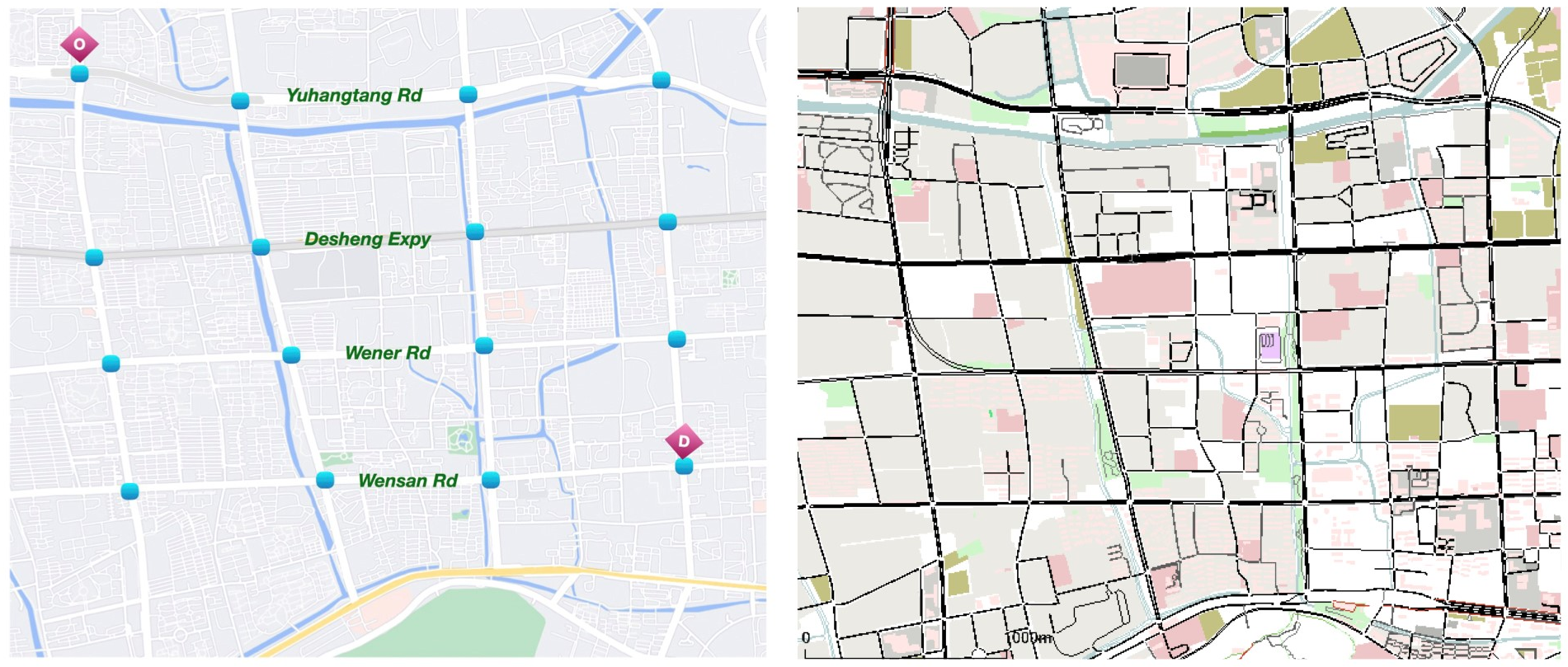

Simulations are conducted in SUMO on synthetic and real-world maps (e.g., Manhattan and Hangzhou). Several configurations compare EMVLight against traditional and reinforcement learning-based benchmarks, with EMVLight achieving shorter EMV and average travel times.

Figure 2: EMV travel time and average travel time on Gudang4×4, showcasing EMVLight’s advantage.

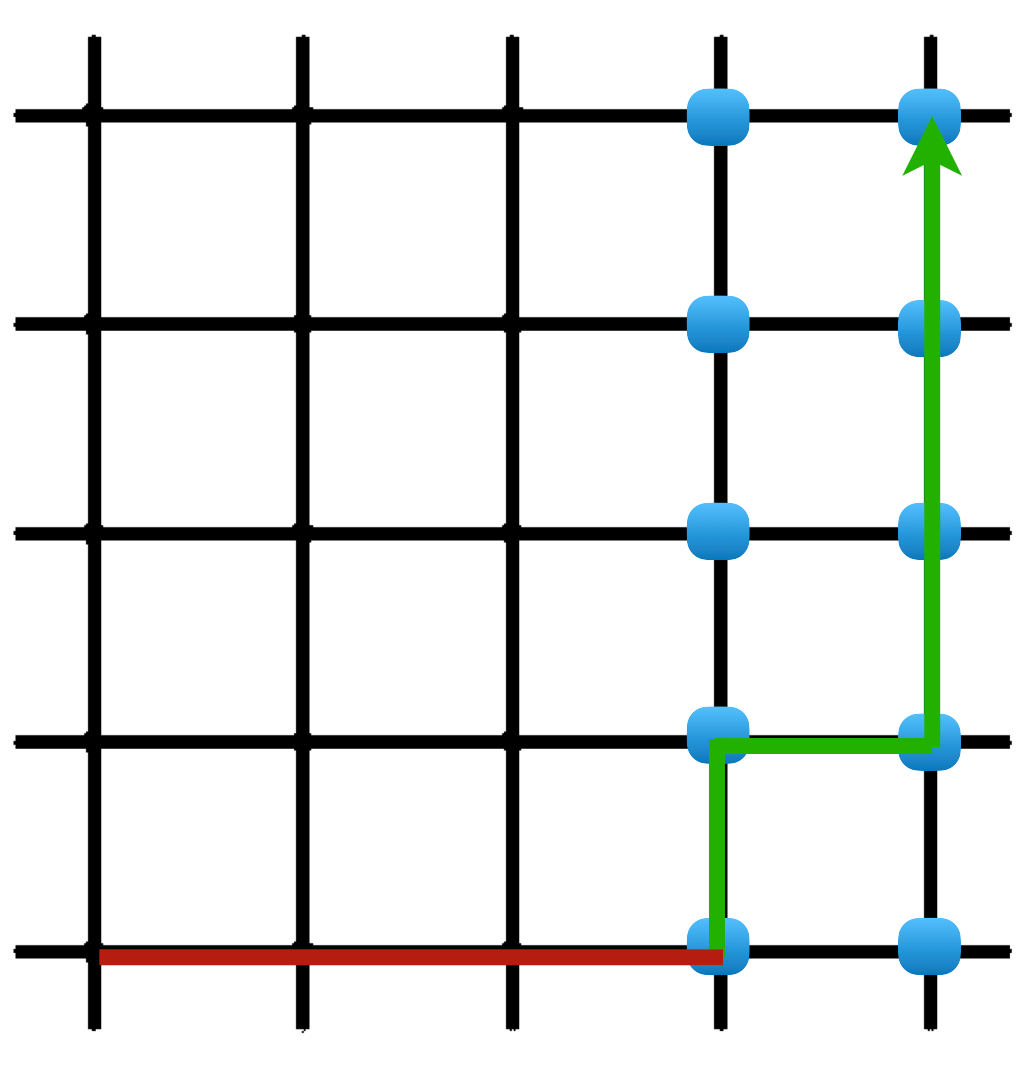

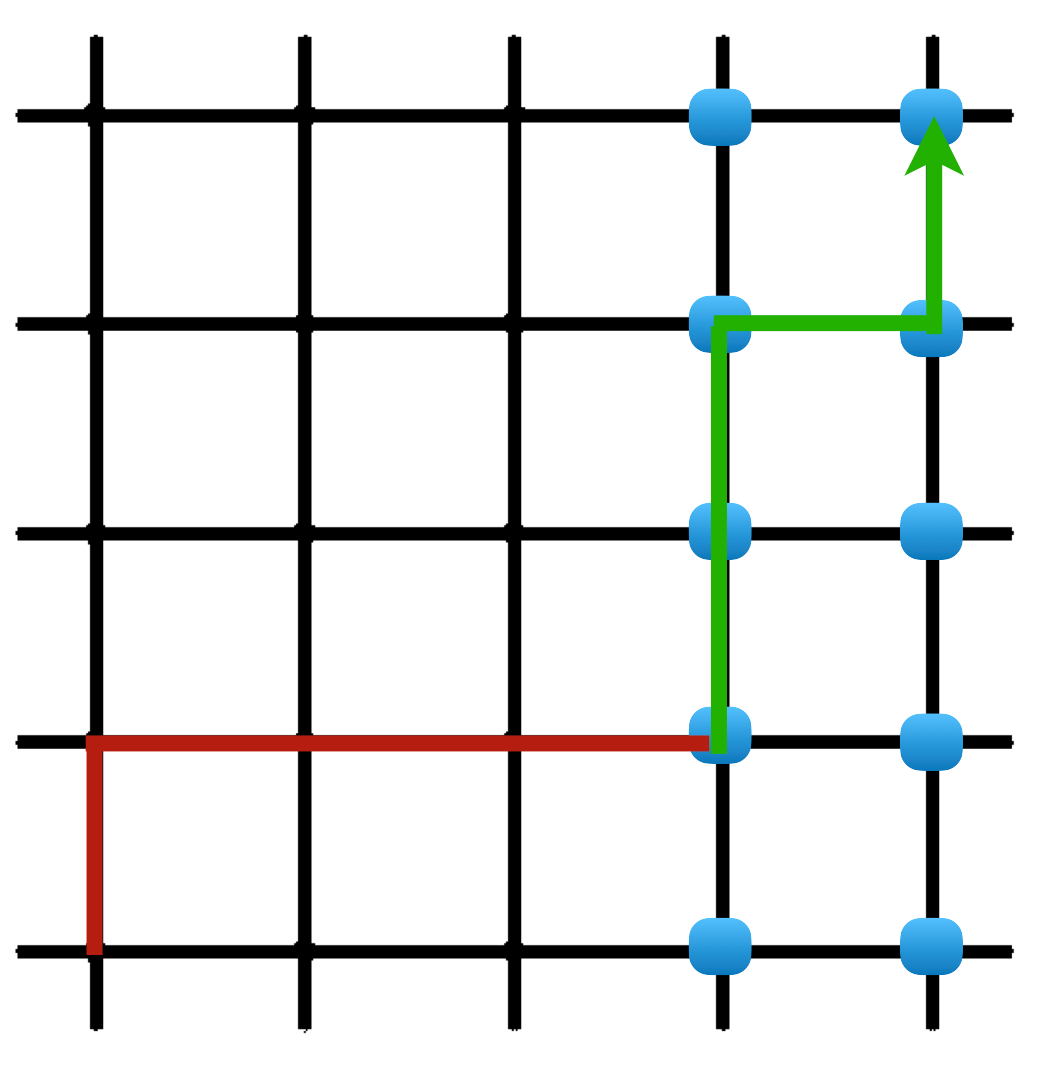

Figure 3: Types of agents through the EMV’s passage to the destination.

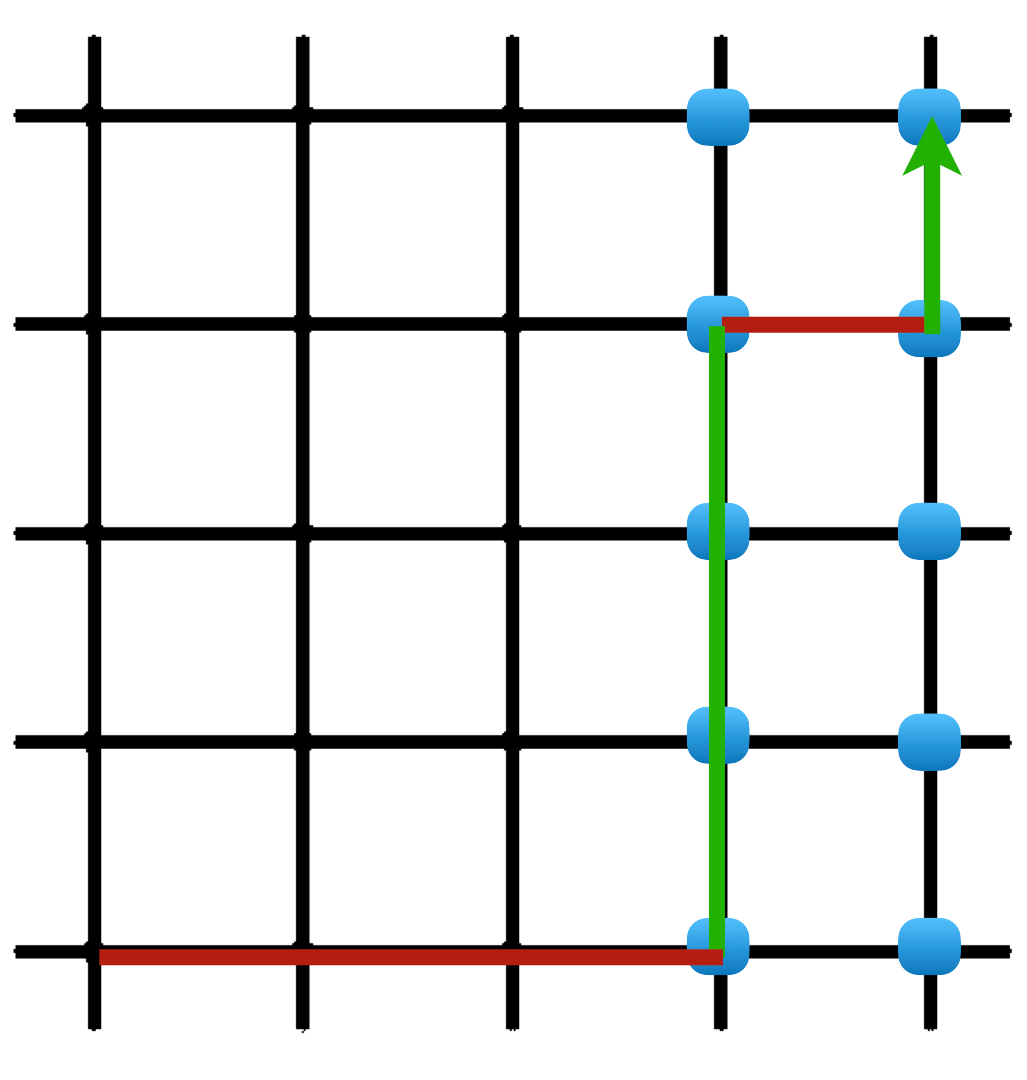

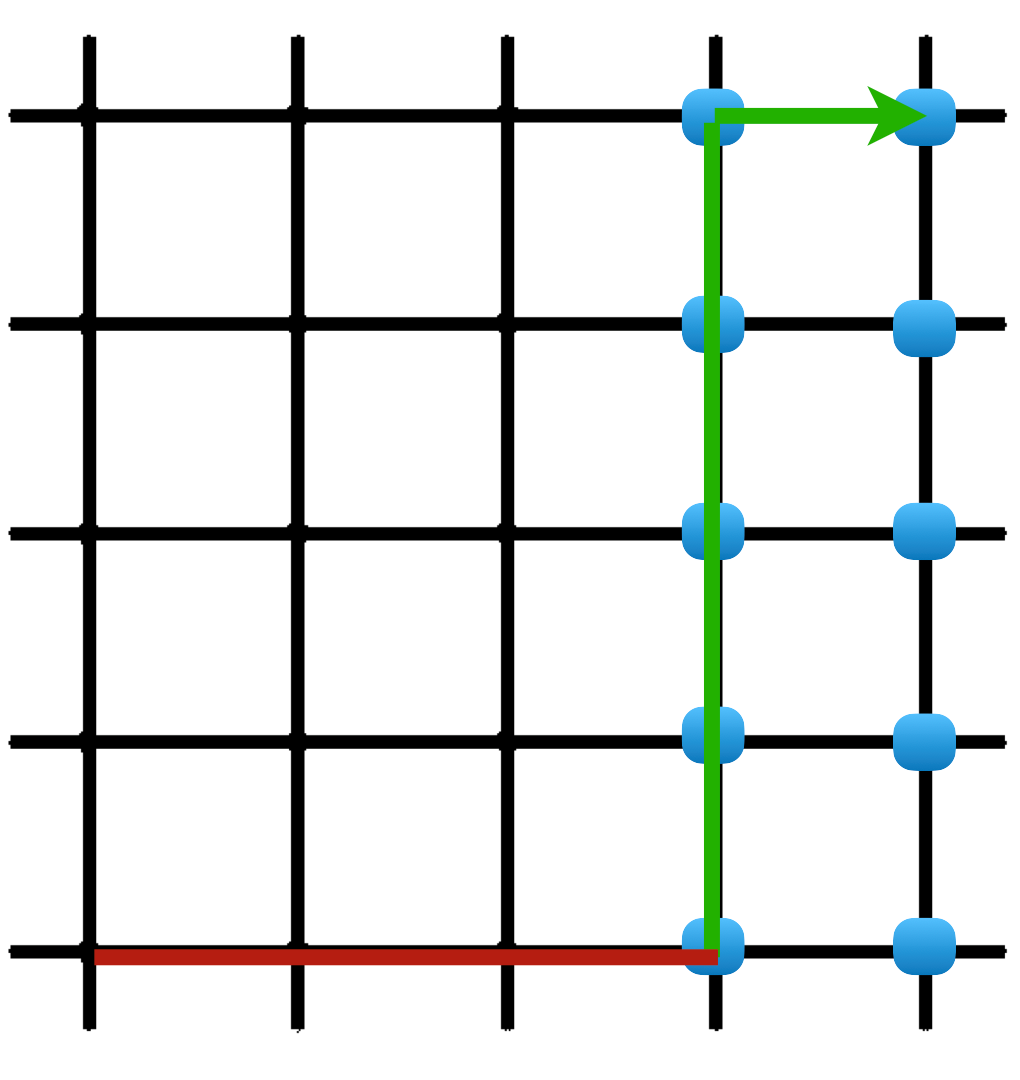

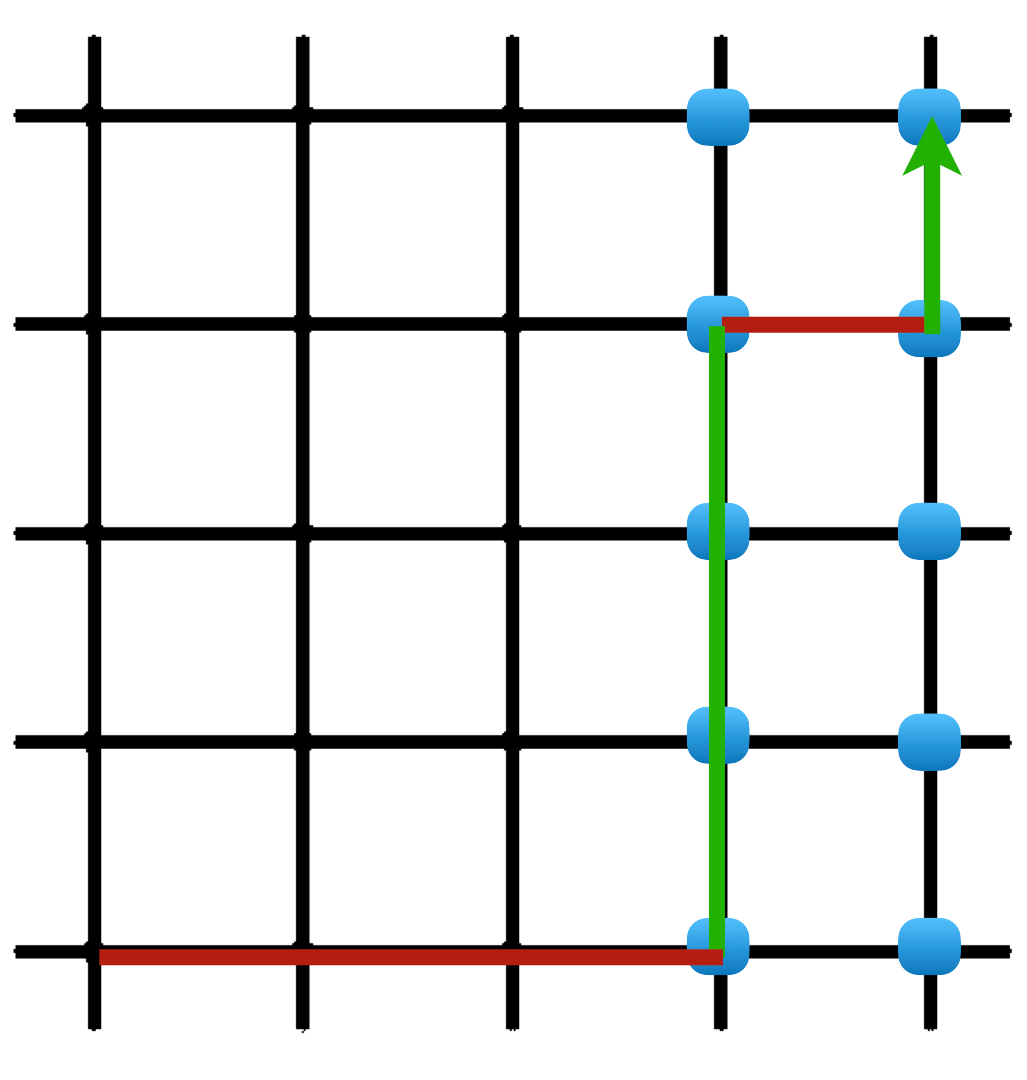

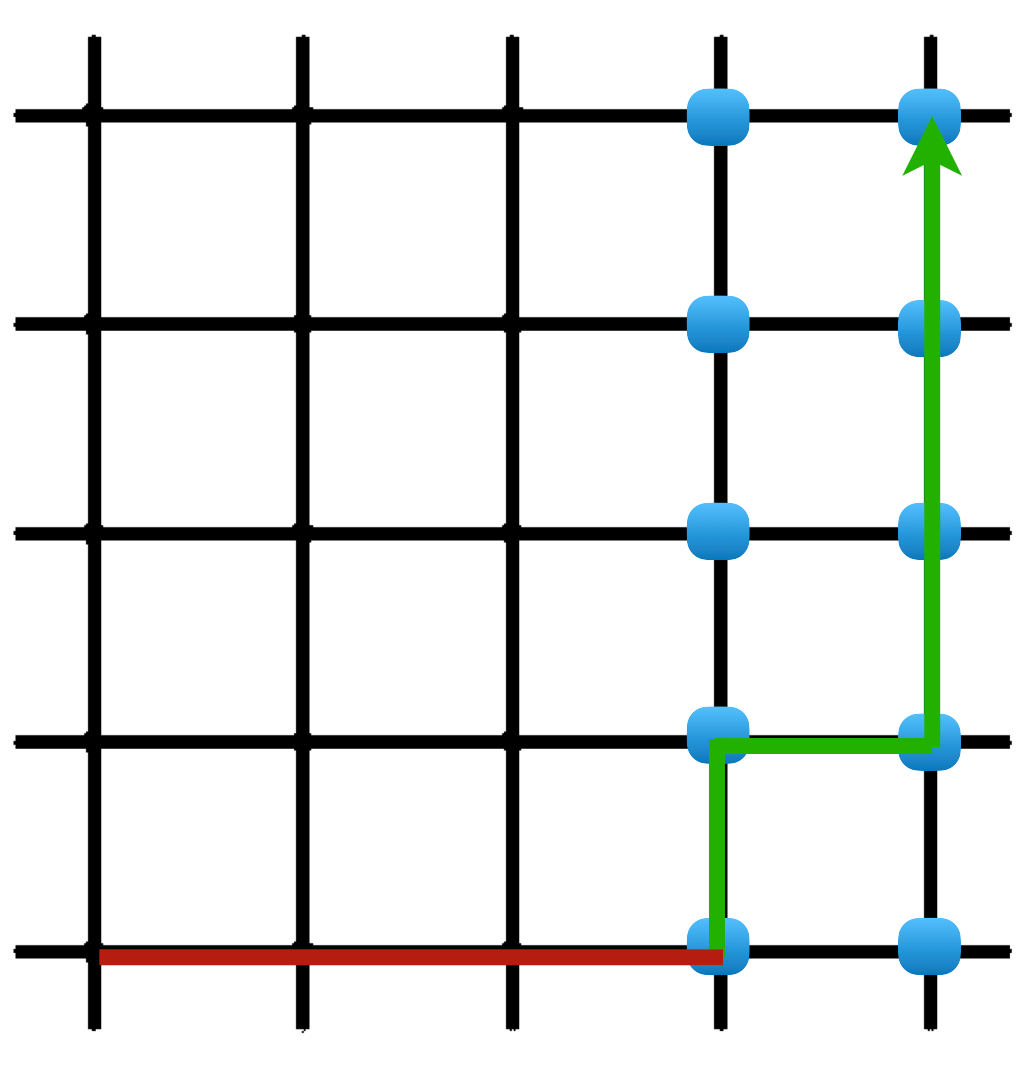

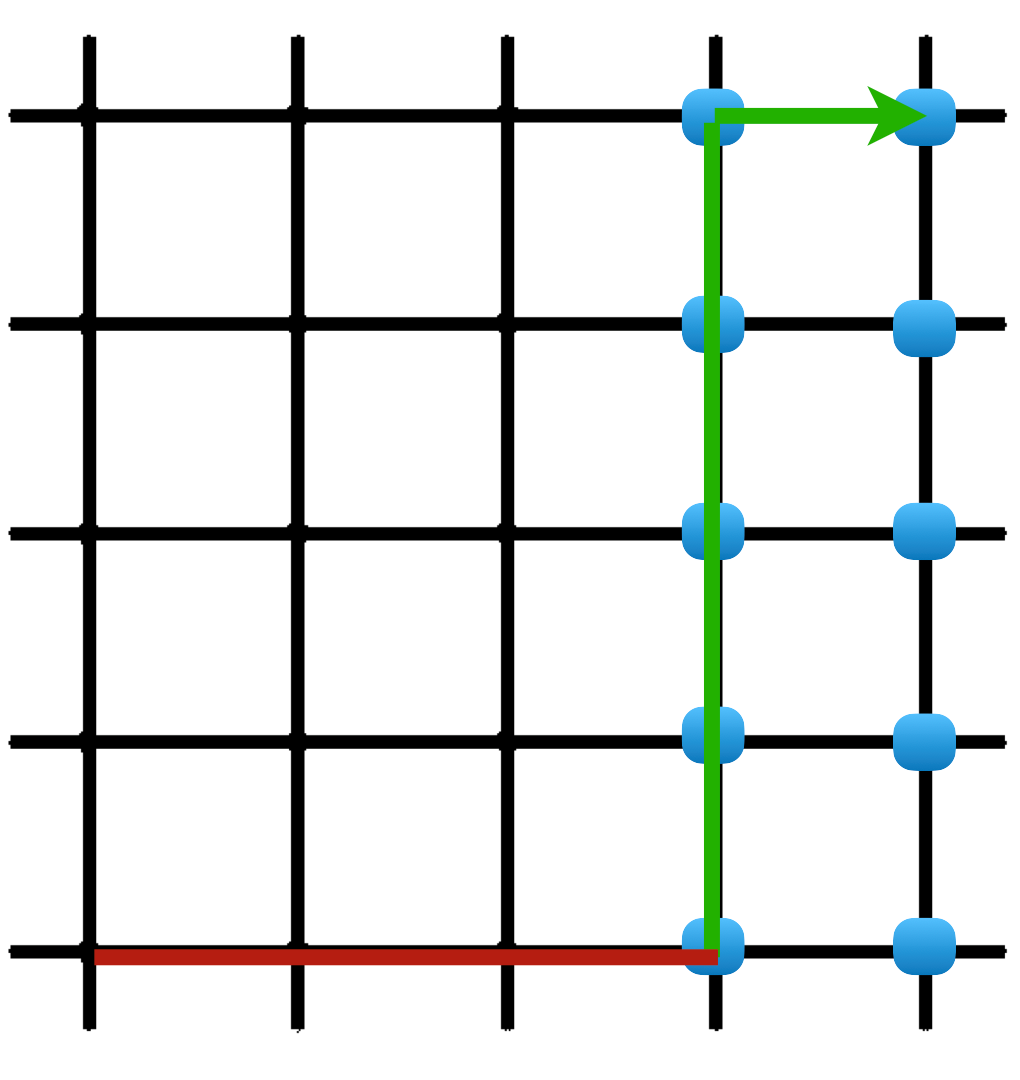

Routing choices that exploit emergency capacity yielded substantial reductions in both EMV and average vehicle travel times. EMVLight uses spare roadway capacity to form emergency lanes and steers EMVs onto routes where their passage has minimal impact on congestion.

Figure 4: EMV’s routing choice on Emergency-capacitated Synthetic Grid5×5, highlighting the utilization of emergency capacity.
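As a rough illustration of capacity-aware routing, the sketch below computes an estimated time of arrival (ETA) from every intersection to the destination with Dijkstra's algorithm on reversed links. It is not the paper's routing procedure. The link-time rule (full speed when the vehicle count is within the emergency capacity, a slower speed otherwise), the speed values, and all names are assumptions made for this example.

```python
import heapq


def emv_link_time(length_m, vehicles, emergency_capacity,
                  free_speed=12.0, congested_speed=4.0):
    """Assumed EMV traversal time (s): an emergency lane can form only
    while the number of vehicles on the link is within its capacity."""
    speed = free_speed if vehicles <= emergency_capacity else congested_speed
    return length_m / speed


def eta_to_destination(links, destination):
    """Dijkstra on reversed links.

    links: dict mapping (u, v) -> EMV traversal time in seconds for u -> v.
    Returns (eta, next_hop): ETA per intersection and the next
    intersection to head for on the fastest route.
    """
    incoming = {}
    for (u, v), t in links.items():
        incoming.setdefault(v, []).append((u, t))
    eta = {destination: 0.0}
    next_hop = {}
    heap = [(0.0, destination)]
    while heap:
        t_v, v = heapq.heappop(heap)
        if t_v > eta.get(v, float("inf")):
            continue  # stale heap entry
        for u, t in incoming.get(v, []):
            cand = t_v + t
            if cand < eta.get(u, float("inf")):
                eta[u] = cand
                next_hop[u] = v
                heapq.heappush(heap, (cand, u))
    return eta, next_hop


if __name__ == "__main__":
    links = {
        ("A", "B"): emv_link_time(120, vehicles=2, emergency_capacity=5),
        ("B", "D"): emv_link_time(120, vehicles=9, emergency_capacity=5),
        ("A", "C"): emv_link_time(240, vehicles=1, emergency_capacity=5),
        ("C", "D"): emv_link_time(120, vehicles=0, emergency_capacity=5),
    }
    eta, next_hop = eta_to_destination(links, "D")
    print(eta["A"], next_hop["A"])
```

In this toy network the longer path through `C` is preferred because the link from `B` to `D` is over its assumed emergency capacity. Recomputing the ETAs as vehicle counts change is one simple way to obtain a dynamic route.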

Results and Discussions

Simulations indicate a reduction in EMV travel time of up to 42.6% relative to the benchmarks, supporting EMVLight's routing and coordination strategies. Configurations with dynamic routing significantly outperformed static routing methods, underlining the need for real-time adaptability.

Ablation studies further supported the design choices in EMVLight, showing that both the reward design and the agent types matter for system performance. The results for the spatial discount factor and the policy network design indicate that the multi-agent framework remains robust under the complexity of urban traffic in emergency situations.

Conclusion

EMVLight shows the benefits of integrating decentralized reinforcement learning into intelligent traffic systems for EMV navigation. By addressing the coupling between routing and signal pre-emption, it offers an effective strategy for optimizing urban traffic flow under emergency conditions. These insights can inform real-world traffic management and suggest future research directions for multi-agent reinforcement learning.

The scalability and efficiency demonstrated in simulation suggest that EMVLight could benefit not only emergency vehicle management but also broader intelligent transportation systems, with real-time adaptability as the key ingredient.