- The paper presents DepthGAN, a framework that combines a dual-path generator and a switchable discriminator with depth priors to improve 3D-aware indoor scene synthesis.

- The method encodes the viewpoint with sinusoidal embeddings and uses separate latent spaces for depth and appearance, which lets it model geometry independently of appearance.

- The approach substantially improves synthesis quality, reducing FID from 44.232 to 4.797 on the LSUN bedroom dataset, and improves 3D spatial consistency.

3D-Aware Indoor Scene Synthesis with Depth Priors

The paper "3D-Aware Indoor Scene Synthesis with Depth Priors" (2202.08553) addresses the challenge of generating realistic 3D indoor scenes with GANs by introducing depth as a 3D prior into the synthesis process. It argues that existing methods, which learn only from 2D images, model the complex layouts and geometry of indoor scenes poorly.

DepthGAN Framework

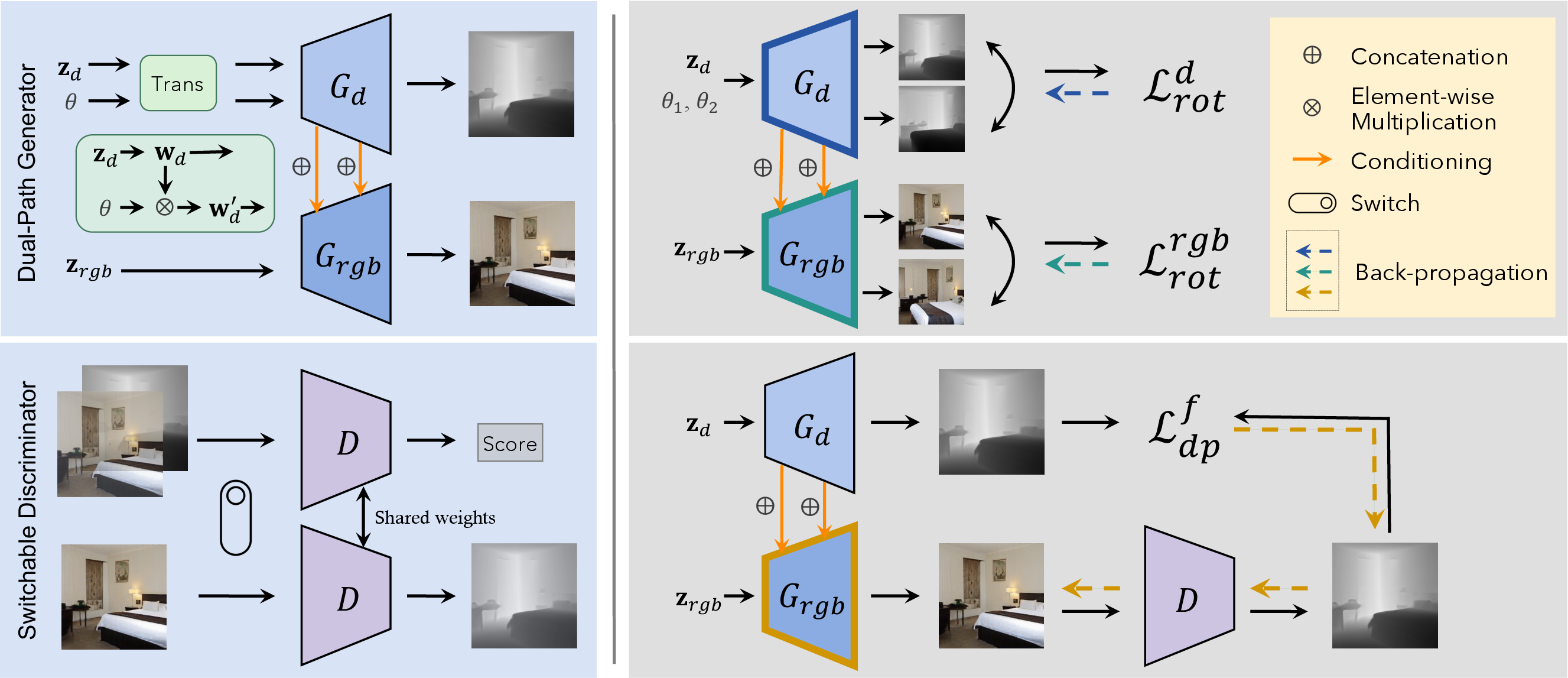

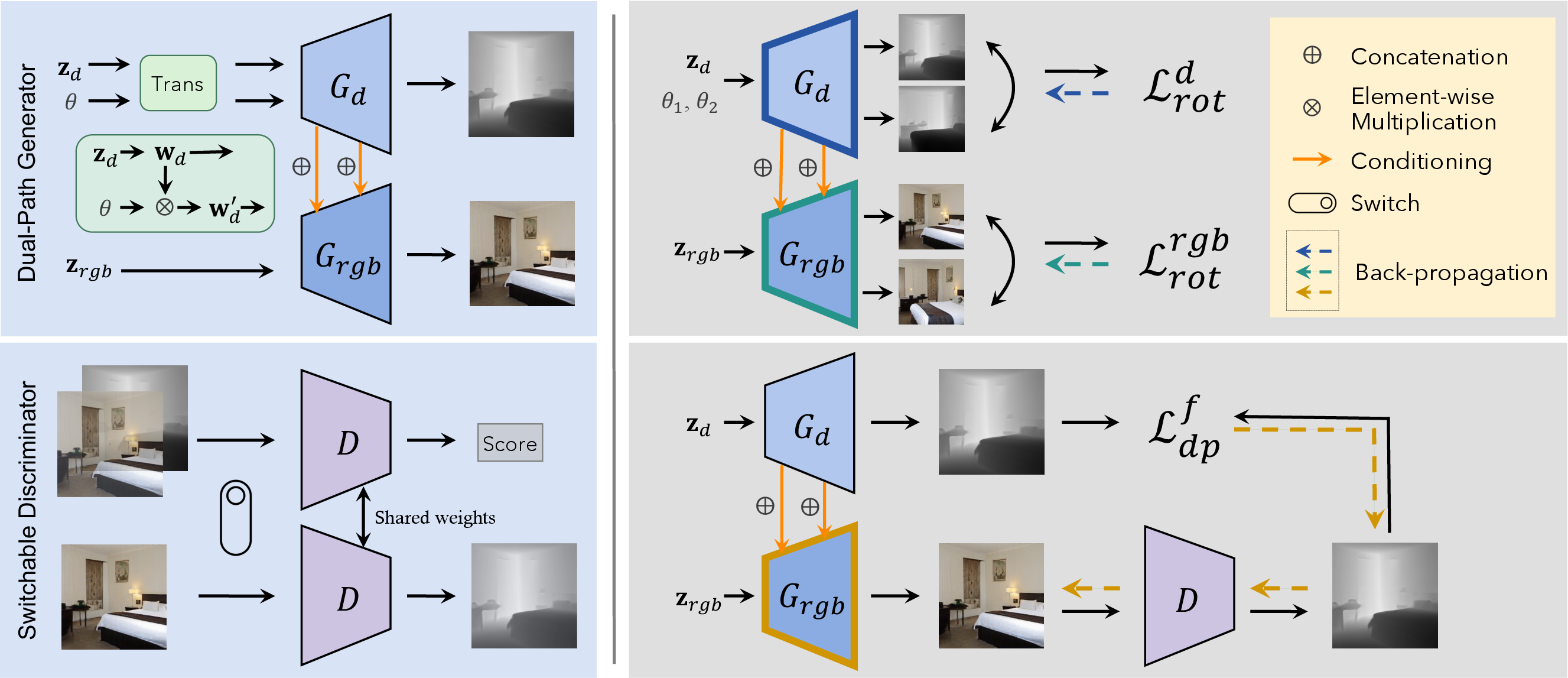

DepthGAN consists of a dual-path generator and a switchable discriminator. The dual-path generator has separate pathways for depth and appearance: it generates a depth map and then synthesizes appearance conditioned on that depth, so geometry and appearance are represented independently. The switchable discriminator uses both the RGB and depth information of synthesized images: it distinguishes real from generated RGBD data and also predicts depth maps from RGB inputs.

Figure 1: The framework of DepthGAN, consisting of a dual-path generator and a switchable discriminator.

Key Components

Dual-Path Generator

The dual-path generator consists of a depth generator and an appearance renderer, each driven by its own latent space. The depth generator is conditioned on the viewing angle, which is encoded with a sinusoidal embedding so that the viewpoint is supplied to the network precisely. The depth generator also passes its intermediate features to the appearance renderer, which ties the synthesized appearance to the generated geometry.
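The conditioning described above can be sketched as follows. This is a minimal illustration under stated assumptions, not DepthGAN's implementation: the power-of-two frequency schedule, `num_freqs`, concatenating the embedding with the depth latent, and the `depth_path` / `appearance_path` callables are hypothetical stand-ins for the real networks. The sketch shows why a sinusoidal code suits angles (its values are bounded, and angles that differ by a full turn map to the same code) and how depth features flow into the appearance path.

```python
import math
from typing import Callable, List, Sequence, Tuple

def sinusoidal_angle_embedding(angle: float, num_freqs: int = 4) -> List[float]:
    """Encode an angle in radians as [sin(2^k a), cos(2^k a)] for k = 0..num_freqs-1.

    The power-of-two frequency schedule and num_freqs are illustrative assumptions.
    """
    features: List[float] = []
    for k in range(num_freqs):
        freq = 2.0 ** k
        features.append(math.sin(freq * angle))
        features.append(math.cos(freq * angle))
    return features

# Hypothetical stand-ins for the two network paths.
# depth path: conditioning vector -> (depth map, intermediate features)
DepthPath = Callable[[List[float]], Tuple[List[float], List[float]]]
# appearance path: (appearance latent, depth features) -> RGB output
AppearancePath = Callable[[List[float], List[float]], List[float]]

def dual_path_generate(
    z_depth: Sequence[float],
    z_app: Sequence[float],
    angle: float,
    depth_path: DepthPath,
    appearance_path: AppearancePath,
) -> Tuple[List[float], List[float]]:
    """Data flow only: the viewpoint conditions depth, and depth features condition appearance."""
    # Concatenating the depth latent with the angle embedding is an assumption.
    cond = list(z_depth) + sinusoidal_angle_embedding(angle)
    depth_map, depth_features = depth_path(cond)
    # The appearance path has its own latent and consumes the depth features.
    rgb = appearance_path(list(z_app), depth_features)
    return rgb, depth_map
```

With toy callables such as `lambda c: ([sum(c)], c[:2])` for the depth path and `lambda z, f: [a + b for a, b in zip(z, f)]` for the appearance path, `dual_path_generate` returns the RGB and depth outputs in that order. In DepthGAN itself, both paths are convolutional networks built on StyleGAN2.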

Switchable Discriminator

Unlike conventional discriminators, which operate only on RGB images, the switchable discriminator evaluates RGBD images, which pushes it to learn spatial arrangements and to predict 3D geometry. This dual role, real/fake discrimination plus depth prediction, improves synthesis quality and the alignment between geometry and appearance.
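A rough sketch of the discriminator's two modes follows. Only the split itself comes from the description of DepthGAN (real/fake scoring on RGBD input, depth prediction from RGB input); the shared `backbone`, the `realness_head` and `depth_head` callables, and zero-filling the missing depth channel in RGB mode are assumptions chosen to keep the example small and self-contained.

```python
from typing import Callable, List, Optional, Sequence, Tuple, Union

Channel = List[List[float]]  # one H x W image channel

def _zeros_like(channel: Channel) -> Channel:
    """A channel of zeros with the same height and width."""
    return [[0.0 for _ in row] for row in channel]

def switchable_discriminator(
    rgb: Sequence[Channel],
    depth: Optional[Channel],
    backbone: Callable[[List[Channel]], List[float]],
    realness_head: Callable[[List[float]], float],
    depth_head: Callable[[List[float]], List[float]],
) -> Tuple[str, Union[float, List[float]]]:
    """Route one shared backbone to one of two heads, depending on the input.

    - RGBD input (depth given): score real vs. generated.
    - RGB-only input (depth is None): predict depth from RGB.
    Zero-filling the missing depth channel is an assumption that keeps the
    backbone's input at four channels in both modes.
    """
    if len(rgb) != 3:
        raise ValueError("expected exactly three RGB channels")
    if depth is not None:
        features = backbone(list(rgb) + [depth])
        return "realness", realness_head(features)
    features = backbone(list(rgb) + [_zeros_like(rgb[0])])
    return "depth", depth_head(features)
```

Sharing one backbone between both modes is the design choice the sketch highlights: the features that judge realism are the same features asked to recover depth, which is one plausible way the depth task could inform discrimination.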

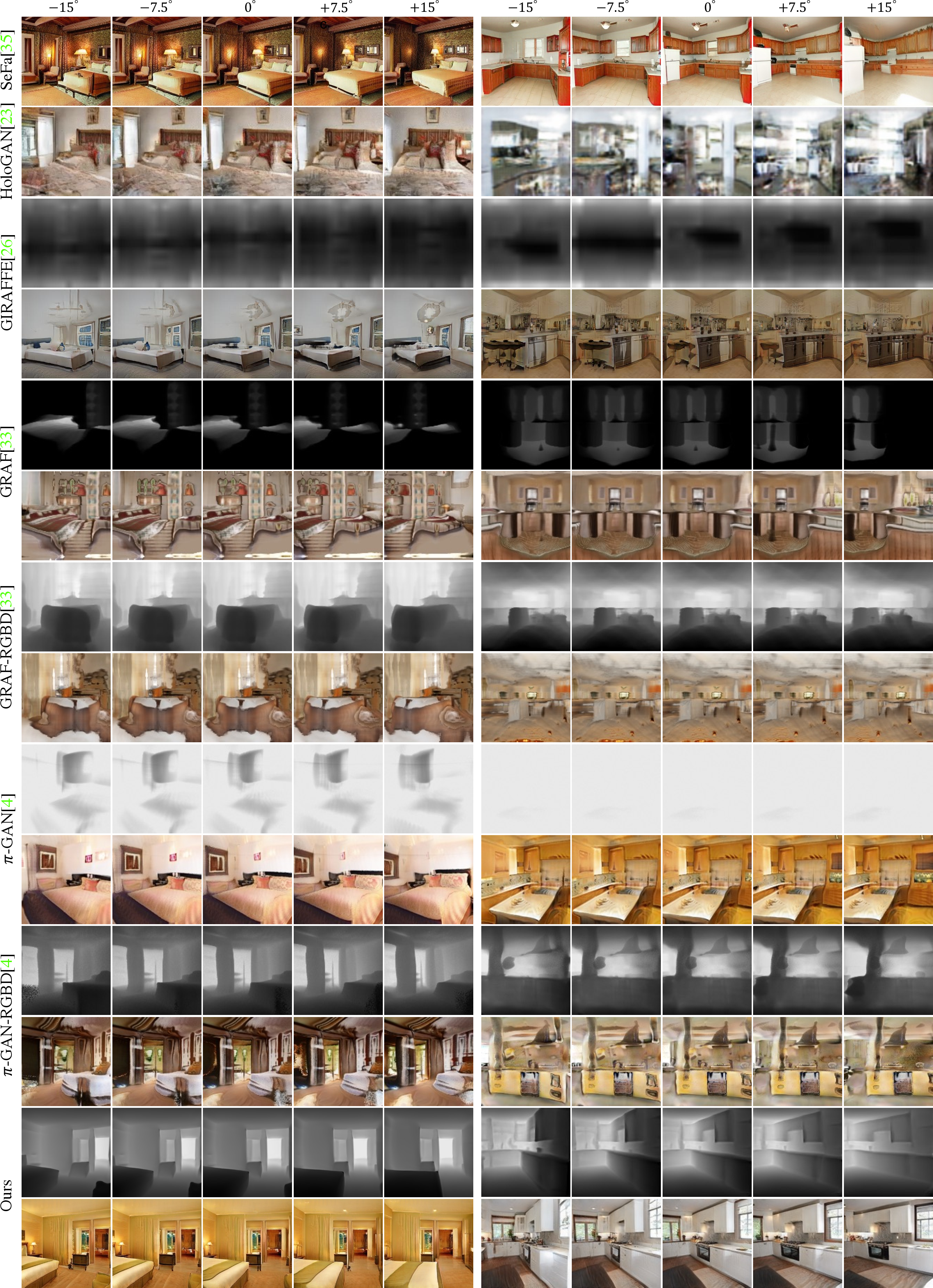

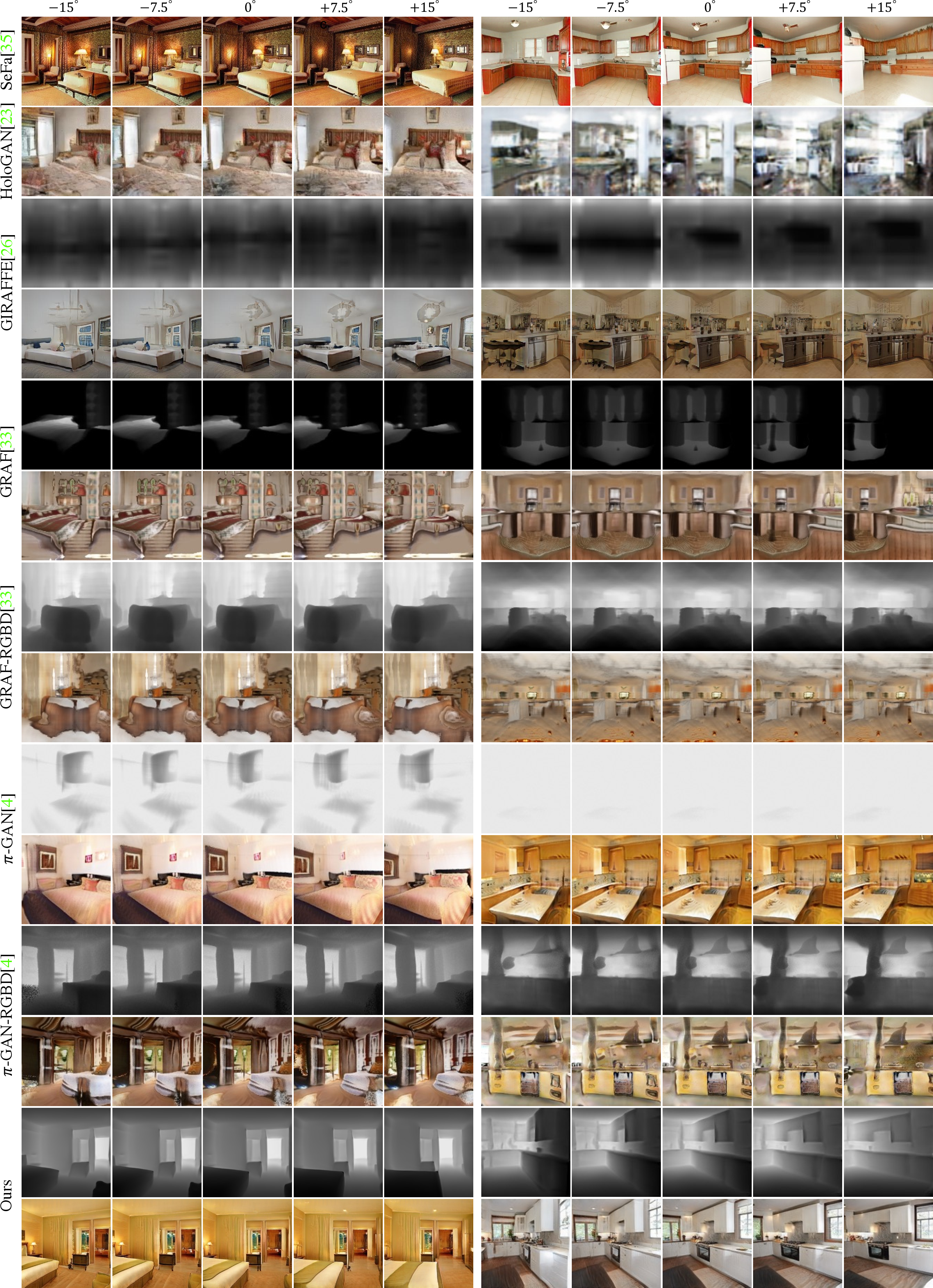

DepthGAN outperforms existing methods in both synthesis quality and 3D spatial consistency. In quantitative comparisons with state-of-the-art models, it reduces FID (lower is better) from 44.232 to 4.797 on the LSUN bedroom dataset. Rotation precision and consistency metrics further indicate its gains in 3D-aware image synthesis.
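For scale, the two reported FID values correspond to the following relative reduction (plain arithmetic on the figures quoted for LSUN bedroom):

$$
\frac{44.232 - 4.797}{44.232} = \frac{39.435}{44.232} \approx 0.892, \qquad \frac{44.232}{4.797} \approx 9.22
$$

That is, FID drops by roughly 89%, to about one ninth of its previous value.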

Figure 2: Qualitative comparisons with existing models on LSUN datasets reveal DepthGAN's superior synthesis fidelity.

Implementation Insights

DepthGAN's generator builds on the StyleGAN2 architecture, extended for dual-path synthesis. The switchable discriminator augments the standard real/fake objective with depth prediction, formulated as a classification problem over discretized depth values. The framework is optimized with adversarial, rotation-consistency, and depth-prediction losses to strengthen the coherence between geometry and appearance.
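Formulating depth prediction as classification means discretizing depth into bins and training the depth head with a cross-entropy loss. The sketch below is a minimal illustration that assumes uniform bins over a fixed range `[d_min, d_max]`, per-pixel averaging, and a simple weighted sum of the three losses; the bin layout and the weights `lambda_rot` and `lambda_depth` are hypothetical, not values from the paper.

```python
import math
from typing import Sequence

def depth_to_bin(depth: float, d_min: float, d_max: float, num_bins: int) -> int:
    """Map a depth value to one of num_bins uniform bins on [d_min, d_max]."""
    t = (depth - d_min) / (d_max - d_min)
    t = min(max(t, 0.0), 1.0)  # clamp depths outside the assumed range
    return min(int(t * num_bins), num_bins - 1)  # depth == d_max goes to the last bin

def cross_entropy(logits: Sequence[float], target: int) -> float:
    """Numerically stable softmax cross-entropy for a single example."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[target]

def depth_classification_loss(
    pixel_logits: Sequence[Sequence[float]],
    depths: Sequence[float],
    d_min: float,
    d_max: float,
) -> float:
    """Mean cross-entropy between per-pixel bin logits and binned target depths."""
    num_bins = len(pixel_logits[0])
    losses = [
        cross_entropy(logits, depth_to_bin(d, d_min, d_max, num_bins))
        for logits, d in zip(pixel_logits, depths)
    ]
    return sum(losses) / len(losses)

def total_loss(adv: float, rot: float, depth: float,
               lambda_rot: float = 1.0, lambda_depth: float = 1.0) -> float:
    """Weighted sum of adversarial, rotation-consistency, and depth-prediction terms."""
    return adv + lambda_rot * rot + lambda_depth * depth
```

Uniform bins are the simplest choice; log-spaced bins, which give more resolution to nearby depths, are a common alternative in depth estimation.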

Implications and Future Directions

Integrating depth priors is a promising direction for indoor scene generation, since it addresses the shortcomings of modeling 3D geometry from 2D datasets alone. Potential future work includes better handling of occlusion and exploring alternative 3D data formats for richer scene representations.

Figure 3: Photo-realistic rendering of indoor scenes demonstrating DepthGAN's capabilities.

Conclusion

DepthGAN shows an effective way to integrate depth priors into GAN-based synthesis, yielding notable improvements in both image fidelity and 3D geometry for indoor scenes. Its combination of a dual-path generator and a switchable discriminator lays the groundwork for 3D-aware image synthesis in complex environments.