- The paper demonstrates the integration of a differentiable physics engine within video representation networks to maintain a physically consistent latent space.

- It employs both supervised and self-supervised training paradigms that leverage ground-truth parameters and reconstruction loss for accurate estimation.

- The model accurately predicts physical parameters and future video frames, advancing explainable AI for dynamic scene analysis.

Learning to Identify Physical Parameters from Video Using Differentiable Physics

This paper presents a novel approach to video representation learning by integrating a differentiable physics engine within an action-conditional video representation network. The method aims to learn physically interpretable latent space representations from video data of dynamic scenes, enabling the extraction of physical parameters such as mass and friction. The framework supports both supervised and self-supervised training methods, employing spatial transformers for image decoding in self-supervised scenarios.

Methodology

Differentiable Physics Engine Integration

At the heart of the proposed method is the integration of a differentiable physics engine, which enables the network to forward the latent physical scene state based on encoded scene parameters. This integration allows the model to maintain a physically consistent latent space where traditional deep learning architectures might not.

Network Architecture

The architecture blends convolutional networks for encoding image data into latent representations with a differentiable physics engine that simulates future states. The network's decoder uses spatial transformers to map the predicted physical states back into image space.

- Encoder: Encodes images into a latent space representing object poses and physical states.

- Decoder: Uses spatial transformations to render objects at predicted poses, allowing for image reconstruction.

- Physics Engine: Implements a linear complementarity problem (LCP) solving method to simulate physical dynamics, facilitating the learning of physical parameters.

Training Paradigms

- Supervised Learning: Utilizes ground-truth physical parameters for training, with an objective to minimize discrepancies between predicted and actual poses.

- Self-supervised Learning: Leverages known object models and uses reconstruction loss between input frames and decoded frames from the latent space to guide parameter learning without explicit physical supervision.

Experimental Setup

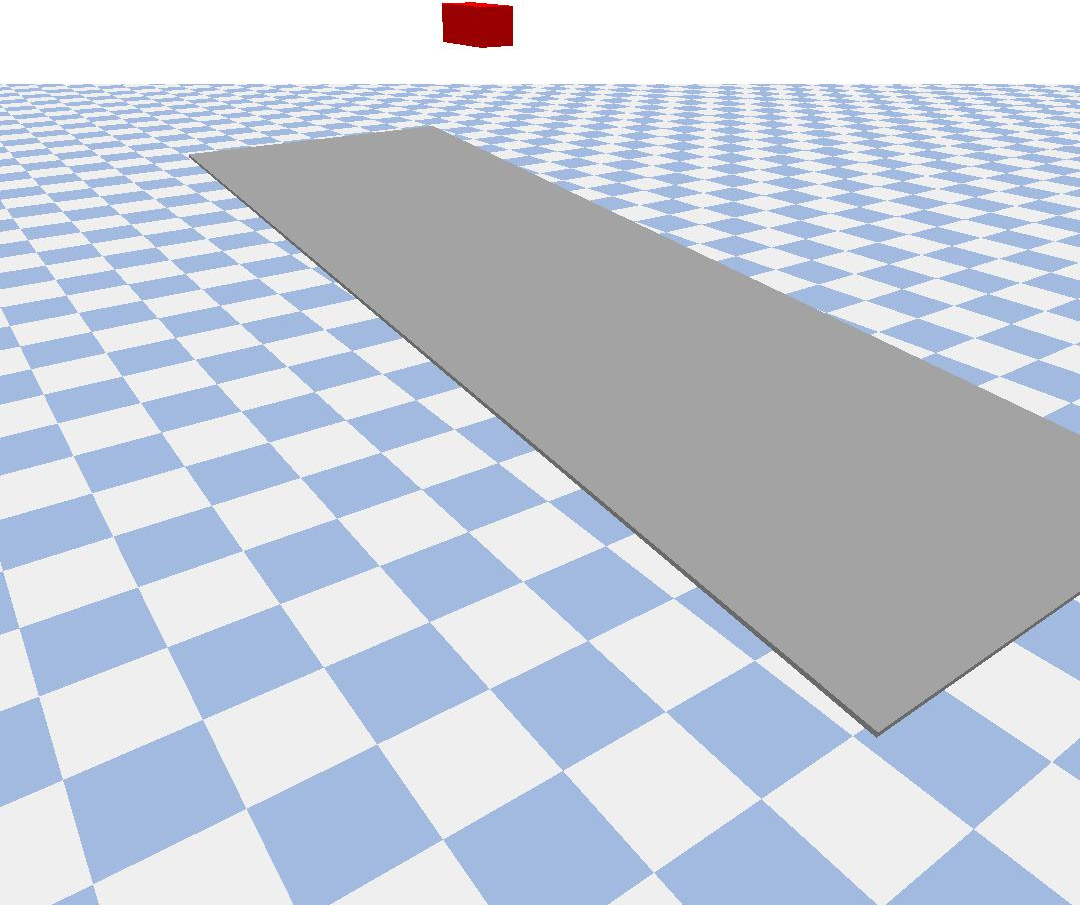

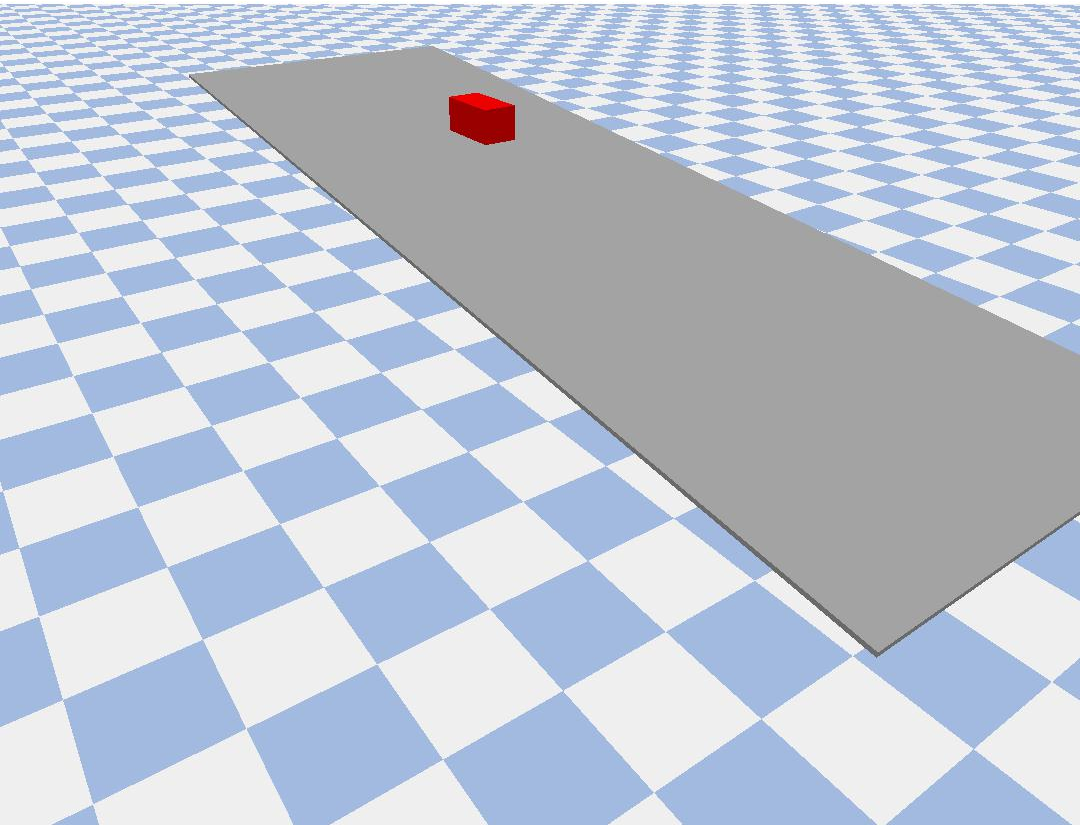

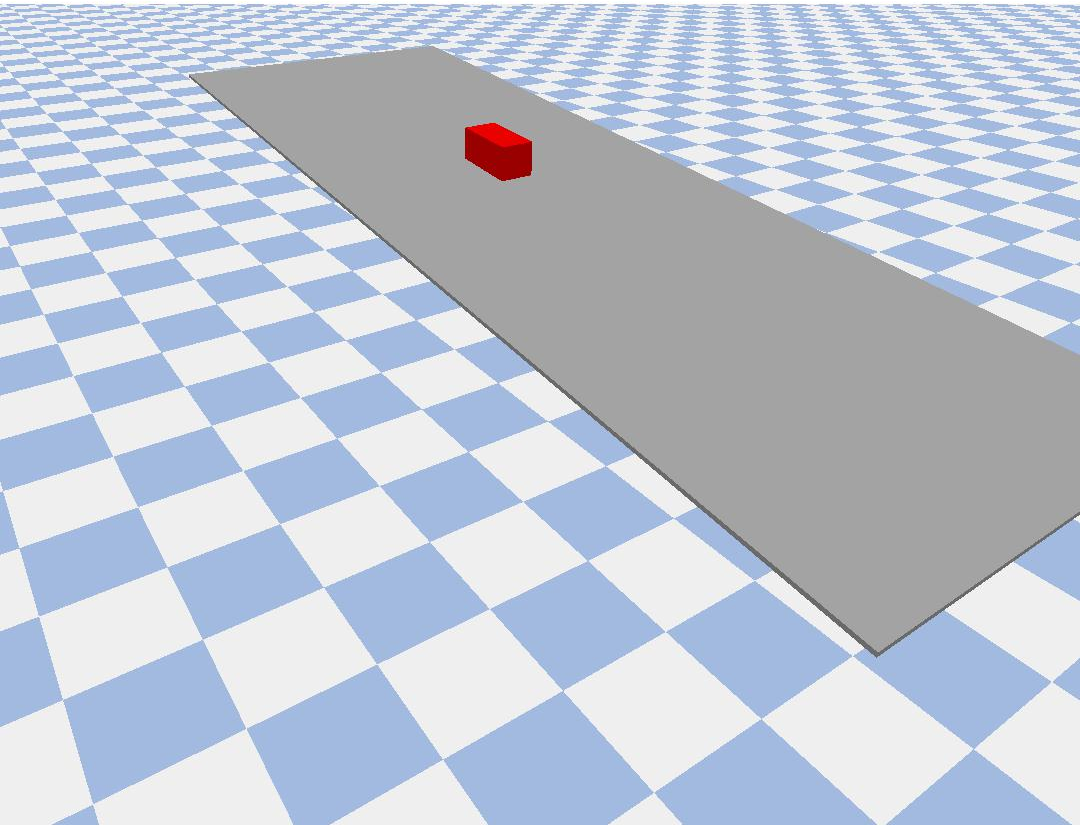

The experiments evaluate scenarios of pushing an object on a plane, collision between two objects, and sliding down an incline. Each scenario tests the model's ability to learn and predict physical parameters such as mass and friction through video sequences.

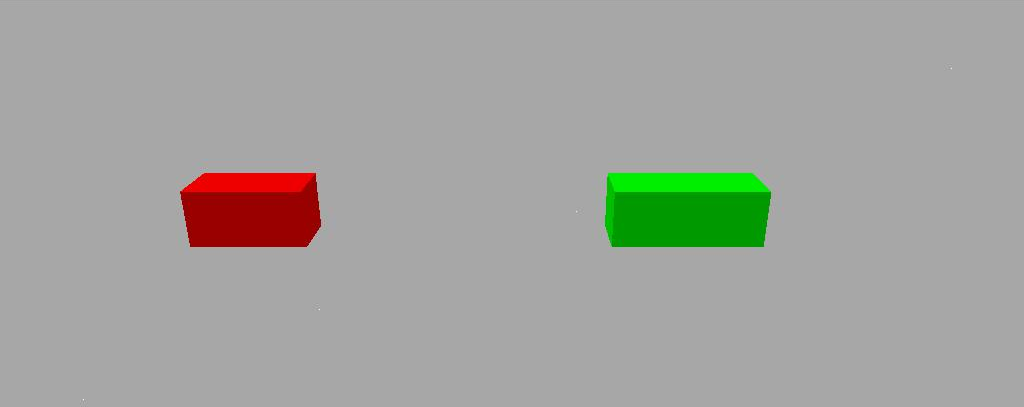

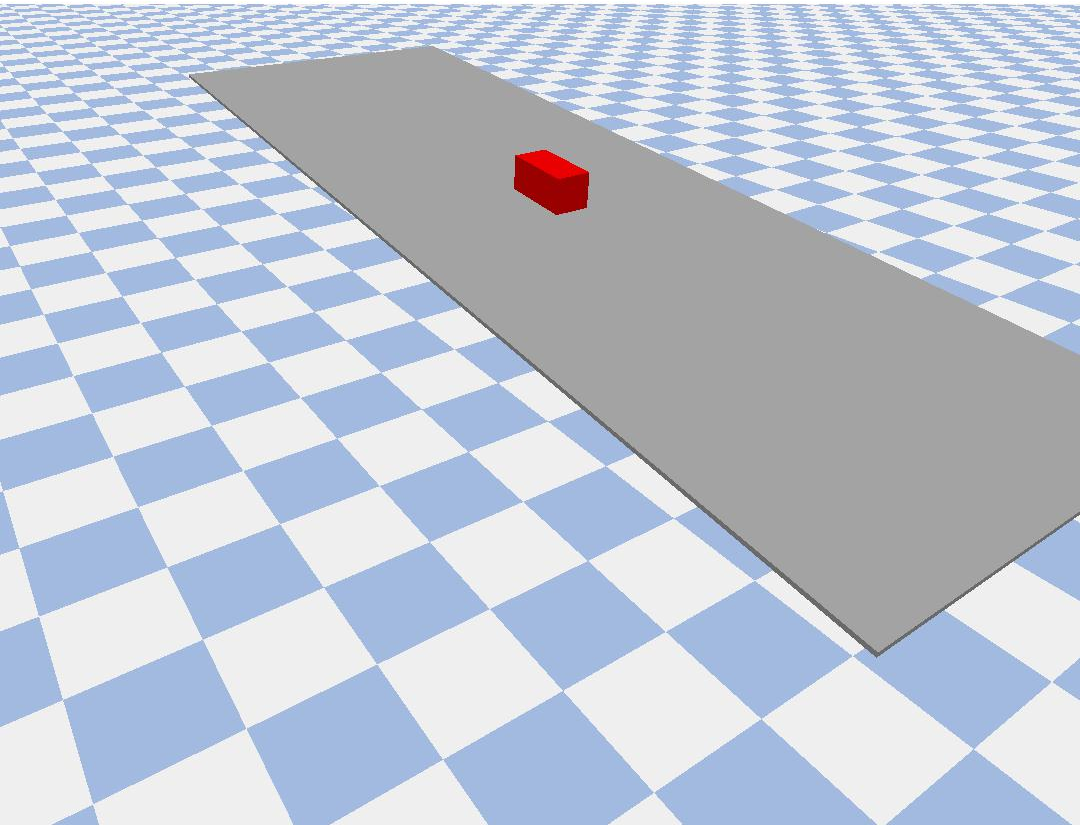

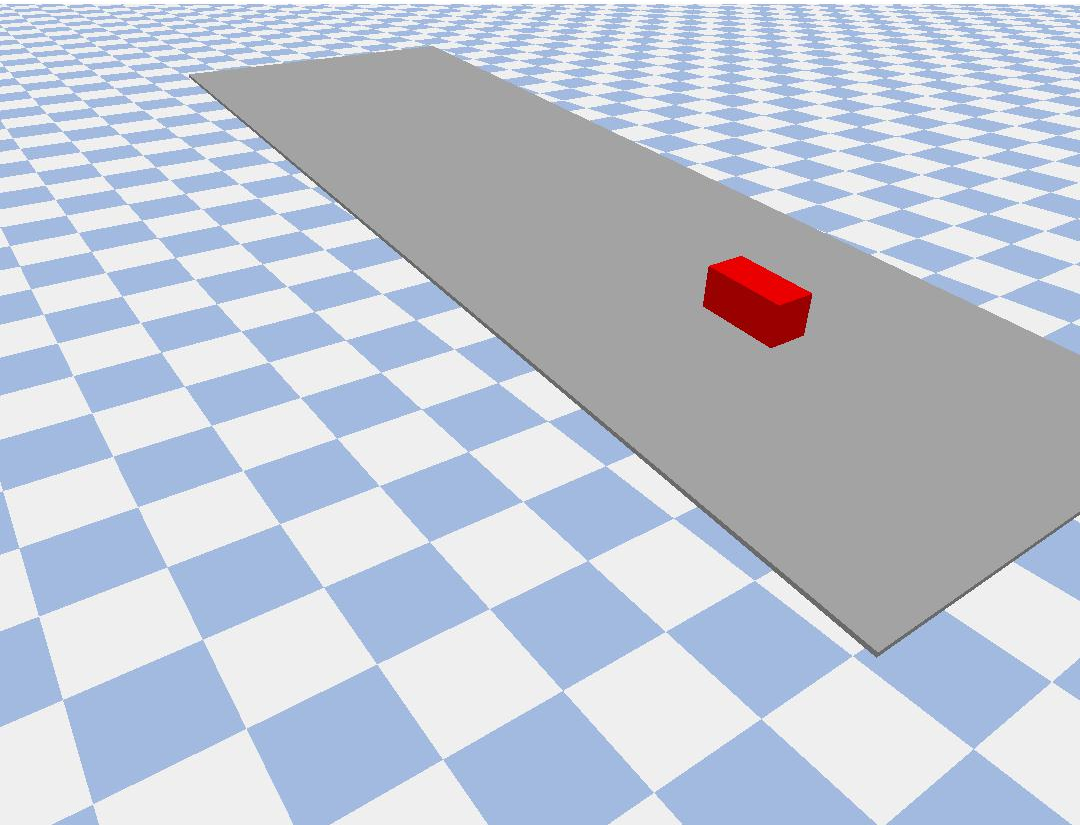

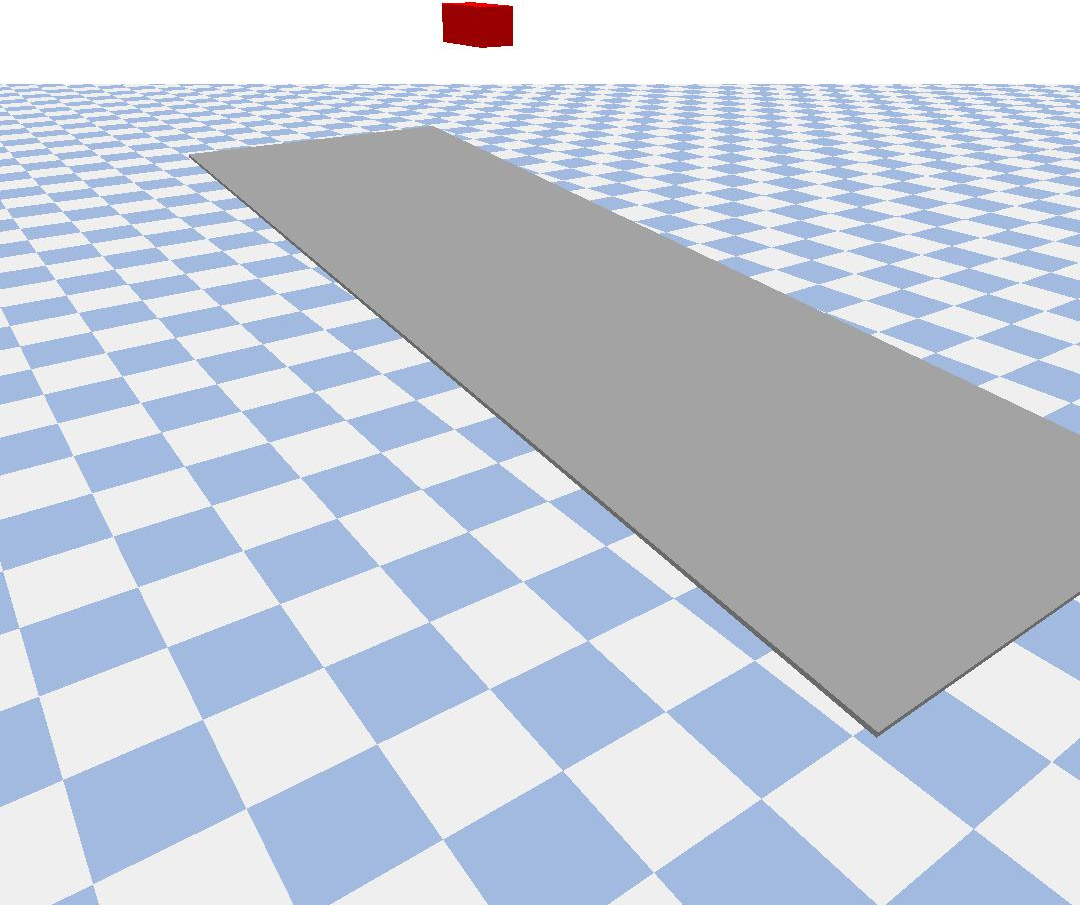

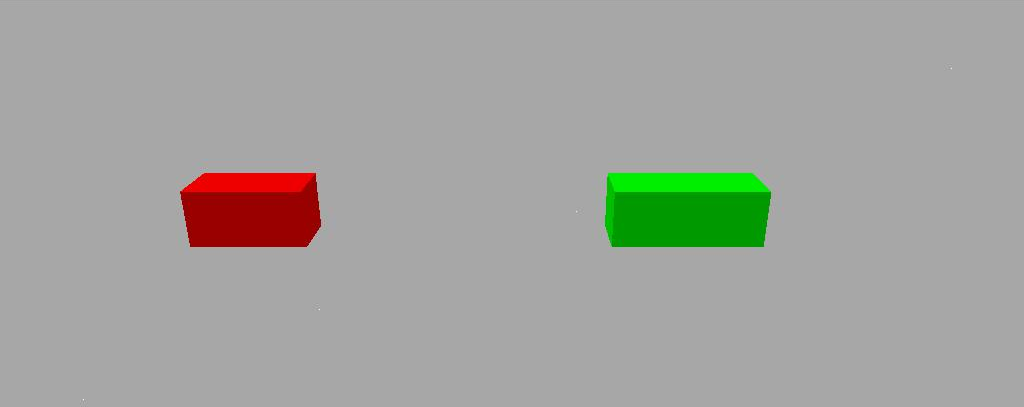

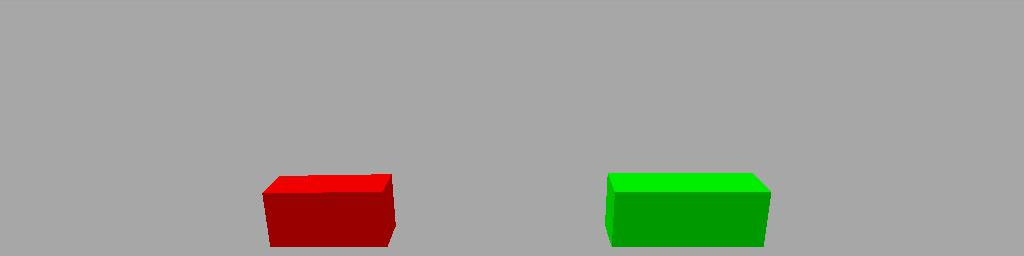

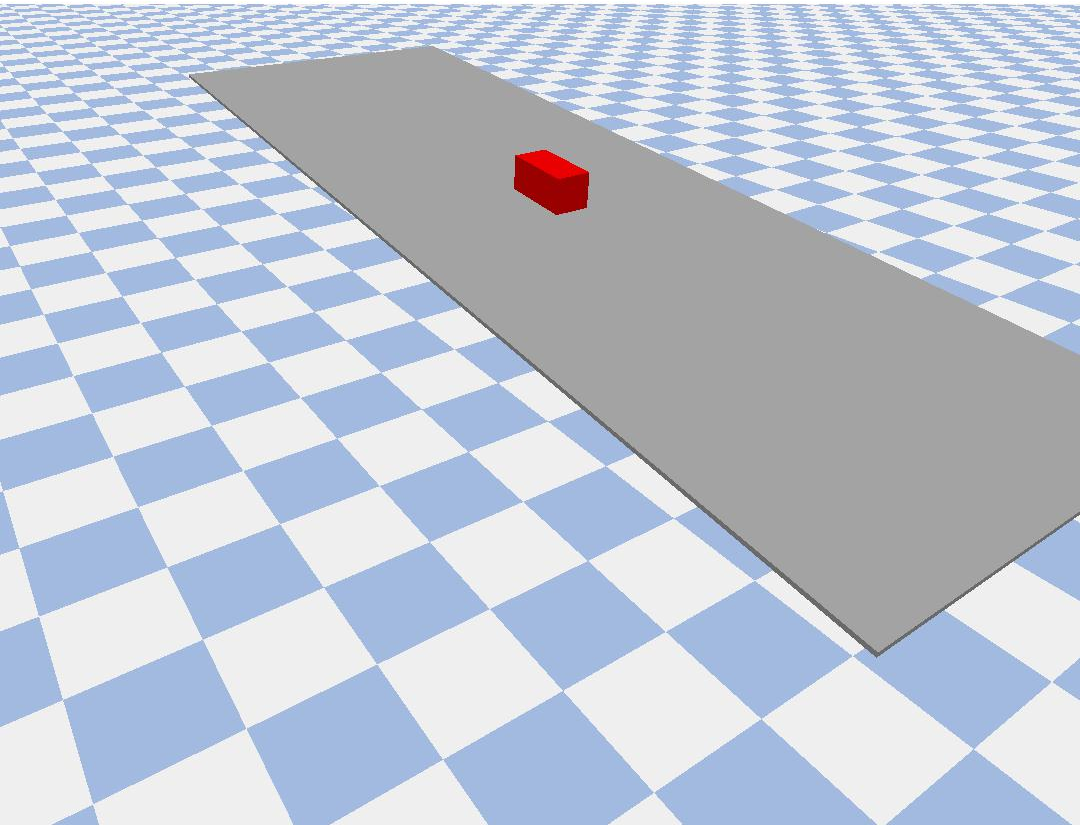

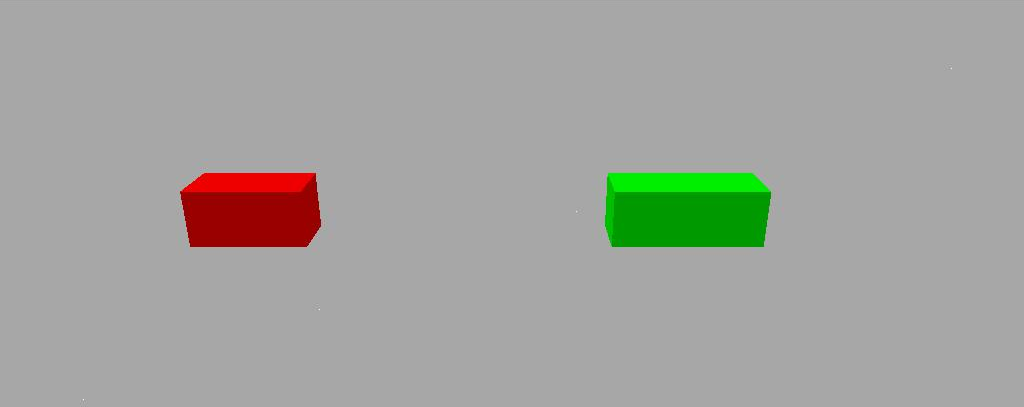

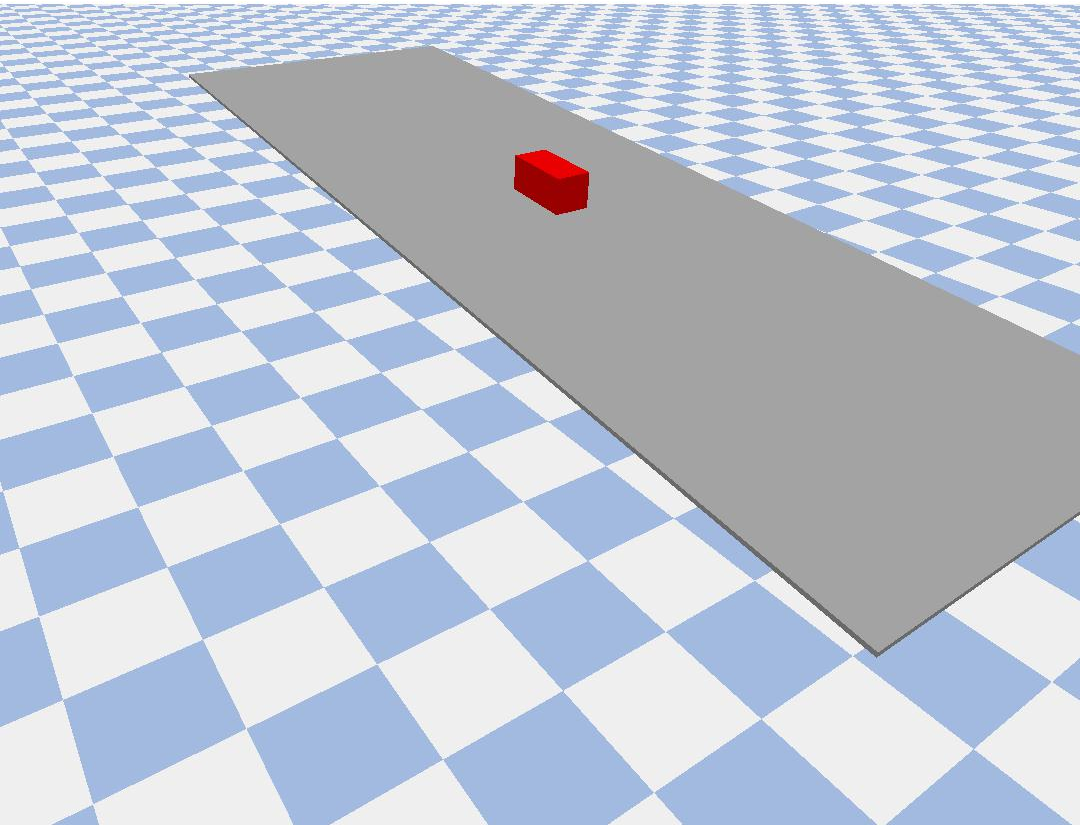

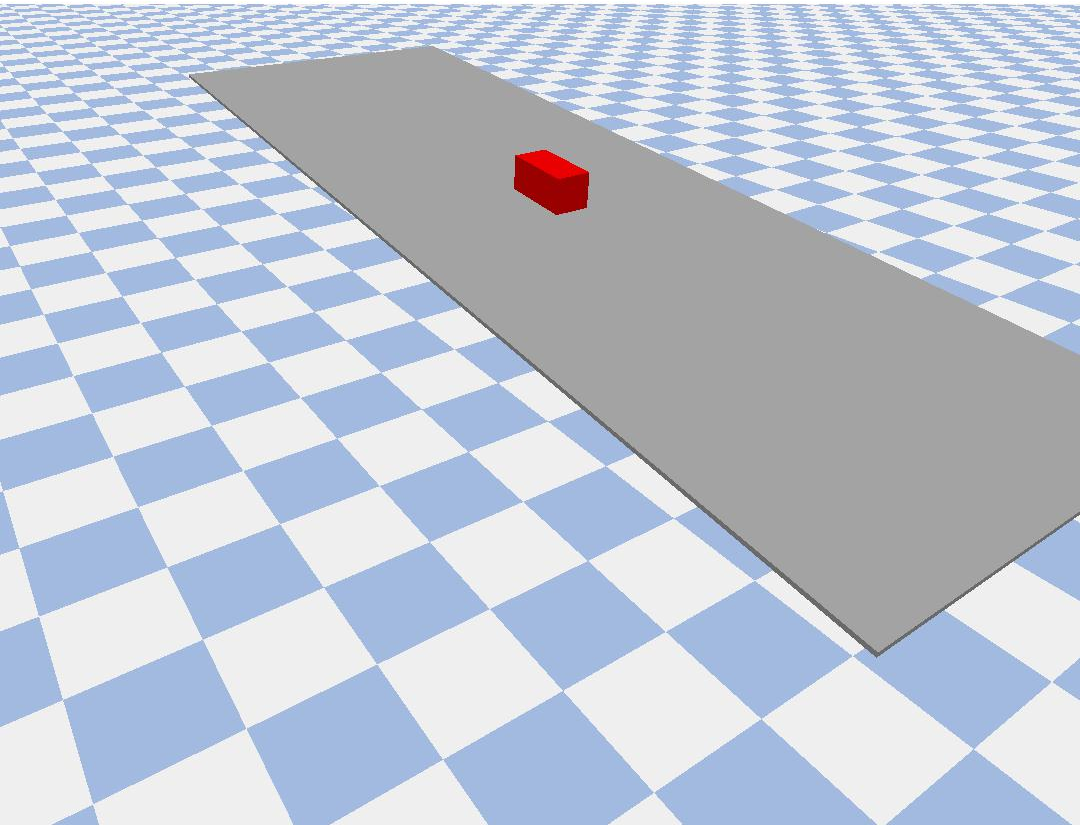

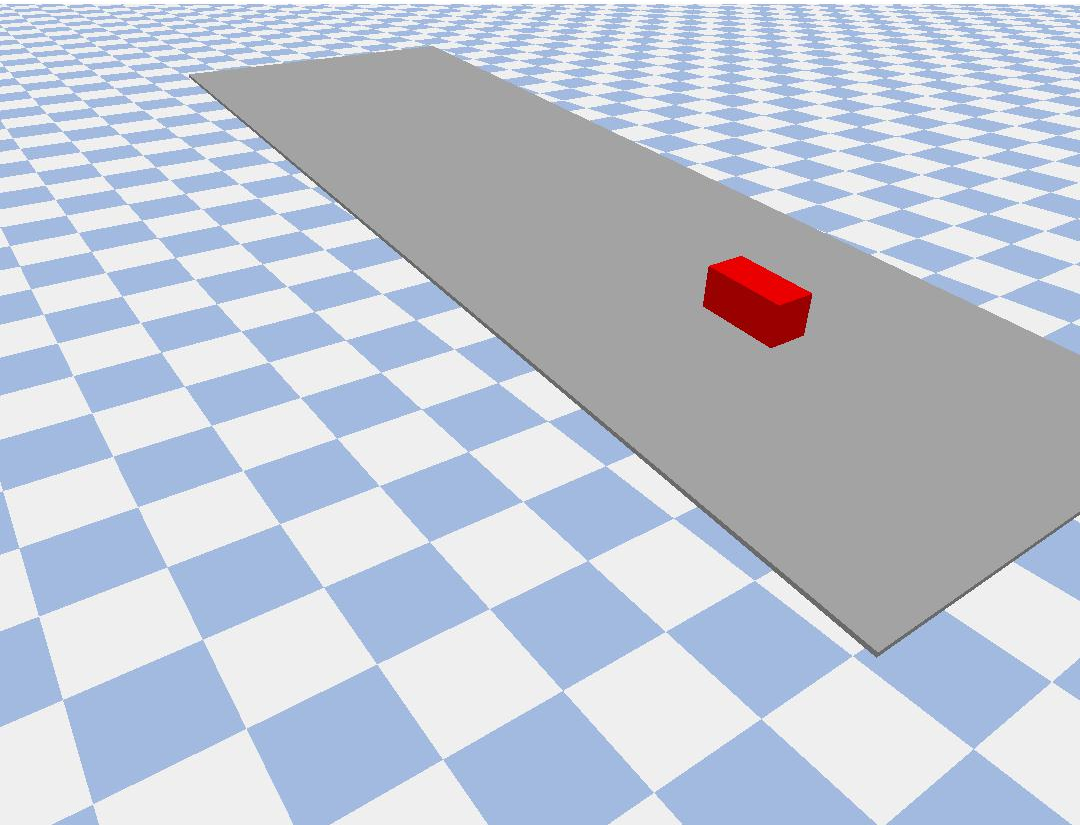

Figure 1: 3D visualization of the simulated scenes. Top: block pushed on a flat plane. Middle: block colliding with another block. Bottom: block falling and sliding down on an inclined plane.

Results

- Parameter Estimation: The model demonstrates the ability to estimate mass and friction parameters accurately across different scenarios. Supervised learning achieves a higher accuracy compared to self-supervised methods but both are effective in identifying physical properties.

- Video Prediction: The network successfully predicts future frames in the video sequences, demonstrating its capacity for scene forecasting in dynamic environments.

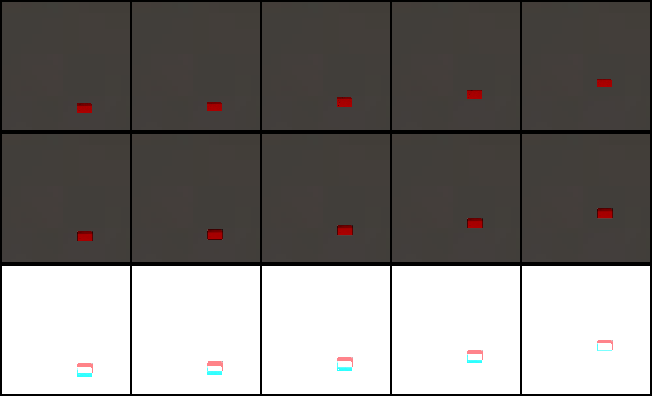

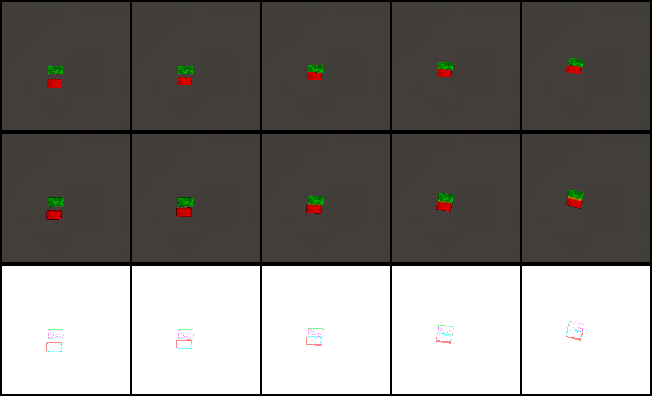

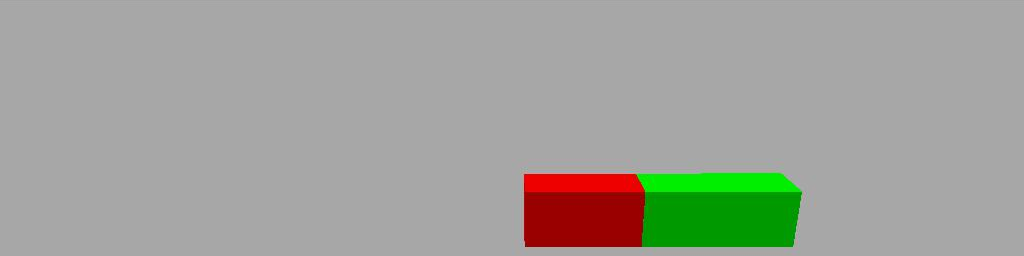

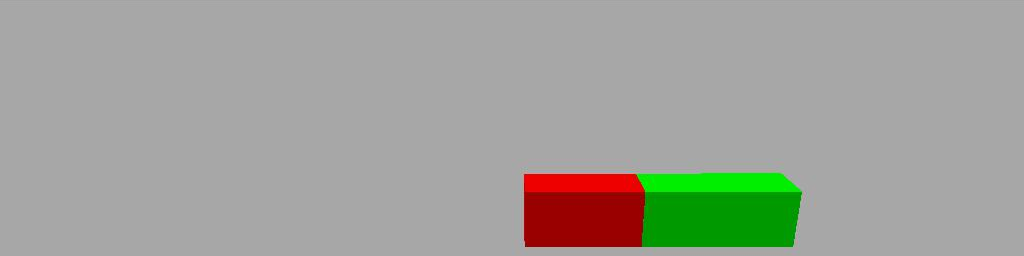

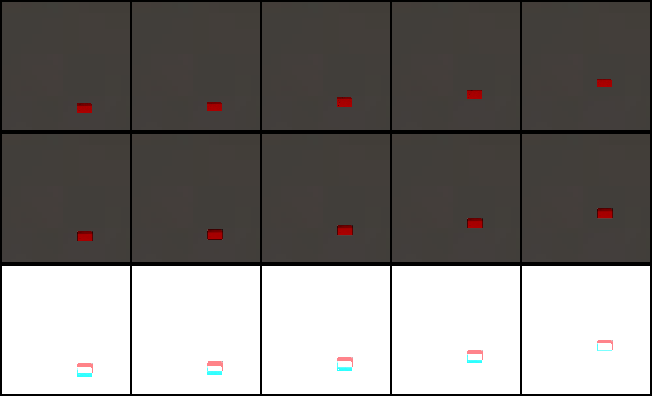

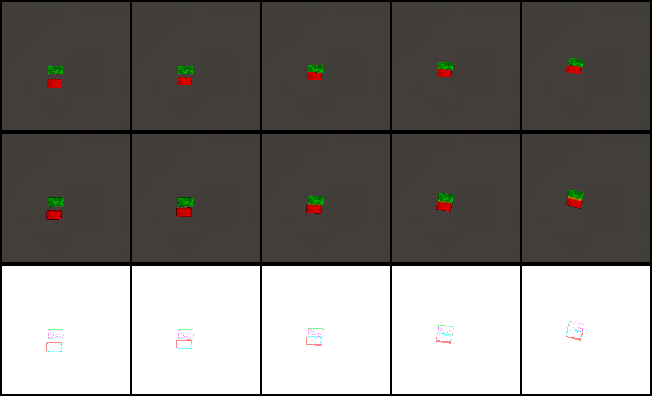

Figure 2: Qualitative video prediction results for block pushing (left) and collision scenarios (right) with our method. Top: simulated images (from left to right frames 0, 30, 60, 120, 180). Middle: predicted images by our approach. Bottom: difference images.

Practical Implications and Future Work

This research bridges the gap between neural video prediction and physics-based modeling, contributing to the development of more explainable AI systems by embedding physical reasoning into video representation learning. The proposed self-supervised learning framework is particularly appealing for applications where labeled physical data is unavailable.

Further investigations could explore extending the self-supervised methodology to real-world data, expanding to more complex 3D dynamics, and improving the generalization of learned models to novel, unseen environments.

Conclusion

The integration of differentiable physics into video representation networks provides a robust framework for learning interpretable physical parameters from video data. This work underscores the potential for combining traditional physics with state-of-the-art AI techniques to enhance scene understanding and prediction, setting a foundation for future research in dynamic video analysis with physics-aware neural networks.