- The paper introduces a novel two-level ensemble classification framework that integrates path-wise, node-wise, and depth-wise classifiers for hierarchical product categorization.

- The paper employs a graded weighted Bag of Word Vectors (gwBoWV) method to capture semantic nuances, overcoming sparsity issues of traditional representations.

- The paper demonstrates significant improvements in top-K path prediction accuracy over traditional models, enhancing efficiency in large-scale e-commerce catalog management.

Product Classification in E-Commerce using Distributional Semantics

Introduction

The paper "Product Classification in E-Commerce using Distributional Semantics" (arXiv:1606.06083) addresses the problem of assigning products to categories in large e-commerce catalogs. It introduces a new way of building document vectors from distributional word representations and proposes a two-level ensemble classifier tailored to the hierarchical taxonomies that e-commerce platforms use.

Distributional Semantics Representation

Traditional document representations such as tf-idf and Bag of Words (BoW) are high-dimensional and sparse, and they fail to capture semantic similarity between words. The paper instead adopts a distributional semantics approach that encodes words in low-dimensional continuous vector spaces (typically $\mathbb{R}^{100}$ to $\mathbb{R}^{300}$), building on the Distributional Hypothesis: words that occur in similar contexts tend to have similar meanings. The word vectors are clustered and combined by weighted summation to form the graded weighted Bag of Word Vectors (gwBoWV) representation of a document.
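The following is a minimal sketch of how such a document vector could be assembled, assuming the word vectors are already trained and already assigned to clusters (for example by K-means). The toy vectors, cluster assignments, idf weights, and the function name `gwbowv` are illustrative assumptions, not the paper's code, and the paper's exact weighting scheme may differ in detail.

```python
from typing import Dict, List


def gwbowv(doc: List[str],
           word_vecs: Dict[str, List[float]],
           cluster_of: Dict[str, int],
           idf: Dict[str, float],
           num_clusters: int) -> List[float]:
    """Concatenate one idf-weighted sum of word vectors per cluster."""
    dim = len(next(iter(word_vecs.values())))
    # One accumulator per cluster; a word only contributes to its own cluster.
    per_cluster = [[0.0] * dim for _ in range(num_clusters)]
    for word in doc:
        if word not in word_vecs:
            continue  # skip out-of-vocabulary words
        k = cluster_of[word]
        weight = idf[word]
        for i, x in enumerate(word_vecs[word]):
            per_cluster[k][i] += weight * x
    # Flatten: the document vector has num_clusters * dim entries.
    return [x for vec in per_cluster for x in vec]


# Toy 2-dimensional word vectors in two clusters (all values are made up).
word_vecs = {"laptop": [1.0, 0.0], "notebook": [0.5, 0.5], "red": [0.0, 1.0]}
cluster_of = {"laptop": 0, "notebook": 0, "red": 1}
idf = {"laptop": 2.0, "notebook": 2.0, "red": 1.0}

doc_vector = gwbowv(["red", "laptop", "notebook", "unknown"],
                    word_vecs, cluster_of, idf, num_clusters=2)
print(doc_vector)
```

Because each cluster gets its own block of the output, words about different topics do not get averaged into a single vector, while the result stays dense and much smaller than a vocabulary-sized BoW vector.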

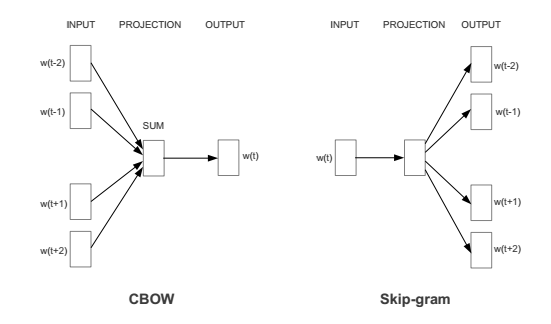

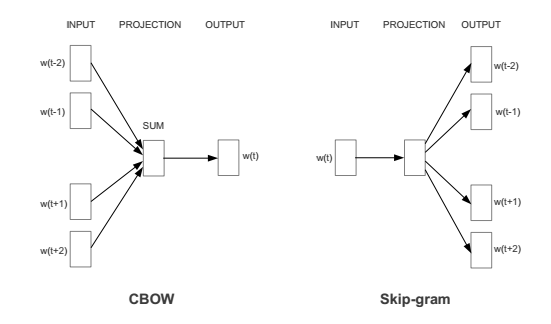

Figure 1: Neural network architectures for the CBoW and Skip-gram models.

Paragraph Vector Limitations and Improvements

The paper critiques the existing paragraph vector models (PV-DM and PV-DBoW) for their inability to capture multiple topic representations within a document and for treating all words in a document uniformly. To overcome these limitations, a new composite technique is proposed, leveraging enriched word vectors and graded weighting inspired by recent advancements in semantic vector composition.

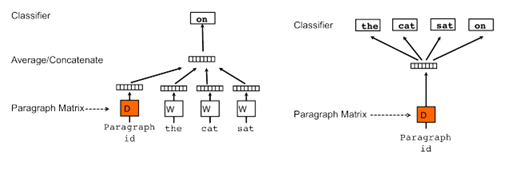

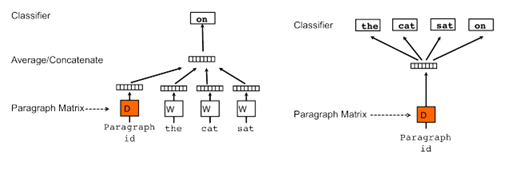

Figure 2: Neural Network Architecture for Distributed Memory version of Paragraph Vector (PV-DM) and Distributed BoWs version of paragraph vectors (PV-DBoW).

Hierarchical Product Categorization

Product categorization in e-commerce is a hierarchical classification problem, complicated by data sparsity and by dependencies between labels at different levels of the taxonomy. Existing methods, such as the gates-and-experts approach, tend to be complex and inefficient. The paper's proposed ensemble instead uses classifiers trained on path-wise, node-wise, and depth-wise labels within the taxonomy, which the paper reports improves classification accuracy while remaining computationally efficient.
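To make the three label types concrete, here is a small sketch of how training labels could be derived from a single taxonomy path. The reading used here (path-wise: the whole root-to-leaf path is one class; node-wise: every node on the path is a label; depth-wise: one label per level) is an assumption based on the terminology above, and the category names and the helper `derive_labels` are hypothetical.

```python
from typing import Dict, List


def derive_labels(path: List[str]) -> Dict[str, object]:
    """Derive path-wise, node-wise and depth-wise labels from one taxonomy path."""
    return {
        # Path-wise: the full root-to-leaf path is treated as a single class.
        "path_wise": " > ".join(path),
        # Node-wise: each node on the path is a label (a multi-label target).
        "node_wise": sorted(set(path)),
        # Depth-wise: one label per taxonomy level, keyed by depth (1 = top level).
        "depth_wise": {depth: node for depth, node in enumerate(path, start=1)},
    }


# Hypothetical product path, for illustration only.
labels = derive_labels(["Computers", "Laptops", "Gaming Laptops"])
print(labels["path_wise"])
print(labels["node_wise"])
print(labels["depth_wise"])
```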

Ensemble Classification Framework

The framework has two levels. At the first level, the outputs of the path-wise, node-wise, and depth-wise classifiers are combined into a single feature vector. At the second level, a classifier takes this feature vector as input and produces the final prediction. By combining the three kinds of predictions, the ensemble is reported to achieve better accuracy than the compared approaches.
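The data flow through the two levels can be sketched as follows. This is a simplified illustration, not the paper's implementation: the first-level classifiers are stand-ins that return fixed scores, the second level is a linear scorer with hand-set weights, and all names (`stack_features`, `linear_second_level`) are hypothetical. In practice both levels would be trained models.

```python
from typing import Callable, List, Sequence

Vector = List[float]
Classifier = Callable[[Vector], Vector]  # maps an input vector to class scores


def stack_features(doc_vec: Vector, first_level: Sequence[Classifier]) -> Vector:
    """Concatenate the score vectors of all first-level classifiers."""
    features: Vector = []
    for clf in first_level:
        features.extend(clf(doc_vec))
    return features


def linear_second_level(weights: List[Vector]) -> Classifier:
    """Build a second-level scorer: one weight row per output class."""
    def predict(features: Vector) -> Vector:
        return [sum(w * f for w, f in zip(row, features)) for row in weights]
    return predict


# Stand-in first-level classifiers with fixed scores (assumption for the demo).
path_clf: Classifier = lambda v: [0.6, 0.4]        # scores over 2 paths
node_clf: Classifier = lambda v: [0.9, 0.2, 0.7]   # scores over 3 nodes
depth_clf: Classifier = lambda v: [0.8, 0.2]       # scores at one depth level

features = stack_features([0.0], [path_clf, node_clf, depth_clf])

# Hand-set weights; a real second level would learn these from data.
second_level = linear_second_level([
    [1, 0, 0, 0, 1, 1, 0],
    [0, 1, 0, 1, 0, 0, 1],
])
scores = second_level(features)
best = max(range(len(scores)), key=scores.__getitem__)
print(features, scores, best)
```

The second level sees only the first-level scores, so it can learn how much to trust each classifier type for each final class.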

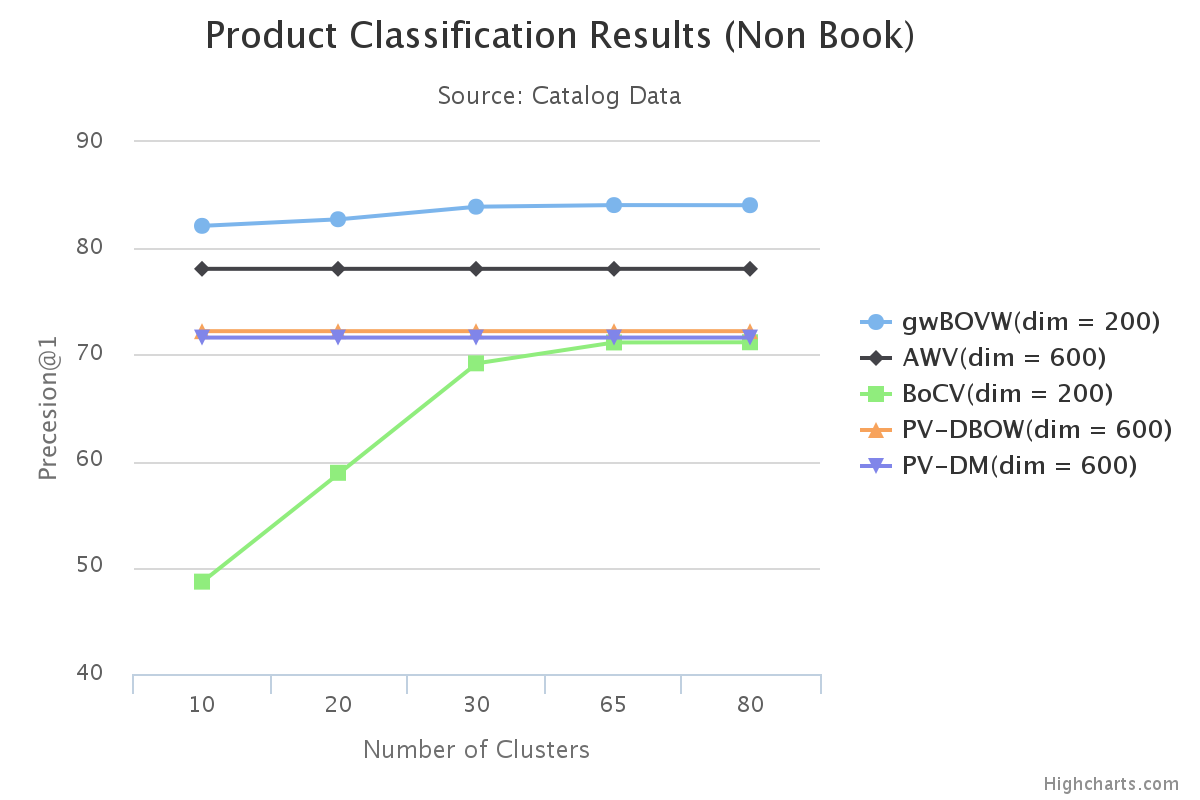

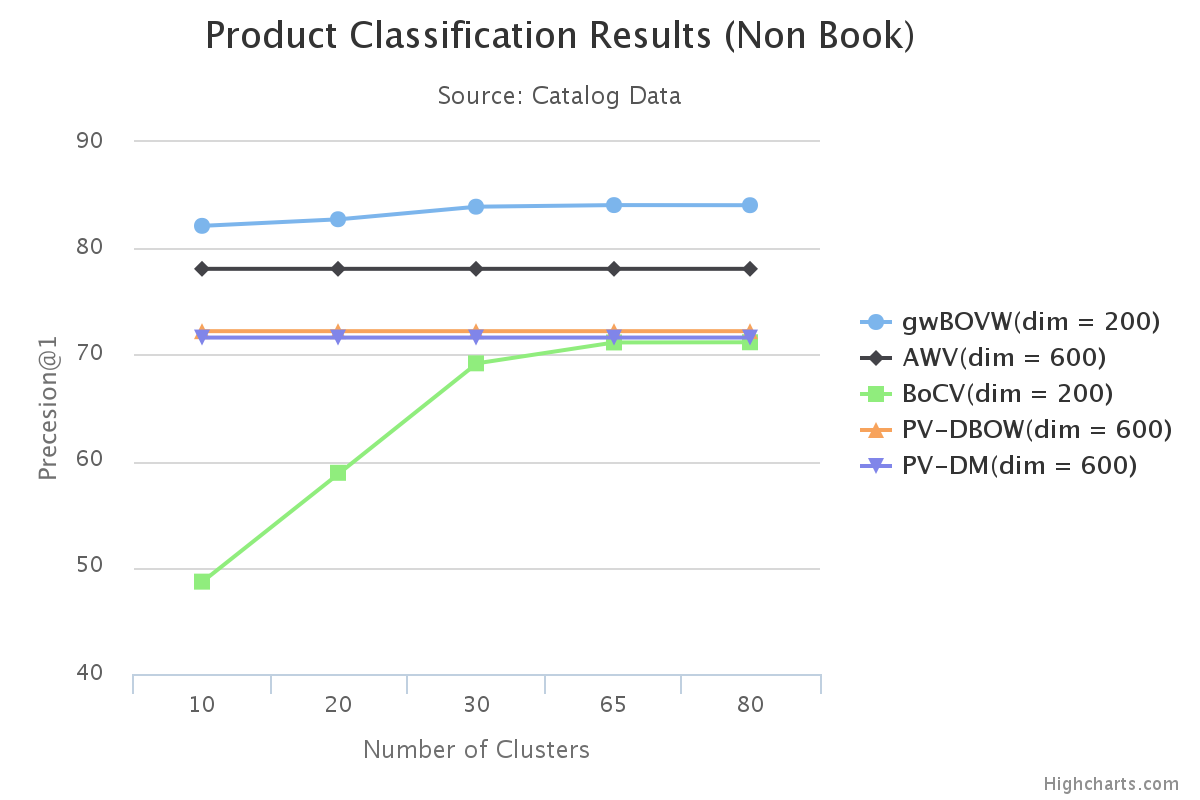

Figure 3: Comparison of prediction accuracy for path prediction using different methods for document vector generation.

Results and Discussion

Evaluated on datasets from a prominent e-commerce platform, the proposed method improves classification accuracy over baseline document representations such as PV-DBoW and BoCV. In particular, combining gwBoWV with the ensemble approach yields higher top-K path prediction accuracy, that is, the true category path appears more often among the K highest-ranked predicted paths.
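For reference, top-K path accuracy can be computed as in the sketch below: a prediction counts as correct if the true path appears among the K highest-ranked paths. The path strings and the function name `top_k_accuracy` are made up for illustration and are not taken from the paper's data.

```python
from typing import List, Sequence


def top_k_accuracy(ranked_predictions: Sequence[Sequence[str]],
                   true_paths: Sequence[str],
                   k: int) -> float:
    """Fraction of items whose true path is among the top-k ranked predictions."""
    if len(ranked_predictions) != len(true_paths):
        raise ValueError("predictions and labels must have the same length")
    if not true_paths:
        return 0.0
    hits = sum(1 for ranked, truth in zip(ranked_predictions, true_paths)
               if truth in ranked[:k])
    return hits / len(true_paths)


# Each inner list is ordered from most to least likely (toy data).
ranked: List[List[str]] = [
    ["A>B", "A>C", "D>E"],
    ["A>C", "A>B", "D>E"],
    ["D>E", "A>C", "A>B"],
]
truth = ["A>B", "A>B", "A>B"]

top1 = top_k_accuracy(ranked, truth, k=1)
top2 = top_k_accuracy(ranked, truth, k=2)
top3 = top_k_accuracy(ranked, truth, k=3)
print(top1, top2, top3)
```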

Implications and Future Work

The reported results suggest that the framework can support more accurate and fine-grained catalog management in e-commerce. Future research could explore alternative clustering methods such as the Chinese Restaurant Process, integrate supervised embeddings, and improve label embedding approaches.

Conclusion

This paper contributes a distributional semantics-based document representation (gwBoWV) and a two-level ensemble classifier for hierarchical e-commerce product classification. Together they provide a basis for further work on hierarchical classification and semantic document representation in automated cataloging systems.

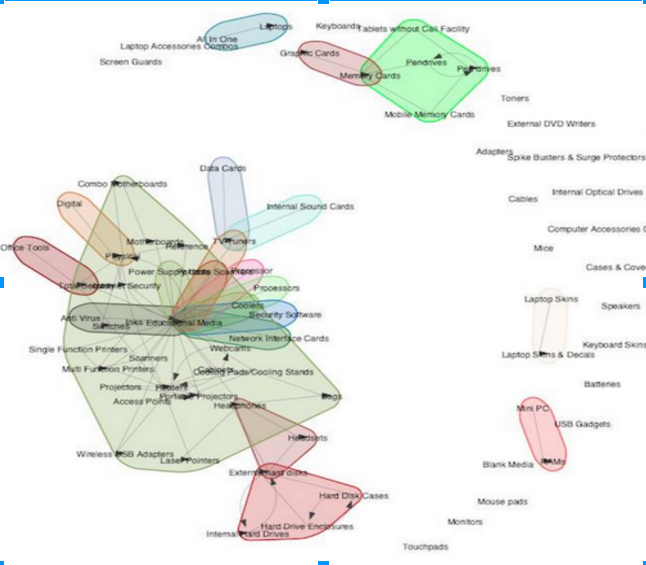

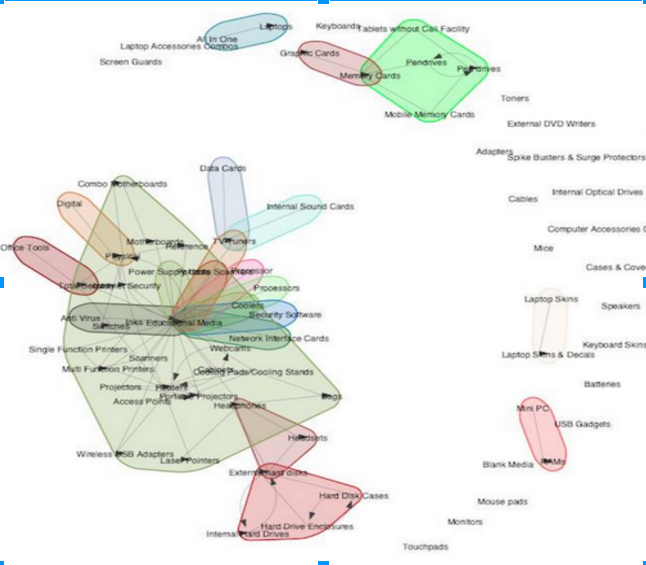

Figure 4: Misclassification graphs with latent groups in Computer and Computer Accessories. Each edge C1 → C2 indicates that items of class C1 are misclassified as C2 at a rate above 10% of the correct predictions. Isolated vertices represent classes that are predicted almost entirely correctly.
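The edge rule described in the Figure 4 caption can be sketched from a confusion table as shown below. The reading of the threshold (an edge C1 → C2 exists when the number of C1 items predicted as C2 exceeds 10% of the correctly predicted C1 items) is an assumption, and the class names and counts are invented for illustration.

```python
from typing import Dict, List, Set, Tuple

Confusion = Dict[str, Dict[str, int]]  # confusion[true_class][predicted_class] = count


def misclassification_edges(confusion: Confusion,
                            threshold: float = 0.10) -> List[Tuple[str, str]]:
    """Return directed edges (true_class, predicted_class) above the threshold."""
    edges: List[Tuple[str, str]] = []
    for true_cls, row in confusion.items():
        correct = row.get(true_cls, 0)
        for pred_cls, count in row.items():
            if pred_cls != true_cls and count > threshold * correct:
                edges.append((true_cls, pred_cls))
    return edges


def isolated_classes(confusion: Confusion,
                     edges: List[Tuple[str, str]]) -> Set[str]:
    """Classes that are neither the source nor the target of any edge."""
    connected = {cls for edge in edges for cls in edge}
    return set(confusion) - connected


# Toy confusion counts (made up).
confusion: Confusion = {
    "Mouse": {"Mouse": 90, "Keyboard": 10, "Laptop Bag": 0},
    "Keyboard": {"Keyboard": 80, "Mouse": 5},
    "Laptop Bag": {"Laptop Bag": 100},
}

edges = misclassification_edges(confusion)
isolated = isolated_classes(confusion, edges)
print(edges, isolated)
```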