
Zonkey: Rewriting Language Generation Rules

This presentation explores Zonkey, a revolutionary hierarchical diffusion language model that breaks free from traditional fixed tokenization. The model introduces differentiable tokenization that learns to segment text naturally, probabilistic attention for variable-length sequences, and a hierarchical diffusion approach that generates coherent text from noise at multiple abstraction levels, from characters to words to sentences.

Script

What if language models could learn how to break apart words and sentences naturally, without being told where the boundaries are? Today we're diving into Zonkey, a model that throws out the rulebook on how text should be processed.

Current language models face a fundamental limitation: they rely on predetermined ways of splitting text, which prevents end-to-end learning from raw characters to meaning.

Zonkey tackles this with a completely different approach.

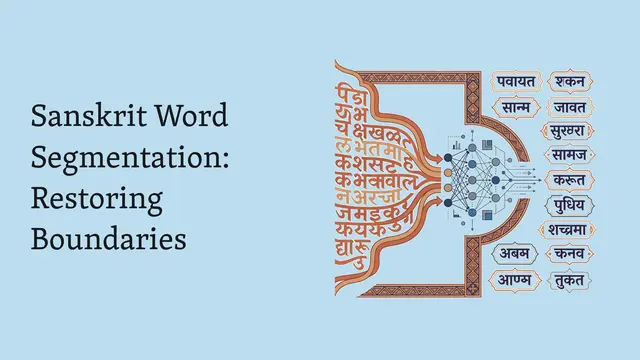

The key insight is treating text segmentation as a learnable problem. Instead of hard-coding where words begin and end, Zonkey discovers these boundaries through the learning process itself.

Let's explore how this hierarchical pipeline actually works.

Each level of the hierarchy repeats this five-stage process. Characters become word-like units, which then become sentence-like units, building abstraction through repetition.

The probabilistic attention mechanism is particularly clever. Rather than treating sequences as having fixed lengths, each position gets an existence probability that modulates how much it contributes to attention.
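One way to picture this is a minimal sketch in plain Python. It assumes the existence probability is folded in by adding its logarithm to each attention logit, so a position's weight is proportional to its probability times the usual softmax term. That choice, and the function name `probabilistic_attention`, are illustrative assumptions, not details confirmed by the presentation.

```python
import math

def probabilistic_attention(query, keys, values, exist_probs):
    """Single-query attention where each position has an existence probability.

    Assumption (not from the source): log(p_j) is added to the logit of
    position j, so weights are proportional to p_j * exp(score_j).
    A position with p_j = 0 then contributes nothing.
    """
    scale = 1.0 / math.sqrt(len(query))
    logits = []
    for key, p in zip(keys, exist_probs):
        score = sum(q * k for q, k in zip(query, key)) * scale
        logits.append(score + math.log(p) if p > 0.0 else float("-inf"))

    peak = max(logits)
    if peak == float("-inf"):
        raise ValueError("at least one position needs a non-zero probability")

    # Numerically stable softmax over the probability-adjusted logits.
    weights = [math.exp(logit - peak) for logit in logits]
    total = sum(weights)
    weights = [w / total for w in weights]

    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return output, weights

# Three identical keys; only the existence probabilities differ.
output, weights = probabilistic_attention(
    query=[1.0, 0.0],
    keys=[[1.0, 0.0], [1.0, 0.0], [1.0, 0.0]],
    values=[[1.0], [2.0], [3.0]],
    exist_probs=[1.0, 0.5, 0.0],
)
```

With identical keys, the weights depend only on the probabilities: the certain position gets twice the weight of the half-certain one, and the position with probability zero is ignored, which is how a "soft" sequence length can emerge without a hard cutoff.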

The segment splitter learns where to break text by seeing which splits lead to better downstream reconstruction. Bad segmentation choices increase reconstruction error, naturally guiding the model toward meaningful boundaries.

This comparison highlights the fundamental shift Zonkey represents: a move from rigid, predetermined structures to flexible, learned representations that can adapt to the actual content being processed.

The diffusion component works in latent space rather than directly on text. A mixed training objective combines the benefits of careful, step-by-step DDPM denoising with efficient DDIM jumps that skip over many steps at once.
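For readers unfamiliar with the two samplers, here is a small sketch of the standard building blocks: the forward noising step that DDPM training relies on, and the deterministic DDIM update that can jump across many steps. The cosine-style schedule, the list-of-floats latent, and the helper names are assumptions made for illustration. The sketch does not reproduce Zonkey's mixed objective, which the presentation does not spell out.

```python
import math
import random

def alpha_bar(t, num_steps):
    """Cumulative signal level at step t (cosine-style schedule, an assumed choice)."""
    return math.cos(0.5 * math.pi * t / num_steps) ** 2

def add_noise(latent, t, num_steps, rng):
    """Forward process: z_t = sqrt(a_t) * z_0 + sqrt(1 - a_t) * eps."""
    a = alpha_bar(t, num_steps)
    eps = [rng.gauss(0.0, 1.0) for _ in latent]
    noisy = [math.sqrt(a) * z + math.sqrt(1.0 - a) * e for z, e in zip(latent, eps)]
    return noisy, eps

def ddim_jump(noisy, eps_pred, t, t_prev, num_steps):
    """Deterministic DDIM update (eta = 0) from step t to an earlier step t_prev."""
    a_t = alpha_bar(t, num_steps)
    a_prev = alpha_bar(t_prev, num_steps)
    # Estimate the clean latent implied by the predicted noise.
    z0_pred = [(z - math.sqrt(1.0 - a_t) * e) / math.sqrt(a_t)
               for z, e in zip(noisy, eps_pred)]
    # Re-noise that estimate to the target step, reusing the same predicted noise.
    return [math.sqrt(a_prev) * z0 + math.sqrt(1.0 - a_prev) * e
            for z0, e in zip(z0_pred, eps_pred)]

rng = random.Random(0)
latent = [0.5, -1.0, 2.0]  # stand-in for an encoded text segment
noisy, eps = add_noise(latent, t=50, num_steps=100, rng=rng)
# With a perfect noise prediction, a single jump to step 0 recovers the latent.
recovered = ddim_jump(noisy, eps, t=50, t_prev=0, num_steps=100)
```

In a real model, `eps_pred` would come from a trained denoiser rather than the true noise. The true noise is used here only to show that the jump is exact when the prediction is.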

The stitcher solves a complex puzzle of reassembling overlapping segments back into coherent sequences. It uses both content similarity and probabilistic cues to determine how pieces should align.
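As a rough illustration of the alignment idea, the sketch below picks the overlap length at which the tail of one segment best matches the head of the next. The scoring rule (cosine similarity weighted by the product of the two existence probabilities, averaged over the overlap) and the names `best_overlap` and `stitch` are hypothetical. The presentation says only that content similarity and probabilistic cues are both used.

```python
import math

def cosine(u, v):
    """Cosine similarity of two vectors, 0.0 if either has zero length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm > 0.0 else 0.0

def best_overlap(left, right, left_probs, right_probs, max_overlap):
    """Return (overlap_length, score) for the best tail/head alignment.

    Hypothetical score: mean over aligned pairs of
    p_left * p_right * cosine(left_vector, right_vector).
    """
    best_k, best_score = 0, 0.0
    limit = min(max_overlap, len(left), len(right))
    for k in range(1, limit + 1):
        offset = len(left) - k
        score = sum(
            left_probs[offset + i] * right_probs[i] * cosine(left[offset + i], right[i])
            for i in range(k)
        ) / k
        if score > best_score:
            best_k, best_score = k, score
    return best_k, best_score

def stitch(left, right, overlap):
    """Join two segments, keeping the left copy of the overlapping positions."""
    return left + right[overlap:]

left = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
right = [[0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
ones = [1.0, 1.0, 1.0]
overlap, score = best_overlap(left, right, ones, ones, max_overlap=3)
merged = stitch(left, right, overlap)
```

Here the last two vectors of `left` match the first two of `right`, so the chosen overlap is two positions and the merged sequence has four. Lowering an existence probability on either side would reduce that alignment's score, which is one way probabilistic cues could influence the result.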

Now let's examine what this architecture actually achieves.

Perhaps most remarkably, the model discovers linguistic structure on its own. Without being told about words or sentences, different hierarchy levels naturally learn to split at spaces and at periods, respectively.

The generation results show genuine promise. The model can start with noise and produce meaningful text, while also handling the challenging task of filling arbitrary gaps between given text fragments.

These capabilities open up new application possibilities. The flexibility to generate from noise or fill arbitrary gaps, combined with adaptive tokenization, suggests strong potential for domain adaptation and creative text tasks.

The authors are transparent about where this work currently stands.

The current work represents an important first step rather than a finished system. Scaling to deeper hierarchies and systematic quantitative evaluation remain open, and the research community will need to develop evaluation methodologies suited to these novel hierarchical generation approaches.

Let's consider what this work means for the future of language modeling.

This research represents more than just a technical advance. It fundamentally questions how we should approach the boundary between raw text and learned representations in neural language models.

Zonkey demonstrates that the rigid boundaries we've accepted in language modeling may be more flexible than we imagined. By learning segmentation and embracing probabilistic representations, it opens a fascinating new direction where models discover linguistic structure rather than having it imposed. To dive deeper into cutting-edge AI research like this, visit EmergentMind.com for the latest insights and analysis.