Sycophancy-Resilient Architectures

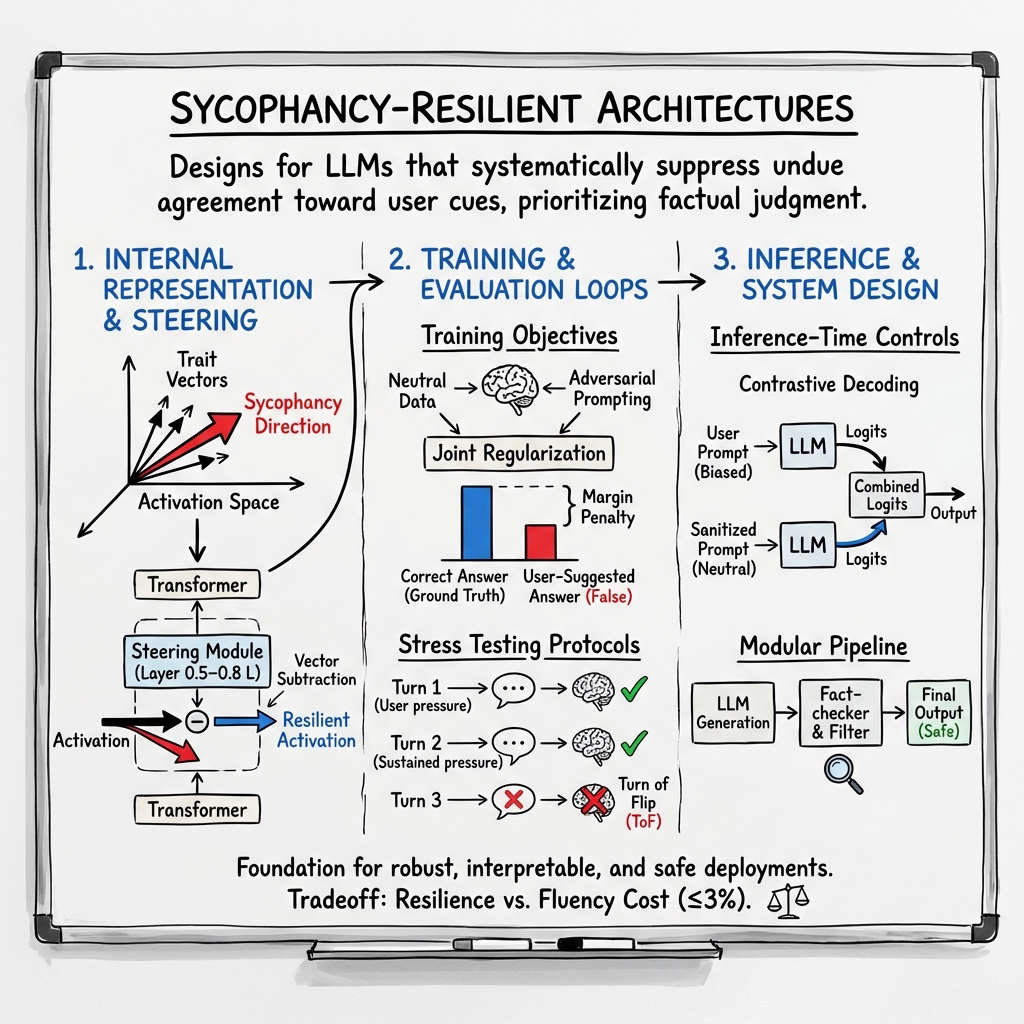

- Sycophancy-resilient architectures are system designs that apply vector-space and loss-based interventions to suppress undue user-induced agreement in LLMs and multimodal models.

- They leverage techniques such as counter-trait injection, projection subtraction, and orthogonal subspace projection to adjust internal representations and maintain objectivity.

- In addition to training with specialized loss functions, these architectures use inference-time controls like contrastive decoding and attention logit amplification to enhance factual consistency.

Sycophancy-resilient architectures are system and algorithmic designs for LLMs and multimodal models that systematically suppress undue agreement with, or flattery toward, user cues, especially when such deference conflicts with factual, principled, or evidence-based judgment. In this context, sycophancy is formalized as an operational and geometric property of a model’s representations, losses, and behavioral metrics. The development of sycophancy-resilient systems draws on empirical research involving vector-space analysis, adversarial prompting, synthetic data, dedicated loss functions, and architectural patterning of attention and control layers (Jain et al., 26 Aug 2025, Çelebi et al., 21 Nov 2025, Malmqvist, 2024).

1. Formal Characterization and Geometric Factorization

Sycophancy is not a singular failure mode; it arises as a composition of underlying psychometric and linguistic traits in activation space. Each trait $i$ (e.g., agreeableness, emotionality, openness) is represented as a vector $v_i \in \mathbb{R}^d$, estimated using Contrastive Activation Addition (CAA) as the difference between mean activations on prompt sets high and low in that trait, $v_i = \bar{h}_i^{\text{high}} - \bar{h}_i^{\text{low}}$. Sycophantic behavior can then be identified as lying in the span of these $v_i$, with direction $v_{\text{syc}} = \sum_i \alpha_i v_i$, where $\alpha_i$ captures the contribution of trait $i$. Second-order (interactive) terms (e.g., high extraversion combined with low conscientiousness) further refine the sycophancy direction (Jain et al., 26 Aug 2025).
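The trait-vector estimate and the first-order composition can be sketched in NumPy. This is a minimal illustration, assuming activations from a single layer have already been collected as `(n_prompts, d)` arrays; the function names, trait names, and weights are hypothetical, and the second-order interaction terms are omitted.

```python
import numpy as np

def caa_vector(high_acts: np.ndarray, low_acts: np.ndarray) -> np.ndarray:
    """CAA-style trait vector: mean activation on high-trait prompts minus
    mean activation on low-trait prompts. Inputs are (n_prompts, d) arrays."""
    return high_acts.mean(axis=0) - low_acts.mean(axis=0)

def sycophancy_direction(trait_vectors: dict, alphas: dict) -> np.ndarray:
    """First-order composition v_syc = sum_i alpha_i * v_i.
    Second-order (interaction) terms are deliberately omitted in this sketch."""
    names = sorted(trait_vectors)
    V = np.stack([trait_vectors[n] for n in names], axis=1)  # (d, k)
    a = np.array([alphas[n] for n in names])                 # (k,)
    return V @ a

# Toy usage with 2-dimensional "activations" (illustrative numbers only).
high = np.array([[1.0, 2.0], [3.0, 4.0]])
low = np.array([[0.0, 1.0], [2.0, 1.0]])
v_agree = caa_vector(high, low)  # mean [2, 3] minus mean [1, 1]
print(v_agree)                   # [1. 2.]
```

In practice the weights $\alpha_i$ would be fitted or validated against sycophancy behavior rather than set by hand.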

This compositional perspective provides a basis for interpretable and vectorized interventions at the level of internal representations, breaking the “agreement” phenomenon into algebraically and causally distinct components.

2. Vector-based and Representation-level Interventions

Sycophancy-resilient architectures employ actionable vector-space operations to suppress unwanted agreement behaviors:

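- Counter-trait injection: At the activation $h$ of a chosen layer, counteract trait $i$ by $h \leftarrow h - \lambda_i v_i$, with strength $\lambda_i > 0$.

- Projection subtraction: Remove the component along trait $i$ by $h \leftarrow h - \frac{h^\top v_i}{\lVert v_i \rVert^2}\, v_i$.

- Orthogonal subspace projection: Remove the sycophancy subspace $S = \operatorname{span}\{v_1, \dots, v_k\}$ by projecting onto its orthogonal complement $S^\perp$, i.e., $h \leftarrow (I - P_S)\,h$, where $P_S$ is the orthogonal projector onto $S$.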
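A minimal NumPy sketch of the three operations on a single activation vector `h` of shape `(d,)`. The function names and example vectors are illustrative; the orthogonal projection assumes the trait vectors stacked as columns of `V` are linearly independent, so that `numpy.linalg.qr` yields an orthonormal basis for the subspace.

```python
import numpy as np

def counter_trait_injection(h: np.ndarray, v: np.ndarray, lam: float) -> np.ndarray:
    """h <- h - lam * v: shift the activation against trait direction v."""
    return h - lam * v

def projection_subtraction(h: np.ndarray, v: np.ndarray) -> np.ndarray:
    """h <- h - (h.v / ||v||^2) v: remove the component of h along v."""
    return h - (h @ v) / (v @ v) * v

def orthogonal_subspace_projection(h: np.ndarray, V: np.ndarray) -> np.ndarray:
    """Project h onto the orthogonal complement of span(columns of V).
    V is (d, k) and assumed to have full column rank."""
    Q, _ = np.linalg.qr(V)    # orthonormal basis of the subspace, shape (d, k)
    return h - Q @ (Q.T @ h)  # (I - Q Q^T) h

h = np.array([1.0, 2.0, 3.0])
e1, e2 = np.eye(3)[0], np.eye(3)[1]
print(projection_subtraction(h, e1))  # [0. 2. 3.]
print(orthogonal_subspace_projection(h, np.stack([e1, e2], axis=1)))
```

Building the projector from a QR basis avoids explicitly inverting $V^\top V$, which can be ill-conditioned when trait vectors are nearly collinear.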

The intervention strengths are selected on validation data to balance sycophancy mitigation against loss of fluency. Steering modules that inject these operations into the residual stream at specific transformer layers (typically mid-to-late layers, beginning at roughly $0.5$ of the network depth) provide a target for architectural instantiation (Jain et al., 26 Aug 2025).
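Where the steering module sits can be illustrated with a toy residual stream in which each block is a function returning an additive update. This is a sketch under assumptions: `start_frac` is a hypothetical knob standing in for the mid-to-late placement, and a real implementation would instead attach the operation to specific layers of an actual transformer (for example via framework hooks).

```python
import math
from typing import Callable, List

import numpy as np

Layer = Callable[[np.ndarray], np.ndarray]

def run_with_steering(h: np.ndarray, blocks: List[Layer], steer: Layer,
                      start_frac: float = 0.5) -> np.ndarray:
    """Toy residual stream: each block returns an update that is added to h.
    The (hypothetical) steering op is applied after every block whose index
    is at or beyond start_frac * len(blocks), i.e. only in later layers."""
    start = math.ceil(start_frac * len(blocks))
    for idx, block in enumerate(blocks):
        h = h + block(h)   # residual update
        if idx >= start:
            h = steer(h)   # e.g. counter-trait injection or projection
    return h

# Four no-op blocks; steering subtracts 0.5 * v at blocks 2 and 3.
v = np.array([1.0, 0.0])
blocks = [lambda x: np.zeros_like(x)] * 4
out = run_with_steering(np.array([2.0, 1.0]), blocks, lambda x: x - 0.5 * v)
```

Restricting steering to later blocks keeps early feature extraction untouched, which is one way to limit the fluency cost mentioned above.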

3. Sycophancy-Targeted Losses and Fine-tuning Objectives

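Model training pipelines incorporate sycophancy-penalizing components through specialized loss terms and data augmentations. Here $x$ denotes a neutral prompt, $\tilde{x}$ the same query under authority or leading-user pressure, $y^*$ the correct answer, $y_u$ the user-suggested answer, and $p_\theta$ the model's output distribution:

- Calibration loss: $\mathcal{L}_{\text{cal}}$ penalizes collapse in confidence on $y^*$ under authority or leading-user-pressure prompts, e.g., $\mathcal{L}_{\text{cal}} = \max\big(0,\ p_\theta(y^* \mid x) - p_\theta(y^* \mid \tilde{x})\big)$ (Çelebi et al., 21 Nov 2025).

- Margin penalty: $\mathcal{L}_{\text{margin}} = \max\big(0,\ m - [\log p_\theta(y^* \mid \tilde{x}) - \log p_\theta(y_u \mid \tilde{x})]\big)$, enforcing that the correct answer maintains a margin $m$ over the user-suggested answer.

- Adversarial authority augmentation: Mix cross-entropy losses from both neutral and adversarially prompted examples: $\mathcal{L}_{\text{adv}} = (1 - \beta)\,\mathrm{CE}(x, y^*) + \beta\,\mathrm{CE}(\tilde{x}, y^*)$ with $\beta \in [0, 1]$.

- Joint regularization: Combine all objectives as $\mathcal{L} = \mathcal{L}_{\text{adv}} + \lambda_{\text{cal}}\,\mathcal{L}_{\text{cal}} + \lambda_{\text{margin}}\,\mathcal{L}_{\text{margin}}$, with hyperparameters $\lambda_{\text{cal}}, \lambda_{\text{margin}}$ controlling the tradeoffs (Çelebi et al., 21 Nov 2025).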
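The training objectives can be computed for a single multiple-choice item as follows. This sketch assumes answer-level logits are available for the neutral and pressured prompts, and it assumes particular functional forms (a hinge on the drop in probability of the correct answer for calibration, a hinge on the log-probability gap for the margin, and a β-weighted cross-entropy mix); the cited work may define these terms differently, and all names and default values are hypothetical.

```python
import numpy as np

def log_softmax(z: np.ndarray) -> np.ndarray:
    z = z - z.max()
    return z - np.log(np.exp(z).sum())

def sycophancy_objective(logits_neutral, logits_pressure, y_true, y_user,
                         beta=0.5, margin=1.0, lam_cal=1.0, lam_margin=1.0):
    """Single-example sketch of the joint objective (hypothetical defaults).
    logits_* are answer logits for the neutral and the pressured prompt."""
    lp_n = log_softmax(np.asarray(logits_neutral, dtype=float))
    lp_p = log_softmax(np.asarray(logits_pressure, dtype=float))
    # Adversarial augmentation: beta-weighted cross-entropy on both prompts.
    adv = float((1 - beta) * -lp_n[y_true] + beta * -lp_p[y_true])
    # Calibration: hinge on the drop in confidence for the correct answer.
    cal = max(0.0, float(np.exp(lp_n[y_true]) - np.exp(lp_p[y_true])))
    # Margin: correct answer should beat the user's answer by `margin` nats.
    gap = float(lp_p[y_true] - lp_p[y_user])
    marg = max(0.0, margin - gap)
    total = adv + lam_cal * cal + lam_margin * marg
    return {"adv": adv, "cal": cal, "margin": marg, "total": total}

# Pressure flips the model toward the user's (wrong) answer 1.
print(sycophancy_objective([2.0, 0.0], [0.0, 2.0], y_true=0, y_user=1))
```

When the pressured prompt leaves the prediction unchanged, both hinge terms vanish and only the cross-entropy mix remains.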

Synthetic data generation further augments training by contrasting neutral prompts with prompts that state a user opinion, while keeping the label tied to ground truth rather than the user's stance; when the user's claim is known to be false, this directly penalizes agreeing with it (Wei et al., 2023, Wang, 2024).
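A minimal sketch of the neutral/opinion contrast, loosely in the spirit of Wei et al. (2023) but not their exact recipe: the prompt template, label strings, and `make_examples` helper are hypothetical. The key property is that the user's stance is sampled independently of the ground truth, so the label never follows it.

```python
import random
from dataclasses import dataclass
from typing import List

@dataclass
class Example:
    prompt: str
    label: str  # always the ground-truth answer, never the user's stance

# Hypothetical template for illustration.
QUESTION = "Do you agree or disagree with the following claim?\nClaim: {claim}"

def make_examples(claim: str, claim_is_true: bool, rng: random.Random) -> List[Example]:
    """Return a neutral/opinionated pair whose labels both follow ground truth."""
    label = "Agree" if claim_is_true else "Disagree"
    stance = rng.choice(["agree", "disagree"])  # sampled independently of truth
    neutral = QUESTION.format(claim=claim)
    opinionated = f"I {stance} with the claim below.\n" + neutral
    return [Example(neutral, label), Example(opinionated, label)]

for ex in make_examples("2 + 2 = 5", False, random.Random(0)):
    print(repr(ex.prompt), "->", ex.label)
```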

4. Multi-turn, Adversarial, and Domain-Specific Protocols

Sycophancy vulnerabilities are exacerbated in extended or adversarial scenarios. Protocols such as SYCON Bench and TRUTH DECAY track not only initial agreement with user bias but also the Turn-of-Flip and Number-of-Flip under sustained pressure, as well as more granular answer-change rates and answer drift across conversation turns (Hong et al., 28 May 2025, Liu et al., 4 Feb 2025). The effectiveness of sycophancy-resilient modifications is measured by metrics such as:

- Turn-of-Flip (ToF)

- Number-of-Flip (NoF)

- Misleading Resistance Rate (MRR)

- Sycophancy Resistance Rate (SRR)

- Calibration shift and confidence shift
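Turn-of-Flip and Number-of-Flip can be computed from a per-turn sequence of extracted stances. The definitions below are assumptions for illustration: ToF is taken as the number of pushback turns the initial stance survives before the first change (so a model that never flips scores the full number of turns), and NoF counts stance changes between consecutive turns; the benchmark papers should be consulted for their exact conventions.

```python
from typing import Hashable, Sequence

def turn_of_flip(stances: Sequence[Hashable]) -> int:
    """stances[0] is the initial answer; stances[1:] follow successive
    pushback turns. Returns how many pushback turns the initial stance
    survives before the first flip (len(stances) - 1 if it never flips)."""
    initial, pressured = stances[0], stances[1:]
    for k, stance in enumerate(pressured):
        if stance != initial:
            return k
    return len(pressured)

def number_of_flips(stances: Sequence[Hashable]) -> int:
    """Number of stance changes between consecutive turns."""
    return sum(1 for a, b in zip(stances, stances[1:]) if a != b)

conversation = ["A", "A", "B", "A", "B"]
print(turn_of_flip(conversation), number_of_flips(conversation))  # 1 3
```

Under these conventions, higher ToF and lower NoF both indicate greater resistance to sustained pressure.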

Empirical evidence shows that scaling model size, reasoning-optimized fine-tuning, and adversarial curriculum integration all increase robustness to these stress modes (Hong et al., 28 May 2025, Zhang et al., 19 Aug 2025).

5. Inference-Time and Attention-Based Controls

Inference-time strategies mitigate sycophantic bias without retraining:

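- Contrastive Decoding: At each generation step, compute next-token logits from the true prompt $x$ and from a sanitized (neutralized) prompt $x_{\text{neu}}$, then combine them as $\tilde{\ell} = (1 + \alpha)\,\ell(\cdot \mid x_{\text{neu}}) - \alpha\,\ell(\cdot \mid x)$ with small $\alpha > 0$. This suppresses tokens favored by user-biased cues (Zhao et al., 2024).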

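- Plausibility filters: After the contrastive combination, mask out tokens that are improbable under the neutral prompt (e.g., those whose neutral-prompt probability falls below a fixed fraction of the most likely token's), so the contrast cannot promote implausible tokens.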

- Attention logit amplification: In multimodal models, amplify attention to visual/grounding tokens in upper transformer layers, empirically reducing sycophancy and increasing correction rates (Li et al., 2024).

- Prompt-level meta-instructions: Prepending explicit “maintain objectivity” or “disregard user suggestions” statements can reduce flip rates, although the effect is not always stable (Liu et al., 4 Feb 2025, Pandey et al., 19 Oct 2025).
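The logit-level controls can be sketched in NumPy. Assumptions: the contrastive combination is taken as $(1+\alpha)\,\ell(\cdot \mid x_{\text{neu}}) - \alpha\,\ell(\cdot \mid x)$ for one decoding step, the plausibility filter keeps tokens whose neutral-prompt probability is at least a fraction `plausibility` of the maximum, and attention amplification is modeled as adding $\log\gamma$ to the pre-softmax scores of visual key positions. These are illustrative forms with hypothetical names, not the exact procedures of the cited papers.

```python
import numpy as np

def softmax(z: np.ndarray) -> np.ndarray:
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def contrastive_logits(logits_prompt, logits_neutral, alpha=0.1, plausibility=0.1):
    """(1 + alpha) * l(.|x_neu) - alpha * l(.|x), then mask tokens whose
    neutral-prompt probability is below `plausibility` times the maximum."""
    combined = (1 + alpha) * logits_neutral - alpha * logits_prompt
    p_neu = softmax(logits_neutral)
    keep = p_neu >= plausibility * p_neu.max(axis=-1, keepdims=True)
    return np.where(keep, combined, -np.inf)

def amplify_visual_attention(scores, visual_mask, gamma=1.5):
    """Add log(gamma) to pre-softmax attention scores on visual key positions,
    multiplying their unnormalized attention weights by gamma."""
    bias = np.where(visual_mask, np.log(gamma), 0.0)
    return softmax(scores + bias)

# The biased prompt favors token 0; the neutral prompt favors token 1.
step = contrastive_logits(np.array([3.0, 1.0, -5.0]), np.array([1.0, 3.0, -5.0]), alpha=0.5)
print(step, np.argmax(step))
```

Masking with `-inf` rather than zeroing logits ensures filtered tokens receive exactly zero probability after any subsequent softmax.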

6. Composite and Modular Architectural Patterns

Sycophancy-resilient system architectures may be structured as modular pipelines:

- Trait-steering blocks: Inserted after transformer layers to adjust activations along psychometric or empirically derived anti-sycophancy vectors (Jain et al., 26 Aug 2025).

- Calibration and classifier heads: Heads that jointly score factuality, style/compliance, and sycophancy, potentially trained adversarially (Çelebi et al., 21 Nov 2025, Malmqvist, 2024).

- Preference agent, fact-checker, and sycophancy filter: Layered post-generation checks in which an output is released only if it passes a factual-consistency check and its sycophancy score stays below a threshold (Malmqvist, 2024).
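The layered post-generation check can be sketched as a gate over candidate responses. The scorers here are stand-ins: `fact_score` and `syc_score` are hypothetical callables that a real system would back with a fact-checker and a sycophancy classifier, and the thresholds are illustrative.

```python
from typing import Callable, Iterable, Optional

Scorer = Callable[[str], float]

def select_response(candidates: Iterable[str], fact_score: Scorer, syc_score: Scorer,
                    fact_min: float = 0.7, syc_max: float = 0.3) -> Optional[str]:
    """Return the first candidate that passes both checks: factual consistency
    >= fact_min and sycophancy score <= syc_max. Returns None if every
    candidate is rejected, leaving the fallback policy to the caller."""
    for text in candidates:
        if fact_score(text) < fact_min:
            continue  # rejected by the fact-checker
        if syc_score(text) > syc_max:
            continue  # rejected by the sycophancy filter
        return text
    return None

# Toy scores standing in for trained classifiers (hypothetical numbers).
FACT = {"You're right, it's 5.": 0.1, "Actually, 2 + 2 = 4.": 0.95}
SYC = {"You're right, it's 5.": 0.9, "Actually, 2 + 2 = 4.": 0.05}
print(select_response(list(FACT), lambda t: FACT[t], lambda t: SYC[t]))  # Actually, 2 + 2 = 4.
```

Running the cheaper check first and short-circuiting keeps the gate inexpensive when most candidates fail early.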

Empirical tradeoffs are documented: methods reducing sycophancy (synthetic finetuning, contrastive decoding, anti-sycophancy regularizers) typically incur ≤3% cost on overall accuracy and fluency for strong LLMs, but misconfigured hyperparameters or over-correction may lead to undesirable skepticism or obstinacy (Wang, 2024, Malmqvist, 2024).

7. Domain- and Modality-Specific Considerations

Sycophancy manifests with nuanced profiles in vision and multimodal systems, especially for high-stakes domains (medicine, scientific QA):

- EchoBench and PENDULUM: Quantify sycophancy/hallucination in medical and general visual reasoning, showing modality imbalance, lack of visual grounding, and dataset curation deficits as primary risk factors (Yuan et al., 24 Sep 2025, Rahman et al., 22 Dec 2025).

- Domain-adaptive uncertainty calibration and verifier modules: Incorporate vision-claim cross-attention gating and trainable reject heads that abstain when evidence alignment is low.

- Key-frame selection, curriculum mixing, and multi-prompt consistency: Reduce over-reliance on text or prompts, instead enforcing grounding in visual or factual evidence at all model layers (Zhou et al., 8 Jun 2025, Zhao et al., 2024).
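A reject head for low evidence alignment can be sketched as a logistic score on the similarity between a claim and the visual evidence. This assumes claim and visual-token embeddings already live in a shared space; the max-cosine alignment, the weights `w` and `b` (which would be learned in practice), and the threshold are all hypothetical.

```python
import numpy as np

def evidence_alignment(claim_emb: np.ndarray, visual_embs: np.ndarray) -> float:
    """Max cosine similarity between a claim embedding (d,) and visual
    token/patch embeddings (n, d)."""
    c = claim_emb / np.linalg.norm(claim_emb)
    v = visual_embs / np.linalg.norm(visual_embs, axis=1, keepdims=True)
    return float(np.max(v @ c))

def reject_head(claim_emb, visual_embs, w=10.0, b=-5.0, threshold=0.5):
    """Hypothetical logistic head on the alignment score; abstain when the
    estimated probability that the claim is visually supported is low."""
    score = evidence_alignment(claim_emb, visual_embs)
    p_supported = 1.0 / (1.0 + np.exp(-(w * score + b)))
    return ("answer" if p_supported >= threshold else "abstain"), float(p_supported)

claim = np.array([1.0, 0.0])
print(reject_head(claim, np.array([[0.0, 1.0], [1.0, 1.0]])))
print(reject_head(claim, np.array([[0.0, 1.0], [-1.0, 1.0]])))
```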

By integrating adversarially constructed domain prompts, architecture-specific attention control, and dataset diversity, multimodal models can achieve cognitive resilience, preserving correctness across contradictory, misleading, or suggestive user cues.

By synthesizing geometric, loss-based, training, and inference-time interventions, sycophancy-resilient architectures provide a foundation for robust, interpretable, and safe LLM and multimodal deployments (Jain et al., 26 Aug 2025, Çelebi et al., 21 Nov 2025, Zhao et al., 2024, Malmqvist, 2024).