Neural Gabor Splatting: Enhanced Gaussian Splatting with Neural Gabor for High-frequency Surface Reconstruction

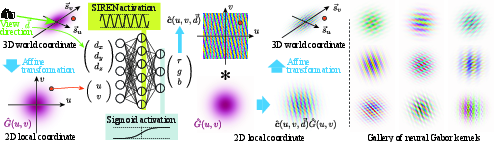

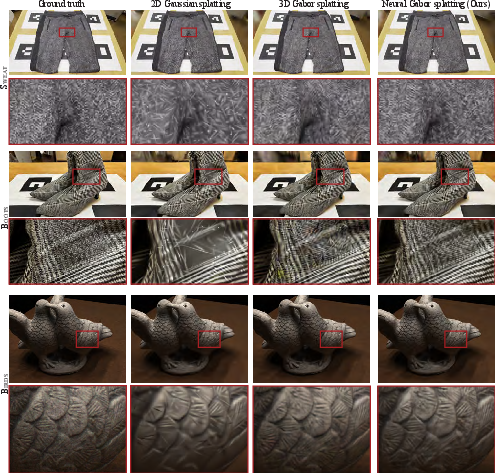

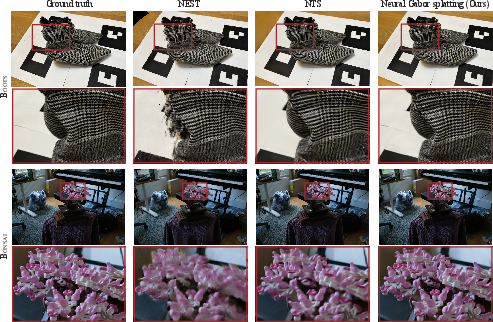

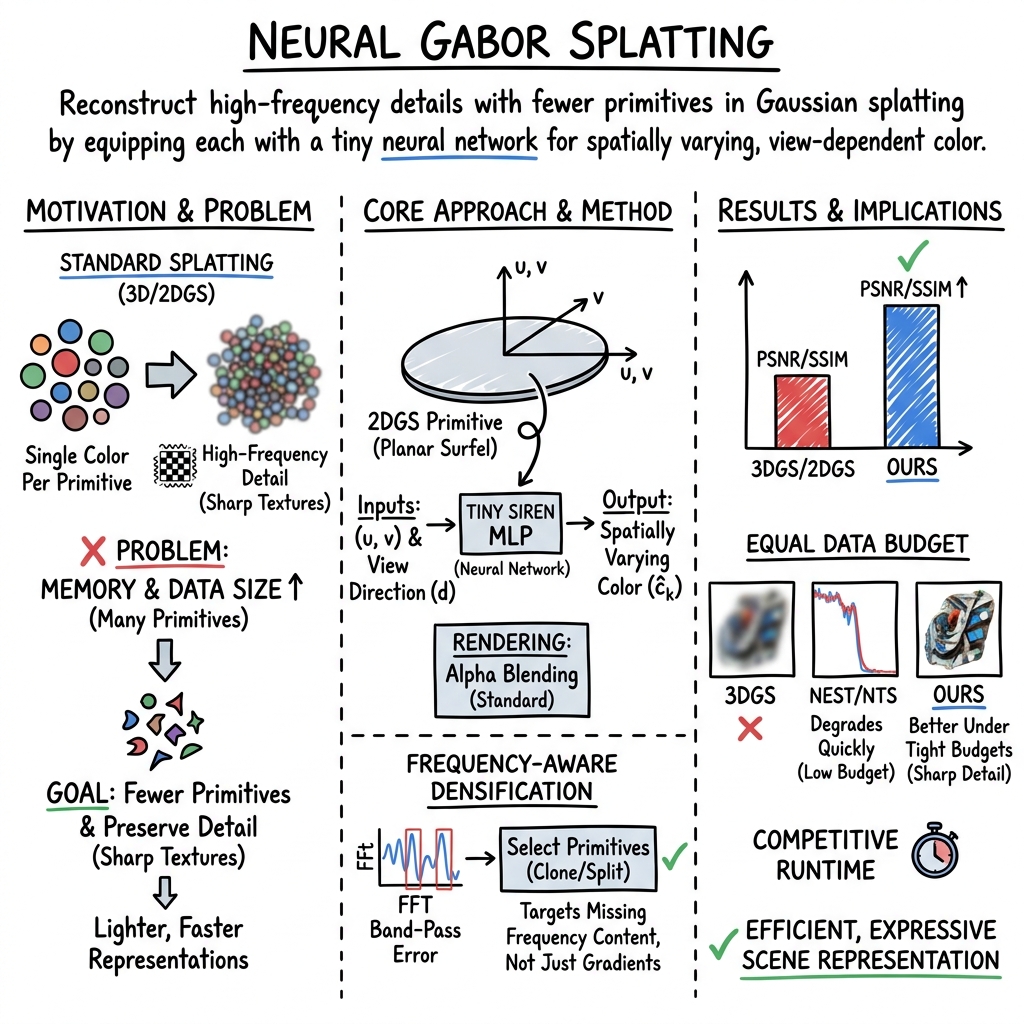

Abstract: Recent years have witnessed the rapid emergence of 3D Gaussian splatting (3DGS) as a powerful approach for 3D reconstruction and novel view synthesis. Its explicit representation with Gaussian primitives enables fast training, real-time rendering, and convenient post-processing such as editing and surface reconstruction. However, 3DGS suffers from a critical drawback: the number of primitives grows drastically for scenes with high-frequency appearance details, since each primitive can represent only a single color, requiring multiple primitives for every sharp color transition. To overcome this limitation, we propose neural Gabor splatting, which augments each Gaussian primitive with a lightweight multi-layer perceptron that models a wide range of color variations within a single primitive. To further control primitive numbers, we introduce a frequency-aware densification strategy that selects mismatched primitives for pruning and cloning based on frequency energy. Our method achieves accurate reconstruction of challenging high-frequency surfaces. We demonstrate its effectiveness through extensive experiments on both standard benchmarks, such as Mip-NeRF360, and high-frequency datasets (e.g., checkered patterns), supported by comprehensive ablation studies.


Explain it Like I'm 14

Neural Gabor Splatting — A Simple Explanation

1) What is this paper about?

This paper is about making 3D scenes (built from photos) look sharp and detailed while keeping them fast and memory‑friendly. The authors improve a popular technique called “Gaussian splatting” by letting each tiny scene element paint patterns (like stripes or checks) on itself, instead of being just one flat color. They call this new method “neural Gabor splatting.”

2) What questions are the researchers trying to answer?

The paper focuses on two big questions:

- How can we represent super fine details (like tiny text, checkerboards, fur, or thin edges) without exploding the number of tiny pieces used to build the scene?

- How can we smartly decide where to add or remove those pieces so we only spend extra effort where detail is missing?

3) How does their method work? (In everyday language)

Think of rebuilding a scene with millions of small, soft stickers (these are the “Gaussians”). In standard Gaussian splatting:

- Each sticker shows just one color across its whole surface (the color can shift with viewing angle, but it cannot form a pattern).

- To show a sharp pattern (like a checkerboard), you need lots of tiny stickers, which uses a lot of memory.

The authors’ ideas:

- Give each sticker a tiny “artist” (a very small neural network) that paints patterns directly on the sticker. Now one sticker can show stripes, checks, or other textures without needing many duplicates.

- These stickers sit on flat little patches placed on surfaces (this is based on “2D Gaussian splatting”), so the method uses simple 2D coordinates on each patch.

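- The tiny artist uses sine waves (a network type called SIREN) to draw crisp patterns. Sine waves are great at making repetitive details like stripes. This is why the paper uses the word “Gabor”: a Gabor function is a wave pattern multiplied by a soft Gaussian blob, which is exactly what you get when a sine-based pattern is painted onto a soft Gaussian sticker.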

- The color the sticker paints can change depending on where you stand and look from (view direction), so shiny effects and reflections are possible.
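To make the “tiny artist” idea concrete, here is a minimal NumPy sketch of one sticker’s color function. It is an illustration under stated assumptions, not the authors’ implementation: the surfel is described by a center `p`, tangent axes `t_u`, `t_v`, and scales `s_u`, `s_v` (2DGS-style); the network has a single hidden layer of 6 sine units with `omega0 = 30` (the value recommended in the original SIREN work); its inputs are the local (u, v) coordinates plus the view direction; and a sigmoid keeps the output in the RGB range. All names (`local_uv`, `TinySiren`, `shade`) are hypothetical.

```python
import numpy as np

def local_uv(x, p, t_u, t_v, s_u, s_v):
    """Map a 3D point on the surfel plane to scaled local (u, v) coordinates."""
    d = x - p
    return np.array([d @ t_u / s_u, d @ t_v / s_v])

class TinySiren:
    """Hypothetical per-primitive color MLP: (u, v, view_dir) -> RGB."""

    def __init__(self, in_dim=5, hidden=6, omega0=30.0, seed=0):
        rng = np.random.default_rng(seed)
        self.omega0 = omega0
        # SIREN-style initialization (first layer: U(-1/in_dim, 1/in_dim)).
        self.W1 = rng.uniform(-1.0 / in_dim, 1.0 / in_dim, (hidden, in_dim))
        self.b1 = np.zeros(hidden)
        bound = np.sqrt(6.0 / hidden) / omega0
        self.W2 = rng.uniform(-bound, bound, (3, hidden))
        self.b2 = np.zeros(3)

    def __call__(self, uv, view_dir):
        z = np.concatenate([uv, view_dir])                 # 2 + 3 = 5 inputs
        h = np.sin(self.omega0 * (self.W1 @ z + self.b1))  # sinusoidal activation
        logits = self.W2 @ h + self.b2
        return 1.0 / (1.0 + np.exp(-logits))               # assumed sigmoid: RGB in (0, 1)

def shade(x, view_dir, surfel, mlp):
    """Return the primitive's color at point x and its planar Gaussian weight."""
    uv = local_uv(x, *surfel)
    weight = np.exp(-0.5 * (uv @ uv))  # 2D Gaussian falloff on the patch
    return mlp(uv, view_dir), weight

# Usage: an axis-aligned surfel at the origin with scale 0.1 along both tangents.
surfel = (np.zeros(3), np.array([1.0, 0.0, 0.0]), np.array([0.0, 1.0, 0.0]), 0.1, 0.1)
rgb, w = shade(np.array([0.05, 0.0, 0.0]), np.array([0.0, 0.0, -1.0]), surfel, TinySiren())
```

Because the color depends on (u, v), one patch can show a stripe or check pattern; because it also depends on the view direction, that pattern can change as the camera moves.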

Smarter adding/removing of stickers:

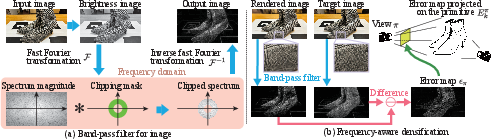

- Instead of just adding more stickers wherever the image looks wrong, they check which “frequencies” are missing—like how a music equalizer separates bass, mid, and treble.

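- They use a tool called the Fast Fourier Transform (FFT) to split both the rendered image and the real photo into low-, medium-, and high-detail parts, then compare them part by part. Where high-detail parts are missing, they add stickers; where nothing is missing, they avoid wasting extra pieces.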

- This “frequency-aware densification” helps keep the sticker count low while keeping details sharp.
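Here is a minimal sketch of that part-by-part comparison: FFT, keep one ring of frequencies (band-pass), inverse FFT, take the absolute difference, then smooth it with a local mean filter. The grayscale input, the three band cutoffs (in cycles per pixel), and the 5 x 5 box filter are assumptions made for illustration; the paper’s actual band ranges, color handling, and kernel size are not specified here.

```python
import numpy as np

def band_limit(img, lo, hi):
    """Keep spatial frequencies whose radius lies in [lo, hi): FFT -> mask -> IFFT."""
    h, w = img.shape
    fy = np.fft.fftfreq(h)[:, None]
    fx = np.fft.fftfreq(w)[None, :]
    radius = np.sqrt(fx**2 + fy**2)
    mask = (radius >= lo) & (radius < hi)
    return np.real(np.fft.ifft2(np.fft.fft2(img) * mask))

def box_mean(err, k=5):
    """Local mean filter with a k x k box window (edge-padded)."""
    pad = k // 2
    padded = np.pad(err, pad, mode="edge")
    out = np.zeros_like(err)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + err.shape[0], dx:dx + err.shape[1]]
    return out / (k * k)

# Assumed band cutoffs; the largest radius on a 2D grid is sqrt(0.5) ~ 0.707.
BANDS = {"low": (0.0, 0.05), "mid": (0.05, 0.2), "high": (0.2, 0.75)}

def band_error_maps(rendered, target, bands=BANDS, k=5):
    """Per-band, locally averaged absolute error between render and ground truth."""
    return {
        name: box_mean(np.abs(band_limit(rendered, lo, hi) - band_limit(target, lo, hi)), k)
        for name, (lo, hi) in bands.items()
    }

# Usage: the finest checkerboard vs. a "blurry" render with the right average color.
y, x = np.mgrid[0:32, 0:32]
target = ((x + y) % 2).astype(float)
rendered = np.full_like(target, 0.5)
errors = band_error_maps(rendered, target)
```

In this toy case the low and mid bands show no error, while the high band shows an error of 0.5 everywhere, so only the high-detail part would ask for more stickers.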

4) What did they find, and why is it important?

Main results:

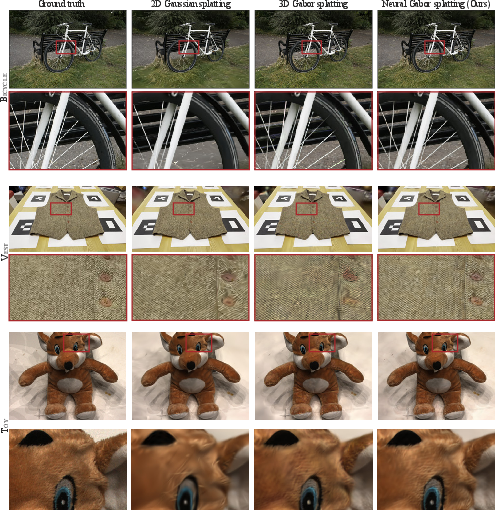

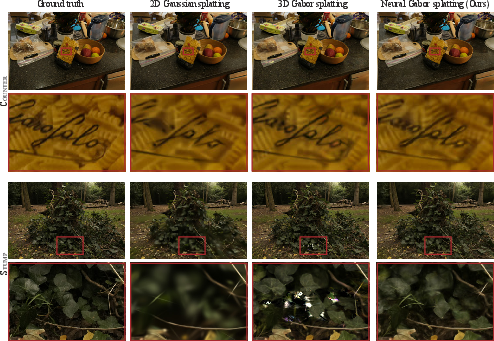

- Sharper details with the same memory: Their method makes fine textures (like checkered patterns) much clearer without needing tons of stickers.

- Fewer “needle-like” clutter pieces: Standard methods often create many thin, spiky elements to fake detail. Their stickers can paint details directly, so the scene stays cleaner.

- Works well on tough datasets: It performs strongly on both everyday scenes (like Mip‑NeRF360) and special “high-frequency” scenes full of tiny details.

- Efficient trade-offs: Training takes longer (about twice as long as the 2D Gaussian splatting baseline), but rendering is still fast (dozens to hundreds of frames per second). Under tight memory limits, it holds up better than other advanced “neural splatting” methods.

Why it matters:

- You get better-looking 3D scenes without needing a huge amount of memory.

- It stays practical for real-time uses like AR/VR, games, and interactive 3D viewing.

5) What’s the impact, and what’s next?

Implications:

- This approach helps 3D apps show small, crisp details with fewer building blocks, saving memory and keeping things fast.

- It can improve the quality of virtual tours, 3D scanning, and any app that turns photos into 3D you can look around in.

Limitations and future directions:

- It’s focused on solid surfaces; it doesn’t directly handle fog, smoke, or moving scenes.

- Each sticker has a tiny neural network, which adds some computation.

- Future work could share those tiny networks across stickers or compress them further to save more memory and speed up training.

Overall, “neural Gabor splatting” teaches each little piece of a 3D scene to paint its own detailed patterns. That way, scenes look sharper and cleaner without needing millions of pieces to fake fine textures.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a focused list of concrete gaps and unresolved questions that future work could address:

- Memory–capacity accounting: Provide a rigorous, per-primitive memory budget comparing MLP weights (SIREN, ~6 hidden units) vs SH/color parameters in 2D/3DGS, and quantify when the added MLP cost is offset by reduced primitive counts across scenes.

- Capacity scaling laws: Characterize how hidden size, depth, and ω0 in SIREN affect quality, primitive count, and throughput; derive guidelines for choosing per-primitive capacity under a fixed memory budget.

- Adaptive capacity allocation: Replace fixed-size per-primitive MLPs with adaptive mechanisms (e.g., sparsity, learned neuron gating, rank reduction) that allocate capacity where needed and shrink it elsewhere.

- Parameter sharing/compression: Explore codebooks, shared decoders, low-rank factorization, or weight quantization to reduce on-disk and GPU memory footprint of per-primitive MLPs without sacrificing quality.

- Training stability and sensitivity: Systematically study sensitivity to SIREN initialization, ω0, learning rates, and activation choices to prevent ringing/overfitting common in high-frequency SIREN models.

- View-direction regularization: Add priors or losses to prevent per-primitive MLPs from memorizing training viewpoints; quantify generalization under sparse views and novel path extrapolations.

- Physical reflectance modeling: Investigate hybrid models that constrain or supervise the MLP with BRDF priors (microfacet or anisotropic lobes), or factorize albedo vs lighting to enable relighting.

- Anti-aliasing for neural colors: Integrate alias-free rendering (e.g., Mip-Splatting) with per-primitive MLP outputs; develop anti-aliasing or frequency clamping to suppress moiré/ringing at high frequencies.

- Inter-primitive continuity: Introduce cross-primitive consistency losses or shared latent fields to mitigate seams or pattern discontinuities across adjacent surfels.

- Function-aware splitting: Current cloning copies weights; design splitting strategies that partition the learned function (e.g., spatial frequency or orientation) so clones diversify rather than duplicate.

- Frequency-aware densification design: Justify and ablate FFT band choices, per-channel vs luminance error, color space, averaging kernel size, and thresholding; assess robustness across resolutions and camera baselines.

- Error attribution at occlusions: Analyze how frequency-domain errors near depth/occlusion boundaries are projected to primitives; mitigate misattribution that may cause spurious densification.

- Efficient frequency metrics: Replace global FFT with localized multi-scale/steerable filters or wavelets for faster and directionally sensitive band error estimation; quantify accuracy–speed trade-offs.

- Densification scheduling: Study adaptive schedules (cadence, view sampling count, camera selection) instead of fixed “every 100 iters and 20 views” to balance convergence speed and overhead.

- Geometry impact and mesh extraction: Measure how per-primitive MLPs affect geometry accuracy (depth, normals), mesh extraction, and texture baking; propose pipelines to bake neural colors into texture atlases.

- Volumetric and translucent media: Extend beyond planar surfels to handle participating media, subsurface scattering, and semi-transparent materials; assess compatibility with volumetric splatting.

- Dynamic scenes (4D): Generalize to temporal dimensions with per-primitive temporal MLPs or shared spatiotemporal decoders; enforce temporal coherence and evaluate flicker/stability.

- Robustness to data imperfections: Evaluate sensitivity to calibration noise, rolling shutter, motion blur, and photometric inconsistencies; design robust losses or data augmentation to stabilize frequency-driven densification.

- Fairness and breadth of comparisons: Provide comprehensive rate–distortion curves across more scenes/material types and stronger baselines (e.g., textured Gaussians with tuned capacity), with clearly matched memory budgets (including MLP weights).

- Runtime scalability: Report systematic FPS scaling with primitive count, image resolution, and MLP size; explore kernel fusion, vectorized batched MLP evaluation, and hardware-friendly approximations for mobile/AR.

- Storage vs runtime memory: Distinguish serialized footprint from training/inference GPU memory (including optimizer states and gradients for per-primitive MLPs); evaluate mixed precision and optimizer choices.

- Loss design: Augment L1+SSIM with frequency-domain losses aligned to densification bands, regularization in view-direction space, and perceptual priors to reduce high-frequency artifacts.

- Input features to MLP: Assess benefits of adding local surface frame (normal, tangent), curvature, or learned latent codes to improve view-dependent modeling and disentanglement.

- Initialization without SfM: Study robustness under weak or noisy SfM, or initialize primitives without SfM using learned priors or coarse neural fields before splatting.

- Adversarial/computation-cost robustness: Evaluate susceptibility to adversarial textures (e.g., Poison-Splat) that inflate compute via high-frequency content; design safeguards or regularizers.

- Hyperparameter auto-tuning: Develop automated methods to set band ranges, thresholds, and MLP sizes per scene based on online diagnostics (e.g., spectral energy statistics).

- Theoretical expressivity bounds: Analyze what classes of patterns a 6-neuron SIREN can represent over a surfel’s domain, and when additional primitives vs larger MLPs are theoretically preferable.
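The memory–capacity accounting question in the first item can be framed with simple arithmetic. The sketch below counts per-primitive parameters under assumptions the source does not confirm: the SIREN takes (u, v) plus a 3D view direction as input, has a configurable number of hidden layers of width 6, and outputs RGB; the baseline is 2DGS with degree-3 spherical harmonics (16 coefficients per channel, as in standard 3DGS); and the MLP fully replaces the SH coefficients. The helper names are hypothetical.

```python
def mlp_params(in_dim=5, hidden=6, layers=1, out_dim=3):
    """Weights + biases of a fully connected MLP with `layers` hidden layers."""
    dims = [in_dim] + [hidden] * layers + [out_dim]
    return sum(a * b + b for a, b in zip(dims[:-1], dims[1:]))

def sh_params(degree=3, channels=3):
    """Spherical-harmonic color coefficients per primitive."""
    return (degree + 1) ** 2 * channels

# Assumed 2DGS geometry: position (3) + quaternion (4) + two scales (2) + opacity (1).
GEOMETRY_2DGS = 3 + 4 + 2 + 1

def break_even_fraction(layers=1):
    """Fraction of the baseline's primitive count at which both use equal parameters."""
    baseline = GEOMETRY_2DGS + sh_params()
    neural = GEOMETRY_2DGS + mlp_params(layers=layers)
    return baseline / neural

print(mlp_params(), sh_params(), round(break_even_fraction(), 3))
print(mlp_params(layers=2), round(break_even_fraction(layers=2), 3))
```

Under these assumptions, a one-hidden-layer MLP (57 parameters) breaks even once the method needs fewer than about 87% (58/67) of the baseline’s primitives, and a two-hidden-layer MLP (99 parameters) once it needs fewer than about 53% (58/109). Optimizer states and gradients, raised in the storage vs runtime memory item, would scale these training-time numbers further.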

Practical Applications

Overview

Based on the paper “Neural Gabor Splatting: Enhanced Gaussian Splatting with Neural Gabor for High-frequency Surface Reconstruction,” the following applications distill practical uses of two core contributions: (1) per-primitive tiny MLPs (SIREN) that model spatially varying, view-dependent color within each Gaussian/planar primitive to represent high-frequency details with fewer primitives, and (2) a frequency-aware densification strategy that allocates primitives by band-limited frequency errors for better budget-quality trade-offs.

Below, applications are grouped by immediacy, linked to sectors, and include key assumptions/dependencies where feasibility might be affected.

Immediate Applications

These can be deployed now with the released code and commodity GPUs (e.g., RTX 30-series), for static scenes and high-frequency surfaces.

- High-fidelity 3D asset capture for games/VFX

- Sector: Media/Entertainment, Software

- What: Capture and render static sets/props with fine textures (e.g., fabrics, checkered patterns, thin-painted edges) using fewer primitives while maintaining real-time playback. Better handling of specular/view-dependent effects than single-color splats.

- Tools/workflows:

- “Neural Gabor Splat SDK” integrated into DCC tools and engines (Unreal/Unity) for import/export of 2DGS models with per-primitive MLPs.

- Cloud or local training pipeline: multi-view capture → SfM → neural Gabor training → engine-ready asset.

- Assumptions/dependencies: Static scenes; multi-view, calibrated images (or SfM poses); desktop-class GPU for training; engine-side rasterization support for per-primitive MLP evaluation or offline baking.

- Photoreal 3D product digitization for e-commerce and marketing

- Sector: Retail, Advertising/Marketing

- What: Lightweight, web-deployable 3D assets that preserve small prints, stitching, embossed logos, and glossy finishes under view changes, reducing model size compared to 3DGS for the same fidelity.

- Tools/workflows:

- WebGPU/WebGL viewer that supports per-primitive neural evaluation or pre-baked texture atlases derived from neural Gabor primitives.

- Product scanning kits for small businesses: phone capture → SfM → training → CDN-friendly asset.

- Assumptions/dependencies: Browser/WebGPU availability or server-side baking to textures; care with IP/privacy when scanning real products.

- Virtual tours and showrooms (real estate, museums) with fine surface detail

- Sector: Real Estate, Cultural Heritage, Education

- What: Interactive walkthroughs that render high-frequency surfaces (e.g., parquet grain, tile grout, artifacts’ inscriptions) at real-time speed and modest memory budgets.

- Tools/workflows: Capture rigs (mirrorless cameras or phones), batch training in the cloud, and a lightweight viewer.

- Assumptions/dependencies: Static indoor/outdoor captures; viewer decoding support for neural splats or pre-baked representations.

- Shiny/anisotropic material showcases

- Sector: Design/Manufacturing, Advertising

- What: Demonstrations of metallic paints, brushed metal, or glossy varnishes where view-dependent appearance is critical; neural per-primitive shading encodes specularity without heavy SH/texture overhead.

- Tools/workflows: “Material showcase” microsites or in-app viewers; asset pipeline that preserves per-primitive view-conditioning or bakes it into reflectance proxies.

- Assumptions/dependencies: Lighting kept similar to capture (method isn’t relightable); view-dependence captured during training.

- Compression-conscious 3D web experiences

- Sector: Software, Web Platforms

- What: Under strict bandwidth or memory budgets, frequency-aware densification prioritizes visually critical high-frequency areas to preserve perceived quality at low footprints.

- Tools/workflows: Progressive transmission prioritizing high-frequency regions; optional server-side density scheduling for budget tiers (1–20%).

- Assumptions/dependencies: Client viewer must support the chosen representation (neural primitives or pre-baked textures/meshes).

- Rapid scene previews and previsualization

- Sector: Media/Entertainment, Architecture/Design

- What: Previews that preserve high-frequency detail and are faster than NeRF-like methods, rendering in real time at 30–500 FPS on an RTX 3090, enabling iterative creative decisions on set or at the desk.

- Tools/workflows: Capture → train (about 2× the training time of 2DGS) → real-time preview; integration with previz tools.

- Assumptions/dependencies: Static geometry; GPU availability; longer training than 2DGS but still practical.

- Enhanced surface-oriented mesh extraction

- Sector: CAD Visualization, Design Review

- What: Combine with surface-aligned splat-to-mesh pipelines to extract sharper textures into mesh-based assets for CAD or downstream rendering.

- Tools/workflows: SuGaR or similar mesh reconstruction → texture baking from neural Gabor splats to UVs.

- Assumptions/dependencies: Baking pipeline required; neural-to-texture conversion loses some view-dependence.

- Research acceleration in neural rendering and primitive design

- Sector: Academia/Research

- What: A testbed for per-primitive neural shaders, SIREN activations for high frequencies, and frequency-aware densification policies; useful for benchmarking under strict memory budgets.

- Tools/workflows: Open-source code; Mip-NeRF360 and high-frequency datasets; ablation-ready modules (densification, opacity reset).

- Assumptions/dependencies: GPU resources; familiarity with 2DGS/3DGS codebases.

- Training efficiency improvements for existing splatting pipelines

- Sector: Software/Rendering Tools

- What: Drop-in frequency-aware densification for 3DGS/2DGS pipelines, reducing overgrowth in low-impact regions and focusing capacity on missing high-frequency bands.

- Tools/workflows: FFT/IFFT modules integrated into training loops; per-band error maps to guide cloning/pruning.

- Assumptions/dependencies: Adds minor GPU overhead; requires tuning band ranges and thresholds per dataset.
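Per-band error maps only guide cloning and pruning once pixel errors are charged to the primitives that produced them. One common way to do this in error-based densification is to weight each pixel’s error by every primitive’s alpha-blending contribution at that pixel and sum. The sketch below illustrates that general idea, not the paper’s exact rule: it assumes the contributions are already available as a dense (primitives x pixels) array, whereas a real rasterizer would accumulate them sparsely on the GPU, and it uses a hypothetical top-k rule to pick clone candidates.

```python
import numpy as np

def primitive_errors(contrib, pixel_error):
    """contrib[i, p] = alpha_i * T_i, the blending weight of primitive i at pixel p.
    Returns each primitive's contribution-weighted share of the pixel error."""
    return contrib @ pixel_error

def select_for_cloning(contrib, pixel_error, k):
    """Indices of the k primitives with the largest attributed error (hypothetical rule)."""
    scores = primitive_errors(contrib, pixel_error)
    return np.argsort(scores)[::-1][:k]

# Usage: 3 primitives over 4 pixels; the error could come from a high-band error map.
contrib = np.array([
    [0.90, 0.90, 0.00, 0.00],
    [0.00, 0.00, 0.80, 0.10],
    [0.05, 0.00, 0.10, 0.90],
])
pixel_error = np.array([0.0, 0.0, 1.0, 0.2])
chosen = select_for_cloning(contrib, pixel_error, k=2)
```

Primitive 0 covers only error-free pixels, so it receives no error and is not selected, even though it dominates two pixels.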

Long-Term Applications

These require further R&D (e.g., parameter sharing, mobile optimization, dynamic/volumetric extensions) before broad deployment.

- On-device AR rendering and capture with neural splats

- Sector: AR/VR, Mobile

- What: Real-time, high-fidelity AR assets on smartphones/AR headsets with strong detail preservation and low memory footprints.

- Tools/products:

- Mobile-optimized per-primitive networks (e.g., codebooks/weight sharing, quantization).

- Neural-primitive runtime for Metal/Vulkan/WebGPU with SIMD-friendly SIREN kernels.

- Assumptions/dependencies: Significant optimization for mobile GPUs; energy constraints; robust on-device SfM and training or cloud offloading.

- Dynamic/4D neural splats capturing hair/fur/clothing detail

- Sector: Telepresence, Avatars, Entertainment

- What: Extend per-primitive neural shading to time-varying scenes (4D splatting) to preserve high-frequency dynamics (e.g., fabric textures, hair) while keeping memory manageable.

- Tools/workflows: Integration with 4DGS pipelines; temporal parameter sharing; frequency-aware densification across time.

- Assumptions/dependencies: New temporal regularizers; streaming-friendly parameterization; increased compute budget.

- Standardized “neural primitive” scene formats and tooling

- Sector: Software Standards, Policy

- What: An interchange format for scenes with per-primitive neural weights, enabling cross-engine portability and archival.

- Tools/products: glTF-like extension or a new “Neural Primitive Format”; validators; viewers.

- Assumptions/dependencies: Consensus across engine vendors; versioning for safety/security; IP rights for scanned environments.

- Edge–cloud cooperative streaming of neural splats

- Sector: Cloud/Edge Computing, Telecom

- What: Progressive, frequency-aware streaming: edge devices request high-frequency bands first for perceptual quality under low bandwidth; cloud does heavy training/decoding.

- Tools/workflows: Adaptive streaming protocols; on-the-fly band prioritization; fallbacks to baked textures when compute is constrained.

- Assumptions/dependencies: Network QoS; server infrastructure; efficient prioritization policies.

- Relightable and material-aware neural splats

- Sector: Design/Manufacturing, E-commerce

- What: Incorporate illumination and material models (BRDFs) so assets can be relit while preserving detail; per-primitive MLPs approximate reflectance, not only view-conditioned color.

- Tools/workflows: Joint reflectance/illumination estimation; light probes during capture; training losses separating light and material.

- Assumptions/dependencies: More complex training; potential increase in per-primitive capacity; careful generalization.

- Robotics/digital twin simulators with photoreal fine-detail

- Sector: Robotics, Industrial IoT

- What: High-frequency realistic environments for perception training (e.g., reading labels/markings, texture-rich surfaces); frequency-aware resource allocation for scalable worlds.

- Tools/workflows: Conversion of captured sites to neural-splat scenes; simulator plugins; optional mesh/raster fallback for physics.

- Assumptions/dependencies: Static or mostly static environments; integration with physics engines; limits of purely visual fidelity for dynamics.

- Privacy and authenticity policy frameworks for photoreal scans

- Sector: Policy/Compliance

- What: Guidelines for capture rights, personal data in high-frequency scans (e.g., serial numbers, personal items), watermarking/authenticity of neural-splat assets.

- Tools/workflows: Automated redaction of sensitive high-frequency regions using frequency-aware error maps; provenance tags in neural-primitive files.

- Assumptions/dependencies: Legal harmonization across jurisdictions; adoption by platforms hosting 3D content.

- Cross-modal capture (LiDAR + neural splats) for resilient reconstructions

- Sector: AEC (Architecture, Engineering, Construction), Surveying

- What: Fuse depth sensors with neural Gabor splats to stabilize geometry while preserving fine texture; improved mesh extraction for BIM.

- Tools/workflows: Joint optimization pipelines; geometry-guided densification; asset baking for CAD tools.

- Assumptions/dependencies: Sensor calibration; handling of reflective/transparent surfaces; increased pipeline complexity.

- Neural-primitive compression codecs and codebook sharing

- Sector: Software, Telecom

- What: Parameter sharing across primitives (e.g., dictionary of tiny MLPs) for dramatic reductions in memory/stream size while preserving detail.

- Tools/workflows: Learned codebooks; quantization/pruning; hardware-aware kernels.

- Assumptions/dependencies: Research into rate–distortion for neural parameters; hardware support for fast dequantization.

- Assistive content creation (consumer scanning to high-quality assets)

- Sector: Creator Economy, Daily Life

- What: Consumer-friendly scanning apps that produce detailed 3D assets for marketplaces, social platforms, or personal archives with fewer artifacts in fine textures.

- Tools/workflows: Capture guidance UI (frequency coverage hints), cloud training, one-click publishing to viewers.

- Assumptions/dependencies: Simplified UX; automated pose estimation; platform-side viewers.

Key Cross-cutting Assumptions and Dependencies

- Scene type: Static, surface-dominant scenes; the paper explicitly notes limitations for volumetric phenomena and dynamic scenes.

- Capture: Requires multi-view, calibrated images (or reliable SfM) covering high-frequency regions sufficiently.

- Compute: Training takes about 2× as long as 2DGS; inference is real-time on desktop GPUs (30–500 FPS on an RTX 3090). Mobile or embedded deployment needs further optimization.

- Rendering stack: Viewers/engines must either support per-primitive MLP evaluation in the splatting pipeline or provide a baking path to textures/meshes.

- Lighting: Method models view dependence but not arbitrary relighting; consistent or captured lighting conditions yield best results.

- Band-limited densification: FFT-based error computation adds minor overhead; thresholds and bands may require scene-specific tuning.

- Data budgets: Benefits are most pronounced under tight memory/bandwidth budgets and in high-frequency areas; gains may be smaller in low-frequency scenes.

Glossary

- 2D Gaussian splatting: A radiance field representation that models each primitive as a planar Gaussian and computes exact ray-plane intersections for rasterization. "the 2D Gaussian splatting framework represents each primitive as a planar (flat) Gaussian distribution"

- 3D Gabor Splatting: A variant of splatting that replaces Gaussians with Gabor kernels to directly encode high-frequency patterns. "3D Gabor Splatting~\cite{watanabe20253d} and 3DGabSplat~\cite{zhou20253dgabsplat} replace Gaussians with augmented Gabor kernels"

- 3D Gaussian splatting (3DGS): A point-based, explicit scene representation using 3D Gaussian primitives for fast training and real-time rendering. "Since the introduction of 3D Gaussian splatting (3DGS)~\cite{kerbl3Dgaussians}"

- ablation studies: Controlled experiments that remove or modify components to analyze their impact on performance. "supported by comprehensive ablation studies"

- affine transformation: A linear mapping (plus translation) used here to convert 3D points to a primitive’s local 2D coordinates. "2D Gaussian splatting primitive's affine transformation from 3D space"

- alpha blending: Compositing technique that accumulates colors and opacities of overlapping primitives from front to back. "represents a scene with a set of Gaussian primitives and rasterizes them with alpha blending"

- alpha-compositing loss: A training objective that penalizes errors in alpha-based image compositing. "we did not adopt their alpha-compositing loss"

- anisotropic effects: View-dependent reflectance behaviors that vary with direction, not equally in all orientations. "complex specular and anisotropic effects"

- atomicAdd operations: GPU-side atomic additions used here during training to safely accumulate parallel weight updates. "mainly due to the increased number of atomicAdd operations required for weight updates"

- band-limited frequency components: Portions of an image’s spectrum restricted to specific frequency ranges. "Band-limited frequency components are extracted from both the rendered image and the ground truth"

- band-pass filtering: Filtering that isolates a specific range of frequencies while removing lower and higher bands. "using FFT, band-pass filtering, and inverse FFT"

- cloning: Duplicating primitives (with copied parameters) to increase capacity in regions with high error. "primitives with high errors are selected for cloning or splitting"

- covariance matrix: A positive semi-definite matrix defining the spread and orientation of a Gaussian primitive. "[…] is the positive semi-definite covariance matrix"

- deferred neural rendering: A pipeline that stores neural features in textures and decodes them to colors only for visible pixels at render time. "deferred neural rendering (i.e., neural textures)"

- densification: The process of increasing the number of primitives in challenging regions to reduce reconstruction error. "The superior rendering quality of 3DGS primarily arises from its adaptive densification strategy"

- error-based densification: A densification method that projects image-space errors back to primitives to decide where to clone or split. "We introduce a frequency-aware densification strategy (see~Fig.~\ref{fig:frequency_map}), inspired by the error-based densification method"

- Fast Fourier Transform (FFT): An efficient algorithm to compute the discrete Fourier transform of an image or signal. "We use Fast Fourier Transform (FFT) and its inverse (IFFT)"

- frequency domain: A representation of signals in terms of their constituent frequency components. "we define in the frequency domain"

- frequency-aware densification strategy: A densification scheme that targets under-represented frequency bands by using band-limited error maps. "we introduce a frequency-aware densification strategy"

- Gabor kernels: Sinusoidal functions modulated by Gaussians, used as basis functions for high-frequency pattern representation. "replaced Gaussians with 3D Gabor kernels"

- Gabor noise: A procedural noise based on Gabor functions, offering controllable frequency content. "3D Gabor splatting is constrained by the properties of Gabor noise"

- Gaussian primitive: A single splatting element defined by position, scale, orientation, opacity, and color (or learned appearance). "Each Gaussian primitive has spherical-harmonic coefficients for a view-dependent RGB expression"

- gradual opacity reset: A training heuristic that slowly adjusts primitive opacity instead of abrupt resets to stabilize optimization. "we replaced the hard opacity reset with the gradual opacity reset"

- Half-Gaussian splatting: A technique using half-Gaussian shapes to better capture sharp edges and discontinuities. "Li et al.~\cite{li2025hgs} introduced Half-Gaussian splatting to capture sharp edges"

- hash grid: A compact multi-resolution feature grid indexed via hashing; here it serves as the appearance representation of a compared method, whose resolution is lowered to meet a memory budget. "by lowering the resolution of the hash grid and tri-plane, respectively"

- inverse Fourier Transform (IFFT): The operation that converts frequency-domain data back to the spatial domain. "We use Fast Fourier Transform (FFT) and its inverse (IFFT)"

- local mean filtering: Averaging over a neighborhood to reduce noise and stabilize error measures. "followed by a local mean filtering operator to build a robust error metric"

- LPIPS: A learned perceptual similarity metric that measures perceptual differences between images. "Table~\ref{tab:metrics} reports PSNR, SSIM, and LPIPS scores"

- multi-layer perceptron (MLP): A feedforward neural network used here per primitive to map local coordinates and view direction to color. "we introduce a lightweight MLP that predicts RGB values from the primitive’s local coordinates and view direction"

- neural radiance fields (NeRF): A representation that models scene appearance and density with an MLP and renders via volumetric integration. "Compared to the neural radiance fields (NeRF)~\cite{mildenhall2020nerf}"

- neural textures: Learnable texture features decoded by neural networks for efficient rendering of rich appearance. "deferred neural rendering (i.e., neural textures)"

- opacity: A per-primitive parameter controlling how much a primitive contributes to the final pixel color. "[…] is the opacity of the primitive"

- opacity correction: A procedure to adjust newly cloned or split primitives’ opacities to maintain consistent compositing. "opacity correction follows~\cite{rota2024revising}"

- positional encoding: A technique to inject high-frequency signals into neural networks by encoding input coordinates. "NeRF leverages positional encoding to represent high-frequency details"

- PSNR: Peak Signal-to-Noise Ratio, a standard metric for image reconstruction fidelity. "Table~\ref{tab:metrics} reports PSNR, SSIM, and LPIPS scores"

- radiance field: A function describing emitted light in 3D space as a function of position and viewing direction. "NeRF~\cite{mildenhall2020nerf, tancik2020fourier} models a continuous radiance field"

- rasterizes: Converts primitives into pixel contributions during rendering, here via analytical splatting. "represents a scene with a set of Gaussian primitives and rasterizes them with alpha blending"

- ray marching: Numerical integration along camera rays through a volume, used by NeRF for rendering. "which represent a radiance field with a multi-layer perceptron (MLP) and require costly volumetric ray marching"

- SIREN: A neural network architecture using sinusoidal activations to represent high-frequency signals. "a SIREN activation function"

- spherical-harmonic (SH) bases: Orthogonal basis functions on the sphere used for compact, view-dependent appearance modeling. "without requiring explicit spherical-harmonic (SH) bases"

- Structure-from-Motion (SfM): A technique to recover camera poses and a sparse 3D point cloud from images. "The initial points were randomly sampled from the structure-from-motion (SfM) point cloud"

- transmittance: The accumulated fraction of light not yet absorbed by previous primitives along a ray. "[…] is the transmittance (influence of […]-th kernel on the screen)"

- tri-plane: A tri-orthogonal plane parameterization used to store neural features compactly for 3D fields. "by lowering the resolution of the hash grid and tri-plane, respectively"

- view-dependent appearance: Appearance that changes with the viewing direction due to reflectance properties. "view-dependent appearance within a single primitive"

- specular: Reflective components that produce highlights and sharp view-dependent effects on surfaces. "effectively learning complex specular and anisotropic effects"
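The entries for alpha blending, opacity, and transmittance fit together in one formula. Assuming the standard front-to-back compositing used by 3DGS-style rasterizers, a pixel’s color is C = sum_i c_i * alpha_i * T_i with T_i = prod_{j<i} (1 - alpha_j), where alpha_i is the primitive’s opacity times its Gaussian falloff at that pixel. In neural Gabor splatting, each c_i would come from the primitive’s MLP instead of SH coefficients. The sketch below composites samples that are already depth-sorted; the early-termination threshold `t_min` is an assumed value.

```python
import numpy as np

def composite(colors, alphas, t_min=1e-4):
    """Front-to-back alpha blending of depth-sorted samples at one pixel.
    colors: (N, 3) per-primitive RGB; alphas: (N,) effective opacities."""
    pixel = np.zeros(3)
    transmittance = 1.0
    for c, a in zip(colors, alphas):
        pixel += c * a * transmittance  # contribution c_i * alpha_i * T_i
        transmittance *= 1.0 - a       # light left for the primitives behind
        if transmittance < t_min:       # assumed early-termination threshold
            break
    return pixel, transmittance

# Usage: a half-transparent red splat in front of a half-transparent blue one.
pixel, T = composite(np.array([[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]]), np.array([0.5, 0.5]))
```

The front splat contributes 0.5 of its red; the back splat only 0.5 x 0.5 = 0.25 of its blue, and 0.25 of the light passes through both.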
