- The paper reveals that the Canadian AI Register prioritizes technical features while occluding key sociotechnical and discretionary elements.

- It employs mixed-methods analysis with deductive coding and the ADMAPS framework to dissect algorithmic accountability in the public sector.

- The study identifies significant gaps in data provenance and transparency, questioning the effectiveness of register-based oversight mechanisms.

Bureaucratic Silences in Algorithmic Governance: A Critical Analysis of the Canadian AI Register

Introduction

"Bureaucratic Silences: What the Canadian AI Register Reveals, Omits, and Obscures" (2604.15514) develops an empirical and critical discourse analysis of the inaugural Canadian Federal AI Register, operationalized in November 2025. Employing the ADMAPS framework, the paper interrogates not only the dataset of 409 systems disclosed in the Register but also the epistemic and ontological implications of register-based algorithmic accountability regimes in the public sector. The analysis identifies a sharp disjuncture between official transparency rhetoric and the performative, technical, and tool-centric accountability that such registers instantiate. Instead of ensuring contestability and grounded oversight, the current design and operationalization of the Canadian AI Register predominantly foreground technical system features, obscuring critical sociotechnical infrastructures, discretionary loci, and domains of uncertainty.

Analytical Framework and Methodology

The study conducts a mixed-methods investigation, using deductive coding and critical discourse analysis grounded in ADMAPS. The ADMAPS framework parses public sector algorithmic governance as an interplay between algorithmic decision-making, bureaucratic processes, and human discretion, enabling a systematic analysis of how responsibility, uncertainty, and agency are reified or effaced in Register entries. The researchers emphasize what the dataset renders explicit and what it omits, scrutinizing technical capabilities, data provenance, system status, and the distribution of governance across organizations, developers, and jurisdictions.
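To make the deductive coding step concrete, the following is a minimal sketch of how a fixed codebook could be applied to Register descriptions. The three code names mirror the ADMAPS dimensions named above, but the keyword lists, function names, and example sentences are hypothetical illustrations, not the authors' actual codebook or data.

```python
from collections import Counter

# Hypothetical codebook: one code per ADMAPS dimension, each with
# illustrative keywords. These are assumptions, not the paper's codes.
CODEBOOK = {
    "algorithmic_decision_making": ["model", "prediction", "classif", "llm"],
    "bureaucratic_processes": ["workflow", "policy", "procedure", "training"],
    "human_discretion": ["review", "judgment", "officer", "human-in-the-loop"],
}


def code_entry(description):
    """Return the set of codes whose keywords appear in a description."""
    text = description.lower()
    return {
        code
        for code, keywords in CODEBOOK.items()
        if any(keyword in text for keyword in keywords)
    }


def silent_dimensions(description):
    """Return the codes that a description never touches (its 'silences')."""
    return set(CODEBOOK) - code_entry(description)


def code_frequencies(descriptions):
    """Count how many descriptions were assigned each code."""
    counts = Counter()
    for description in descriptions:
        counts.update(code_entry(description))
    return counts


# Invented example sentences, used only to exercise the functions.
EXAMPLES = [
    "An LLM summarizes briefing documents for staff.",
    "Officers review risk predictions before a final decision.",
]

if __name__ == "__main__":
    for text in EXAMPLES:
        print(sorted(code_entry(text)), "| silent:", sorted(silent_dimensions(text)))
    print(code_frequencies(EXAMPLES))
```

Keyword matching is only a crude stand-in for human coders working from a codebook; the point of the sketch is that deductive coding starts from predefined categories, so an entry's omissions can be read off as the categories it never activates.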

Representation of Algorithmic Systems

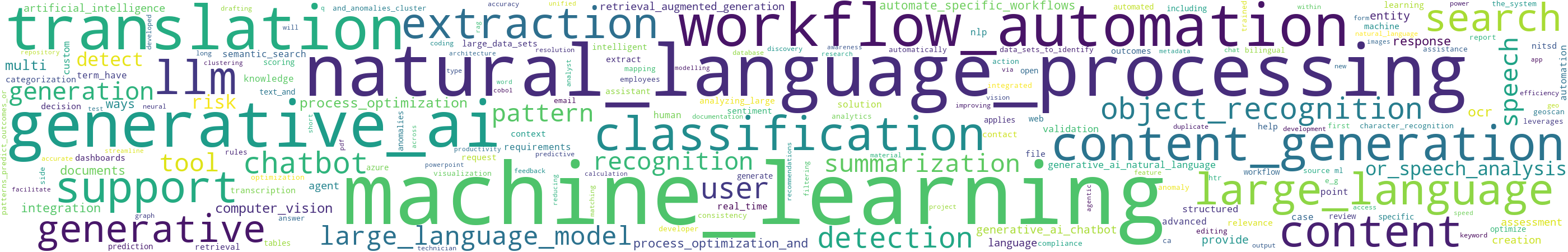

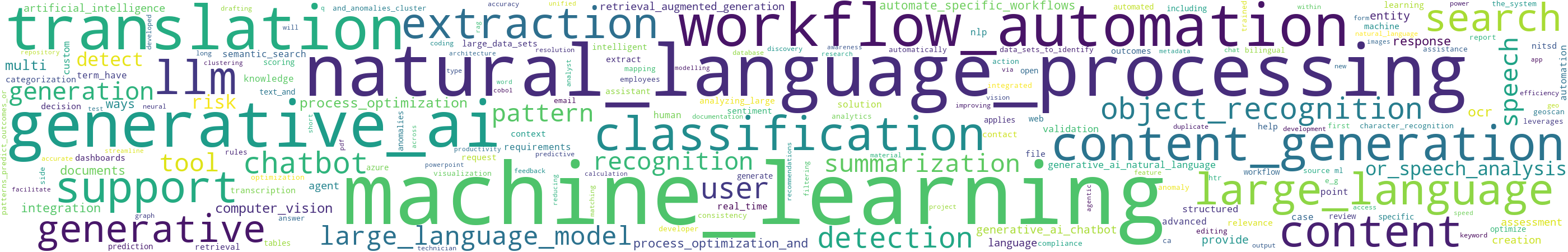

The Register predominantly constructs AI as "internal tooling" for efficiency, with 86% of systems deployed for use by government employees rather than for direct external interaction. The technical vocabulary of the systems is heavily weighted toward NLP, LLM, ML, and generative models, as reflected in the frequency distribution of register descriptions.

Figure 1: The most salient technical capabilities, with a pronounced emphasis on NLP, LLMs, and generative approaches.

A granular analysis reveals that:

- Informational and productivity systems comprise the bulk, where AI augments information retrieval, summarization, and document handling. Tools such as CANChat and document chatbots are widely deployed across departments.

- Prescriptive and predictive systems—notably in enforcement, risk scoring, and triage functions—embed formalized risk assessments and recommendations into bureaucratic workflows, often absorbing what was formerly tacit professional judgment.

- Operational automation is also pronounced, exemplified by procedural OCR/LLM-based pipelines for grant analysis, identity verification, or task assignment.

One striking observation is the frequent occlusion of data lineage and governance, with 24.4% of systems omitting specifics on data sources. Where data is detailed, there is a reliance on administrative records, open web data, and, critically, a substantial dependency on external/proprietary vendor-supplied infrastructure.
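A figure such as the 24.4% omission rate above could be computed along the following lines. This is a sketch under stated assumptions: the field name `data_sources`, the list of placeholder strings treated as "unspecified", and the toy entries are all hypothetical and do not reflect the Register's actual schema or the authors' procedure.

```python
# Placeholder strings treated as "no data source given" (an assumption).
UNSPECIFIED = {"", "n/a", "na", "not specified", "unknown", "tbd"}


def is_unspecified(value):
    """True when a field is missing or holds only a placeholder string."""
    if value is None:
        return True
    return value.strip().lower() in UNSPECIFIED


def omission_share(entries, field="data_sources"):
    """Fraction of entries whose `field` is missing or a placeholder."""
    if not entries:
        return 0.0
    missing = sum(1 for entry in entries if is_unspecified(entry.get(field)))
    return missing / len(entries)


# Invented toy entries; the real Register has 409 systems.
TOY_ENTRIES = [
    {"name": "System A", "data_sources": "Administrative records"},
    {"name": "System B", "data_sources": "N/A"},
    {"name": "System C", "data_sources": "Open web data"},
    {"name": "System D", "data_sources": "Vendor-supplied model"},
]

if __name__ == "__main__":
    print(f"{omission_share(TOY_ENTRIES):.1%} of toy entries omit data sources")
```

If the 24.4% figure is taken over all 409 disclosed systems, it corresponds to roughly 100 entries with no stated data source.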

Bureaucratic Processes and Infrastructural Dependencies

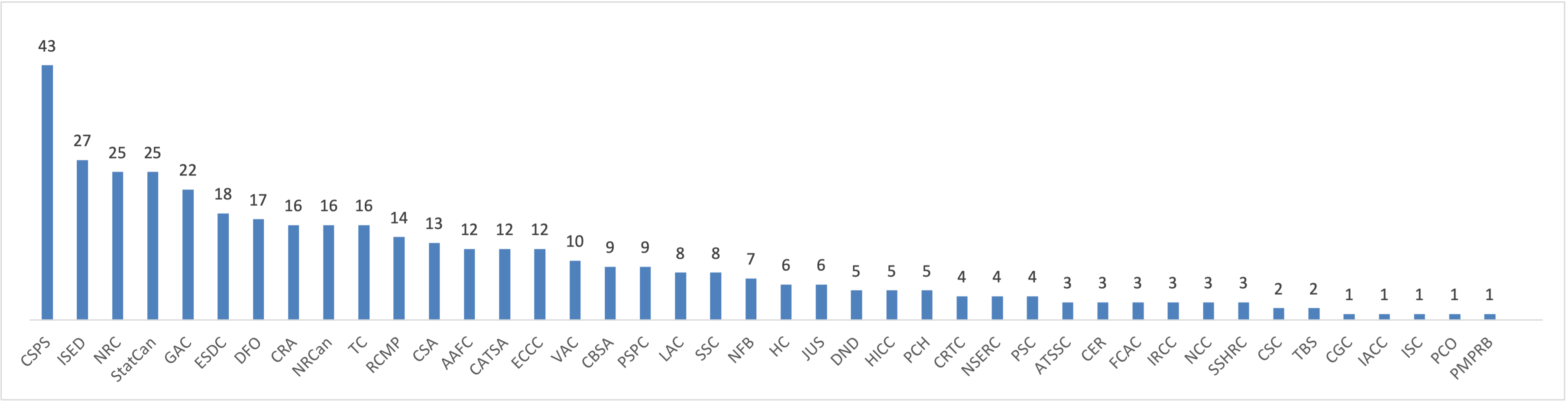

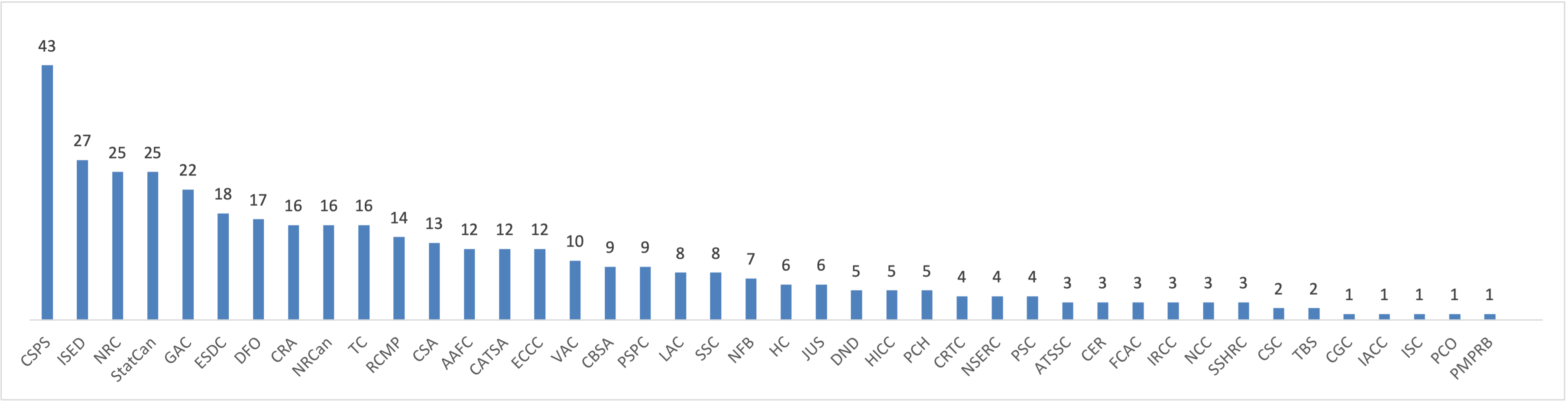

Analysis of organizational deployment reveals considerable concentration:

Figure 2: Top—systems are disproportionately registered by a subset of federal organizations; Bottom-left—jurisdictional mentions are patchy and non-uniform; Bottom-right—the temporal trend evidences increasing deployment post-2022.

AI deployment is heavily clustered within a few agencies (e.g., CSPS, ISED, NRC, ESDC, GAC) out of over 200 possible departments. The register data point toward a post-2022 expansion, with ongoing development and live production distributed across sectors.

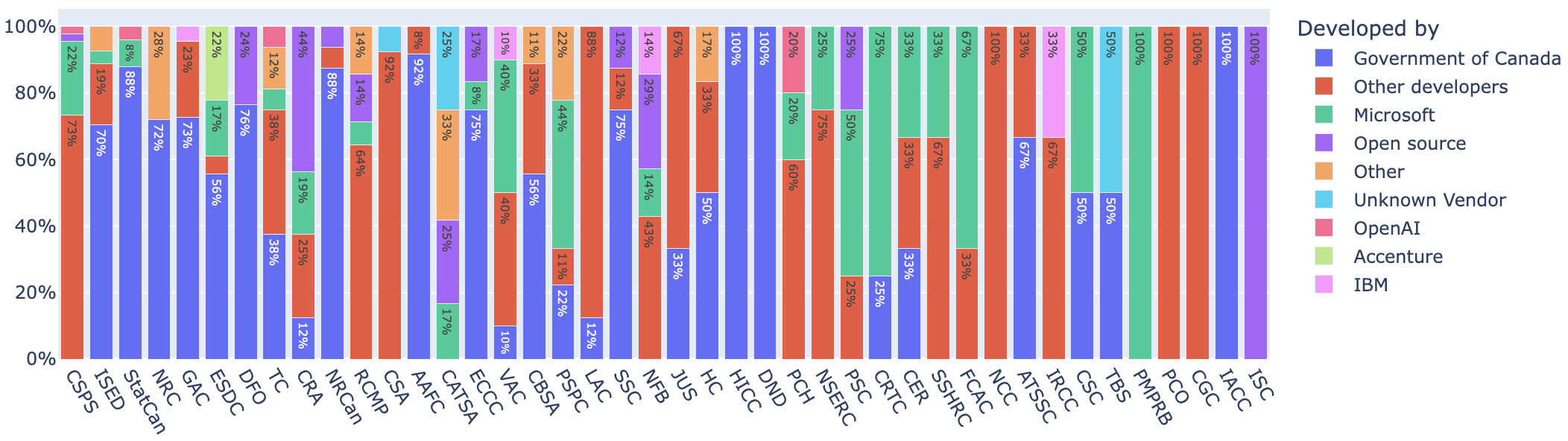

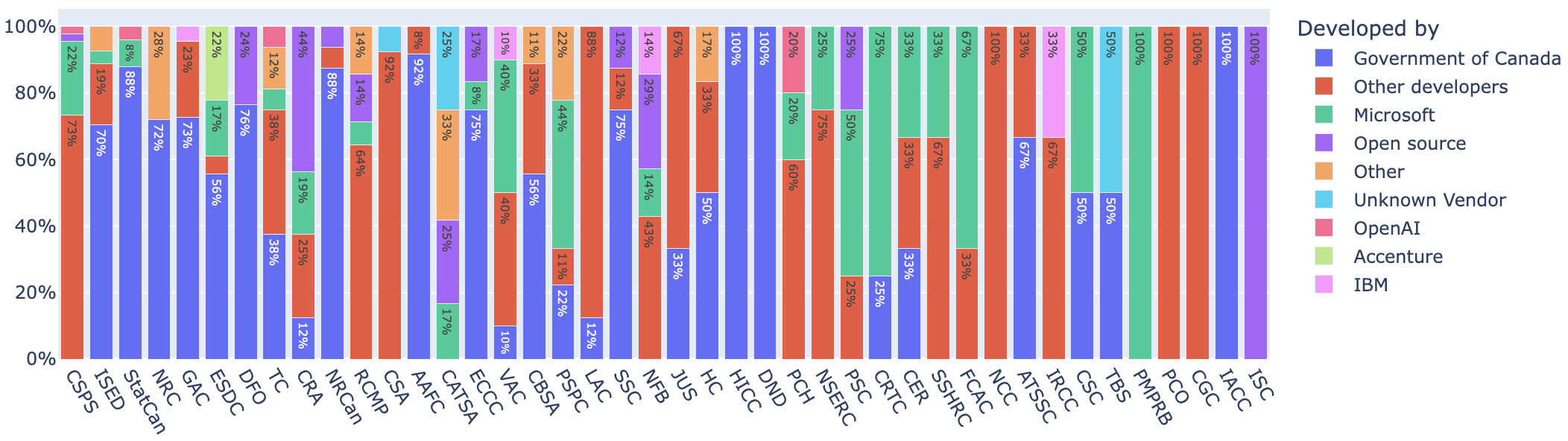

Crucially, the provenance of system developers shows a dual structure:

Figure 3: Organizational developer share demonstrates a near-even split between in-house and vendor-developed systems, with notable third-party dominance by select vendors (e.g., Microsoft).

- 43.3% of systems are developed in-house.

- 38.1% are vendor-provided—conspicuously concentrated among large, often foreign, technology stack providers.

- Open-source-only deployments remain marginal at 6.1%.

This architecture exposes profound infrastructural entanglements. The technical sovereignty narrative is substantially contradicted by the revealed reliance on external, frequently non-Canadian, corporate vendors, challenging the claims of policy autonomy and "AI sovereignty" advanced in governmental rhetoric.
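The developer shares listed above amount to a simple categorical tally. The sketch below shows one way such shares could be derived and checks how much of the Register the three reported categories leave unaccounted for. The category labels and the toy label list are hypothetical; only the three percentages come from the text.

```python
from collections import Counter


def category_shares(labels):
    """Percentage share of each category, rounded to one decimal place."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: round(100 * n / total, 1) for label, n in counts.items()}


# Shares as reported in the text; the key names are illustrative labels.
REPORTED = {"in_house": 43.3, "vendor": 38.1, "open_source_only": 6.1}

# Remainder not covered by the three reported categories.
UNACCOUNTED = round(100 - sum(REPORTED.values()), 1)

# Invented toy labels, used only to exercise category_shares.
TOY_LABELS = ["in_house"] * 4 + ["vendor"] * 3 + ["open_source_only"]

if __name__ == "__main__":
    print(category_shares(TOY_LABELS))
    print(f"Share outside the three reported categories: {UNACCOUNTED}%")
```

The three reported shares sum to 87.5%, so about 12.5% of systems presumably fall into categories this summary does not list (for example mixed or unspecified development).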

Administrative process and user training requirements are minimally described in the Register, with most entries presuming seamless integration into extant workflows; this creates an epistemic gap around the sociotechnical adaptation and operational management of uncertainty, delegation, and remediation practices.

Human Discretion, Expertise, and Silenced Uncertainty

Explicitly designing AI for internal use reifies existing hierarchical workflows but occludes the locus of discretion and the substantive content of professional judgment. Several key findings emerge:

- Decision authority is consistently narrated as "human-in-the-loop," but descriptive granularity on how expertise, heuristics, and value negotiation interact with algorithmic outputs is largely absent.

- Where Algorithmic Impact Assessments (AIAs) are required by the Directive on Automated Decision-Making, their completion and publication rates are markedly low, suggesting a significant transparency and compliance gap.

- Uncertainty is handled heterogeneously: some systems are openly experimental (marked as pilots); others are described as mature tools with "reliable" outcomes; many avoid the topic of uncertainty entirely, shifting its burden silently onto the practitioners who must operationalize the output.

Ultimately, the register functions more as a performative inventory of technical capacity and compliance than as an actionable infrastructure for robust contestability or error correction.

Ontological Implications and Limits of Transparency

The core theoretical insight is that the Canadian AI Register should be read as an artifact of ontological design: it both reflects and constructs the bounded domains of what counts as an "AI system," which decisions and actors are visible to governance, and which forms of responsibility and contestation are possible. Omission from the register (e.g., of IRCC’s "Chinook" system, a controversial tool in immigration processing) demonstrates that control over classification and naming becomes a crucial leverage point in the politics of algorithmic accountability.

The register's stylized and selective reporting not only narrows oversight to what is technically documented but also stabilizes a surface-level performativity of accountability that risks substituting mere visibility for actionable oversight mechanisms. This is particularly problematic in high-stakes domains (e.g., immigration, welfare adjudication, compliance enforcement), where affected parties are left with little insight into procedural logic or remedy mechanisms.

Implications for Practice, Theory, and Future Policy

Practically, the findings signal that registers of this genre—if not critically redesigned—may entrench compliance regimes that are decoupled from substantive oversight, embedding accountability in weakly contestable, technocratic artifacts. This suggests that increased technical documentation without concomitant institutionalization of review, stakeholder contestation, and adaptive governance may yield only epistemic opacity masked as transparency.

Theoretically, the work reinforces critiques of disclosure-oriented algorithmic governance as insufficient in sociotechnical settings, resonating with the literature documenting the limits of technical transparency as a mechanism for public trust and democratic legitimacy.

With policy regimes internationally converging on register-based accountability artifacts, the Canadian case sets a precedent for the potential institutional risks of such models.

Conclusion

The Canadian AI Register, as critically analyzed in this study, exemplifies both the promise and limitations of register-based transparency regimes for public sector AI oversight. It reveals a complex entanglement of technical, bureaucratic, and vendor-driven rationalities that together produce “bureaucratic silences” in the domains of discretion, uncertainty, and infrastructural dependence. For algorithmic governance to support meaningful public accountability and trust, registers must transcend the technically performative, tool-centric paradigm and foreground the full sociotechnical ecology of system operation, adaptation, and contestation. This mandates a recalibration of transparency mechanisms—prioritizing contestability, procedural adaptability, and multidirectional visibility as prerequisites for effective AI governance.