The LLM Fallacy: Misattribution in AI-Assisted Cognitive Workflows

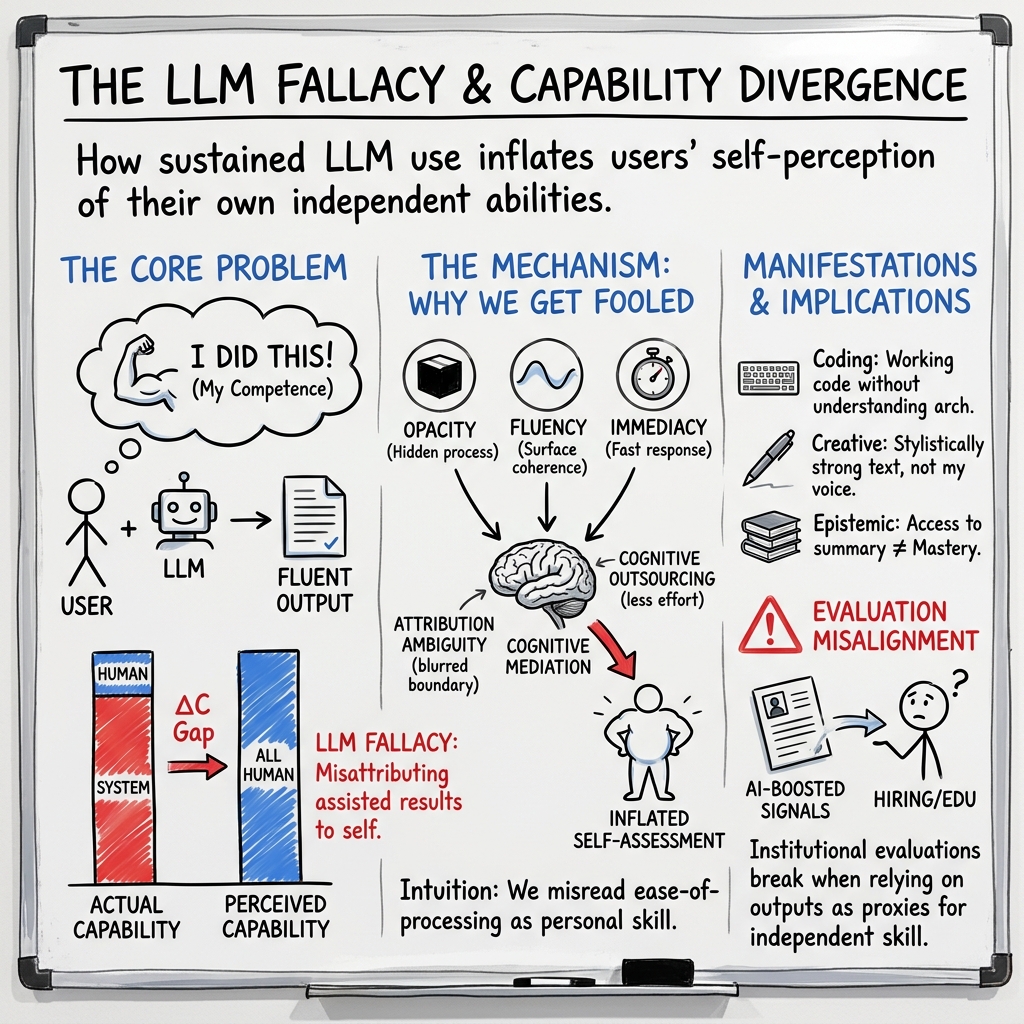

Abstract: The rapid integration of large language models (LLMs) into everyday workflows has transformed how individuals perform cognitive tasks such as writing, programming, analysis, and multilingual communication. While prior research has focused on model reliability, hallucination, and user trust calibration, less attention has been given to how LLM usage reshapes users' perceptions of their own capabilities. This paper introduces the LLM fallacy, a cognitive attribution error in which individuals misinterpret LLM-assisted outputs as evidence of their own independent competence, producing a systematic divergence between perceived and actual capability. We argue that the opacity, fluency, and low-friction interaction patterns of LLMs obscure the boundary between human and machine contribution, leading users to infer competence from outputs rather than from the processes that generate them. We situate the LLM fallacy within existing literature on automation bias, cognitive offloading, and human–AI collaboration, while distinguishing it as a form of attributional distortion specific to AI-mediated workflows. We propose a conceptual framework of its underlying mechanisms and a typology of manifestations across computational, linguistic, analytical, and creative domains. Finally, we examine implications for education, hiring, and AI literacy, and outline directions for empirical validation. We also provide a transparent account of human–AI collaborative methodology. This work establishes a foundation for understanding how generative AI systems not only augment cognitive performance but also reshape self-perception and perceived expertise.

Explain it Like I'm 14

What this paper is about (big picture)

This paper looks at a new kind of mistake people can make when they use AI writing tools like ChatGPT or other LLMs. The authors call it the “LLM fallacy.” It happens when someone thinks, “Because I produced this great result with an AI’s help, I must be really good at this on my own.” In short, people can confuse AI-assisted success with their own independent skill.

What questions the paper asks

The paper tries to answer simple but important questions:

- What exactly is the LLM fallacy?

- How is it different from other problems like AI “hallucinations” or people just trusting automation too much?

- Why does this fallacy happen in the first place?

- Where do we see it show up (coding, writing, learning, hiring, etc.)?

- What does this mean for schools, jobs, and how we judge someone’s skills?

- How could future research prove this is real and measure it?

How the authors studied it (in plain language)

This is a concept paper, not a lab experiment. That means the authors:

- Reviewed past research on how people work with technology and make decisions.

- Built a simple model (a mental map) of why the fallacy happens.

- Collected real-world patterns and examples from different areas (like programming or schoolwork).

- Suggested ways to test the idea in future studies.

Key ideas explained with everyday analogies:

- LLM (large language model): A very advanced text tool that predicts words really well, like a supercharged autocomplete that can write essays, explain code, or translate.

- Opacity: The “black box” problem. You can see what comes out of the AI, but not how it got there—like getting a cake from a bakery without seeing the recipe or the baking process.

- Fluency: AI outputs often sound smooth and professional. That slickness can trick us into thinking the content (and our own skill) is stronger than it really is.

- Cognitive offloading: Using a calculator for math. It helps you get the answer, but you might not practice the steps yourself. With LLMs, you can offload writing, reasoning, and research, too.

- Attribution: Deciding who deserves the credit—did I do this, did the AI, or both?

The authors also used an LLM to help draft and refine the paper, but they followed strict rules: a human made decisions, checked the AI’s work, and took final responsibility. They describe this process to stay transparent.

What the paper found and why it matters

Main finding: The LLM fallacy is when people treat AI-assisted outputs as proof of their own solo ability. This creates a gap between what they think they can do and what they can actually do without help.

Why it happens (the mechanism):

- Opacity: You can’t see how the AI builds answers, so it’s hard to separate your work from the AI’s.

- Fluency: The output sounds great, which our brains often read as “skilled.”

- Immediacy: The AI responds fast, making the whole experience feel effortless.

- Cognitive outsourcing: You let the AI do tough thinking, so you practice less yourself.

Put together, these make people overestimate their own skill—what the paper calls a “capability divergence” between perceived ability and real, independent ability.
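The paper names this gap capability divergence (ΔC) but does not pin down a validated formula for it. As a minimal sketch, assuming that both a person's self-rating and an independently measured unaided score are normalized to a 0–1 scale, ΔC can be treated as their difference, so a positive value means overestimation. The function names and the scale are hypothetical choices for illustration.

```python
from statistics import mean


def capability_divergence(perceived: float, actual_unaided: float) -> float:
    """Hypothetical ΔC: self-rated ability minus measured unaided performance.

    Both scores are assumed to be normalized to [0, 1]. A positive result means
    the person rates themselves above what they achieve without the LLM.
    """
    for score in (perceived, actual_unaided):
        if not 0.0 <= score <= 1.0:
            raise ValueError("scores must be normalized to [0, 1]")
    return perceived - actual_unaided


def mean_divergence(records: list[tuple[float, float]]) -> float:
    """Average ΔC over (perceived, actual_unaided) pairs, one pair per participant."""
    return mean(capability_divergence(p, a) for p, a in records)


# Three hypothetical participants: (self-rating, unaided test score)
participants = [(0.9, 0.5), (0.7, 0.6), (0.4, 0.5)]
print(round(mean_divergence(participants), 3))  # 0.133
```

Averaging hides who overestimates and who underestimates (the third participant here has a negative ΔC), so a real study would likely also report the per-person distribution.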

Where it shows up (examples):

- Coding: You can ship working code with AI help but not fully understand how it works or how to fix it later.

- Languages: You can “write” in another language using the AI but can’t actually read or speak it on your own.

- Analysis and problem-solving: You can present a clean step-by-step explanation, but those steps were suggested by the AI, not generated by your own reasoning.

- Creative work: You produce polished essays or stories with AI’s heavy lifting, then feel more creative than you actually are without it.

- Learning/knowledge: You read an AI’s summary and feel like you “get it,” but you might not be able to explain the idea from scratch.

- Professional signaling: You share AI-polished work in resumes or interviews, and others may (wrongly) assume you have that skill independently.

Why this matters:

- Schools, tests, and hiring often judge people by their outputs. If outputs are heavily AI-assisted, we can mistake tool-boosted performance for real, internal skill.

What this could change going forward

The paper suggests practical shifts:

- Education: Design assignments and assessments that check process, not just final answers—for example, oral explanations, showing work, or timed, low-tech tasks. Teach “AI literacy,” so students know when they’re learning versus leaning too much on tools.

- Hiring and skills tests: Use evaluations that reveal how someone thinks without hidden AI help (e.g., live problem-solving, whiteboard steps, portfolio explanations).

- Tool design: Interfaces could make the AI’s contribution more visible (for example, highlighting AI-written sections), so users don’t confuse AI’s work with their own.

- Research: Run experiments that compare people’s self-ratings after using AI versus working alone, measure the gap between perceived and real skill, and test which interventions reduce misattribution.
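One way to score the self-rating comparison in that research bullet is with standard calibration measures. The sketch below swaps in the Brier score (the mean squared gap between stated confidence and whether an answer was correct) and a simple overconfidence index (mean confidence minus accuracy). It assumes confidence is collected as a probability after each unaided item; the function names are hypothetical, and this is not the paper's own method.

```python
def brier_score(confidences: list[float], correct: list[bool]) -> float:
    """Mean squared gap between stated confidence and outcome (0 is perfect)."""
    if not confidences or len(confidences) != len(correct):
        raise ValueError("need equally sized, non-empty inputs")
    return sum((c - float(ok)) ** 2 for c, ok in zip(confidences, correct)) / len(confidences)


def overconfidence(confidences: list[float], correct: list[bool]) -> float:
    """Mean confidence minus accuracy; positive values indicate overconfidence."""
    if not confidences or len(confidences) != len(correct):
        raise ValueError("need equally sized, non-empty inputs")
    return sum(confidences) / len(confidences) - sum(correct) / len(correct)


# Hypothetical post-decision confidence on four unaided items, and whether each was right
conf = [0.9, 0.8, 0.9, 0.7]
right = [True, False, True, False]
print(round(brier_score(conf, right), 4), round(overconfidence(conf, right), 3))
```

Collecting the same measures after AI-assisted and unaided sessions would let a study compare how well calibrated people are in each condition.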

Bottom line: LLMs can boost performance, but they can also blur who did what. To be fair and accurate—in school, at work, and in everyday life—we need to notice the difference between “I can do this” and “I can do this with AI’s help,” and update our teaching, hiring, and tools to make that difference clear.

Knowledge Gaps, Limitations, and Open Questions

Below is a list of issues the paper leaves unresolved, uncertain, or unexplored. Each point is phrased to be actionable for future research.

- Absence of empirical validation: No controlled experiments quantify the LLM fallacy or its magnitude across tasks; randomized studies comparing assisted vs unaided performance and self-assessment are needed.

- No standardized operationalization of “actual capability”: The paper does not define how to measure ground-truth competence independent of LLM aid across different domains (e.g., code, writing, analysis).

- Undefined metric for capability divergence (ΔC): There is no concrete, validated instrument to jointly capture objective performance and subjective self-assessment and to compute ΔC reliably.

- Unverified causal mechanisms: The distinct causal effects of opacity, fluency, and immediacy are hypothesized but not disentangled; factorial experiments manipulating each property are missing.

- Boundary conditions are unclear: The conditions under which the fallacy attenuates or reverses (e.g., expert users, high-stakes settings, transparent pipelines, slow/low-fluency models) are not specified.

- Individual-differences moderators: The role of prior expertise, metacognitive skill, AI literacy, cognitive styles, age, and neurodiversity in susceptibility to misattribution is untested.

- Temporal dynamics and learning: Longitudinal evidence on how repeated LLM use changes self-assessment (e.g., calibration drift, habituation, or corrective learning) is not provided.

- Domain sensitivity and task features: It remains unknown which task characteristics (ill-structured vs well-structured, feedback availability, evaluability, time pressure) amplify or dampen the fallacy.

- Multimodal generalization: Whether analogous misattribution arises with multimodal models (e.g., image, audio, data visualization, UI design) is unexplored.

- Model-quality effects: How the fallacy varies with model capability (frontier vs small models), decoding parameters, and uncertainty expression is not empirically tested.

- Interface and workflow interventions: The efficacy of provenance indicators, contribution meters, delayed responses, reflective prompts, or step-exposure (e.g., chain-of-thought) in reducing misattribution is unmeasured.

- Intervention side effects: Potential trade-offs between calibration-improving interventions and productivity, usability, or user satisfaction are not examined.

- Educational impacts: How LLM scaffolding influences durable learning, transfer, and metacognitive calibration—versus short-term task completion—remains an open empirical question.

- Hiring and assessment redesign: Concrete, tested process-aware assessment methods to separate independent skill from assisted performance (e.g., proctored unaided tasks, trace-based evaluation) are not specified or evaluated.

- Provenance and detection: Practical, robust methods to detect or verify LLM assistance in artifacts (e.g., watermarking, cryptographic co-signing, contribution logging) and their effect on misattribution are not assessed.

- Ethical and policy boundaries: Criteria distinguishing acceptable tool use from misrepresentation in academic and professional contexts are not articulated or stress-tested with stakeholders.

- Under-attribution and imposter dynamics: Conditions producing the opposite bias (crediting the AI and undervaluing one’s own contribution) are not examined, nor how over- and under-attribution interact.

- Team and organizational contexts: How attribution errors propagate in group work (credit assignment, shared mental models, social amplification) is not studied.

- Cross-cultural and linguistic variation: Cultural norms about authorship and tool use, and their effect on misattribution across languages and regions, are uninvestigated.

- Safety-critical domains: The risk profile of misattribution in high-stakes settings (e.g., medicine, law, finance, infrastructure) and mitigation strategies are not analyzed.

- Distinctness from adjacent constructs: Empirical designs that partition variance between the LLM fallacy and related biases (automation bias, illusion of explanatory depth, Dunning–Kruger) are not presented.

- External validity: The paper relies on conceptual and observational cases; field studies in real workplaces and classrooms quantifying prevalence and effect sizes are absent.

- Replicability of the meta-methodology: Despite a disclosure section, concrete artifacts (prompts, model versions, logs) enabling replication of the human–AI collaborative writing process are not provided.

- Measurement beyond self-report: Objective and behavioral measures of metacognition (e.g., wagering, post-decision confidence, process tracing) are not specified to avoid self-report bias.

- Decomposition of competence: Methods to parse tasks into subskills and attribute which components were internally vs externally supplied are not detailed.

- Evaluation-system metrics: Validated metrics for the robustness of hiring, credentialing, and grading systems under AI mediation—and benchmarks for process-aware evaluation—are not proposed.

- Legal and institutional implications: How disclosure, attestation, and audit regimes should be designed and enforced to address misattribution is not addressed.

- Equity and fairness: Whether the fallacy disproportionately affects or disadvantages certain groups (novices, non-native speakers, underserved populations) is not investigated.

- Cost–benefit thresholds: Criteria for when capability divergence becomes harmful (vs benign augmentation) are not defined, nor how much miscalibration is tolerable by context.
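Several gaps above, especially the unverified causal mechanisms, point to factorial experiments that vary opacity, fluency, and immediacy independently. A hedged sketch of the design step, assuming two levels per factor (the level labels are hypothetical), enumerates all 2 × 2 × 2 = 8 conditions and assigns participants to them in a balanced, seeded random order:

```python
import itertools
import random

# The three interaction properties the paper hypothesizes as causes,
# each with two hypothetical levels for an experiment.
FACTORS = {
    "opacity": ("hidden_process", "visible_process"),
    "fluency": ("polished_output", "plain_output"),
    "immediacy": ("instant_reply", "delayed_reply"),
}


def full_factorial() -> list[dict[str, str]]:
    """Every combination of factor levels: 2 x 2 x 2 = 8 conditions."""
    names = list(FACTORS)
    return [dict(zip(names, levels)) for levels in itertools.product(*FACTORS.values())]


def assign(participants: list[str], seed: int = 0) -> dict[str, dict[str, str]]:
    """Balanced random assignment: shuffle participants, then deal them round-robin."""
    conditions = full_factorial()
    shuffled = list(participants)
    random.Random(seed).shuffle(shuffled)
    return {pid: conditions[i % len(conditions)] for i, pid in enumerate(shuffled)}


assignment = assign([f"p{i:02d}" for i in range(16)])  # 16 people -> 2 per cell
print(len(full_factorial()))  # 8
```

Dealing a shuffled list round-robin keeps cell sizes equal whenever the sample is a multiple of eight; with other sample sizes, some cells receive one extra participant.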

Practical Applications

Immediate Applications

The following applications can be implemented with today’s tools and organizational practices, translating the paper’s insights into concrete changes across sectors.

- Use case: Hiring and skills assessment with process-aware design

- Sectors: Software, finance, consulting, content, government

- What it looks like: Pair take-home tasks (AI-permitted) with proctored, unaided segments; require AI-use disclosures; use work-sample “walkthrough interviews” where candidates explain code/analyses line-by-line; compare outputs with and without AI to detect capability divergence.

- Assumptions/Dependencies: Access to secure proctoring; clear policy on permitted AI use; interviewer training; fairness review for different access levels to AI.

- Use case: Education assessment that distinguishes learning from assisted performance

- Sectors: K–12, higher education, professional training, EdTech

- What it looks like: Assignments that combine AI-assisted drafts with oral defenses, in-class unaided quizzes, process journals, and reflection on AI contributions; rubrics that weight process evidence (draft history, reasoning steps) alongside product.

- Assumptions/Dependencies: Instructor time; LMS support for version history; policy clarity on AI use; equity considerations.

- Use case: AI-contribution statements and provenance in research and publishing

- Sectors: Academia, journalism, policy analysis, think tanks

- What it looks like: Mandatory “AI contribution statements” describing where, how, and to what extent LLMs were used; inclusion of prompt logs and revision notes (e.g., adopting the paper’s human–AI collaborative methodology and records of NLD-P prompts).

- Assumptions/Dependencies: Journal and conference policies; cultural norms for disclosure; lightweight tooling to capture prompts/edits.

- Use case: UX patterns that make machine contribution salient

- Sectors: Productivity software, IDEs, CMS, email and doc suites

- What it looks like: Visual markers for AI-generated segments; contribution meters; side-by-side change tracking; “explain your edits” prompts; tooltips that reveal generation steps to counter fluency illusions.

- Assumptions/Dependencies: Product engineering capacity; user testing for usability; opt-in/consent for telemetry; privacy-by-design.
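A contribution meter needs some provenance signal for each span of text. A minimal sketch, assuming the editor already tags each segment as human- or AI-written (the `Segment` type and its tags are hypothetical), computes per-source shares by character count; a real product would likely also need to account for human edits to AI text, which this crude proxy ignores.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    text: str
    source: str  # hypothetical provenance tag, e.g. "human" or "ai"


def contribution_share(segments: list[Segment]) -> dict[str, float]:
    """Fraction of non-whitespace characters contributed by each source."""
    counts: dict[str, int] = {}
    for seg in segments:
        n = sum(1 for ch in seg.text if not ch.isspace())
        counts[seg.source] = counts.get(seg.source, 0) + n
    total = sum(counts.values())
    return {src: n / total for src, n in counts.items()} if total else {}


doc = [
    Segment("Intro I wrote.", "human"),
    Segment("A long generated paragraph.", "ai"),
]
print({k: round(v, 2) for k, v in contribution_share(doc).items()})  # {'human': 0.33, 'ai': 0.67}
```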

- Use case: Software engineering governance for AI-generated code

- Sectors: Software, robotics, embedded systems

- What it looks like: PR templates that require authors to (a) identify AI-generated blocks, (b) provide comprehension notes, (c) include tests; linters that flag unreviewed generated code; CI gates preventing merges without verification artifacts.

- Assumptions/Dependencies: Dev-tool integration (IDE/SCM); team policy buy-in; test coverage culture.
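A linter or CI gate of the kind described needs some convention for marking generated code. The sketch below assumes a hypothetical comment convention, not an existing tool: a block is opened by a comment containing `ai-generated:begin reviewed-by=<name>` and closed by one containing `ai-generated:end`. It reports blocks with no named reviewer or no end marker and returns a nonzero exit code a CI job could act on.

```python
import re
from pathlib import Path

# Hypothetical team convention for marking generated code:
#   # ai-generated:begin reviewed-by=alice
#   (generated lines)
#   # ai-generated:end
BEGIN = re.compile(r"ai-generated:begin(?:\s+reviewed-by=(\S+))?")
END = re.compile(r"ai-generated:end")


def unreviewed_blocks(source: str) -> list[int]:
    """Return 1-based line numbers of AI-generated blocks lacking a reviewer or an end marker."""
    problems: list[int] = []
    open_line = None
    for lineno, line in enumerate(source.splitlines(), start=1):
        begin = BEGIN.search(line)
        if begin:
            if open_line is not None and open_line not in problems:
                problems.append(open_line)  # previous block was never closed
            open_line = lineno
            if begin.group(1) is None:
                problems.append(lineno)  # no reviewer named
        elif END.search(line):
            open_line = None
    if open_line is not None and open_line not in problems:
        problems.append(open_line)  # block never closed: treat as unverified
    return problems


def check_files(paths: list[str]) -> int:
    """Print one message per problem; return a CI-style exit code (1 on failure)."""
    failed = False
    for path in paths:
        for lineno in unreviewed_blocks(Path(path).read_text(encoding="utf-8")):
            print(f"{path}:{lineno}: AI-generated block lacks reviewed-by or end marker")
            failed = True
    return 1 if failed else 0
```

A CI step could call `sys.exit(check_files(paths))` on the changed files; how that file list is obtained depends on the CI system.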

- Use case: AI literacy modules centered on metacognition and calibration

- Sectors: Corporate L&D, universities, public-sector training

- What it looks like: Short courses that contrast performance with and without AI, teach the LLM fallacy concept, and train users to articulate human vs. machine contribution; include fluency-illusion demos and self-assessment calibration exercises.

- Assumptions/Dependencies: Curriculum development; access to controlled tasks; leadership sponsorship.

- Use case: Compliance and audit trails for regulated content

- Sectors: Healthcare, finance, legal, public administration

- What it looks like: Logs indicating when and how LLMs influenced medical notes, financial reports, or legal drafts; sign-off workflows requiring human rationale; periodic audits comparing unaided vs. aided capability for high-risk roles.

- Assumptions/Dependencies: Secure logging; privacy and PHI/PII protections; regulator acceptance; retention schedules.
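Audit trails are more trustworthy when later edits are detectable. Below is a minimal sketch of one common technique, a hash chain in which each log record includes the SHA-256 hash of the previous record. The field names and action labels are hypothetical, and a production system would still need secure storage, access control, and the privacy protections listed above.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(entry: dict, prev_hash: str) -> str:
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which each record commits to the one before it."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    def append(self, document_id: str, action: str, rationale: str) -> dict:
        entry = {
            "document_id": document_id,
            "action": action,        # hypothetical labels, e.g. "llm_draft", "sign_off"
            "rationale": rationale,  # human justification recorded at each step
            "timestamp": datetime.now(timezone.utc).isoformat(),
        }
        prev = self.records[-1]["hash"] if self.records else GENESIS
        record = {"entry": entry, "prev": prev, "hash": _digest(entry, prev)}
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered record breaks it."""
        prev = GENESIS
        for record in self.records:
            if record["prev"] != prev or record["hash"] != _digest(record["entry"], prev):
                return False
            prev = record["hash"]
        return True


log = AuditLog()
log.append("report-17", "llm_draft", "first draft generated by the assistant")
log.append("report-17", "sign_off", "figures checked against the ledger")
print(log.verify())  # True
```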

- Use case: Procurement and vendor evaluation with process criteria

- Sectors: Public procurement, enterprise sourcing

- What it looks like: RFP/RFQ language requiring bidders to disclose AI use and to demonstrate unaided capabilities on critical tasks; evaluation scoring that separates process quality from output polish.

- Assumptions/Dependencies: Policy templates; buyer education; consistent enforcement.

- Use case: Customer support and content operations with accountability

- Sectors: CX/BPO, SaaS, media, marketing

- What it looks like: Workflow flags for AI-authored replies; QA checkpoints requiring human validation and rationale for high-impact messages; team dashboards tracking assisted vs. unaided responses to monitor capability drift.

- Assumptions/Dependencies: Platform instrumentation; throughput/QoS trade-offs; staff training.
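A dashboard tracking assisted versus unaided responses could start from something as simple as a weekly share of AI-authored replies. The sketch assumes each reply already carries a boolean AI-authored flag and uses the first week as a naive baseline; both the flag and the 0.2 threshold are hypothetical. A rising share signals growing reliance, not lost skill, so it would need to be paired with periodic unaided quality checks before drawing conclusions about capability drift.

```python
from collections import defaultdict


def weekly_assisted_share(replies: list[tuple[int, bool]]) -> dict[int, float]:
    """Map week number -> fraction of replies flagged as AI-authored."""
    totals: dict[int, int] = defaultdict(int)
    assisted: dict[int, int] = defaultdict(int)
    for week, ai_authored in replies:
        totals[week] += 1
        assisted[week] += int(ai_authored)
    return {w: assisted[w] / totals[w] for w in sorted(totals)}


def drift_weeks(shares: dict[int, float], threshold: float = 0.2) -> list[int]:
    """Weeks whose assisted share exceeds the first week's share by more than threshold."""
    if not shares:
        return []
    weeks = sorted(shares)
    baseline = shares[weeks[0]]
    return [w for w in weeks[1:] if shares[w] - baseline > threshold]


# Hypothetical (week, ai_authored) flags for one agent's replies
replies = [(1, False), (1, True), (1, False), (1, False),
           (2, True), (2, True), (2, False), (2, True),
           (3, True), (3, False)]
shares = weekly_assisted_share(replies)
print(shares, drift_weeks(shares))
```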

- Use case: Language learning that avoids false fluency

- Sectors: EdTech, adult education, individuals

- What it looks like: Translator apps with “training wheels” modes that force comprehension checks (cloze tests, paraphrase without AI), periodic offline practice, and progress dashboards distinguishing assisted from independent proficiency.

- Assumptions/Dependencies: App feature development; psychometric design; user motivation.
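A comprehension check of the cloze type mentioned above can be generated mechanically. The sketch below blanks every n-th word from a seeded random offset and scores answers leniently; the parameters and helper names are hypothetical, and it ignores questions such as which words are worth testing, which a psychometrically designed app would need to address.

```python
import random


def make_cloze(sentence: str, every: int = 4, seed: int = 0) -> tuple[str, list[str]]:
    """Blank out every `every`-th word from a random offset; return prompt and answers."""
    if every < 1:
        raise ValueError("every must be at least 1")
    words = sentence.split()
    start = random.Random(seed).randrange(every)
    answers = []
    for i in range(start, len(words), every):
        answers.append(words[i])
        words[i] = "____"
    return " ".join(words), answers


def _normalize(word: str) -> str:
    # Compare case-insensitively and ignore surrounding punctuation.
    return word.lower().strip(".,;:!?¡¿")


def score_cloze(responses: list[str], answers: list[str]) -> float:
    """Share of blanks filled correctly; missing responses count as wrong."""
    if not answers:
        return 0.0
    hits = sum(_normalize(r) == _normalize(a) for r, a in zip(responses, answers))
    return hits / len(answers)


prompt, answers = make_cloze("Mañana voy a visitar a mi abuela en el pueblo.", every=3, seed=1)
print(prompt)
```

The learner would answer `prompt` without the translator, and `score_cloze` would feed the "independent proficiency" side of the progress dashboard.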

- Use case: Safety-critical checklists with independent verification steps

- Sectors: Healthcare, aviation, industrial operations, energy

- What it looks like: Decision-support UIs that record human reasoning before revealing AI suggestions; mandatory second-person verification for high-risk steps; post-incident reviews that examine human–AI contribution.

- Assumptions/Dependencies: Workflow redesign; clinician/operator time; liability and documentation standards.
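The "record human reasoning before revealing AI suggestions" pattern can be enforced in the data model itself. A hedged sketch, with hypothetical class and method names, refuses to reveal the AI suggestion until the operator has committed a rationale and decision, blocks later overwrites, and records whether the two diverged. In Python this is only an application-level gate, not a security boundary.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ReasoningFirstCase:
    """Hypothetical decision-support record: the AI suggestion stays hidden
    until the operator has committed their own reasoning and decision."""

    case_id: str
    _ai_suggestion: str = field(repr=False)
    human_reasoning: Optional[str] = None
    human_decision: Optional[str] = None
    events: list[str] = field(default_factory=list)

    def commit_human_view(self, reasoning: str, decision: str) -> None:
        if "ai_revealed" in self.events:
            raise PermissionError("human view must be committed before the AI reveal")
        if not reasoning.strip():
            raise ValueError("reasoning must be recorded before the AI reveal")
        self.human_reasoning, self.human_decision = reasoning, decision
        self.events.append("human_committed")

    def reveal_ai(self) -> str:
        if self.human_decision is None:
            raise PermissionError("record your own reasoning first")
        self.events.append("ai_revealed")
        return self._ai_suggestion

    def diverged(self) -> bool:
        """True when the pre-committed human decision differs from the AI suggestion."""
        return self.human_decision is not None and self.human_decision != self._ai_suggestion


case = ReasoningFirstCase("case-001", "option B")
case.commit_human_view("Readings point to a sensor fault, not a process fault.", "option A")
print(case.reveal_ai(), case.diverged())  # option B True
```

Logging `diverged()` across many cases is one way to support the post-incident reviews of human and AI contribution mentioned above.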

- Use case: Team norms and coaching for human–AI collaboration

- Sectors: Knowledge work broadly

- What it looks like: Team charters that define acceptable AI use, require attribution, and encourage “explain-first, ask-later” practice; retrospectives that surface where fluency or opacity led to misattribution.

- Assumptions/Dependencies: Manager support; lightweight templates; psychological safety.

Long-Term Applications

These applications require further research, standardization, or infrastructure to scale, drawing directly from the paper’s proposed mechanisms, metrics, and interventions.

- Use case: Capability divergence (ΔC) measurement and dashboards

- Sectors: HR/people analytics, education, professional licensing

- What it looks like: Psychometric batteries and dual-condition benchmarks that quantify gaps between perceived and actual competence under aided vs. unaided conditions; individual/team dashboards for calibration.

- Assumptions/Dependencies: Validated instruments; longitudinal norms; data governance; fairness analyses.

- Use case: Provenance and attribution standards for AI-mediated content

- Sectors: Software, publishing, legal, government records

- What it looks like: Cross-industry standards (e.g., W3C-like) for AI contribution metadata; cryptographic provenance, watermarking, or “copilot ledgers” at OS/app level that record where/how AI assisted.

- Assumptions/Dependencies: Vendor and standards-body alignment; privacy controls; interoperable schemas; regulator buy-in.
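As a small illustration of tamper-evident AI-contribution metadata, the sketch below signs a JSON record with HMAC-SHA256 from Python's standard library. The record's fields are hypothetical and not drawn from any standard. HMAC is symmetric, so every verifier must hold the secret key; cross-organization provenance specifications such as C2PA rely on certificate-based digital signatures instead.

```python
import hashlib
import hmac
import json


def _canonical(metadata: dict) -> bytes:
    # Sorted keys and fixed separators so the same metadata always yields the same bytes.
    return json.dumps(metadata, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_metadata(metadata: dict, key: bytes) -> str:
    """HMAC-SHA256 tag over the canonical JSON encoding of the metadata."""
    return hmac.new(key, _canonical(metadata), hashlib.sha256).hexdigest()


def verify_metadata(metadata: dict, tag: str, key: bytes) -> bool:
    """Constant-time comparison of the expected and supplied tags."""
    return hmac.compare_digest(sign_metadata(metadata, key), tag)


# Hypothetical AI-contribution record attached to an artifact
record = {
    "artifact": "quarterly-report.docx",
    "ai_assisted_sections": ["2.1", "3"],
    "human_author": "j.doe",
    "attested": True,
}
key = b"demo-key-not-for-production"
tag = sign_metadata(record, key)
print(verify_metadata(record, tag, key))  # True
```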

- Use case: Process-aware credentialing and licensure

- Sectors: Accounting, law, medicine, engineering, data science

- What it looks like: Exams that explicitly span unaided and AI-augmented sections, scoring both independent mastery and collaboration skill; new micro-credentials for “AI collaboration competence.”

- Assumptions/Dependencies: Accreditor consensus; secure testing environments; evidence linking scores to outcomes.

- Use case: Adaptive assistance that promotes learning over outsourcing

- Sectors: EdTech, IDEs, productivity suites

- What it looks like: Interfaces that modulate help based on user understanding (e.g., reveal hints before full answers, require user reasoning steps, fade assistance as competence grows).

- Assumptions/Dependencies: User modeling; experiment-backed pedagogy; guardrails against frustration; personalization privacy.
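Fading assistance can be sketched as a rule that offers the least help consistent with estimated mastery and with how much effort the user has already made. Everything here is hypothetical: the four-step ladder, the linear mapping from mastery to help, and the moving-average update. A real system would need an experiment-backed learner model, as the dependencies above note.

```python
# Hypothetical assistance ladder, ordered from least to most help.
LEVELS = ("nudge", "hint", "worked_step", "full_answer")


def choose_assistance(mastery: float, attempts: int) -> str:
    """Pick the least help consistent with estimated mastery and effort so far.

    mastery:  estimated probability (0-1) that the user can solve the item unaided.
    attempts: the user's own attempts so far; help escalates only after effort.
    """
    if not 0.0 <= mastery <= 1.0:
        raise ValueError("mastery must be in [0, 1]")
    ceiling = min(max(attempts, 0), len(LEVELS) - 1)  # full answer only after 3 attempts
    need = round((1.0 - mastery) * (len(LEVELS) - 1))  # weaker mastery -> more help
    return LEVELS[min(need, ceiling)]


def update_mastery(mastery: float, solved_unaided: bool, rate: float = 0.2) -> float:
    """Exponential moving average: move toward 1 on unaided success, toward 0 on failure."""
    target = 1.0 if solved_unaided else 0.0
    return mastery + rate * (target - mastery)


m = 0.2
print(choose_assistance(m, attempts=0), choose_assistance(m, attempts=3))  # nudge worked_step
```

As unaided successes accumulate, `update_mastery` raises the estimate and `choose_assistance` drops toward lighter help, which is the fading behavior described above.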

- Use case: Organizational performance models distinguishing human skill from AI leverage

- Sectors: Enterprise HR, consulting, sales, R&D

- What it looks like: KPIs and compensation frameworks that separate human capability growth from tool-enabled output; workforce planning that tracks where AI masks skills gaps.

- Assumptions/Dependencies: Change management; analytics infrastructure; legal/compensation implications.

- Use case: Regulatory frameworks mandating AI-use transparency

- Sectors: Finance, healthcare, public administration, elections

- What it looks like: Rules that require disclosure of AI assistance in official filings, clinical documentation, or policy drafts; penalties for misrepresentation; audits of process, not just product.

- Assumptions/Dependencies: Legislative action; harmonization across jurisdictions; enforcement capacity.

- Use case: Evaluation designs robust to ubiquitous AI

- Sectors: Education, hiring, certifications

- What it looks like: Novel assessments (simulation centers, practical stations, oral defenses, sandboxed environments) that elicit authentic unaided performance; secure device/identity controls where appropriate.

- Assumptions/Dependencies: Cost and scalability; accessibility; privacy-preserving proctoring.

- Use case: IDEs and data tools with built-in pedagogy and provenance

- Sectors: Software, analytics, robotics

- What it looks like: “Explain-before-generate” modes; commit-time “blind spot” quizzes for AI code; automated tagging of AI segments and refactoring prompts to deepen understanding.

- Assumptions/Dependencies: Ecosystem adoption; developer tolerance for friction; empirical evidence of learning gains.

- Use case: Healthcare decision support with explain-and-verify protocols

- Sectors: Healthcare, clinical informatics

- What it looks like: EHR-integrated flows that capture clinician reasoning prior to AI reveal, log divergences, and provide feedback on calibration over time; oversight dashboards tracking AI reliance by task/risk.

- Assumptions/Dependencies: EHR vendor integration; clinician workload; medico-legal frameworks.

- Use case: Financial advisory and risk functions with AI audit trails

- Sectors: Banking, asset management, insurance

- What it looks like: Systems that bind AI-derived analyses to advisor sign-offs, track when AI influenced recommendations, and require independent stress tests for high-stakes decisions.

- Assumptions/Dependencies: Regulator alignment (e.g., SEC/ESMA); model risk management processes; data retention.

- Use case: Human–robot interaction with assistance transparency

- Sectors: Manufacturing, logistics, field service

- What it looks like: Operator UIs showing when plans or scripts were LLM-generated, with on-device “why” summaries and checkpoints requiring human validation before execution.

- Assumptions/Dependencies: HRI research; safety certification; offline/on-edge capability.

- Use case: Sector-wide AI literacy and ethics standards focused on attribution

- Sectors: Professional societies (AMA, IEEE, ABA), education ministries

- What it looks like: Canonical curricula and codes of conduct that define appropriate AI use, attribution norms, and user responsibilities for calibrated self-assessment.

- Assumptions/Dependencies: Multi-stakeholder consensus; periodic updates; integration into CPD/CE requirements.

Each application is designed to counter the paper’s central risk—misattribution of AI-assisted performance as independent human competence—by making process visible, measuring capability divergence, and aligning incentives for accurate self- and external assessment. Feasibility hinges on organizational will, tooling support (provenance and logging), validated measurement, and policy alignment.

Glossary

- Attribution ambiguity: Unclear delineation of who contributed what in human–AI outputs, making authorship hard to assign. "A primary mechanism is attribution ambiguity between human input and model output."

- Attributional distortion: Systematic misassignment of causes for outcomes, here misattributing AI-assisted results to oneself. "distinguishing it as a form of attributional distortion specific to AI-mediated workflows."

- Attributional misalignment: A mismatch between actual human vs. system contributions and what users believe they contributed. "At its core, the phenomenon reflects an attributional misalignment between human and system contributions."

- Automation bias: The tendency to over-rely on automated systems and accept their outputs uncritically. "automation bias, which describes the tendency to over-rely on automated systems"

- Capability divergence: The gap between perceived and actual ability, amplified by AI assistance. "capability divergence (ΔC, defined as the gap between perceived and actual capability) emerges from the interaction of system-level properties"

- Cognitive offloading: Shifting mental tasks to external aids, reducing internal cognitive effort. "cognitive offloading, in which individuals externalize mental processes by relying on external systems"

- Cognitive outsourcing: Delegating reasoning and composition to AI systems, reducing one’s own engagement. "Cognitive outsourcing further contributes to the phenomenon."

- Distributed cognitive architectures: Cognitive systems where tools (like LLMs) are integrated components of thinking processes. "LLMs as components within distributed cognitive architectures rather than as external aids."

- Dual-process perspective: A theory distinguishing fast, intuitive thinking from slow, reflective thinking in judgment and reasoning. "From a dual-process perspective, such misattributions arise when fast, intuitive judgments dominate reflective evaluation"

- Epistemic alignment: The degree to which AI-delivered knowledge matches user understanding and needs. "epistemic alignment in human–LLM interaction"

- Epistemic domain: The sphere concerned with knowledge acquisition and understanding. "In the epistemic domain, the LLM fallacy is observed in knowledge acquisition and understanding."

- Epistemic validity: The truth-related soundness of knowledge claims, independent of attribution. "it operates at the level of attribution rather than epistemic validity."

- Extended mind framework: The view that cognitive processes can extend beyond the brain into tools and environments. "The extended mind framework further develops this perspective"

- Fluency illusion: Mistaking ease and polish of generated language for genuine understanding or skill. "A second mechanism is the fluency illusion produced by high-quality natural language generation."

- Hallucination: Model-generated content that is incorrect or fabricated. "Hallucination refers to cases in which a model produces incorrect or fabricated information"

- Human-centered AI design: Designing AI with a focus on user needs, transparency, and interpretability. "Research in human-centered AI design further highlights the role of transparency and interpretability"

- Human–AI collaboration: Joint human–AI work where systems act as partners in task execution. "More recent work in human–AI collaboration examines how users engage with AI systems as partners in task execution"

- Human-in-the-loop: A workflow where humans remain actively involved in oversight and decision-making. "This workflow reflects a human-in-the-loop, human-in-control, and human-as-final-author model of collaboration."

- Human–machine teaming: Coordinated human and machine cooperation where performance emerges from their interaction. "In human–machine teaming, performance emerges from interaction rather than from the isolated capabilities of either component"

- Illusion of explanatory depth: Overestimating how well one understands complex systems. "This aligns with the illusion of explanatory depth, in which individuals overestimate their understanding of complex systems"

- Interactional immediacy: Rapid, low-friction response cycles in LLM interactions that blur process visibility. "LLM interaction properties (opacity, fluency, and interactional immediacy) shape cognitive mediation processes"

- LLM fallacy: Misinterpreting AI-assisted outputs as evidence of one’s independent competence. "The LLM fallacy is defined as a cognitive attribution error in which individuals misinterpret LLM-assisted outputs as evidence of their own independent competence."

- Metacognitive cue: A signal used to judge one’s own knowledge or performance, such as perceived fluency. "High fluency can function as a metacognitive cue"

- Metacognitive monitoring: Assessing and regulating one’s own knowledge and cognitive processes. "a failure of metacognitive monitoring, in which individuals are unable to accurately assess the sources and limits of their own knowledge"

- Natural Language Declarative Prompting (NLD-P): A structured prompting methodology for controlling and constraining LLM behavior. "Natural Language Declarative Prompting (NLD-P) framework"

- Pipeline opacity: Lack of visibility into the intermediate steps by which LLMs generate outputs. "Another critical factor is pipeline opacity, referring to the invisibility of the processes that generate LLM outputs."

- Professional signaling: Representing or advertising one’s skills and expertise to external evaluators. "In the domain of professional signaling, the phenomenon manifests in how individuals represent their capabilities"

- Proxy tasks: Stand-in tasks used for evaluation that may not reflect true competence. "proxy tasks and subjective evaluation measures can produce misleading signals"

- Trust calibration: Adjusting the level of trust in AI systems to match their capabilities and limitations. "user trust calibration"