- The paper introduces a novel framework that uses a learnable reference vector and soft dot product quantization to achieve stable training and robust SID generation.

- It introduces two SID quality metrics, semantic cohesion and preference discrimination, which serve as direct evaluation proxies and as regularizers, and which correlate strongly with key downstream performance metrics.

- Empirical results show average relative gains of 14.2% in Recall@10 and 15.5% in NDCG@10 on Amazon benchmarks, and confirm its effectiveness in both cold-start scenarios and large-scale industrial applications.

Reference Vector-Guided Rating Residual Quantization VAE for Generative Recommendation

Introduction and Motivation

Recommendation has shifted markedly with the advent of Generative Recommendation (GR), which uses LLMs for end-to-end retrieval over Semantic Identifiers (SIDs), sequences of discrete tokens that encode item semantics. GR eliminates legacy infrastructure such as embedding tables and approximate nearest neighbor (ANN) indexes, offering a more integrated workflow. However, the prevailing SID generation paradigm, vector quantization with Residual Quantization VAE (RQ-VAE) and its variants, suffers from two critical deficiencies: training instability caused by sub-optimal gradient propagation (notably the limitations of the straight-through estimator, STE), and the absence of principled, direct metrics for evaluating SID quality without full-pipeline A/B testing.

The R3-VAE Framework

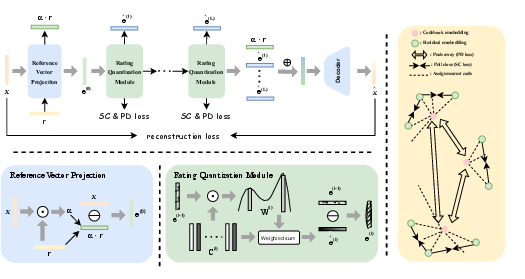

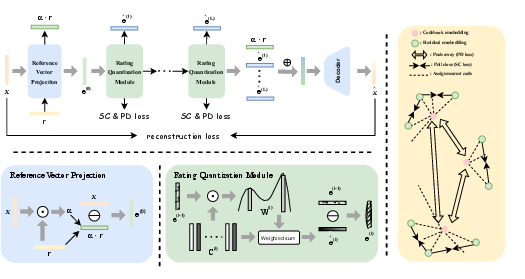

The R3-VAE framework directly addresses these bottlenecks via three architectural innovations:

- Reference Vector Anchoring: The model introduces a learnable reference vector that serves as a semantic anchor for projecting item embeddings, improving alignment with the semantic structure of recommendation data. This anchoring ensures that the initial residuals encode preference-relevant signals and makes clustering robust to suboptimal initialization.

- Dot Product-Based Rating Quantization: Instead of RQ-VAE's STE-based hard codebook lookup, R3-VAE applies a differentiable, softmax-weighted dot product (termed “rating”) so that gradients propagate more faithfully. At each level, the residual is updated using a weighted combination of codewords, with weights derived from angular similarity; empirically, this yields more stable training and eliminates codebook collapse.

- SID Quality Metrics with Regularization: Two new evaluation metrics, Semantic Cohesion (SC, intra-cluster cosine similarity) and Preference Discrimination (PD, inter-cluster angular divergence), serve both as evaluation proxies and as regularization terms in the training objective, enabling more direct and data-efficient model selection.
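The reference-vector anchoring and rating quantization described above can be sketched as a hierarchical soft residual quantizer. This is a minimal, framework-free sketch under stated assumptions, not the authors' implementation: the first residual is assumed to be the item embedding minus the reference vector (the paper's actual projection may differ), ratings are assumed to be cosine similarities, and the names `soft_rating_quantize`, `ref`, and `temperature` are hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity; the small epsilon guards against zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb + 1e-12)

def softmax(scores, temperature):
    """Numerically stable softmax with a temperature."""
    m = max(scores)
    exps = [math.exp((s - m) / temperature) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def soft_rating_quantize(x, ref, codebooks, temperature=0.1):
    """Hierarchical soft ("rating") quantization of one item embedding.

    HYPOTHETICAL: the first residual is x - ref (the paper's projection may
    differ), and ratings are cosine similarities between residual and codewords.
    Returns the hard SID (argmax per level) and the soft reconstruction.
    """
    dim = len(x)
    residual = [xi - ri for xi, ri in zip(x, ref)]
    recon = list(ref)
    sid = []
    for codebook in codebooks:
        ratings = [cosine(residual, c) for c in codebook]
        weights = softmax(ratings, temperature)
        # Soft codeword: a weighted sum over ALL codewords. In an autodiff
        # framework every codeword receives gradient, unlike an STE hard lookup.
        soft_code = [sum(w * c[d] for w, c in zip(weights, codebook)) for d in range(dim)]
        # The discrete token for this level is the highest-rated codeword.
        sid.append(max(range(len(codebook)), key=lambda k: ratings[k]))
        residual = [r - s for r, s in zip(residual, soft_code)]
        recon = [r + s for r, s in zip(recon, soft_code)]
    return sid, recon
```

For example, `soft_rating_quantize([0.0, 1.0], [0.0, 0.0], [[[1.0, 0.0], [0.0, 1.0]], [[0.5, 0.0], [0.0, 0.5]]])` assigns the SID `[1, 1]`. Lowering `temperature` pushes the weights toward a one-hot vector (approaching a hard lookup), while a higher value spreads gradient across more codewords.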

Figure 1: The overall R3-VAE pipeline, with reference vector projection, hierarchical dot product quantization, decoder, and metric-based regularization.
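The SC and PD metrics listed above can be approximated from item embeddings and their first-level codes. This sketch assumes SC is the mean pairwise cosine similarity within each code cluster, averaged over clusters, and reads "angular divergence" as the mean angle between cluster centroids; the paper's exact normalization and sign convention may differ, and all function names are hypothetical.

```python
import math
from itertools import combinations

def cosine(a, b):
    """Cosine similarity (assumes non-zero vectors)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb)

def centroid(vectors):
    n = len(vectors)
    return [sum(v[d] for v in vectors) / n for d in range(len(vectors[0]))]

def group_by_code(embeddings, codes):
    """Map each code to the list of embeddings assigned to it."""
    clusters = {}
    for emb, code in zip(embeddings, codes):
        clusters.setdefault(code, []).append(emb)
    return clusters

def semantic_cohesion(embeddings, codes):
    """Mean intra-cluster pairwise cosine similarity (higher = tighter clusters)."""
    per_cluster = []
    for members in group_by_code(embeddings, codes).values():
        pairs = list(combinations(members, 2))
        if pairs:  # singleton clusters have no pairs and are skipped
            per_cluster.append(sum(cosine(a, b) for a, b in pairs) / len(pairs))
    return sum(per_cluster) / len(per_cluster) if per_cluster else 0.0

def preference_discrimination(embeddings, codes):
    """Mean pairwise angle (radians) between cluster centroids.

    Larger values mean the centroids point in more different directions.
    """
    cents = [centroid(m) for m in group_by_code(embeddings, codes).values()]
    pairs = list(combinations(cents, 2))
    if not pairs:
        return 0.0
    # Clamp to [-1, 1] so floating-point error cannot push acos out of domain.
    angles = [math.acos(max(-1.0, min(1.0, cosine(a, b)))) for a, b in pairs]
    return sum(angles) / len(angles)
```

Because both functions are built from cosine similarities, a differentiable version of either could in principle be added to a training loss as a regularizer, which is the role the summary describes.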

Empirical Analysis and Quantitative Results

R3-VAE demonstrates substantial improvements over leading baselines on six benchmark datasets: three Amazon subsets (Beauty, Sports, Toys), LastFM, ML1M, and Clothing. On the Amazon datasets it achieves average relative gains of 14.2% in Recall@10 and 15.5% in NDCG@10. On the large-scale Toutiao industrial dataset, it delivers a 1.62% lift in MRR and a 0.83% improvement in StayTime/U over R-KMeans. Most notably, in a CTR task it produces a 15.36% increase in click volume on cold-start content, supporting the use of SIDs as a universal replacement for item IDs in both discriminative and generative architectures.
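To make the reported numbers concrete, the metrics and relative gains above can be computed as follows. This sketch assumes the common leave-one-out protocol with a single held-out target item per user; the evaluation protocol is not specified in this summary, and the function names are hypothetical.

```python
import math

def recall_at_k(ranked_items, target, k=10):
    """Leave-one-out Recall@K: 1 if the held-out item is in the top K, else 0."""
    return 1.0 if target in ranked_items[:k] else 0.0

def ndcg_at_k(ranked_items, target, k=10):
    """Leave-one-out NDCG@K with one relevant item, so the ideal DCG is 1."""
    top_k = ranked_items[:k]
    if target not in top_k:
        return 0.0
    rank = top_k.index(target)  # 0-based position in the ranking
    return 1.0 / math.log2(rank + 2)

def relative_gain(new, baseline):
    """Relative improvement of `new` over `baseline`, in percent."""
    return 100.0 * (new - baseline) / baseline
```

For illustration only (these are not the paper's figures), a Recall@10 moving from 0.0500 to 0.0571 is a relative gain of 14.2%; per-user scores are averaged over all test users before comparing models.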

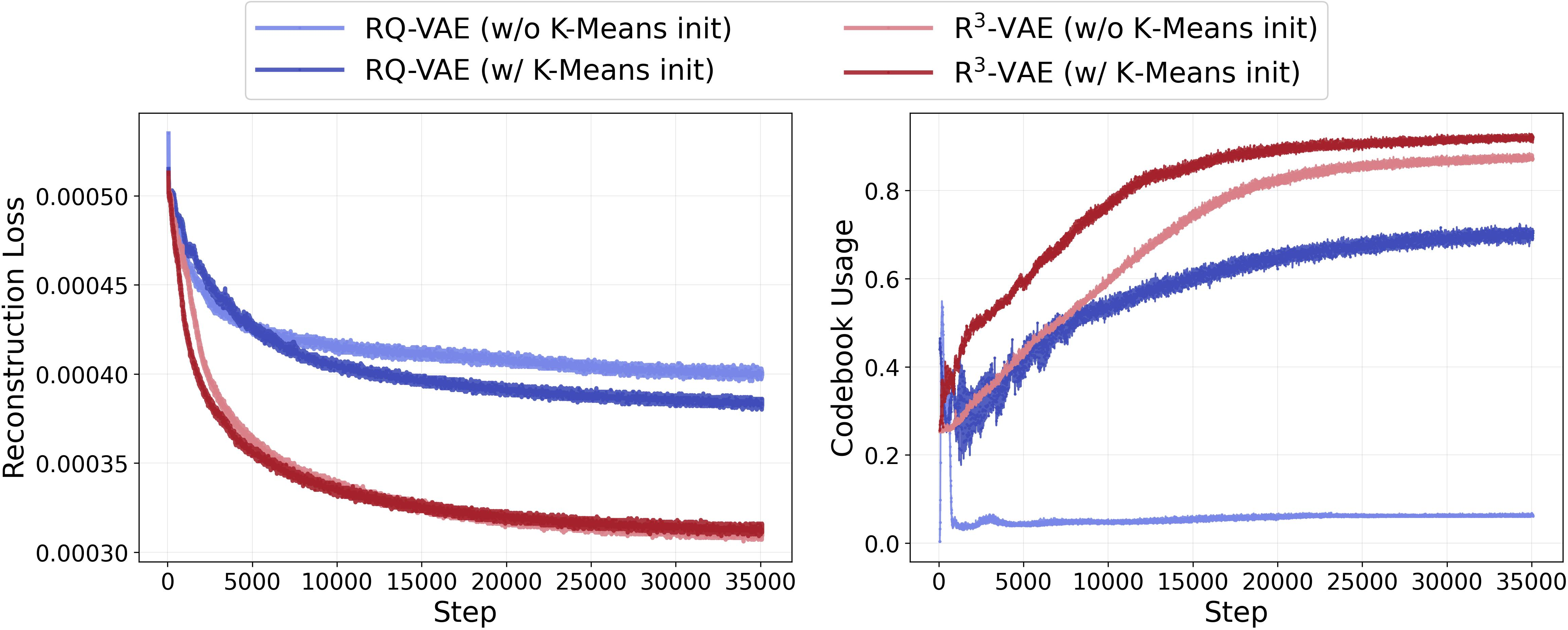

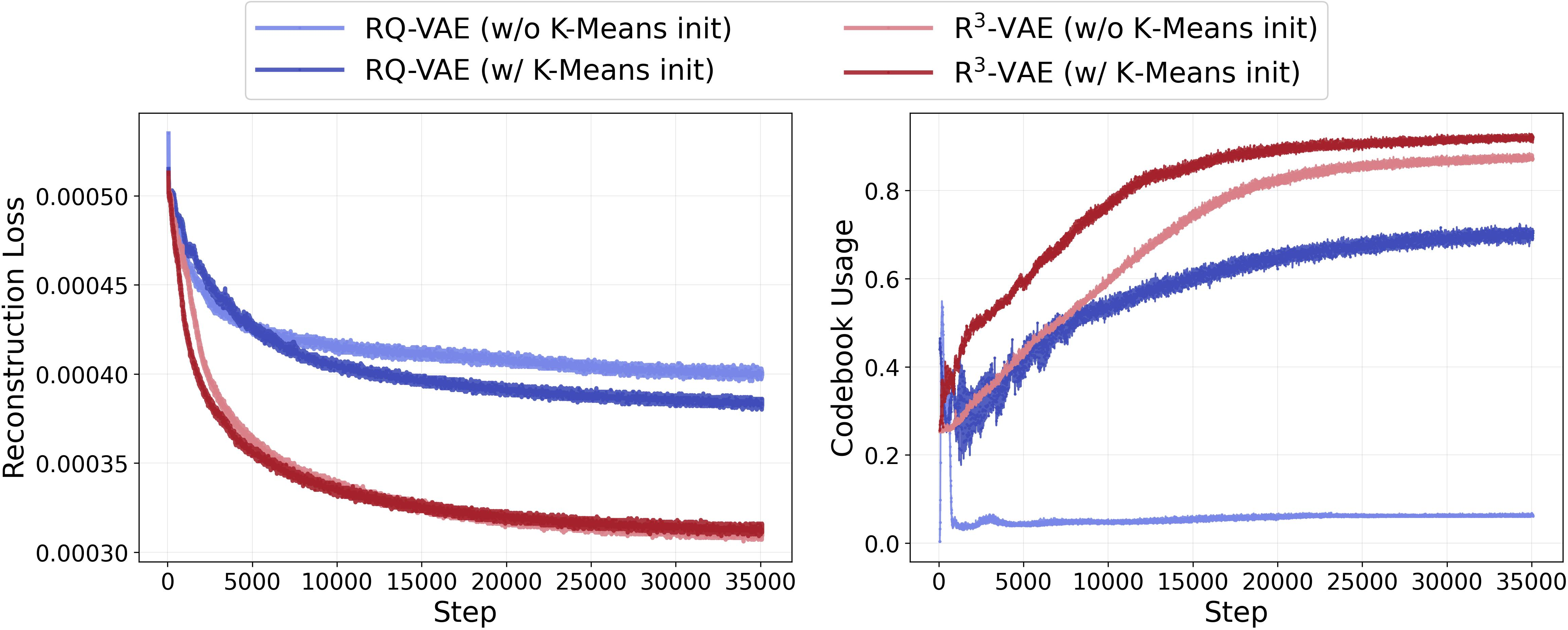

R3-VAE achieves near-complete codebook utilization and fast, stable loss convergence regardless of initialization, whereas competing VQ approaches show persistent codebook under-utilization and high sensitivity to initialization.

Figure 2: Training stability: R3-VAE achieves fast convergence and near-full codebook activation, regardless of KMeans initialization, compared to RQ-VAE.
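Codebook usage of the kind shown in Figure 2 can be quantified per quantization level. The sketch below computes the fraction of active codewords and a Gini coefficient over codeword usage counts; both are common choices assumed here for illustration, not necessarily the paper's exact definitions, and codes are assumed to be integers in `0..codebook_size-1`.

```python
from collections import Counter

def codebook_utilization(codes, codebook_size):
    """Fraction of codewords at one level assigned to at least one item."""
    return len(set(codes)) / codebook_size

def usage_gini(codes, codebook_size):
    """Gini coefficient of codeword usage counts.

    0 means perfectly even usage; values near 1 indicate collapse onto a few
    codewords. Unused codewords count as zero usage.
    """
    counts = Counter(codes)
    usage = sorted(counts.get(k, 0) for k in range(codebook_size))
    n, total = len(usage), sum(usage)
    if total == 0:
        return 0.0
    # Sorted-values formula: G = sum_i (2i - n - 1) * x_i / (n * sum(x)), i = 1..n
    weighted = sum((2 * (i + 1) - n - 1) * x for i, x in enumerate(usage))
    return weighted / (n * total)
```

A collapsed level in which every item maps to the same codeword has utilization `1 / codebook_size` and a Gini of `(codebook_size - 1) / codebook_size`, the maximum for that size.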

Embedding Visualization and Embedding Dynamics

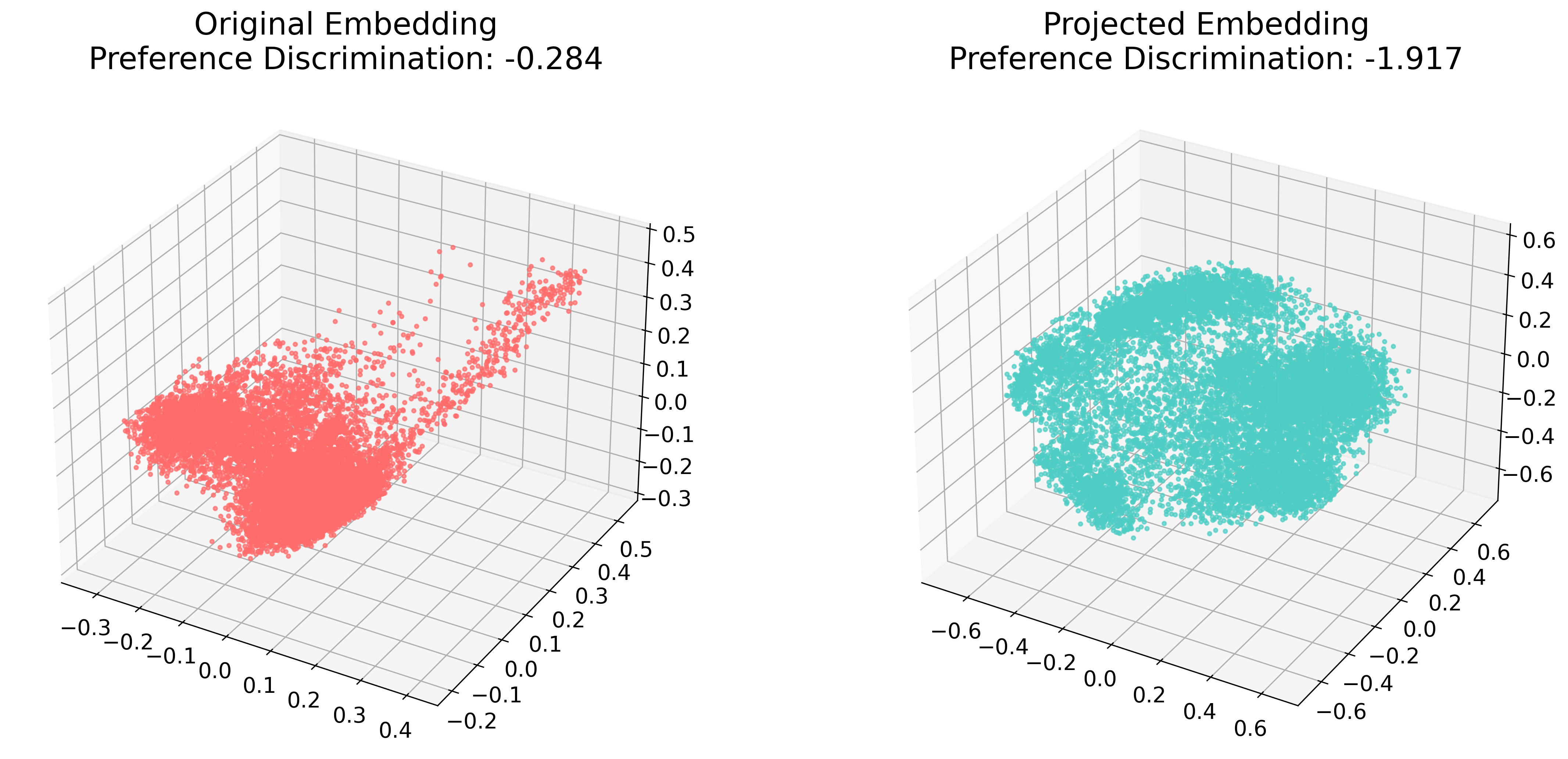

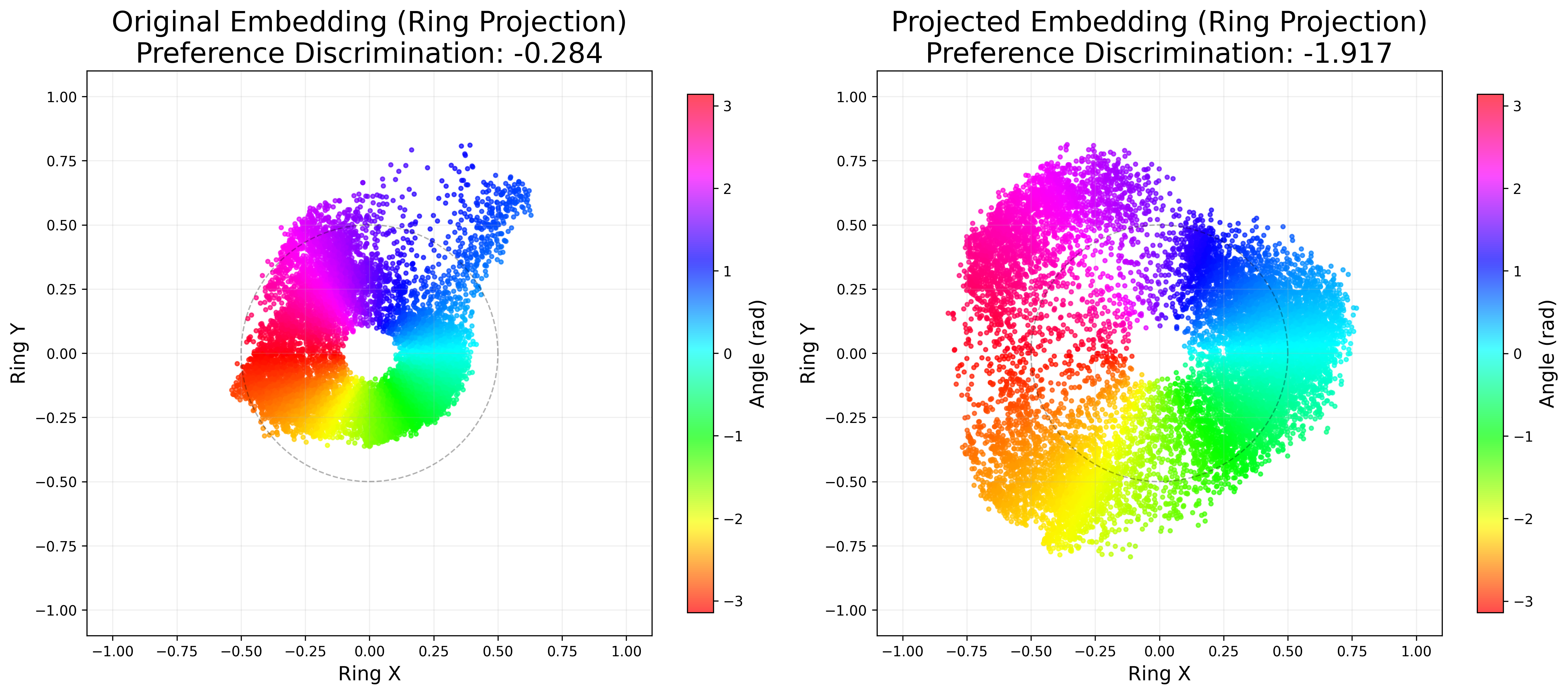

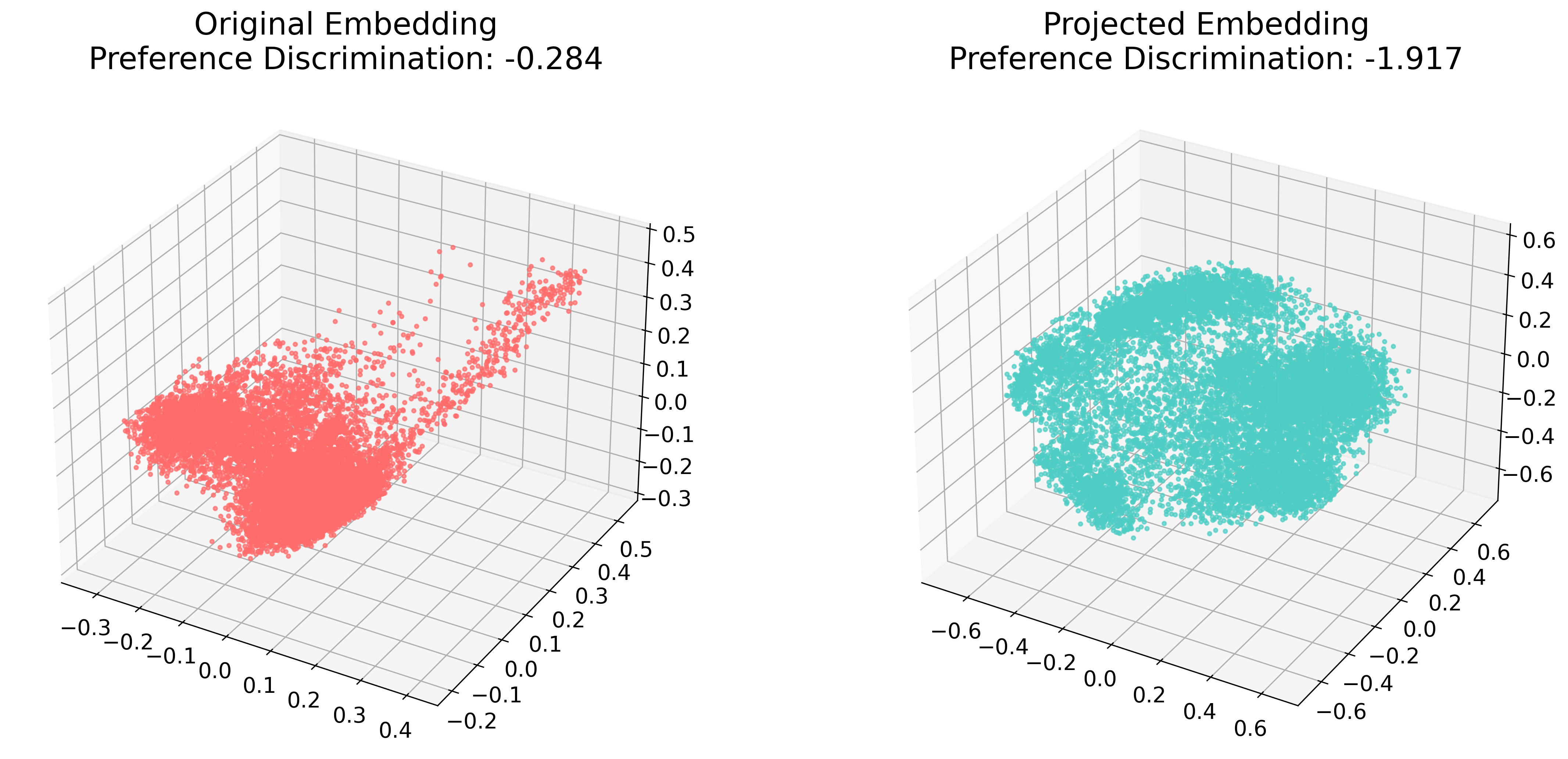

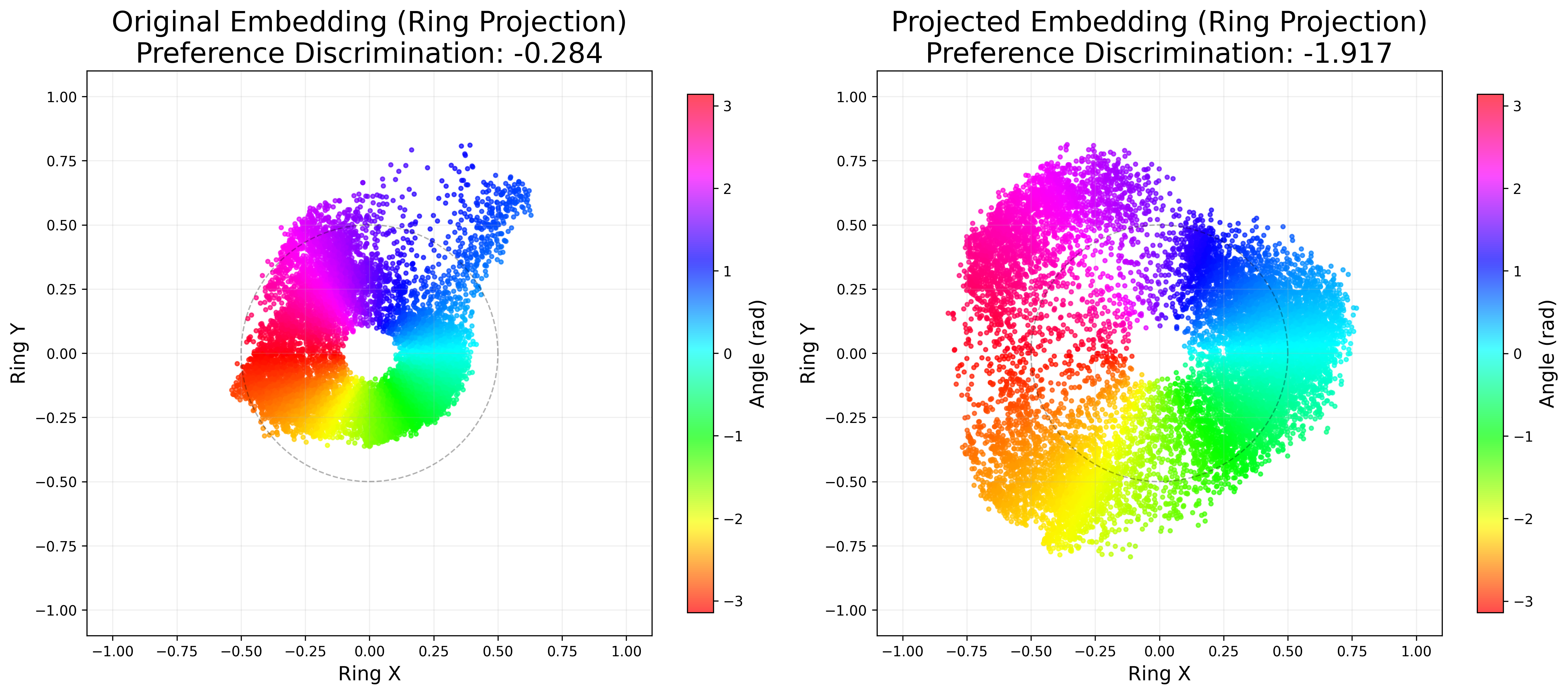

The effects of reference vector projection are illustrated by low-dimensional visualizations: 3D PCA and 2D ring projections show that projected embeddings are more dispersed, which improves cluster separability and angular uniformity, as reflected in the preference discrimination score.

Figure 3: Embeddings before (left) and after (right) reference vector projection: increased spatial dispersion in projected space enhances cluster separability.

Figure 4: 2D ring projections before (left) and after (right) projection: improved angular uniformity translates to lower preference discrimination, facilitating robust clustering.
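One standard way to put a number on the angular uniformity visible in the ring projections is the mean resultant length from circular statistics. This is a swapped-in measure offered as a hypothetical companion to the figures, not the paper's PD score, and it assumes the embeddings have already been projected to 2D.

```python
import math

def mean_resultant_length(points_2d):
    """Circular-statistics spread of 2D points around the origin.

    Each point is reduced to its angle, and R is the length of the mean unit
    vector. R near 0: angles spread evenly around the ring; R = 1: all points
    share one direction.
    """
    angles = [math.atan2(y, x) for x, y in points_2d]
    c = sum(math.cos(a) for a in angles) / len(angles)
    s = sum(math.sin(a) for a in angles) / len(angles)
    return math.hypot(c, s)
```

Because only angles enter the computation, the measure ignores radial distance, which matches the ring-style view where points are compared by direction alone.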

Theoretical and Practical Implications

The innovations in R3-VAE, particularly soft dot product quantization and reference-vector-guided SID construction, mitigate the core deficiencies of previously dominant approaches. The proposed SC and PD metrics show strong Spearman rank correlations with downstream MRR and Recall@10 (up to 0.94 in GR and 0.90 in discriminative settings), surpassing Collision Rate and Gini as SID quality proxies and enabling more efficient offline tuning and deployment cycles.
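Checking whether a SID quality proxy tracks downstream performance amounts to a rank correlation across trained tokenizer variants. A minimal Spearman implementation with tie-averaged ranks is sketched below; in practice one would pair, for example, each variant's SC score with its measured MRR. The function names are hypothetical.

```python
def average_ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation (assumes neither input is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5

def spearman(x, y):
    """Spearman's rho: the Pearson correlation of tie-averaged ranks."""
    return pearson(average_ranks(x), average_ranks(y))
```

Using ranks rather than raw values makes the comparison insensitive to the scale of each metric, which suits proxies like SC and PD whose units differ from MRR or Recall@10.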

These results support semantic-anchor-based hierarchical quantization with metric-driven regularization as an effective approach to robust, scalable SID construction for industrial-scale GR and CTR. The production deployment of R3-VAE further confirms its operational validity and transferability.

Future Directions

Potential research avenues facilitated by R3-VAE include:

- End-to-end integration of SID generative models with multi-modal and reasoning-enhanced retrieval architectures.

- Application of reference vector initialization in other residual learning systems.

- Automatic annealing or adaptation of SC/PD regularization weights for scenario-specific model calibration.

- Extension to zero-shot SID generalization on cold-start item domains.

Conclusion

R3-VAE introduces a robust SID generation framework that unifies semantic anchoring, stable gradient propagation, and principled SID evaluation for generative recommendation. Its demonstrated empirical advantages and the strong correlation of its quality metrics with downstream performance make it a significant step toward scalable, high-fidelity item tokenization in both academic and industrial contexts (2604.11440).