- The paper introduces a novel coordination mechanism that fuses multi-resolution semantic IDs with traditional hashed IDs to improve short-video search ranking.

- It employs adaptive attention-based fusion and target-aware gating to balance semantic generalization and memorization based on item popularity.

- Empirical results on a large-scale platform show measurable gains in offline AUC and online engagement metrics, validating the framework's practical impact.

Coordinating Semantic and ID-based Modeling for Short-Video Search Ranking: An Analysis of SID-Coord

Introduction

Contemporary short-video search platforms rely heavily on ID-based ranking models that encode user–item interactions as hashed item identifiers (HIDs). This paradigm works well for high-frequency head items but generalizes poorly to long-tail items, whose co-occurrence data is sparse. Existing solutions that add dense semantic embeddings partially mitigate this memorization–generalization trade-off, but they adapt poorly to the ranking task and add non-trivial inference overhead because they are only loosely integrated into production systems. The paper "SID-Coord: Coordinating Semantic IDs for ID-based Ranking in Short-Video Search" (2604.10471) addresses these deficiencies with a coordination mechanism that fuses discrete, multi-level trainable Semantic IDs (SIDs) with traditional HIDs, enabling scalable and adaptive modeling of semantic abstraction and memorization in large-scale short-video search systems.

Overview of SID-Coord Framework

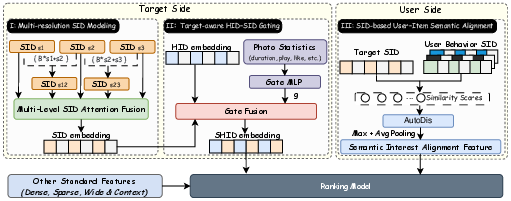

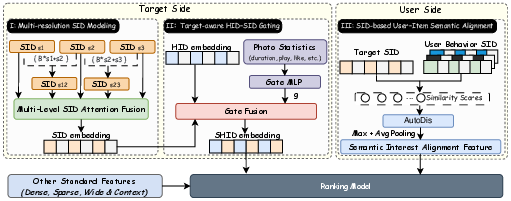

SID-Coord proposes an explicit coordination framework between semantic generalization and HID-based memorization, operationalized through three core innovations: (1) multi-resolution hierarchical SID modeling with adaptive attention-based fusion, (2) target-aware gating of HID and SID signals sensitive to item popularity, and (3) SID-driven user–item semantic alignment across historical behavior sequences.

Figure 1: Overview of the proposed SID-Coord framework illustrating integration of hierarchical SIDs, popularity-aware gating, and interest alignment.

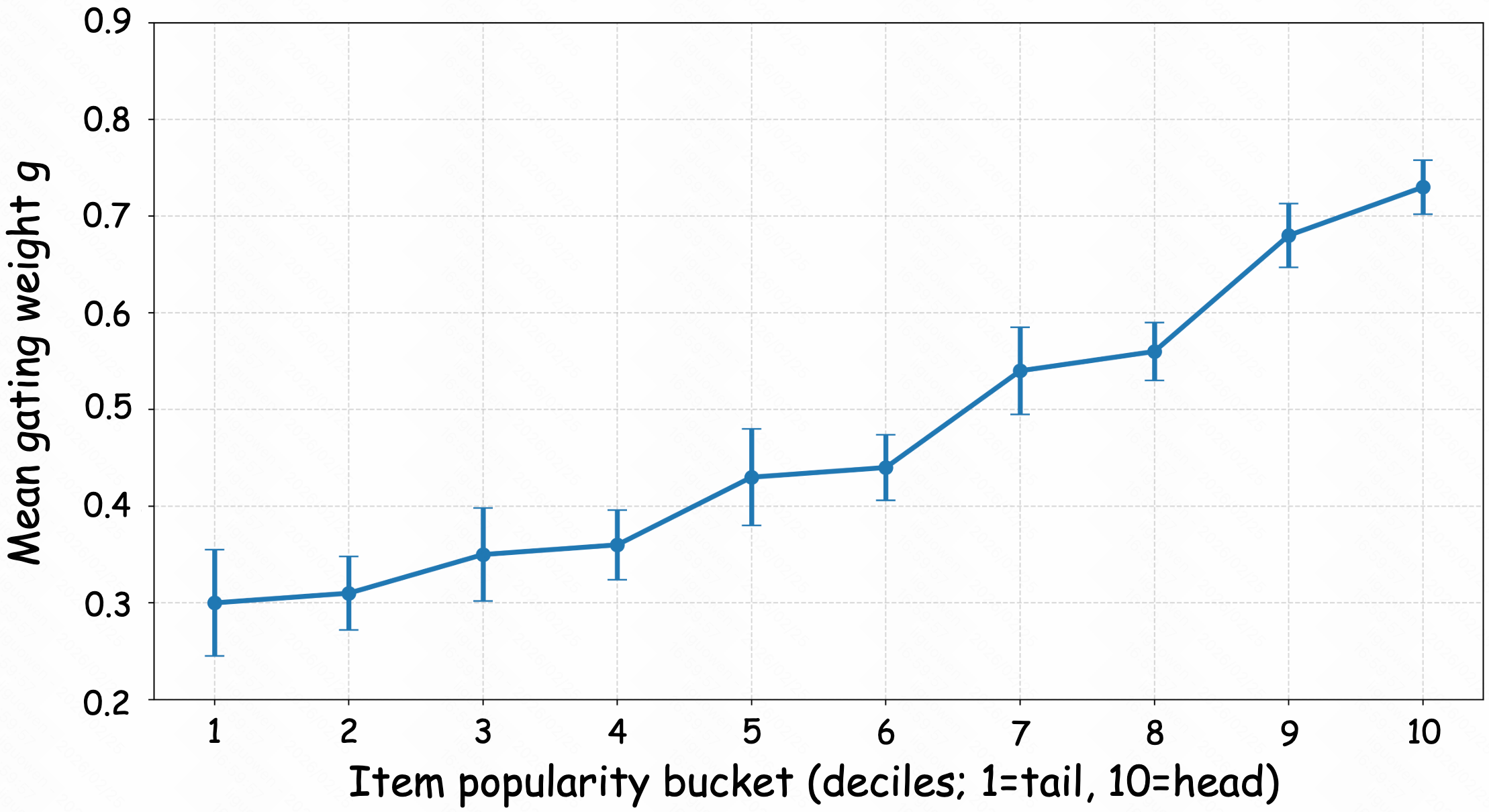

SID-Coord models hierarchical SIDs extracted via multi-level residual quantization, so that successive levels reflect coarse-to-fine semantic granularity. An attention module fuses the elemental and composite SIDs adaptively, weighting the semantic granularity best suited to each item. HID and SID signals are coordinated by a popularity-aware gating mechanism: the gating weight is estimated dynamically from interaction statistics, emphasizing memorization for popular (head) items and semantic generalization for long-tail content.

Multi-Resolution SID Modeling

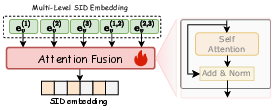

Hierarchical SID encoding leverages residual quantization to generate three levels of discrete codes per item. Composite SIDs are formed using radix-based composition, maintaining structured granularity while controlling the parameter space. Attention-based fusion selects among elemental and composite SIDs, yielding an embedding that adapts flexibly to varying item needs.
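The encoding step described above can be illustrated with a minimal sketch. It assumes fixed toy codebooks, squared Euclidean nearest-neighbour assignment, and a base-K positional packing for the composite ID; the function names (`residual_quantize`, `radix_compose`) and all values are hypothetical, and the paper's trainable codebooks and exact composition rule may differ.

```python
from typing import List, Sequence

Vector = Sequence[float]


def nearest_code(vec: Vector, codebook: Sequence[Vector]) -> int:
    """Index of the codeword closest to vec (squared Euclidean distance)."""
    def sq_dist(codeword: Vector) -> float:
        return sum((v - c) ** 2 for v, c in zip(vec, codeword))
    return min(range(len(codebook)), key=lambda k: sq_dist(codebook[k]))


def residual_quantize(vec: Vector, codebooks: Sequence[Sequence[Vector]]) -> List[int]:
    """One code per level; each level quantizes the residual left by the previous one."""
    residual = list(vec)
    codes = []
    for codebook in codebooks:
        k = nearest_code(residual, codebook)
        codes.append(k)
        residual = [r - c for r, c in zip(residual, codebook[k])]
    return codes


def radix_compose(codes: Sequence[int], codebook_size: int) -> int:
    """Pack a code prefix into one integer, treating codes as base-K digits (assumed scheme)."""
    composite = 0
    for code in codes:
        composite = composite * codebook_size + code
    return composite


# Toy setup (assumption): three levels, K = 2 codewords per level, 2-D embeddings.
K = 2
CODEBOOKS = [
    [[0.0, 0.0], [4.0, 4.0]],    # level 1: coarse
    [[0.0, 0.0], [1.0, 1.0]],    # level 2: medium
    [[0.0, 0.0], [0.25, 0.25]],  # level 3: fine
]

codes = residual_quantize([4.9, 5.1], CODEBOOKS)  # [1, 1, 0]
composite_12 = radix_compose(codes[:2], K)         # levels 1-2 -> 3
composite_123 = radix_compose(codes, K)            # levels 1-3 -> 6
print(codes, composite_12, composite_123)
```

Under this packing, a composite over the first n levels takes at most K^n distinct values, which is one way to read the claim that the scheme keeps the parameter space controlled.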

Figure 2: SID Multi-Level Attention Fusion Mechanism, demonstrating how the framework adaptively fuses multi-granular SIDs per item.

This structured approach yields two key advantages over n-gram or prefix-based SID integration: (1) it preserves locality in latent semantic space, supporting robust discrimination without fragmentation, and (2) it enables efficient scaling by avoiding combinatorial explosion.
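A rough sketch of the attention-based fusion follows, assuming scaled dot-product attention of a single query vector over the elemental and composite SID embeddings. The source of the query, the embedding values, and the name `attention_fuse` are illustrative assumptions rather than details taken from the paper.

```python
import math
from typing import List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fuse(
    query: Sequence[float], candidates: Sequence[Sequence[float]]
) -> Tuple[List[float], List[float]]:
    """Weight each candidate SID embedding by its attention score and sum them."""
    dim = len(query)
    scores = [
        sum(q * c for q, c in zip(query, cand)) / math.sqrt(dim)
        for cand in candidates
    ]
    weights = softmax(scores)
    fused = [
        sum(w * cand[d] for w, cand in zip(weights, candidates))
        for d in range(dim)
    ]
    return fused, weights


# Toy embeddings (assumption): three elemental SIDs plus two composite SIDs.
candidates = [
    [1.0, 0.0],  # level-1 SID
    [0.0, 1.0],  # level-2 SID
    [0.5, 0.5],  # level-3 SID
    [1.0, 1.0],  # composite of levels 1-2
    [0.2, 0.8],  # composite of levels 1-3
]
query = [0.3, 0.7]  # hypothetical item-side query vector

fused, weights = attention_fuse(query, candidates)
print([round(w, 3) for w in weights], [round(x, 3) for x in fused])
```

Because the weights come from a softmax, every granularity contributes to the fused embedding; the attention shifts emphasis between them rather than making a hard choice.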

Target-aware HID–SID Gating and Popularity-aware Coordination

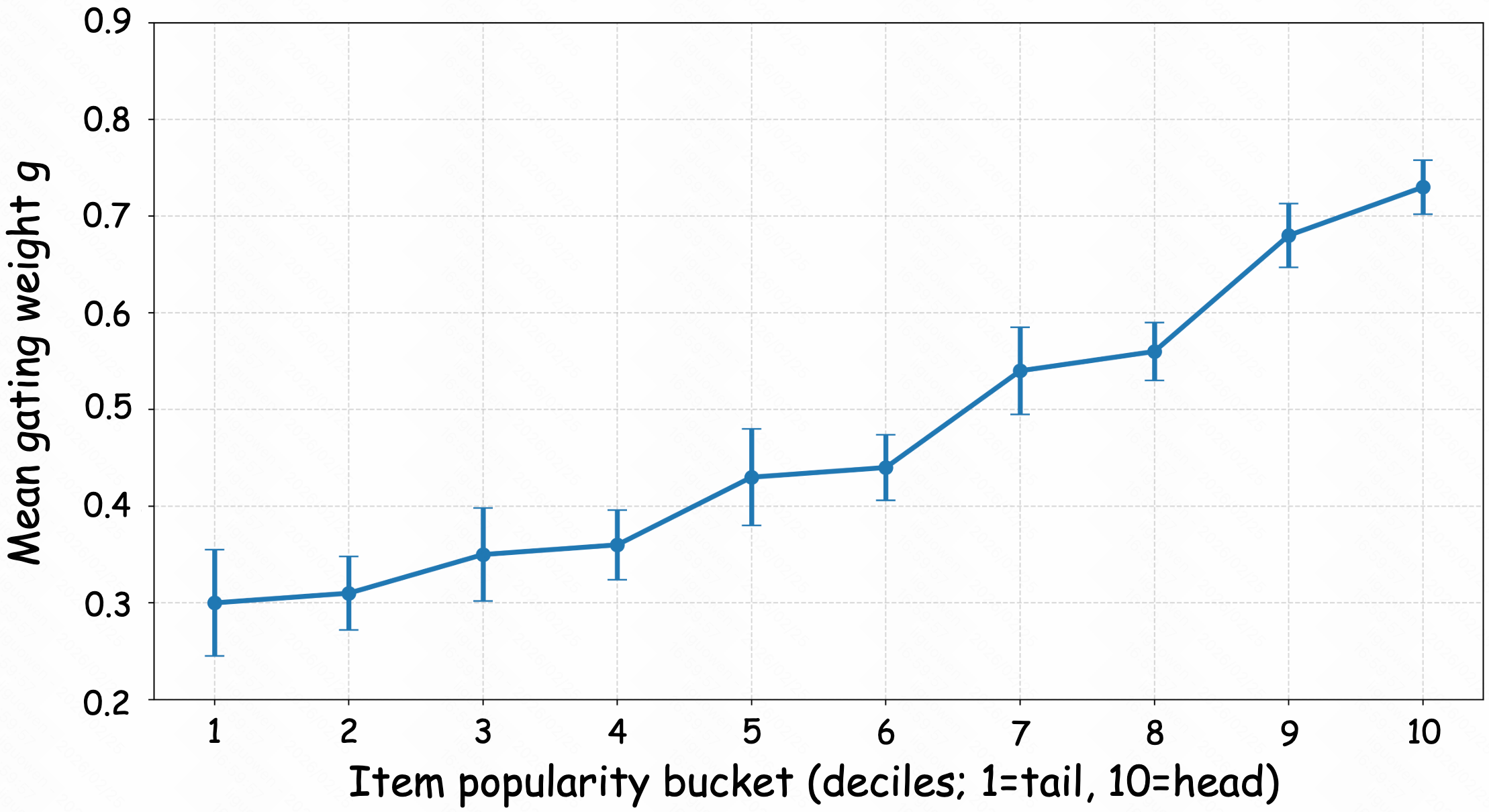

To balance HID-based memorization and SID-driven generalization, SID-Coord introduces a target-aware gating module. The gating weight is predicted from exposure and engagement statistics and controls the convex combination of HID and SID embeddings. Empirical evidence demonstrates this mechanism yields monotonic adaptation: as item popularity increases, gating increasingly favors HID, while for sparse long-tail items, SID carries more weight.

Figure 3: Analysis of popularity-aware gating across item popularity buckets, visualizing the calibration of the gating function.

This dynamic, data-driven fusion contrasts with static weighting or naive feature concatenation, and it adapts to the heterogeneity of interaction data across items. The reported results indicate that such gating is more than an architectural convenience: it is needed to perform well on both head and tail items.
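The convex combination behind the gate can be sketched as below. In the paper the gate is predicted by a learned module; here a fixed logistic function of log-scaled counts stands in for it, and the coefficients, the function names, and the example statistics are all assumptions made for illustration.

```python
import math
from typing import List, Sequence


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def popularity_gate(
    exposures: int,
    engagements: int,
    w_exp: float = 0.8,   # hypothetical coefficient, not a learned value
    w_eng: float = 0.4,   # hypothetical coefficient, not a learned value
    bias: float = -6.0,   # hypothetical bias
) -> float:
    """Gate in (0, 1); larger values put more weight on the HID embedding."""
    z = w_exp * math.log1p(exposures) + w_eng * math.log1p(engagements) + bias
    return sigmoid(z)


def gated_fusion(
    hid_emb: Sequence[float], sid_emb: Sequence[float], gate: float
) -> List[float]:
    """Convex combination: gate * HID + (1 - gate) * SID."""
    return [gate * h + (1.0 - gate) * s for h, s in zip(hid_emb, sid_emb)]


hid_emb = [1.0, 0.0]  # toy memorization embedding
sid_emb = [0.0, 1.0]  # toy semantic embedding

tail_gate = popularity_gate(exposures=10, engagements=1)
head_gate = popularity_gate(exposures=1_000_000, engagements=100_000)

print("tail:", round(tail_gate, 4), gated_fusion(hid_emb, sid_emb, tail_gate))
print("head:", round(head_gate, 4), gated_fusion(hid_emb, sid_emb, head_gate))
```

With positive coefficients the gate increases monotonically with both counts, which mirrors the behaviour the paper reports: the fused embedding of a long-tail item stays close to its SID embedding, while that of a head item stays close to its HID embedding.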

SID-based User–Item Interest Alignment

SID-Coord extends semantic modeling to user sequence modeling by computing similarity scores between the candidate item's SIDs and the SIDs of items in the user's behavior history, with the score distribution stabilized via AutoDis. Both the maximum and the mean of these similarities are fed into the ranking model as features that capture user interests beyond explicit IDs. This semantic alignment augments content-level affinity estimation, which matters most when a user's behavioral history looks scattered in HID space yet remains semantically consistent.
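The max and mean aggregation can be sketched as follows. The summary does not specify the similarity function, so this example substitutes a hypothetical one (the fraction of leading SID levels two items share) and omits the AutoDis stabilization step entirely; `prefix_similarity` and `alignment_features` are illustrative names.

```python
from typing import Sequence, Tuple

SID = Sequence[int]  # one code per quantization level, coarse to fine


def prefix_similarity(a: SID, b: SID) -> float:
    """Hypothetical similarity: share of leading levels on which two SIDs agree."""
    depth = 0
    for x, y in zip(a, b):
        if x != y:
            break
        depth += 1
    return depth / len(a)


def alignment_features(candidate: SID, history: Sequence[SID]) -> Tuple[float, float]:
    """Return (max, mean) similarity between the candidate and the history items."""
    if not history:
        return 0.0, 0.0  # assumed default for users without history
    sims = [prefix_similarity(candidate, h) for h in history]
    return max(sims), sum(sims) / len(sims)


candidate = (1, 1, 0)
history = [(1, 1, 0), (1, 0, 2), (3, 1, 0)]  # toy behavior sequence

max_sim, mean_sim = alignment_features(candidate, history)
print(max_sim, round(mean_sim, 4))
```

The maximum reacts to a single strongly matching past item, while the mean reflects how consistently the history matches the candidate, so the two features carry complementary signals.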

Empirical Results

SID-Coord is evaluated in an industrial short-video search environment (Kuaishou), with extensive online (A/B test) and offline (AUC, UAUC) studies. The framework is compared with leading production baselines and alternative SID integration methods (Prefix-Ngram, SPM-SID, DAS).

Key results:

- Offline: Overall AUC gain +0.33% (+0.42% for long-tail); UAUC gain +0.93% on long-tail items.

- Online: Long-play rate +0.664% (+0.226 pp), search playback duration +0.369%, play count +0.472%, all statistically significant.

- Serving latency impact is negligible (+0.005%).
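For readers unfamiliar with the offline metrics, the sketch below shows one common way to compute AUC and a grouped variant. It assumes UAUC means the unweighted average of per-user AUC over users who have both positive and negative samples; the paper may group or weight differently (for example by query or by impression count), and the data here is made up.

```python
from collections import defaultdict
from typing import List, Optional, Sequence


def auc(labels: Sequence[int], scores: Sequence[float]) -> Optional[float]:
    """Pairwise AUC: share of (positive, negative) pairs ranked correctly; ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        return None  # undefined without both classes
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def uauc(
    user_ids: Sequence[str], labels: Sequence[int], scores: Sequence[float]
) -> Optional[float]:
    """Unweighted mean of per-user AUC, skipping users with a single class."""
    grouped = defaultdict(lambda: ([], []))
    for user, label, score in zip(user_ids, labels, scores):
        grouped[user][0].append(label)
        grouped[user][1].append(score)
    per_user: List[float] = []
    for user_labels, user_scores in grouped.values():
        value = auc(user_labels, user_scores)
        if value is not None:
            per_user.append(value)
    return sum(per_user) / len(per_user) if per_user else None


# Toy data (made up): u3 has only negatives and is skipped.
user_ids = ["u1", "u1", "u2", "u2", "u2", "u3"]
labels = [1, 0, 1, 0, 0, 0]
scores = [0.9, 0.1, 0.2, 0.8, 0.1, 0.5]

print(auc(labels, scores), uauc(user_ids, labels, scores))
```

Grouping removes cross-user score comparisons, which is why a grouped metric can move differently from the overall AUC.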

Ablation studies show all three modules contribute, with the largest degradation occurring when the multi-resolution SID module is removed, underscoring the centrality of adaptive semantic abstraction. Gating and interest alignment produce significant additive improvements.

Theoretical and Practical Implications

SID-Coord represents a shift from classic feature engineering toward an explicit coordination paradigm between semantic discretization and ID memorization. Its design validates the principle that latent semantic structure can be disentangled into hierarchical, trainable discrete codes—enabling both efficient retrieval and domain-adaptive generalization. Practically, the negligible serving overhead and incrementally pluggable architecture render SID-Coord amenable to production deployment at scale.

The results challenge the assumption that dense multimodal representations are required for semantic generalization under extreme data sparsity, showing that compact, quantized SIDs suffice when they are tightly coordinated with HIDs and gated by popularity. Moreover, SID-Coord's adaptivity anticipates future ranking systems that will require even finer-grained popularity-aware calibration and semantic–behavioral integration.

Future Directions

Open questions pertain to scaling SID-Coord to deeper quantization hierarchies, bidirectional user–item interest propagation, and integration with generative ranking architectures. As content modalities (e.g., speech, image, text) become richer, advances in adaptive codebook learning, context-aware gating, and continual updating of SID structures offer clear avenues for subsequent research. The framework could also extend to multi-objective ranking and cross-domain recommendation scenarios.

Conclusion

SID-Coord formalizes the coordination of multi-resolution semantic IDs and HIDs as an operational principle for large-scale search ranking on short-video platforms. Its integration of radix-composed hierarchical SIDs, popularity-aware gating, and SID-based user–item alignment yields measurable improvements, particularly in long-tail regimes, while staying within strict online latency constraints. The paradigm exemplifies how structured, discrete semantic modeling, when tightly coordinated with traditional ID signals, can address longstanding obstacles in real-world recommendation and search systems (2604.10471).