Exponential quantum advantage in processing massive classical data

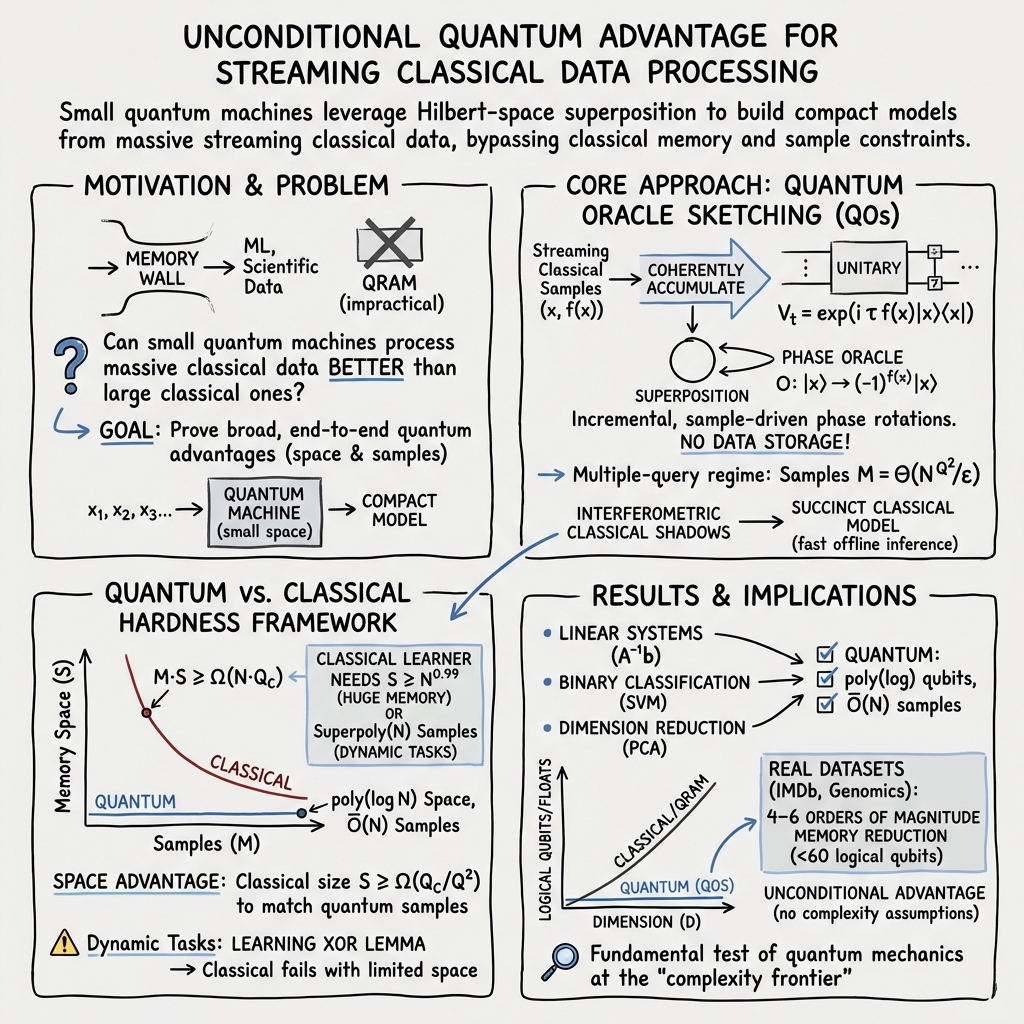

Abstract: Broadly applicable quantum advantage, particularly in classical data processing and machine learning, has been a fundamental open problem. In this work, we prove that a small quantum computer of polylogarithmic size can perform large-scale classification and dimension reduction on massive classical data by processing samples on the fly, whereas any classical machine achieving the same prediction performance requires exponentially larger size. Furthermore, classical machines that are exponentially larger yet below the required size need superpolynomially more samples and time. We validate these quantum advantages in real-world applications, including single-cell RNA sequencing and movie review sentiment analysis, demonstrating four to six orders of magnitude reduction in size with fewer than 60 logical qubits. These quantum advantages are enabled by quantum oracle sketching, an algorithm for accessing the classical world in quantum superposition using only random classical data samples. Combined with classical shadows, our algorithm circumvents the data loading and readout bottleneck to construct succinct classical models from massive classical data, a task provably impossible for any classical machine that is not exponentially larger than the quantum machine. These quantum advantages persist even when classical machines are granted unlimited time or if BPP=BQP, and rely only on the correctness of quantum mechanics. Together, our results establish machine learning on classical data as a broad and natural domain of quantum advantage and a fundamental test of quantum mechanics at the complexity frontier.

Explain it Like I'm 14

Explaining “Exponential quantum advantage in processing massive classical data” (for a 14-year-old)

What is this paper about?

This paper shows a new way a small quantum computer can beat much bigger classical (ordinary) computers at working with huge amounts of everyday data, like text and biology measurements. The trick is to handle the data as it arrives (one piece at a time), build a tiny quantum “summary” on the fly, and then use that summary to answer useful questions such as:

- Is this movie review positive or negative?

- What’s the most important direction to look at in a large gene-expression dataset (a way to simplify and see patterns)?

The authors prove that a quantum computer using only a few dozen high‑quality qubits could do these jobs, while any classical computer would need exponentially more memory to match its accuracy.

What are the main goals and questions?

The paper asks three simple questions:

- Can a very small quantum computer learn from massive classical data streams as they come in, without storing everything?

- Can it do important tasks—like classification (sorting things into groups) and dimension reduction (simplifying high‑dimensional data)—as well as or better than very large classical machines?

- Is there a solid, math‑based reason why classical machines can’t match this performance unless they’re much, much bigger?

How does their approach work? (With easy analogies)

The authors introduce two key ideas:

- Quantum oracle sketching: Think of a huge library where books (data points) arrive randomly, one by one. You’re not allowed to store them all, but you can make tiny notes each time you see a book. If you choose your notes just right, after seeing enough random books, you end up with a compact “cheat sheet” that lets you answer many questions as if you had the whole library at once.

- In quantum terms, an “oracle” is like a fast, built‑in lookup tool a quantum algorithm uses to ask questions about data.

- “Sketching” means building an approximate version of that lookup tool by making tiny, careful quantum updates each time a new data sample arrives.

- This uses quantum “superposition” (asking many questions at once) but never requires storing the entire dataset.

A crucial detail: they show exactly how many samples you need to build a reliable sketch and prove this is basically the best you can do.

- Interferometric classical shadows: After the quantum chip builds a tiny internal summary, you still need regular numbers you can use later. “Classical shadows” are like taking a few special photos of a complicated 3D object so you can later measure many properties from those photos alone. Here, the authors adapt that idea so you can efficiently extract a compact classical model from the quantum state, one you can use to make predictions for lots of new inputs.

Together, these ideas avoid two classic roadblocks:

- Data loading: You don’t need a special, hard‑to‑build quantum memory (QRAM) that stores the entire dataset.

- Readout: You don’t need to measure an enormous amount from the quantum state to get useful answers.
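To make the "tiny updates add up to a lookup tool" idea more tangible, here is a toy classical calculation. It is a sketch under stated assumptions, not the paper's algorithm: we assume (hypothetically) that each time item x is sampled, the phase stored on x advances by π·N/M, so that after M uniformly random samples from N items the average accumulated phase is π. The function names `expected_entry` and `sketch_error` are ours. Under that assumption, the averaged phase factor has a closed form, and its distance from the ideal value −1 shrinks roughly in proportion to N/M.

```python
import cmath
import math


def expected_entry(n_items: int, n_samples: int, target_phase: float = math.pi) -> complex:
    """Average phase factor on one item after n_samples uniform random draws.

    Assumed update rule (illustrative only): every time this item is drawn,
    its phase advances by target_phase * n_items / n_samples.
    """
    step = target_phase * n_items / n_samples
    # A single draw hits this item with probability 1/N, so the average
    # single-draw factor is (1 - 1/N) * 1 + (1/N) * exp(i * step).
    per_draw = 1 + (cmath.exp(1j * step) - 1) / n_items
    # Draws are independent, so the averages multiply.
    return per_draw ** n_samples


def sketch_error(n_items: int, n_samples: int) -> float:
    """Distance between the averaged entry and the ideal factor exp(i*pi) = -1."""
    return abs(expected_entry(n_items, n_samples) - cmath.exp(1j * math.pi))


if __name__ == "__main__":
    n = 1024
    for factor in (10, 100, 1000):
        print(f"M = {factor} * N, error = {sketch_error(n, factor * n):.5f}")
```

In this toy model, once M is much larger than N, a tenfold increase in M reduces the averaged error by roughly a factor of ten, which matches the intuition that more random samples give a more faithful sketch.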

What did they test and prove?

They focus on three common tasks:

- Solving linear systems (a basic math task behind many engineering and science problems).

- Classification (e.g., deciding if a movie review is positive or negative).

- Dimension reduction (e.g., principal component analysis, or PCA, to simplify large datasets like gene expression).

The authors prove:

- A quantum computer whose size grows very slowly with the data size (think: much smaller than the dataset) can do these tasks with good accuracy by processing about as many samples as there are data items (linear in the dataset size).

- Any classical computer that tries to match the same prediction quality needs exponentially more memory—or else it needs super‑polynomially more time and samples.

They also test their method on real datasets:

- Movie review sentiment (IMDb): positive vs. negative reviews.

- Single‑cell RNA sequencing (PBMC): simplifying gene-expression data to separate cell types.

In these tests (run in simulation), the quantum method reached high accuracy or good dimension reduction while using four to six orders of magnitude less memory than standard classical methods—and it needed fewer than 60 logical qubits.

Why is this important?

- It shows an “exponential quantum advantage” in memory: tiny quantum devices can do what would require enormous classical memory to do equally well.

- The advantage doesn’t depend on controversial complexity assumptions. It only relies on quantum mechanics being correct.

- Even if a classical computer had unlimited time, without enough memory it still couldn’t match the quantum device’s performance on these tasks.

Why does the advantage happen? (Plain idea)

In classical computing, streaming and sketching can save memory but usually hurt accuracy. The authors show quantum machines can keep high accuracy with tiny memory by:

- Using superposition to “touch” many possibilities at once.

- Accumulating many tiny, well‑planned updates from random samples so that, together, they behave like a powerful data‑access tool (an oracle).

- Proving that small quantum models can capture the essential information needed for prediction, whereas small classical models can’t—unless you give them exponentially more space.

They also connect this to a deep mathematical result: if a problem needs many classical “lookups” but only a few quantum “lookups,” then a small quantum device can beat any classical device that doesn’t have huge memory.

What could this mean for the future?

- Practical promise: It suggests a path for near‑term quantum devices (with tens to hundreds of logical qubits) to make a real difference in processing enormous classical datasets—especially when memory is the bottleneck.

- New applications: The same approach could help in optimization, solving differential equations, signal processing, and more.

- Fundamental science: Because the advantage depends only on quantum superposition, successfully demonstrating it in the lab would be a powerful test of quantum mechanics at large scales of complexity, much like how certain physics experiments test the limits of our theories.

Bottom line

This paper argues—and backs up with proofs and simulations—that small quantum computers can learn from massive classical data streams and build tiny, accurate models that classical computers cannot match without exponentially more memory. It opens a broad, natural area—machine learning on classical data—where quantum devices could shine soon.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, concrete list of unresolved issues that future work could address to validate, generalize, or operationalize the claims in the paper.

- Data access assumptions: quantify performance when samples are non-uniform, biased, or adversarially ordered (beyond uniform random rows/indices); develop online estimation/correction of unknown sampling distributions and integrate rigorously into the QSVT-based pipeline with explicit overheads.

- Sparsity and structure dependence: characterize how sample/query complexity scales when data are dense, only approximately sparse, or best described by low-rank/compressible models rather than sparsity; design sketching variants that exploit alternative structures.

- Conditioning and spectral gaps: provide explicit dependences on linear-system condition number κ and PCA spectral gap Δ in sample/query complexity and machine size; analyze failure modes and robust variants for ill-conditioned systems or vanishing gaps.

- Quantum preconditioning in a streaming setting: propose and analyze preconditioning strategies (and their resource costs) that can be constructed from streaming samples and combined with oracle sketching.

- Dynamic data model calibration: practical procedures to estimate and track the refreshing time T and repetition number R online; quantify sensitivity to mis-specification and to long-range temporal correlations/heavy-tailed processes.

- Robustness to hardware noise: analyze how coherent-accumulation guarantees degrade under realistic gate/measurement noise, calibration drift, and finite-precision phase setting; derive noise thresholds or error-mitigation schemes that preserve ε ~ N/M scaling.

- Gate synthesis and depth: give concrete T-count, depth, and connectivity requirements for per-sample multi-controlled phase gates and other primitives as functions of N, ε, κ, Δ; establish parallelization schedules and provable depth/latency bounds exploiting commutativity.

- End-to-end runtime vs memory: beyond “ignoring exponential runtime,” provide asymptotic and constant-factor runtime models (data ingestion, gate execution, readout) and wall-clock estimates on plausible fault-tolerant hardware.

- Resource accounting parity: standardize memory metrics across paradigms (logical vs physical qubits, classical control memory, storage for classical shadows) and re-evaluate the reported 4–6 order-of-magnitude “size” advantages accordingly.

- Readout costs for interferometric classical shadows: quantify the number of shots and classical postprocessing required as a function of target error, margin γ, variance, and sparsity; assess robustness under measurement noise and imperfect controls.

- Reusability and lifecycle of sketches: determine how many algorithm invocations the constructed approximate oracle supports before drift/noise invalidates guarantees; devise update/refresh protocols that maintain accuracy with minimal additional samples.

- Multiple-oracle pipelines: when state preparation, block encoding, and control oracles are all needed, quantify the cumulative sample budget and explore cross-oracle sample sharing or co-design to avoid Q² blowups.

- Quadratic sample penalty optimality: investigate whether the M = Θ(N Q²/ε) scaling can be improved for restricted oracle families via postselection, entanglement with classical randomness, mid-circuit measurements, or quantum memory; delineate tight optimality frontiers.

- Handling repeated samples: formal algorithms to prevent over-rotation when the same z appears multiple times within a correlation window; quantify the resulting variance/bias trade-offs.

- Guiding vector acquisition for PCA: procedures to obtain or bootstrap a guiding vector g in streaming settings without scanning the full feature space; sensitivity analysis when g has small or noisy overlap with the top component.

- Classification margins and label noise: derive explicit relationships between margin γ, label noise rate η, and the sample/query complexities of the LS-SVM pipeline under sketching; address class imbalance and calibration.

- Regularization selection in-stream: methods to tune λ for LS-SVM within the quantum-sketching pipeline under memory constraints (e.g., streaming cross-validation or implicit regularization).

- Extensions beyond LS-SVM/PCA: feasibility and guarantees for multiclass classification, logistic regression, kernel methods, manifold learning, or other unsupervised objectives using oracle sketching and shadow readout.

- Tasks with large Q: identify practically important problems where required quantum queries Q grow with N such that M ceases to be O(N), and analyze whether the space advantage survives; propose ways to keep Q small.

- Non-i.i.d. and adversarial streams: extend guarantees to adaptive or adversarially chosen samples; specify failure cases and possible defenses in the streaming-to-quantum interface.

- Empirical baselines: re-benchmark against state-of-the-art classical streaming methods (e.g., Frequent Directions, CountSketch, randomized SVD, sketch-and-solve ridge regression) that use O(k/ε) memory, as well as strong domain-specific pipelines (e.g., hashing for text, Scanpy for scRNA-seq), to validate claimed separations.

- Fair comparison metrics: revisit the memory baselines that equate classical streaming memory to feature dimension D; adopt tighter, literature-backed bounds and report sensitivity of advantages to these choices.

- Experimental validation on hardware: demonstrate small-scale implementations on noisy devices or fault-tolerant prototypes; measure end-to-end fidelity, throughput, and robustness on real data streams.

- Diamond distance vs operational error: bridge the gap between analyzed operator-norm/expected-unitary errors and the diamond-norm/algorithmic performance that drives prediction accuracy; extract and report constants.

- Model size of classical outputs: provide precise bit-length bounds for the compressed classical models produced by interferometric shadows and how they scale with ε, δ, and data sparsity; benchmark inference latency on classical hardware.

- Unknown normalization and drift: methods to estimate and track vector norms and scaling factors needed for block encodings/state preparation under streaming, time-varying data.

- Multi-pass classical baselines and memory hierarchies: extend lower-bound analyses to allow multiple passes, external memory hierarchies, or disk-based streaming; clarify which separations survive under these models.

- Assumptions in hardness reductions: assess how reductions from NOPE/Forrelation to learning tasks preserve natural data statistics and whether the induced distributions remain representative of real-world problems.

- BPP = BQP caveat formalization: formally specify the computational model in which time is unbounded but space is limited, and clarify whether classical reversible/streaming computation with external storage could undermine the space separation.

- Impact of large redundancy R: characterize how advantages degrade with R≫1; identify domains where R is provably small; devise subsampling/decorrelation strategies to lower effective R.

- Finite precision requirements: determine the number of phase/rotation bits needed per update to achieve target ε and how finite precision interacts with fault-tolerant synthesis overhead.

- Data ingestion and I/O: engineer classical-to-quantum interfaces (latency, batching, synchronization) that preserve the sketching assumptions; quantify performance impact of realistic I/O constraints.

- Hardware mapping: compile multi-controlled rotations and related primitives to leading architectures (superconducting, trapped ions, photonics) with connectivity and crosstalk constraints; provide resource counts and schedules.

- Privacy and security: analyze whether on-the-fly processing and compact outputs confer privacy benefits or risks; investigate differentially private variants of quantum oracle sketching.

- Out-of-distribution (OOD) behavior: evaluate how the compressed models generalize to OOD test samples and whether shadow-based readout preserves calibration under distribution shift.

- Multi-class and structured outputs: extend interferometric shadow techniques to produce calibrated multi-class probabilities, structured predictions, or embeddings with guarantees.

- Dataset scale and diversity: broaden empirical validation to larger N, D and diverse modalities; report sensitivity to preprocessing choices (e.g., tokenization, rare-feature thresholds) and to hyperparameters.
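Several items in the list above refer to the sample scaling M = Θ(N Q²/ε). The short helper below makes that arithmetic concrete. It is only a sketch: the hidden constant in Θ(·) is not given in this summary, so the `constant` parameter (default 1.0) and the function name `sample_budget` are assumptions for illustration.

```python
import math


def sample_budget(n_items: int, n_queries: int, epsilon: float, constant: float = 1.0) -> int:
    """Estimate M ~ constant * N * Q^2 / epsilon.

    `constant` stands in for the unknown factor hidden by the Theta notation.
    """
    if n_items <= 0 or n_queries <= 0:
        raise ValueError("n_items and n_queries must be positive")
    if not 0 < epsilon <= 1:
        raise ValueError("epsilon must lie in (0, 1]")
    return math.ceil(constant * n_items * n_queries ** 2 / epsilon)


if __name__ == "__main__":
    # Doubling the number of oracle queries Q quadruples the sample budget.
    for q in (1, 2, 4):
        print(q, sample_budget(n_items=1_000_000, n_queries=q, epsilon=0.5))
```

The quadratic dependence on Q is why the list flags tasks with large Q: if Q grows with N, M is no longer linear in N.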

Practical Applications

Overview

The paper introduces quantum oracle sketching and interferometric classical shadows as a way for small, fault-tolerant quantum computers (polylogarithmic size in problem parameters) to process massive classical datasets in streaming fashion and output compact classical models. It proves exponential space advantages (and superpolynomial sample advantages in dynamic settings) for tasks such as classification, dimension reduction (PCA), and solving sparse, well‑conditioned linear systems. Below are practical applications and workflows that emerge, grouped into immediate and longer‑term horizons.

Immediate Applications

These are deployable now in research and pilot environments, using small-scale fault-tolerant devices or high-fidelity emulation, and target memory bottlenecks rather than runtime.

- Quantum‑compressed sentiment analysis models for media and e‑commerce (sector: software/marketing)

- Use case: Stream reviews, perform LS‑SVM classification with quantum oracle sketching to produce a compact weight vector and an offline classical predictor; reduce memory by orders of magnitude for the same accuracy on large vocabularies.

- Tools/products/workflows: Cloud “quantum compression‑as‑a‑service” that ingests sparse text features and labels; interferometric classical shadow compiler produces a deployable classical model; integration with existing MLOps (feature stores, A/B testing).

- Assumptions/dependencies: Sparse feature encoding; regularized LS‑SVM is well‑conditioned; margins are non‑vanishing for classifiable points; availability of ~50–100 logical qubits with stable error correction; streaming access with low repetition R; tolerance for O(N) sample ingestion.

- Quantum‑accelerated PCA for single‑cell RNA‑seq pilot analyses (sector: healthcare/biotech/academia)

- Use case: Stream scRNA‑seq counts to compute top principal components and low‑dimensional embeddings with near-constant quantum memory; share compact models with collaborators while discarding raw reads.

- Tools/products/workflows: Quantum PCA preprocessor integrated into bioinformatics pipelines (e.g., Scanpy/Seurat adapters); guiding vector selection (e.g., marker gene) to seed PCA; classical shadow export for downstream clustering and visualization.

- Assumptions/dependencies: Spectral gap for leading components; availability of a guiding vector with non‑vanishing overlap; sparse gene expression matrices; small fault‑tolerant device; data governance for streaming and discard policies.

- Memory‑efficient sparse linear system estimation in labs and engineering teams (sector: engineering/software)

- Use case: Estimate quadratic forms of solutions to massive, sparse, well‑conditioned systems (Ax=b) for design verification (e.g., heat dissipation in power grids) using a compact quantum state sketch rather than storing A in full.

- Tools/products/workflows: Quantum block‑encoding builder for streamed measurements; interferometric classical shadow readout of target observables; integration with CAD/CAE tools for post‑processing.

- Assumptions/dependencies: Sparsity and conditioning of A; uniform random access to nonzero entries and b components; small quantum device; calibrated mapping from physical sensors to streamed indices.

- Complexity‑frontier experiments to test quantum mechanics via space advantage (sector: academia/physics policy)

- Use case: Design NOPE‑derived benchmarks and reductions to classification/PCA; empirically validate exponential space separations under controlled streaming.

- Tools/products/workflows: Standardized datasets and reporting of “machine size vs performance” curves; reproducible protocols; cross‑lab study coordination.

- Assumptions/dependencies: Correctness of quantum mechanics; fault‑tolerant control; rigorous tracking of sample complexity and repetition number R.

- Edge deployment of quantum‑compressed classical models (sector: mobile/IoT)

- Use case: Use cloud quantum preprocessing to compress large models (text classifiers, sensor anomaly detectors) into small classical predictors that fit on devices with strict memory budgets.

- Tools/products/workflows: Pipeline from quantum classical shadow export to embedded inference libraries; periodic refresh with streamed updates; MLOps for drift monitoring.

- Assumptions/dependencies: Stable upstream data distribution or controlled refresh cadence (T); secure data streaming to quantum service; regulatory compliance.

- Developer tooling and standards for quantum‑streaming ML (sector: software/standards)

- Use case: Provide open‑source libraries to build quantum oracle sketching circuits, adapters for sparse datasets, and error‑analysis utilities; establish “machine size” reporting standards.

- Tools/products/workflows: SDKs with QSVT modules, randomized Hadamard transforms, in‑place binary search; connectors to common data lakes; documentation and benchmarks.

- Assumptions/dependencies: Interoperability with existing ML stacks; community adoption; clarity on memory accounting (logical qubits vs floats).
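For reference, the LS‑SVM (ridge classifier) named in the sentiment-analysis use case has a simple classical form: solve (XᵀX + λI) w = Xᵀy for labels y in {−1, +1}, then predict with the sign of w·x. The sketch below is a plain classical baseline written for illustration, not the quantum pipeline; it streams over samples but keeps a dense D×D matrix in memory. The function names are hypothetical.

```python
from typing import Iterable, List, Sequence, Tuple


def solve_linear_system(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def fit_ridge_classifier(
    stream: Iterable[Tuple[Sequence[float], int]], dim: int, lam: float = 1.0
) -> List[float]:
    """Accumulate X^T X + lam*I and X^T y one sample at a time, then solve for w."""
    gram = [[lam if i == j else 0.0 for j in range(dim)] for i in range(dim)]
    rhs = [0.0] * dim
    for x, y in stream:
        for i in range(dim):
            rhs[i] += x[i] * y
            for j in range(dim):
                gram[i][j] += x[i] * x[j]
    return solve_linear_system(gram, rhs)


def predict(w: Sequence[float], x: Sequence[float]) -> int:
    """Return +1 or -1 according to the sign of w . x."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) >= 0 else -1


if __name__ == "__main__":
    data = [([1.0, 0.0], 1), ([0.9, 0.1], 1), ([0.0, 1.0], -1), ([0.1, 0.9], -1)]
    w = fit_ridge_classifier(data, dim=2, lam=0.1)
    print(w, [predict(w, x) for x, _ in data])
```

With λ > 0 the accumulated matrix is positive definite, so the elimination never meets a zero pivot. The D×D storage here is exactly the kind of memory cost that the quantum approach is claimed to avoid.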

Long‑Term Applications

These require further hardware maturation (hundreds–thousands of logical qubits, robust error correction), scaling of classical‑quantum interfaces, and operational integration.

- Real‑time power grid and infrastructure estimation via streamed linear systems (sector: energy/infrastructure)

- Use case: Continually estimate heat dissipation, fault risks, and load flows in massive networks from sensor streams, using quantum block encodings to keep memory usage minimal.

- Tools/products/workflows: “Quantum preprocessor” appliances colocated with SCADA; parallelized commuting rotations to reduce wall‑clock time; classical shadow dashboards for operators.

- Assumptions/dependencies: Reliable fault‑tolerant hardware; low‑latency classical‑quantum data links; sparse, well‑conditioned models; refreshing time T ≈ O(N).

- Dynamic recommendation and personalization engines with space‑advantaged learning (sector: media/e‑commerce)

- Use case: Maintain up‑to‑date compact models under rapid user behavior drift; leverage super‑polynomial sample advantage in dynamic tasks to track evolving distributions with modest memory.

- Tools/products/workflows: Quantum oracle sketching for collaborative filtering features; streaming updates with interferometric classical shadows; privacy‑preserving discard of raw events.

- Assumptions/dependencies: Appropriate reductions where memory advantages persist even for “dequantized” tasks; labeled or semi‑supervised streams; careful fairness and compliance governance.

- On‑the‑fly dimension reduction for astronomical surveys and particle physics (sector: science/HPC)

- Use case: Reduce and classify high‑rate detector/survey data into compact representations, cutting storage and memory while preserving scientific signal.

- Tools/products/workflows: Quantum PCA nodes integrated with experiment DAQs; model export to HPC clusters; provenance and audit trails for compressed outputs.

- Assumptions/dependencies: Persistent spectral gaps in leading components; robust streaming interfaces at extreme throughput; certification of scientific fidelity.

- Population‑scale drug discovery and precision medicine (sector: healthcare/pharma)

- Use case: Process multi‑donor scRNA‑seq and multi‑omics streams to infer cell types and trajectories, under shifting lab conditions and donors, with compact models for downstream analytics.

- Tools/products/workflows: Quantum bioinformatics co‑processors; clinical data governance; model validation pipelines (reproducibility, bias audits).

- Assumptions/dependencies: High‑quality sparse assays; guiding vectors for initial PCA directions; regulatory approval and security; workforce training.

- Communications and signal processing (sector: telecom/defense)

- Use case: Channel estimation, interference mitigation, and compressed sensing via streamed linear algebra, producing compact predictors for baseband or edge devices.

- Tools/products/workflows: Quantum block‑encoding builders tied to RF front‑ends; accelerated model compilation to classical firmware; continuous refresh.

- Assumptions/dependencies: Models amenable to sparsity/well‑conditioning; tight latency budgets; hardened hardware for field environments.

- Finance: feature extraction, portfolio optimization, and risk modeling at scale (sector: finance)

- Use case: PCA for factor discovery, dynamic classification for risk signals, and solving large sparse systems for optimization—maintaining small memory footprints despite massive tick data.

- Tools/products/workflows: Quantum data interfaces to market feeds; compliance logging; integration with risk engines.

- Assumptions/dependencies: Spectral and conditioning properties; strict latency/regulatory constraints; robust model monitoring.

- Robotics and autonomy: on‑board model compression for perception (sector: robotics)

- Use case: Stream sensor data to cloud/edge quantum preprocessors to produce compact classical models deployable on constrained robots for perception and anomaly detection.

- Tools/products/workflows: Edge quantum nodes; shadow‑compiled models for embedded stacks; periodic refresh over patchy connectivity.

- Assumptions/dependencies: Real‑time constraints; hardware miniaturization; resilience to distribution shifts.

- National quantum data‑processing infrastructure and standards (sector: government/policy)

- Use case: Establish classical‑quantum streaming interfaces, memory‑based performance metrics, and incentives to deploy small fault‑tolerant devices for data‑center memory efficiency.

- Tools/products/workflows: Standards bodies (e.g., NIST‑like frameworks) defining space‑advantage benchmarks; procurement of “quantum oracle sketching appliances” for public data centers.

- Assumptions/dependencies: Supply chains for error‑corrected hardware; workforce development; environmental impact assessments.

- Experimental program to probe Hilbert‑space reality at scale (sector: physics/academia)

- Use case: Systematic validation or refutation of space advantages under varying noise/correlation (R, T) parameters; cross‑task reductions (classification, PCA, linear systems).

- Tools/products/workflows: Multi‑site collaborations, standardized NOPE tasks, certification protocols for device behavior under streaming randomness.

- Assumptions/dependencies: Long‑term hardware reliability; reproducibility across platforms; transparent reporting.

Cross‑cutting assumptions and dependencies

- Hardware: Access to small, fault‑tolerant quantum processors (tens to hundreds of logical qubits), stable error correction, and low‑latency classical‑quantum data paths.

- Data properties: Sparse structures; well‑conditioned linear systems; non‑vanishing margins for classifiable points; spectral gaps in PCA; availability of guiding vectors where needed.

- Streaming model: Randomized or manageable non‑uniform sampling; bounded repetition number R; refreshing time T on the order of dataset size N for dynamic tasks.

- Algorithmic constraints: Sample complexity scales as O(NQ²/ε) for Q queries; space advantage persists even if BPP=BQP; runtime dominated by O(N) data ingestion but rotations largely commute, enabling parallelization.

- Governance: Privacy and compliance for streaming and discard policies; auditability of compact models; standardized memory accounting and performance reporting.
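Two of the data-property assumptions above, a spectral gap and a guiding vector with non‑vanishing overlap, have a familiar classical counterpart in power iteration. The sketch below is that classical analogue, offered only as intuition and not as the paper's quantum procedure: the start vector plays the role of the guiding vector, and the ratio of the two largest eigenvalues of XᵀX controls how fast the iteration converges. It assumes the rows of X are already centered; all names are hypothetical.

```python
import math
from typing import List, Sequence


def normalize(v: Sequence[float]) -> List[float]:
    """Scale v to unit Euclidean length."""
    norm = math.sqrt(sum(c * c for c in v))
    if norm == 0.0:
        raise ValueError("cannot normalize the zero vector")
    return [c / norm for c in v]


def top_component(
    rows: Sequence[Sequence[float]], guide: Sequence[float], iterations: int = 50
) -> List[float]:
    """Power iteration on X^T X, started from the guiding vector `guide`."""
    v = normalize(guide)
    for _ in range(iterations):
        # Compute X^T (X v) without ever forming the matrix X^T X.
        projections = [sum(r[j] * v[j] for j in range(len(v))) for r in rows]
        w = [sum(p * r[j] for p, r in zip(projections, rows)) for j in range(len(v))]
        v = normalize(w)
    return v


if __name__ == "__main__":
    rows = [[2.0, 0.0], [-2.0, 0.0], [0.0, 1.0], [0.0, -1.0]]  # X^T X = diag(8, 2)
    print(top_component(rows, guide=[1.0, 1.0]))  # guide overlaps the top direction
    print(top_component(rows, guide=[0.0, 1.0]))  # guide has zero overlap
```

In the second call the guide is orthogonal to the leading direction, so the iteration never finds it. This is the classical version of why a guiding vector with small or noisy overlap is listed as a risk.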

Glossary

- Bell inequalities: A family of inequalities that any local hidden-variable theory must satisfy, used to test quantum nonlocality. Example: "Bell inequalities [76] test quantum nonlocality [77, 78]."

- block encodings: A technique that embeds a (generally non-unitary) matrix into a block of a larger unitary, enabling quantum algorithms for linear algebra. Example: "block encodings of matrices."

- Born rule: The quantum rule linking amplitudes to measurement probabilities (probability equals the squared magnitude of the amplitude). Example: "governed by the Born rule."

- BPP: The class of decision problems solvable by a probabilistic classical computer in polynomial time with bounded error. Example: "even when classical machines are granted unlimited time or if BPP = BQP"

- BQP: The class of decision problems solvable by a quantum computer in polynomial time with bounded error. Example: "even when classical machines are granted unlimited time or if BPP = BQP"

- BQP-hard: At least as hard as the hardest problems in BQP; solving it efficiently would solve all BQP problems efficiently. Example: "are BQP-hard."

- classical query complexity: The minimum number of oracle queries a classical algorithm needs to estimate a property. Example: "the classical query complexity Qc of the target property"

- classical shadow tomography: A method to efficiently estimate many properties of a quantum state from randomized measurements, producing compact “shadows.” Example: "Combined with classical shadow tomography [74] for efficient readout"

- communication complexity: The study of how much communication is required between parties to compute a function; used to derive space/sample lower bounds. Example: "using communication complexity tools [88-94]"

- diamond distance: A norm on quantum channels measuring worst-case distinguishability when ancillary systems are allowed. Example: "ε-error approximation in diamond distance"

- fault-tolerance: Techniques ensuring reliable quantum computation despite noise and errors via error correction. Example: "incurs significant overhead in fault-tolerance and control [16]"

- Forrelation: A property comparing a Boolean function and the Fourier transform of another, exhibiting large quantum-classical query separations. Example: "the Forrelation property [95, 96]"

- Hadamard test: A quantum procedure to estimate real or imaginary parts of inner products/phases via interference. Example: "combines the idea of the Hadamard test with the efficient offline prediction capability of classical shadow tomography."

- Hilbert spaces: Complete inner-product spaces forming the mathematical setting for quantum states. Example: "the exponential dimensionality of quantum Hilbert spaces"

- Holevo's bound: An upper bound on the amount of classical information extractable from a quantum system. Example: "Holevo's bound shows that only n classical bits can be stored in an n-qubit state [63]"

- Interferometric Classical Shadows: A variant of classical shadows leveraging interference (Hadamard-test-like) to capture sign/phase-sensitive information. Example: "B. Interferometric Classical Shadows"

- Joule's law: Relates heat dissipation to current and resistance, used here to define a quadratic form objective. Example: "By Joule's law, this is given by a quadratic form &T Ma"

- Kirchhoff's laws: Circuit laws for conservation of charge and energy (current and voltage rules), used to model linear systems. Example: "According to Ohm's law and Kirchhoff's laws"

- least-squares support vector machine (LS-SVM): A variant of SVM using least-squares loss with L2 regularization; equivalent to ridge classification. Example: "the least-squares support vector machine (LS-SVM), also known as the ridge classifier."

- Noisy Oracle Property Estimation (NOPE): A formal task of estimating a property of a function from noisy oracle data, used to relate query and space complexities. Example: "Noisy Oracle Property Estimation (NOPE)"

- oblivious amplitude amplification: A method to boost the success amplitude of a procedure using unitary reflections, without depending on the specific input state. Example: "oblivious amplitude amplification"

- Ohm's law: Relates voltage, current, and resistance (V=IR), used to derive linear system structure. Example: "According to Ohm's law and Kirchhoff's laws"

- oracle separation: A separation in query complexity between models (e.g., quantum vs. classical) for oracle problems. Example: "Space advantage from oracle separation"

- phase oracle: A unitary that encodes a Boolean function’s value as a phase on computational basis states. Example: "the phase oracle of a Boolean function"

- poly(log N): Polynomial in the logarithm of N; denotes very modest scaling in N. Example: "a quantum computer with poly(log N) size"

- principal component analysis (PCA): An unsupervised method that finds directions of maximum variance for dimension reduction. Example: "principal component analysis (PCA)"

- quantum oracle sketching: The paper’s technique to construct approximate quantum oracles from streaming classical samples. Example: "These quantum advantages are enabled by quantum oracle sketching"

- quantum query complexity: The minimum number of oracle queries a quantum algorithm needs. Example: "quantum query complexity Q and classical query complexity Qc"

- quantum random access memory (QRAM): An architecture enabling coherent superposition access to classical data indices. Example: "quantum random access memory (QRAM)"

- quantum singular value transformation (QSVT): A framework to implement polynomial transformations of singular values via unitary circuits. Example: "quantum singular value transformation (QSVT)"

- quantum superposition: The principle that quantum states can exist in linear combinations, enabling parallelism. Example: "relies only on the principle of quantum superposition"

- randomized Hadamard transform: A technique using random sign flips and Hadamards to uniformize or spread amplitudes. Example: "a randomized Hadamard transform"

- ridge classifier: A linear classifier using L2-regularized least squares; equivalent to LS-SVM. Example: "also known as the ridge classifier."

- sparse oracles: Oracles tailored to sparse data structures, exposing only nonzero entries efficiently. Example: "sparse oracles and block encodings of matrices."

- spectral gap: The difference between the largest and second-largest eigenvalues, impacting convergence/robustness. Example: "the spectral gap Δ between the largest and second-largest eigenvalues of XᵀX"

- state preparation unitaries: Unitaries that prepare a target quantum state (e.g., encoding a classical vector) from a basis state. Example: "state preparation unitaries of vectors"

- superpoly(N): Growth faster than any polynomial in N; used to denote super-polynomial sample/time requirements. Example: "requires superpoly(N) samples."

- XOR lemma: A hardness amplification principle stating that XORing instances can increase computational hardness. Example: "a learning version of the XOR lemma [97-99]"
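The Hadamard test entry above can be checked numerically on a small example. The standard textbook identity is that the ancilla is measured in state 0 with probability (1 + Re⟨ψ|U|ψ⟩)/2. The sketch below simulates the circuit (Hadamard on the ancilla, controlled-U, Hadamard again) with plain Python lists; it illustrates the textbook test only, not the paper's interferometric classical shadows, and the function names are ours.

```python
import cmath
import math
from typing import List, Sequence


def apply_unitary(u: Sequence[Sequence[complex]], psi: Sequence[complex]) -> List[complex]:
    """Matrix-vector product U |psi>."""
    return [sum(u[i][j] * psi[j] for j in range(len(psi))) for i in range(len(u))]


def hadamard_test_p0(u: Sequence[Sequence[complex]], psi: Sequence[complex]) -> float:
    """Probability that the ancilla is measured as 0 in the Hadamard test.

    After H, controlled-U, H, the ancilla-0 branch holds (|psi> + U|psi>) / 2.
    """
    u_psi = apply_unitary(u, psi)
    branch0 = [(a + b) / 2 for a, b in zip(psi, u_psi)]
    return sum(abs(a) ** 2 for a in branch0)


def overlap_real_part(u: Sequence[Sequence[complex]], psi: Sequence[complex]) -> float:
    """Directly computed Re <psi|U|psi>, used here only as a cross-check."""
    u_psi = apply_unitary(u, psi)
    return sum(complex(a).conjugate() * b for a, b in zip(psi, u_psi)).real


if __name__ == "__main__":
    phi = math.pi / 3
    u = [[1, 0], [0, cmath.exp(1j * phi)]]   # phase gate diag(1, e^{i*phi})
    psi = [1 / math.sqrt(2), 1 / math.sqrt(2)]
    print(hadamard_test_p0(u, psi), (1 + overlap_real_part(u, psi)) / 2)
```

The two printed numbers agree up to floating-point rounding, which shows how interference on a single ancilla exposes the signed real part of an overlap rather than only its magnitude.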
