- The paper presents FDB-v3, a benchmark that assesses full-duplex voice agents’ multi-step API tool use and turn-taking dynamics under realistic speech disfluencies.

- It rigorously evaluates six architectures, showing that models like GPT-Realtime achieve high tool selection (F1 = 0.876) but struggle with dynamic state updates under self-correction.

- The study highlights the need for continuous intent parsing and robust rollback mechanisms to enhance real-time spoken dialogue systems in unpredictable environments.

Introduction

Full-duplex real-time voice agents capable of robust tool use and goal-directed action are critical for practical human-computer interaction. Despite significant progress in text-based LLM agents orchestrating multi-step API workflows, spoken dialogue systems have lagged in extending these agentic capabilities to natural, real-world conversation, where latency constraints and speech disfluencies dominate the dynamic. "Full-Duplex-Bench-v3: Benchmarking Tool Use for Full-Duplex Voice Agents Under Real-World Disfluency" (2604.04847) introduces a rigorously constructed and reproducible benchmark assessing leading voice agents in realistic streaming environments, targeting the unmet challenge of multi-step API tool use under spontaneous human speech with annotated disfluencies.

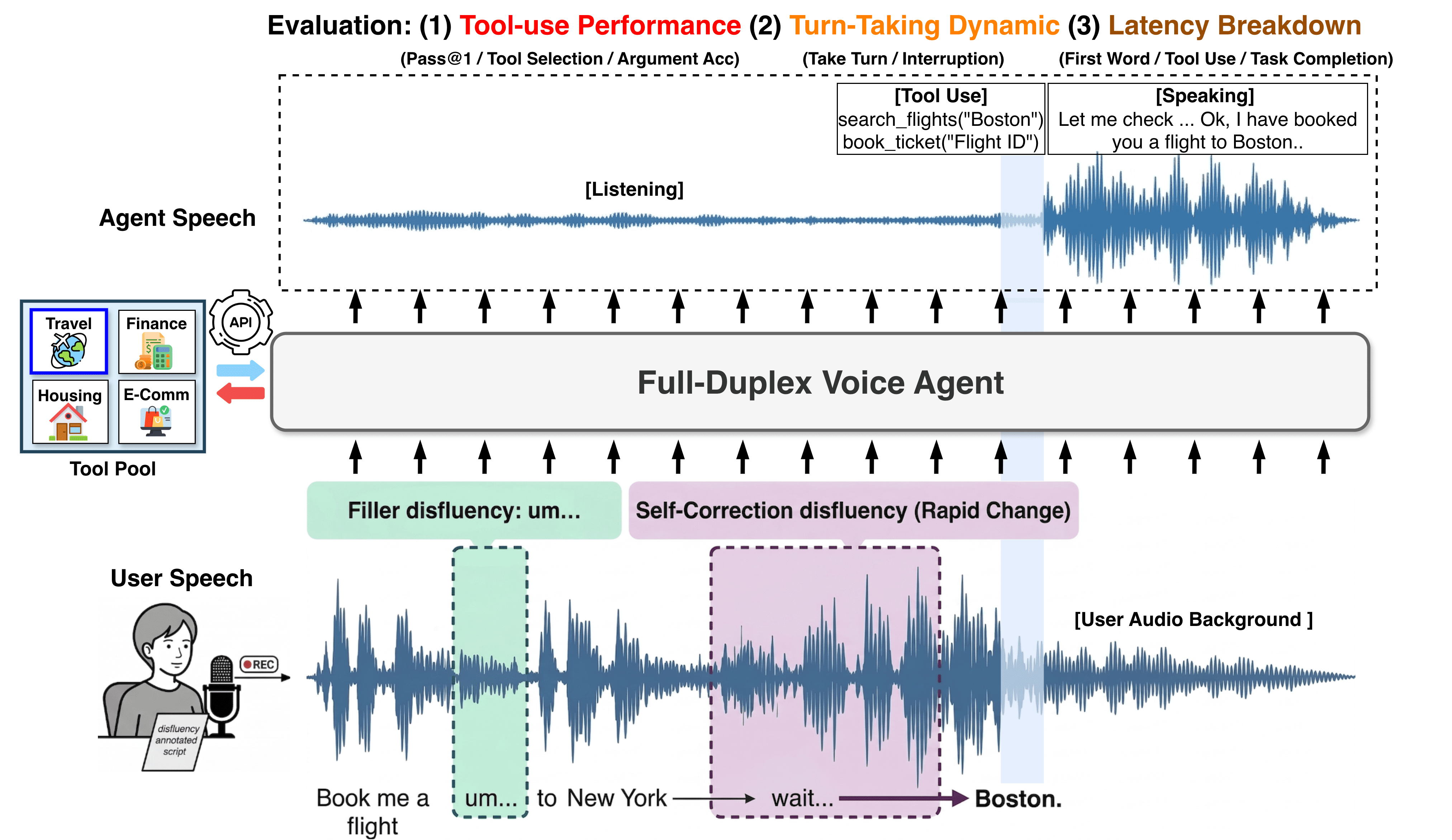

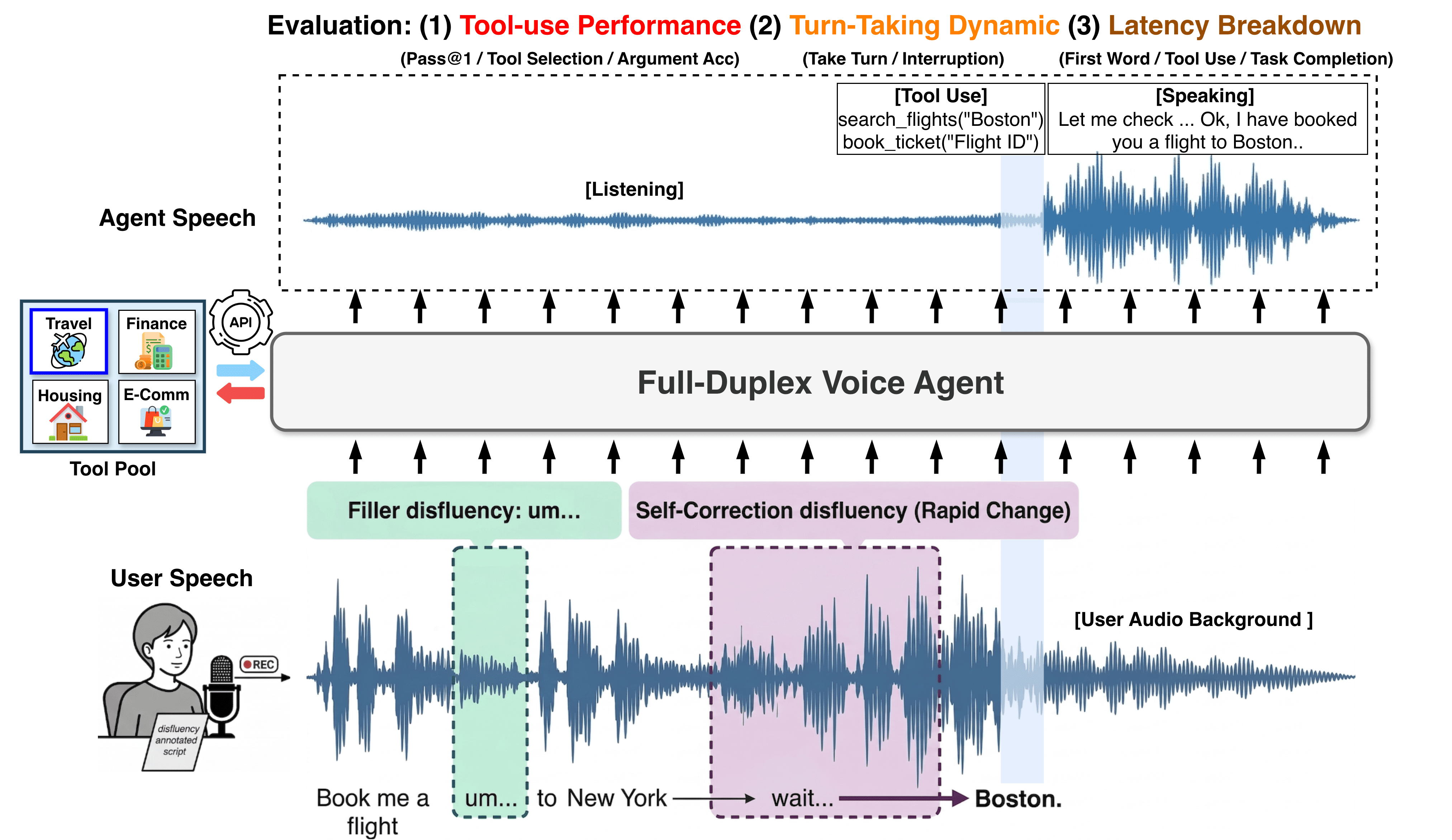

Figure 1: The Full-Duplex-Bench-v3 framework for evaluating real-time voice agents, with a focus on tool-use performance, turn-taking dynamics, and latency breakdown under realistic speech disfluency.

Benchmark Design and Dataset Characteristics

FDB-v3 departs from prior work by exclusively utilizing real human speech, capturing five prominent classes of disfluencies: fillers, pauses, hesitations, false starts, and self-corrections. Each user query is sourced from naturalistic microphone recordings in uncontrolled environments, including both native and non-native speakers and real-world background noise conditions. Importantly, 21 out of 100 scenarios are explicitly constructed to require real-time state updates, testing the agent's ability to handle on-the-fly intent correction and parameter rollback—the primary failure mode in open-agent pipeline designs.

The benchmark covers four operationally relevant domains (Travel/Identity, Finance/Billing, Housing/Location, E-Commerce/Support) and defines deterministic, locally executed mock APIs. Scenarios are stratified into three difficulty tiers, scaling from single-step calls (Easy) to multi-step, constraint-laden reasoning (Hard). This design enables precise attribution of observed performance bottlenecks to reasoning and streaming issues, independent of external service noise.

Evaluation Protocols and Model Comparison

FDB-v3 evaluates six system architectures, including proprietary end-to-end S2S models (GPT-Realtime, Gemini Live 2.5/3.1, Grok, Ultravox v0.7) and a standard Cascaded pipeline (Whisper ASR → GPT-4o → TTS). Consistent deployment infrastructure (LiveKit) and zero-latency mock APIs eliminate confounding variables related to network and service unpredictability, ensuring that all system-level latencies are model-induced. Evaluation is multifaceted, with core axes:

- Tool use: F1 for API selection, argument accuracy, strict Pass@1 (all-or-nothing per scenario), response naturalness.

- Turn-Taking Dynamics: Turn-take rate, interruption (speech-overlap) frequency.

- Latency: First word, tool call, and task completion response.

Key Empirical Findings

GPT-Realtime achieves the highest tool selection F1 (0.876), argument accuracy (0.680), Pass@1 (0.600), and response naturalness. Its interruption rate (13.5%) is the lowest among real-time systems at comparable turn-take rates (96%). Gemini Live 3.1, while fastest (median 4.25 s task completion), exhibits a turn-take rate bottleneck (78.0%), failing to respond in ~22% of scenarios and disproportionately in complex cases—primarily due to systemically dropped output speech even when tools are invoked correctly. The Cascaded baseline reliably returns speech (100% turn-take), but with maximal latency (10.12 s), primarily traced to the serialized ASR-LLM-TTS stages.

Domain-level analysis highlights that finance (routine argument mapping, minimal ambiguity) is more tractable for all models (e.g., GPT-Realtime: 0.960 Pass@1), whereas housing/e-commerce multi-constraint scenarios are most challenging. Disfluency robustness analysis reveals that handling of surface-level fillers is near saturation, while accurate rollback under self-correction events is universally deficient: GPT-Realtime leads with 0.588 Pass@1 but still fails on over 40% of such cases. This inability to defer tool invocation until user intent is certain is heavily implicated in both rigid and eager processing architectures.

Latency breakdowns demonstrate that, while Ultravox and Grok may exhibit faster initial speech (filler pre-responses), this behavior inflates overall completion latency and increases the probability of user interruption, with Ultravox's interruption rate peaking at 47.9%. Pre-emptive tool use, where API calls are issued before user utterance end, varies widely across architectures, but does not consistently line up with interruption profiles—revealing that "thinking while listening" and "responding before the speaker finishes" are architecturally distinct failure modes.

Implications, Failure Analysis, and Theoretical Outlook

FDB-v3 exposes critical design trade-offs in real-time voice agent architectures. End-to-end models deliver marked reductions in pipeline delay but are susceptible to both over-eager response (interrupting users, especially via content-free fillers) and no-response scenarios due to architectural silence on post-processing completion (e.g., Gemini Live 3.1). The traditional cascaded pipeline, though reliable in terms of engagement, is fundamentally limited by sequential component bottlenecks and error propagation, especially under disfluency-induced state updates—e.g., early ASR finalization abandoning self-corrections.

Of particular importance is the observed inability of all major systems to track and update the dialog state in response to rapid self-correction, a core aspect of human conversational management. Eager commitment to tool calls for latency minimization sharply decreases the capacity for within-turn rollback, while conservative delay strategies inflate apparent system sluggishness. Gemini Live 3.1 exemplifies an architectural divide between correct tool-use reasoning and output speech generation—manifest as a "silent worker," correctly calling APIs in the background yet forgoing the user-oriented response.

The results directly suggest that the next cycle of S2S agent research must converge on architectures capable of continuous, incremental intent parsing and commitment, enabling real-time dynamic state updates well-aligned with non-deterministic human speech patterns. Multi-threaded or speculative processing, incremental confirmation, and robust rollback mechanisms—as well as more targeted supervision on disfluency-heavy corpora—are immediate future directions.

Conclusion

Full-Duplex-Bench-v3 (2604.04847) sets a new standard for rigorous, reproducible evaluation of full-duplex spoken agents operating in real-world, disfluent conversational regimes with multi-step tool use. The reported results elucidate strong trade-offs between throughput, natural turn-taking, and dynamic reasoning fidelity, establishing that while current end-to-end systems are substantially faster and more naturalistic than legacy pipelines, they uniformly underperform on dynamic within-turn state management—especially for mid-sentence self-correction. Addressing this limitation is prerequisite for truly agentic, human-aligned spoken interface systems.